Bottom Line:

Education happens when engagement is visible and verifiable. Without it, CME conferences deliver content, not proven learning.

CME conferences look successful by every institutional measure that exists. Scientific content is strong. Expert faculty deliver credible education. Attendance is consistent. Compliance requirements are fully met. On paper, nothing is broken.

But educational impact does not come from content delivery. It comes from participant engagement. And that is where visibility disappears.

Organizations can confirm who attended. They cannot confirm who actively learned. They can document session completion. They cannot prove knowledge absorption or educational influence.

This creates a structural illusion. Education appears effective because it was delivered. Not because it changed anything.

When engagement is invisible, learning becomes an assumption rather than an outcome. Institutions protect the educational process while losing control over educational impact.

This post covers why educational delivery creates institutional confidence without guaranteeing educational impact, and why engagement visibility is the missing link between content and learning.

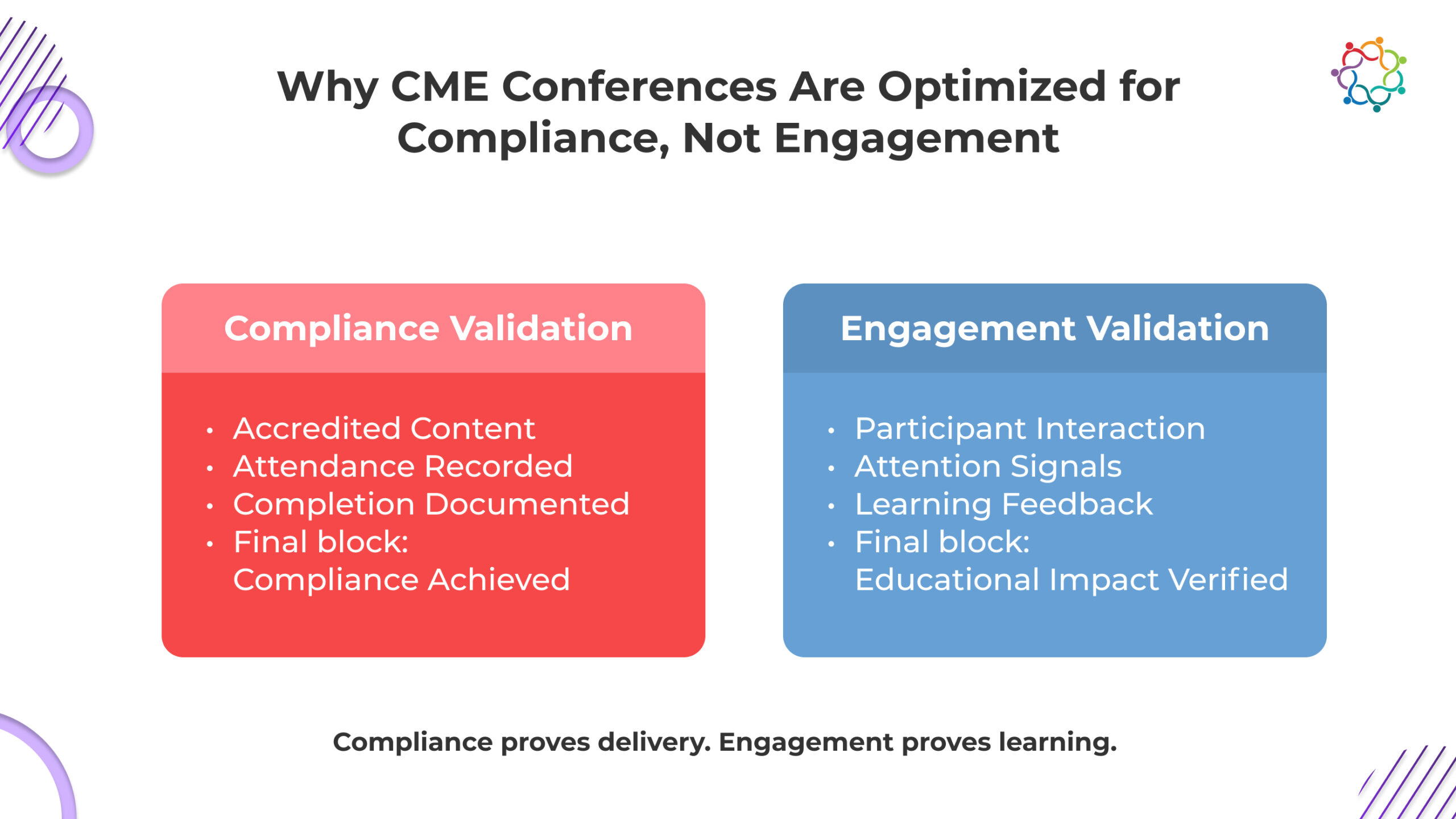

Compliance frameworks exist to protect educational credibility. They ensure scientific validity. They enforce content accuracy. They confirm that medical education meets institutional and ethical standards.

This structure serves an essential purpose. It protects trust in continuing medical education events. But compliance frameworks were never designed to measure engagement.

They confirm that the content was delivered correctly. They do not confirm that participants actively engaged with it. The result is a structural misalignment.

When education systems optimize around compliance, they prioritize delivery integrity. Engagement becomes secondary. Interaction becomes optional. Participation depth becomes invisible.

The system rewards completion. It does not reward cognitive involvement.

This creates a predictable chain of consequences:

This is not a failure of intent. It is a structural outcome.

Compliance protects credibility. It does not create engagement. Educational effectiveness depends on interaction, reflection, and reinforcement. Compliance systems do not track these variables.

The institutional consequence is unavoidable. Education becomes operationally complete but educationally unverified. This creates a silent erosion of educational influence.

Organizations continue delivering education. They lose visibility into whether learning is actually occurring.

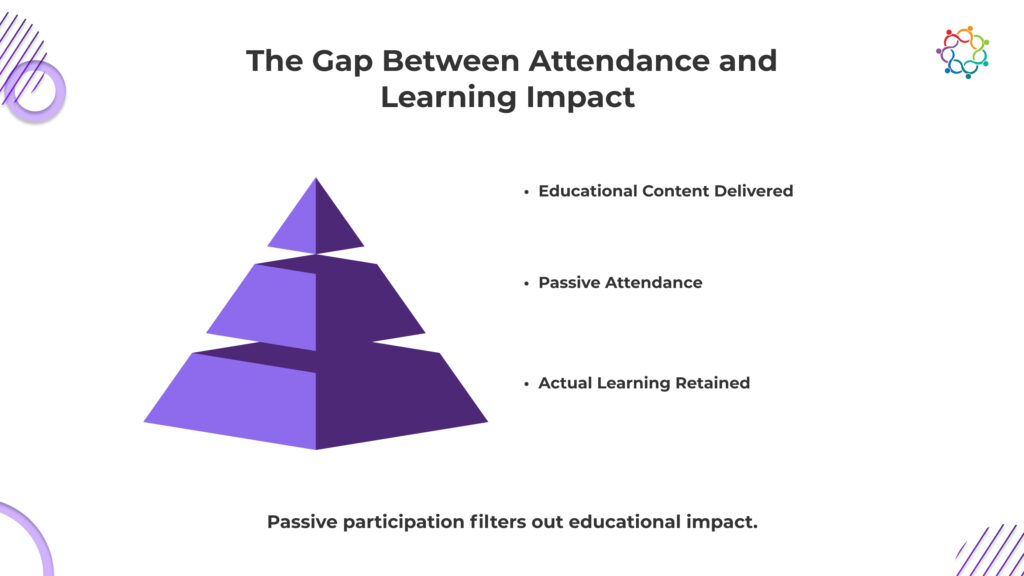

Attendance provides reportable metrics. It confirms participant presence and session completion. It does not confirm comprehension, retention, or applied change.

Presence in a session does not indicate cognitive engagement. Exposure to content does not demonstrate understanding. Completion does not establish clinical influence.

In many educational environments, attendance metrics function as proxies for impact. However, proximity to content is not equivalent to knowledge transfer.

If institutions cannot identify which participants internalized key concepts, where confidence shifted, or whether clinical reasoning evolved, learning impact remains unverified.

This distinction matters. Reporting participation volume without validating educational progression creates a measurement gap.

Attendance supports documentation. Measurable learning progression supports credibility.

Without separating the two, reported educational success may not reflect actual educational influence.

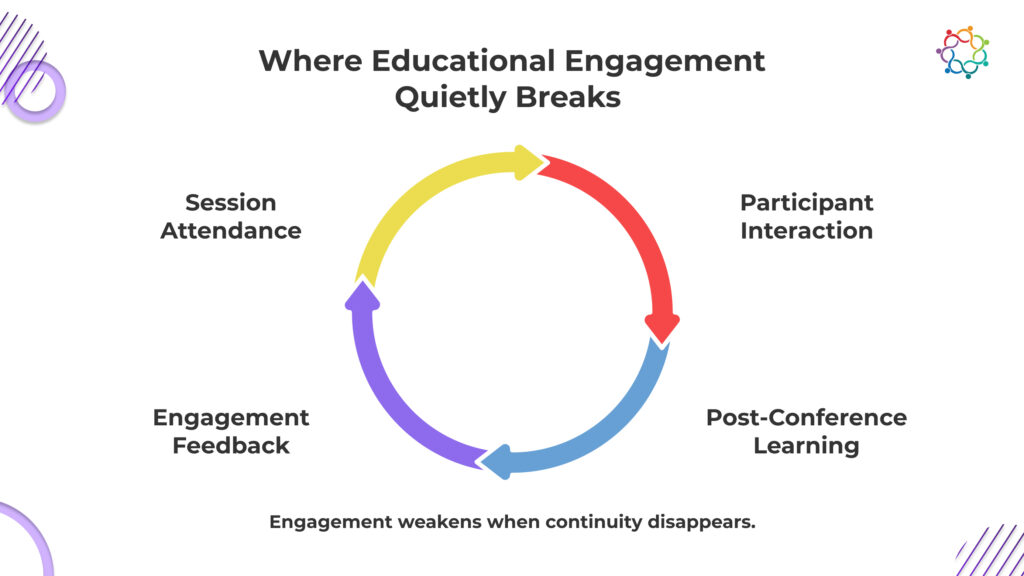

Educational engagement does not collapse instantly. It deteriorates through a sequence of invisible failures. Each stage reduces engagement clarity. Each stage weakens the learning impact.

Participants sit through sessions, but organizers cannot identify who asked questions, who responded to key concepts, or who disengaged midway. Attendance logs flatten every participant into the same category. Active cognitive involvement and passive listening look identical in institutional records.

This removes the ability to isolate where learning actually happened.

Educational leaders are left with session completion data, not learning evidence. Without interaction visibility, educational effectiveness remains an unverified assumption, not a confirmed outcome.

During sessions, participants experience moments of clarity, confusion, and doubt. These moments define learning. Yet most of this feedback is never captured when it happens. Post-event surveys rely on delayed recall, which weakens accuracy. Participants forget specific friction points. Educational leaders receive generalized satisfaction responses instead of precise learning signals.

This prevents faculty and institutions from identifying where education succeeded or failed.

Without real-time feedback integrity, educational evaluation becomes broad opinion, not precise educational intelligence.

Once the conference ends, participant visibility stops. Institutions do not know whether attendees revisited concepts, applied knowledge in clinical settings, or disengaged entirely. There is no structured mechanism to observe knowledge progression after session completion. Educational influence becomes time-bound to the event itself.

This prevents organizations from linking education to sustained professional impact.

Without post-conference engagement continuity, learning remains an isolated exposure rather than a measurable progression that strengthens clinical competence over time.

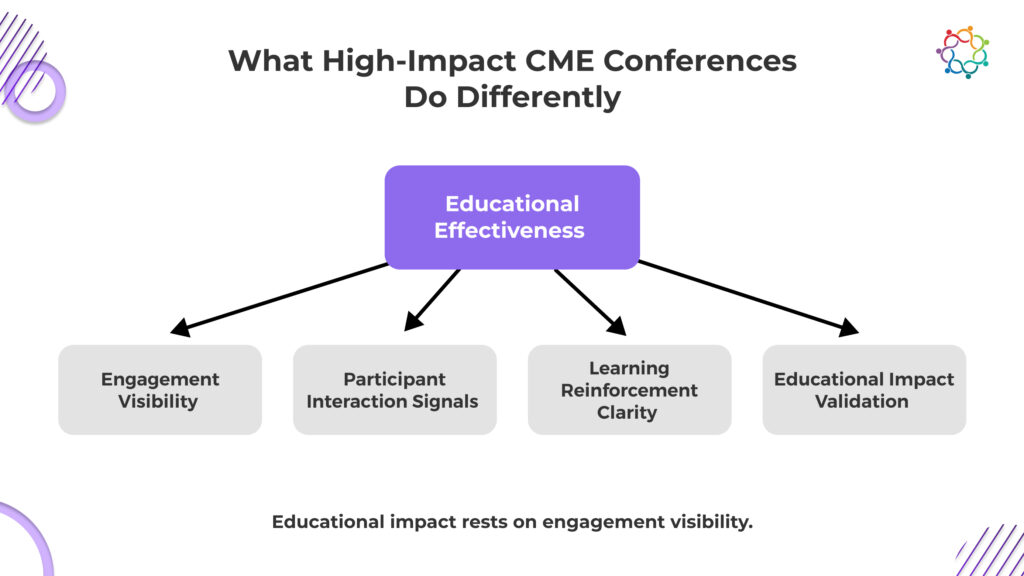

The difference between low-impact and high-impact educational environments is not content quality. It is engagement visibility.

Low-impact environments optimize for:

High-impact environments optimize for:

This structural difference determines educational effectiveness.

Content does not create impact. Engagement does.

Educational value emerges when participants interact with knowledge, not when they are exposed to it. This is where CME conferences either create influence or lose it entirely.

When engagement is visible, educational leaders gain control. They can see learning progression. They can measure educational clarity. They can validate educational effectiveness.

When engagement is invisible, education becomes speculative.

Institutions assume learning occurred. They cannot prove it. This distinction separates procedural education from impactful education.

Most educational programs operate without persistent proof of educational control. Sessions are delivered. The event concludes. Visibility into participant progression diminishes immediately after.

This is not a communications issue. It is an infrastructure limitation.

If education influences clinical thinking, that influence should generate observable signals. Without observable signals, educational impact becomes inferential rather than measurable.

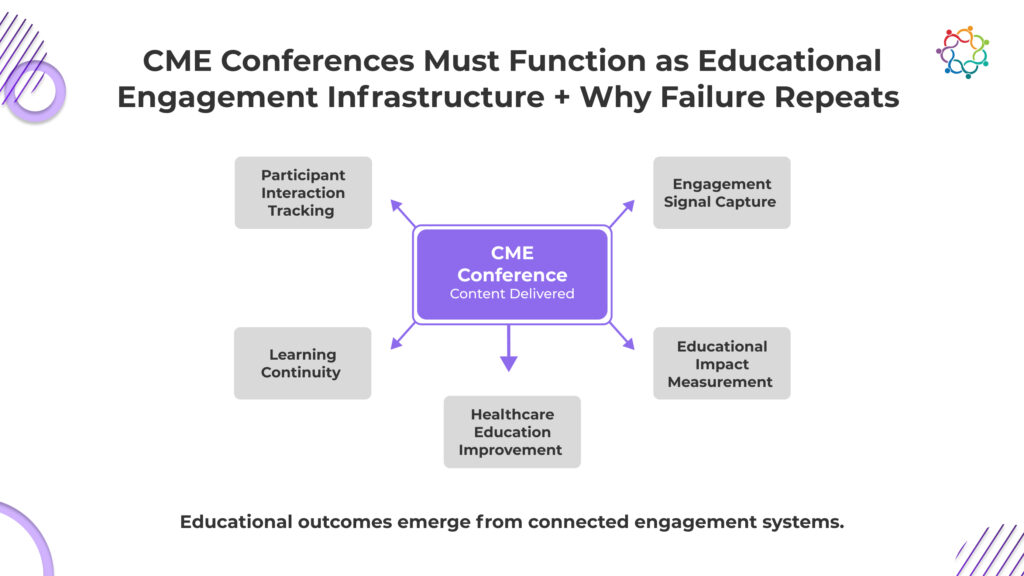

Engagement infrastructure means the event produces structured, traceable learning signals that persist beyond the session itself, signals that can be analyzed, reinforced, and institutionally defended over time.

In practical terms, this requires:

Without these mechanisms, education is delivered but not governed.

Attendance records do not demonstrate comprehension.

Session completion does not demonstrate retention.

Faculty credibility does not demonstrate applied impact.

Only structured engagement evidence does.

When educational impact is questioned by leadership, accreditation bodies, or institutional stakeholders, defensibility depends on measurable progression, not participation volume.

Interactive learning models and structured signal capture are central to building this kind of engagement architecture. A deeper exploration of how interactive design strengthens CME defensibility can be found in this analysis on Enhancing CME Event Engagement with Interactive Learning.

This problem persists because your systems never demanded anything better.

Educational success is declared the moment attendance thresholds are met and compliance boxes are checked. Once those signals appear, scrutiny stops. No one asks who struggled to understand. No one investigates whether clinical confidence actually improved. The absence of engagement evidence is quietly tolerated because operational completion is easier to defend.

This is where leadership becomes exposed.

You are not facing engagement failure because it is unsolvable. You are facing it because your evaluation standards allow it to exist.

As long as attendance is accepted as proof, engagement will remain invisible. And when engagement remains invisible, learning remains unproven.

This means every future conference will repeat the same pattern. Education will be delivered. Success will be declared. And the one outcome that actually matters, verified learning, will remain the one thing you still cannot prove.

Healthcare institutions exist to influence clinical behavior, not to host educational gatherings. Yet most cannot prove that influence. CME conferences strengthen scientific credibility on the surface, but without visible engagement, their institutional value remains exposed. Leadership believes education is working because delivery is complete.

But when impact is questioned, belief is not a defensible position. The absence of proof shifts education from a strategic asset to an unverified expense.

You can confirm sessions occurred. You cannot confirm they changed participants' thinking. This leaves educational outcomes open to doubt when leadership, regulators, or sponsors demand evidence of real impact.

Significant resources fund medical education. Without engagement visibility, you cannot show return in terms of learning progression, making future investment harder to justify with authority.

Education exists to improve decisions. If you cannot track retained knowledge or applied learning, clinical improvement becomes a claim without institutional backing.

Credibility is not built on delivery. It is built on provable influence. The moment you cannot prove learning, your educational leadership stands on completion records, not educational evidence.

Education delivery is not the same as educational impact. Sessions can be scheduled, delivered, and documented. That does not mean learning persisted.

Most institutions can prove attendance. Few can prove progression. That gap is not academic. It is a structural risk.

When educational programs are questioned by leadership, accreditation bodies, or stakeholders, attendance records will not demonstrate comprehension. Completion certificates will not demonstrate application.

Only engagement evidence establishes defensible impact.

If a CME conference cannot demonstrate measurable participant engagement, it cannot credibly demonstrate educational effectiveness. At that point, impact becomes an assumption rather than an asset.

Forward-looking institutions are already reframing CME conferences as measurable learning infrastructure. The shift is not about improving content quality. It is about improving visibility into educational progression.

If engagement is invisible, educational authority is vulnerable.

Platforms built for structured engagement capture and longitudinal visibility – such as Samaaro – are enabling institutions to operationalize this shift from event delivery to measurable educational governance.

© 2026 — Samaaro. All Rights Reserved.