
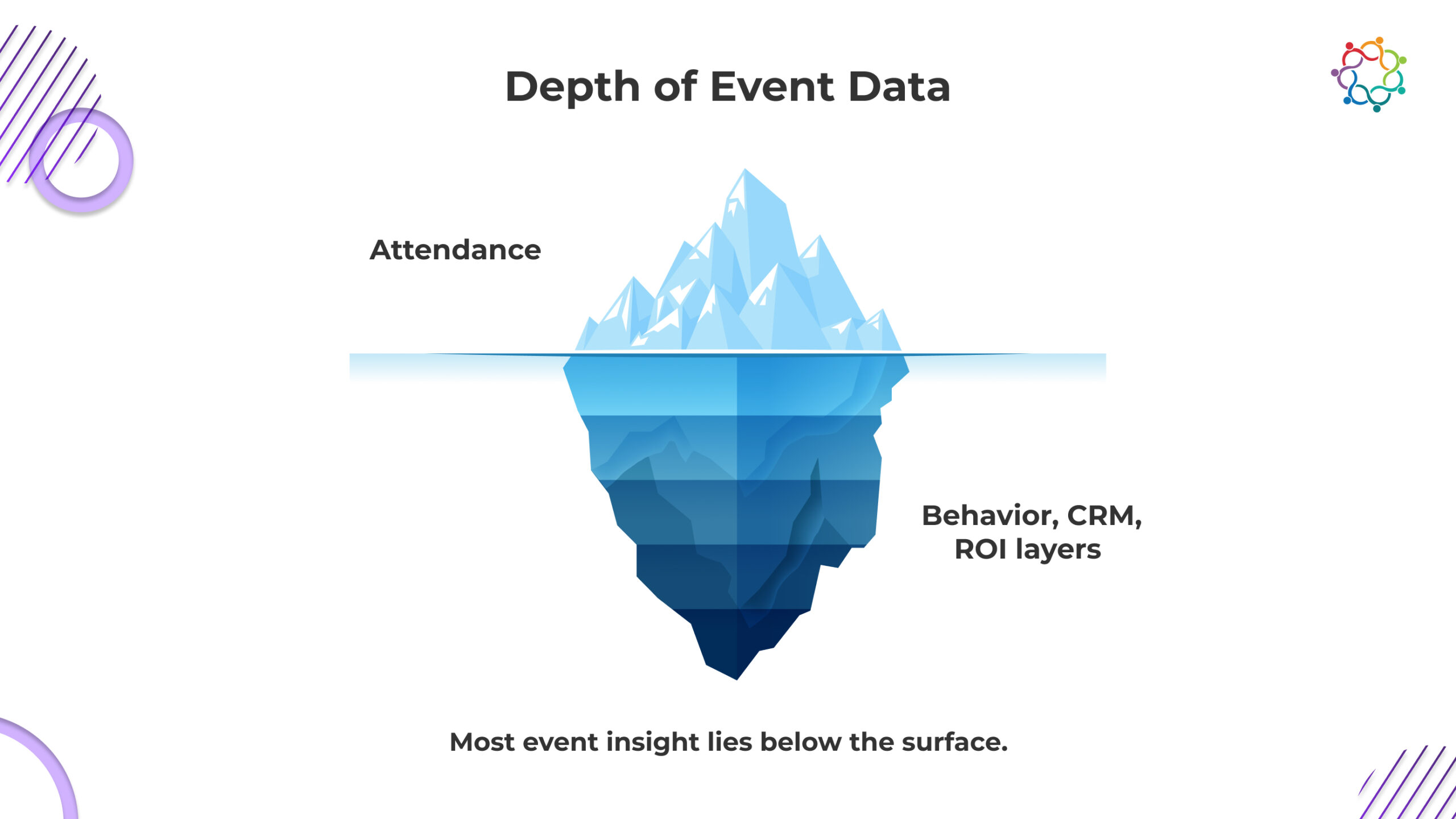

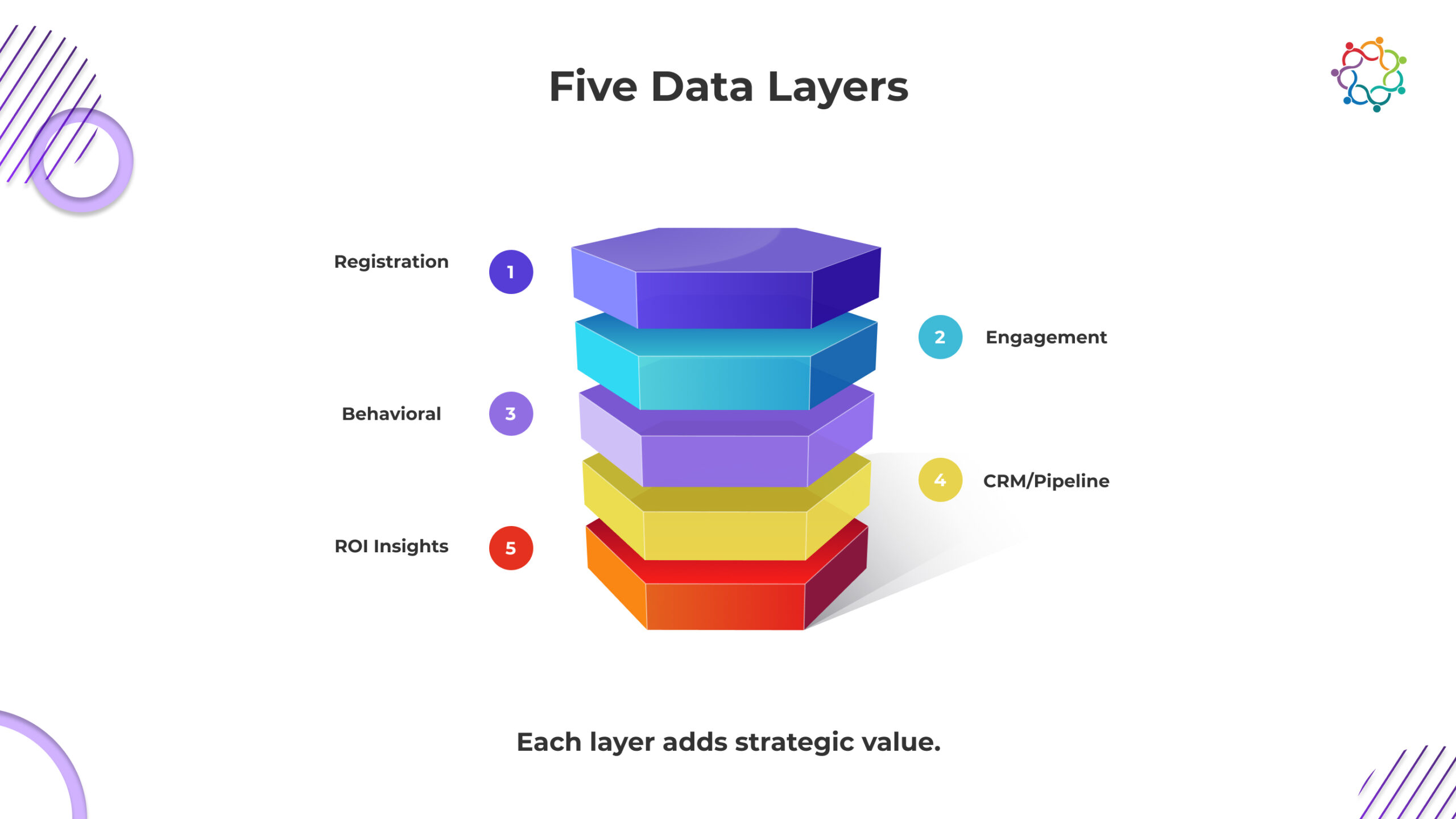

Event data has evolved into one of the most valuable strategic assets for modern marketing teams. Yet many organisations still view it through a narrow lens, focusing only on surface-level indicators such as attendance or registrations. In reality, every click, dwell, check-in, or content interaction reveals intent, readiness, and the true quality of audience engagement. When analysed as a unified system instead of isolated data points, these signals form a powerful intelligence model that can shape content strategy, optimise resource allocation, and directly influence pipeline outcomes. This blog explores the five layers of event data and how each contributes to enterprise decision-making.

The first layer of event intelligence starts long before your event begins. Registrant data reveals audience intent, discovery channels, and potential for segmentation. As a marketer, you are identifying which campaigns brought in the most registrations, which industries are the most interested in your event, and which regions are generating the most early engagement. The speed at which registrants are adding their names also reveals some insight into how your audiences behave, so you can understand if they are exhibiting the behaviors of planners, last-minute decision-makers, or both.

This layer shapes strategic decisions regarding messaging, outreach, and resource allocation. If you notice a high percentage of registrants are coming from a specific sector, the content of the sessions can be modified to reflect the registrants. Likewise, if there are geographic areas that are lagging behind in registrations, campaigns can be initiated in those regions to drive registrants. Registration data is relatively basic at first glance, but is foundational to forecasting demand, prioritizing the audience, and strategizing for the event in its early stages.
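As a rough illustration of the signup-speed idea above, the sketch below counts registrations per day and estimates the share of last-minute registrants. The function names, the seven-day window, and the sample dates are all assumptions made for illustration; they do not come from any particular platform.

```python
from collections import Counter
from datetime import date


def signup_velocity(registration_dates):
    """Return the number of registrations per calendar day, sorted by date."""
    counts = Counter(registration_dates)
    return dict(sorted(counts.items()))


def late_share(registration_dates, event_date, window_days=7):
    """Fraction of registrants who signed up within `window_days` of the event.

    The 7-day default is an arbitrary, hypothetical threshold.
    """
    if not registration_dates:
        return 0.0
    late = sum(
        1 for d in registration_dates
        if 0 <= (event_date - d).days <= window_days
    )
    return late / len(registration_dates)


# Hypothetical sample data
event_day = date(2025, 6, 20)
signups = [date(2025, 5, 1), date(2025, 5, 1), date(2025, 6, 15), date(2025, 6, 18)]

velocity = signup_velocity(signups)
share = late_share(signups, event_day)
print(velocity)
print(f"Last-minute share: {share:.0%}")
```

A high last-minute share would suggest an audience of late deciders, which could justify reminder campaigns closer to the event date.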

Once participants enter the event environment, engagement data is the next critical indicator of value offered. Engagement informs us where participants went, and how they engaged. This may include session join rates, poll answers, questions and answers, booth attendance, networking engagement, content downloads, etc. The aim of engagement data is to evaluate how well value was offered, and what sessions or activities provided that value.

Engagement data can also give insight into the periods of the event that had the highest energy levels and the topics that aligned most closely with attendee interests. For example, if a session had high registration but low engagement, this may indicate a timing issue. Or, if a workshop had high dwell time and repeat engagement, this may indicate a good content-community fit. Engagement data also allows you to evaluate speaker performance, the efficiency of the event format, and content relevance, which makes it a primary input if you are committed to optimising your events over the long term.

Behavioral data extends beyond direct engagement actions and uncovers the “why” behind attendee movement and attention patterns. It tracks elements such as page views, dwell time in different event areas, navigational flow, mobile app usage, and repeated visits to certain zones or links. This type of data provides deep qualitative insight into intent.

For example, an attendee repeatedly viewing a product page or revisiting a specific session recording signals interest and potential readiness for a sales conversation. Long dwell time at knowledge hubs or exhibitor sections may indicate a need for more personalised content follow-up. Behavioral data gives marketers a richer narrative about what the attendee actually cares about, enabling highly targeted post-event communication, refined content strategies, and more precise audience segmentation.

While behavioral and engagement data indicate intent, CRM and pipeline data connect that intent to business outcomes. This is the point where event intelligence begins to be tied to revenue. Connecting event analytics to CRM visibility allows teams to see which breakout sessions led to booked meetings, which attendee actions helped accelerate deals, and which sessions moved the pipeline.

This is especially important for CMOs and revenue leaders. After an event, they can see clearly whether they attracted their intended audience, whether engagement led to sales conversations, and where marketing and sales alignment needs adjustment. When event data is linked to a CRM, the team no longer relies on subjective feedback after the event and instead uses solid evidence to assess whether the event had an impact. The team is also equipped to see which cohorts are most valuable, how to nurture those cohorts more strategically, and how to measure the actual impact of each event on growing the business.

In the final phase, you translate raw data into macro-level intelligence that helps your organisation improve its long-term event strategy. ROI and strategic insight combine cost of engagement, pipeline contribution, audience retention, and brand lift to give a true retrospective view of an event’s overall impact. Rather than looking at single parameters such as attendance, this phase evaluates which formats, topics, or engagement types led to a higher return on investment.

This level of event intelligence helps leaders make better-informed decisions about budget allocation, channel prioritisation, and event design. For example, if data shows that thought-leadership sessions consistently influence pipeline more than product demos, teams can weight the next event accordingly. Similarly, retention insight shows how well the event contributed to community building and loyalty. Strategic intelligence takes us from the tactical execution of event marketing to an enterprise plan for growth.

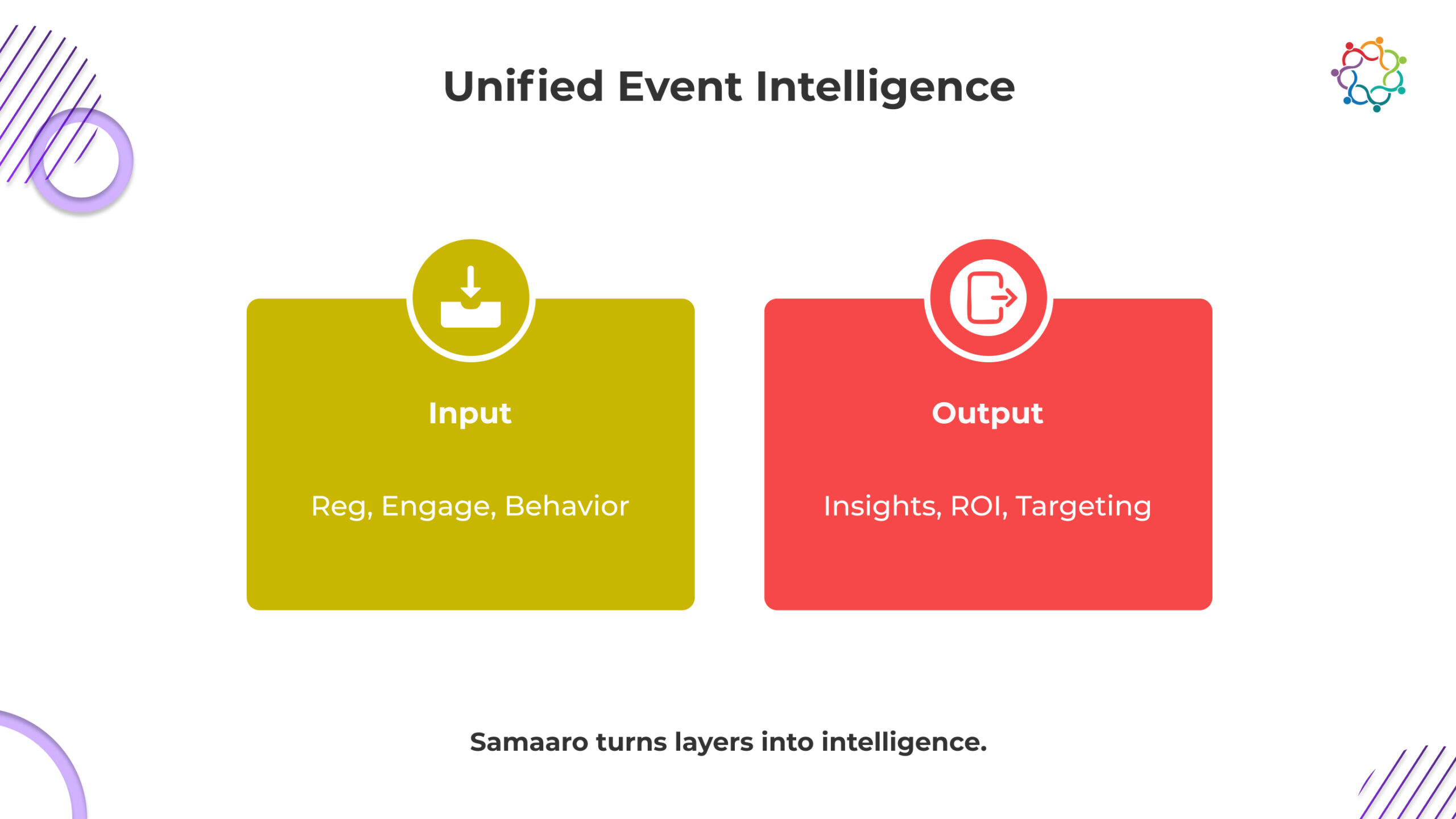

Most organisations handle registration data in one tool, engagement analytics in another, behavioural signals in a third, and CRM outcomes in a fourth. Samaaro removes that fragmentation by unifying all five layers of event data into a single analytics engine designed for enterprise decision-making.

Samaaro captures acquisition channels, sector mix, regional distribution, and signup velocity, then connects these patterns to actual behaviour and pipeline outcomes. This turns registration data from a vanity metric into an early predictor of demand and audience quality.

Session join rates, poll responses, engagement hotspots, and content downloads flow into a real-time dashboard. Samaaro highlights what delivered value and what underperformed, giving teams immediate clarity on content relevance and speaker impact.

Heatmaps, dwell time, navigation flow, repeat visits, and mobile usage patterns are merged with engagement data to reveal intent, not just participation. Samaaro shows who is exploring deeply, who is circling high-value content, and who is signalling readiness for a sales conversation.

Samaaro connects every interaction to CRM records to surface account-level impact: which sessions accelerated deals, which content triggered meetings, and which behaviours correlate with pipeline movement. This creates a verifiable bridge between marketing activity and revenue outcomes.

The platform consolidates depth, influence, sentiment, and pipeline contribution into a single ROI layer. Leaders can see which formats produce the highest ROI, which audiences convert, which topics create momentum, and which events deserve future investment.

Instead of isolated metrics, Samaaro produces a connected narrative, from the first registration signal to the last pipeline movement. This gives enterprises the ability to design sharper events, predict behaviour, and allocate budgets based on evidence, not instinct.

Samaaro transforms event data from scattered numbers into a unified intelligence system built for enterprise growth.

Event data is more intricate and significant than most organisations think. Attendees’ interactions, when connected, give a fuller picture of who your audience is, what is important to them, and how your event contributes to a larger business result. Every click, tap, or interaction contributes to a cohesive narrative that gives teams the insight to make more informed decisions and to create purposefully curated event experiences that are valuable, interesting, and engaging. As the enterprise ecosystem moves toward predictive event strategy, integrated event intelligence not only supports this evolution but is essential to modern experience design and event success.

Most event teams at enterprises still lean on top-line metrics to determine success. They are enthusiastic about high registration numbers, a crowded venue, and other superficial benchmarks, like the number of unique badge scans at the door, but none of these numbers reflect what business leaders care about during the planning process and afterward. The question is not how many people were there, but did the event move prospects further along to purchasing, influence key accounts, or did the event create a deeper long-term affinity for the brand?

This is where the event ROI blind spot comes into play. By focusing exclusively on traditional key performance indicators (KPIs), teams feel good about the marketing metrics and about how many people attended and experienced the event. However, that KPI focus keeps them from determining what actually moves the business needle. With more selective audiences, rising costs, and closer scrutiny of event lift, teams need a modern ROI framework that goes beyond vanity metrics and captures aggregate impact.

For a long time, the success of an event has hinged on things that could be easily measured. We’ve celebrated total registrations, how many people walked by the booth, how many leads we collected, how many people we actively engaged in sessions, how many social impressions we had, and so on. But measures like these provide too narrow a view.


Limitations become obvious when we try to prove the impact of an event throughout the sales cycle. A campaign that generated thousands of leads could have had no impact on pipeline velocity. Conversely, an event with only 10 people in the audience could open higher-quality conversations and produce contacts that move qualified accounts forward.

Traditional measures such as registrations, foot traffic, and leads collected have never captured the difference between engagement and true business value.

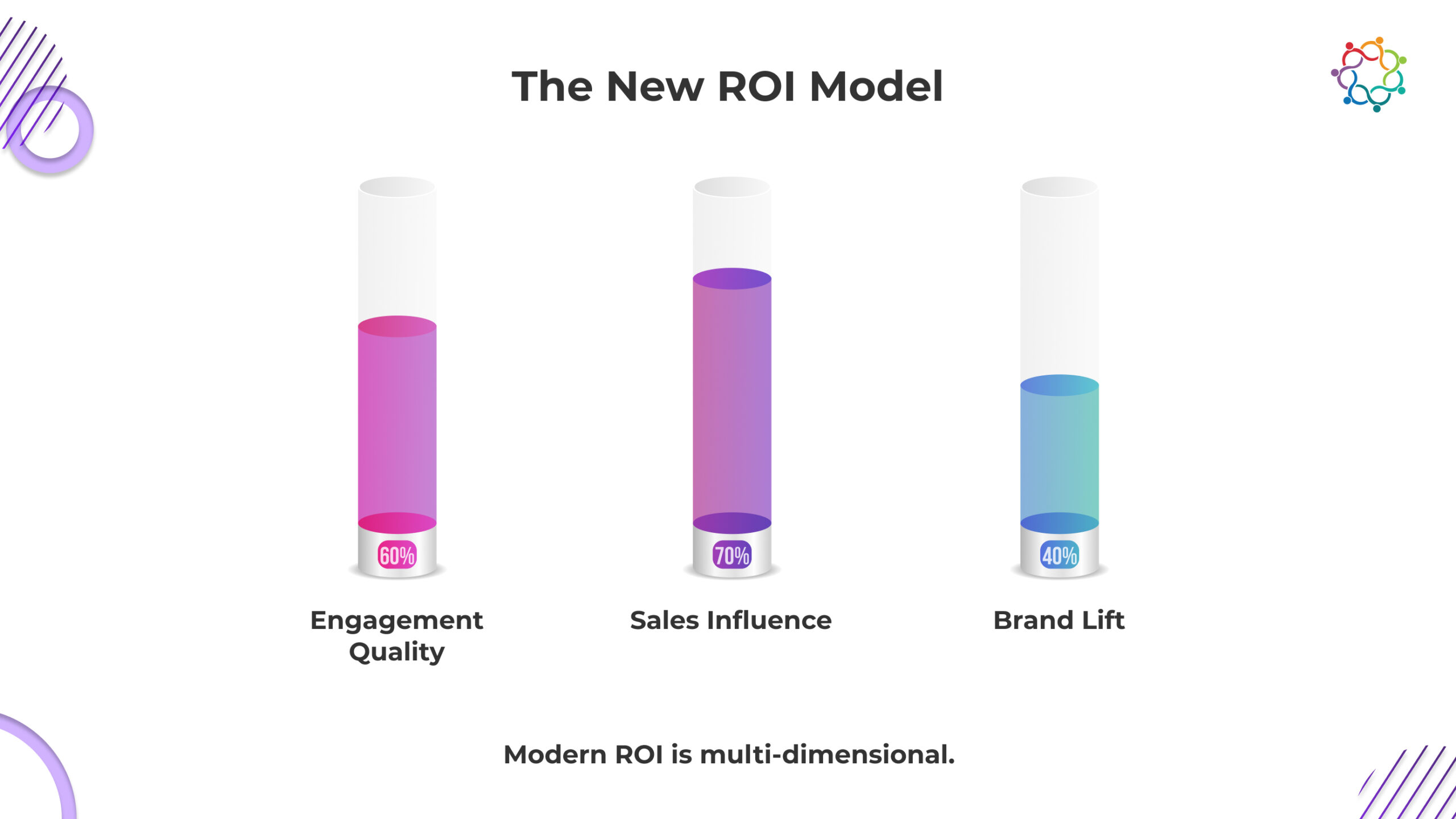

Modern event strategies require a measurement framework that captures depth, influence, and value over time. The new ROI equation is shifting away from tracking what happened to understanding why it mattered. It combines three primary dimensions.

Metrics that focus on depth quantify how much time and how much of the audience’s attention is spent with your content. These metrics include session dwell time, minutes spent interacting with a booth, repeated touchpoints, and consumption of content across digital channels. In-depth engagement suggests that there is a real interest and intent.

Metrics that represent sales influence indicate how events accelerate deals. The metrics include pipeline sourced, pipeline influenced, opportunity conversion and velocity, and account-based interaction scoring. Instead of tracking leads, this is tracking how well event touchpoints nurture momentum for sales.

Events also shape impressions of the brand in ways that ultimately affect long-term revenue. These qualitative metrics include sentiment analysis, post-event NPS, message recall, and social advocacy. All of them reflect how the event builds trust and awareness.

Together these three dimensions create an overall measure of business impact. The new ROI equation recognizes that events impact customers on three levels: creating an emotional connection, creating educational value, and creating confidence for purchase. And it is a real representation of the role events play in enterprise growth.

A contemporary ROI framework is not feasible without data connections across systems. Event teams often function in silos: marketing owns lead capture while sales owns outcomes, which limits visibility. Meaningful ROI insight only emerges when event data is combined with CRM records, behavioural analytics, and sales progress.

By tracking which accounts attended, what they engaged with, and how those engagements affected deal stages, teams can home in on which interactions truly drive momentum and velocity in the pipeline.

Patterns across channels reveal buyer engagement and interest beyond the event venue. If attendees engage with post-event emails, resources, or demos, this indicates a higher likelihood of conversion.

Sales teams can also measure whether accounts that experienced event activity progress more quickly than those that did not, which is a much stronger indicator of influence.

Changing how results are measured shifts the conversation from raw numbers to how an event, or a series of events, contributed to conversions, renewals, or upsell opportunities.
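The comparison of accounts with and without event activity can be sketched in a few lines. This is a minimal example under stated assumptions: the field names `attended_event` and `days_to_next_stage` are hypothetical, the sample records are invented, and a real analysis would pull these values from CRM exports and control for account size, segment, and deal stage before drawing conclusions.

```python
from statistics import median


def median_days_by_group(accounts):
    """Split accounts by event attendance and return the median
    days-to-next-stage for each non-empty group."""
    groups = {"attended": [], "not_attended": []}
    for acct in accounts:
        key = "attended" if acct["attended_event"] else "not_attended"
        groups[key].append(acct["days_to_next_stage"])
    return {name: median(days) for name, days in groups.items() if days}


# Hypothetical CRM export; field names are assumptions for illustration
accounts = [
    {"name": "A", "attended_event": True, "days_to_next_stage": 12},
    {"name": "B", "attended_event": True, "days_to_next_stage": 20},
    {"name": "C", "attended_event": False, "days_to_next_stage": 30},
    {"name": "D", "attended_event": False, "days_to_next_stage": 41},
    {"name": "E", "attended_event": False, "days_to_next_stage": 35},
]

result = median_days_by_group(accounts)
print(result)
```

A gap between the two medians shows correlation only. Accounts that attend events may already be further along in their buying process, so the figure is best read as a prompt for further investigation rather than proof of influence.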

When organizations adopt the new ROI equation, decisions are made faster and become more deliberate. Event strategies go from intuition-driven to insight-driven.

Teams can determine which types of events create the most engagement depth or sales influence, and allocate budget to the formats that deliver results time and again.

By determining which sessions, content, and topics produced the greatest business outcomes, marketing teams can adjust the messaging strategy for their events and campaigns.

Depth of engagement signals which audiences to prioritise: those already worth the most, and segments worth exploring because of their high-intent potential. Teams can focus on high-intent, high-potential buyers without worrying about headcount.

Marketing and sales teams gain shared visibility and coordination, which makes follow-up easier to plan. High-intent attendees can be contacted right away, which increases conversion rates.

These insights elevate events from cost centres to predictable growth engines. Event leaders gain the confidence to justify spending and to defend an event decision with evidence, data, and context.

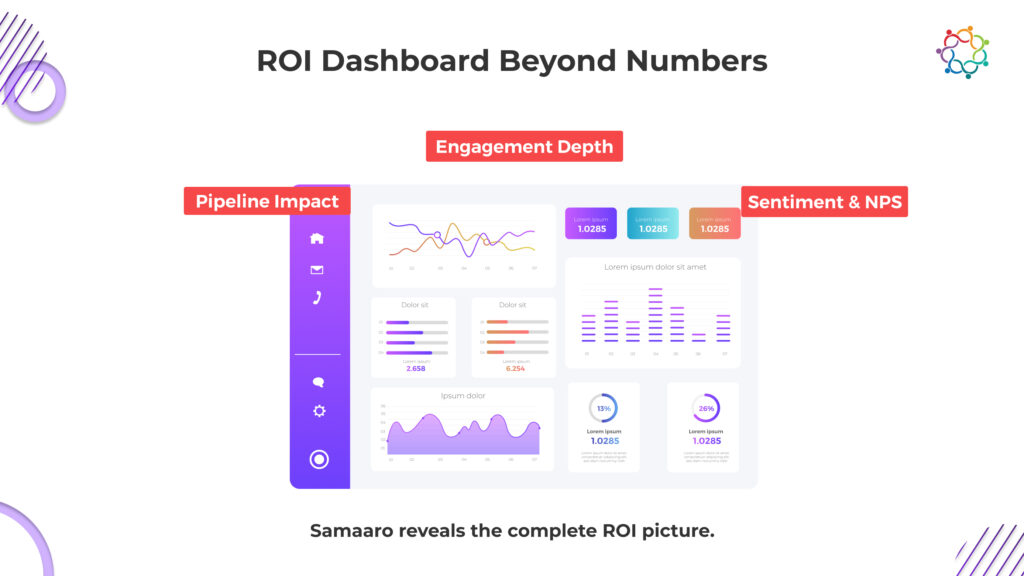

Most tools stop at attendance numbers. Samaaro is built to measure what actually moves revenue, aligning directly with the new ROI equation outlined above.

Samaaro captures engagement depth at a granular level:

These signals differentiate passive attendance from real intent, the foundation of depth-based ROI.

It also tracks sales influence through direct CRM alignment. Every interaction feeds into the account record: who engaged with which session, how deeply, which assets they consumed, and how that behaviour affected opportunity stages, velocity, or deal size. Samaaro shows which touchpoints accelerated movement, and which didn’t matter.

For brand amplification, Samaaro layers qualitative intelligence on top of behavioural and CRM data. Sentiment trends, open-text insights, NPS drift, and message recall indicators sit alongside quantitative metrics so teams can understand how the event changed perception, not just activity.

The ROI dashboard does not present disconnected metrics. It produces a coherent influence map:

Instead of guessing what mattered, teams see precisely why an event drove revenue, or where value was lost. Samaaro turns ROI from a retrospective report into a forward-planning engine that guides investment, content, formats, and audience strategy.

Samaaro isn’t just reporting events; it’s measuring business impact.

Events have outgrown traditional KPIs. To understand their true impact, organisations need to measure depth, influence, and long-term value. Vanity metrics can show activity, but only modern ROI metrics can show meaningful progress toward enterprise goals.

The future of event measurement lies in smarter analytics that integrate sales, marketing, and behavioural data. By adopting the new ROI equation, leaders can finally answer the question that matters most: did the event move the business forward?

Unlock the complete ROI picture with Samaaro’s analytics suite.

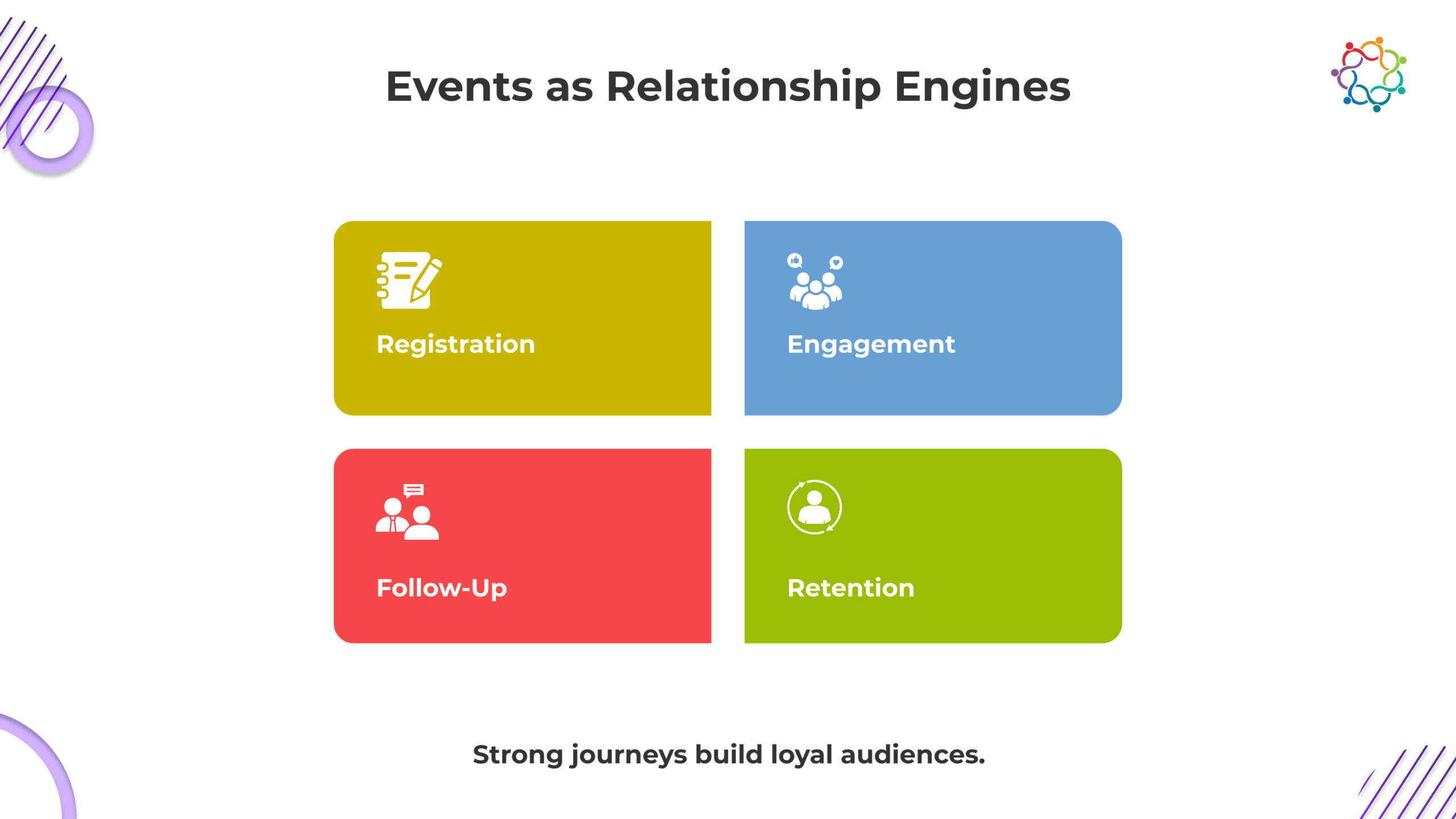

Most event teams treat events as stand-alone campaigns rather than as long-term relationship builders. However, attendees are not just leads or numbers on a dashboard. As in a customer journey, attendees go through a lifecycle shaped by their expectations, experiences, and emotions before, during, and after the event. When this lifecycle is designed on purpose, it becomes one of the strongest drivers of brand loyalty.

A well-planned attendee journey will provide added value to every touchpoint, reinforce intent, and move people closer to your long-term ecosystem. This shift from thinking about a single event, to thinking about a lifecycle, gives modern event marketers the ability to increase attendee satisfaction, reduce drop-off, and improve retention through a series of events.

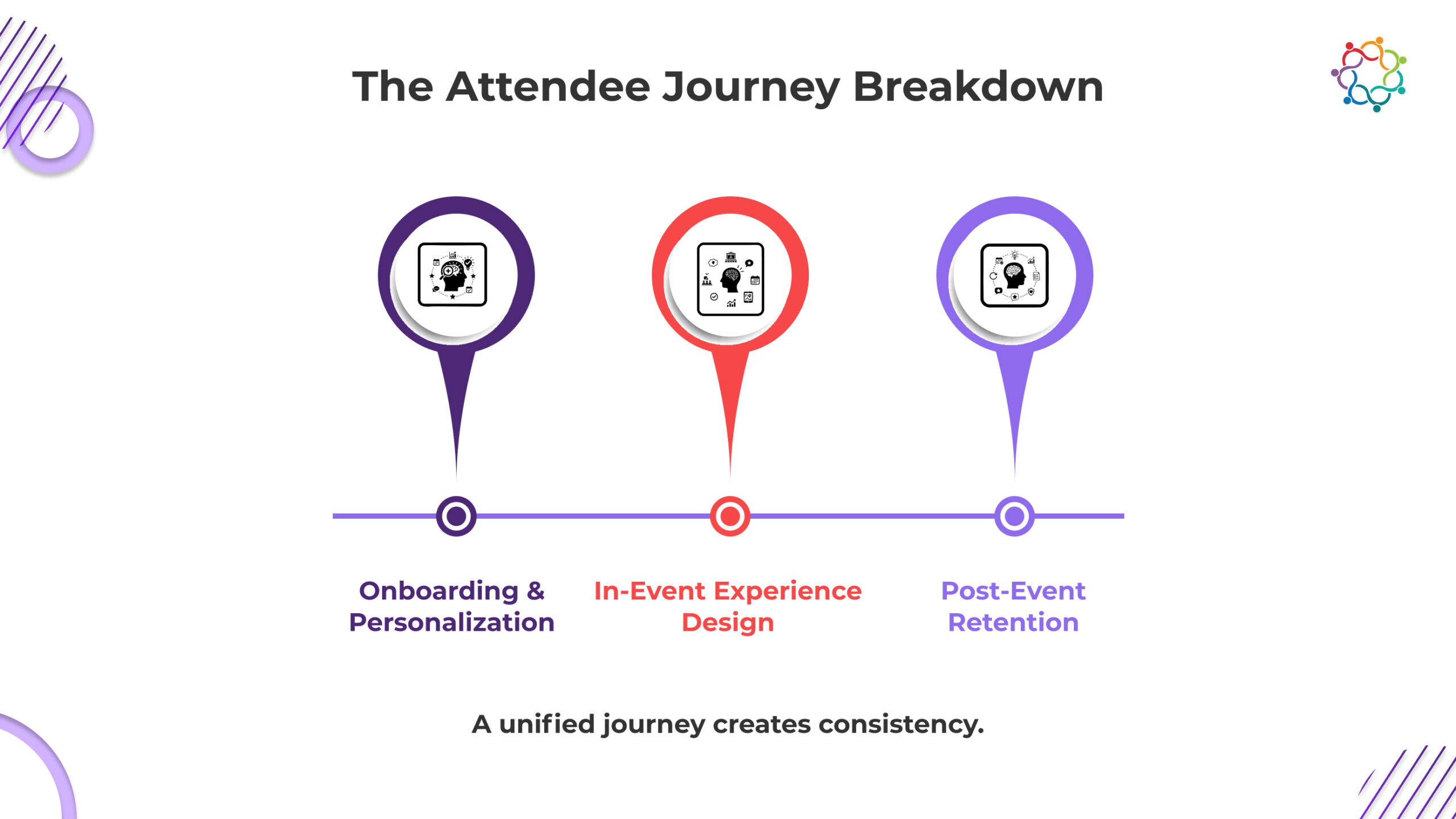

The attendee journey starts long before anyone sets foot inside a venue. It begins the moment they register. An overwhelming registration form or convoluted onboarding process can kill interest before the experience ever begins, so onboarding needs to be simple, quick, and tailored to the attendee.

Personalized registration forms set the tone immediately. Asking relevant rather than generic questions captures information that can fuel tailored content, personalized agendas, and session recommendations. Attendees are more likely to remain engaged throughout the event lifecycle when they feel seen from the beginning.

AI-driven agenda recommendations come into play here as well. With the right software, attendee responses, recorded participation, engagement, and professional interests can be combined to create a curated event experience. Instead of handing attendees a complicated agenda and forcing them to figure out which sessions to attend, you can guide them to the sessions and activities that meet their needs or goals.

A clear onboarding path completes phase one of the attendee journey. Welcome emails, app download prompts, speaker previews, and readiness information can all help prepare the attendee. Every touchpoint should eliminate friction and build excitement. An attendee who arrives at the event feeling confident, informed, and excited is set up for deeper engagement throughout the event.

Once the event is underway, the focus shifts from onboarding to engagement. Experience design is critical to moving attendees toward active participation rather than passive observation. Audience expectations have evolved, and event producers have to design community experiences that are interactive, social, and rewarding.

Gamified engagement is among the most effective ways to sustain participation. When executed properly, challenges, rewards, scavenger hunts, or leaderboards promote exploration. They can inspire participants to get up, connect with other attendees, broaden what they explore, and raise their hands to participate. Gamification can increase session attendance, enhance networking, and raise the visibility of sponsors, advertisers, and exhibitors, without the feel of filling in a form.

Smart matchmaking is equally important. Attendees want to meet people, but they generally do not want idle small talk. AI matchmaking can connect people with common interests based on professional goals and behavioral indicators. Attendees who are introduced on the basis of shared interests are more likely to have deeper conversations, report higher satisfaction, and build lasting relationships than those who meet without any clear reason.

Moreover, live analytics adds another layer to the event experience by letting your team see attendee behavior in the moment. Event organizers can track details such as dwell time in a session, traffic flow through the venue, and cumulative attendee interactions over time. That visibility might prompt them to shift resources toward popular areas without disrupting the current offerings. If an event segment is failing to engage the audience as intended, the data can prompt the team to act before interest drops further.

Finally, a thoughtfully designed in-event experience is what turns attendee curiosity into an emotional connection. When attendees feel engaged, included, and intrinsically motivated throughout the experience, they are far more likely to take part in post-event activity and to return for a future event.

The attendee experience does not finish once the event is over. In fact, the most essential piece of the attendee experience begins after the event. The post-event follow-up will determine whether attendees ‘just liked’ the experience or if they become a part of your community.

It is key to collect feedback promptly. Well-designed surveys, sentiment polls, and rapid rating questions give attendees a voice and keep them engaged, while also capturing data to support ongoing improvement. Asking for feedback signals to attendees that you care about their experience, which increases trust and openness.

Recapping content extends the life of the content. Recap options include short highlight reels, quotes from speakers, downloadable slides, or recordings of sessions – all these options keep attendees engaged long after the event experience is over. Recaps keep messages top of mind and also allow you to remain visible in the weeks following the event.

Community groups are yet another effective retention tool. When attendees join dedicated channels on WhatsApp, LinkedIn, or inside your event app, they have a dedicated space for conversations, announcements, collaborations, and connections. These micro-communities foster ongoing participation and a sense of belonging that continues after the event is over.

When people feel connected to the experience and brand, they come back. A good post-event plan makes sure that momentum is not lost after the event but is translated into continued participation.

Events are no longer evaluated solely on attendance or NPS. The future is about understanding the full journey across multiple touchpoints and multiple events. Once teams start analyzing attendee journeys over time, patterns emerge. These patterns help marketers segment better, customize communications for each segment, and improve the experience at every touchpoint.

Understanding how people move from the registration page to attending sessions, and what actions they take after the event, provides insight into conversion roadblocks. Additionally, knowing which content formats worked well over time (or which segments dropped off early) gives the team data to make informed decisions about what is and is not working. Over time, this journey-level visibility transforms events from reactive experiences into automated growth engines.

Retention becomes achievable when behavior is measured more holistically. Instead of guessing or working from assumptions, event marketers can adjust strategy based on actual audience signals. The payoff is that every event, new or recurring, becomes sharper, more tailored, and better aligned with audience expectations.

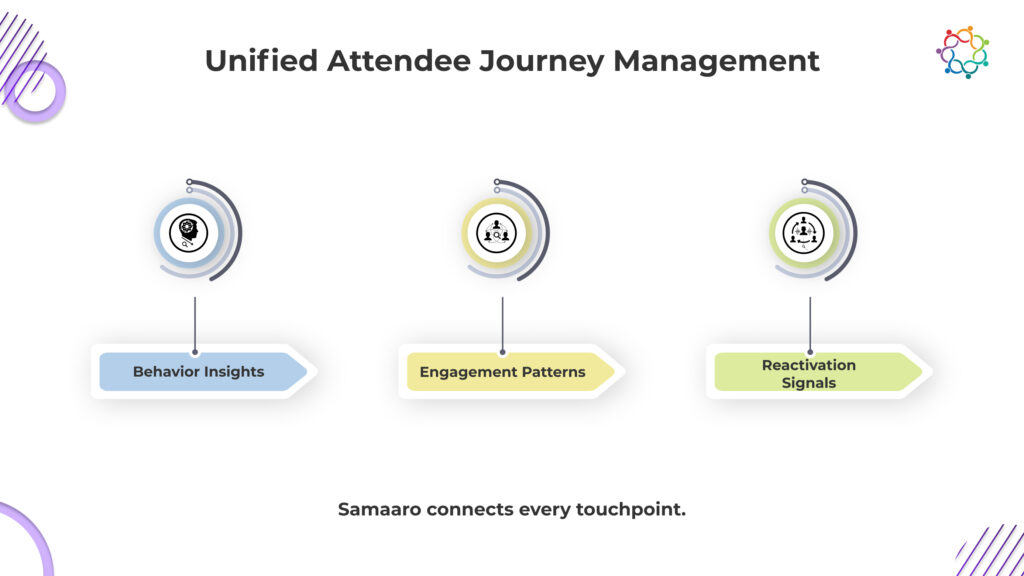

The attendee journey only works when every phase, registration, onboarding, in-event participation, and post-event retention, is connected by intelligence, not isolated tools. Samaaro unifies these touchpoints so event teams can design journeys based on real behaviour, not surface-level assumptions.

Samaaro captures every interaction from the moment someone lands on a registration page: the questions they answer, the sessions they favour, dwell time across content, networking patterns, and the signals they generate before, during, and after the event. These signals drive three core outcomes:

1. Personalised onboarding without friction

Registration data automatically shapes recommended agendas, session paths, meeting suggestions, and pre-event communication. No manual mapping, no bloated forms.

2. In-event engagement that reacts to behaviour

Live analytics show movement, interest spikes, session fatigue, and interaction patterns. Teams can intervene in real time, reroute footfall, promote under-attended sessions, or activate nudges for high-intent attendees.

3. Post-event retention driven by measurable signals

Every action feeds into a unified attendee profile: content consumed, connections made, feedback given, follow-up engagement, CRM progression. Samaaro uses this history to automate personalised follow-up, re-engagement, and multi-event nurture paths.

Where most platforms report attendance, Samaaro reports journeys.

Where most tools end at check-in, Samaaro continues through the entire lifecycle, surfacing the insight needed to build long-term communities and repeat participation.

Samaaro turns attendee management into a continuous, intelligence-led cycle, so every event gets sharper, more personalised, and more predictable over time.

Retention does not happen by accident. It’s the result of thoughtful communication, intentional experience design, and continuous improvement across the entire attendee lifecycle. When event marketers treat events as relationship engines rather than one-time activations, every touchpoint becomes an opportunity to build trust and loyalty.

Design a continuous attendee journey with Samaaro’s connected engagement platform.

For far too long, event marketers have gauged success by superficial metrics: registration numbers, foot traffic in a venue, social mentions, and often overly simplistic post-event surveys. These metrics provide a quick picture of “what happened” but fall short of telling the whole story. Meanwhile, the same enterprise event now produces a torrent of data across every conceivable digital and physical engagement, from clicks to dwell time at a booth to sentiment in feedback. Data is no longer the issue; the difficulty of aggregating it and translating it into meaningful insight is.

This is why event intelligence matters. Rather than just “collecting” information, event intelligence seeks to interpret the information collected to discover what really drives ROI, engagement, and retention. Supported by AI and machine learning, sophisticated event intelligence moves you from relying on static reports to generating actionable behavioural foresight from connected data.

This article addresses how AI helps event marketers move beyond descriptive reporting and into predictive and prescriptive reporting. It shows how leading enterprises use event intelligence to understand event performance before and during the event, optimise in real time, and attribute revenue impact accurately.

For years, traditional event analytics has relied on dashboards full of engagement rates, attendance numbers, and lead counts. These are useful, but they rarely apply a strategic lens that leads to action. Rather than surfacing insight about causes and next steps, they are primarily hindsight metrics that describe what happened, not why it happened or what should be done differently to produce a better outcome.

Most event analytics still focuses primarily on visible metrics such as registrations and post-event survey scores. There is nothing wrong with measuring those, but they tell you nothing about how the event affected your business’s pipeline or customer lifetime value.

When event information sits inside disconnected systems, social tools, CRM platforms, survey apps, registration portals, and mobile event apps, teams spend more time stitching data together than interpreting it. Fragmentation forces analysts into spreadsheet assembly instead of insight generation. As a result, the organisation loses the ability to connect engagement signals to business KPIs, making it nearly impossible to produce meaningful event intelligence or an actionable plan.

In fact, reports on an event rarely arrive until days or weeks after the fact. By the time you discover a trend, it is usually too late to act on it. Event insight, as traditionally produced, is reactive by nature.

Even if you manage to write a solid event report, the core problem remains: most of the metrics inside it are not actually linked to outcome measures that matter to sales or marketing. And unless your data is connected end-to-end, any claim that “engagement led to a conversion” or “the event accelerated pipeline velocity” is still largely speculative. Without a direct, verifiable connection between event behaviour and business results, ROI becomes an assumption, not proof.

Businesses don’t need one more dashboard; they need a smarter one. It is not about prettier charts and new line graphs. Event intelligence is about turning every single touchpoint into an insight that informs your next strategic move.

Event intelligence embodies the advancement of analytics into a more adaptable decision-making framework that doesn’t just tell us what happened but why it happened and what will probably happen next.

True intelligence begins with unification. Event data from registrations, attendance logs, session engagement, app interactions, and feedback channels needs to be integrated into one ecosystem. Integration dismantles silos, exposing each datapoint as a facet of a richer, ongoing attendee profile.

AI and machine learning algorithms detect patterns that humans may not easily recognize, respond to early engagement signals, and even predict attendance behaviour and at-risk segments. For example, algorithms can estimate which sessions have the highest likelihood of driving post-event conversion or which audience cohorts are most at risk of churn.

Finally, event intelligence ties all of that engagement and behaviour back to business impact, linking engagement scores to lead quality, likelihood of retention, and deal progression. Event measurement shifts from descriptive to prescriptive. Instead of only asking what worked, a marketer can now ask what to do next.

Imagine an event team discovering that attendees who engage during a particular speaker’s session show a 35% improvement in conversion rates in the next campaign. That finding doesn’t merely summarize what success looked like; it informs the next strategy.

Artificial intelligence (AI) is changing the way companies assess and understand the return on investment (ROI). Instead of measuring individual outputs in isolation, teams are now considering the predictive and causal relationships that affect revenue and engagement. Below are three major changes that AI enables within the ROI conversation.

AI can predict registration patterns, identify audiences who are at risk of not attending, and even dynamically reprioritise outreach efforts. Marketers can use insights from historical behavior and current engagement signals to adjust their targeting, with the aim of increasing attendance before the event even starts.

For example, if a predictive model indicates that first-time registrants are less likely to attend an event, a marketing team can trigger an automated reminder workflow or send personalized content to first-time registrants to encourage attendance. Predictive intelligence helps ensure that resources are deployed where they have the most impact.
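The first-time-registrant example above can be sketched in a few lines of Python. This is an illustrative heuristic, not a trained model and not Samaaro's implementation: the `Registrant` fields, the point values, and the threshold of 70 are all assumptions made for the sketch.

```python
from dataclasses import dataclass


@dataclass
class Registrant:
    email: str
    past_events_attended: int  # 0 means a first-time registrant
    emails_opened: int         # opens of pre-event emails so far


def no_show_risk(r: Registrant) -> int:
    """Hypothetical 0-100 heuristic; a real model would be fit on attendance history."""
    risk = 50
    if r.past_events_attended == 0:
        risk += 30  # assumption: first-time registrants are less likely to attend
    if r.emails_opened == 0:
        risk += 20  # no engagement with pre-event emails
    elif r.emails_opened >= 3:
        risk -= 20  # sustained engagement lowers the risk
    return max(0, min(100, risk))


def reminder_queue(registrants: list[Registrant], threshold: int = 70) -> list[str]:
    """Return the emails of registrants whose risk meets the threshold."""
    return [r.email for r in registrants if no_show_risk(r) >= threshold]
```

The output of `reminder_queue` would feed whatever reminder workflow the team already runs; in practice the hand-set points would be replaced by probabilities from a model trained on past attendance.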

One of the biggest advantages that AI brings to event measurement is speed. Real-time analytics now let organizers make decisions mid-event, changing schedules, session lengths, and even the floor layout depending on live engagement.

Dynamic dashboards and AI-driven sentiment analysis enable event managers to detect drops in audience attention and adjust session content in response.

AI has made easier what used to be the hardest part of measuring events: attribution. Machine learning can follow an attendee through their journey across channels, isolating the touchpoints that generate revenue directly from an event.

Marketers are no longer looking only at “cost per lead”; they are now evaluating “value per relationship.” AI-powered attribution can help map the journey from the first interaction during the event, through marketing follow-up, to the final sale. Tracing outcomes this way makes ROI much easier to understand.

Event intelligence is not simply an analysis you perform and put on a shelf; it’s an ongoing operational cycle. This cycle can be broken into interrelated stages: collection, connection, and conversion.

Every registration, poll response, app click, and survey contributes to a sprawling constellation of engagement signals. Every event generates behavioral data that reflects how attendees respond to its content, design, and delivery.

The real power of intelligence emerges when these signals are connected to the CRM, marketing automation, and customer data platform. This connection changes how marketers see an attendee, from a single point of interest to part of the overarching buyer journey.

AI analyzes patterns from previous events, illuminating what drives retention, conversion, or churn. For instance, feedback data may show that attendees who attended product demonstrations re-registered at a higher rate. That data informs not only content development but also audience targeting in future campaigns.

The cycle is self-reinforcing: feedback → insight → adaptation → improvement.

This loop defines the modern intelligent-event strategy, constantly adapting and compounding ROI.

While artificial intelligence (AI) can highlight relationships and correlations, it cannot replace the understanding of context and the creativity that only a human brings. The success of an event comes back to empathy and knowing what makes an audience feel inspired, motivated, or frustrated.

AI may tell you that session B performed better than session A, but it takes a strategist to understand why. What was it that made it work better? Was it the subject matter? The way it was delivered? Some extra emotional connection? The most successful organizations use AI not as a solution, but rather as a catalyst for true human decision making.

The best teams consider AI to be a co-strategist, using AI to surface opportunities, then matching those opportunities with human creativity.

Traditional frameworks for measuring return on investment (ROI) compared inputs against outputs. Event intelligence differs in that it focuses on outcomes: the shifts an event produces in customer behaviour and business growth.

Rather than simply counting heads, organizations are examining the quality of engagement: depth of interaction and length of engagement before and after the event. An AI algorithm can quantify that depth and surface the attributes of engagement profiles that signify real interest versus passive participation.

AI analytics can help forecast the lifetime value of an attendee: not the transactional revenue created by attending one event, but the cumulative impact across several events over time. An attendee’s relationship with a brand may last for years, and AI tracks that relationship over time.

Engagement probabilities and likelihood to convert are analysed by AI, allowing marketers to invest their budgets in high-value audiences. This targeting efficiency lowers acquisition costs across the organization’s portfolio of marketing efforts.

AI enables ROI attribution reports that illustrate how an event catalyses action across flows that extend into digital marketing, sales enablement, community retention, and more. Rather than fragmented lead reports, intelligent event attribution connects the dots for marketers.

Event intelligence is shifting ROI from retrospective estimation to a live, ongoing measure of performance.

The development of experience marketing within events will be characterized by systems which will learn and adapt continuously.

Event ecosystems of the future will use predictive models that simulate experiences and run experiments before the event even begins, testing messaging, timing, and design. AI will help produce content for different audience segments and personalize scheduling, removing much (if not all) of the manual work and improving accuracy.

As event data layers more seamlessly into enterprise automation, intelligence will no longer be a one-off analysis but a living, breathing system. Each interaction will inform what happens next, and marketing programming will self-improve over time.

In this future state, event success won’t depend on what a post-event report says happened (although reports will continue to be valuable) but on understanding what audiences need before they even register.

As Samaaro continues working with enterprise customers globally, our focus remains the same: to help marketers turn data into clarity, and clarity into growth.

The next evolution of the event ROI will be a measure of what comes next, not a measure of what happened.

Most event teams begin their engagement work only once the registration form launches. By that point, much of the opportunity for demand creation has passed. The best B2B and community-led events succeed because they start to warm their audience weeks or even months before registration opens. Pre-event engagement shapes perceptions, builds trust, and establishes familiarity with an event’s core themes long before the first landing page launches.

Once people know what the event stands for, why it matters, and how they might benefit, the transition from awareness to registration becomes smooth. This is why pre-event engagement is not an optional promotional phase; it is a primary component of the event marketing funnel. It primes attendees to care about the event before they are asked to commit, making conversion easier and lowering the cost of acquisition.

The initial phase of engaging your audience prior to the event is generating a reason for participants to feel anticipation about something they cannot yet register for. Curiosity is an innate factor that builds interest, particularly in competitive industries where audience attention and interests are fragmented.

Here, teaser campaigns fit in particularly well. Short-form video, cryptic social posts, and hint-based countdowns give your audience enough information to know something is coming without giving away the full picture. A great addition here is influencer seeding, where you allow key voices in your industry to “discover” or comment on the idea of the event you are developing. This is a great way to build credibility and pull the social proof lever before you roll anything out.

Industry discussion threads work much the same way. Social platforms like LinkedIn, Reddit, and niche communities are your friends here. You can intentionally spark discussion around the major theme of your event. The discussion centers on the concept behind the event, which nurtures curiosity around the larger idea rather than acting as an awareness effort to sell the event. When you make the first registration announcement, it feels like an extension of a conversation attendees are already interested in.

By the time registration begins, your attendees have already come to see the event as their event: relevant, timely, and important.

A big reason that so many pre-event promotions fail to drive registrations is that the offered content simply feels too much like a promotion too soon. Today’s audience needs value first – primarily through education. When you introduce the supporting themes of your event with content that really allows audience members to reflect, solve a problem, or consider new ideas, you are developing trust that fosters later conversion.

This could include thought leadership blogs that offer insights related to the topic of your event without referring to the event directly. Webinars or short fireside chats with speakers also allow your audience to warm up to the event’s knowledge ecosystem. Even polls or discussion prompts can draw attention to the area of concern your event will address.

The objective is simple; you want the audience to experience and feel the intellectual and emotional space of your event. When registration opens, the event should feel like a clearly logical next step in the learning journey they have started with you.

Pre-event marketing communications also represent an opportunity to reward early interest. Gated pre-registration links can allow your most engaged followers to lock in a spot before the general public. These soft offers can also reveal early demand levels, allowing for more accurate capacity, content, and marketing planning.

Referral benefits (discount codes or early access to recordings of sessions) can convert your most excited early adopters into ambassadors. These micro-incentives can massively improve your organic reach without a large spend.

Providing exclusives is another powerful motivator. First access to speaker announcements, agenda reveals, or site updates can get the audience more engaged with the event and feel they’re part of the event build-up. This sense of belonging will drive conversions when general registration opens.

Intelligent pre-event engagement starts with understanding who is most likely to attend. Analysing past attendees through event data, on-site behaviour, CRM activity, or participation patterns lets you plan marketing outreach accordingly. For example, you might reach out to individuals who downloaded a certain whitepaper, engaged with speaker-related content, or opened communications from the previous event. These individuals respond at a higher rate because your messaging is aligned with behaviours that already signal interest.

This person-oriented outreach ensures you are not sending generic teaser blasts to everyone. Your messaging should be tailored to people whose data shows curiosity or a need. A data-rich engagement strategy reduces wasted outreach, strengthens communications leading up to the event, and is likely to increase early conversion rates.

Pre-event engagement only works when it’s grounded in data, not guesswork. Samaaro gives teams the ability to identify likely attendees, understand past behaviour, and personalise outreach long before registration opens.

Samaaro’s engagement engine lets marketers:

Because everything ties back to CRM and previous event data, teams know exactly who is warming up, who needs a nudge, and who’s ready for early-access conversion. Instead of manually orchestrating touchpoints, Samaaro operationalises the entire nurture motion.

Samaaro turns pre-event engagement into a measurable pipeline activity, giving teams the clarity to shape demand before the form even opens.

The top events start engaging with their audience well before the registration day itself. The best events are creating awareness, providing value, building trust, and finding out who is likely to attend months in advance. Engaging in pre-event tactics is simply healthier for the funnel and makes it easy to predict your turnout.

Utilize Samaaro’s engagement automation tools to lay out more thoughtful and strategic pre-event journey plans.

Pre-event engagement should begin weeks before registration opens because high-intent buyers need time to build context and conviction before they commit. If your first touch is the registration form, you’re asking strangers to register. If your first touch is valuable content weeks earlier, you’re asking warmed-up audiences to take the next logical step. Early engagement turns registration into an easy yes.

Effective pre-announcement tactics include: cryptic save-the-date posts that hint at the theme, countdown teasers on social, behind-the-scenes content showing event prep, early-access waitlists, speaker pre-announcement reveals (one name at a time), and short videos teasing the value attendees will get. Curiosity is the fuel. Don’t reveal everything at once. Let the audience lean in.

Use thought leadership content, webinars, and discussion prompts to educate audiences and build trust in the topic your event covers, not just the event itself. Publish blogs that frame the problem. Host short webinars with future speakers. Drop polls on LinkedIn about the themes you’ll cover. By the time registration opens, the audience already trusts your authority on the subject. They register because the event feels like the natural deep-dive.

Early-access incentives that convert high-intent prospects into the first wave include: pre-registration links for known accounts and past attendees, early-bird discounts (15 to 30 percent off), bring-a-peer referral codes, exclusive content drops for early registrants, and VIP pass upgrades for the first 50 to register. Referral perks (a free pass for every two peers referred) turn enthusiasts into ambassadors and lower your CPA significantly.

Use behavioural data and CRM signals to identify likely attendees by looking at past event attendance, content downloads, webinar participation, recent product page visits, and deal stage. A prospect who attended last year and downloaded last month’s whitepaper is high-intent. A cold contact with no engagement is not. Send personalised outreach to the high-intent group first, with messaging that references their past activity. Generic blasts to everyone waste the high-intent signal.

Pre-event engagement functions as a measurable pipeline activity when every touchpoint (content download, webinar attendance, RSVP, early registration) is tracked in the CRM and tied to specific accounts. Marketing can see which target accounts are warming up. Sales can prioritise outreach to high-engagement accounts before the event. By the time the event runs, you already have a ranked list of who to focus on. Samaaro turns pre-event engagement into pipeline data, not just awareness.
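A minimal sketch of how tracked touchpoints could roll up into a ranked account list is shown below. The touchpoint names mirror the ones mentioned above, but the weights and the `rank_accounts` helper are hypothetical choices for illustration and do not describe Samaaro's actual scoring.

```python
from collections import defaultdict

# Hypothetical weights: later-funnel touchpoints count for more.
TOUCHPOINT_WEIGHTS = {
    "content_download": 2,
    "webinar_attendance": 3,
    "rsvp": 4,
    "early_registration": 5,
}


def rank_accounts(touchpoints):
    """Rank accounts by weighted pre-event engagement.

    touchpoints: iterable of (account_name, touchpoint_type) pairs,
    e.g. exported from a CRM. Unknown touchpoint types score 0.
    """
    scores = defaultdict(int)
    for account, kind in touchpoints:
        scores[account] += TOUCHPOINT_WEIGHTS.get(kind, 0)
    # Highest score first; ties are broken alphabetically for stable output.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))
```

Sales could work such a list from the top down before the event, while marketing watches which target accounts move up over time.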

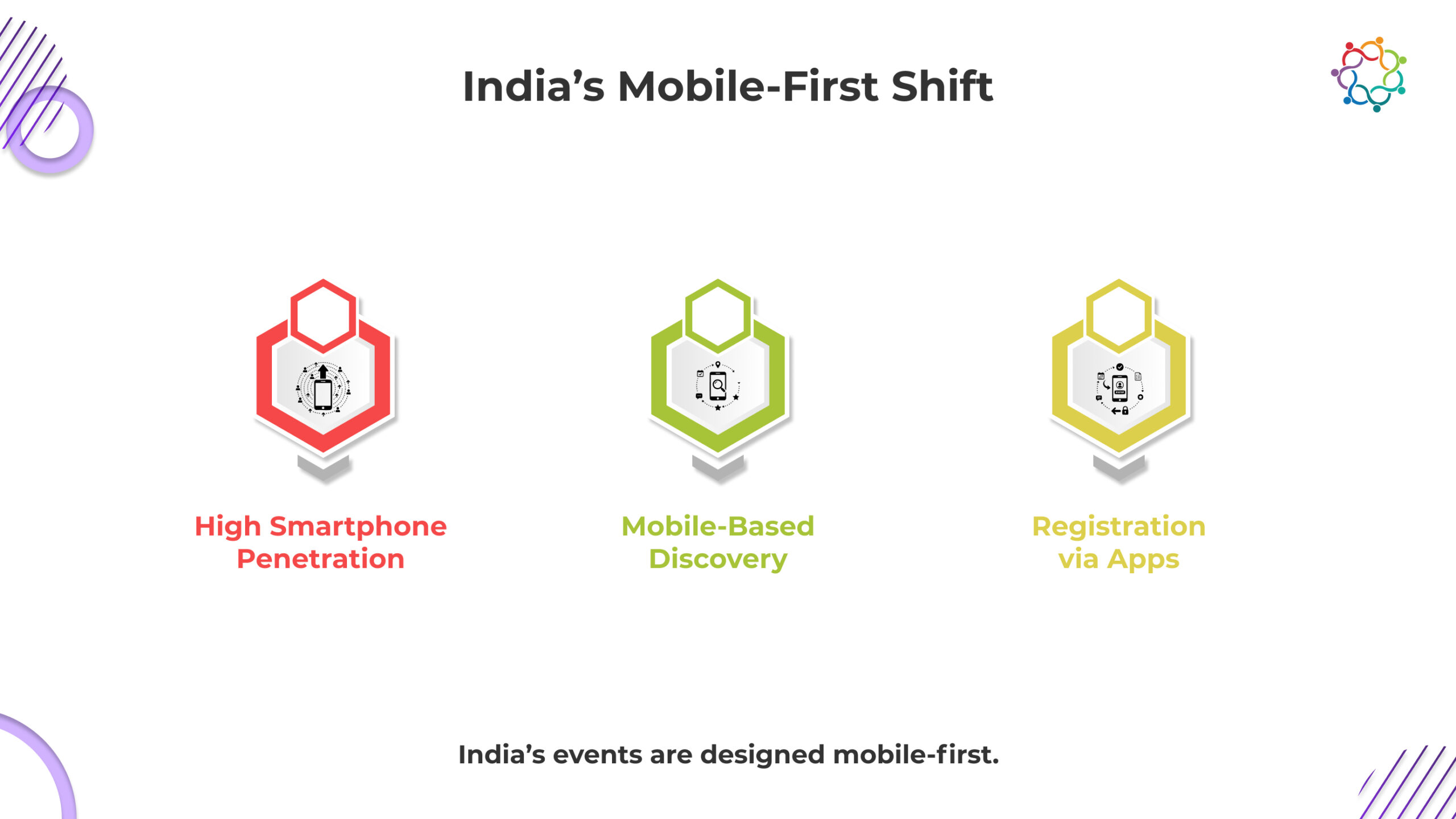

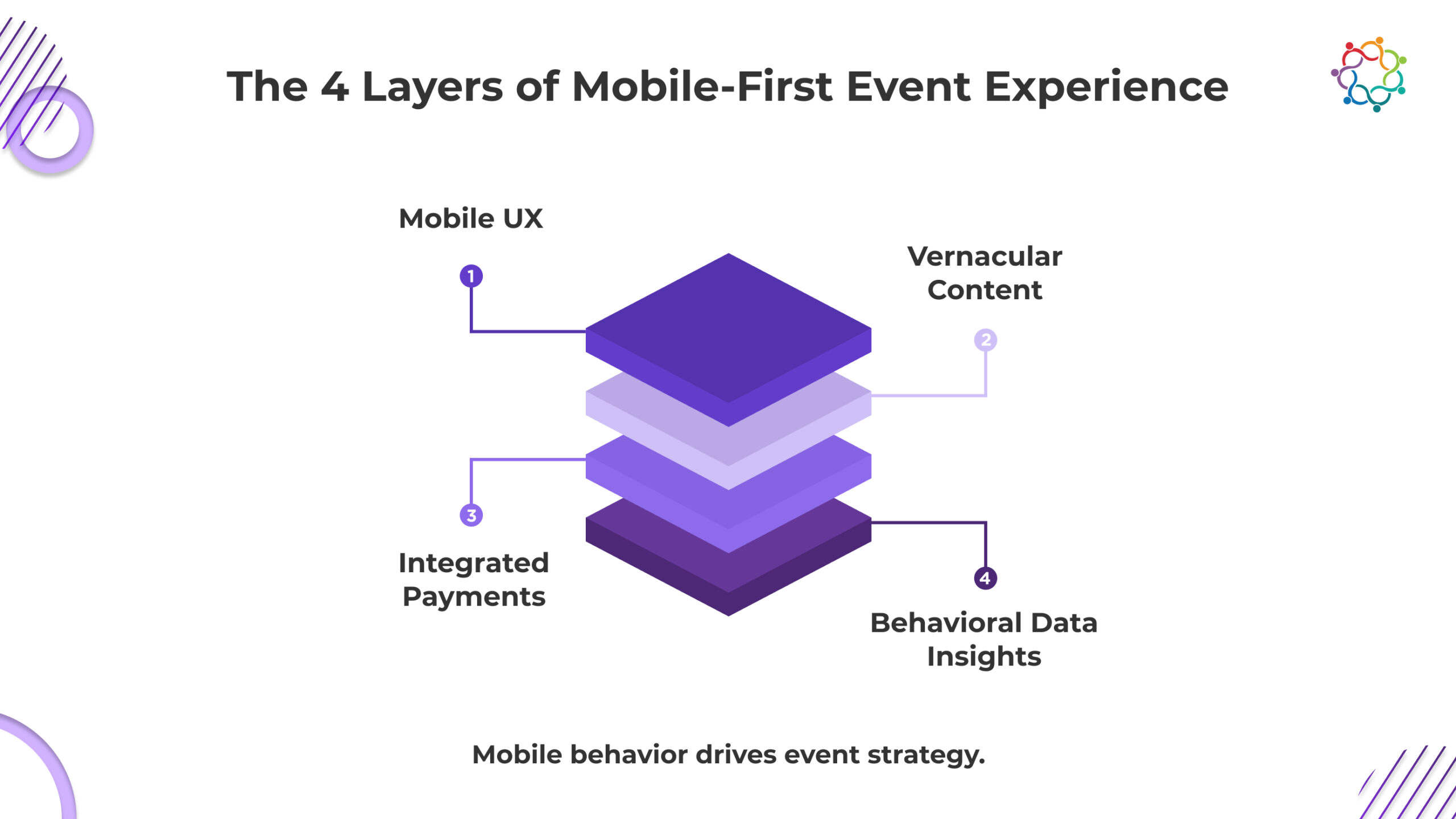

India has emerged as one of the most mobile-first digital economies globally, and this transition is affecting every layer of the B2B event ecosystem. For many attendees, the smartphone is no longer a companion device; it is now the primary channel for discovering events, registering, checking in, exploring content, attending, and ultimately networking. Traditional desktop-first workflows in events are fast approaching obsolescence, as younger professionals, regional audiences and busy executives are demanding fast, intuitive, app-ready experiences.

This change is about much more than the device being used. It signifies a true behavioural shift, one that means event planners, chief marketing officers, and technology buyers will have to think differently about how events are planned and delivered. Speed, simplicity, location, and personalization are now the pillars of India’s event tech expansion. As organizations extend their event portfolios across metros and rapidly emerging Tier 2 and Tier 3 markets, ‘mobile first’ is a critical component of any B2B event strategy going forward.

Across Indian B2B events today, mobile-first design dictates engagement. Registrations increasingly happen through mobile landing pages or quick in-app prompts rather than traditional sign-up forms, and attendees expect to enter with a QR code, a verified digital pass, or a tap to confirm their ticket. The days of printing badges or filling in paper registration forms are coming to an end across corporate conferences, trade expos, and partner summits.

To effectively engage this audience, event software must offer a UX engineered for the constraints of mobile. Interfaces must load quickly, remain reliable on congested networks, and require minimal data entry. If an interface has one field too many or takes one second longer to load, the risk of drop-off rises sharply. Offline access has become equally important given venue sizes and network congestion. Attendees want access to schedules, venue maps, speaker biographies, and announcement alerts even while their connection drops in and out.

A frictionless mobile UX now shapes how attendees participate while they are in session. Live polls, Q&A modules, emoji and thumbs-up/thumbs-down reactions, session ratings, and digital handouts are all accessed through participants’ mobile phones. The more intuitive and minimal the experience, the more likely attendees are to engage. This behavioural baseline has also driven event tech providers to aim for app-like responsiveness even when the mobile journey is web-based.

Event audiences in India are far from homogeneous. B2B events are expanding beyond the usual metro audience, and regional-language support is becoming important for enabling attendance. Many attendees in Tier 2 and Tier 3 cities prefer instructions and notifications in their regional language, and session content in the vernacular as well. Without localization, organisers create an invisible wall of frustration, discomfort, and disengagement from the content.

Publishing agendas, notifications, and resource materials in multiple languages adds a layer of inclusivity to events. Even a simple toggle for Hindi, Tamil, Telugu, Kannada, or Marathi can turn a passive attendee into an engaged participant. Vernacular interfaces help brands signal a commitment to regional diversity, which is a highly valued trait in sectors like BFSI, Manufacturing, Distribution, and Public Sector.

Localization is not simply about language. It also covers regional timing considerations, small cultural variations within a language, and potentially even device differences. Most participants from smaller cities carry a light to mid-range smartphone, which means the user interface needs to be lightweight and optimised for low bandwidth. Event technology that fully accounts for these needs can see higher adoption rates and stronger engagement.

India has one of the most advanced mobile payment ecosystems globally, and this has transformed how paid events operate. UPI, mobile wallets, and regional payment gateways have dramatically simplified the registration-to-payment journey. Instead of forcing attendees through lengthy invoicing processes or multi-step checkout flows, organisers now enable near-instant mobile payments.

For B2B events, this shift has reduced bounce rates and improved conversion ratios for:

UPI’s immediacy is especially powerful during last-minute registrations, where opportunity windows are narrow. Many attendees decide to join only a day or even an hour before an event, and the ability to pay through a single tap removes friction entirely.

In-app or mobile-browser payments also allow organisers to run dynamic pricing, early-bird reminders, abandoned-cart nudges, and promotional voucher codes, all optimised for fast conversions on smaller screens. In markets like India, mobile-first monetisation has quickly moved from a convenience feature to a competitive advantage.

One of the most valuable outcomes of India’s mobile-first audience is the sheer depth of behavioural data it generates. Every tap, swipe, click, and interaction becomes a signal that organisers can analyse to refine content strategy, session formats, and lead-generation workflows.

Mobile-first platforms capture:

For CMOs and event leaders, this means post-event reporting goes well beyond attendance numbers. Instead of guessing which sessions resonated or which cohorts were most active, event teams get granular insights that influence sales alignment, content marketing, and the long-term event calendar.

This data becomes especially potent when combined with mobile identity markers, allowing organisers to run personalised retargeting, nurture attendees after the event, and shape better experiences next time. India’s willingness to engage through mobile gives technology buyers the clarity needed to make smarter decisions.

India’s mobile-first audience operates on three realities: inconsistent bandwidth, diverse regional languages, and rapid-fire decision cycles. Samaaro’s architecture is built around these constraints.

The platform is engineered to perform reliably on mid-range devices, busy venue networks, and high-volume traffic spikes. Pages load quickly, forms require minimal input, offline caching keeps agendas and maps accessible, and QR entry works even when connectivity dips. This foundation matters because Indian attendees don’t tolerate lag, they simply drop off.

Samaaro also supports true localisation, not just translated labels. Organisers can send notifications, agendas, alerts, and resources in multiple regional languages and segment different linguistic groups with precision. This removes the friction that normally alienates attendees from Tier 2 and Tier 3 markets.

On monetisation, Samaaro integrates directly with UPI and mobile wallets, enabling one-tap payments across ticket upgrades, add-ons, merchandise, and last-minute registrations, critical in a market where decisions often happen minutes before a session.

Every mobile interaction feeds into Samaaro’s behavioural analytics:

These signals help enterprises understand intent, segment audiences, and align sales and marketing with actual behavioural evidence, not guesswork.

For large-scale employee meets, distributor networks, roadshows, partner programs, and public expos, the formats driving India’s B2B growth, Samaaro gives organisers the scale, speed, and mobile readiness the market demands.

In India, the event technology revolution is not driven by devices, it’s driven by behaviours. A mobile-first populace expects speed, convenience, vernacular comfort, instant payments and personalized pathways. When B2B events build to this reality, they will win over B2B events that still anchor to desktop-era assumptions. The future of event experiences in India will be frictionless, intuitive, and informed by real-time data.

Find out how Samaaro powers India’s most mobile-first enterprise events.

Dubai’s evolution into one of the foremost destinations for corporate events did not occur overnight. It started as a luxury playground associated with bling and scale, and over the last 10 years it has transformed into a strategic global MICE destination. Now, for planners seeking to bring enterprise events to Dubai, the attraction is based not just on rarefied venues and the aura of the skyline, but on the underlying infrastructure, policy, and readiness to embrace future-facing technology. As the UAE continues to position itself as a hub of innovation and business tourism, the landscape for corporate events in Dubai is reshaping itself for 2026 and beyond.

Technology underpins Dubai’s new event identity. Organizers are moving from conventional check-in to digital check-in. New applications that use AI to connect people based on industry and goals have entered the scene. Smart venues are adding sensor integration, digital wayfinding, and automatic crowd-flow management, bringing greater comfort and convenience. Immersive branding will become the default rather than the exception, whether through augmented reality showcases, shared or interactive LED screens, or curated journeys with tailored content. For planners eager to create meaningful global events, Dubai’s willingness to embrace new technology offers a sandbox for new methods and concepts.

Sustainability has gone from a nice-to-have to a foundational expectation. Eco-certified event spaces in Dubai are at the forefront of this shift, incorporating lower energy consumption, smart lighting, water-efficient infrastructure, and advanced waste separation at their facilities. Event teams are adopting more paperless workflows, using recycled materials, and tracking the carbon impact of their logistics more closely than before. Furthermore, UAE government policies place tourism and business events in the context of sustainable and environmentally responsible business practices, which are becoming a necessity for any company putting together a calendar of events in the region for 2026.

Organizations are no longer planning corporate events in Dubai that are simply packed with dense agendas and presentations. The focus is shifting towards experiences that blend work value, fun, hospitality, and culture: designed networking lounges, wellness activities, food experiences, souk-style marketplaces, offsite experiences built around local art, and even trips into the desert. Dubai’s hospitality ecosystem allows planners to curate events that feel distinctive, memorable, and high in quality. This appeals to corporate teams and global brands, for whom hosting a meeting or product launch that feels exclusive and premium has become an essential part of the value on offer.

The momentum of MICE in Dubai is significantly bolstered by initiatives from Dubai government-related entities. Organisations such as Dubai Business Events and the Department of Tourism and Commerce Marketing are actively building international partnerships, securing global congresses, and developing local capacity to improve the business tourism infrastructure in the Emirate. New transit links, new venues, and favourable regulations for hosting events have made Dubai easier to access and more attractive for planners. By 2026 and beyond, these efforts will further position Dubai amongst the top international destinations.

Dubai’s new event economy runs on data, personalisation, and operational scale, exactly where most organisations struggle when running high-stakes corporate events in the region. Samaaro addresses that gap by giving enterprise teams one platform to manage registration intelligence, behavioural analytics, personalised agenda delivery, lead qualification, and post-event ROI without relying on fragmented tools.

Dubai’s event ecosystem rewards precision, personalisation, and high-quality execution. Samaaro gives global brands the intelligence layer required to meet those expectations.

Dubai’s corporate events have started a new chapter of innovation, sustainability, luxury, and government-assisted growth. By offering planners distinctive, high-impact experiences that engage attendees, Dubai has proven itself a formidable contender among global destinations. As planners seek out bold, future-ready event experiences, the combination of Dubai’s access, infrastructure, and growing supply of creative event formats has rewritten the script and set new standards for large-scale international corporate events.

The event trends emerging today will define what businesses encounter when hosting or attending a large-scale corporate event in 2026.

Find out how Samaaro supports enterprise events in key markets like Dubai.

AI chatbots have grown in popularity in the event industry as event teams look for scalable ways to handle attendee communication. These chatbots promise instant answers, real-time assistance, and a reduced need for staff. As events have grown larger and more complex, chatbots have appeared to be a good way to handle high volumes of attendee questions, letting teams scale without overburdening their human staff.

However, the results have been mixed. Some events find chatbots helpful for keeping turnaround times short and making the event easier for attendees to navigate, while others report frustrated attendees, citing unanswered questions or unresolved issues. This blog looks at what chatbots actually deliver, where they fall short, and how event teams can sensibly evaluate a chatbot’s true value before adopting one.

Chatbots excel in situations that are predictable and structured, where attendees ask the same question in different ways. Well-programmed chatbots can take the workload off live support staff and provide instant feedback without any live intervention.

Chatbots perform very well with:

Instant FAQs

An attendee may want quick details about timings, layout of the venue, parking, or access to wi-fi. A chatbot can provide an instant answer without needing to wait in line.

Schedule Lookups

Instead of going through the agenda and scrolling to locate a specific session, attendees can request the times for a session they are interested in, or the location of that session.

Personalised Reminders

Bots can help attendees stay on track and send prompts about sessions they have an interest in, or have saved/bookmarked.

Basic Navigation

Chatbots can provide instant information on where the general session hall, booths, lounges, or help desk is located.

In all of these instances, chatbots provide a level of convenience that is truly valuable, especially in larger conference settings where the live help desk becomes overwhelmed.

Chatbots encounter limitations when emotions, ambiguity, or context dependency are involved. Events are dynamic environments in which attendees often need a kind of judgment that automation can’t provide.

Chatbots fail at:

Empathy

A bot cannot comfort an attendee who is confused or stressed at check-in.

Contextual Understanding

Messages whose meaning is clear to a person but that contain typos or odd phrasing often produce answers that are disconnected from the attendee’s intent.

Flexibility

Bots use linear logic. Once a user moves outside those lines, the interaction becomes futile quickly.

Escalation to Humans

Bad chatbots will not offer a seamless transition when the user clearly needs a real person. Without a handoff, frustration ramps up very fast.

These are real gaps in the chatbot toolkit, and they suggest that chatbots should supplement human-centred roles, not replace them.

Chatbots often appeal to event managers because they reduce manpower and lower staffing costs. They can efficiently manage thousands of repetitive queries and free human teams for higher-value interactions.

However, cost savings cannot be evaluated in isolation. A chatbot that delivers poor responses can damage attendee satisfaction, reduce trust in the event brand, and ultimately undermine the experience.

An accurate ROI assessment should consider:

Cost Efficiency

Less dependency on on-ground staff and faster resolution times.

Experience Quality

Whether attendees feel supported or annoyed during interactions.

Brand Impact

A malfunctioning chatbot can reflect poorly on the overall event, signalling a lack of preparedness or care.

The goal is to ensure that automation enhances experience rather than replacing essential human touchpoints.

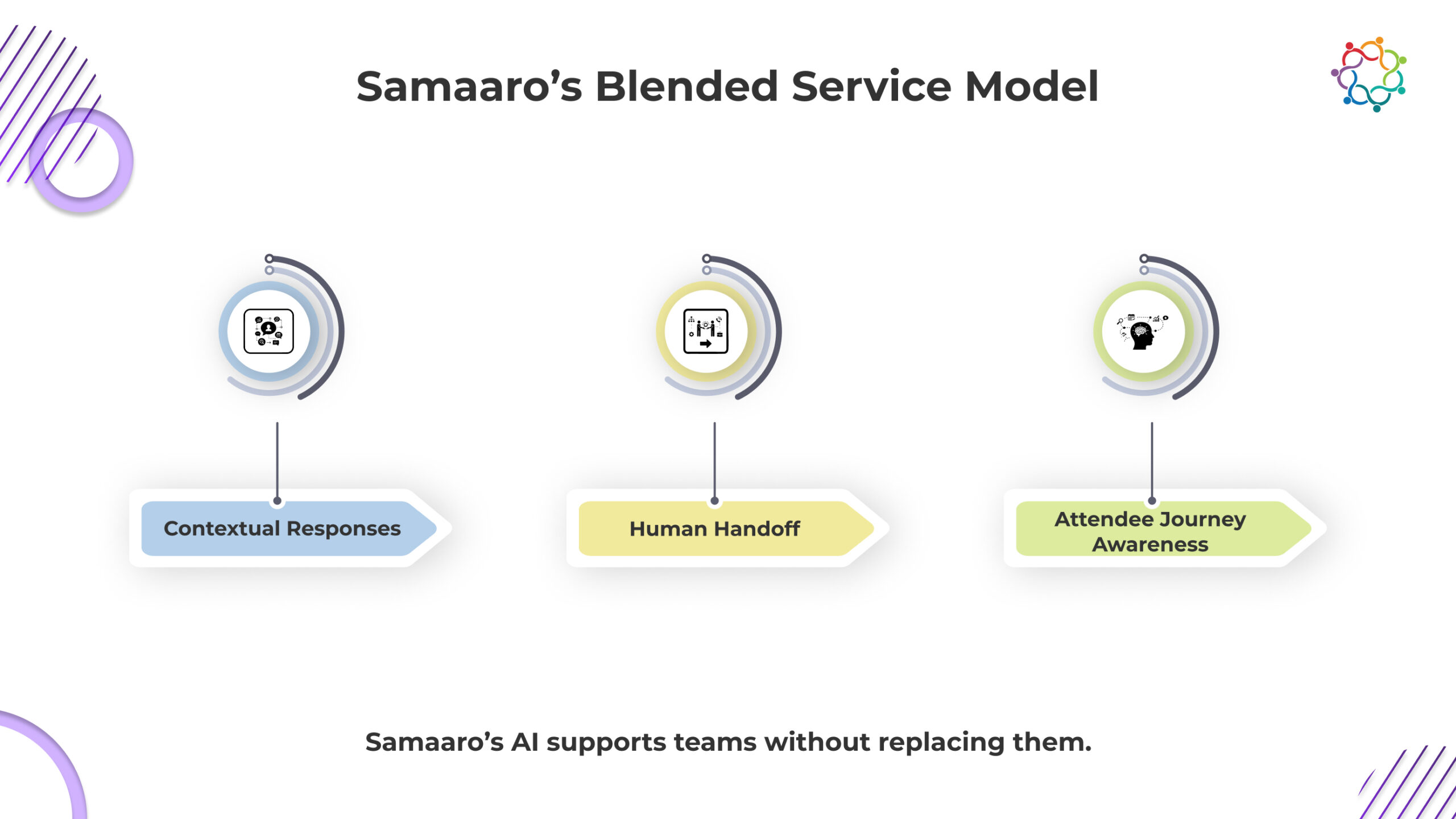

The most effective event organisations use a hybrid support model that combines AI efficiency with human availability. Chatbots handle high-volume, straightforward requests, while trained staff manage nuanced or emotionally sensitive cases.

A blended model looks like this:

AI handles FAQs, schedule checks, reminders, navigation, and rule-based queries.

Humans handle escalations, logistical exceptions, personal disputes, technical issues, and anything requiring empathy.

This approach reduces workloads without compromising experience. It treats automation as a tool that supplements human service, ensuring attendees always receive the right type of support.
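The split described above can be sketched as a simple routing rule. This is a minimal illustration, not a description of any particular chatbot product: the intent labels, the `route_query` function, and the 0.7 confidence threshold are all hypothetical, and a real platform would supply its own intent classifier.

```python
# Hypothetical intent labels; a real chatbot platform would define its own.
AI_INTENTS = {"faq", "schedule", "reminder", "navigation"}
HUMAN_INTENTS = {"escalation", "logistics_exception", "dispute", "technical_issue"}


def route_query(intent: str, confidence: float, threshold: float = 0.7) -> str:
    """Return "bot" or "human" for an already-classified attendee query."""
    # Sensitive or unrecognised intents always go to a person.
    if intent in HUMAN_INTENTS or intent not in AI_INTENTS:
        return "human"
    # A low-confidence classification is treated as a reason to hand off.
    if confidence < threshold:
        return "human"
    return "bot"


print(route_query("schedule", 0.92))  # routine request, handled by the bot
print(route_query("dispute", 0.99))   # sensitive request, handed to staff
```

Defaulting unknown or low-confidence queries to a human is the design choice that keeps the handoff from becoming a dead end.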

With the appropriate strategies, AI chatbots can be a useful resource at events. They excel at answering repetitive inquiries that are not emotionally complex and have a simple answer. Teams should deploy chatbots with clear expectations and as part of a blended service model that maintains the quality of the attendee experience.

Find out how Samaaro assists teams in designing evenly balanced engagement workflows that leverage the power of intelligent automation.

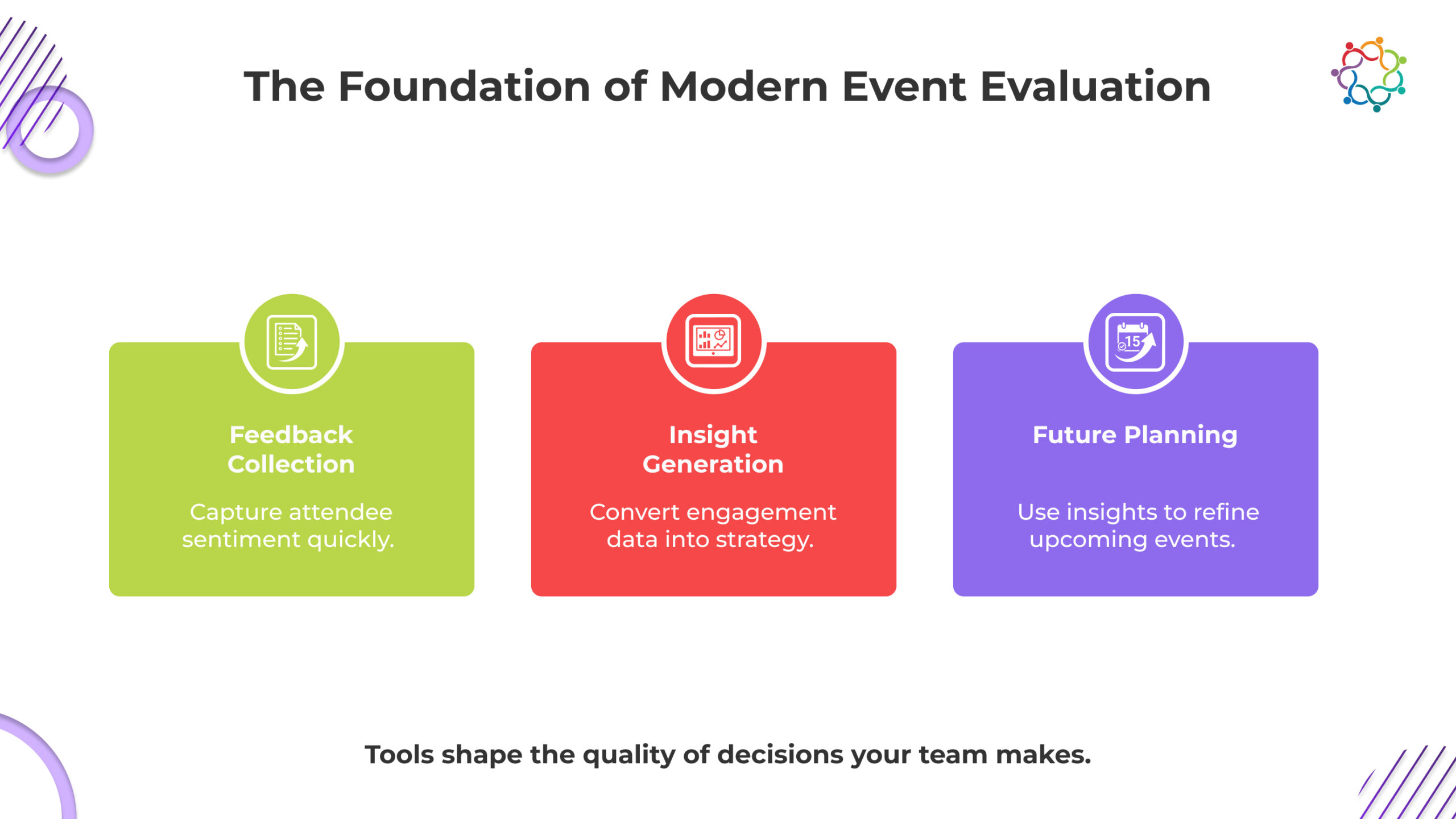

Many event teams still treat post-event evaluation as a bureaucratic routine: the report gets produced, distributed, and stored away. The problem is not insufficient data. The problem is that the evaluation is not deliberately tied to the organization’s business objectives.

Most reports cover surface-level engagement without offering strategic intelligence on the larger issues that leaders care about. Did the event support revenue teams? Did customer sentiment increase? Did participants engage through the organization’s marketing channels? Which areas of focus attracted audience interest? Which event attributes should be dropped from the next event?

Post-event evaluation should be viewed as a continuous improvement process. Each piece of intelligence should inform planning, audience, and investment decisions. When done well, post-event evaluation serves as a growth engine that helps marketers justify spend, improve formats, and show meaningful business impact. When done poorly, it is a collection of impressive-looking charts with no meaningful clarity. The difference lies in avoiding the common mistakes that quietly sabotage the evaluation process.

Event metrics related to attendance, social engagement (likes, shares, etc), or total registration are typically the first numbers that teams will share. These metrics are relatively easy to track, but they really do not tell you much about performance. A big audience that never converts is not really a win. The number of impressions for a campaign does not speak to lead value.

The real value is found in outcome-based metrics: how many demos were booked because of the event, how many qualified leads were generated, how many existing customers re-engaged with the brand, and how pipeline movement improved because of the follow-up. Attendance only gains meaningful context when paired with intent indicators. When teams exchange vanity metrics for KPIs tied to revenue, the evaluation is much more honest and far more actionable for decision making.

How to avoid this: Build your reporting framework around the actions taken by the audience. Demo sign-ups, content downloads, meeting requests, opportunity status updates, and post-event conversions all demonstrate actions taken. They tell a clearer story about what impact, if any, you had, and they help marketing demonstrate value to leadership.

Feedback is among the most influential inputs to a post-event evaluation, and it is also one of the most mishandled. Sending long, one-size-fits-all surveys several days after the event is unlikely to produce quality feedback. Generally, attendees will either ignore them or fill them out with little thought. In either case, teams often end up extracting ‘actionable’ takeaways that do not match actual audience sentiment.

Quality feedback begins with an understanding of the purpose of the data and the timing of the request. Short, contextualized questions sent a few minutes or hours after a session will produce higher engagement and better accuracy. Instead of asking participants broad satisfaction questions, teams should ask about session relevance, depth of content, speaker quality, and even intent to take the next step with the brand. These specific prompts help teams understand what truly resonated (or did not) with the audience.

How to avoid this: Create a feedback process that includes session-level micro surveys, exhibitor or booth feedback for trade shows, and NPS directly following the event. Keep it brief, tied to an action, and focused.
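For the NPS step, the standard calculation is the percentage of promoters (ratings of 9 or 10) minus the percentage of detractors (ratings of 0 to 6) on a 0 to 10 scale. A minimal sketch follows; the function name and sample ratings are illustrative only.

```python
def net_promoter_score(ratings: list[int]) -> float:
    """Compute NPS from 0-10 'how likely are you to recommend' ratings."""
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for r in ratings if r >= 9)    # ratings of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)   # ratings of 0 to 6
    # Passives (7 or 8) count toward the total but toward neither group.
    return 100 * (promoters - detractors) / len(ratings)


# Illustrative ratings only: 3 promoters, 1 passive, 0 detractors.
print(net_promoter_score([10, 10, 9, 8]))  # 75.0
```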

Stated feedback reveals what people say. Behavioural data reveals what people do. A lot of evaluation reports use only survey data or other surface metrics, thereby missing the real insights that behavioural analysis can provide. This can create blind spots as, for example, an attendee may express one sentiment in a survey while exhibiting completely different behaviours during the event.

Behavioural insights indicate true engagement. Session dwell time indicates whether attendees stayed for the session or dropped off. Booth visits indicate the level of interest in a specific solution or product. Link clicks, content downloads, general content consumption, and attendee interaction all illustrate the digital body language of the audience. When behaviour trends are interpreted properly, they show intent and provide clarity that surveys alone cannot, and they often validate survey responses too.

How to avoid it: Combine feedback responses with behavioural analytics. Track dwell time, engagement heatmaps, content interactions, and visit patterns inside event spaces. Use these signals to understand buyer interest and refine targeting for future events.

Disjointed data is one of the biggest challenges in post-event evaluation. Registration platforms, badge scanners, surveys, CRM systems, and engagement tools often sit in silos. When teams try to collate everything manually, work is delayed and mistakes occur. Inevitably, this leads to missing insights and reports that are stale by the time they reach decision-makers.

Disjointed data also prevents teams from building an aggregated attendee profile. When teams don’t have complete data, it becomes challenging to identify high-intent behaviour or map the buyer journey. This impacts future event strategy and revenue.

How to avoid it: Invest in platforms that unify registration data, engagement analytics, CRM records, survey results, and lead qualification into a single source of truth. Automated data consolidation ensures accuracy and saves operational hours that can be used for deeper analysis.
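To make the idea of a unified attendee profile concrete, here is a minimal sketch that merges records from several sources on a normalised email address. It assumes every source exposes an `email` field; the field names and the `build_profiles` helper are hypothetical, and a production platform would also need identity resolution and conflict handling that this sketch omits.

```python
from collections import defaultdict


def build_profiles(*sources):
    """Merge record lists from several systems into one profile per email."""
    profiles = defaultdict(dict)
    for source in sources:
        for record in source:
            # Normalise the key so "Ana@Example.com " and "ana@example.com" match.
            key = record["email"].strip().lower()
            # Later sources overwrite earlier ones when field names collide.
            profiles[key].update(record)
            profiles[key]["email"] = key
    return dict(profiles)


# Illustrative records only.
registrations = [{"email": "Ana@Example.com", "industry": "SaaS"}]
session_scans = [{"email": "ana@example.com ", "dwell_minutes": 42}]
crm_records = [{"email": "ana@example.com", "stage": "demo_booked"}]

print(build_profiles(registrations, session_scans, crm_records))
```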

A single post-event report, considered by itself, cannot tell the whole story. Without context, performance can be perceived as better or worse than it actually is. Many teams stop at evaluating one event on its own, which impedes strategic learning and keeps long-term trends from emerging.

Comparative analysis tells teams whether engagement improved from the prior year, whether the new format engaged a more discerning audience, and whether specific sessions drive conversions consistently. Trend analysis is what separates tactical reporting from strategic assessment, because it describes direction and momentum.

How to avoid it: Build evaluation frameworks that include year-over-year comparisons, channel-level impact, audience growth trends, recurring session performance, and changes in lead quality. This creates a richer understanding of what is working and what needs refinement.
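A year-over-year comparison is simply the percentage change of each metric against the prior event. The sketch below assumes each event is summarised as a dictionary of metric totals; the metric names and figures are invented for illustration.

```python
def year_over_year_change(current: float, previous: float) -> float:
    """Percentage change from the previous period to the current one."""
    if previous == 0:
        raise ValueError("previous value must be non-zero")
    return 100 * (current - previous) / previous


def compare_events(this_year: dict, last_year: dict) -> dict:
    """Compare only the metrics that were tracked in both years."""
    shared = this_year.keys() & last_year.keys()
    return {
        metric: round(year_over_year_change(this_year[metric], last_year[metric]), 1)
        for metric in sorted(shared)
    }


# Illustrative figures only.
last_year = {"qualified_leads": 100, "demos_booked": 40}
this_year = {"qualified_leads": 120, "demos_booked": 30}

print(compare_events(this_year, last_year))
# {'demos_booked': -25.0, 'qualified_leads': 20.0}
```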

(Also Read: Mastering Your Event Evaluation Timeline: A Key to Smarter Events)

Most teams don’t fail at evaluation because they lack data; they fail because the data sits in six different systems that never talk to each other. Samaaro eliminates this by consolidating registration records, attendee behaviour, CRM status changes, and feedback into a unified reporting layer. No exports, no stitching, no manual hunting for insights.

Samaaro gives teams what evaluation should have delivered in the first place:

Instead of charts with no interpretation, Samaaro produces outcome-linked intelligence that informs budgets, targeting, and next-cycle planning. The platform pushes teams past descriptive reporting (“what happened”) into diagnostic and predictive intelligence (“why it happened” and “what to do next”).

Samaaro’s evaluation layer transforms events from isolated activities into measurable revenue programmes.

Evaluating an event should not be an end-of-cycle exercise. When shaped by strategy, it becomes a highly valuable tool in the event marketer’s toolbox. By not falling into common traps, teams can move past vanity reporting and reveal insights that will improve design, enrich audience engagement, and impact business results.

Discover how Samaaro simplifies post-event evaluation with automated reporting and ROI dashboards.

The evaluation of events is no longer reliant on manual effort or disparate data points. Today, the tools you leverage will determine the insight you capture from your audience. A strong evaluation platform does more than collect feedback: it weaves together audience insight, session engagement, and revenue implications into a coherent story. When teams adopt strong evaluation tools, evaluation is elevated from an operational task to a strategic function that informs planning and investment decisions. On the other hand, when teams do not use a good evaluation product, reporting becomes a slow, fragmented process with limited data points and learnings.

This blog compares top event evaluation tools across four areas, so enterprise teams can determine the best tool for their needs considering size, integration needs, and depth of analytics.

Feedback plays a major role in helping organizations create insights and action plans. Tools like Typeform, SurveyMonkey, and Google Forms allow organizations to collect attendee sentiment quickly. They are easy to use, inexpensive, and appropriate for organizations that need a lightweight feedback loop, usually without complex analytics.

But they are not without limitations. They gather what attendees say but do not capture what they do. They do not track in-session behaviour, session dwell time, or heatmaps. They also rely on manual compilation when scaling. They can be valuable for small teams or standalone events, but in many cases they are insufficient for enterprise-class analysis.

Best for: Quick sentiment checks, simple surveys, SMB-scale events, simple NPS gathering.

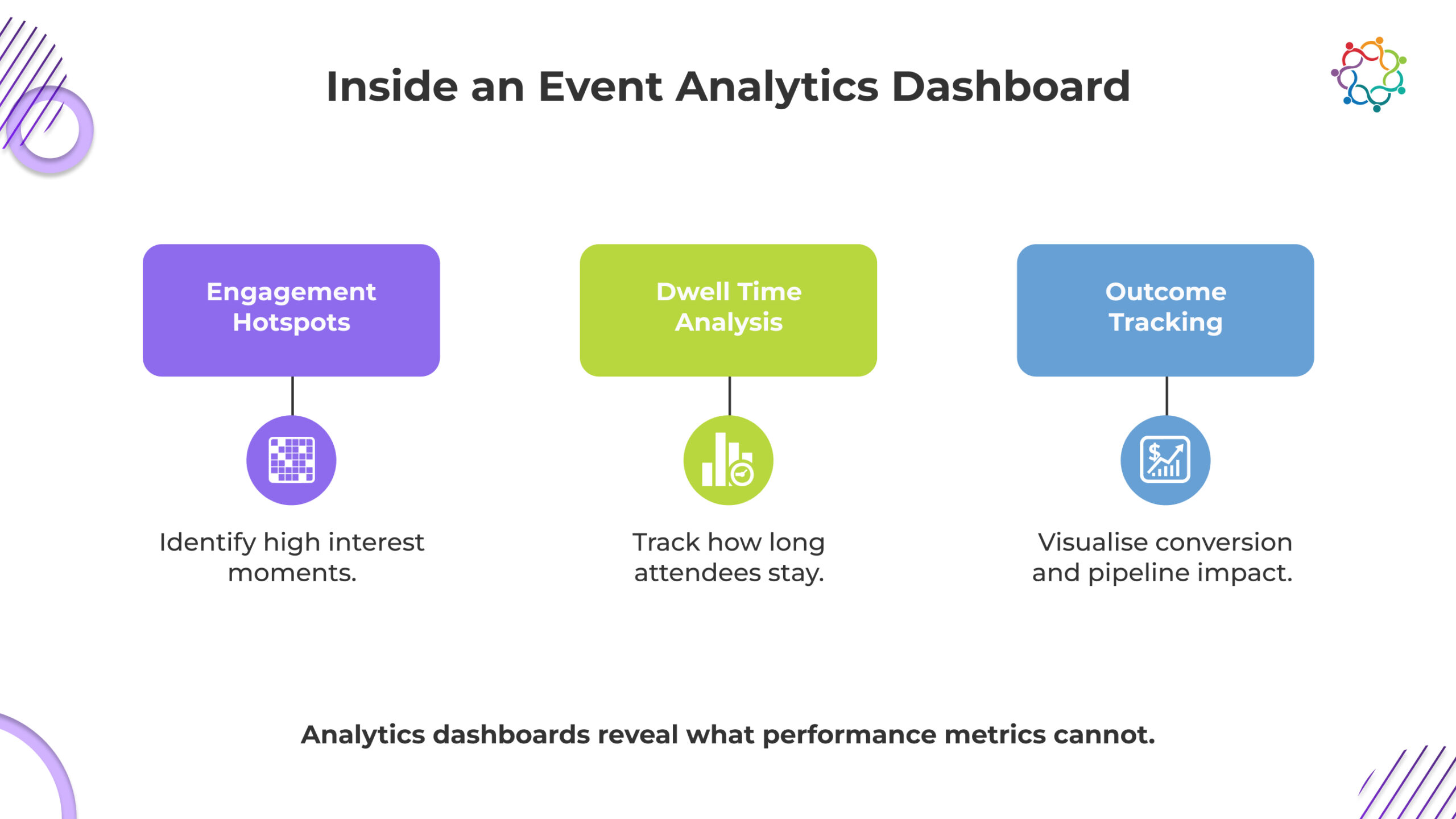

Analytics dashboards provide visibility into engagement, performance, and outcomes. Platforms such as Samaaro and Bizzabo offer dashboards where teams can track attendee movement, session attendance, booth visits, content engagement, and lead quality. They transform raw numbers into visual intelligence that helps stakeholders discern what worked and why.

Analytics dashboards also automate manual work. Data flows in from registration, engagement tools, mobile apps, and the CRM system. Teams can measure conversions, segment by high intent, and compare session attendance and engagement by format or group.

Best for: Enterprise events, multi-session conferences, exhibitions, engagement tracking, ROI tracking, year-over-year tracking.

CRM-integrated tools connect event activity directly to the revenue engine. Event evaluation platforms with Salesforce or HubSpot integrations, or with purpose-built connectors, link attendee engagement to the sales pipeline. This gives you a clear line of sight from interactions at the event to business outcomes.

When event data is housed inside a CRM, sales teams can see engagement alongside intent. They can view which prospects attended specific sessions, downloaded resources, and visited exhibitors. This allows for more contextual follow-ups and strengthens conversion opportunities. For marketing teams, CRM integration makes attribution concrete and provides insight for pipeline forecasting.

Best for: Revenue-focused organisations, account-based marketing, making sellers’ lives easier, and high-engagement B2B events.

AI-powered assessment platforms make sense of feedback data in ways that manual review cannot at scale. They can review open-text feedback, identify sentiment trends, and surface themes across thousands of responses. Some systems can even recommend changes to strategy based on what they have observed.

But AI can do more than evaluate feedback. It can identify audience segments, assess intent, or flag engagement drop-off during a session. This means event teams can modify content, targeting, and messaging in the moment or during a future planning cycle. AI platforms are most valuable for teams that run events at scale with diverse audiences.