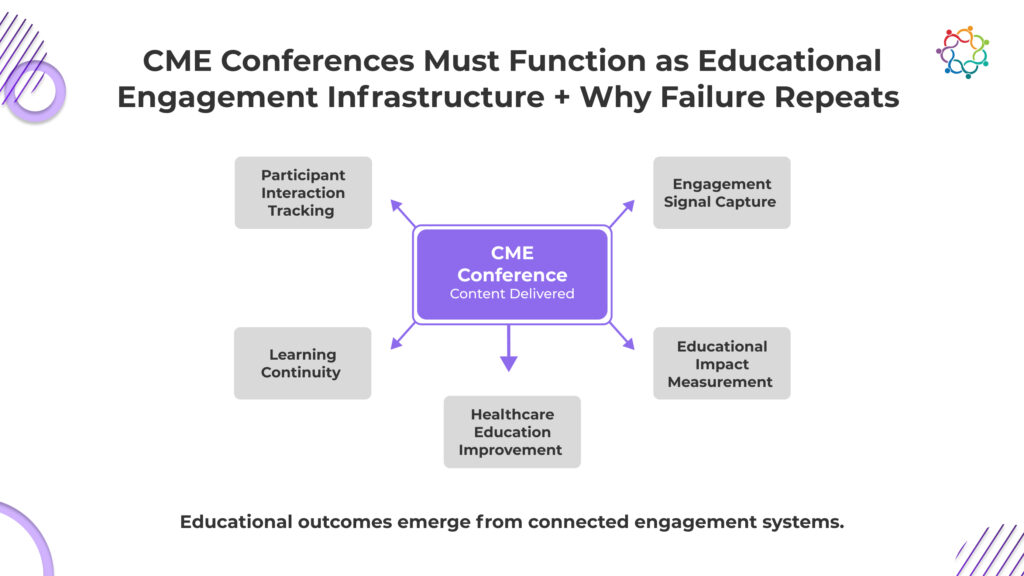

CME conferences look successful by every institutional measure that exists. Scientific content is strong. Expert faculty deliver credible education. Attendance is consistent. Compliance requirements are fully met. On paper, nothing is broken.

But educational impact does not come from content delivery. It comes from participant engagement. And that is where visibility disappears.

Organizations can confirm who attended. They cannot confirm who actively learned. They can document session completion. They cannot prove knowledge absorption or educational influence.

This creates a structural illusion. Education appears effective because it was delivered. Not because it changed anything.

When engagement is invisible, learning becomes an assumption rather than an outcome. Institutions protect the educational process while losing control over educational impact.

This blog covers why educational delivery creates institutional confidence without guaranteeing educational impact, and why engagement visibility is the missing link between content and learning.

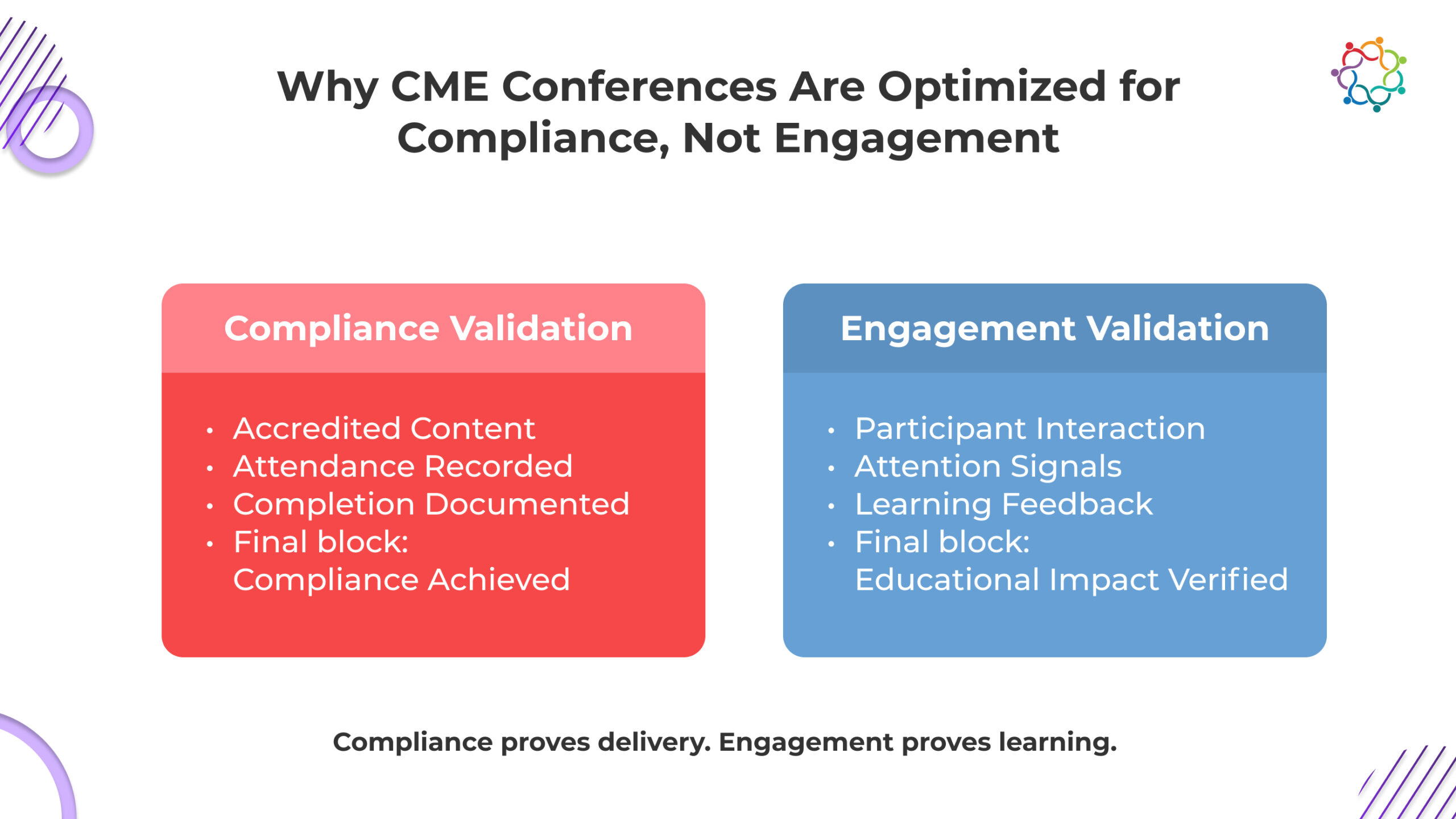

Compliance frameworks exist to protect educational credibility. They ensure scientific validity. They enforce content accuracy. They confirm that medical education meets institutional and ethical standards.

This structure serves an essential purpose. It protects trust in continuing medical education events. But compliance frameworks were never designed to measure engagement.

They confirm that the content was delivered correctly. They do not confirm that participants actively engaged with it. The result is structural misalignment.

When education systems optimize around compliance, they prioritize delivery integrity. Engagement becomes secondary. Interaction becomes optional. Participation depth becomes invisible.

The system rewards completion. It does not reward cognitive involvement.

This creates a predictable chain of consequences:

This is not a failure of intent. It is a structural outcome.

Compliance protects credibility. It does not create engagement. Educational effectiveness depends on interaction, reflection, and reinforcement. Compliance systems do not track these variables.

The institutional consequence is unavoidable. Education becomes operationally complete but educationally unverified. This creates a silent erosion of educational influence.

Organizations continue delivering education. They lose visibility into whether learning is actually occurring.

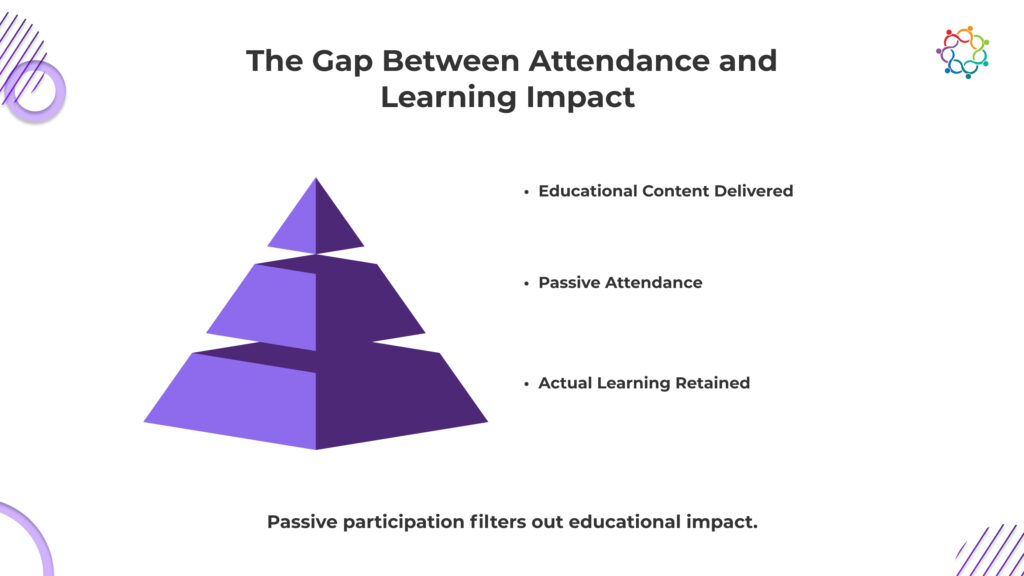

Attendance provides reportable metrics. It confirms participant presence and session completion. It does not confirm comprehension, retention, or applied change.

Presence in a session does not indicate cognitive engagement. Exposure to content does not demonstrate understanding. Completion does not establish clinical influence.

In many educational environments, attendance metrics function as proxies for impact. However, proximity to content is not equivalent to knowledge transfer.

If institutions cannot identify which participants internalized key concepts, where confidence shifted, or whether clinical reasoning evolved, learning impact remains unverified.

This distinction matters. Reporting participation volume without validating educational progression creates a measurement gap.

Attendance supports documentation. Measurable learning progression supports credibility.

Without separating the two, reported educational success may not reflect actual educational influence.

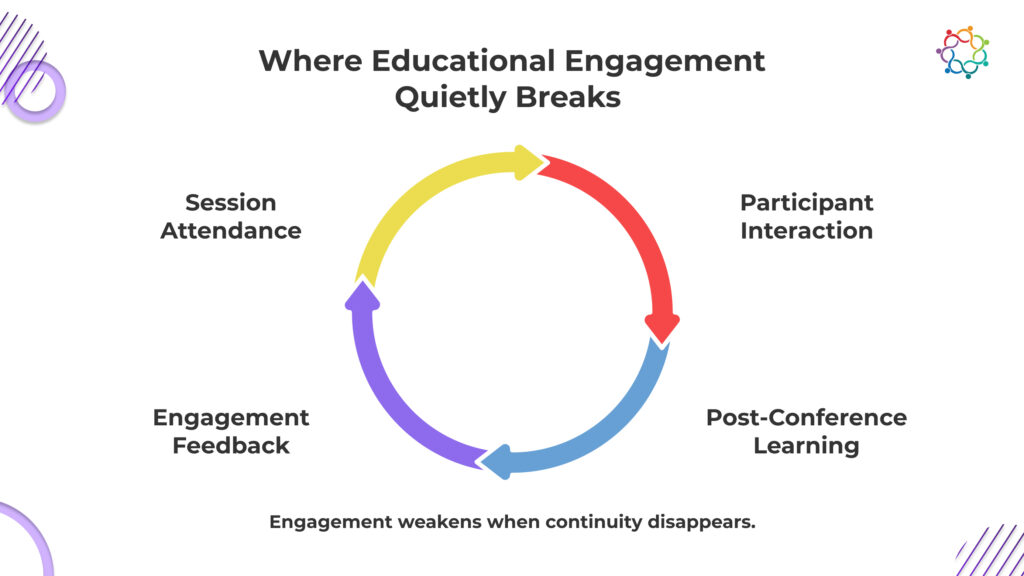

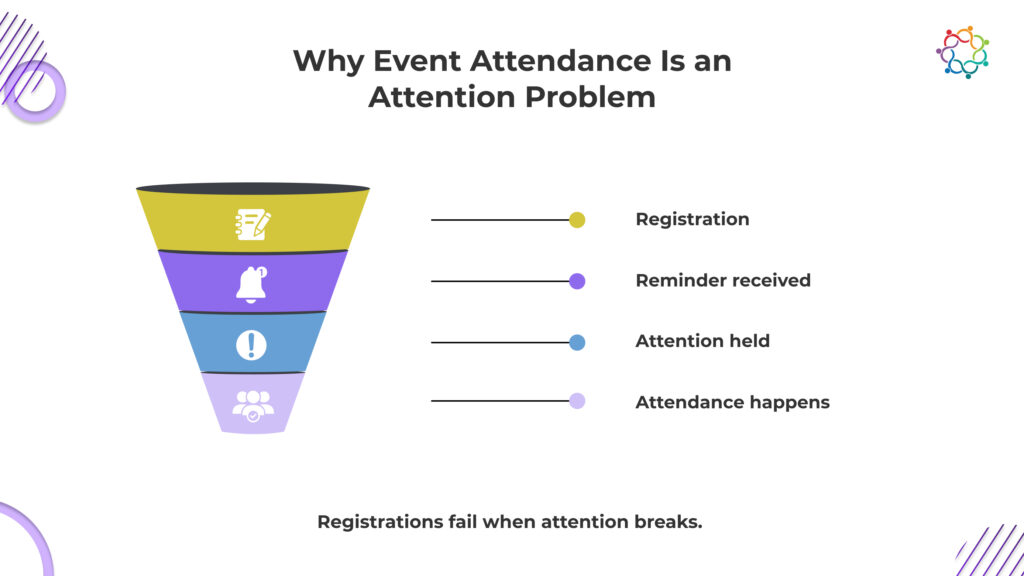

Educational engagement does not collapse instantly. It deteriorates through a sequence of invisible failures. Each stage reduces engagement clarity. Each stage weakens the learning impact.

Participants sit through sessions, but organizers cannot identify who asked questions, who responded to key concepts, or who disengaged midway. Attendance logs flatten every participant into the same category. Active cognitive involvement and passive listening look identical in institutional records.

This removes the ability to isolate where learning actually happened.

Educational leaders are left with session completion data, not learning evidence. Without interaction visibility, educational effectiveness remains an unverified assumption, not a confirmed outcome.

During sessions, participants experience moments of clarity, confusion, and doubt. These moments define learning. Yet most of this feedback is never captured when it happens. Post-event surveys rely on delayed recall, which weakens accuracy. Participants forget specific friction points. Educational leaders receive generalized satisfaction responses instead of precise learning signals.

This prevents faculty and institutions from identifying where education succeeded or failed.

Without real-time feedback integrity, educational evaluation becomes a broad opinion, not precise educational intelligence.

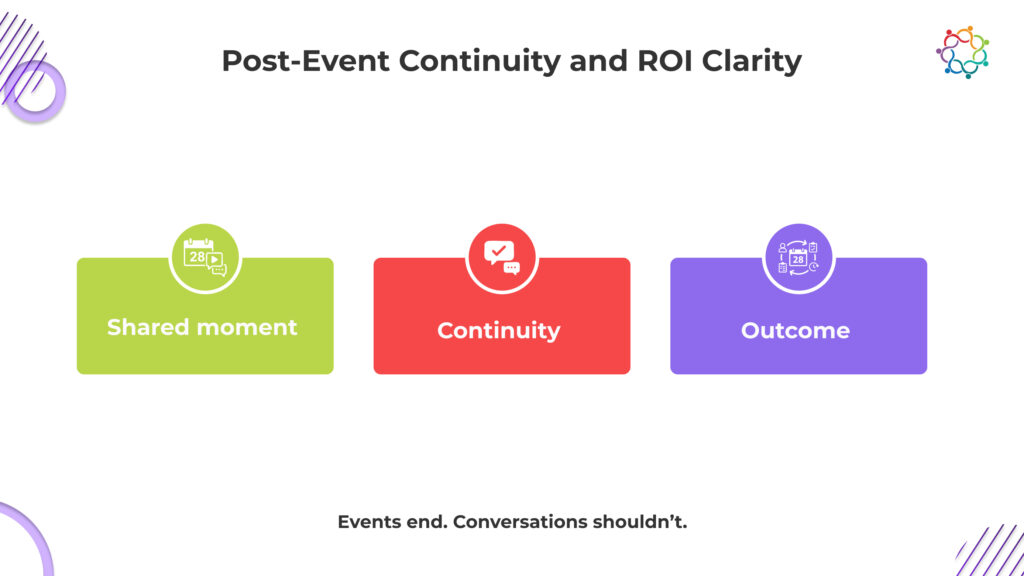

Once the conference ends, participant visibility stops. Institutions do not know whether attendees revisited concepts, applied knowledge in clinical settings, or disengaged entirely. There is no structured mechanism to observe knowledge progression after session completion. Educational influence becomes time-bound to the event itself.

This prevents organizations from linking education to sustained professional impact.

Without post-conference engagement continuity, learning remains an isolated exposure rather than a measurable progression that strengthens clinical competence over time.

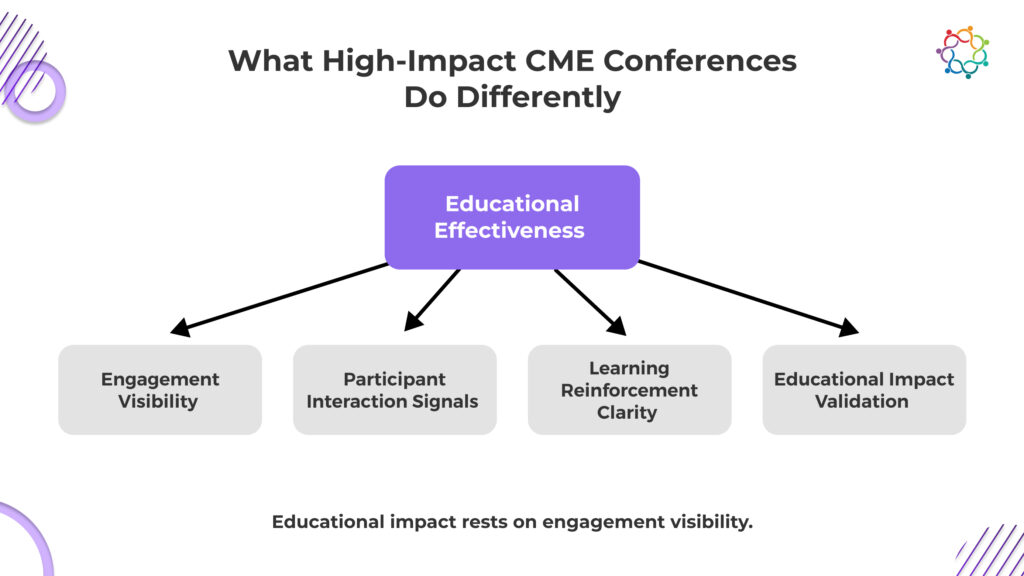

The difference between low-impact and high-impact educational environments is not content quality. It is engagement visibility.

Low-impact environments optimize for:

High-impact environments optimize for:

This structural difference determines educational effectiveness.

Content does not create impact. Engagement does.

Educational value emerges when participants interact with knowledge, not when they are exposed to it. This is where CME conferences either create influence or lose it entirely.

When engagement is visible, educational leaders gain control. They can see learning progression. They can measure educational clarity. They can validate educational effectiveness.

When engagement is invisible, education becomes speculative.

Institutions assume learning occurred. They cannot prove it. This distinction separates procedural education from impactful education.

Most educational programs operate without persistent proof of educational control. Sessions are delivered. The event concludes. Visibility into participant progression diminishes immediately after.

This is not a communications issue. It is an infrastructure limitation.

If education influences clinical thinking, that influence should generate observable signals. Without observable signals, educational impact becomes inferential rather than measurable.

Engagement infrastructure means the event produces structured, traceable learning signals that persist beyond the session itself, signals that can be analyzed, reinforced, and institutionally defended over time.

In practical terms, this requires:

Without these mechanisms, education is delivered but not governed.

Attendance records do not demonstrate comprehension.

Session completion does not demonstrate retention.

Faculty credibility does not demonstrate applied impact.

Only structured engagement evidence does.

When educational impact is questioned by leadership, accreditation bodies, or institutional stakeholders, defensibility depends on measurable progression, not participation volume.

Interactive learning models and structured signal capture are central to building this kind of engagement architecture. A deeper exploration of how interactive design strengthens CME defensibility can be found in this analysis on Enhancing CME Event Engagement with Interactive Learning.

This problem persists because your systems never demanded anything better.

Educational success is declared the moment attendance thresholds are met and compliance boxes are checked. Once those signals appear, scrutiny stops. No one asks who struggled to understand. No one investigates whether clinical confidence actually improved. The absence of engagement evidence is quietly tolerated because operational completion is easier to defend.

This is where leadership becomes exposed.

You are not facing engagement failure because it is unsolvable. You are facing it because your evaluation standards allow it to exist.

As long as attendance is accepted as proof, engagement will remain invisible. And when engagement remains invisible, learning remains unproven.

This means every future conference will repeat the same pattern. Education will be delivered. Success will be declared. And the one outcome that actually matters, verified learning, will remain the one thing you still cannot prove.

Healthcare institutions exist to influence clinical behavior, not to host educational gatherings. Yet most cannot prove that influence. CME conferences strengthen scientific credibility on the surface, but without engagement evidence, their institutional value remains exposed. Leadership believes education is working because delivery is complete.

But when impact is questioned, belief is not a defensible position. The absence of proof shifts education from a strategic asset to an unverified expense.

You can confirm sessions occurred. You cannot confirm they changed participants' thinking. This leaves educational outcomes open to doubt when leadership, regulators, or sponsors demand evidence of real impact.

Significant resources fund medical education. Without engagement visibility, you cannot show return in terms of learning progression, making future investment harder to justify with authority.

Education exists to improve decisions. If you cannot track retained knowledge or applied learning, clinical improvement becomes a claim without institutional backing.

Credibility is not built on delivery. It is built on provable influence. The moment you cannot prove learning, your educational leadership stands on completion records, not educational evidence.

Education delivery is not the same as educational impact. Sessions can be scheduled, delivered, and documented. That does not mean learning persisted.

Most institutions can prove attendance. Few can prove progression. That gap is not academic – it is structural risk.

When educational programs are questioned by leadership, accreditation bodies, or stakeholders, attendance records will not demonstrate comprehension. Completion certificates will not demonstrate application.

Only engagement evidence establishes defensible impact.

If a CME conference cannot demonstrate measurable participant engagement, it cannot credibly demonstrate educational effectiveness. At that point, impact becomes an assumption rather than an asset.

Forward-looking institutions are already reframing CME conferences as measurable learning infrastructure. The shift is not about improving content quality. It is about improving visibility into educational progression.

If engagement is invisible, educational authority is vulnerable.

Platforms built for structured engagement capture and longitudinal visibility – such as Samaaro – are enabling institutions to operationalize this shift from event delivery to measurable educational governance.

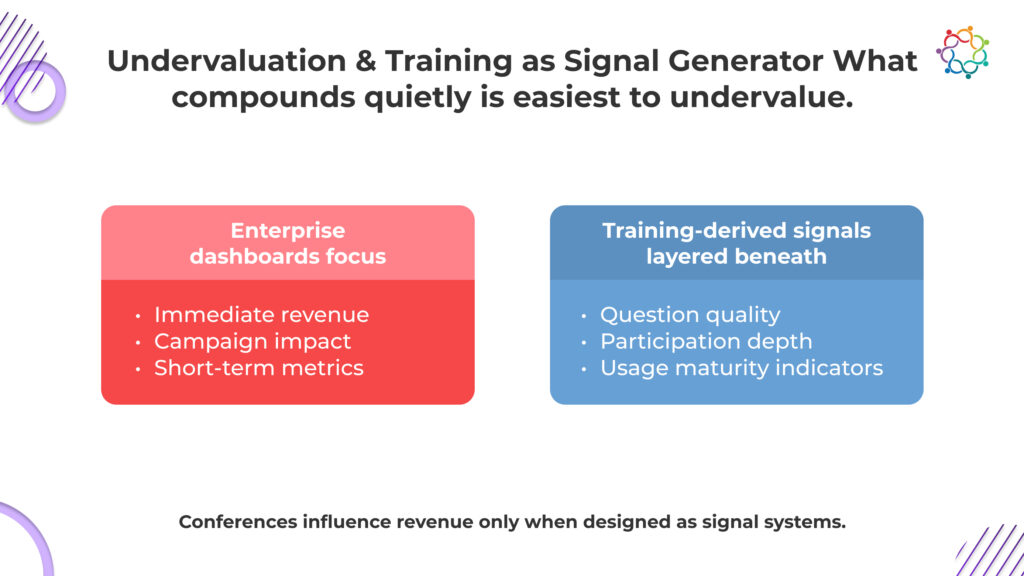

In most enterprises, training is categorized as support, not strategy. Budgets sit under enablement or customer success. Growth discussions center on acquisition, upsell, and pipeline acceleration. Yet the majority of revenue risk and expansion potential exists after the sale.

Training events rarely appear in growth narratives because they do not create visible spikes in revenue. They operate differently. They stabilize usage, increase confidence, and reinforce value realization over time. That compounding effect influences renewal confidence and expansion readiness more consistently than many front-end initiatives.

When customers fail to adopt deeply, churn risk increases. When users lack confidence, expansion stalls. Training addresses both conditions at their source by strengthening capability and reducing uncertainty.

The issue is not execution. It is classification. When training is viewed as a cost center, its influence on retention leverage and sustained product adoption remains invisible.

This blog explains why training events influence growth outcomes more reliably than many acquisition efforts and how learning translates into usage, retention, and long-term expansion.

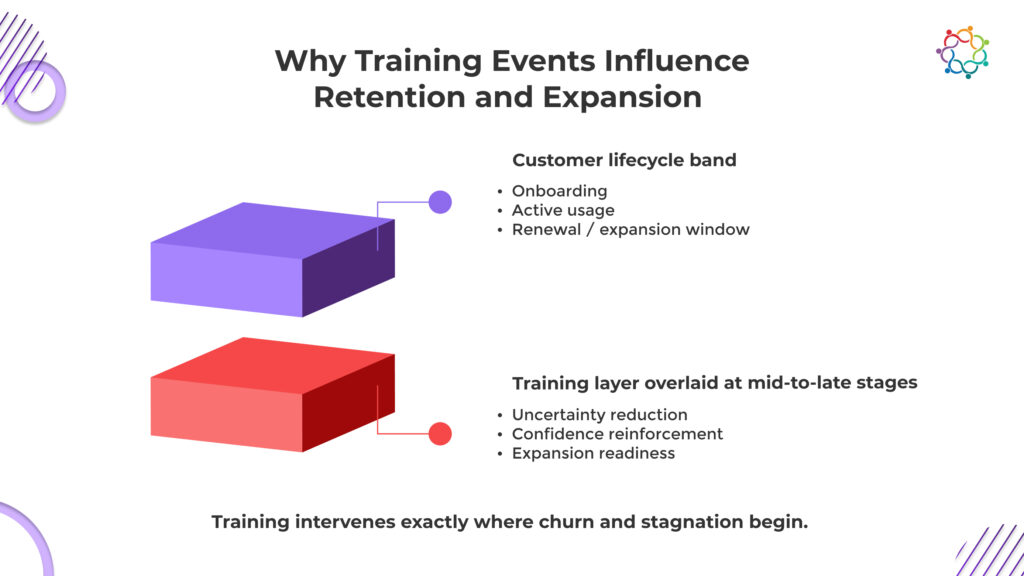

Retention does not fail because customers are unhappy. It fails because they are uncertain. When users are unsure whether they are extracting full value, renewal becomes a financial risk rather than a logical continuation. That uncertainty rarely begins in the final quarter of a contract. It forms months earlier through shallow adoption and inconsistent usage.

Expansion follows the same pattern. No stakeholder approves additional investment in a product that the team has not fully mastered. Without demonstrated value realization, upsell conversations stall. What looks like budget resistance is often capability hesitation.

This is where structured learning initiatives intervene. They reduce ambiguity, increase confidence, and strengthen usage maturity before renewal or expansion conversations begin. They influence the decision environment long before commercial discussions take place.

If retention and expansion are core growth metrics for your organization, then ignoring the mechanisms that shape user confidence is a strategic blind spot. Growth does not hinge only on selling more. It depends on whether customers feel competent enough to continue and confident enough to expand.

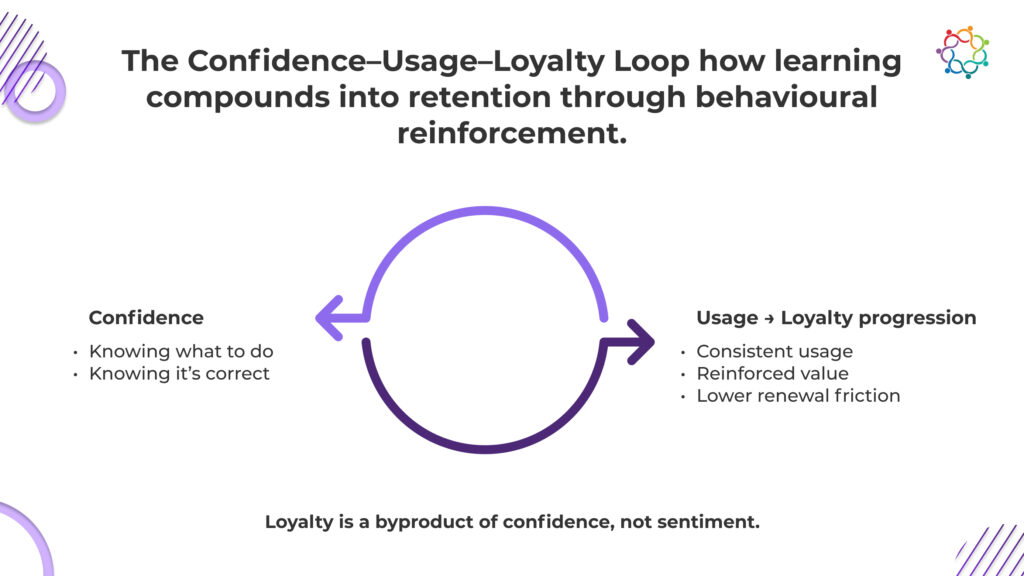

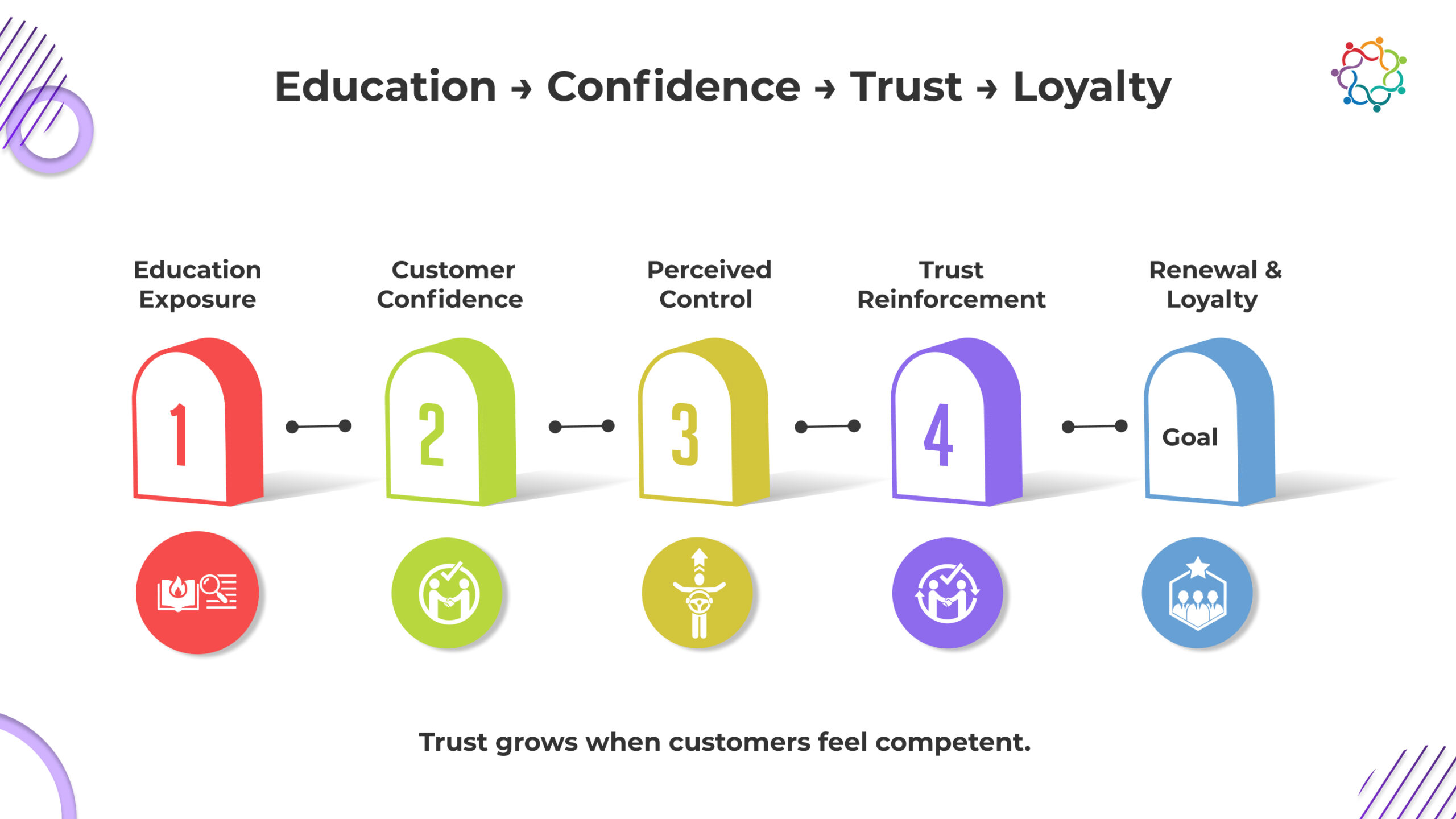

Training’s impact on growth is best understood as a loop where confidence leads to consistent usage, which then reinforces loyalty. This Confidence–Usage–Loyalty Loop is a practical framework for linking enablement efforts to measurable outcomes.

Users often hesitate to fully adopt a product when they are unsure of its correctness or impact. Even minor uncertainties, such as whether a process is being followed correctly, can prevent engagement. This lack of confidence translates into inconsistent or shallow usage, which limits the value realized from the product. Structured training events address this gap by:

Confidence is not an abstract psychological outcome. It can be quantified through behavioural measures, including higher participation in advanced workflows, fewer support enquiries, and increased feature utilisation. Without this foundation, users are unlikely to explore more sophisticated features, which limits adoption and growth prospects.
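As a rough illustration of how those three behavioural measures could be compared before and after a training event, here is a minimal sketch. The `UsageSnapshot` fields and the `confidence_signals` helper are hypothetical names chosen for this example, not part of any product or reporting tool, and the sample numbers are invented purely to show the mechanics.

```python
from dataclasses import dataclass


@dataclass
class UsageSnapshot:
    """Hypothetical per-account usage counts for one reporting period."""
    advanced_workflow_runs: int
    support_enquiries: int
    features_used: int
    features_available: int

    def feature_utilisation(self) -> float:
        # Share of available features actually used; 0.0 if nothing is available.
        if self.features_available == 0:
            return 0.0
        return self.features_used / self.features_available


def confidence_signals(before: UsageSnapshot, after: UsageSnapshot) -> dict:
    """Report which of the three measures moved in the 'more confident' direction."""
    return {
        "advanced_workflows_up": after.advanced_workflow_runs > before.advanced_workflow_runs,
        "support_enquiries_down": after.support_enquiries < before.support_enquiries,
        "feature_utilisation_up": after.feature_utilisation() > before.feature_utilisation(),
    }


# Invented sample data: one account, the period before and after a training event.
before = UsageSnapshot(advanced_workflow_runs=4, support_enquiries=12,
                       features_used=6, features_available=20)
after = UsageSnapshot(advanced_workflow_runs=9, support_enquiries=7,
                      features_used=11, features_available=20)

signals = confidence_signals(before, after)
improved = sum(signals.values())  # number of measures that improved
print(signals, improved)
```

The sketch deliberately reports each measure separately rather than blending them into a single score, since any weighting between them would be a further assumption.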

Once users gain confidence, consistent usage becomes the primary mechanism for reinforcing value. Regular engagement with the product allows users to:

Sporadic or inconsistent usage, on the other hand, erodes perceived necessity. A feature that is rarely accessed or incorrectly used appears optional, diminishing the overall product impact. Training events create structured opportunities for consistent engagement, ensuring that the value proposition remains visible and reinforced across teams.

Loyalty in enterprise customers is rarely a matter of affection or brand attachment. It emerges from competence, trust, and the ability to achieve measurable outcomes. Trained users become internal advocates for the product, influencing renewal discussions and supporting expansion decisions.

Key takeaways of this loop:

In this way, training events create growth structurally, ensuring that loyalty emerges from competence rather than marketing sentiment.

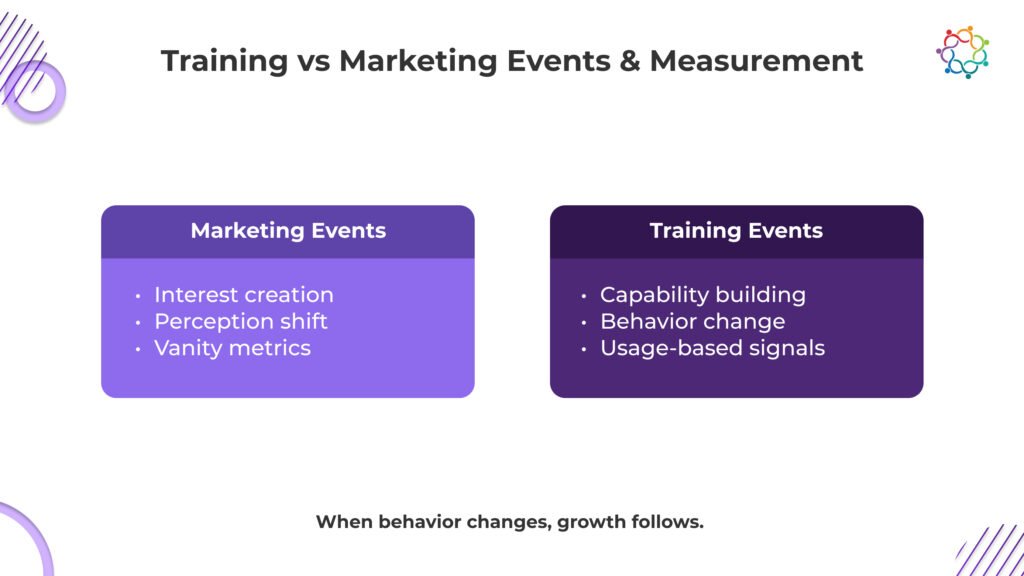

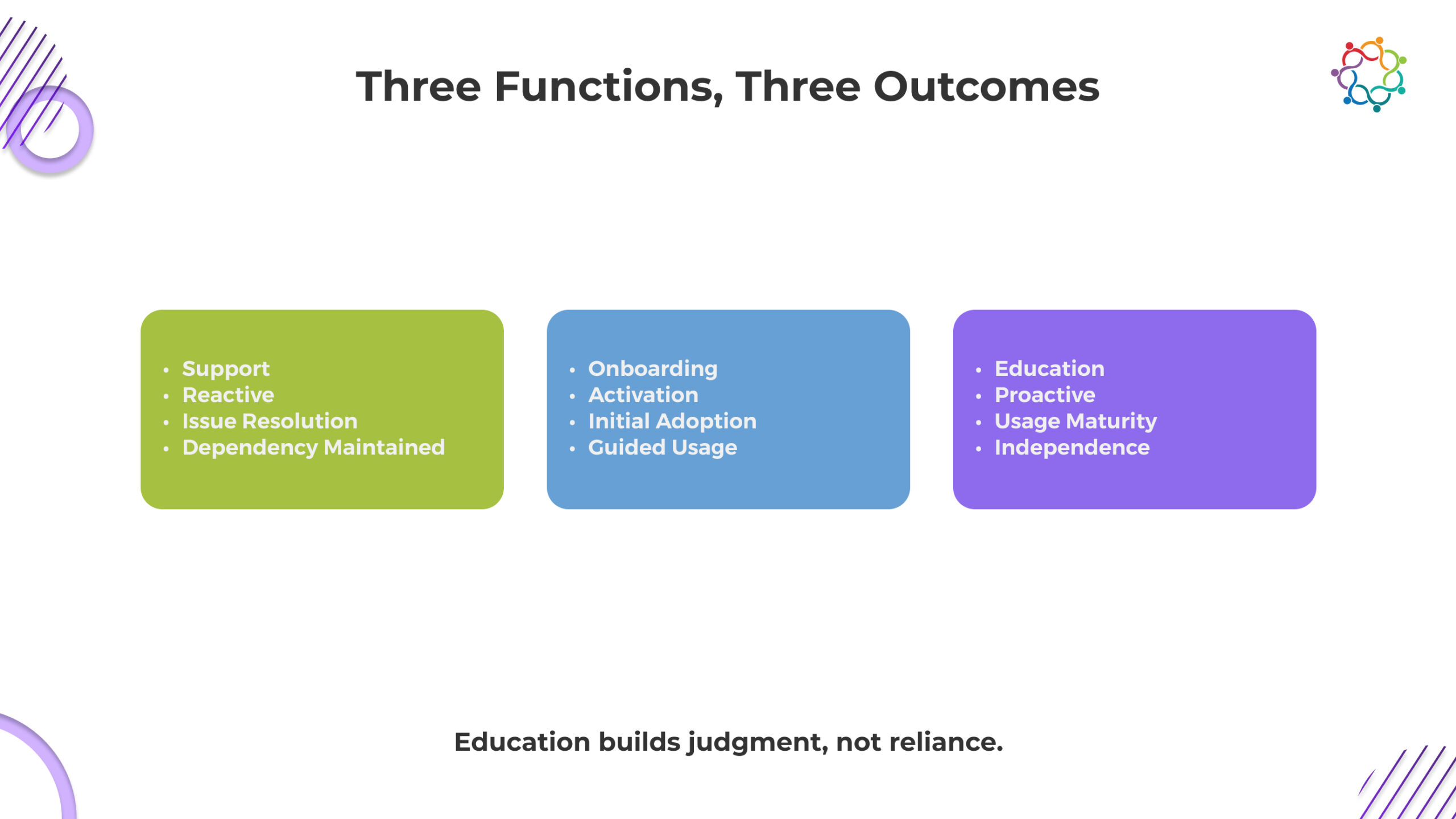

A fundamental misunderstanding that limits investment in training is the tendency to evaluate it with marketing KPIs. Marketing events are designed to create awareness, momentum, and interest. They change perception. Training events, by contrast, create capability and independence. They change behavior.

The critical distinction lies in intent and measurable outcomes:

Businesses consistently underestimate the impact of training when they use marketing assessment frameworks. Positive satisfaction ratings and high attendance are frequently seen as indicators of success, but they don’t account for the compounding effects of increased confidence and utilisation.

Leaders who understand this distinction can set realistic expectations and measure training in a way that reflects its economic importance. Enablement initiatives are then viewed as a direct contributor to retention and growth results rather than as a support role.

Evaluating training through traditional satisfaction surveys or attendance figures provides limited insight. Leaders must shift focus from vanity indicators to metrics that demonstrate real growth impact.

Many organizations rely on the following metrics:

While these numbers indicate activity, they do not demonstrate whether participants gained confidence, applied new skills, or increased product usage. Measuring presence rather than progress creates an illusion of impact, leading to misallocation of resources.

Metrics that genuinely correlate with growth include:

Rather than emphasising sentiment, these measurements show changes in behaviour. They demonstrate how training promotes adoption, lowers barriers, and helps users accomplish their goals.

Behavioral shift becomes visible when engagement is structured and tracked. In one global training seminar, 95% of attendees completed digital session check-ins and contributed over 600 structured feedback entries. Participation at that depth does more than measure attendance; it reveals learning engagement and signal quality that can be tied back to adoption maturity.

Organisations can justify strategic investment and measure the economic benefit of training events by concentrating on these variables.

Despite clear evidence of impact, enterprises often undervalue training campaigns due to structural and organizational blind spots.

During budget reviews, the initiatives with delayed visibility are the first to be cut, even when they compound revenue over time. This undervaluation reinforces the misconception that training is discretionary, when in reality it is structural to retention and expansion.

Recognizing the delayed yet compounding nature of training impact is essential. Enterprises that ignore it optimize for immediate optics while weakening long-term growth stability.

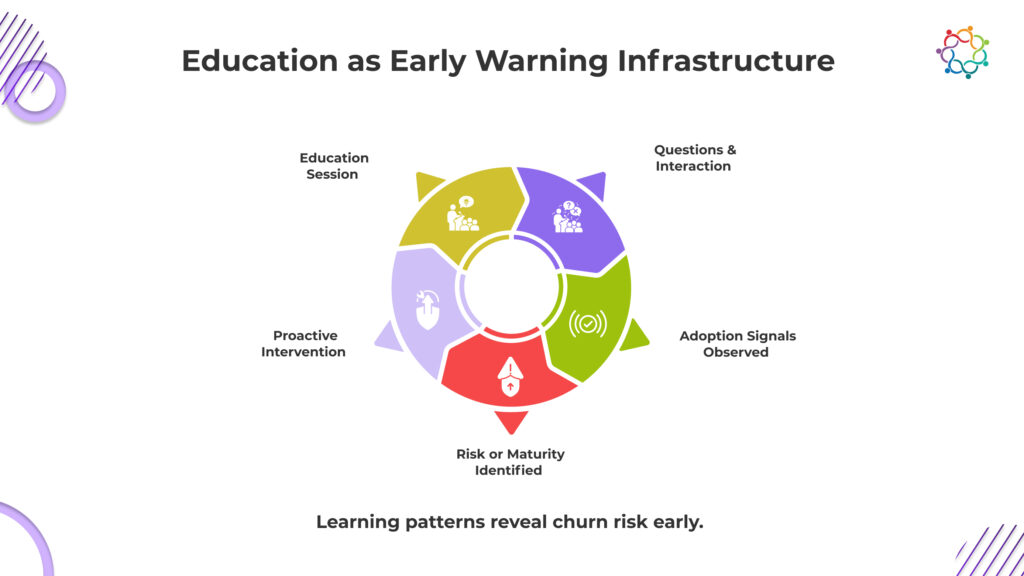

Beyond immediate adoption, training events provide unique insights into customer health and behavior. Participation patterns, questions asked, and session engagement serve as reliable signals for account maturity and risk.

In this way, training functions as an intelligence platform. It surfaces some of the cleanest behavioral signals in the entire customer lifecycle, helping leaders proactively manage risk and identify growth opportunities.

Real growth does not only come from winning new customers. It comes from keeping and expanding the ones you already have. That happens when customers clearly understand your product, use it correctly, and see consistent results.

When people feel confident, they use more features. When they use more features, the value becomes obvious. When value is obvious, renewal feels natural, and expansion feels logical.

If you ignore capability building, you create doubt. Doubt slows usage. Slow usage weakens retention.

Companies that compound revenue over time do not only sell effectively. They systematically increase customer capability.

For organizations reassessing how capability building influences retention and expansion, explore how structured Training Events & Seminars contribute to long-term engagement.

Churn is usually explained with easy answers: pricing, feature gaps, competition. These explanations protect internal narratives. But most customers leave not because something fails, but because they never achieve confident, independent usage.

A product can work perfectly and still feel uncertain. When customers do not understand how to extract value, hesitation replaces conviction. Confusion lowers perceived impact before dissatisfaction is voiced. Usage becomes shallow. Advocacy never forms. Renewal becomes risky.

Customers rarely churn in a dramatic moment. They drift when nothing fully clicks.

Retention is not first a product problem. It is a learning problem. If customers never achieve usage maturity, they cannot justify value internally. And if they cannot defend the investment, they will not renew it.

This blog covers why education, not support, determines long-term retention and how structured learning directly influences confidence, trust, and loyalty.

Education is not a content function. It is a confidence engine. Customers do not renew because they were helped quickly. They renew because they feel capable of winning consistently. Confidence lowers perceived risk. Lower risk strengthens trust. Trust stabilizes retention.

When customers understand not just how a feature works but why it matters, value realization accelerates. They shift from dependency to control. Control changes behavior. It increases experimentation, deepens adoption, and strengthens internal alignment. That alignment protects renewals long before procurement discussions begin.

If customers constantly need reassurance, loyalty is fragile. If they understand how to create results independently, loyalty becomes durable. Retention strengthens when customers feel in control, not when they feel supported.

If you blur the line between support, onboarding, and education, you are mismanaging retention. These functions are not interchangeable. Treating them as one bucket guarantees shallow adoption and fragile renewals.

Support is reactive. It activates after friction appears. A ticket is raised. A response is given. The issue is resolved. Resolution restores functionality, not mastery.

Education anticipates confusion before it compounds. It addresses recurring gaps collectively. It upgrades baseline understanding across accounts. If your customers only learn when something breaks, you are training dependency, not confidence. Dependency increases churn sensitivity. Prevention reduces it.

Onboarding moves customers from purchase to first value. It proves the product works. Then it ends.

Education begins where onboarding stops. It deepens usage maturity. It connects features to evolving business scenarios. Without structured reinforcement, adoption plateaus at “good enough.” Good enough does not survive competitive pressure. If learning stops after activation, renewal risk starts increasing.

Support answers how. Education clarifies why and when. That difference defines retention strength.

Customers who understand context make independent decisions. Independent users explore more. Exploration drives deeper value realization. If your customers cannot articulate strategic application, they are not loyal. They are temporary.

Education events exist to create autonomy. If your programs do not build judgment, they are not protecting retention.

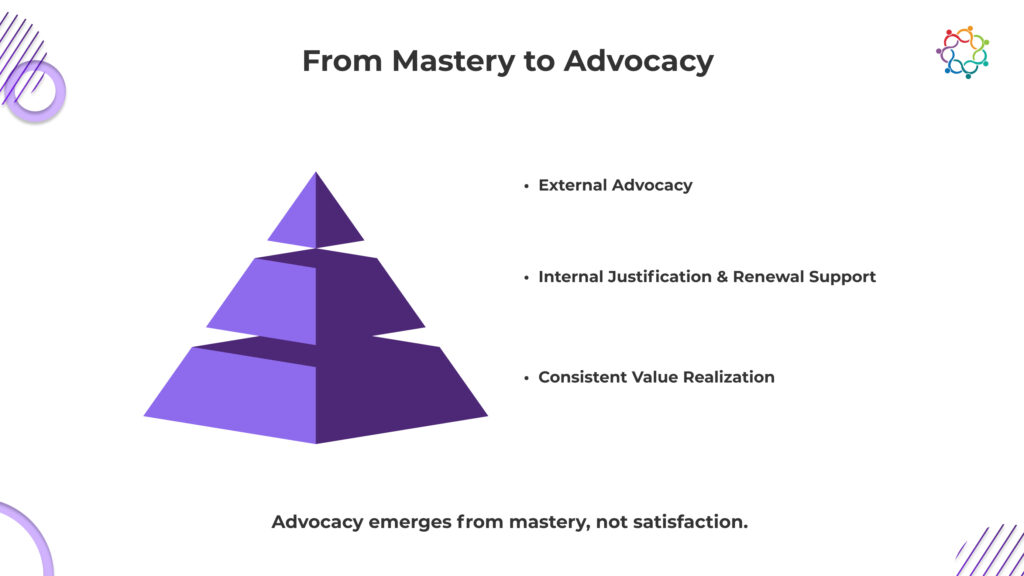

Retention is not just about contract continuity. It is about durable alignment between product value and customer belief. Education shapes that alignment.

Educated customers extract value faster. They expand usage across teams. They connect capabilities to measurable outcomes. This accelerates value realization and reinforces retention economics.

Mastery changes internal conversations. When customers understand a product deeply, they can justify investment decisions. They defend budget allocations. They articulate ROI in their own language.

Education influences advocacy in subtle ways:

Satisfaction is emotional. Mastery is structural.

Advocacy often emerges from competence, not delight. A satisfied customer may still consider alternatives. A master user understands trade-offs. They recognize opportunity cost. They know what would be lost in migration.

This is where customer education events compound impact. They create shared learning communities. They normalize advanced practices. They reinforce trust through transparency and expertise.

Over time, educated customers become internal experts. Internal experts anchor renewal conversations. They reduce procurement friction. They provide social proof inside their organizations.

Retention is reinforced when value is clearly articulated, and education makes that articulation possible.

Without ongoing product education programs, usage stagnates. Stagnation lowers perceived growth potential. When growth potential declines, renewal risk rises.

Retention is sustained by continuous learning, not periodic persuasion.

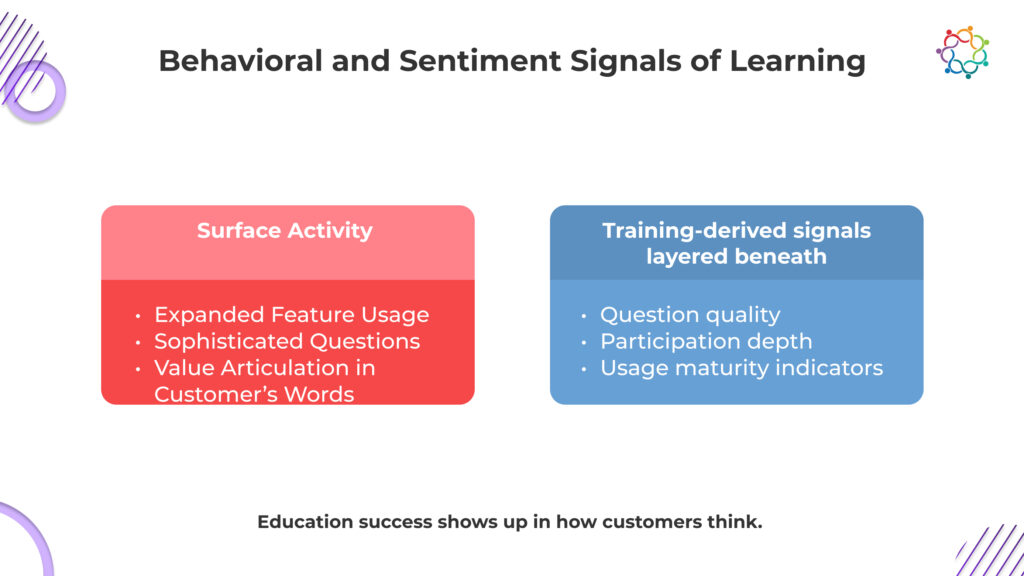

Attendance metrics are visible. Retention signals are deeper.

Measuring customer education impact requires behavioral and linguistic analysis. Surface engagement does not prove confidence. Leaders must look for evolution.

Behavior reveals maturity. As customers learn, patterns shift.

These indicators reflect usage maturity and value realization. They signal that learning reinforcement is occurring. Customers are not just consuming content. They are applying it.

Advanced questions demonstrate cognitive progress. When customers challenge assumptions or explore integration depth, they display confidence. Confidence reduces churn risk because it strengthens internal ownership.

Signal quality improves when behavior changes. Retention becomes predictable when adoption depth expands consistently.

Language shifts precede renewal strength. Customers who understand value articulate it clearly.

Listen for evidence:

These signals indicate trust reinforcement and advocacy formation. Education is working when customers teach others. Peer teaching reflects mastery.

Measuring impact requires qualitative attention. Surveys alone are insufficient. Observe how customers think. Observe how they speak. Observe whether they connect the product to business outcomes without prompting.

Education success appears in dialogue quality, confident framing, and proactive engagement. Retention improves when customers internalize value narratives. Education shapes those narratives.

Most teams claim retention is a priority. Few allocate resources to the one lever that systematically protects it. Education is deprioritized not because it lacks impact, but because its impact is misunderstood. If you treat learning as optional, you are choosing preventable churn. The bias is structural, and it is expensive.

Education rarely delivers dramatic quarterly lifts. It compounds quietly through confidence-building and usage maturity. If you prioritize only visible short-term wins, you will consistently underinvest in long-term retention economics. In reactive organizations, short-term spikes often win budget over long-term compounding effects.

Education influences customer success, product adoption, marketing advocacy, and expansion revenue. Because the outcomes are distributed, accountability becomes blurred. When no single team “owns” the upside, no single team fights for the budget. Avoiding ownership does not reduce risk. It increases it.

Measuring customer education impact requires behavioral analysis, not vanity metrics. Reduced churn volatility and stronger renewal confidence appear over time. If your measurement model only rewards immediate attribution, you will miss the structural drivers of loyalty.

Education prevents confusion, stagnation, and silent disengagement. Prevention rarely looks urgent until renewals decline. By then, rebuilding trust is slower and more expensive than sustaining it.

If you are underfunding education events, you are not being efficient. You are deferring risk.

Education is not only about delivery. It is diagnostics.

Every question asked during a session reveals adoption maturity. Basic clarifications signal early-stage understanding. Advanced integration inquiries signal confidence and exploration.

Attendance patterns also reveal risk. Declining participation can indicate disengagement. Sudden inactivity may reflect internal disruption. Consistent engagement suggests embedded value.
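One way to make these three patterns concrete is a small classifier over per-session attendance counts for a single account. This is only a sketch under stated assumptions: the `participation_trend` function, its labels, and the 25% drop threshold are hypothetical choices for illustration, and a real program would need thresholds tuned to its own data.

```python
def participation_trend(attendance: list[int], drop_threshold: float = 0.75) -> str:
    """Classify one account's attendance history (oldest session first).

    drop_threshold is an assumed cut-off: the later half of sessions must
    average below this fraction of the earlier half to count as declining.
    """
    if len(attendance) < 2:
        return "insufficient data"

    # Sudden inactivity: the account attended the previous session but not the latest.
    if attendance[-1] == 0 and attendance[-2] > 0:
        return "sudden inactivity"

    # Compare average attendance in the earlier and later halves of the history.
    half = len(attendance) // 2
    early_avg = sum(attendance[:half]) / half
    late_avg = sum(attendance[half:]) / (len(attendance) - half)

    if late_avg < early_avg * drop_threshold:
        return "declining"
    return "consistent"


# Invented attendance histories for three hypothetical accounts.
print(participation_trend([10, 9, 10, 11]))
print(participation_trend([12, 10, 6, 4]))
print(participation_trend([8, 9, 8, 0]))
```

A label like this is a prompt for a conversation with the account, not a verdict; the text above notes that sudden inactivity may simply reflect internal disruption.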

Feedback collected during sessions often exposes strategic gaps. Customers articulate friction points candidly in educational settings. This transparency provides early warning long before formal churn signals appear.

Signal quality from engagement is stronger in educational environments because participation is voluntary and intent-driven. Customers who attend want to improve outcomes. Their questions are forward-looking. Their concerns are predictive.

Retention intelligence derived from education is proactive. It enables targeted intervention before risk materializes. It allows customer success leaders to prioritize accounts based on learning velocity rather than only revenue size.

Usage maturity is observable in real time through dialogue depth. Leaders who treat education as insight infrastructure gain clarity that others miss.

Retention is easier to protect when warning signals are visible. Education events make those signals visible.

Retention is not protected by reminders, discounts, or last-minute persuasion. It is protected by understanding. Customers renew when they are confident, capable, and clear about the value they create with your product. That clarity does not happen accidentally. It is built through deliberate education.

If learning slows, usage plateaus. If usage plateaus, renewal weakens. The pattern is predictable.

Customer education events are not optional programming. They are retention infrastructure. Underinvest here, and churn becomes a matter of time, not surprise, especially for teams that delay formalizing how they approach education strategy.

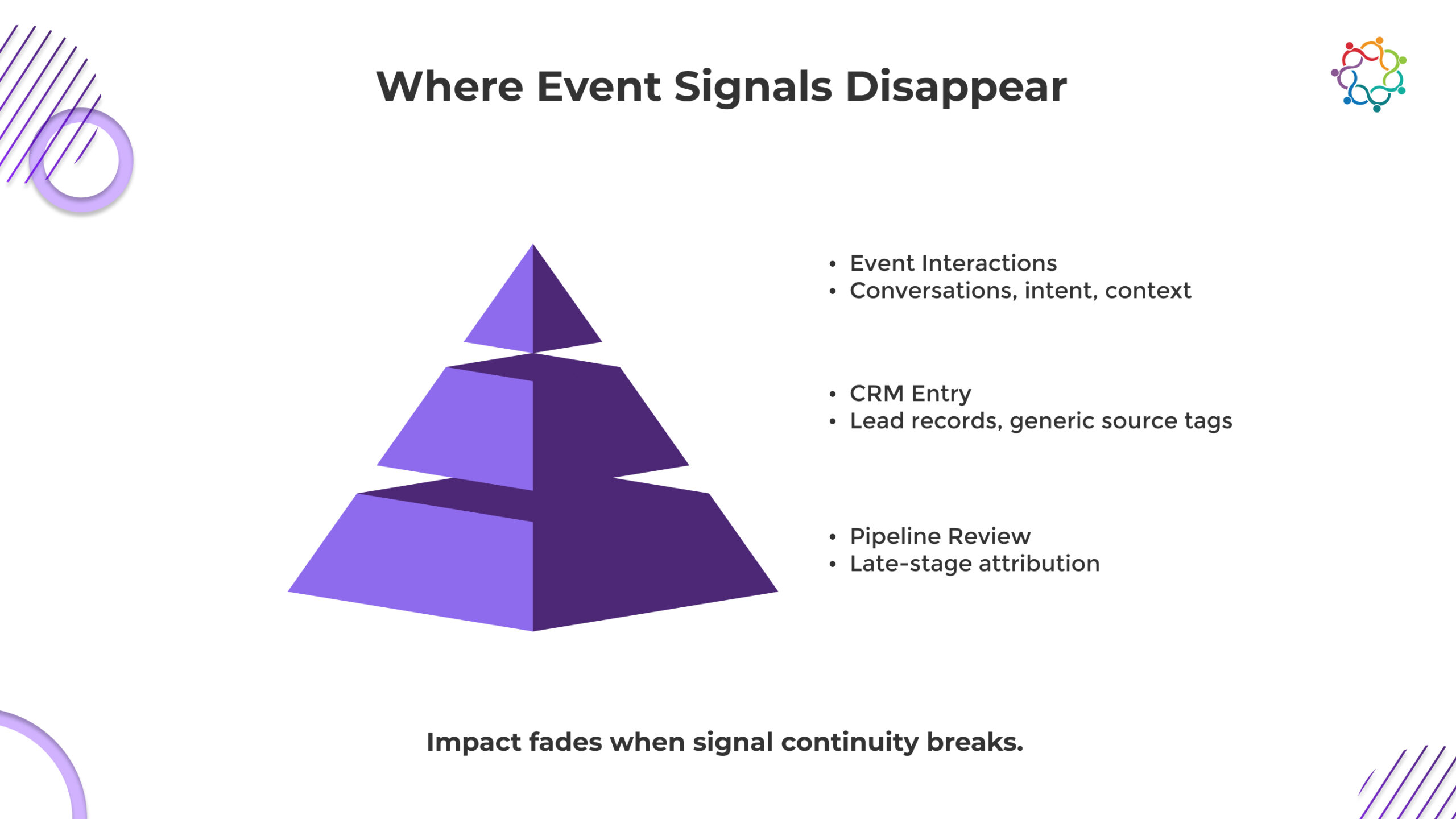

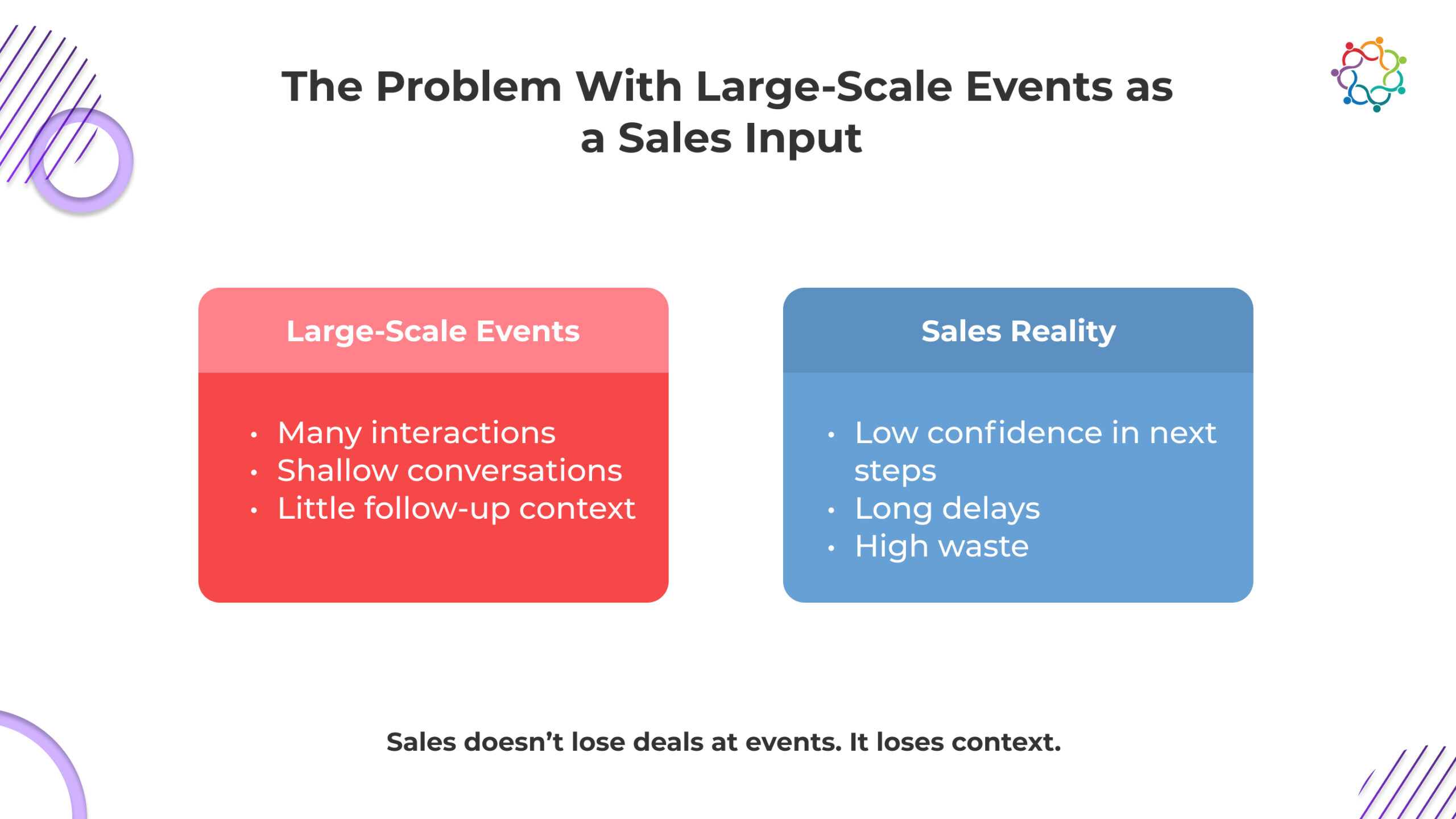

Field teams execute flawlessly. Regional activations, roadshows, and local programs run on time, booths engage, and attendance targets are met. Yet, when leadership asks about ROI, conversations become defensive. The problem is not execution. It is observability. Field events generate influence through conversations, context, and behavioral signals that rarely survive the journey from the floor to CRM systems.

By the time pipeline reviews happen, this influence is diluted, timing gaps obscure relevance, and attribution appears weak. Leadership perceives value as optional because traditional dashboards and reporting systems fail to capture these subtle signals.

Understanding this distinction is critical. Great execution does not guarantee visible ROI. What matters is ensuring that field marketing event impact is measurable, traceable, and aligned with revenue motion. Without that, even the most professionally executed programs are constantly questioned.

This blog covers why measurement, not execution, determines credibility, how signal loss occurs, and what metrics high-performing teams track to connect field marketing events to revenue outcomes.

A natural assumption in field marketing is that better execution leads to better ROI. Intuitively, a polished booth, engaging presentations, and flawless logistics should boost results. In reality, execution quality primarily enhances experience, not revenue attribution. Leadership skepticism often persists even after well-reviewed events. Great events make attendees happy, but they do not inherently create observable pipeline movement.

Consider these factors:

High execution standards are essential, but they cannot compensate for weak measurement systems. Field marketing managers must recognize that even flawless delivery cannot “force” revenue attribution. The challenge is not what the teams do at the event; it is what happens after.

Strategic Insight: You cannot execute your way out of a measurement problem. Leadership credibility depends on capturing signals, aligning them with pipeline progression, and demonstrating influence over revenue motions.

You’ve executed flawlessly, yet leadership still questions ROI. Why? Because the real impact of field events rarely travels intact from the event floor to the pipeline. Conversations, intent, and engagement (the things that actually drive deals) get flattened into generic leads or lost entirely.

Timing gaps, attribution delays, and signal dilution make your influence invisible. The more impressive the event, the more it risks appearing irrelevant if measurement systems cannot capture what truly matters.

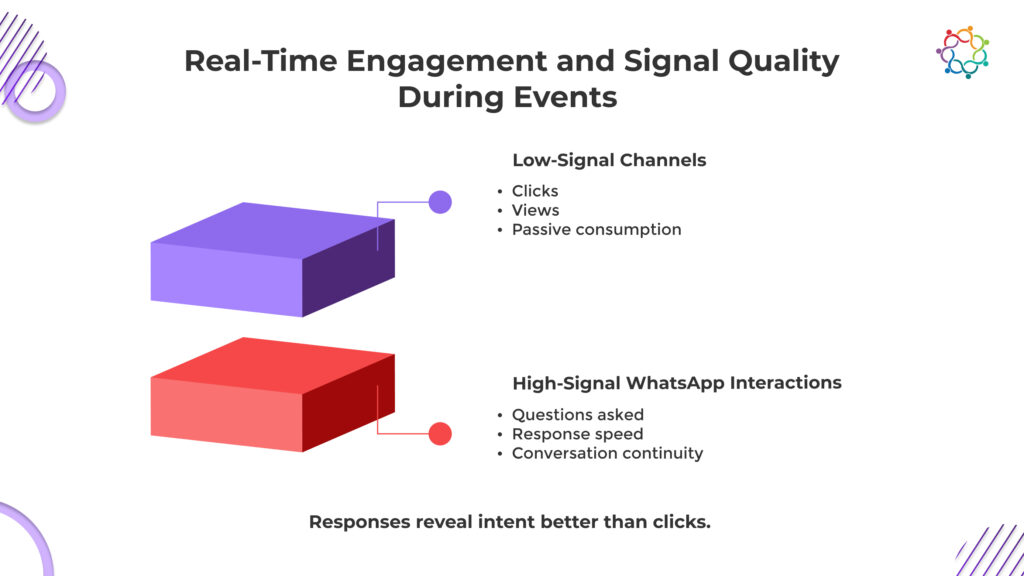

Every conversation at an activation, every demo, and every engagement produces behavioral signals: intent, interest, context, and problem recognition. These signals indicate future opportunities but rarely manifest as immediately closed deals. Event influence is diffused across time and channels, creating an attribution challenge.

Without capturing these signals systematically, field events appear as isolated touchpoints with no measurable outcomes. Execution quality remains high, but influence remains invisible.

Once leads are entered into CRM systems, context is often lost. Notes become generic, urgency fades, and the richness of field interactions flattens into a numeric entry. Behavioral cues such as challenges expressed, urgency detected, and contextual priorities are not systematically tracked.

This disconnect explains why pipeline-focused reviews underestimate the true contribution of field marketing.

Revenue attribution often occurs at the point of deal closure or milestone progression, long after field influence occurred. Field events rarely appear directly responsible for pipeline creation in traditional attribution models. When influence is indirect or catalytic, traditional dashboards fail to show contribution.

Why it matters for ROI credibility:

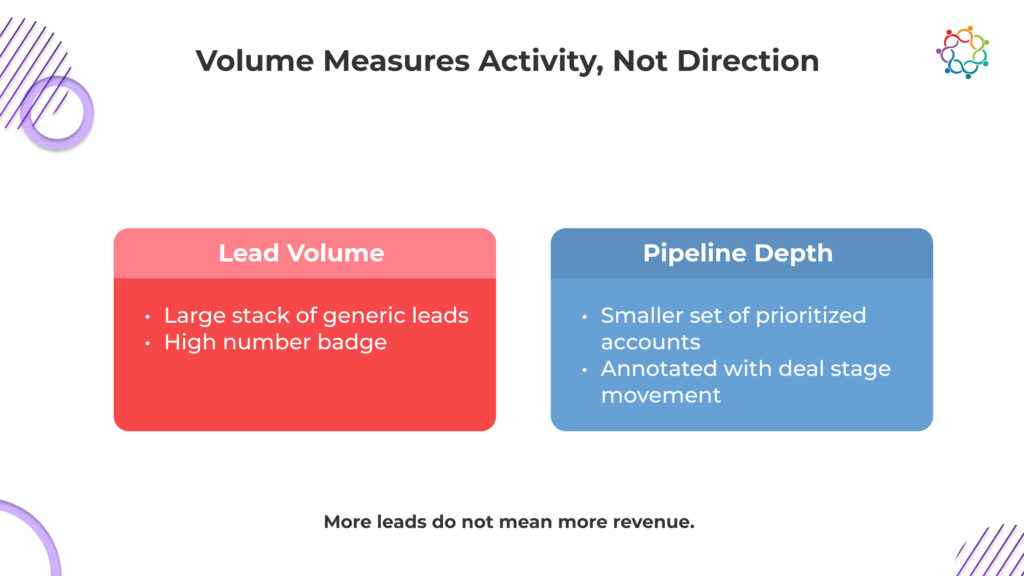

The most common metric for field marketing success, lead volume, frequently misrepresents value. While volume demonstrates reach, it says little about relevance or pipeline impact. High volumes may overwhelm sales with low-priority leads, eroding trust and visibility into the event’s real influence.

Volume-centric measurement inadvertently signals to leadership that field events are “tick-box” activities rather than revenue enablers. When volume dominates reporting, the subtle but critical influence of field events on deal acceleration and reactivation is overlooked.

Without observing the qualitative contribution of events to the buying journey, measurement collapses, and credibility is lost upstream.

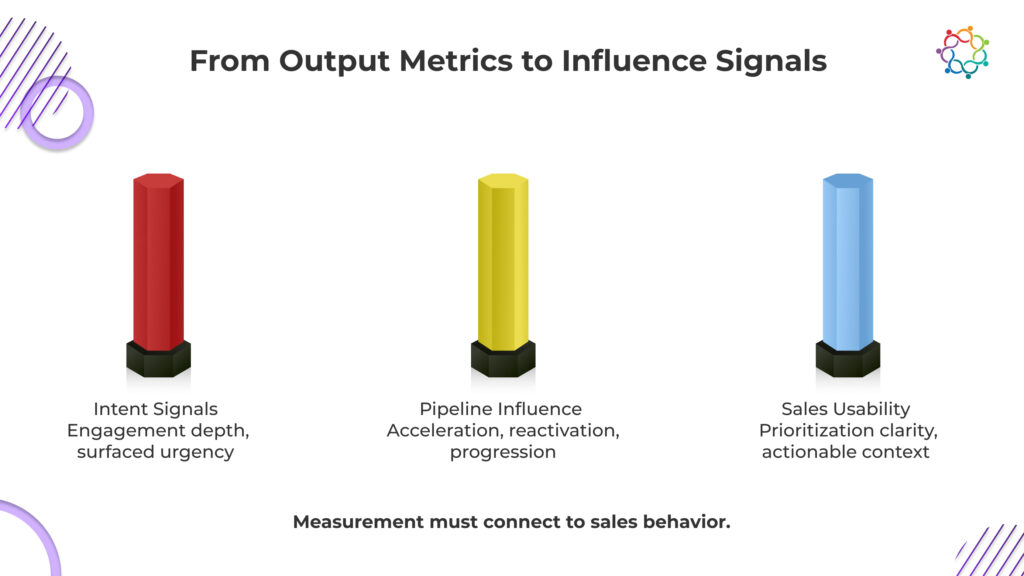

Many teams unintentionally weaken their credibility by reporting what is easiest to count rather than what influences revenue motion. Execution remains visible. Influence does not. The teams that earn leadership trust measure the signals that survive beyond the event and connect to pipeline progression.

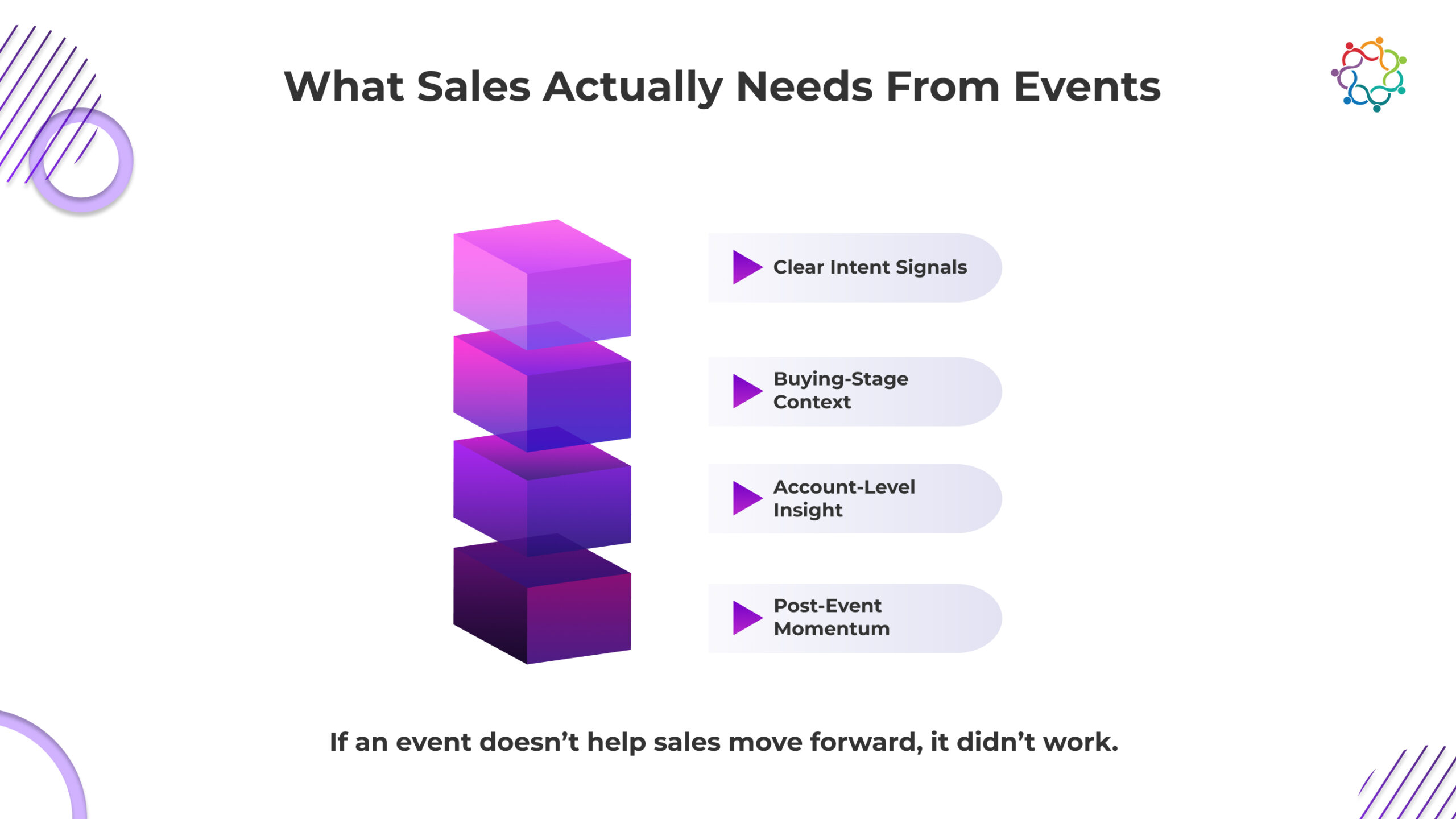

It is not how many people scanned a badge or attended a session. True intent signals reveal who engaged deeply, what problems surfaced, and where urgency existed. If you cannot show that meaningful engagement occurred, your events are invisible to leadership. Generic lead counts hide the reality: engagement does not automatically translate into deal progression.

Field events do not close deals, yet most reports treat them like they should. High-performing teams measure acceleration, reactivation, and progression of opportunities influenced by the event. If you cannot show your programs nudging deals forward, you are selling yourself short. Leadership only cares about influence, not execution perfection. Your events are catalysts, not closers. Measurement should reflect that catalytic role.

If your outputs are not actionable for sales, your events are noise. True measurement asks whether post-event insights change prioritization, follow-up, or strategy. If sales ignores the intelligence you generate, you have failed before you even start reporting. Your influence exists only if it translates into behavior; otherwise, it disappears.

When reporting focuses on activity instead of influence, field marketing events will continue to be undervalued.
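As a minimal sketch of what measuring acceleration rather than activity could look like, the snippet below counts attendee-linked opportunities that moved forward a stage within a fixed window after an event. The `StageChange` record, the ordinal stage numbers, and the 30-day window are illustrative assumptions, not fields from any particular CRM.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class StageChange:
    # Hypothetical record of one pipeline stage transition.
    opportunity_id: str
    changed_on: date
    from_stage: int  # assumed ordinal: a higher number means later in the pipeline
    to_stage: int


def influenced_progressions(event_date, attendee_opportunity_ids, stage_changes, window_days=30):
    """Return sorted IDs of attendee-linked opportunities that advanced
    at least one stage between the event date and the end of the window."""
    window_end = event_date + timedelta(days=window_days)
    attendees = set(attendee_opportunity_ids)
    return sorted({
        change.opportunity_id
        for change in stage_changes
        if change.opportunity_id in attendees
        and event_date <= change.changed_on <= window_end
        and change.to_stage > change.from_stage  # forward movement only
    })
```

A count like this shows progression that coincided with the event. It does not prove the event caused it, so it is best read alongside the qualitative signals described above.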

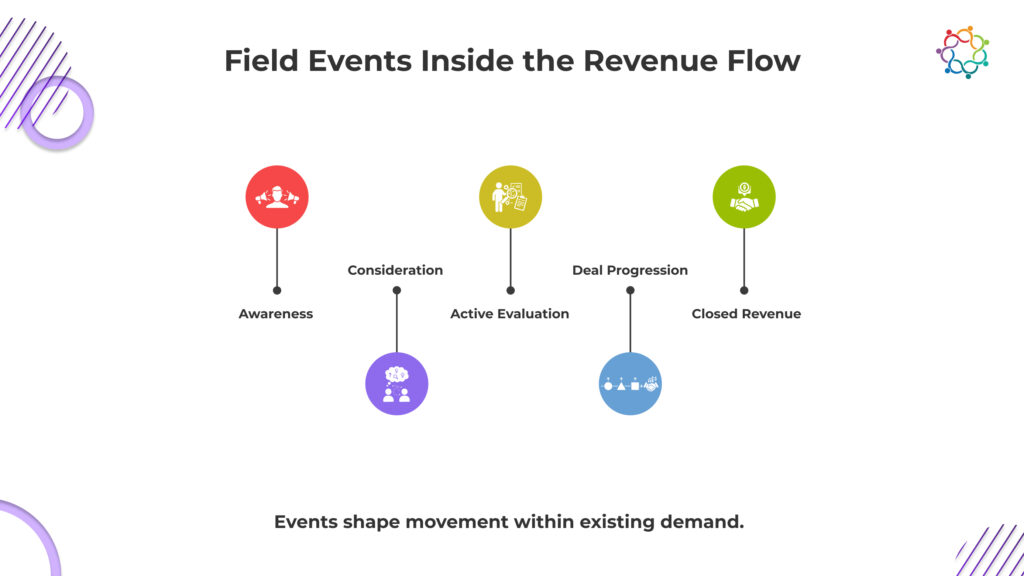

Most field events operate in a vacuum. They are planned, executed, and reported as if deals start at the booth. The reality is simple. Field events rarely create demand from zero. Events intersect with active buying journeys, influencing decisions that are already in motion.

If measurement ignores this, every dashboard will make your programs look optional.

Leadership sees cost without connection, activity without outcome, and influence without proof. The uncomfortable truth is that your events are only as credible as the signals they leave in the revenue flow.

Measurement must move beyond reach and volume to include relevance, acceleration, and actionable influence. Until field events are tied directly to where deals progress and decisions are made, you will keep losing the ROI argument, no matter how polished the execution feels.

Field marketing teams execute flawlessly, yet their impact is constantly questioned. The reason is systemic. The structures, incentives, and reporting habits in most organizations actively work against capturing the true influence of field events. You are delivering value that leadership cannot see, and the systems are designed to make that invisible.

Reality check: Systems and incentives often prioritize what is easiest to measure, rather than what truly drives revenue. The field marketing team’s credibility suffers not due to poor execution, but due to misaligned metrics.

Execution alone will never resolve ROI questions. Your field events generate real influence, but if the signals do not survive the journey to sales and CRM systems, leadership will always see them as optional.

Volume, attendance, and flawless delivery cannot replace observable impact.

The teams that win the credibility battle track intent, behavioral signals, and pipeline influence, not just activity. Field marketing events become strategic revenue inputs only when measurement captures what truly matters.

Make field marketing influence visible inside the revenue motion. When impact is observable, credibility follows.

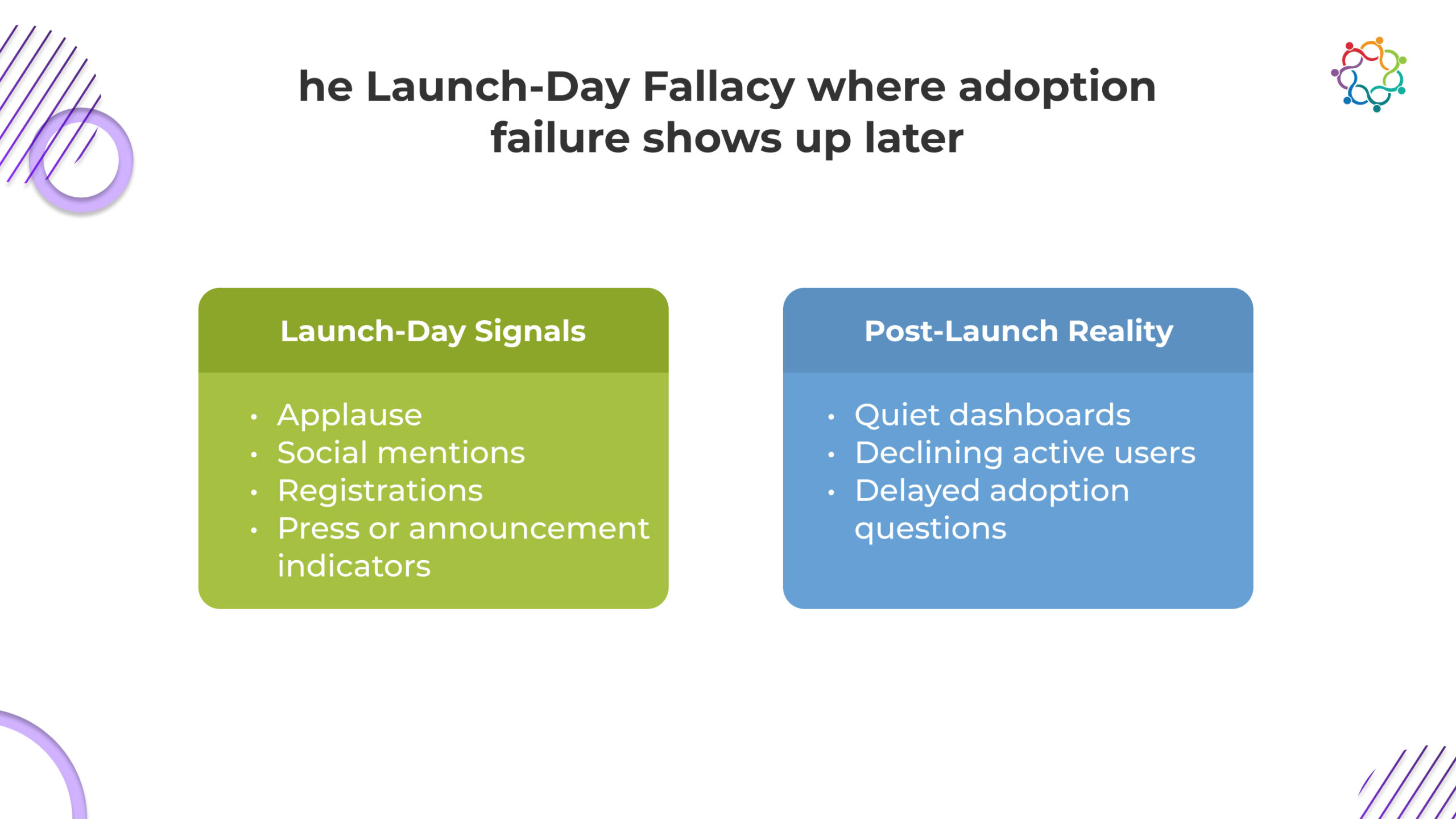

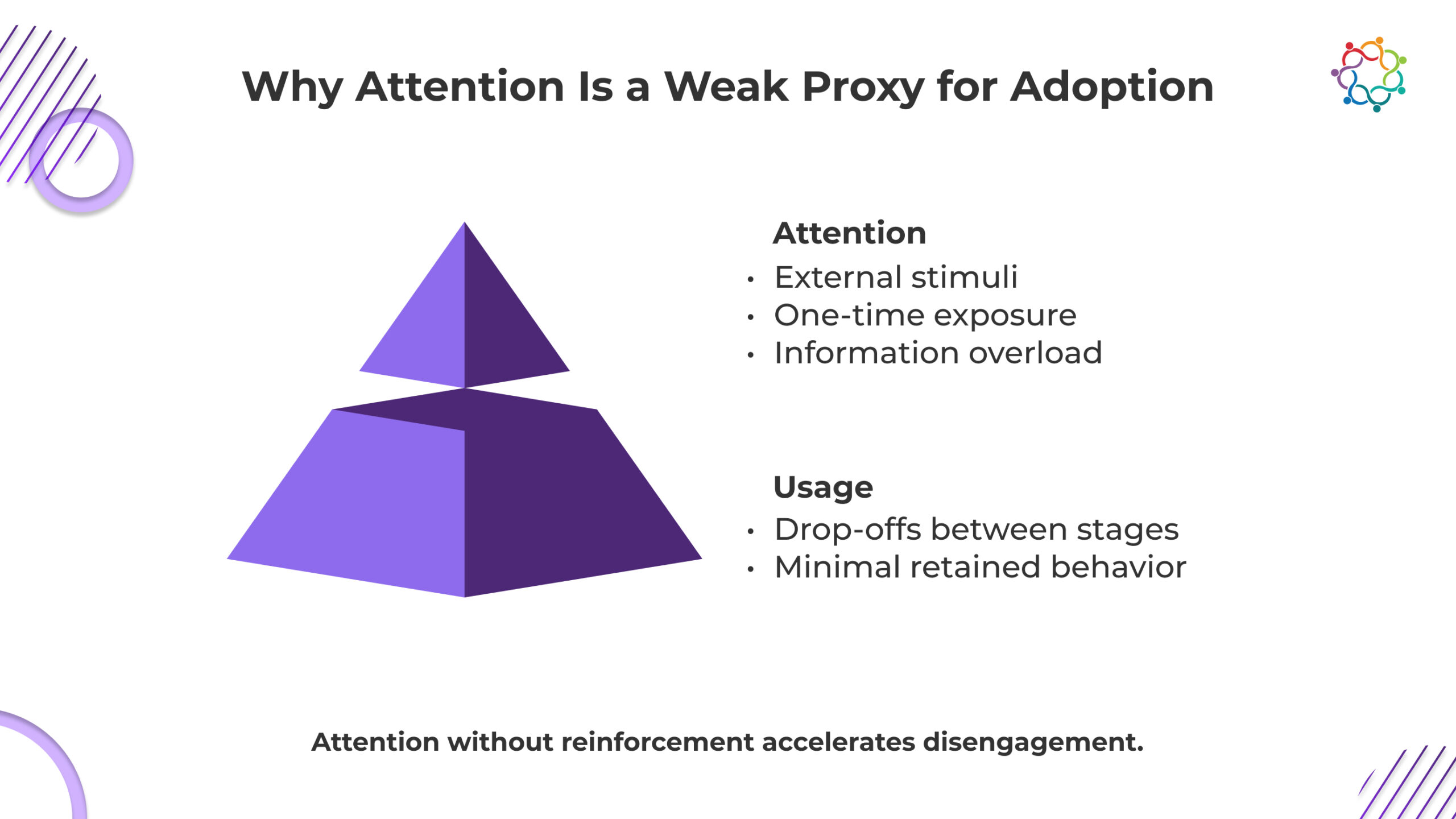

Product launch events are often treated like the ultimate proof of a product’s promise. They are visually stunning, strategically timed, and built to generate applause, social shares, and registration numbers. The room buzzes. The chat fills with excitement. Marketing celebrates. Leaders nod approvingly.

The structural problem is simple: attention alone does not create adoption.

For product marketers and growth leaders, this is frustrating. Launch-day applause rarely leads to lasting engagement. Usage often drops sharply once the excitement fades. The problem is not that users fail the product, but that the launch fails the users.

The issue is not user apathy. It is launch design. Attention, messaging, and onboarding are misaligned with real behavior change. Launch events must function as the starting point for learning and action, not the peak of success.

Creating enthusiasm on launch day is surprisingly simple. Any product can appear revolutionary for a few hours with a well-executed presentation, striking graphics, and well-publicised announcements. Registrations soar. Social media buzz intensifies. Media coverage inflates the perceived success. However, none of these measures assess how likely users are to interact with the product.

The fundamental flaw is the assumption that visibility equals adoption. Teams often equate applause with understanding and awareness with action. Launch events reward immediate attention rather than sustained engagement. Users may leave the event inspired, but inspiration without guidance rarely translates into behaviour change.

Why adoption suffers:

Most launches succeed as events and fail as adoption systems. The audience leaves with enthusiasm but not clarity on the next steps, setting up adoption decay from the outset.

Engagement during a launch is not evidence of sustained usage. Even though an eye-catching presentation or an engaging demonstration may get people to look, listen, and cheer, it doesn’t guarantee that they will utilise your product.

Adoption is fundamentally different. It requires repeated encounters, intrinsic motivation, and a firm grasp of how to extract value.

Users are forced to assimilate too much information too soon because most launch events jam months’ worth of content into a few hours. Awareness does not equate to action. Although users may leave feeling amazed, they do not leave feeling knowledgeable. Their excitement fades the moment they return to their daily routines, and without structured reinforcement, drop-off becomes inevitable.

Attention cannot carry adoption without reinforcement. Real adoption is earned through guidance, reinforcement, and clarity.

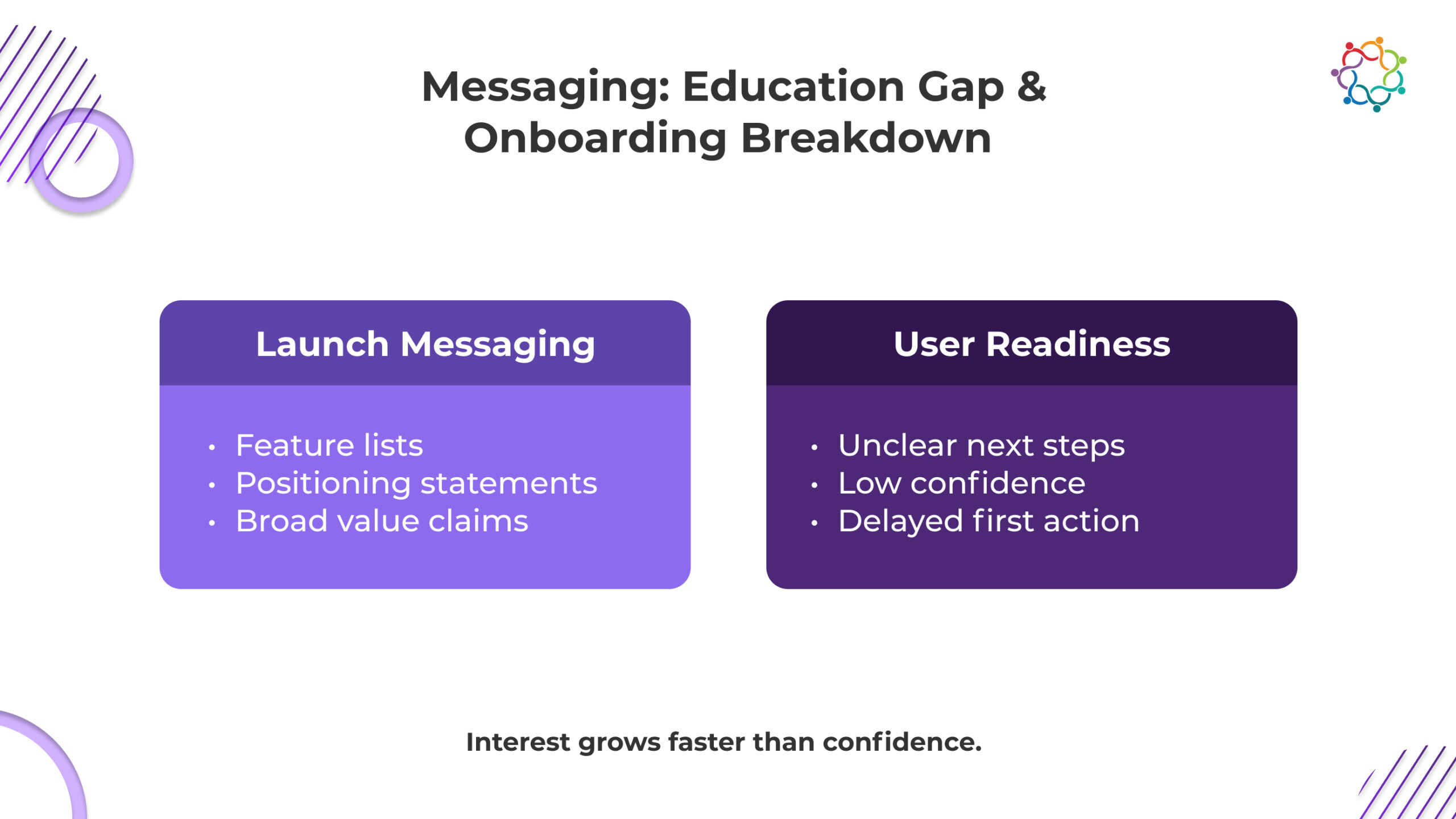

Even well-positioned products struggle after launch because messaging and education are treated as the same thing. They are not. Messaging creates interest. Education creates usability. When that distinction is ignored, adoption slows before it properly begins.

Launch presentations often highlight multiple features in rapid succession. Users see capability, but they do not see sequence. Without context on when and why to use each feature, complexity increases and confidence drops.

Strong positioning clarifies who the product is for and why it matters. It rarely explains how to start. Users understand the promise but remain unsure about the first practical step toward value.

Events compress large amounts of information into a short time frame. Cognitive overload reduces retention, which increases activation lag once users attempt to engage independently.

Curiosity drives sign-ups. Confidence drives continued use. Without guided education that builds familiarity and competence, early enthusiasm fades into hesitation, and hesitation turns into abandonment.

Adoption improves only when education is embedded directly into the launch experience rather than postponed until later.

Most launch events prioritize spectacle over readiness. Users frequently depart feeling excited but unsure of their next course of action. The experience is fragmented since onboarding is handled as a distinct operation, frequently months after the launch. Adoption is intrinsically brittle when launch and onboarding are separated.

Launch events frequently put the reveal ahead of preparation, emphasising statements over concrete actions, which leaves people without obvious ways to get value right away. Adoption stalls before it even starts because onboarding is viewed as reactive rather than proactive, leaving early users unsupported.

Separating launch from onboarding ignores activation lag and compounds cognitive overload. Adoption is not accidental. It must be deliberately designed. Launch events that ignore onboarding unintentionally guarantee a post-event drop-off.

Adoption is not a moment of excitement. It is a behavioral transition. And behavioral transitions don’t happen on stage; they happen through repetition under reduced uncertainty.

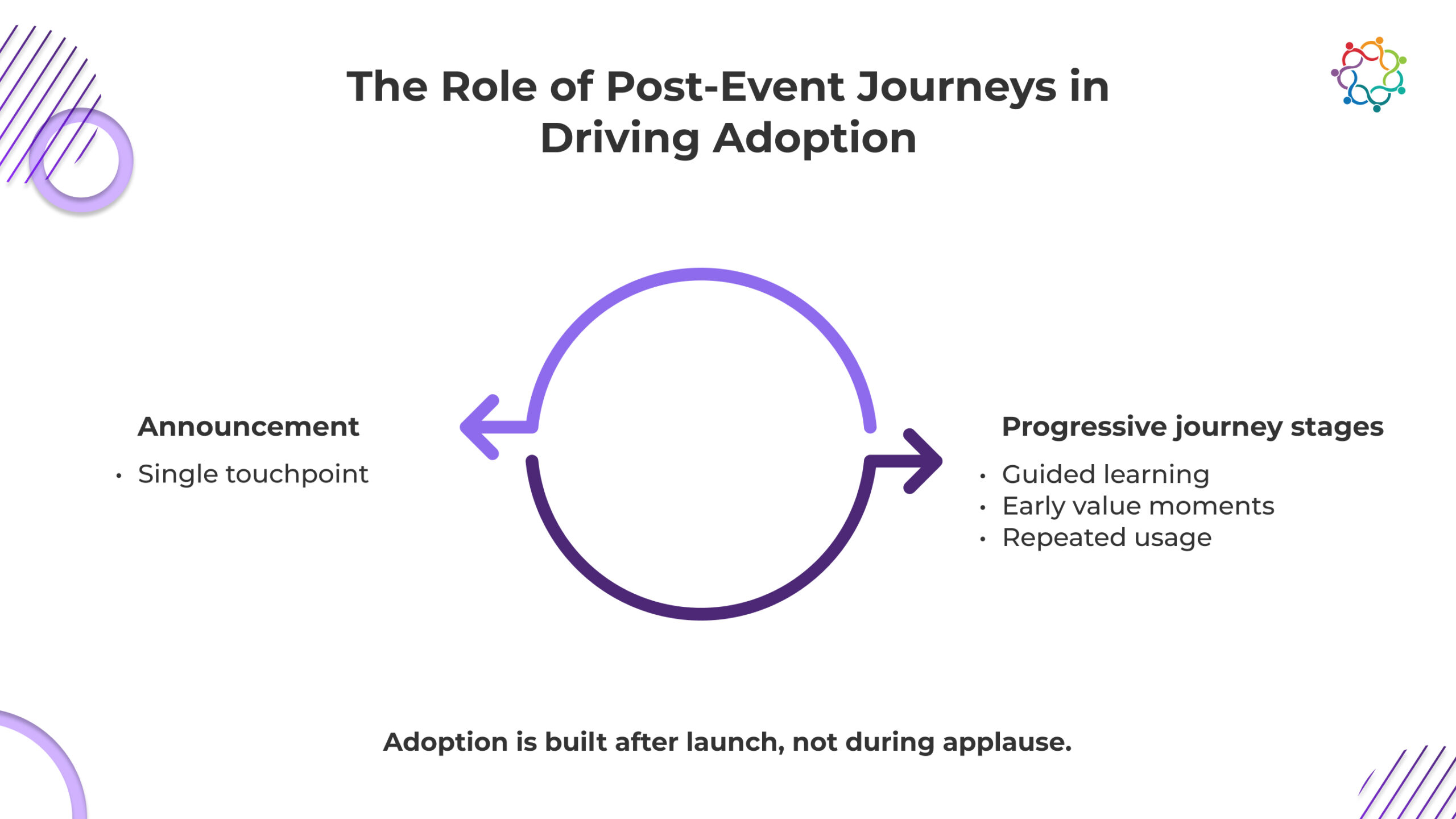

A launch event creates awareness and emotional energy. What it does not create is confidence. After the spotlight fades, users are left with questions: Will this fit into my workflow? Will it slow me down? What happens if I get stuck? That uncertainty is the real barrier to adoption.

Post-event journeys exist to systematically remove that uncertainty.

Spaced reinforcement, through follow-up emails, contextual nudges, short webinars, or guided in-app prompts, keeps the product cognitively active without overwhelming the user. Each interaction reduces ambiguity. Reduced ambiguity increases willingness to try. Trying creates small repetitions. Repetitions, when paired with visible progress, begin forming habit.

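This is the causal chain: reinforcement reduces ambiguity, reduced ambiguity increases willingness to try, trying creates repetition, and repetition paired with visible progress forms habit.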

Early value moments accelerate this loop. When users experience a quick, tangible win soon after the event, perceived risk drops. The product stops feeling theoretical and starts feeling useful. Momentum replaces hesitation.

Engagement signals complete the feedback system. When teams track who activates, who stalls, and where friction occurs, they can intervene before confusion turns into abandonment. Without this, usage decay is inevitable.

The uncomfortable truth is that even a high-energy, well-attended launch cannot guarantee adoption. Energy fades. Memory decays. Attention shifts. Only structured, pre-planned post-event journeys convert awareness into behavior.

Events ignite interest. Journeys convert it into habit.

And without engineered habit, usage always drifts back to baseline.

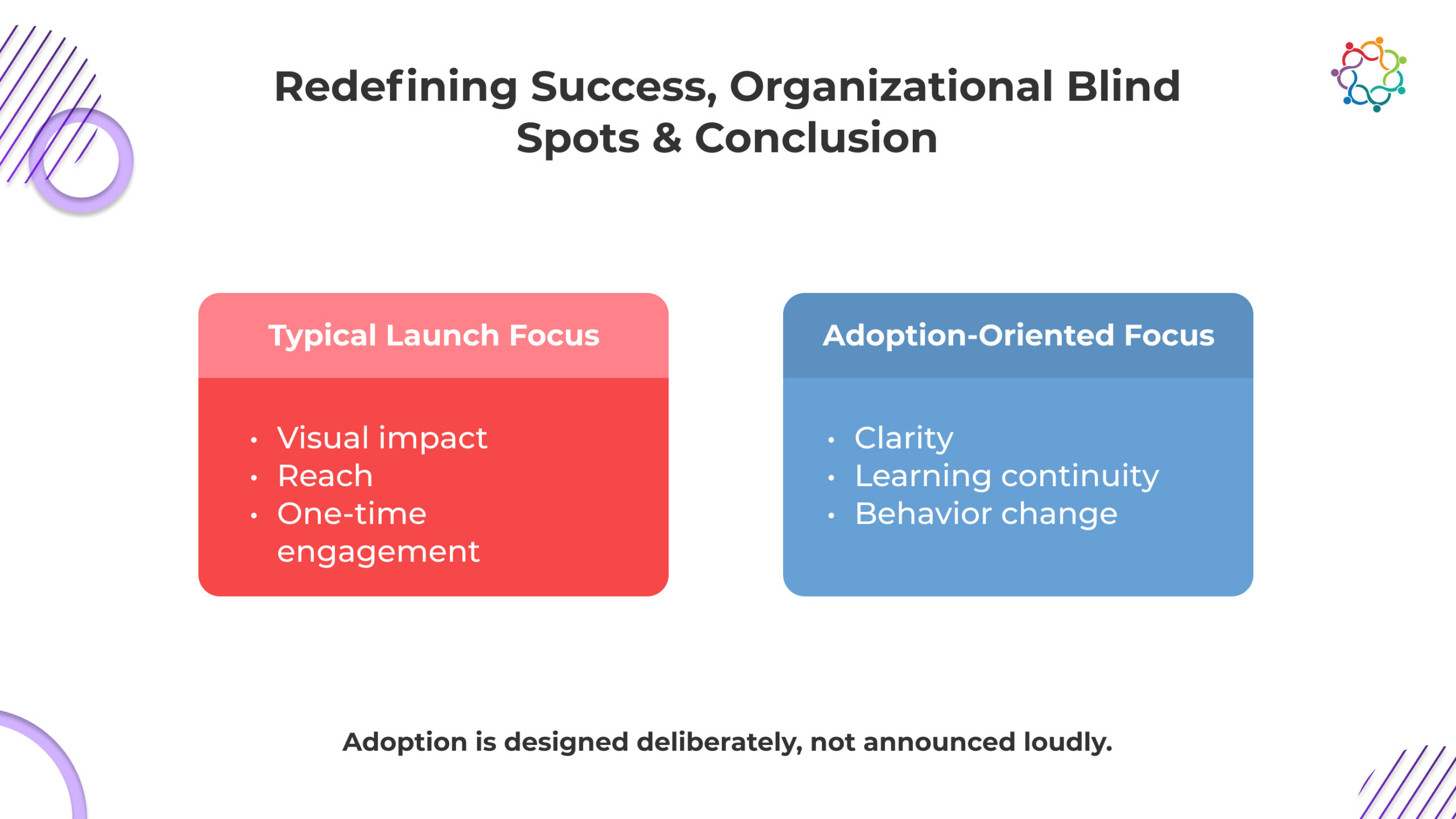

Conventional product introductions frequently emphasise spectacle. Teams spend a lot of money on announcements, visual impact, and one-time interaction, producing impressive moments that are rarely used again. The issue is that these measures prioritise attention over behaviour and praise over adoption.

Successful launches adopt a distinct strategy. Instead of viewing the event as a climax, they view it as the beginning of a process of learning and behaviour change. Reducing cognitive friction, defining next actions, and fostering confidence in early use are the goals of every choice, from agenda design to demos. The objective is to empower users to take action, not to impress.

| Typical launches optimize for | Adoption-focused launches optimize for |
| --- | --- |
| Visual impact | Usage clarity |
| Announcement reach | Defined first action |
| One-time engagement | Reinforced early value |

Effective launches translate attention into first use. Announcements reaffirm early value, engagement tactics extend beyond the event to direct uptake, and visuals aid in understanding. The most successful events resemble the initial chapter of onboarding rather than the conclusion. They are intended to pique interest, provide clarification on usage, and sustain momentum long after the cheering has stopped.

Teams that focus on adoption-first design avoid the common trap of high visibility with low retention.

Marketing is rewarded immediately. Product is judged months later. No one is accountable for the gap. Marketing teams cheer when the event goes viral, counting registrations, social mentions, and applause as proof of achievement. Product teams wait months to judge whether users actually engaged, often realising too late that the product hasn’t been adopted.

In the meantime, nobody takes accountability for the path that connects these two realities. Most teams act as though they can optimise for both adoption and spectacle without altering accountability or incentives.

The disconnect is not a minor oversight; it’s a structural flaw. By celebrating attention without owning post-launch behaviour, teams create the perfect conditions for disengagement. Users leave the launch excited, but with no guidance, no support, and no reinforcement, they quietly abandon the product. When no team owns adoption continuity, disengagement is predictable.

Launch-day applause is fleeting. Buzz does not equate to usage, and registration numbers do not translate into engagement. Product launch events can generate excitement and social proof, but without deliberate design for behaviour change, adoption will inevitably lag.

Adoption requires continuity. It demands that learning extend beyond the event, that onboarding is integrated with launch messaging, and that post-event journeys reinforce confidence and momentum. Events must initiate behaviour change, not conclude messaging.

If a launch does not make the product easier to use, it merely amplifies the drop-off. If it does not make first usage easier within 24 hours, it has failed at adoption.

Sales kickoff events are remembered as high points in the revenue calendar. Energy peaks. Leadership clarifies direction. Product and marketing align around a shared narrative. For a few days, the organization feels synchronized and focused.

Then everyone returns to the field.

Within weeks, selling patterns look familiar. Qualification remains inconsistent. Messaging drifts. Forecast variability continues. The intensity of the moment does not translate into sustained execution change.

This is the paradox. The experience feels successful. The behavior does not materially shift.

The issue is not effort, budget, or production quality. It is structural design. Inspiration is treated as transformation. Alignment is mistaken for adoption. Applause is interpreted as progress.

Sales kickoff events rarely fail in the room. They fail in the weeks that follow.

(Read: The Ultimate Guide to Integrating Sales Enablement and Event Marketing)

This blog covers why motivation decays, why execution resists inspiration, and what must structurally change for sales behavior to actually move.

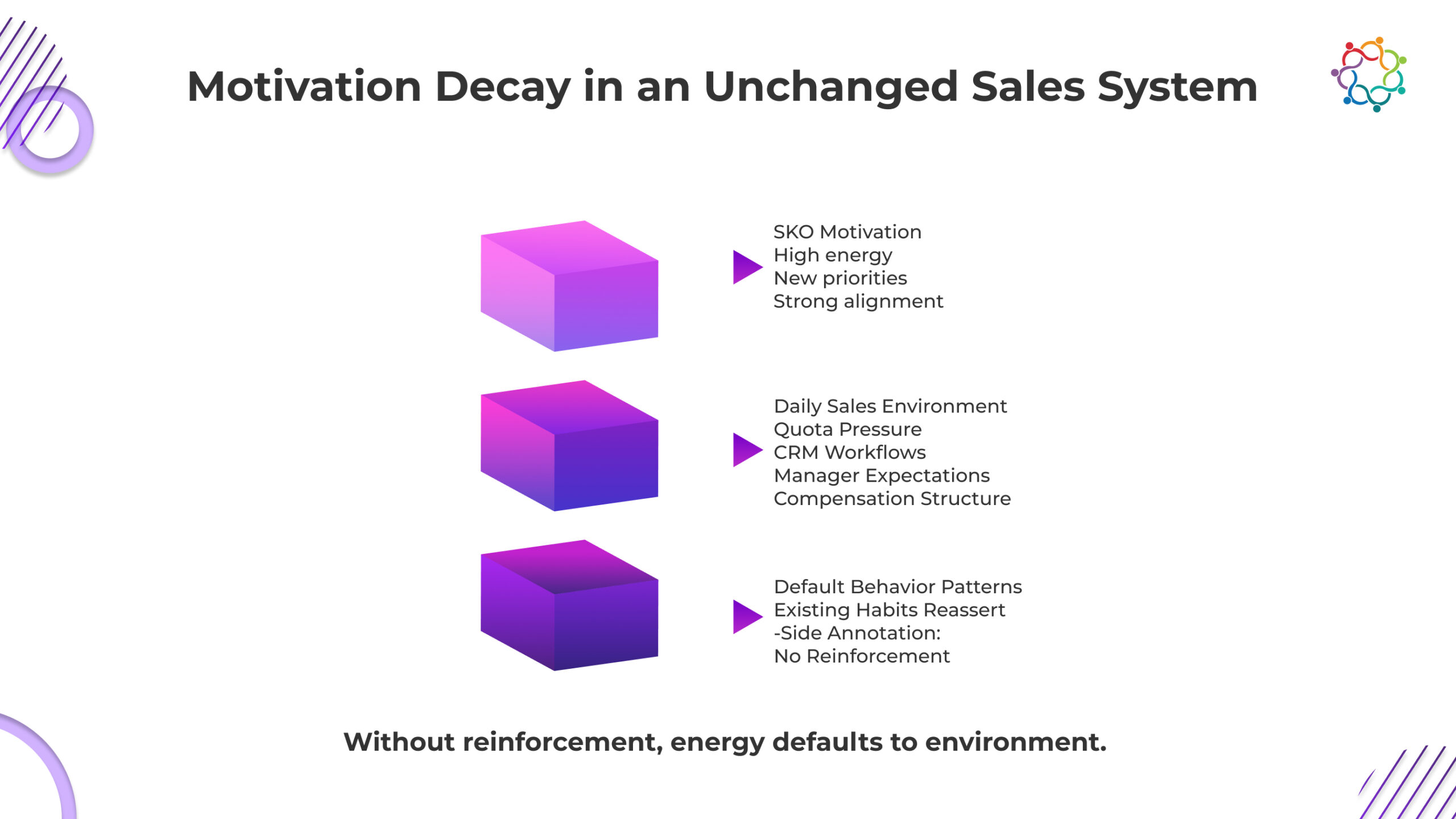

Motivation reliably spikes during the kickoff. The problem is that you expect that spike to survive in an unchanged environment. It will not.

Sales behavior is not shaped by how inspired your team felt for two days. It is shaped by quota pressure, compensation design, CRM workflows, pipeline scrutiny, and manager inspection. If none of those changed after the event, why would behavior change?

Motivation is temporary and context-bound. The context during the event is controlled, focused, and emotionally charged. The context back in the field is chaotic, metric-driven, and unforgiving. When those two environments collide, the operational one wins every time.

Reps do not abandon new priorities because they disagree. They abandon them because the system does not require adoption. Forecast calls still prioritize volume. Managers still coach the old way. Incentives still reward the same behaviors.

If the operating environment remains intact, old patterns will reassert themselves. Energy fades. Habits remain.

If you did not redesign how behavior is reinforced after the event, you did not design change.

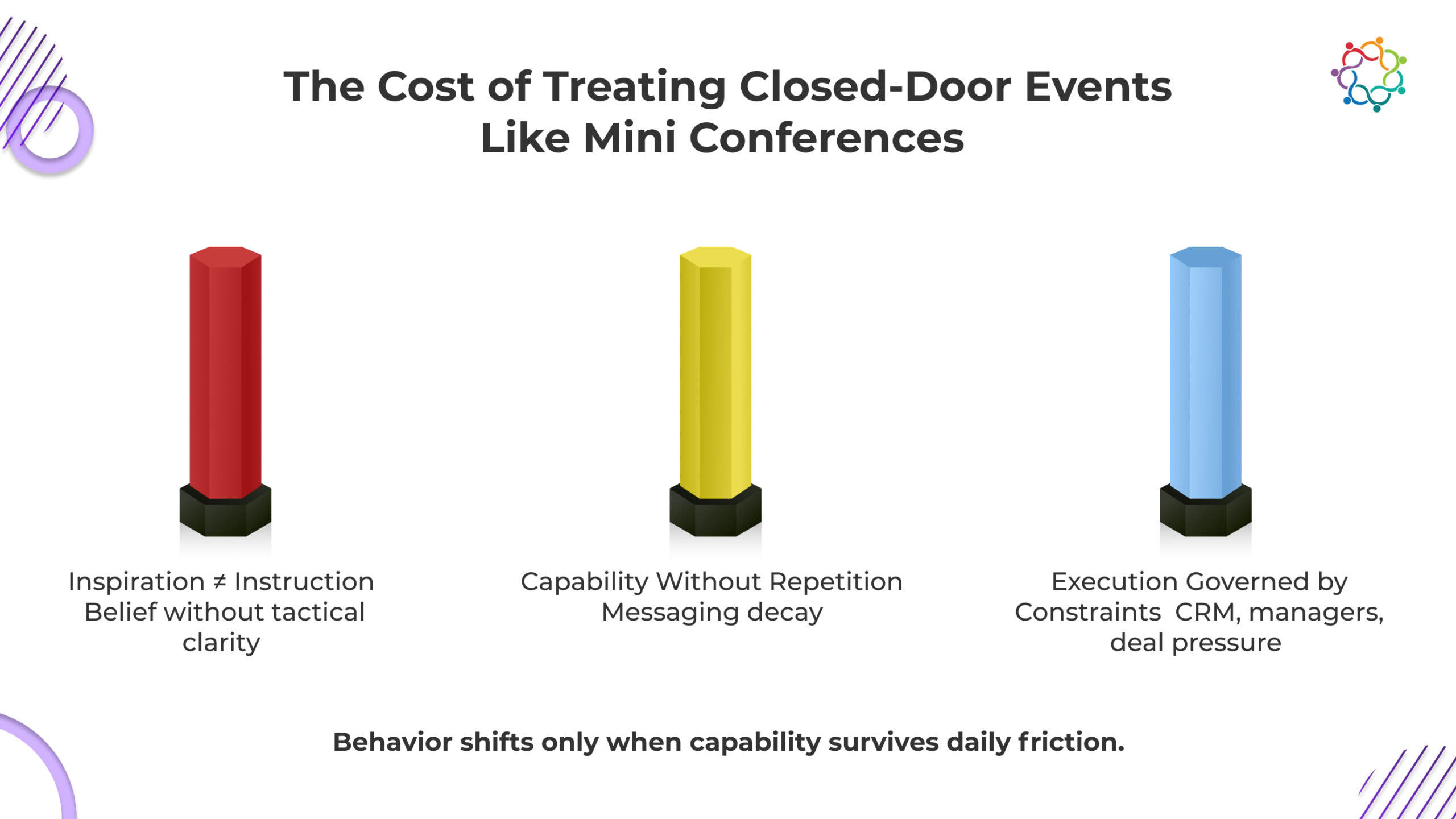

Sales kick-off events often blur the line between belief and behavior. Teams leave convinced the strategy is right. That conviction is mistaken for readiness. Agreement is not execution. Until priorities are translated into enforced daily actions, nothing materially changes.

Vision creates belief. It does not create skill. Reps may understand the new direction but remain unclear on what to do differently in live deals.

Key gaps typically include:

Without procedural clarity, sellers default to familiar routines. Alignment without instruction produces confidence, not capability.

Even when new frameworks are introduced, they fade without repetition. Memory weakens. Confidence drops. Quota pressure pushes reps back to proven scripts.

Common failure points:

What is not reinforced is not retained.

Selling behavior follows incentives and inspection. If CRM stages, pipeline reviews, and compensation plans remain unchanged, priorities remain unchanged.

Execution responds to:

If the system does not move, behavior will not move.

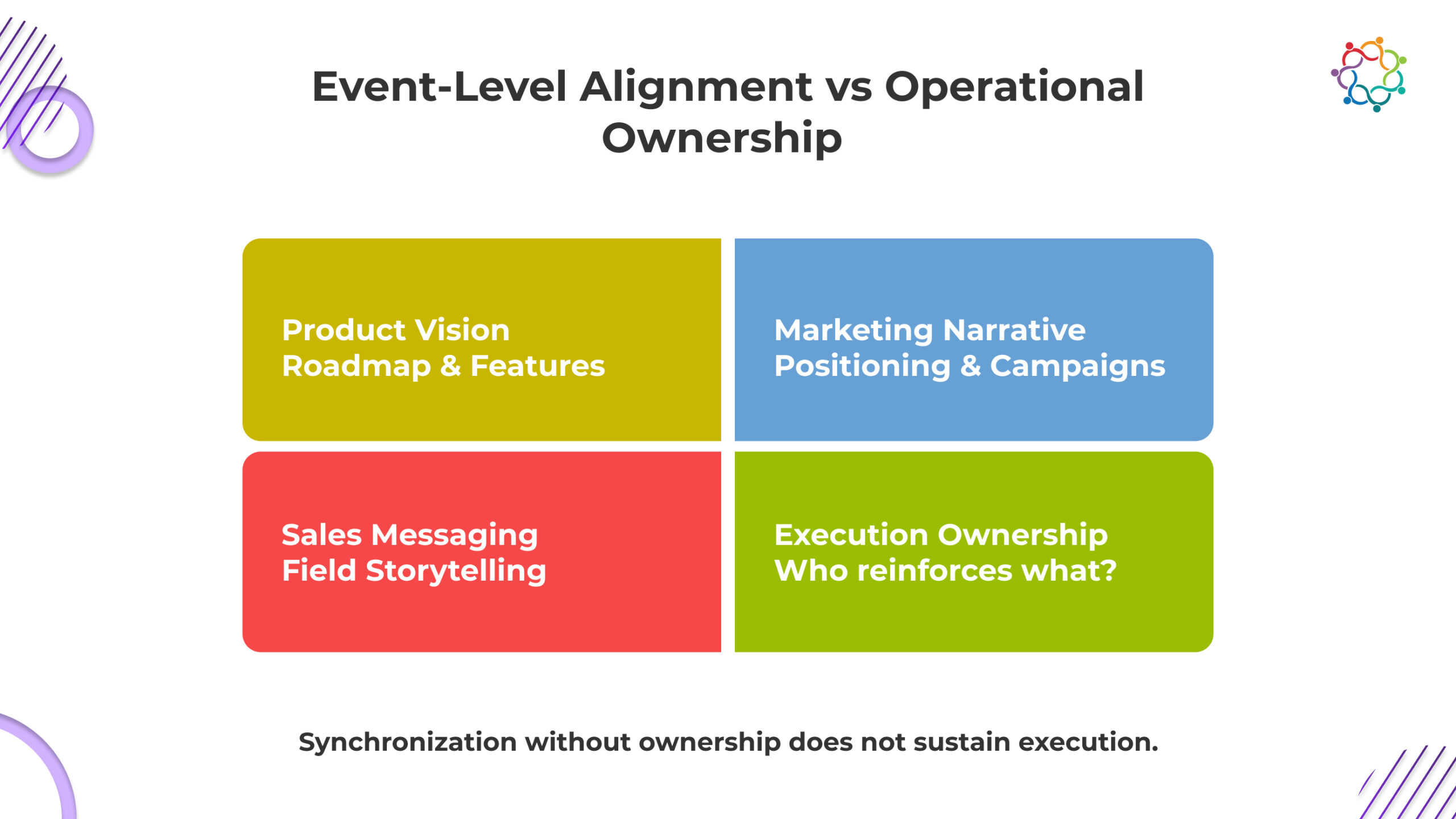

Alignment is frequently declared at the end of the event. Messaging appears unified. Strategy feels shared. Teams leave believing they are synchronized. But alignment inside a ballroom does not guarantee alignment inside a live deal. When cross-functional priorities are not translated into execution ownership, fragmentation resurfaces quickly.

Product roadmaps are often presented in terms of innovation and differentiation. What is missing is direct mapping to customer objections, competitive pressures, and pricing resistance. Without translating vision into field-level conversations, reps struggle to operationalize what they heard.

Marketing introduces refined positioning and value propositions. However, if those narratives are not tested against real buyer pushback, they remain theoretical. Messaging must survive scrutiny in live calls, not just on stage.

Leadership may announce new target segments or deal strategies. If CRM stages, qualification criteria, and compensation models remain unchanged, those priorities lack enforcement. Process must reflect strategy.

True alignment requires ownership beyond presentation. Product, marketing, and sales must co-own reinforcement. Without coordinated follow-through, alignment dissolves at first friction.

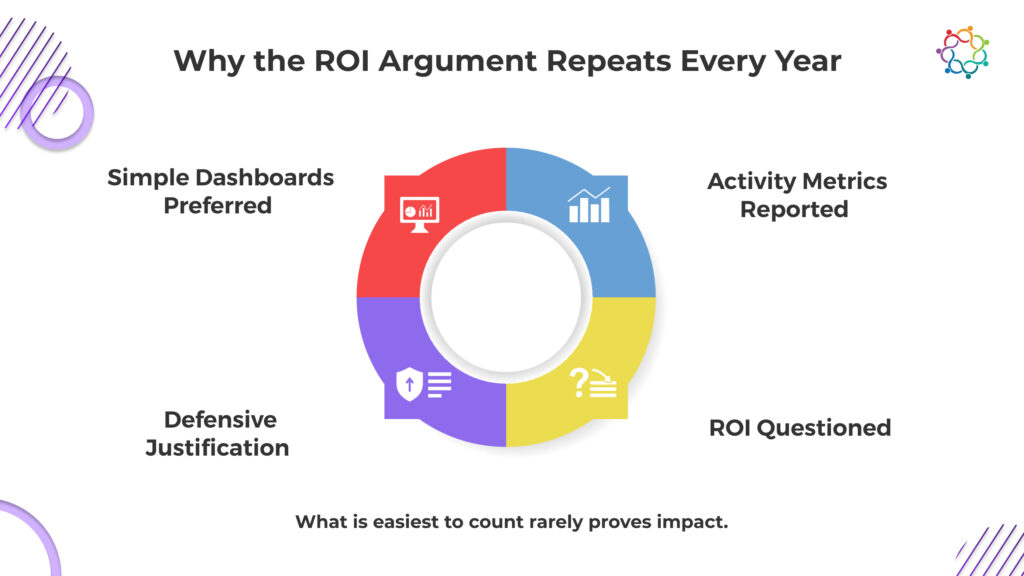

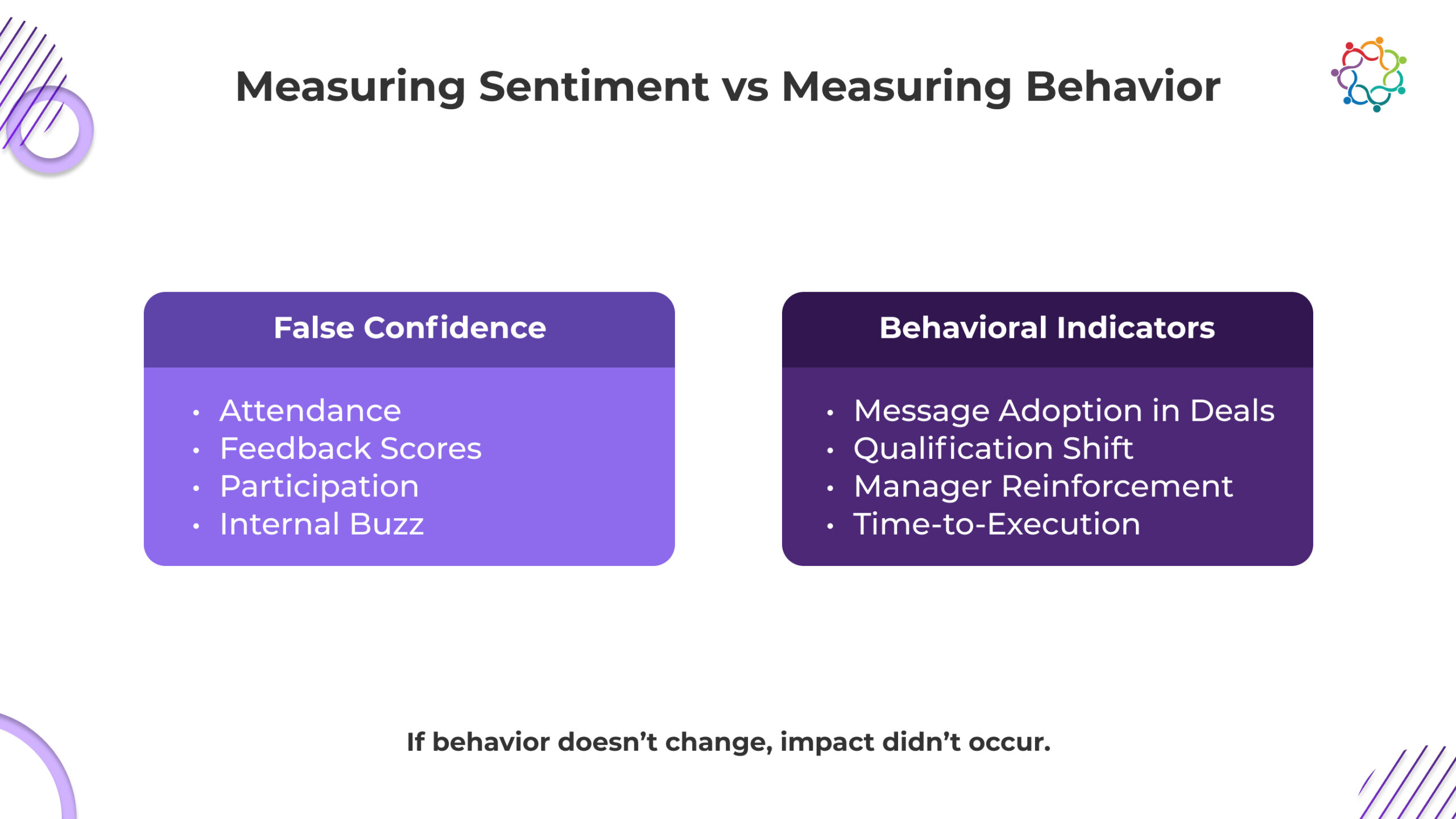

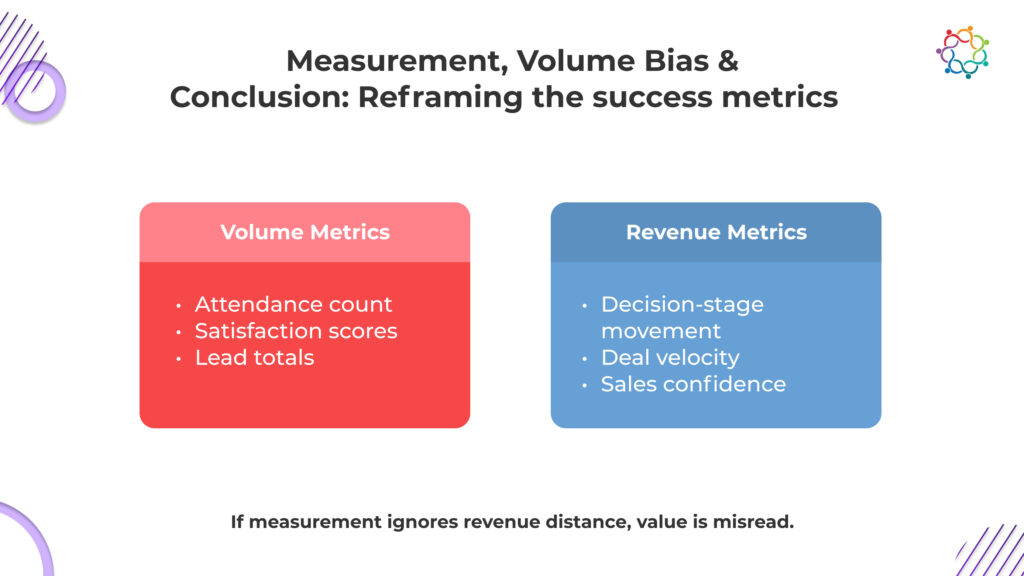

Organizations often measure what is visible during the event rather than what changes afterward. Attendance rates, participation levels, and session feedback scores create a perception of success. They capture sentiment. They do not capture adoption.

High attendance is expected. Positive feedback is common when events are well produced. Internal social sharing generates visible enthusiasm. These indicators feel reassuring because they are immediate and quantifiable.

However, they reflect emotional response, not behavioral shift. A rep can rate a session highly and never apply the content. Satisfaction does not equal implementation.

When leadership reviews these metrics, it reinforces a flawed assumption that energy equates to impact. This is signal versus sentiment confusion. Sentiment is easy to capture. Signal requires behavioral evidence.

If measurement frameworks stop at participation, the organization creates false confidence. The absence of behavior tracking ensures that adoption gaps remain invisible.

If the objective is sales behavior change, measurement must move closer to execution. Are new messaging frameworks appearing in call recordings? Has opportunity qualification become more consistent? Are managers reinforcing the new standards during pipeline reviews?

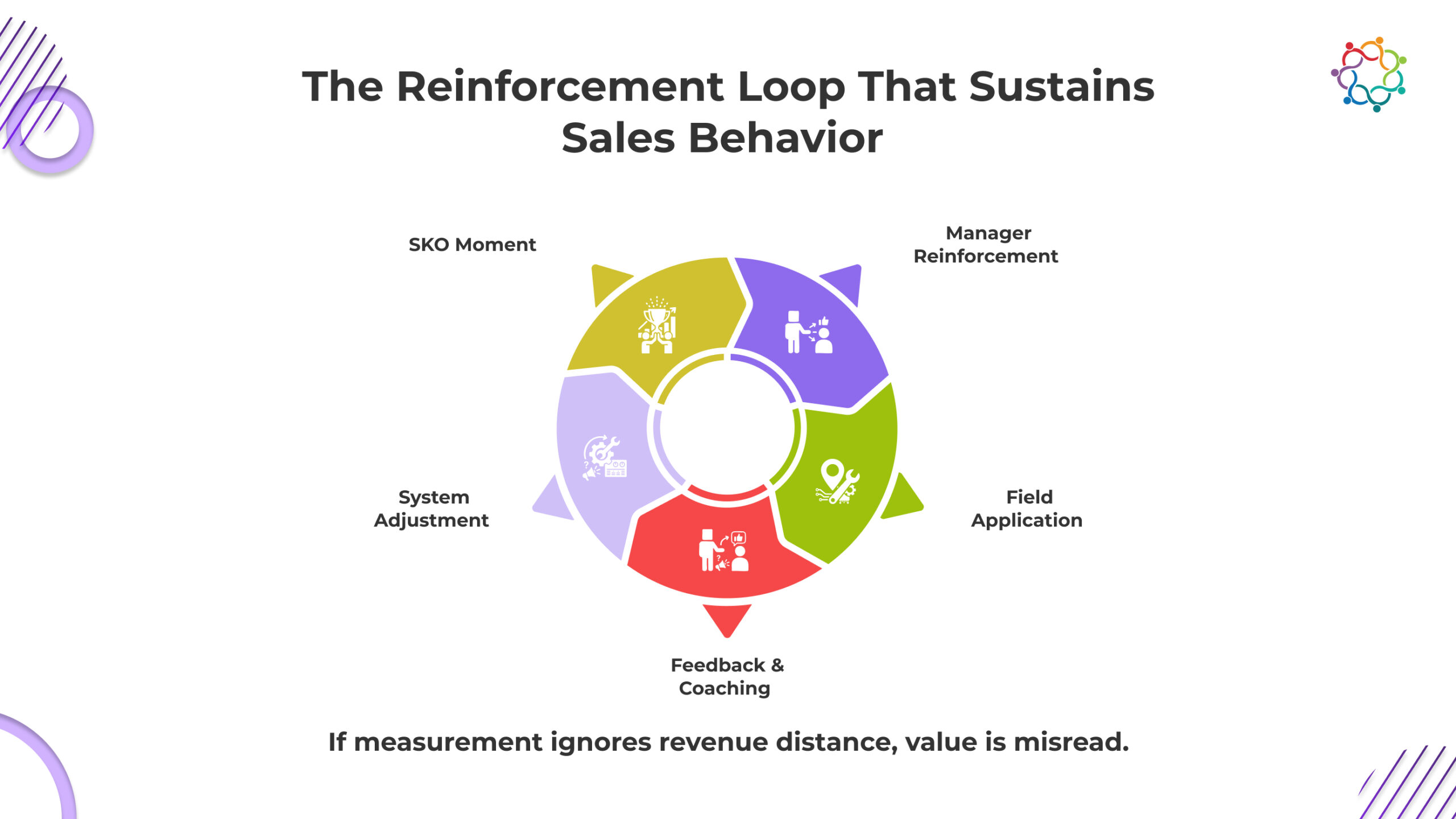

Time to execution after the event is a critical indicator. If new plays take months to appear in live deals, reinforcement is weak. Manager reinforcement consistency is another leading signal. When coaching sessions incorporate new priorities, adoption strengthens.

Changes in opportunity qualification patterns reveal a deeper impact. If teams are targeting different profiles or adjusting deal criteria as instructed, structural alignment may be taking hold.

If selling patterns remain identical, the event did not influence execution.
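Time to execution can be made concrete with a small calculation. The sketch below assumes you can record, per rep, the first date a new play was observed in a live deal (for example from call reviews), with `None` where it has not appeared yet. The function names and the input shape are hypothetical.

```python
from datetime import date
from statistics import median


def days_to_first_use(event_date, first_use_by_rep):
    """Map each rep to the number of days between the kickoff and the first
    observed use of the new play, or None if it has not been observed."""
    return {
        rep: (first_use - event_date).days if first_use is not None else None
        for rep, first_use in first_use_by_rep.items()
    }


def adoption_summary(event_date, first_use_by_rep):
    """Summarise how many reps have applied the play and how quickly."""
    lags = days_to_first_use(event_date, first_use_by_rep)
    observed = [days for days in lags.values() if days is not None]
    return {
        "adoption_rate": len(observed) / len(lags) if lags else 0.0,
        "median_days_to_first_use": median(observed) if observed else None,
    }
```

A low adoption rate or a long median lag would be consistent with the weak reinforcement described above. The thresholds that count as "low" or "long" are a judgment call for each organization.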

If you treat the kick-off as the peak of effort, you have already guaranteed its decline. Learning does not stabilize because people were attentive. It stabilizes because systems force repetition. Without structured reinforcement, what felt urgent on stage becomes optional in the field within days.

Reps do not ignore new priorities out of defiance. They ignore them because nothing in their daily environment requires adoption. Forecast calls do not reference the new qualification standard. Coaching sessions do not audit the updated messaging. Deal reviews do not penalize old patterns. In that vacuum, the familiar wins.

Post-event learning loops are not supplementary. They are the only mechanism that converts exposure into execution. Repetition inside real deals, manager-enforced feedback, and measurable application checkpoints determine whether behavior shifts. If reinforcement is inconsistent, decay is immediate.

Event excellence cannot compensate for operational neglect. If the weeks after the kick-off look identical to the weeks before it, the outcome will be identical as well.

This problem persists because the organization rewards the wrong outcome. You are measuring how the event felt, not what the field did afterward. As long as morale, attendance, and internal buzz are treated as proof of impact, you will continue mistaking energy for execution.

A high-energy room creates psychological relief. It feels like progress. But morale is not a leading indicator of pipeline quality or forecast accuracy. When you equate excitement with improvement, you avoid asking the harder question: Did selling behavior actually change?

If enablement teams are evaluated on session quality and participation rates, they will optimize for experience. Adoption tracking requires structural follow-through. If no one is accountable for behavioral reinforcement, the decay is inevitable.

Frontline managers shape daily execution. If they are not explicitly measured on reinforcing new priorities, they default to familiar coaching patterns. Without manager accountability, kick-off messaging becomes optional.

After the event ends, ownership becomes diffuse. Sales assumes enablement will follow up. Enablement assumes managers will coach. Leadership assumes alignment already happened. When reinforcement lacks a clear owner, motivation predictably collapses.

Sales kick-off events succeed as moments. They rarely succeed as systems. Energy peaks during the gathering because the context supports it. Behavior persists afterward because systems reinforce it.

If daily workflows, incentives, coaching rhythms, and metrics remain unchanged, selling patterns will remain unchanged. Motivation without reinforcement is temporary. Capability without repetition decays. Alignment without ownership fragments.

Sales leaders, revenue operations heads, and enablement managers must confront a direct question. Did anything structurally change after the event? If the answer is no, then execution will revert.

These events do not fail because they lack ambition. They fail because organizations overestimate the power of inspiration and underestimate the power of systems.

If nothing changes in how reps are coached, measured, and supported, nothing will change in how they sell. And if selling behavior does not change, revenue outcomes will not either.

Energy is easy to generate. Structural behavior change is not.

If nothing changes in how the system reinforces selling behavior, the kick-off changed nothing.


Private executive gatherings are often misunderstood because leaders approach them with assumptions shaped by large conferences. The moment an event becomes invite-only, expectations rise. Smaller room. Senior audience. Higher cost. Therefore, a higher visible return.

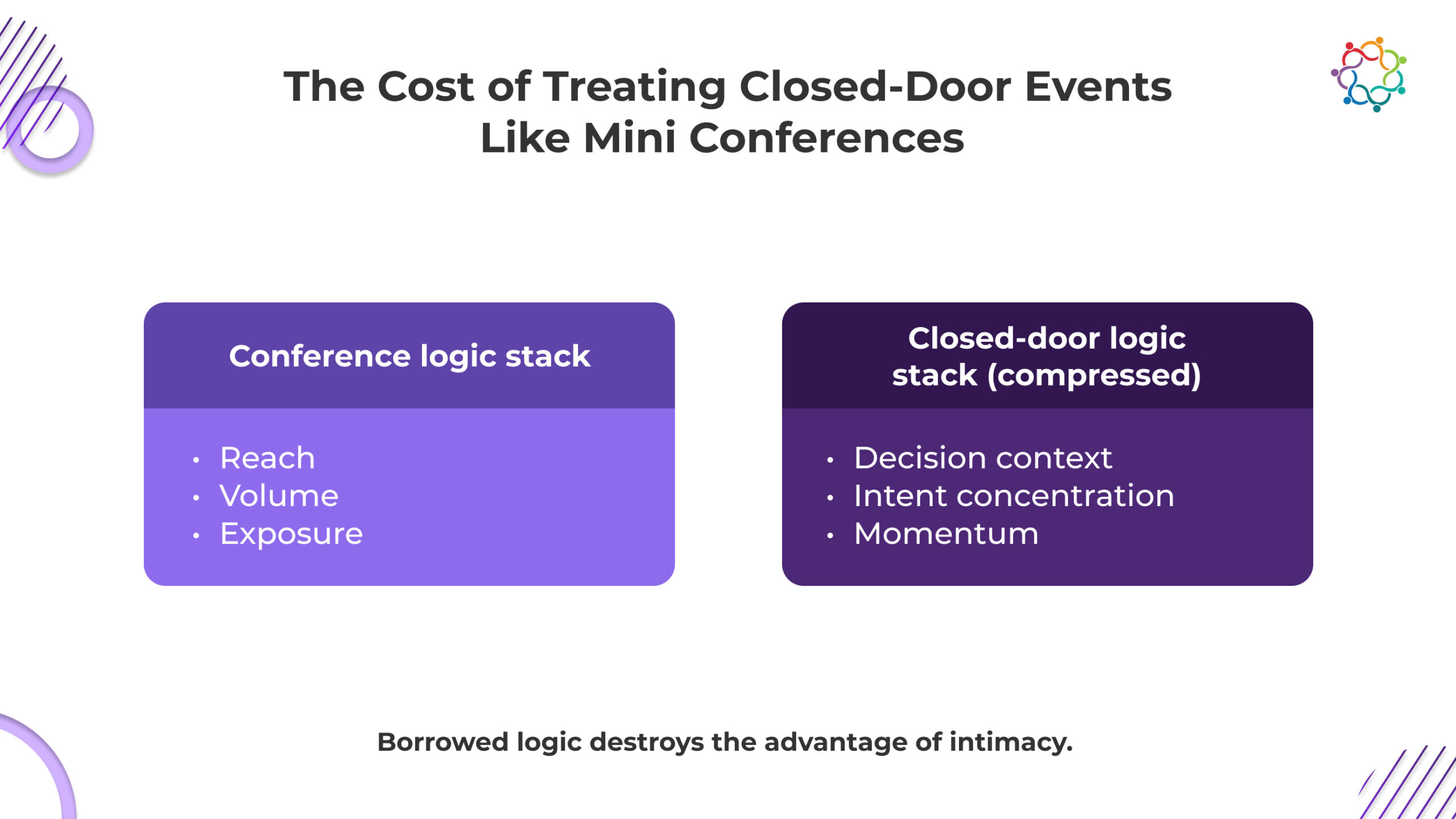

Closed-door events are not smaller conferences. They are a different revenue play. Yet teams apply conference logic to decision-stage environments. That assumption feels rational. It is not. This is not a scale issue. It is a revenue proximity issue.

These formats operate closer to active buying decisions than awareness programs. But they are measured using attendance volume, brand visibility, and post-event buzz. That mismatch distorts outcomes.

Teams expect exposure-stage signals from decision-stage conversations, then question the format when results feel inconsistent. These events fail not because they are small, but because leaders apply the wrong revenue lens.

This blog explains why misclassification quietly undermines deal acceleration.

Many revenue teams undermine closed-door formats by applying conference logic. If scale drives awareness and pipeline, a smaller version should deliver proportionate returns. That assumption does not just miss nuance. It delays deals, wastes senior access, and creates false confidence in pipeline health.

Conferences optimise for reach. These formats optimise for decision compression. Treating them as scaled-down conferences shifts focus to the wrong variables and stalls decision velocity inside active accounts.

Conference strategy rewards audience expansion. In private formats, expanding reach weakens intent concentration. When invitations prioritise impressive titles instead of live buying context, conversation depth collapses. The room looks strong on paper, but lacks commercial density. Senior access is spent without moving a single deal forward.

Large events generate brand lift and social proof. That logic becomes dangerous here. Private executive environments operate near deal acceleration. Measuring visibility instead of decision-stage movement produces misleading success signals. Teams report momentum while opportunities quietly stall.

Conference agendas centre on broad industry themes. In decision-proximate rooms, broad narratives delay urgency. Executives engage when discussions surface real constraints, trade-offs, and internal resistance. When content drifts into generic thought leadership, decision energy drops and active deals slow.

Attendance volume, satisfaction scores, and lead quantity belong to conference dashboards. They do not measure buying committee alignment or shifts in deal velocity. Applying these metrics protects optics while hiding commercial reality. The format appears successful even as the revenue impact weakens.

When conference thinking dominates, intimacy becomes cosmetic. Revenue declines not because the format is flawed, but because it was forced to perform a job it was never built to do.

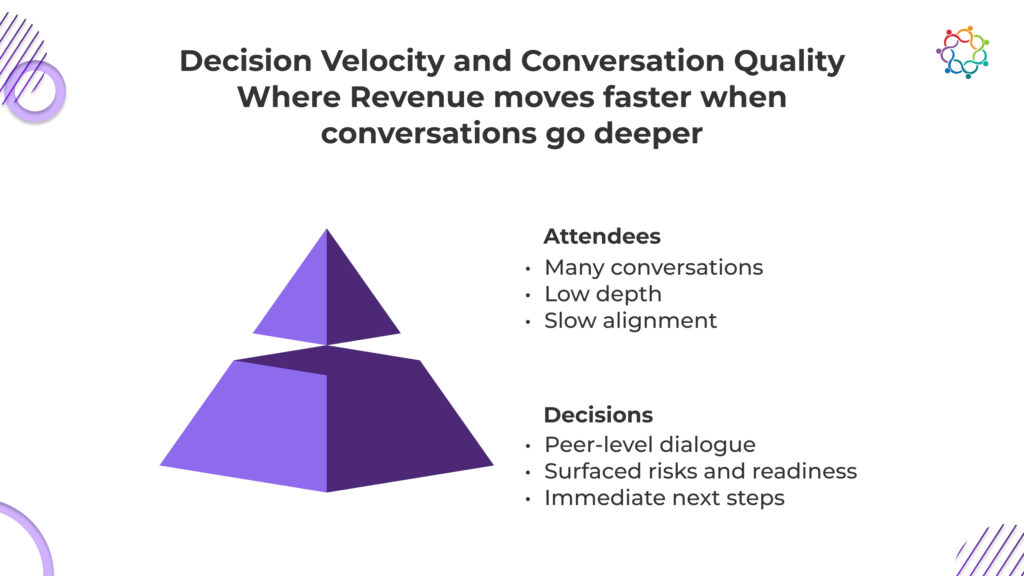

In revenue-proximate environments, speed is leverage. The primary advantage of private executive gatherings is not exclusivity or seniority. It is compression. When the right decision-makers are placed in a relevant context, alignment happens faster. That speed directly affects pipeline outcomes.

Attendance volume does not indicate commercial impact. Decision velocity does. The true question is whether the event shortens time-to-decision inside active accounts. When evaluated through this lens, smaller rooms frequently outperform larger conferences.

In smaller settings, buying committee dynamics surface naturally. Stakeholders voice constraints, trade-offs, and concerns in real time. This transparency reduces back-channel resistance that typically delays enterprise deals.

Executives move faster when uncertainty decreases. Hearing how peers are solving similar problems reduces perceived risk. This accelerates internal advocacy and strengthens executive conviction.

Decision energy is highest during and immediately after the gathering. When context is preserved, follow-up conversations are sharper and more action-oriented. Momentum does not need to be rebuilt because clarity was already established.

Closed-door formats succeed when they compress uncertainty. When that compression translates into shorter deal cycles, attendance becomes secondary to acceleration.
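One hedged way to check whether compression shows up in deal cycles is to compare median time-to-decision for deals in accounts that attended against a baseline group. The sketch below assumes each deal is an `(opened_on, decided_on)` pair of dates. The grouping into attendee and baseline deals is an assumption you would need to define yourself.

```python
from datetime import date
from statistics import median


def median_cycle_days(deals):
    """Median number of days from open to decision.
    `deals` is an iterable of (opened_on, decided_on) date pairs;
    an empty iterable raises statistics.StatisticsError."""
    return median((decided_on - opened_on).days for opened_on, decided_on in deals)


def decision_compression_days(attendee_deals, baseline_deals):
    """Positive result: attendee-account deals reached a decision faster
    (by this many days at the median) than the baseline group."""
    return median_cycle_days(baseline_deals) - median_cycle_days(attendee_deals)
```

This comparison is descriptive, not causal. Accounts invited to a closed-door event are usually selected because they are already close to a decision, so some of the gap reflects who was invited rather than what the event did.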

Most event discussions focus on networking. That framing is insufficient when revenue outcomes are at stake. What matters is not who met whom, but what surfaced during those conversations.

Conversation quality is a measurable revenue variable. Executive-level dialogue reveals readiness, resistance, and risk in ways no form fill ever can. The depth of discussion indicates where accounts actually sit in the buying journey.

High-quality conversations do three things:

Shallow conversations create false positives. They feel productive but generate weak commercial signals. Sales teams lose trust when follow-ups are based on surface-level engagement rather than real buying context.

Pipeline influence comes from what is said and surfaced, not who showed up. When conversations are structured around relevant business tension, they become diagnostic tools. They help teams understand which deals deserve acceleration and which require deeper work.

This is where executive engagement metrics matter. Not attendance counts, but conversation depth, relevance, and decision proximity.

Design is where closed-door events either protect or destroy revenue momentum. Not logistics. Not production quality. Design determines whether decision velocity increases or quietly stalls. Most teams do not have an execution problem. They have a structural one. Three failure patterns consistently surface.

The invite list determines intent concentration. Seniority is not intent. An impressive title does not equal an active decision.

When invitations prioritise prestige over live business tension, signal density collapses. The room looks credible but lacks revenue proximity. Deals do not move because the right buying context was never present.

Agendas are decision environments, whether teams admit it or not. When topics drift toward broad thought leadership, urgency fades.

Executives engage when discussions surface real constraints and trade-offs. When conversation stays theoretical, momentum slows. Active opportunities lose compression instead of gaining clarity.

Post-event engagement often restarts conversations instead of advancing them. Generic outreach erases context and weakens the alignment created in the room.

When follow-up fails to carry forward surfaced risks and implied next steps, velocity drops. Decision energy dissipates.

Every design choice compounds or corrodes momentum. In closed-door formats, there is no neutral ground.

Volume bias is deeply ingrained in event marketing. Bigger audiences feel safer. They produce more data points. But revenue impact is not linear. In fact, it often moves in the opposite direction.

Closed-door events thrive on intent concentration. When audiences are small and relevant, clarity increases. Sales teams trust signals from these environments because they are grounded in real conversations, not inferred interest.

Fewer data points can offer higher clarity because:

Ambiguous engagement erodes sales confidence. Clear signals accelerate action. This is why ten right conversations consistently outperform a hundred unclear ones.

High-intent B2B events do not scale outcomes by adding people. They scale outcomes by removing noise. This is uncomfortable for teams conditioned to equate reach with success. But revenue does not care about comfort. It cares about movement.

Leadership teams that understand this stop asking for volume and start asking for velocity.

Measurement determines whether a closed-door event is treated as a revenue instrument or a marketing expense. These formats appear to underperform not because they lack impact, but because teams measure the wrong outcomes.

Traditional dashboards reward participation and sentiment. Decision-proximate environments demand evidence of commercial movement. If you cannot see deal progression, velocity shifts, or sharper next steps, you are not measuring impact. You are measuring activity.

Frameworks that rethink executive event ROI through a revenue lens, such as “How To Host Closed-door Events For CXOs With Measurable ROI”, connect conversation depth directly to pipeline movement.

If accounts did not advance to the next stage, hesitation was not reduced. If sales cycles did not compress, uncertainty was not removed. If follow-up conversations lack specificity, alignment did not occur.

If measurement does not reflect proximity to revenue, it misrepresents value. Closed-door environments should be judged by acceleration and clarity, not attendance and applause.
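One way to check whether sales cycles compressed is to compare time-to-decision for accounts that were in the room against accounts that were not. The sketch below is a minimal illustration, not a definitive method. The record fields (`attended`, `opened`, `decided`) and all dates are hypothetical, and a gap between two small cohorts suggests a pattern rather than proving the event caused it.

```python
from datetime import date
from statistics import median

# Hypothetical deal records. "attended" marks accounts present at the event.
# A "decided" value of None means the deal is still open.
deals = [
    {"account": "A", "attended": True,  "opened": date(2025, 1, 6),  "decided": date(2025, 3, 3)},
    {"account": "B", "attended": True,  "opened": date(2025, 1, 13), "decided": date(2025, 3, 24)},
    {"account": "C", "attended": True,  "opened": date(2025, 2, 3),  "decided": None},
    {"account": "D", "attended": False, "opened": date(2025, 1, 6),  "decided": date(2025, 4, 21)},
    {"account": "E", "attended": False, "opened": date(2025, 1, 20), "decided": date(2025, 4, 14)},
]


def median_days_to_decision(records, attended):
    """Median days from opportunity open to decision for one cohort.

    Open deals are skipped. Returns None if the cohort has no decided deals.
    """
    durations = [
        (r["decided"] - r["opened"]).days
        for r in records
        if r["attended"] == attended and r["decided"] is not None
    ]
    return median(durations) if durations else None


in_room = median_days_to_decision(deals, attended=True)
not_in_room = median_days_to_decision(deals, attended=False)
print(f"In the room: {in_room} days, not in the room: {not_in_room} days")
```

With real data, the same comparison can be narrowed to accounts at the same pipeline stage so that the two cohorts are more comparable.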

Closed-door formats operate under different economics. They reward precision, context, and speed. When designed and measured correctly, they influence outcomes disproportionately to their size.

They are not awareness plays. They are decision acceleration mechanisms. Their value lies in how effectively they compress time, surface risk, and move deals forward inside active accounts.

If an event does not accelerate decisions, intimacy alone will not save it. Teams that understand this stop chasing scale and start engineering clarity.

If this reframing feels uncomfortable, it is likely because your measurement system rewards optics over acceleration.

For organisations studying how high-intent engagement and contextual follow-up integrate into revenue systems, platforms like Samaaro illustrate how events can function as embedded decision environments rather than standalone marketing moments.

Enterprise conferences sit at the intersection of brand ambition and revenue accountability. CMOs defend them as strategic platforms. Field marketing leaders manage complex logistics and stakeholder expectations. Demand generation teams are expected to translate them into measurable pipeline impact.

Yet the uncomfortable reality remains: most enterprise conference marketing initiatives struggle to clearly demonstrate pipeline influence. Not because they lack scale, attendance, or production quality. But because the structure of how they are designed filters out commercial signal long before revenue discussions begin.

Most enterprise conferences look successful in scale and fail in revenue influence. That contradiction is structural.

Large conferences often feel successful. Attendance numbers rise. Social engagement spikes. Leadership sees packed rooms and active booths. Internal dashboards glow with metrics that imply momentum. However, conference marketing ROI is often based on exposure signals rather than commercial clarity.

Consider what typically defines success:

These metrics show organizational effort, not pipeline influence. The illusion comes from visible scale, while true intent remains hidden. Only structured detection, deeper prioritization, and intent preservation turn visibility into revenue impact.

By the time leadership asks how the event influenced revenue, the influence window has already narrowed. Attribution ambiguity surfaces. Sales reports uneven follow-up outcomes. Teams rely on broad time-window models to prove a connection. The architecture assumed commercial relevance without engineering it.

Conferences rarely fail due to poor execution. They fail because exposure was prioritized over decision relevance from the start. That is why enterprise conference marketing can feel internally successful yet collapse under revenue scrutiny. Pipeline influence is not recovered after the event. It must be structurally protected before the first invitation is sent.

Conferences are not accidentally biased toward scale. They are built that way. Sponsors push for reach. Leadership asks for presence. Marketing reports brand amplification. Bigger audiences are celebrated, funded, and repeated. Intent density rarely appears in the approval deck. That preference shapes design decisions long before the first invitation goes out.

In many demand generation conferences, strategy centers on maximizing participation:

This is rewarded behavior. Larger rooms create easier narratives. Attendance growth signals momentum. Relevance requires exclusion, and exclusion reduces numbers. Most organizations choose scale.

Visibility scales because exposure does not require qualification. Intent does. Intent requires filtering and prioritization, and both shrink the numbers on the dashboard. So both are deprioritized.

When enterprise conference marketing is designed to maximize audience breadth, commercial density declines. Sales receive volume without clarity.

Demand generation teams wrestle with conference attribution challenges. CMOs are asked to explain revenue impact using exposure metrics that were never built to answer that question.

Pipeline visibility is mistaken for pipeline creation. Intent signals do not disappear by accident. They are buried by design. And that design is approved, funded, and repeated. What looks like growth is often signal dilution in disguise.

Most enterprise conferences report success using attendance-driven dashboards. Registration numbers, booth scans, and session turnout create an impression of momentum. However, these metrics rarely withstand leadership scrutiny when the conversation shifts from activity to revenue.

The core issue is not that attendance metrics are wrong. It is that they measure exposure, not intent. Pipeline influence depends on buying signals, decision readiness, and commercial prioritization.

A full venue signals interest in a topic, not intent to purchase. Conferences attract a mix of decision-makers, researchers, students, partners, and competitors. Attendance numbers flatten this distinction. Pipeline influence requires clarity on who is evaluating solutions now versus who is passively exploring.

High lead counts create internal confidence. Yet large volumes often dilute commercial quality. When everyone who interacts with the brand becomes a “lead,” intent density drops. This creates lead inflation, where the database grows but the concentration of revenue-relevant prospects shrinks.
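Lead inflation can be shown with simple arithmetic. The sketch below assumes a hypothetical measure, intent density, defined as the share of leads that meet whatever qualification bar the team uses. The lead counts are invented for illustration.

```python
def intent_density(total_leads: int, qualified_leads: int) -> float:
    """Share of leads that are revenue-relevant (hypothetical metric)."""
    if total_leads <= 0:
        raise ValueError("total_leads must be positive")
    return qualified_leads / total_leads


# A focused list: 200 leads, 40 of them qualified.
focused = intent_density(200, 40)

# An inflated list: every badge scan counted, 1000 leads, 50 qualified.
inflated = intent_density(1000, 50)

print(f"Focused list density:  {focused:.0%}")
print(f"Inflated list density: {inflated:.0%}")
```

In this example the database grows fivefold and the number of qualified leads rises slightly, yet the concentration of revenue-relevant prospects falls from 20% to 5%.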

Post-event reporting often relies on time-based attribution windows. If an opportunity is created within a certain period, the conference receives partial credit. But without clear behavioral indicators captured during the event, attribution becomes ambiguous. Leaders question whether the conference influenced the deal or merely coincided with it.
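The ambiguity of window-based attribution is easier to see in code. The following is a minimal sketch under stated assumptions: the 90-day window, the field names, and the account data are all hypothetical. It credits every opportunity created inside the window, then separates those backed by recorded on-site behavior from those that merely coincide with the event.

```python
from datetime import date, timedelta


def window_credited(opportunities, event_end, window_days=90):
    """Opportunities created within the attribution window after the event."""
    cutoff = event_end + timedelta(days=window_days)
    return [o for o in opportunities if event_end <= o["created"] <= cutoff]


def split_by_evidence(credited, on_site_signals):
    """Split credited opportunities by whether on-site behavior was recorded."""
    backed = [o for o in credited if on_site_signals.get(o["account"])]
    coincident = [o for o in credited if not on_site_signals.get(o["account"])]
    return backed, coincident


event_end = date(2025, 5, 15)
opportunities = [
    {"account": "Acme", "created": date(2025, 6, 2)},
    {"account": "Globex", "created": date(2025, 7, 20)},
    {"account": "Initech", "created": date(2025, 10, 1)},  # outside the window
]
# Hypothetical behavioral indicators captured during the event.
on_site_signals = {"Acme": ["pricing session", "demo request"], "Globex": []}

credited = window_credited(opportunities, event_end)
backed, coincident = split_by_evidence(credited, on_site_signals)
print("Credited by window:", [o["account"] for o in credited])
print("Backed by behavior:", [o["account"] for o in backed])
print("Coincident only:   ", [o["account"] for o in coincident])
```

The window alone credits two opportunities. Only one has behavioral evidence behind it, which is the distinction leaders ask about when they question attribution.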

Attendance reflects what already happened. Pipeline influence depends on what happens next. Without structured insight into attendee behavior, follow-up lacks direction. When early intent clarity is missing, the pipeline conversation becomes reactive rather than predictive. That gap is where most enterprise conferences lose their commercial credibility.

Pipeline failure rarely occurs in a single visible moment. It unfolds across a sequence of accepted weaknesses. The conference ends. Applause fades. Volume is reported. And then intent begins to erode inside the system that everyone agreed to use.

After most conferences, marketing transfers leads to sales in bulk. This is not a tooling limitation. It is a design choice. Context from sessions attended, conversations held, and behavioral signals collected is reduced to fields that fit cleanly into CRM. Depth is sacrificed for administrative efficiency.

What sales receive:

What they rarely receive is prioritization clarity. Which accounts showed repeated engagement? Which attendees consumed late-stage product content? Which interactions signaled evaluation urgency? Those answers often exist in fragments, but they are not operationalized.

Everyone knows this gap exists. It persists because the volume has already been counted as success.

When handoff fails, pipeline influence weakens immediately. Manual reconstruction replaces structured prioritization. Friction increases. Speed declines. Intent fades.

In enterprise conference marketing, handoff is treated as an administrative closeout task rather than a strategic bridge. This is where intent either survives or dies. Most organizations accept its erosion as normal.

Once leads enter CRM, prioritization logic determines commercial reality. If conference leads are scored uniformly, high-intent signals disappear inside aggregate volume. Intent density becomes mathematically invisible.

Sales teams respond rationally. They pursue clearer inbound signals or known accounts. Large conference lead lists become background noise unless explicitly weighted.

This is not a sales discipline problem. It is an organizational decision to value quantity over clarity. When prioritization collapses, momentum stalls. Opportunities that could have accelerated remain dormant. Attribution becomes diffuse because the system never elevated what mattered.

The final erosion point is follow-up decay. Generic sequences replace contextual relevance. Messaging ignores session behavior and expressed interest. Response rates fall.

At this stage, attribution ambiguity intensifies. Revenue leadership questions impact. Demand generation struggles to defend its influence. Sales reports inconsistent results.

Pipeline does not fail loudly. It erodes quietly across handoffs, collapsed prioritization, and signal loss. And it erodes in ways the organization has repeatedly tolerated.

If the commercial narrative of enterprise conference marketing collapses after the event, it is not because intent was absent. It is because the system allowed intent to disappear and moved on once the attendance numbers were shared.

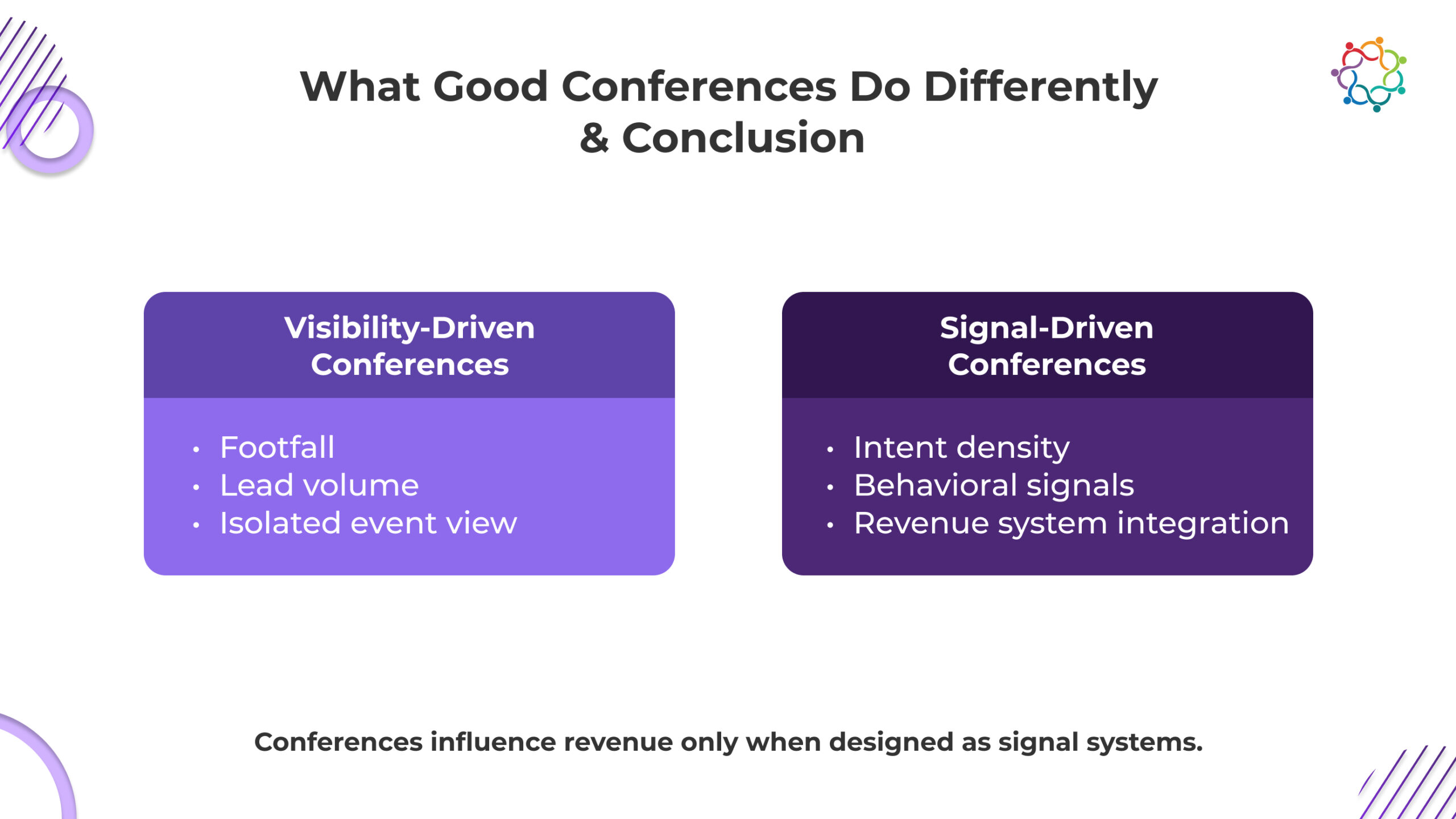

High-performing conferences do not look dramatically different on the surface. They may have similar scale and production value. The difference lies beneath the experience layer.

Poorly performing conferences optimize for:

High-performing conferences optimize for:

This distinction changes the operating model. High-performing conferences do not celebrate total attendance. They measure commercial concentration. They rank engagement by depth and buying relevance instead of treating every badge scan equally. They do not send raw lead lists to sales; rather, they transfer prioritized intelligence.

Conference marketing is designed backward from commercial action. The core question is simple: which accounts move next, and why?

Behavioral signals are structured, not stored. Session participation, repeat engagement, content consumption, and account-level activity are translated into ranked outputs. Sales receives context, not contacts. Demand generation tracks progression, not just response rates.
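As a rough illustration of translating behavioral signals into ranked outputs, the sketch below rolls contact-level signals up to the account and keeps the context alongside the score. The signal names, weights, and accounts are all hypothetical. A real model would be tuned to the organization's own buying patterns.

```python
from collections import defaultdict

# Illustrative weights only: later-stage behavior counts for more.
SIGNAL_WEIGHTS = {
    "session_attended": 1,
    "repeat_visit": 2,
    "late_stage_content": 3,
}


def rank_accounts(events):
    """Aggregate (account, contact, signal) tuples into a ranked account list.

    Each output row keeps the reasons behind the score, so sales receives
    context rather than a bare contact list.
    """
    scores = defaultdict(int)
    context = defaultdict(list)
    for account, contact, signal in events:
        scores[account] += SIGNAL_WEIGHTS.get(signal, 0)
        context[account].append(f"{contact}: {signal}")
    ordered = sorted(scores, key=lambda a: scores[a], reverse=True)
    return [
        {"account": a, "score": scores[a], "context": context[a]}
        for a in ordered
    ]


events = [
    ("Acme", "cfo", "session_attended"),
    ("Acme", "cfo", "late_stage_content"),
    ("Acme", "vp_ops", "repeat_visit"),
    ("Globex", "analyst", "session_attended"),
    ("Initech", "cto", "repeat_visit"),
    ("Initech", "cto", "session_attended"),
]

ranked = rank_accounts(events)
for row in ranked:
    print(row["account"], row["score"], row["context"])
```

Scoring at the account level rather than the contact level is a deliberate choice here: engagement from several stakeholders at one account adds up, which a flat badge-scan list would hide.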

They consciously sacrifice vanity scale to protect commercial signal. Scale without intent clarity weakens pipeline influence. Signal protection, not spectacle, defines performance.

For conferences to influence the pipeline, they must be designed as part of the revenue system, not as standalone marketing moments. When events operate in isolation, any commercial signal generated on-site weakens once the experience ends.

Treating conferences as pipeline infrastructure shifts the focus from execution excellence to signal continuity. The value of the event is determined not by what happens during the conference, but by how effectively intent survives and travels into demand and sales systems.

In high-performing organizations, conferences function as structured input layers into the broader demand engine. They are designed to feed sales and marketing systems with prioritized intelligence. This means engagement data is captured in a way that directly supports downstream decision-making, rather than existing only as post-event reports.

Most conferences generate intent that disappears at the badge scan. Behavioral indicators such as session depth, repeat engagement, and content interaction are rarely preserved in usable form. Pipeline infrastructure ensures these signals survive the event and remain accessible for prioritization, follow-up, and attribution.