When a business makes a purchase, the stakes are much higher than when an individual buys something for personal use. Enterprise choices often involve large financial commitments, operational risk, and long-term dependence on the chosen solution. These decisions cannot be made quickly or without careful evaluation.

Multiple stakeholders typically participate in the process. Technical teams assess how well the product works and how easily it integrates. Finance leaders examine costs, potential returns, and risks. Executive leadership evaluates how well the purchase fits the organization’s broader strategic goals. Each layer of evaluation adds more discussion, validation, and internal coordination.

Budgets also require formal approval cycles, and procurement processes add further scrutiny before a final commitment can be made.

In B2B markets, buying decisions are rarely moments. They are processes.

These structural realities explain why enterprise purchasing timelines extend across months. This blog explores how events influence these long evaluation journeys and help buyers move toward confident decisions.

Long buying cycles create a difficult marketing challenge. Maintaining meaningful engagement across extended evaluation periods is far more complex than generating initial awareness.

Buyer attention naturally fluctuates during long decision processes. Prospective customers research options, pause discussions internally, return to evaluation, and revisit earlier assumptions. During these gaps, marketing visibility can fade.

Digital channels provide useful information but often struggle to sustain deeper engagement across months of decision activity.

Organizations must therefore maintain interactions that remain relevant throughout the journey. The goal is not constant promotion but consistent presence during key evaluation stages.

Events become particularly valuable in this context because they create concentrated engagement opportunities. Instead of relying solely on passive information consumption, buyers participate in environments where questions can be explored, perspectives exchanged, and relationships developed.

These interactions help sustain relevance when long sales cycles might otherwise weaken buyer attention.

Within extended B2B buying cycles, certain moments carry greater strategic importance than others. These moments typically occur during evaluation, comparison, and validation stages of the buyer journey.

This is where B2B event marketing often plays a meaningful role.

Events frequently align with key decision points in the evaluation, comparison, and validation stages.

These environments allow buyers to deepen their understanding beyond what written content or digital presentations can provide.

Events create concentrated interaction moments within otherwise slow buying journeys.

Instead of gathering fragmented information across multiple channels, buyers engage directly with experts and other stakeholders. This interaction helps clarify uncertainties that often delay enterprise purchasing decisions.

As a result, events do not replace other marketing channels. They act as important checkpoints where deeper evaluation and information validation can occur.

Buyers require environments where claims can be challenged and expertise can withstand scrutiny in real time. This is difficult to achieve through static content or one-way communication.

B2B event marketing creates these environments by enabling direct interaction between buyers and experts. During evaluation, buyers ask detailed questions, test assumptions, and observe how vendors respond to real business problems.

In these moments, marketing is no longer about messaging. It becomes a live validation of competence, credibility, and strategic thinking.

Buyer Interaction in Digital Channels vs Events

| Digital Channels | Events |
| --- | --- |
| Information delivery | Direct conversations |
| Limited real-time interaction | Multi-directional interaction |
| Mostly one-to-many communication | Peer validation and expert discussion |

Enterprise purchasing rarely involves a single decision-maker. Most B2B buying groups include individuals with different responsibilities and priorities.

Typical participants include technical teams, finance leaders, executive leadership, and procurement.

Before endorsing a purchase decision, each stakeholder needs different information.

Events offer a context for these viewpoints to come together. Rather than evaluating a vendor in isolation, buying groups often attend industry meetings, briefings, or roundtables together.

In this setting, stakeholders can interact with peers from other organisations and vendor representatives while discussing issues as a group.

Internal alignment within buying groups is frequently accelerated by B2B events.

When multiple decision participants experience the same discussion or demonstration, misunderstandings decrease. Stakeholders hear identical explanations, ask questions simultaneously, and evaluate responses together.

This shared exposure can reduce internal friction that frequently slows enterprise purchasing decisions. Instead of each stakeholder forming independent impressions, events help buying groups build a shared understanding of potential solutions.

In long sales cycles, this alignment becomes a critical step toward eventual purchase decisions.

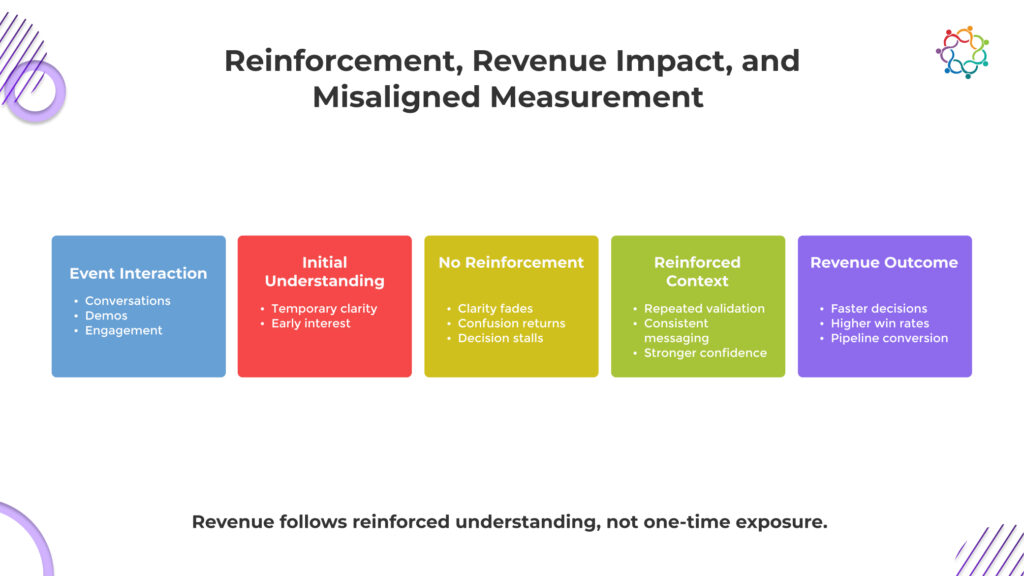

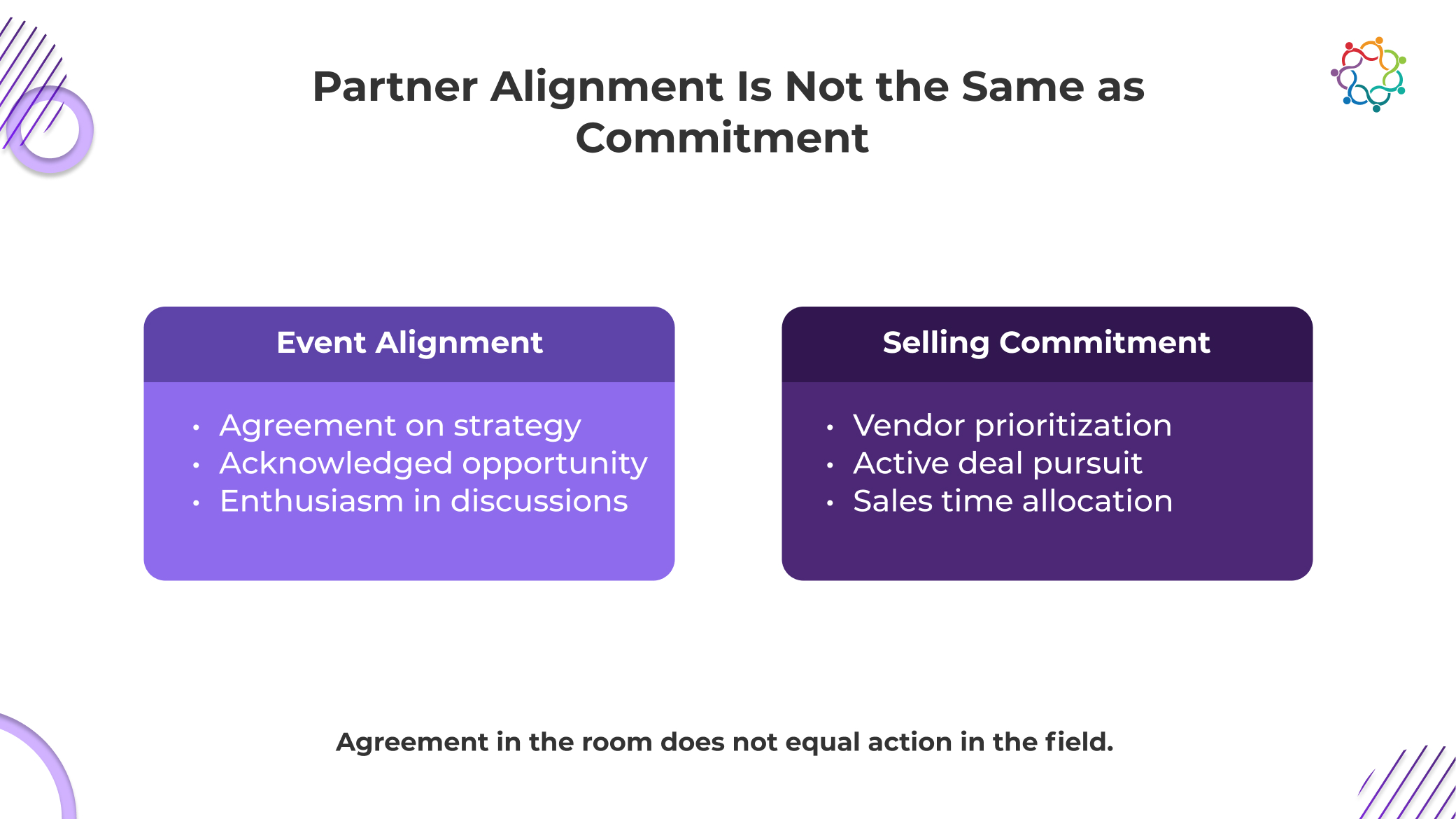

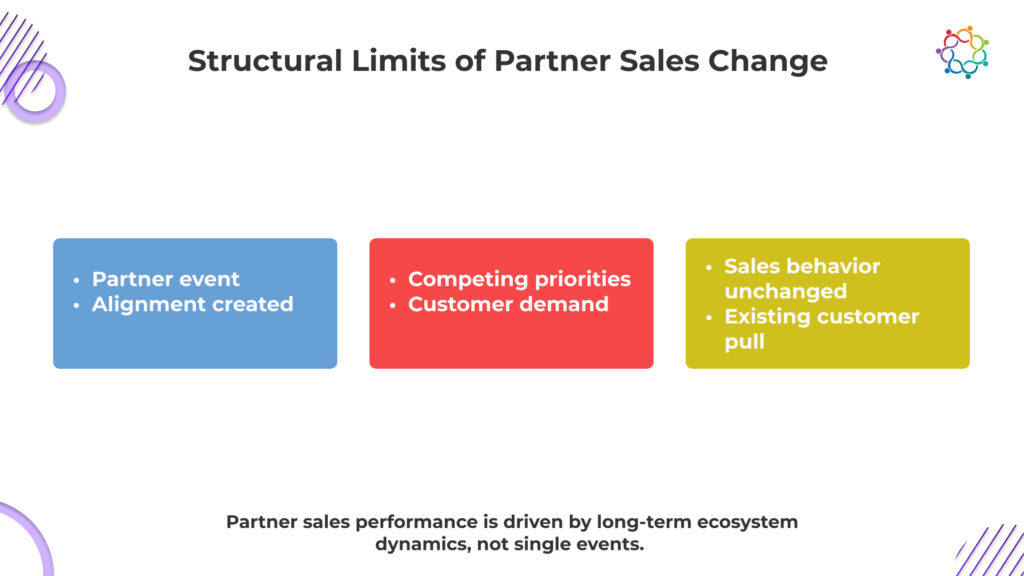

Despite their strategic importance, events are often misunderstood. Some organizations expect them to generate immediate revenue or rapid deal closures.

This expectation conflicts with how enterprise buying actually works.

Complex B2B purchases require multiple layers of evaluation: technical review, financial analysis, executive alignment, budget approval, and procurement.

Even when a buyer becomes interested during an event interaction, these processes still take time.

B2B event marketing influences perceptions, understanding, and confidence rather than closing transactions directly.

Expecting immediate revenue from B2B events misunderstands how enterprise buying works.

Events typically occur during evaluation stages rather than final procurement decisions. Buyers attend to gather insight, compare vendors, and test assumptions.

The impact of these interactions becomes visible later in the sales cycle when buying groups narrow their options and move toward vendor selection.

Viewed through this lens, events support pipeline progression rather than instant conversion.

The real strategic impact of events emerges across time rather than in a single interaction.

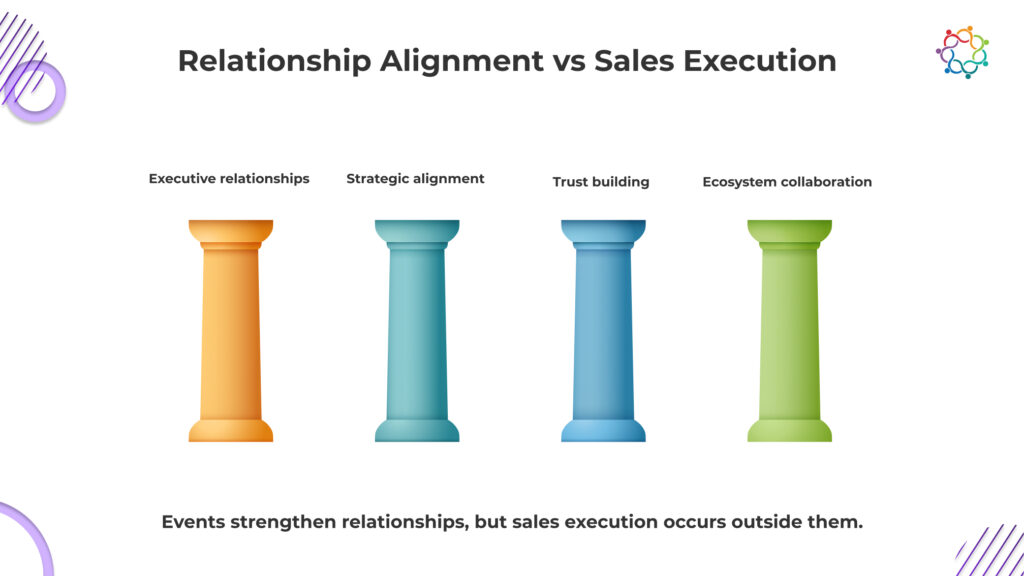

Buyers navigating long evaluation processes often participate in multiple industry or vendor events throughout their decision journey. Each interaction contributes additional context, insight, and familiarity.

Over time, several patterns begin to develop:

Through these repeated interactions, relationships gradually strengthen.

This cumulative dynamic explains why B2B event marketing remains an important component of long sales cycles. It allows vendors to remain present as buyers refine their understanding and compare potential partners.

As buying journeys unfold, events provide recurring opportunities for:

Each interaction builds upon previous conversations rather than restarting the relationship.

By the time buyers approach final vendor selection, they may have accumulated months of interaction history through conferences, briefings, or industry gatherings.

Influence compounds through these encounters, shaping perception and decision confidence.

Enterprise purchasing decisions unfold slowly because they involve risk, investment, and organizational alignment.

Marketing cannot force these timelines to accelerate. It can only influence how buyers navigate them.

During the review process, B2B event marketing helps by creating opportunities for buyers to deepen their knowledge, validate information, and strengthen relationships.

These interactions do not have instant outcomes. Their influence becomes visible as buying groups progress toward confident decisions.

B2B event marketing is not designed for immediate results.

Its value lies in shaping the decisions that take time to form.

Most companies believe event marketing means planning events. The conversation quickly shifts to venues, catering, registration numbers, and event-day coordination. When people talk about success, they usually refer to how smoothly the event ran or how many people showed up.

But none of these explains the real purpose of the event.

Execution answers how an event happens. It does not explain why the event exists in the first place. Without this clarity, events become well-organised activities rather than marketing initiatives that influence buyers and accounts.

This confusion is why B2B event marketing is frequently reduced to operational work instead of being treated as part of a broader marketing strategy.

Logistics enable events. Strategy defines their role.

This blog explores what B2B event marketing actually means and why the distinction matters.

B2B event marketing is the strategic use of events to influence buyers within target accounts across long sales cycles. It is not defined by attendance or execution quality, but by its ability to shape how buying decisions progress.

In B2B environments, purchasing decisions involve multiple stakeholders, extended evaluation periods, and internal alignment before commitment. Events function within this context as structured interaction points where buyers engage directly, validate assumptions, and assess credibility.

The objective is not to generate footfall, but to drive engagement that moves conversations forward within accounts. B2B event marketing exists to influence how decisions evolve, not to measure how many people attended.

B2B buying decisions are rarely simple. They involve multiple stakeholders, large financial commitments, and long evaluation cycles where companies must justify their choices internally. Under these conditions, information alone is not enough for buyers to move forward.

Digital channels are excellent at distributing information. They create awareness, educate audiences, and introduce companies to potential buyers. But awareness does not resolve the deeper questions buyers have when the decision carries real business risk.

Buyers want to understand the people behind the company, the depth of its expertise, and whether its thinking aligns with their problems. These judgments are difficult to make through content alone.

Events create environments where these evaluations can happen directly. Buyers interact with experts, discuss real challenges, and observe how companies think in unscripted conversations.

That shift from exposure to interaction is why events remain essential. They create the depth of engagement that complex B2B decisions require.

Confusion between event execution and marketing strategy leads many organisations to conflate two completely different functions.

Event management is an operational function. It focuses on venues, scheduling, registrations, and on-site coordination to make sure everything runs smoothly. Its role is execution.

Event marketing is strategic. It determines which accounts should attend, what conversations buyers need to have, and how the event supports the purchase process.

The order of these priorities cannot be reversed. Strategy must define the event before execution delivers it.

A marketing campaign can fail even if the event goes off without any issues. Full rooms, smooth logistics, and positive feedback do not prove buyer engagement or decision progress.

Execution quality shows operational competence.

Marketing effectiveness is measured by buyer influence.

| Event Management | B2B Event Marketing |
| --- | --- |
| Venue, logistics, scheduling | Buyer engagement strategy |
| Event operations | Marketing influence |
| Registration numbers | Account participation |
| Execution quality | Buyer decision impact |

This distinction clarifies why operational success alone cannot determine whether an event contributed to business outcomes.

Most B2B purchases do not happen because someone read a webpage or downloaded a report. They move forward when buyers start trusting the company behind the message. That kind of confidence rarely builds through passive channels alone.

Events create space for buyers to see a company more clearly. They ask questions, challenge ideas, and observe how experts respond to real business problems. In these moments, buyers are not listening to prepared messaging. They are observing how a company actually thinks.

Events also bring in peer perspectives. When buyers hear how other organisations are approaching similar challenges, it often sharpens or reshapes the discussions happening inside their own teams.

This is where real influence begins. Buyers are not just collecting information. They are judging credibility, expertise, and fit.

Events shape those judgments. And those judgments are what move decisions forward.

The strategic purpose often disappears from planning discussions when organisations treat events primarily as operational projects. Marketing teams focus on timelines, vendor coordination, and attendance goals while the objective of buyer influence remains unclear.

This approach creates several common patterns, such as:

In many cases, marketing teams celebrate successful execution while sales teams struggle to connect the event to real buyer progression.

The issue is not that the events were poorly organised. The issue is that they were never designed to influence the buying process in the first place.

When events are treated as logistics projects, marketing impact becomes accidental.

Without strategic framing, events become isolated activities rather than structured touchpoints within the broader revenue journey.

When events are planned strategically, they stop being isolated activities and become interaction points in the revenue process. The focus shifts from planning events to ensuring that businesses and potential customers have meaningful interactions.

Effective event marketing strategies focus on a few key areas:

Events become moments where relationships deepen and decision-making groups align around specific challenges.

From this perspective, events are also part of a larger marketing ecosystem. Digital channels raise awareness and spread information, while events provide spaces where that information can be discussed, validated, and explored.

Organisations that adopt this view no longer treat events as separate campaigns. They position them as strategic touchpoints that create meaningful interactions between businesses and potential customers and influence how buyers navigate lengthy evaluation processes.

In this role, events contribute directly to account engagement and pipeline momentum.

Many organisations claim to run successful events. Venues are full, agendas run on time, and attendee feedback looks positive. Yet none of these signals confirms whether the event influenced buyers. When strategy is replaced by logistics thinking, companies measure activity instead of impact. The result is predictable. Events appear successful operationally while contributing little to real buyer progression.

When logistics thinking dominates, attendance numbers become the primary measure of success. Marketing teams focus on filling rooms rather than ensuring the right accounts are present. High turnout may look impressive, but it reveals nothing about buyer engagement or decision movement.

If events are treated as operational projects, marketing teams naturally optimise logistics. They improve registration flows, scheduling, and the event experience. None of these improvements guarantees meaningful buyer conversations or account engagement.

Without strategic intent, events rarely align with sales priorities. Sales teams need opportunities to progress conversations with target accounts. Logistics-driven events prioritise scale and attendance, which often leaves the most important buyers absent.

An event can run perfectly and still deliver zero marketing impact. Smooth execution does not mean buyers changed their perception or moved closer to a decision. When organisations fail to separate strategy from logistics, they celebrate events that accomplished nothing commercially.

Venues, agendas, and attendance figures do not define an event. They describe implementation, not marketing purpose.

Events are truly valuable when they have an impact on buyers within target accounts. They create environments that enable deeper dialogue, build trust, and align decision-making groups around solutions.

When organisations recognise this difference, events shift from operational initiatives to strategic engagement points within the revenue journey.

When strategy disappears, events become logistics exercises.

When strategy leads, events become one of the most powerful channels in B2B marketing.

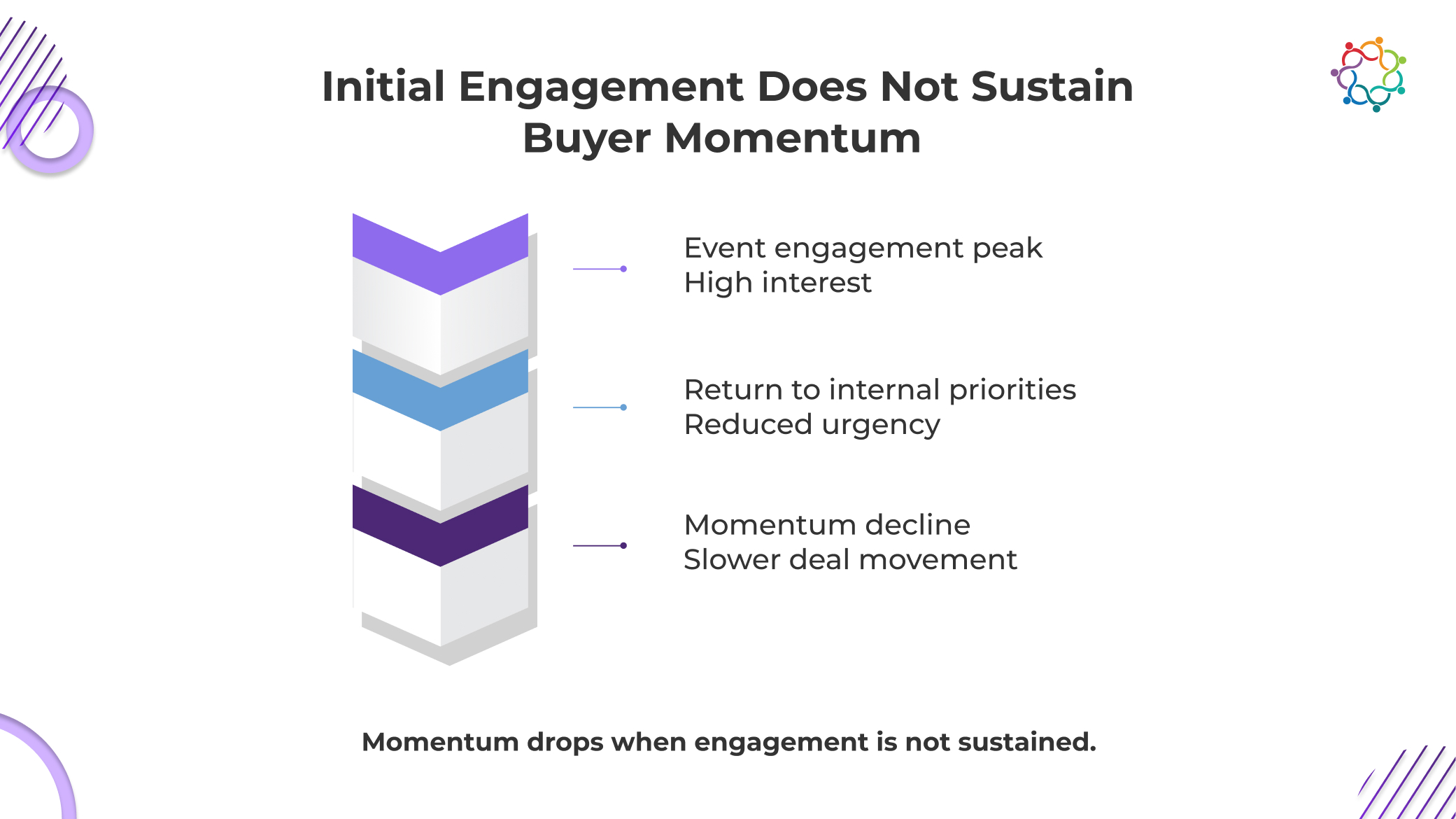

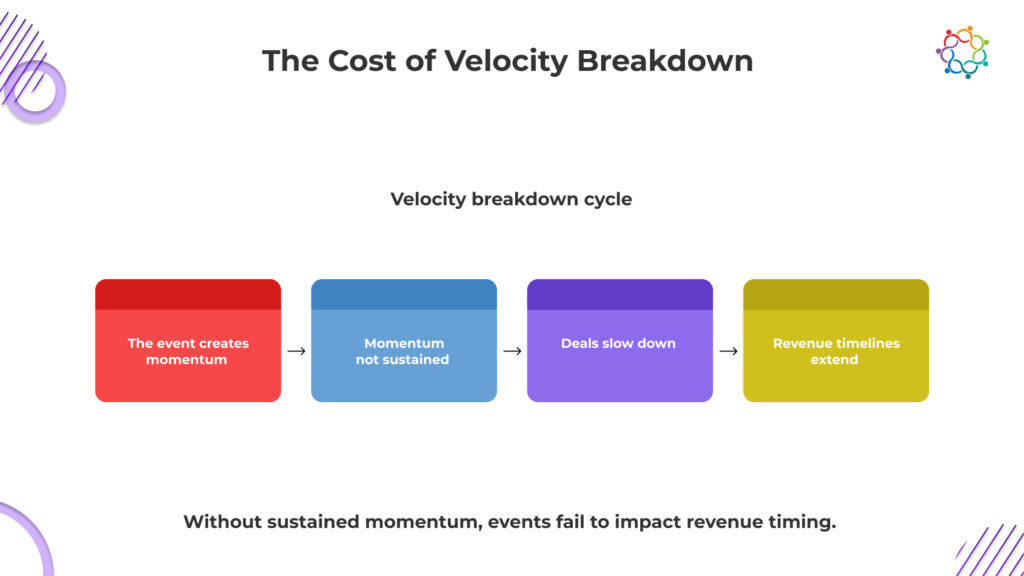

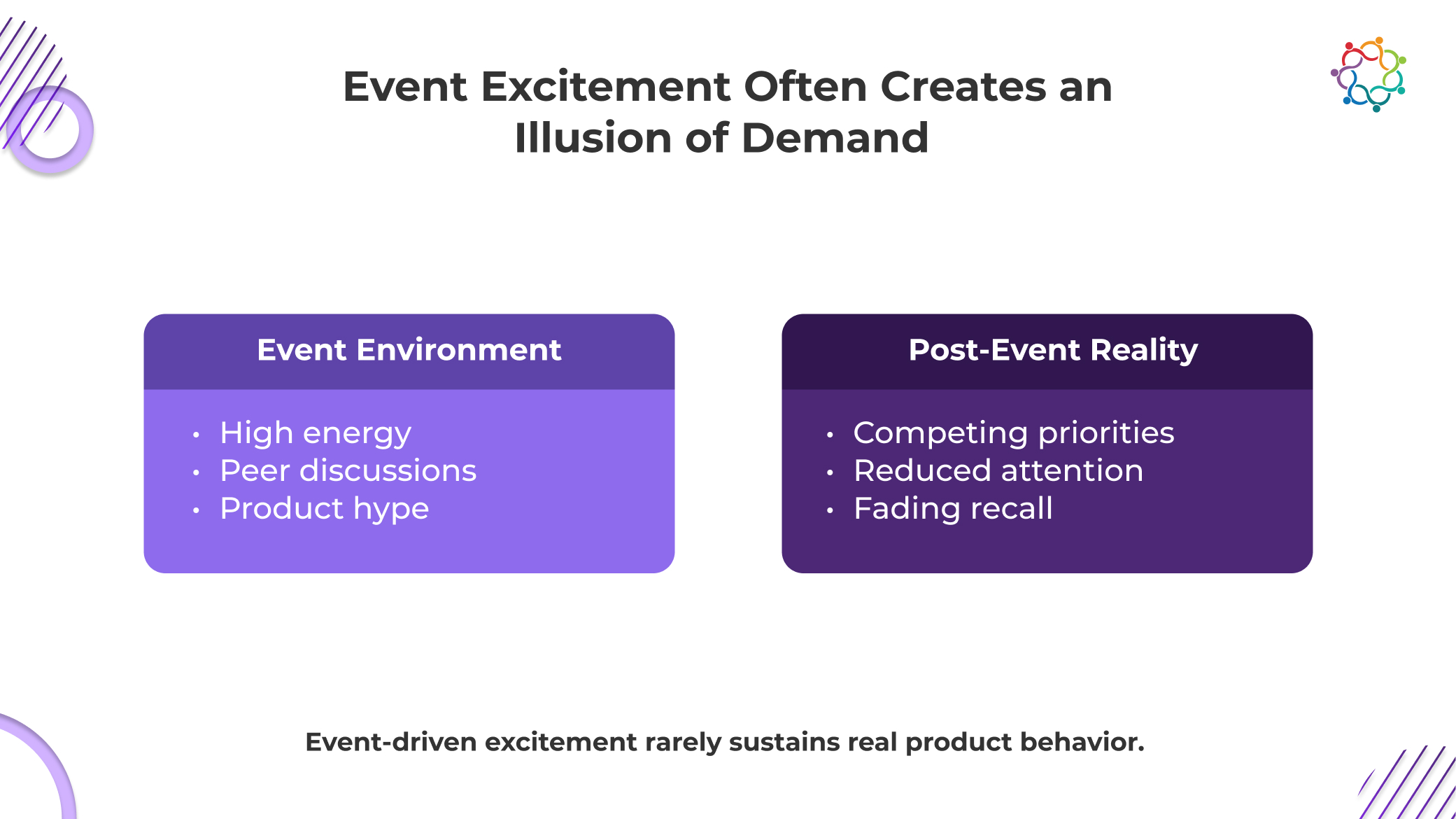

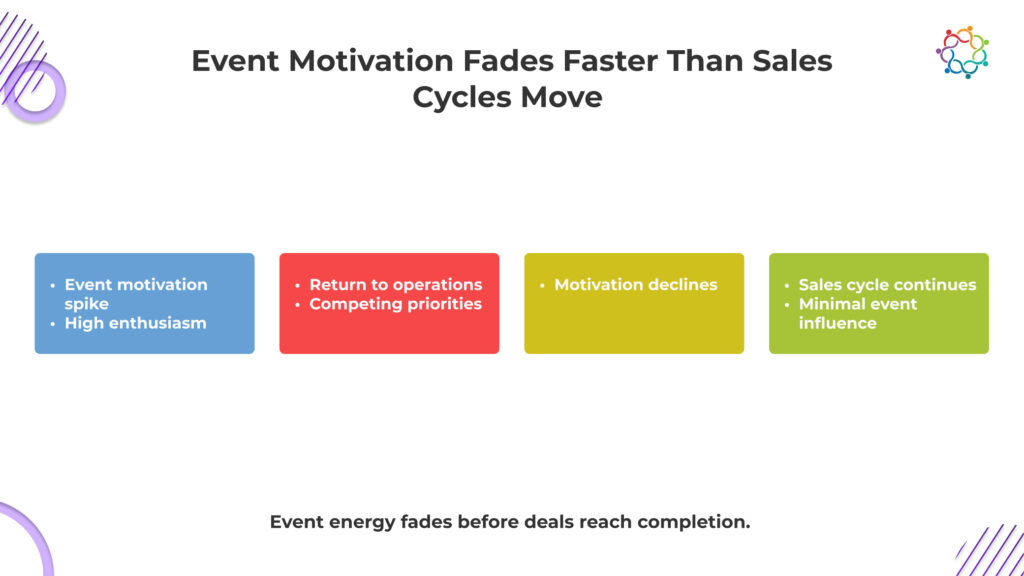

B2B event marketing creates a sharp spike in momentum. Conversations start faster, meetings stack quickly, and buyers appear highly engaged. For a brief window, deal movement feels accelerated, and the pipeline looks active.

This is the Pipeline Velocity Illusion, where increased activity during events is mistaken for sustained deal movement.

But this momentum does not hold. It is driven by a temporary environment where attention is concentrated and distractions are limited. Buyers are available, responsive, and open to discussions because the setting is designed that way.

The moment the event ends, that advantage disappears. Buyers return to their actual work environment where priorities compete, decisions slow down, and scrutiny increases. Conversations that felt urgent lose intensity. Deals that looked ready to move forward begin to stall.

Events are effective at creating entry into the pipeline. They are not built to sustain progression within it.

This blog breaks down why that momentum drops after events and how it impacts pipeline velocity and revenue movement.

The urgency created during events is not real. It is situational.

At events, buyers operate in a different psychological state. They are in discovery mode. They are open to conversations. They are more willing to explore ideas without immediate pressure. This creates the illusion of intent.

But that intent is not anchored in real decision conditions.

Once the event ends, buyers return to environments defined by risk, accountability, and competing priorities. Decisions are no longer exploratory. They are scrutinized. Every conversation must now survive internal validation.

This is where momentum collapses.

This is the core tension in B2B tech events. Events compress attention into a short window. Deal velocity requires sustained attention over time.

The gap between these two realities is where pipeline movement breaks.

What appears as strong engagement is often just temporary accessibility. Buyers are available during events. That availability is mistaken for intent.

But intent only becomes real when buyers commit to moving forward within their organization. That rarely happens at the same speed as event conversations suggest.

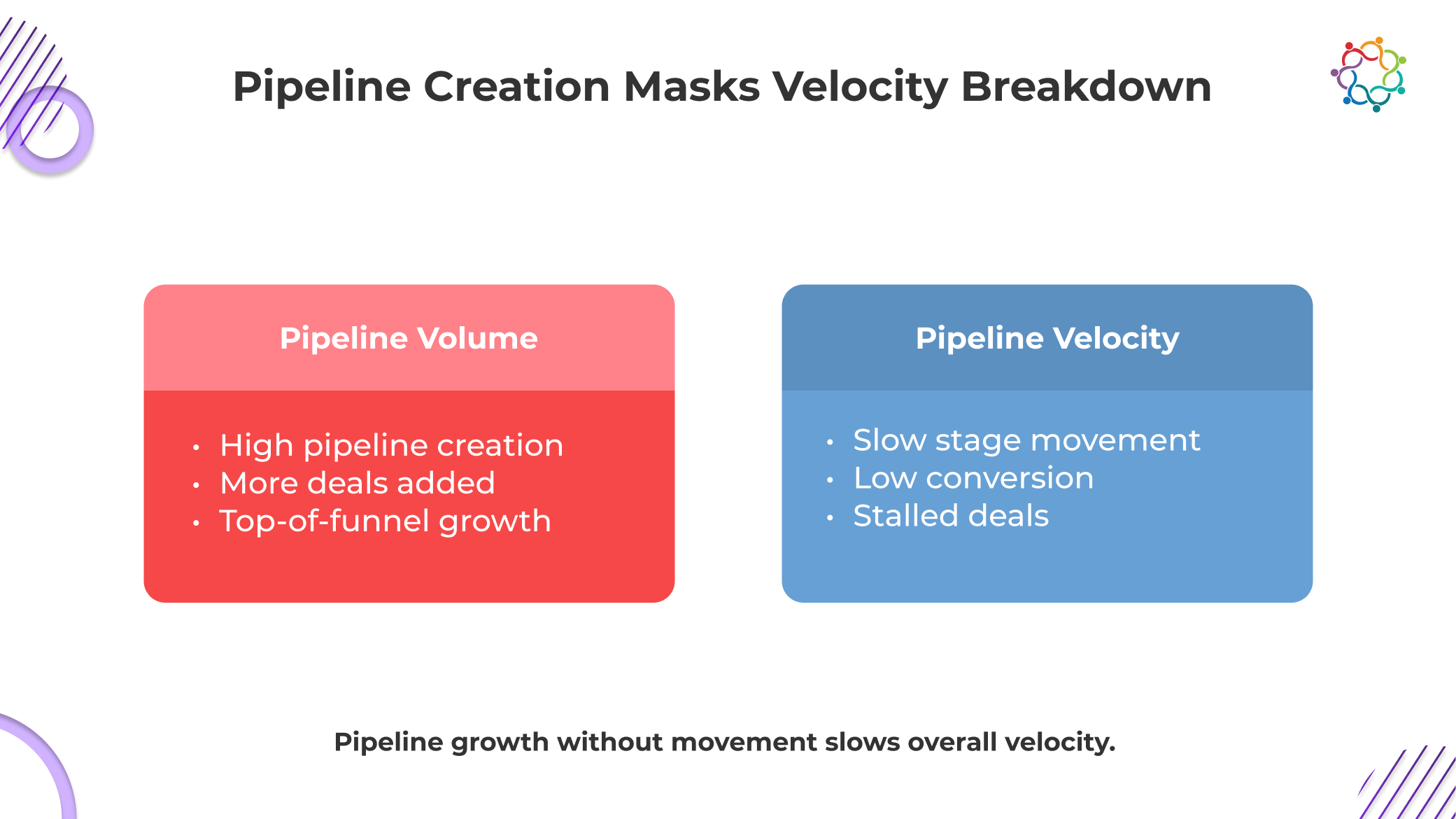

B2B event marketing consistently drives strong pipeline creation. New deals enter quickly, early-stage opportunities increase, and top-of-funnel metrics show clear growth. On the surface, this signals success.

But this growth often hides a deeper issue. Pipeline volume increases, while deal movement does not. Deals created during events tend to stall in early stages, slowing overall progression across the funnel.

This is where the Pipeline Velocity Illusion becomes visible. Activity expands, but actual deal progression does not.

This creates a misleading picture. Teams see more opportunities and assume momentum is building. In reality, the pipeline is becoming heavier, not faster.

As more deals accumulate without progressing, sales cycles extend, conversion rates flatten, and pipeline aging increases. The system appears productive but struggles to move deals forward.

Pipeline growth without velocity improvement does not strengthen performance. It obscures where deals are actually breaking and delays corrective action.
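To make the "heavier, not faster" point concrete, here is a minimal Python sketch using one commonly cited definition of pipeline velocity: open opportunities × average deal value × win rate ÷ sales cycle length. The function name `pipeline_velocity` and every figure are hypothetical, chosen only for illustration, and are not drawn from real event data.

```python
# Minimal sketch of one common pipeline velocity formula:
#   velocity = (open opportunities * average deal value * win rate) / sales cycle length
# All figures below are hypothetical and exist only to illustrate the effect.

def pipeline_velocity(opportunities: int, avg_deal_value: float,
                      win_rate: float, cycle_days: float) -> float:
    """Return expected revenue moving through the pipeline per day."""
    if cycle_days <= 0:
        raise ValueError("cycle_days must be positive")
    return opportunities * avg_deal_value * win_rate / cycle_days

# Hypothetical pipeline before an event.
before = pipeline_velocity(opportunities=40, avg_deal_value=50_000,
                           win_rate=0.25, cycle_days=90)

# Hypothetical pipeline after an event: 30 more deals enter, but most stall,
# so the blended win rate drops and the average cycle stretches.
after = pipeline_velocity(opportunities=70, avg_deal_value=50_000,
                          win_rate=0.15, cycle_days=120)

print(f"before: {before:,.0f} per day")  # before: 5,556 per day
print(f"after:  {after:,.0f} per day")   # after:  4,375 per day
```

In this illustration, the opportunity count rises by 75%, yet velocity falls. The added deals lower the win rate and lengthen the cycle, so the pipeline gets heavier, not faster.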

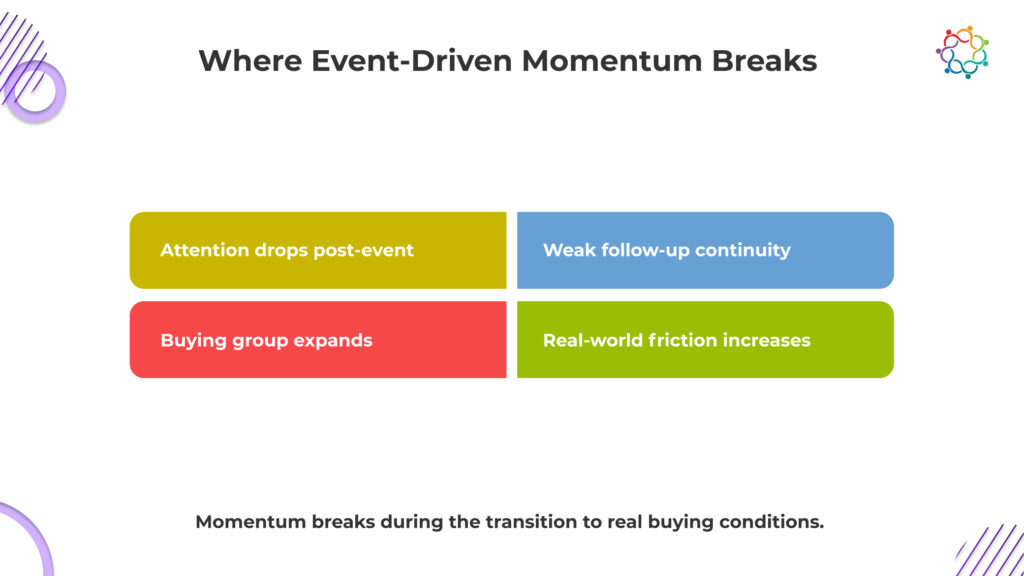

Momentum does not disappear randomly. It breaks at specific transition points between the event environment and real buying conditions.

At events, buyers behave with low perceived risk. Conversations are exploratory. There is no immediate pressure to commit.

Inside their organization, the same buyers operate under scrutiny. Decisions require justification. Risk tolerance decreases. What felt like a strong opportunity now faces internal resistance.

During events, interactions are continuous. Conversations happen back-to-back. Follow-ups are immediate.

After the event, that continuity disappears. Engagement becomes fragmented. Without sustained pressure, conversations lose momentum and drift.

Event interactions often involve one or two stakeholders. Real decisions involve many more.

As additional stakeholders enter, alignment becomes harder. Each new participant introduces new concerns, new objections, and new delays.

This is where most teams underestimate the problem.

Events simplify reality. Conversations focus on value, possibilities, and high-level fit.

Real deals reintroduce complexity.

What looked like a clear path forward becomes uncertain.

This is not a failure of follow-up. It is a structural mismatch between how conversations happen at events and how decisions happen in organizations.

Velocity does not break during the event.

It breaks when simplified conversations collide with real buying conditions.

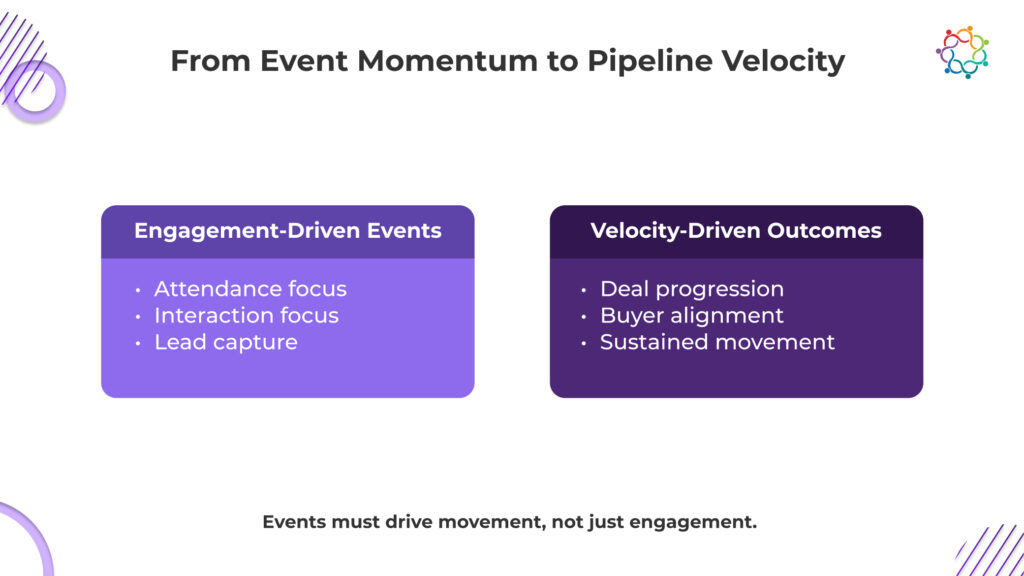

Most B2B tech events are structured to generate activity. The focus remains on attendance, engagement, and lead capture. This drives pipeline entry but does not influence how deals actually move forward.

A shift in thinking is required. Events must be evaluated based on their ability to impact deal progression, not just create opportunities.

This means focusing on how effectively an event contributes to advancing real buying decisions. Deals should move closer to resolution, not just enter the funnel.

When events are aligned with progression, they influence stakeholder alignment, clarify decision criteria, and reduce friction within active opportunities.

Without this shift, events continue to produce the same outcome. High engagement during the event, followed by limited movement afterward.

The role of events is not to start more conversations. It is to ensure that existing and new conversations move forward inside actual buying environments.

Momentum from events cannot sustain itself. It depends on what happens immediately after the event ends. Without continuity, buyer engagement resets instead of progressing.

During events, conversations are concentrated and continuous. After events, that continuity breaks. Interactions become fragmented, context is lost, and urgency weakens.

This disrupts deal progression. Buyers who showed interest during the event no longer maintain the same level of engagement. Sales teams are forced to re-establish context instead of building on existing momentum.

Pipeline velocity improves only when engagement continues without interruption. Each interaction must move the deal forward, not restart the conversation.

Without this, deals drift back into early-stage behavior. Interest fades, decision timelines extend, and progression slows across the pipeline.

Events initiate movement, but sustained velocity depends on whether that movement is maintained through consistent, connected interactions after the event.

When event-driven momentum breaks, the impact extends beyond individual deals. It affects overall revenue performance.

Sales cycles become longer as deals spend more time in the early stages. Conversion rates decline because initial interest weakens before decisions are made. Pipeline aging increases, making it harder to maintain deal quality.

At an operational level, forecasting becomes less reliable. Stalled deals remain in the pipeline longer, distorting revenue projections. Leadership loses visibility into what will actually close and when.

Pipeline bloat increases as more deals enter than progress. Sales teams spend more time managing inactive opportunities instead of advancing active ones. This reduces overall efficiency and increases acquisition costs.

Organizations continue investing in events expecting acceleration, but without sustained velocity, revenue timelines continue to shift.

Event success without deal movement does not drive growth. It creates pressure across the entire revenue system.

Despite these issues, most organizations continue to measure event marketing effectiveness using engagement metrics.

The reason is simple. Engagement is easy to capture.

Attendance numbers, session participation, meetings booked, and leads generated. These metrics are visible, immediate, and easy to report.

Velocity is harder to measure. It requires tracking deal progression over time, across stakeholders, and through complex sales cycles.

So teams default to what is measurable, not what is meaningful.

This creates a structural bias. Teams optimize for what they can report quickly, not what drives long-term outcomes.

The result is predictable. Events are designed to maximize visibility and activity. Not to improve deal progression.

When success is defined by engagement, velocity breakdown is not an accident. It is the expected outcome.

Events play a critical role in B2B marketing. They create visibility, initiate conversations, and generate pipeline entry.

But none of these outcomes guarantees revenue movement. Pipeline velocity depends on what happens after the event.

If momentum is not sustained, deals slow down. Buyers disengage. Revenue timelines extend.

The core issue is not that events fail to create momentum. It is that the momentum they create does not survive real buying conditions.

Events compress attention. Real decisions expand complexity.

The Pipeline Velocity Illusion makes this gap easy to miss. Activity rises, but progression does not follow.

Until this gap is addressed, the same pattern will repeat. A spike in engagement followed by a drop in velocity. A growing pipeline with limited movement. A system that produces activity without acceleration.

B2B tech events are not underperforming. They are doing exactly what they are designed to do: generate attention, engagement, and product curiosity at scale. The problem is what happens after.

At product launches, developer events, and SaaS user conferences, companies create high-impact environments where products are demonstrated clearly and precisely. Attendees explore the benefits, discuss them, and leave convinced the product is worthwhile. From the event's point of view, this looks like success.

But product metrics tell a different story. Trials do not increase proportionally. Onboarding activity remains flat. Active usage does not reflect the level of engagement observed.

The gap is not accidental. It exists because interest is being mistaken for adoption.

This blog examines why B2B tech events consistently generate product interest but fail to translate that interest into actual product usage.

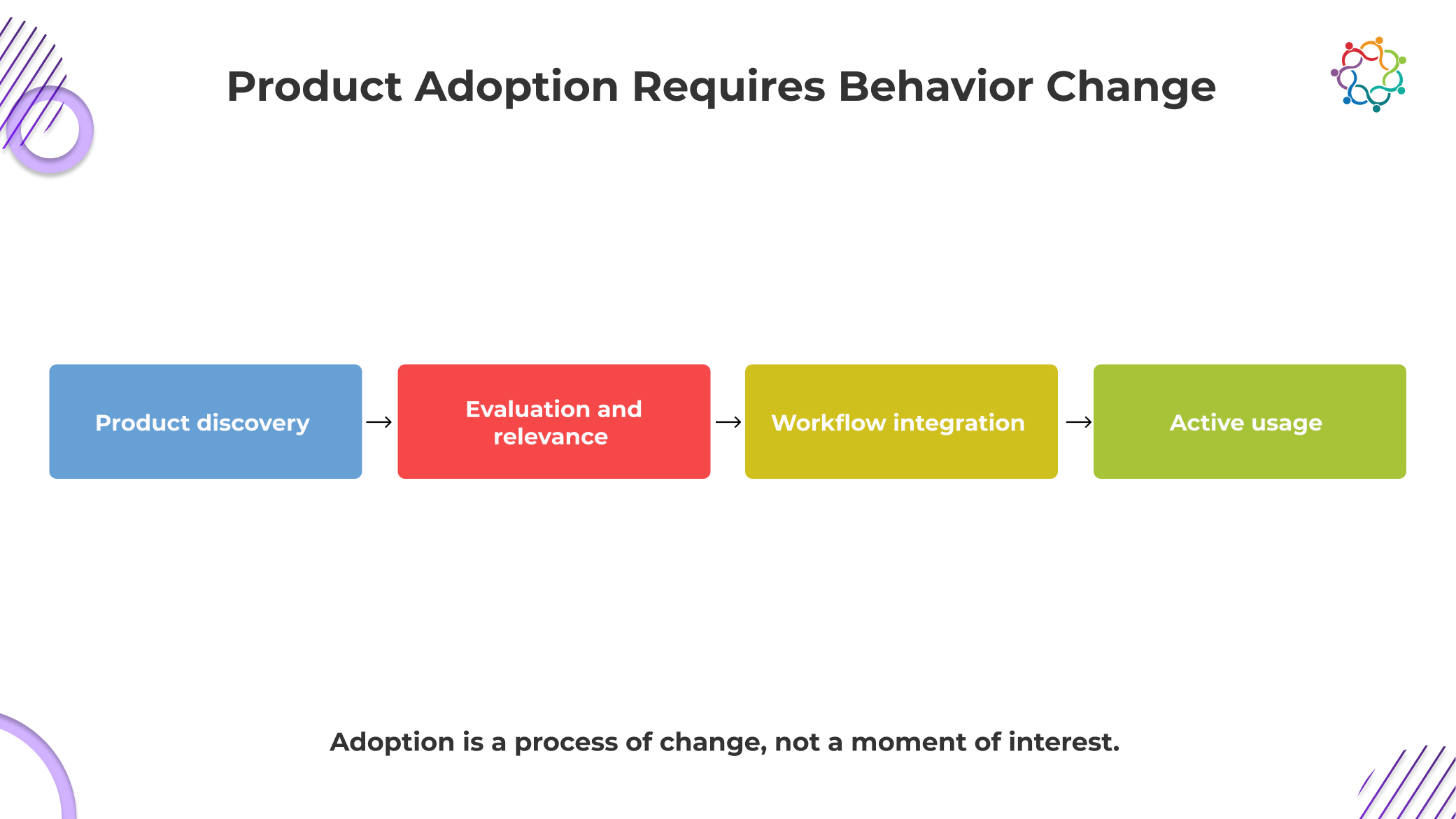

Product adoption is not a continuation of interest. It is a shift in behavior that introduces friction, risk, and internal dependency. This is where most assumptions around event impact begin to fail.

Engaging with a product at an event requires attention. Adopting it requires disrupting existing workflows, reallocating time, and justifying change. These are not lightweight decisions. They demand evaluation under real constraints, not event conditions.

A critical gap sits in who attends versus who decides. The person exploring the product at a developer event or product marketing event is often not the one responsible for implementation or budget approval. Even if interest is strong, it must be translated into internal alignment across teams that were not part of the experience.

Engineering, IT, and leadership each introduce their own criteria. Feasibility, risk, and long-term value all come under scrutiny. What felt straightforward during the event becomes layered and uncertain.

This is why adoption slows down. Interest is immediate. Adoption is negotiated, validated, and often delayed until it loses momentum.

Event environments do not just amplify interest. They manufacture confidence around demand that has not been tested. Inside B2B tech events, attendees operate in a low-risk, high-stimulation setting where curiosity is encouraged but commitment is not required. Expressing interest feels natural because nothing forces follow-through.

What gets missed is that this behavior is conditional. It exists only within the event environment. The moment that context disappears, so does the signal strength.

The deeper issue is not just that interest fades. It is that teams treat this temporary engagement as evidence of real market pull. Pipeline assumptions get built. Forecasts get influenced. Product narratives get reinforced.

But this demand has never faced friction. It has not encountered internal resistance, budget constraints, or workflow disruption. It has not competed with existing tools.

This is not early demand. It is pre-demand noise.

Until interest survives outside the event, it should not be treated as proof of anything.

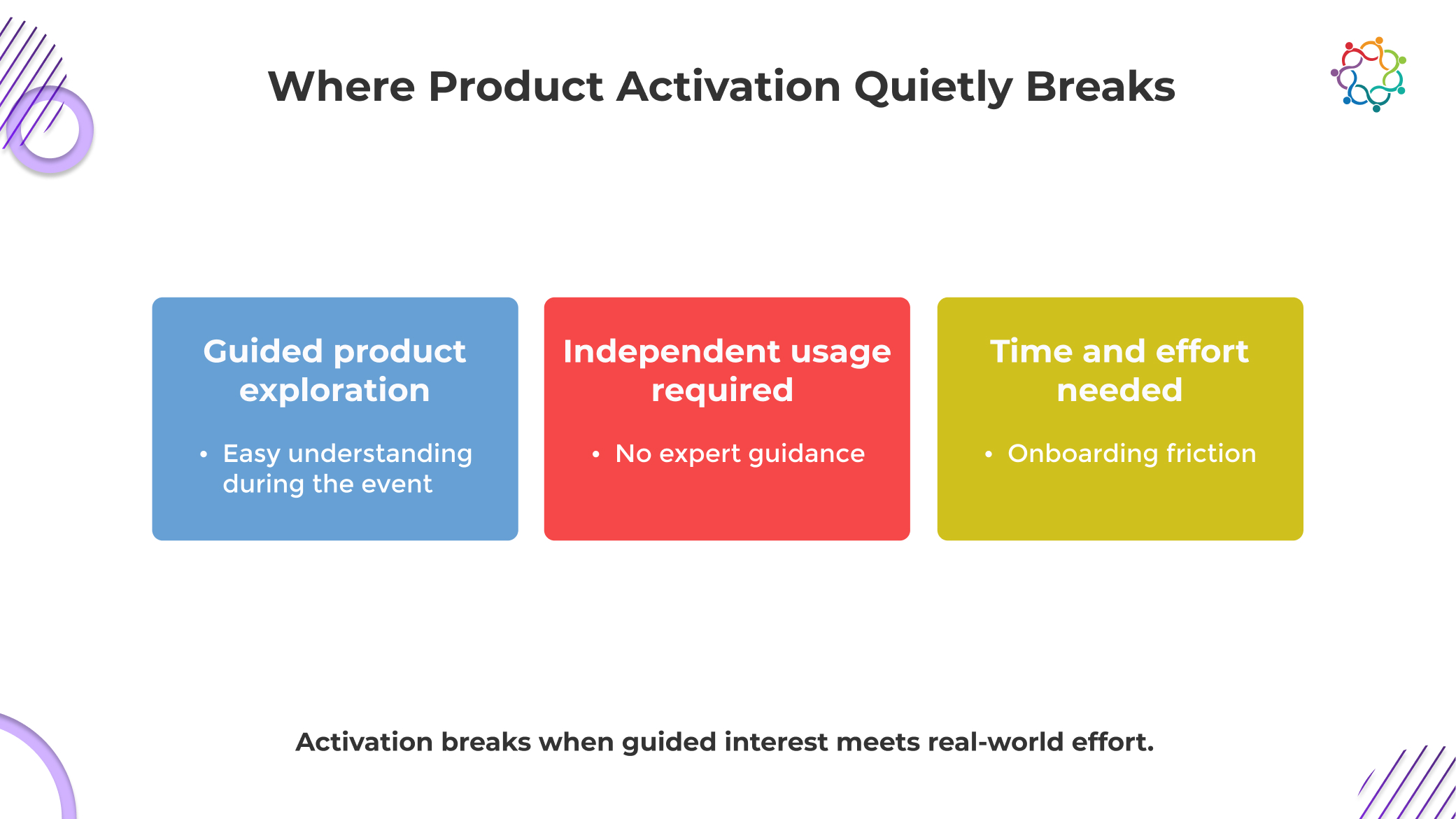

The breakdown does not happen at the point of interest. It happens in the transition from a controlled experience to an uncontrolled reality. This is where momentum loses structure and begins to collapse.

During events, the product is experienced in its most optimized form. Every interaction is guided, every feature is contextualized, and complexity is deliberately reduced. This creates clarity that does not exist outside the event.

Once the environment disappears, users face the product without support. What felt intuitive during a demo now requires independent navigation. Without guidance, uncertainty increases, and exploration slows down before it becomes meaningful usage.

Interest at an individual level is rarely enough to drive adoption in B2B environments. Most products require validation across teams that were never part of the event experience.

Engineering evaluates feasibility, IT examines risk, and leadership questions value. Each layer introduces friction and delay. The initial momentum weakens as the product moves through internal scrutiny that the event environment never exposed.

Even when alignment is possible, activation still depends on available time and priority. Starting a trial or onboarding a product requires focused effort that competes with existing responsibilities.

Without immediate necessity, the product is deprioritized. Interest alone cannot justify the shift in attention required to begin usage. This is where most activation attempts stall and eventually disappear.

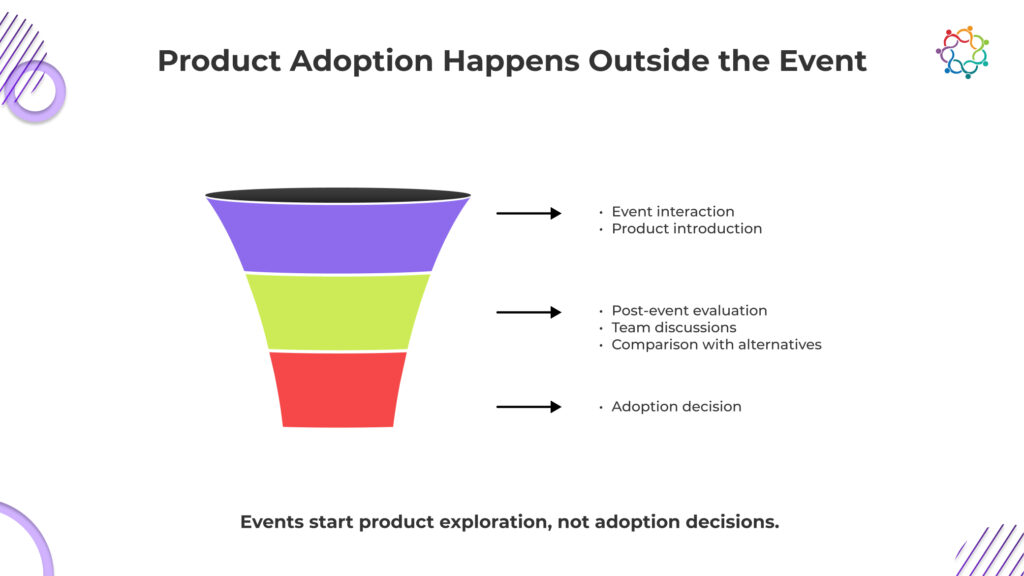

The assumption that adoption decisions happen during events is fundamentally flawed. Events create exposure, not commitment. The actual decision-making process begins after attendees return to their work environments, where the product is evaluated under real conditions.

In this phase, users revisit the product without guidance. They explore it independently, compare it with existing solutions, and assess whether it fits into their workflows. This evaluation is slower, more critical, and far more grounded than anything that happens during the event.

This is also where the majority of event-driven interest disappears. The product must now compete with established tools, internal processes, and organizational inertia. What felt compelling in a controlled environment must now prove its value in a complex and often resistant system.

This phase is difficult for teams precisely because it is hard to observe. Engagement during the event is tracked, but evaluation after the event happens largely out of view. The result is a gap between what is reported as success and what is actually happening.

As a result, engagement data suggests that B2B tech events are working, while product adoption stays flat. There is a persistent misunderstanding of how events affect product growth, because the link between the two is assumed rather than measured.

The problem is not that engagement signals are weak. It is that they are easy to collect and easier to believe. Demo interactions, session attendance, and product discussions create a visible layer of activity that feels like progress.

Teams keep misreading these signals because they are immediately available, while real adoption data is delayed, fragmented, and harder to attribute. Engagement offers instant validation. Adoption requires patience and often reveals uncomfortable truths.

There is also a structural incentive to prioritize these metrics. Marketing success is often measured by participation and interaction levels, not by downstream product usage. This creates a bias toward signals that can be reported quickly and positively.

Over time, this becomes normalized. Engagement is treated as a leading indicator of adoption, even when there is no consistent evidence supporting that relationship.

The result is a system where teams optimize for what is visible, not what is real.

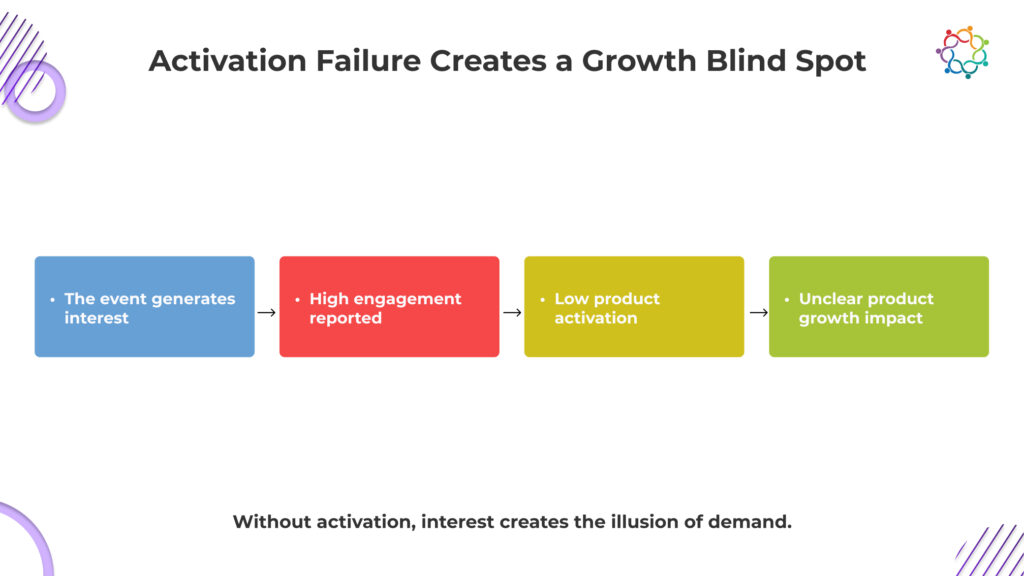

When interest does not convert into usage, the problem is not visibility. It is misinterpretation. Teams believe growth signals are strong, while actual product behavior tells a very different story.

Event engagement creates the appearance of demand, but without activation, it remains unverified. Teams mistake visibility for traction, leading to decisions based on perception rather than actual product usage patterns.

Significant spend goes into B2B tech events, but when adoption does not follow, customer acquisition cost rises silently. Investment appears justified, while actual user growth fails to support it.

Strong engagement metrics push teams to double down on events. Without linking these signals to adoption, go-to-market strategies become biased toward visibility channels that do not deliver sustained product growth.

Marketing reports success through engagement data, while product teams see stagnant usage. This disconnect creates confusion at the leadership level, making it harder to identify what is truly driving product performance.

This blind spot is not accidental. It is reinforced every time interest is measured without accountability to adoption.

Event momentum feels powerful, but it is structurally short-lived. It is built on concentrated attention, not sustained intent. Once the event ends, that intensity disperses, and the product must compete in an environment where attention is fragmented and priorities are already defined.

What most teams underestimate is that adoption is not triggered by exposure. It is validated through continued relevance. A product must repeatedly prove that it deserves space within an existing system that is already functioning. That validation does not happen during an event. It happens in the weeks that follow, under pressure, scrutiny, and competing alternatives.

Momentum does not survive without reinforcement. And events, by design, do not provide that continuity.

This is why exposure spikes during events rarely translate into usage. Without sustained interaction, the product is remembered but not adopted.

B2B tech events do not fail because they lack attention. They fail because attention is mistaken for adoption. The room feels like demand, but the product never enters real workflows. The people who engage are often not the ones who decide or use. The product that looked simple in a demo becomes complex in reality.

This is not a visibility problem. It is a translation failure between interest and action.

If this gap is ignored, companies will keep funding experiences that look successful but do not move product growth.

Interest fills rooms. Adoption changes behavior. Only one of these scales a product.

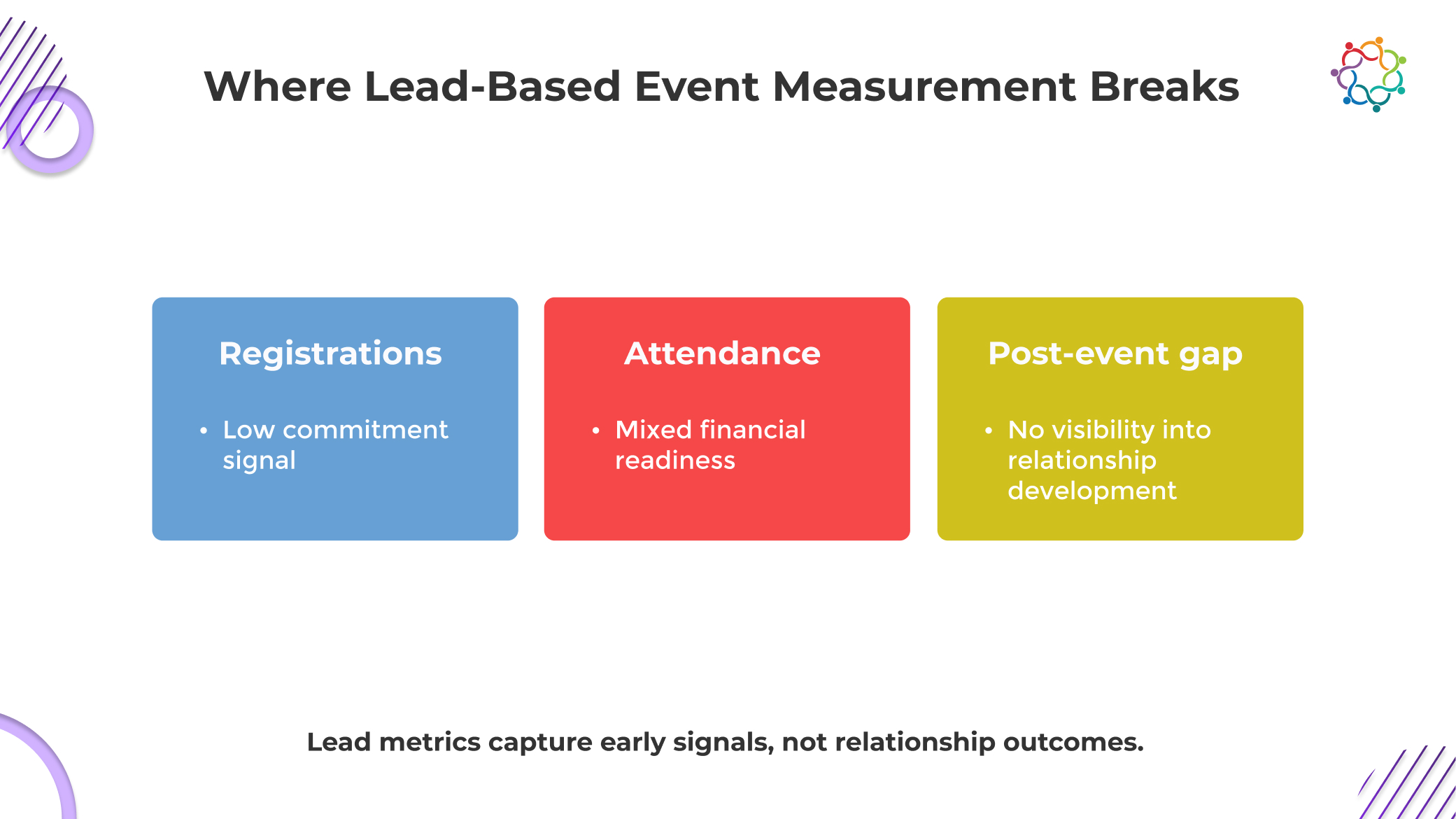

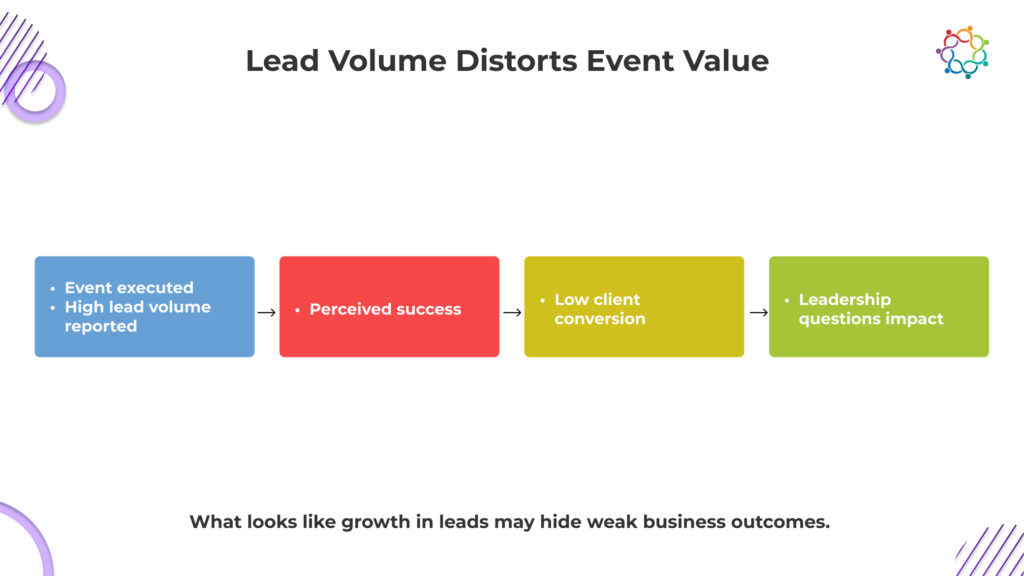

The most dangerous metric in financial services events is also the most celebrated.

Lead volume creates the appearance of success long before any real outcome exists. Registrations increase, attendee lists expand, and reports begin to signal strong demand. It feels like growth. It looks like momentum.

But this is the Lead Illusion.

Because the moment performance is evaluated against actual client acquisition, the narrative breaks. Large audiences rarely translate into meaningful advisory relationships. Most attendees disengage. Many were never viable prospects to begin with.

This is not a gap in execution. It is a flaw in measurement.

Lead volume does not just misrepresent performance. It creates false confidence, pushing teams to optimize for attendance instead of relationship depth.

This blog examines why lead volume fails as a metric, and what it hides about how financial services events actually create value.

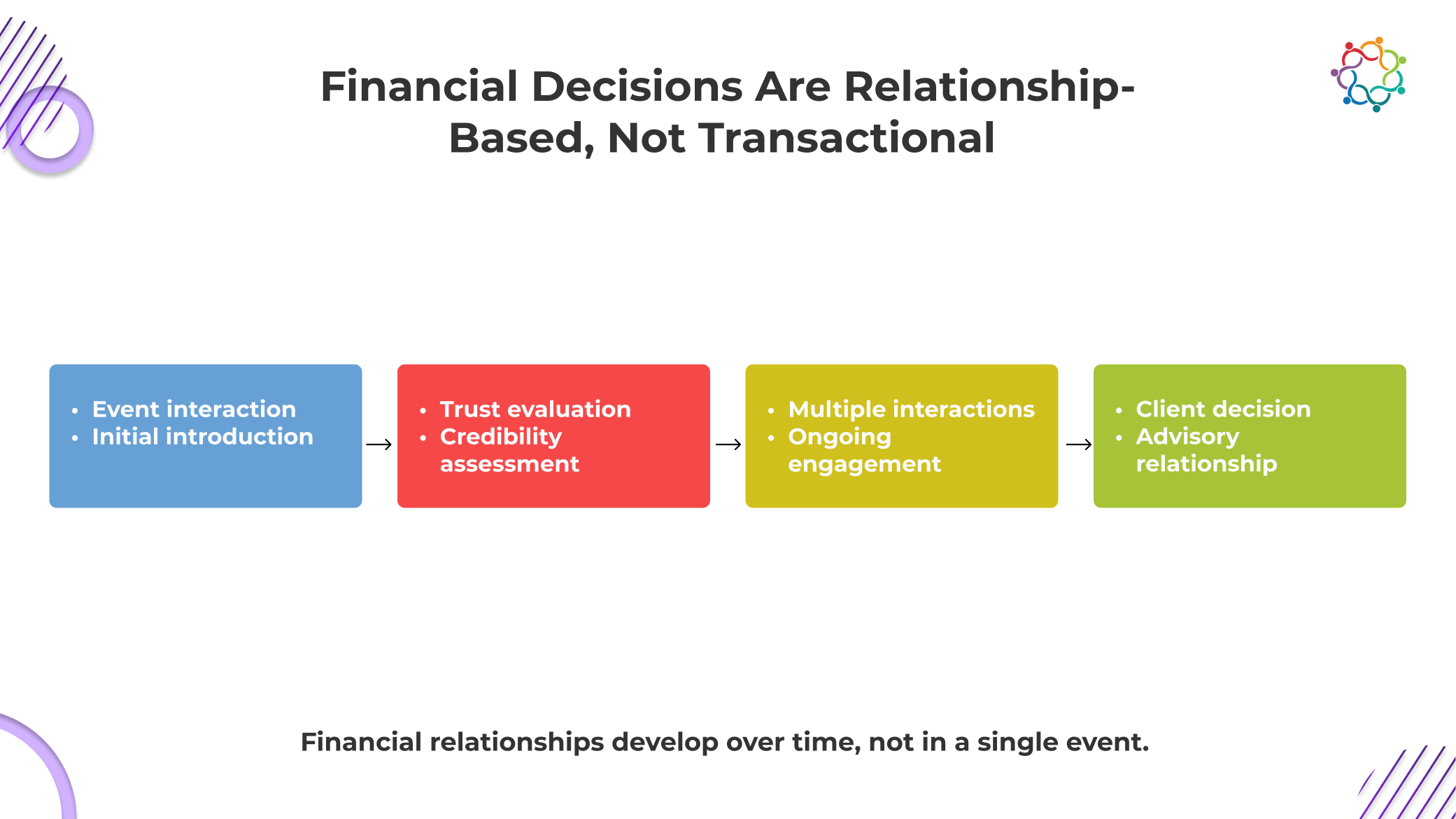

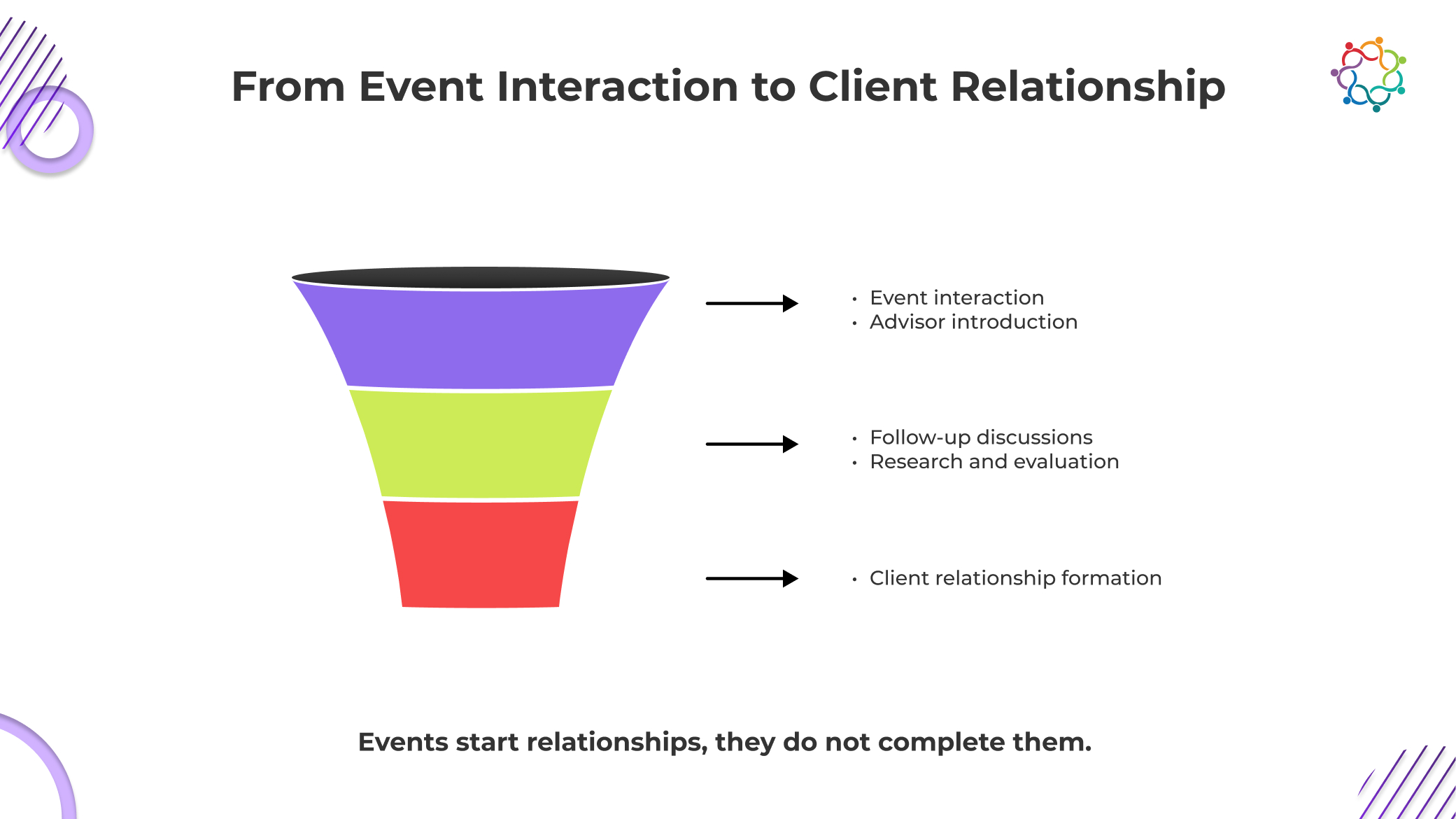

Most marketing systems are built around transactions. Financial services do not operate that way. This is where the entire measurement model begins to break.

Financial decisions are not quick. They are not impulsive. They are not driven by exposure alone. They are shaped by perceived risk, long-term consequences, and personal trust.

When an individual considers engaging with a financial advisor, they are not evaluating a product. They are evaluating a relationship that may influence their wealth, security, and future. This evaluation is fragile. It is slow. And it is deeply personal.

At any financial industry event, attendees are subconsciously asking:

None of these questions is resolved in a single interaction.

This is where most financial services events are misunderstood. They are treated as conversion environments when they are actually introduction environments.

The event creates visibility. It does not create commitment. The gap between those two is where most lead-based reporting fails. A person may attend an investment seminar, engage with the content, and even express interest. But that does not mean they are ready to shift their financial strategy or transfer assets.

Trust in financial services is not built through exposure. It is built through repeated validation.

This makes the journey from attendee to client fundamentally incompatible with lead volume as a success metric, because lead volume assumes immediacy. Financial relationships operate on patience.

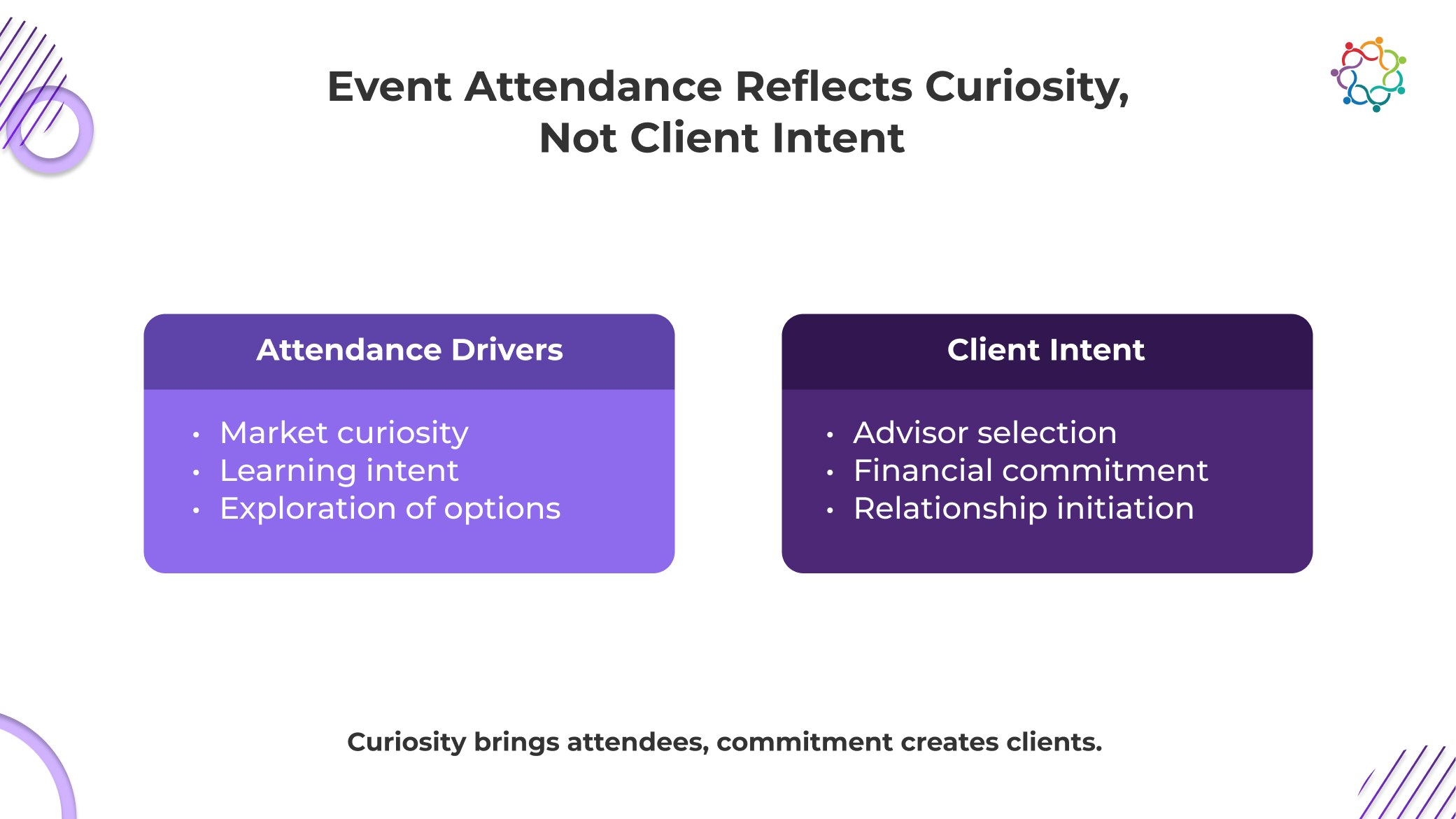

It is easy to assume that attendance signals demand. In financial services, that assumption is flawed.

Most people attending investment seminars or wealth management events are not there to become clients. They are there to learn, observe, or validate their existing understanding.

This is not a small distinction. It is the core reason that volume fails.

Attendees often show up with motivations that have nothing to do with hiring an advisor:

This means a large portion of any audience was never going to convert. Not later. Not eventually. Not at all.

Yet lead-based reporting treats every attendee as a potential client.

This is where the distortion begins. Educational engagement is interpreted as commercial intent. Curiosity is counted as pipeline. Passive participation is recorded as an opportunity.

The result is a metric system that inflates perceived demand while ignoring actual readiness.

In reality, the overlap between education and client acquisition is limited. Someone can fully value a financial planning workshop and still have no intention of changing advisors or making new investments.

This creates a hard truth that most teams avoid acknowledging. Attendance does not indicate who is buying. It indicates who is interested in listening. And those are not the same audience.

Lead-based measurement persists because it is simple. It produces clean numbers. It creates easy comparisons.

But that simplicity comes at a cost. It removes the nuance required to understand financial client acquisition.

More importantly, it actively encourages the wrong behavior.

Registration is a low-friction action. It requires minimal effort and almost no risk.

People sign up for investment seminars out of curiosity, convenience, or even habit.

This does not indicate seriousness. It does not indicate financial readiness.

Yet registrations are often treated as early-stage pipeline indicators.

They are not. They are signals of attention. Nothing more.

Even when individuals attend, their intent varies widely.

Some already have trusted advisors. Others are years away from making significant financial decisions. Some are simply exploring options without urgency.

Lead metrics flatten this diversity into a single number.

That number suggests uniform potential. The reality is fragmented and uneven.

Advisory relationships are built through sequences, not moments.

These interactions define whether a relationship forms. They happen after the event. Often much later. Lead metrics ignore this timeline entirely.

This is where the real damage occurs.

By focusing on early-stage signals, teams begin optimizing for volume instead of depth. More attendees. More registrations. More surface-level engagement.

Less attention is paid to meaningful interaction.

The Lead Illusion does not just mismeasure outcomes. It pushes organizations toward shallow engagement at scale. And that is where wasted spending begins to accumulate.

If financial services events are not conversion engines, what are they actually doing? They are shaping perception. More specifically, they are shaping how potential clients evaluate credibility.

During an event, attendees observe signals that influence their long-term judgment:

These signals matter. They influence future decisions. But they do not trigger immediate action. This is where many teams soften their understanding. They say events “influence perception” and stop there.

That is not strong enough.

Events do not influence decisions. They influence perceived credibility. That distinction matters because credibility is only one component of client acquisition.

An attendee may leave with a stronger impression of an advisor’s expertise and still take no action for months. Or years.

This delay is not a failure. It is how financial decision-making works. But if you are measuring success through immediate lead conversion, this delayed impact becomes invisible.

And what cannot be measured is often undervalued.

The most critical moment in financial client acquisition does not happen inside the event. It happens after.

This is where most measurement models completely lose visibility. Once the event ends, the decision process moves into private environments.

Individuals begin to:

None of these actions is captured in traditional event reporting.

Yet these are the moments where decisions are actually made.

This is why financial services events cannot be evaluated as isolated experiences. They are entry points into a longer journey. The event introduces the advisor. It does not finalize the relationship.

Confusing these stages leads to flawed expectations.

Teams expect conversion signals too early. When they do not see them, they either overestimate success through lead volume or underestimate the event’s long-term impact. Both are incorrect.

The real issue is not performance. It is visibility. You are measuring the wrong moment in the journey.

This is where the problem becomes organizational, not just analytical.

When success is reported through lead volume, leadership receives a distorted view of reality.

High numbers create the appearance of momentum. Reports suggest strong demand generation. Event programs look scalable and repeatable.

But when those numbers fail to translate into client acquisition, confidence begins to erode. This creates a silent conflict between marketing and leadership.

Marketing presents activity. Leadership expects outcomes. And the gap between the two widens with every event cycle.

The consequences are not theoretical. They are financial.

This is the real cost of the Lead Illusion.

It does not just mislead reporting. It misguides investment decisions.

Over time, leadership begins to question whether financial advisor events or investment seminar marketing efforts contribute to growth at all. Not because they do not, but because the metrics used to evaluate them fail to prove it.

Financial client acquisition does not follow campaign timelines. It follows trust timelines. These timelines are inherently slow. Clients need time to observe consistency, validate expertise, and reduce perceived risk.

This process cannot be compressed into a single event or a short reporting window.

Yet most measurement frameworks attempt exactly that. They apply short-term metrics to long-term decision processes. This is where the fundamental mismatch becomes unavoidable.

Trust-based engagement evolves gradually. Lead metrics capture instant activity.

These two systems are incompatible. This is why financial services events often appear underperforming when evaluated too quickly. Their real impact has not had time to materialize. At the same time, lead volume creates the illusion that something meaningful has already happened.

So, you end up with a paradox.

Strong reported performance with weak visible outcomes. The truth is simpler and more uncomfortable. The metrics are broken.

They are not just incomplete. They are structurally incapable of capturing relationship-driven value.

Lead volume is not just an imperfect metric. It is a dangerous one.

It tells you the event worked when it did not. It rewards attendance when there is no intent. It gives leadership confidence where there should be scrutiny.

This is the uncomfortable reality. You are not measuring growth. You are measuring activity that looks like growth.

And every time you optimize for more leads, you move further away from real client relationships.

In financial services, the risk is not low conversion.

The risk is building an entire event strategy around people who were never going to become clients.

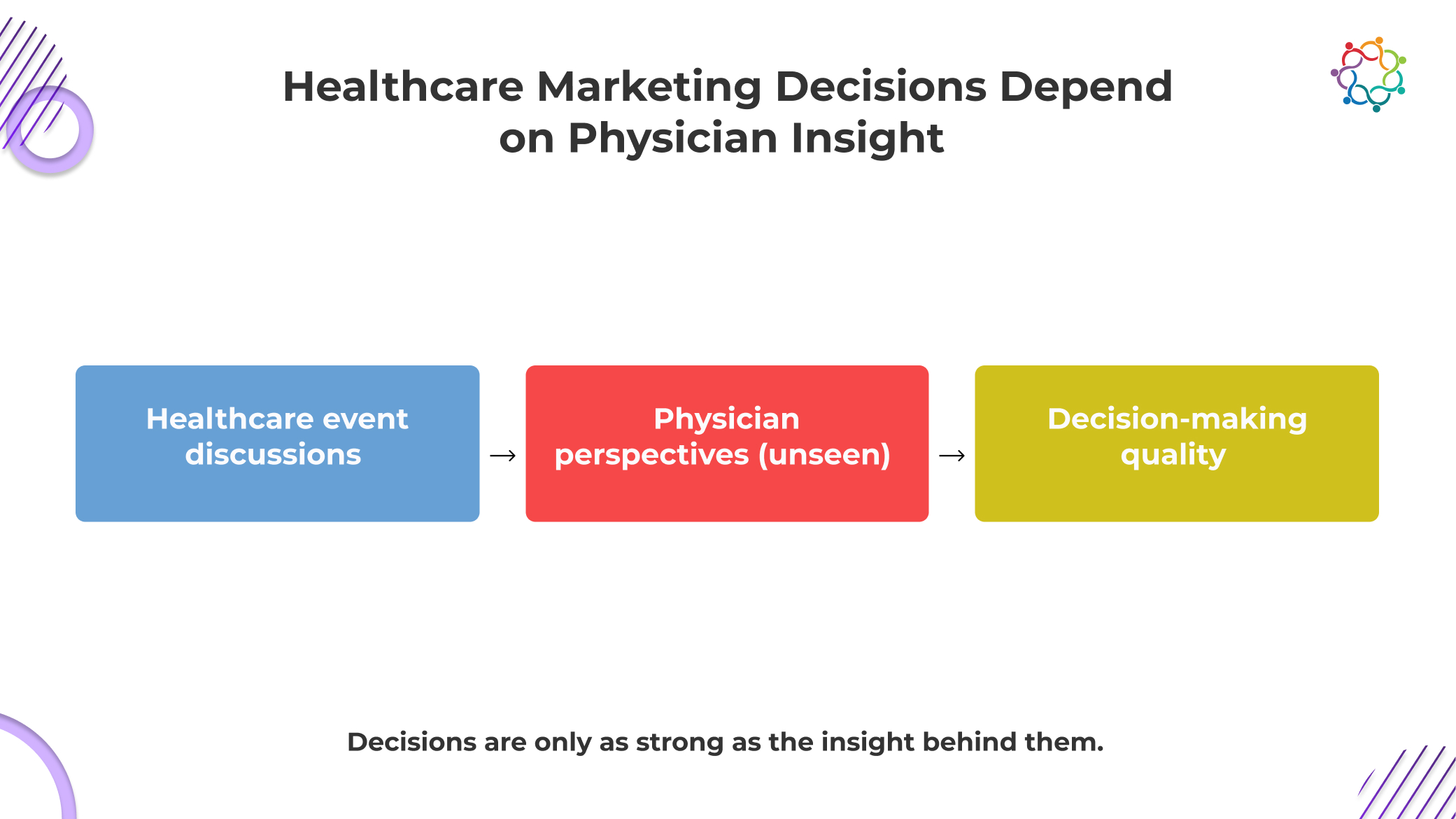

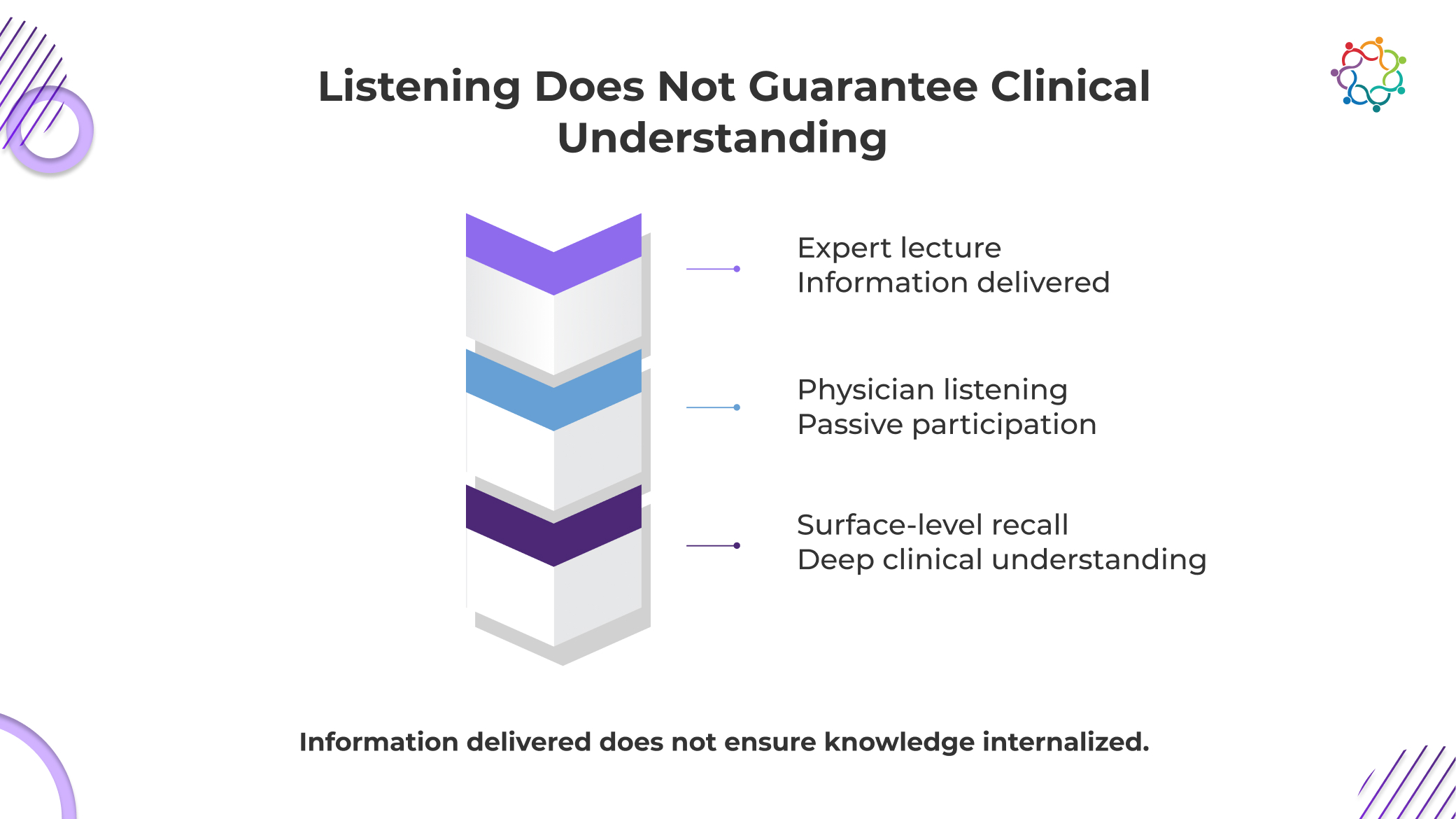

Healthcare event marketing generates a constant stream of visible signals. Rooms fill up. Sessions run smoothly. Physicians ask questions, nod along, and stay engaged through discussions. On the surface, it looks like alignment is happening.

It is not.

What you are measuring is behavioral compliance, not cognitive response. Physicians are trained to engage professionally, listen actively, and participate constructively. That behavior creates the appearance of resonance, even when critical evaluation is happening internally.

Most healthcare event dashboards do not capture thinking. They capture movement. They record who showed up, how long they stayed, and whether they interacted. None of that explains what they actually believed, challenged, or rejected.

This is where Insight Blindness begins. You are not missing data. You are interpreting the wrong signals as truth.

The more polished the event, the stronger this illusion becomes.

This blog examines how this illusion forms, where physician thinking disappears, and why it creates a strategic risk for healthcare organizations.

Healthcare marketing decisions are not abstract. They directly influence how therapies are positioned, how education is structured, and how physicians interpret clinical value. These decisions require a precise understanding of medical stakeholder thinking.

Physicians are not passive recipients of information. They evaluate, compare, question, and filter every clinical message they encounter.

Their perspectives shape:

This is not optional context. It is the foundation of an effective strategy.

Healthcare events bring together doctors, experts, and clinical leaders in concentrated settings where specific clinical topics are examined in depth. These moments are rare. They gather knowledge and practical experience in one place.

However, that opportunity is lost if the organization leaves knowing only who attended and how long they stayed.

Without knowing how doctors think, you are left making decisions based only on their behavior.

When insight is missing, assumptions take over. Messaging is refined based on internal belief rather than external reality. Campaigns are built on perceived alignment rather than validated understanding.

The risk is not that you know too little. It is that you believe you know enough to act.

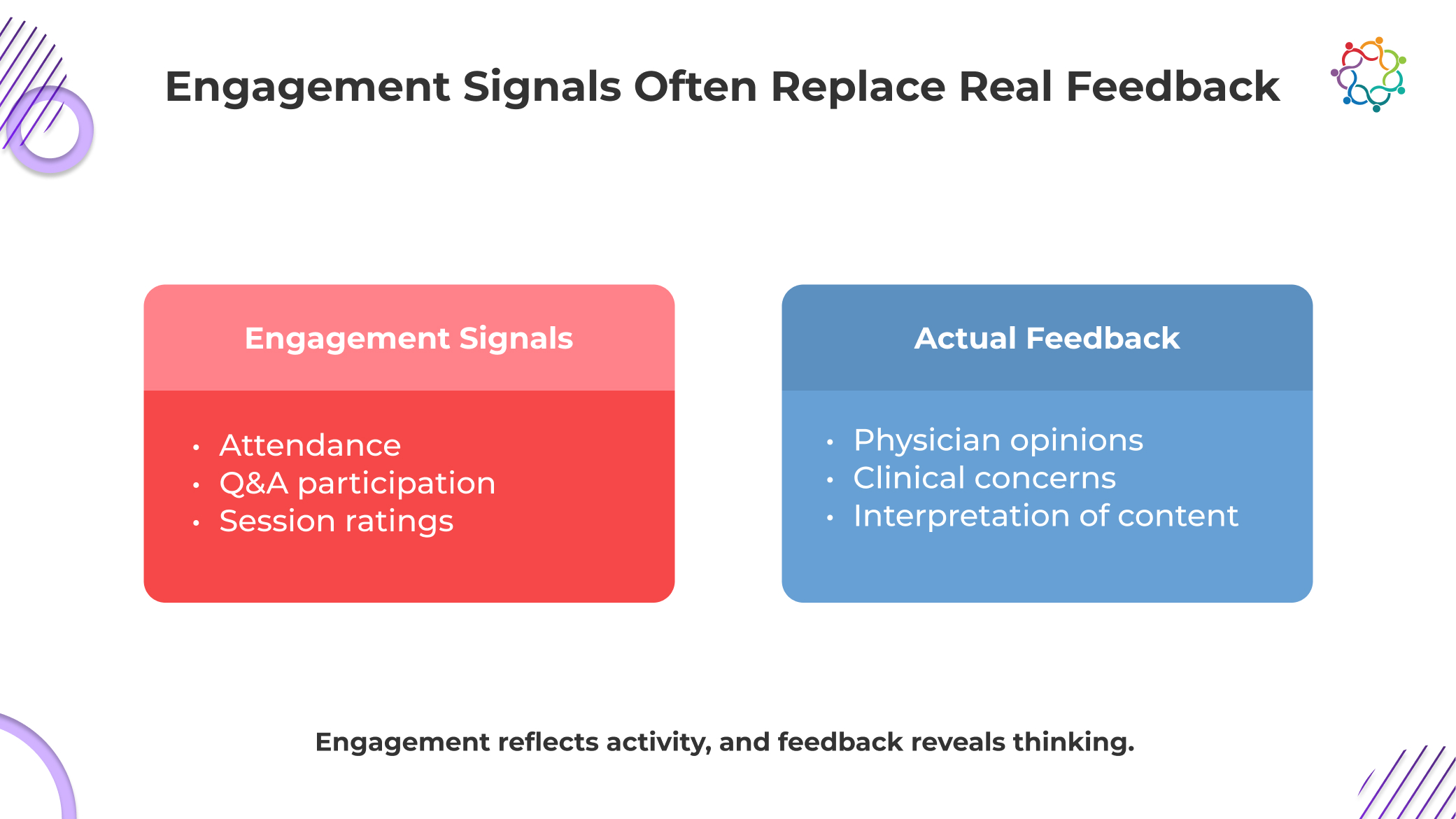

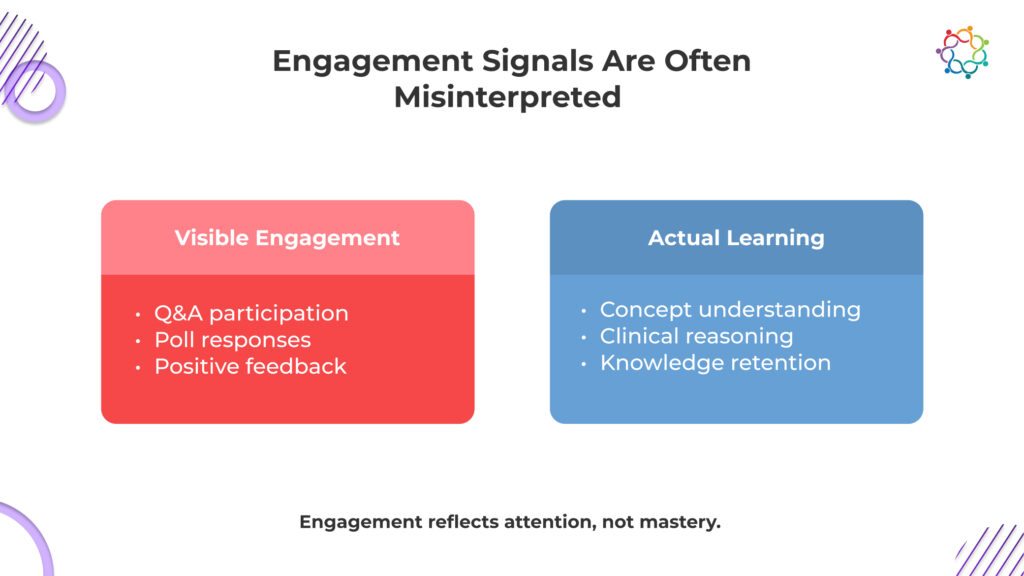

Healthcare event reporting relies on engagement because it is visible, measurable, and easy to present. That convenience creates a problem. These signals do not just lack depth; they distort reality by implying alignment where none may exist.

Full rooms signal topic interest, not message acceptance. Physicians attend to learn and evaluate, not to agree, yet attendance is often misread as endorsement.

Questions indicate engagement with the topic, but often highlight confusion, skepticism, or gaps. Interpreting them as positive validation ignores the intent behind the interaction.

High ratings reflect delivery quality and event organization. They do not confirm whether clinical perspectives were accepted or challenged by the audience.

Active participation can coexist with silent disagreement. Physicians may engage outwardly while internally questioning assumptions, leaving critical perspectives completely unrecorded.

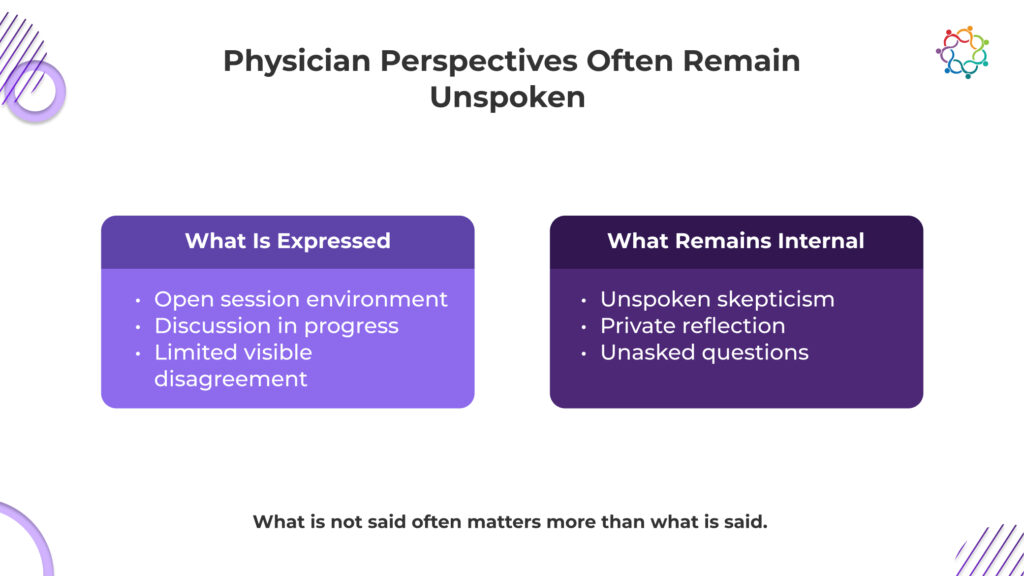

Healthcare settings are subject to limitations that are not present in most marketing scenarios. Physician communication in public is influenced by clinical duty, professional hierarchy, and regulatory sensitivity.

What is said during events is directly impacted by this.

Doctors don’t always question ideas publicly. They don’t always express dissent in front of senior specialists or their colleagues. They often think things through privately, analyze information internally, or speak openly only in informal, trusted settings.

Silence, in this context, is not neutral. Silence can mean hesitation. It can mean skepticism. It can mean disagreement that is never expressed in the room.

Yet silence is frequently interpreted as acceptance.

This is where Insight Blindness becomes structurally embedded. The environment itself suppresses visible feedback, while the reporting system assumes that visible signals are sufficient. The absence of objection is treated as alignment. The absence of questions is treated as clarity.

Both assumptions are flawed.

Healthcare professionals are trained to evaluate carefully and respond selectively. What they choose not to say publicly is often more important than what they say.

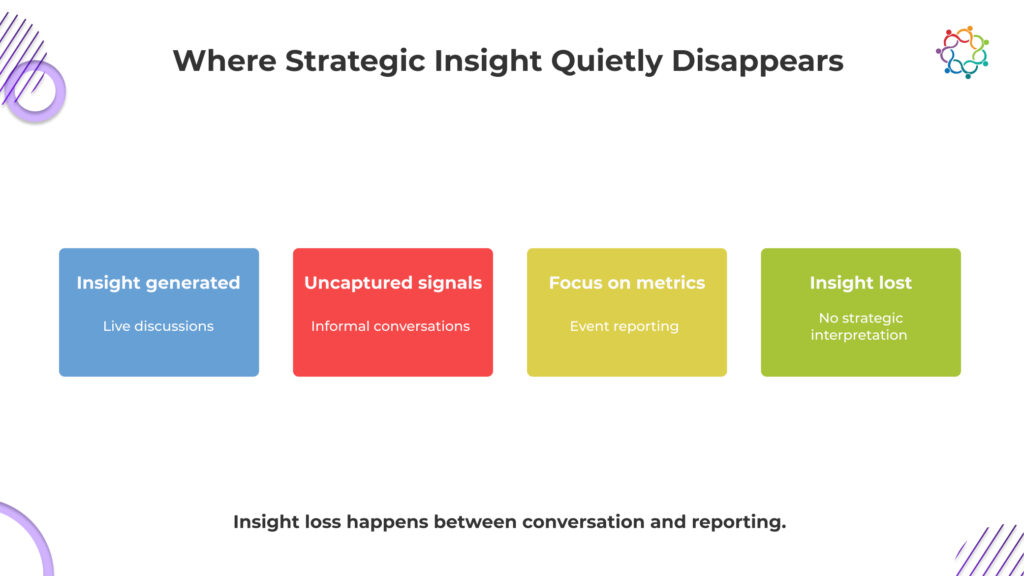

The loss of physician insight does not happen in one obvious moment. It happens across multiple points in the event lifecycle, each one quietly stripping away context that could have informed strategy.

During medical conferences and physician engagement events, the most honest perspectives often emerge outside formal sessions. Hallway discussions, small group conversations, and peer exchanges carry nuanced views that never enter official records.

These conversations reveal hesitation, disagreement, and real-world challenges. Yet they remain invisible to the organization.

Post-event reports are structured around execution. Attendance numbers, session flow, and participation rates dominate the narrative.

This confirms that the event happened successfully. It does not explain what physicians took away from it.

Even when fragments of feedback exist, they are rarely connected. Comments remain isolated. Observations are not synthesized into patterns. No clear view of physician sentiment emerges.

The system captures fragments, not meaning.

What you are left with is a record of activity without interpretation. The event generated signals. The organization failed to translate them into insight.

That is not a data problem. It is a strategic failure.

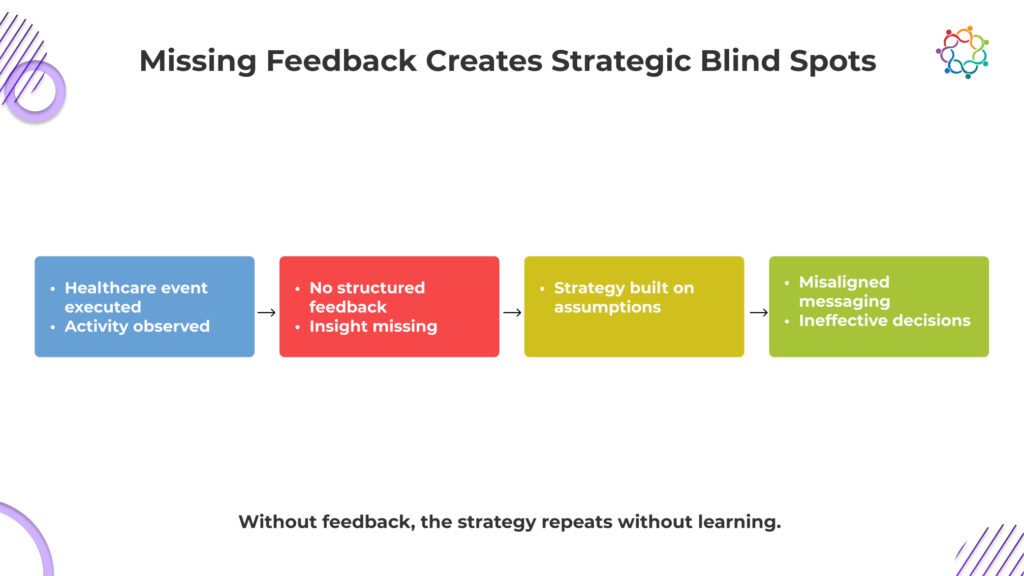

When healthcare organizations operate without clear visibility into physician thinking, decision-making does not stop. It continues. It simply becomes detached from reality.

This is where Insight Blindness turns into a strategic blind spot.

Marketing teams begin to assume alignment. They interpret engagement as agreement. They believe their clinical positioning resonates because nothing visibly contradicts it.

But physicians may have left the event with:

Without insight into these perspectives, leadership has no way to detect the gap.

You are not operating with partial clarity. You are operating blindly.

The most dangerous part is that the system reinforces itself. Positive metrics validate decisions. Decisions lead to similar events. Similar events produce similar metrics.

At no point is the underlying assumption challenged. This creates a closed loop where strategy is continuously refined without ever being corrected.

Healthcare events are not just engagement platforms. They are concentrated environments of real-world clinical thinking. You bring together physicians who actively treat patients, interpret evidence, and make decisions that directly impact outcomes. That density of perspective is rare.

In these settings, signals emerge constantly. Not in formal sessions, but in reactions, side conversations, hesitation points, and the types of questions that surface. These are early indicators of resistance, confusion, and adoption barriers.

If you are not extracting that, you are not just missing insight. You are actively wasting access to it.

Every event already contains answers to questions your strategy is trying to solve. Why is adoption slow? Where does messaging break? What do physicians not trust?

If those signals leave the room with the audience, your event delivered activity but retained nothing of strategic value.

When you do not understand how physicians think, the strategy does not pause. It continues, just without correction. That is where the risk compounds.

You repeat messaging because it appeared to work. You scale programs based on engagement signals that never reflect real alignment. You invest more into narratives that may already be breaking down in the minds of physicians.

This is how inefficiency becomes embedded.

Budgets get allocated to reinforce assumptions. Clinical communication drifts away from actual concerns. Opportunities to address skepticism are missed before they are even identified.

The result is not immediate failure. It is slower adoption, weaker influence, and increasing reliance on tactics to compensate for misalignment.

At that point, the issue is no longer execution. It is direction.

You are not optimizing the strategy. You are reinforcing errors with confidence.

Healthcare event marketing continues to expand in scale and sophistication, but the core flaw remains unchanged. It measures what is visible and assumes it reflects what matters. Attendance, engagement, and satisfaction create a convincing story, but not an accurate one.

If you are not capturing how physicians actually think, you are not refining strategy. You are reinforcing assumptions.

This is where most organizations get it wrong. They do not lack data. They lack truth.

And when strategy is built on signals that only look right, healthcare event marketing stops being an advantage.

It becomes a confident, well-funded misdirection.

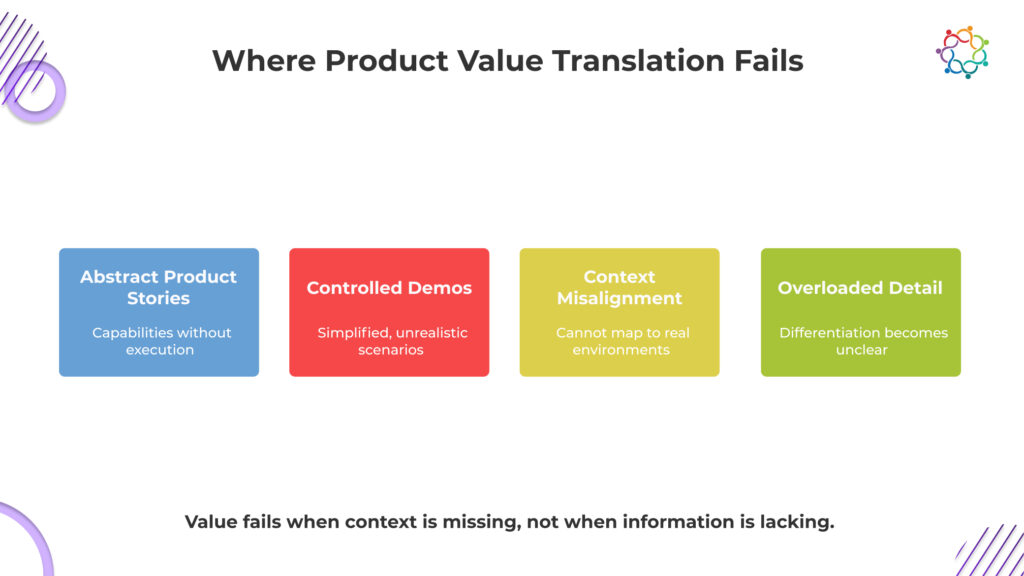

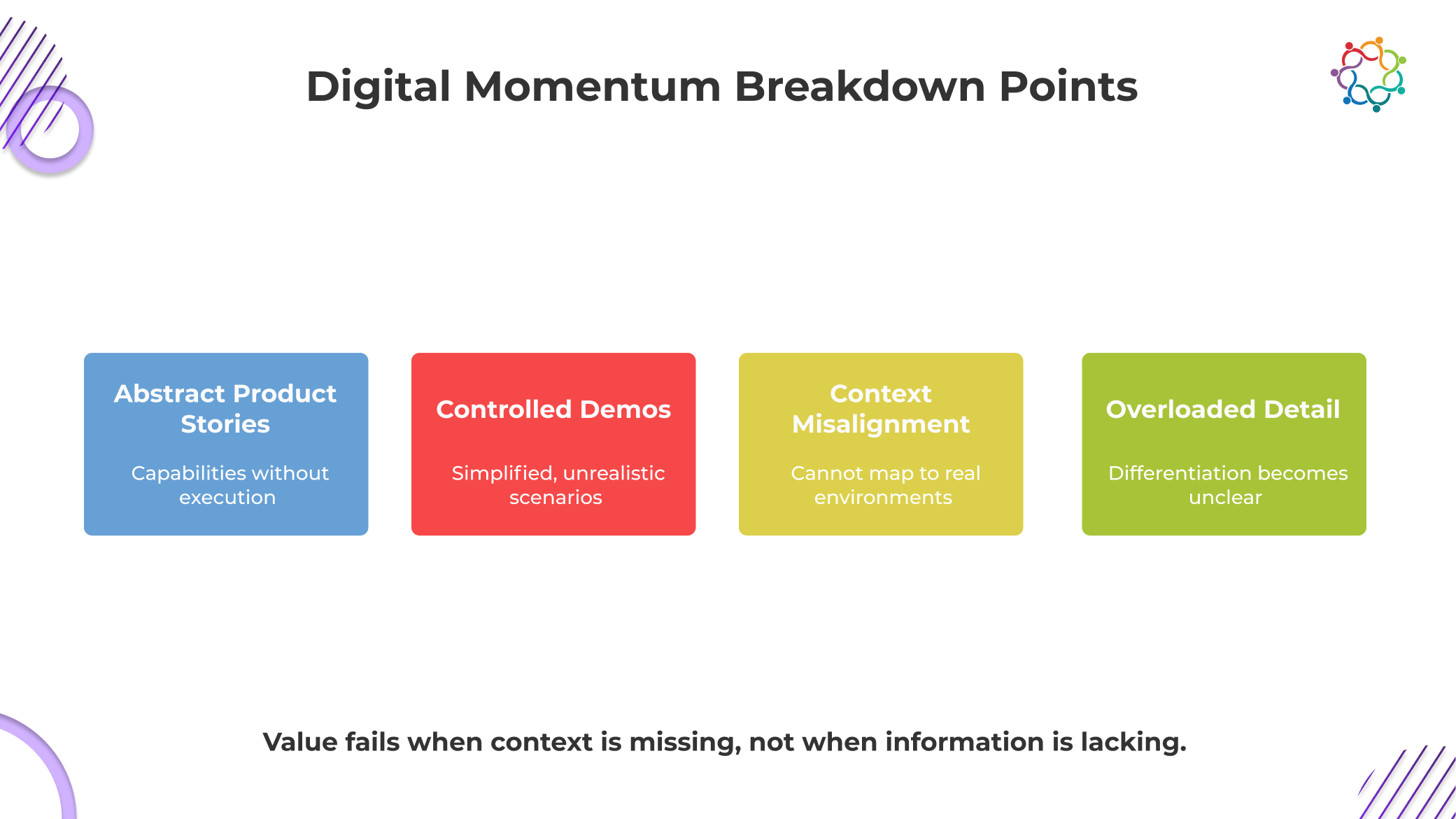

Martech companies do not fail to communicate value because channels are weak. They fail because those channels fragment value.

Product pages isolate features. Sales decks simplify workflows. Demos present controlled scenarios. Content assets explain capabilities without showing real execution. Each touchpoint delivers a partial view, never the full system.

This creates the Product Value Translation Gap.

Buyers see what the product does, but not how it actually works inside their environment. They understand components, not outcomes. They compare features, not impact. Differentiation becomes unclear because value is never experienced as a whole.

The issue is structural. When value is split across disconnected interactions, buyers are forced to assemble meaning themselves. Most cannot do this with confidence.

And without confidence, decisions stall.

Traditional channels do not fail because they lack depth. They fail because they break the continuity required to believe the product will work.

This blog explains why that gap exists and how events attempt to close it.

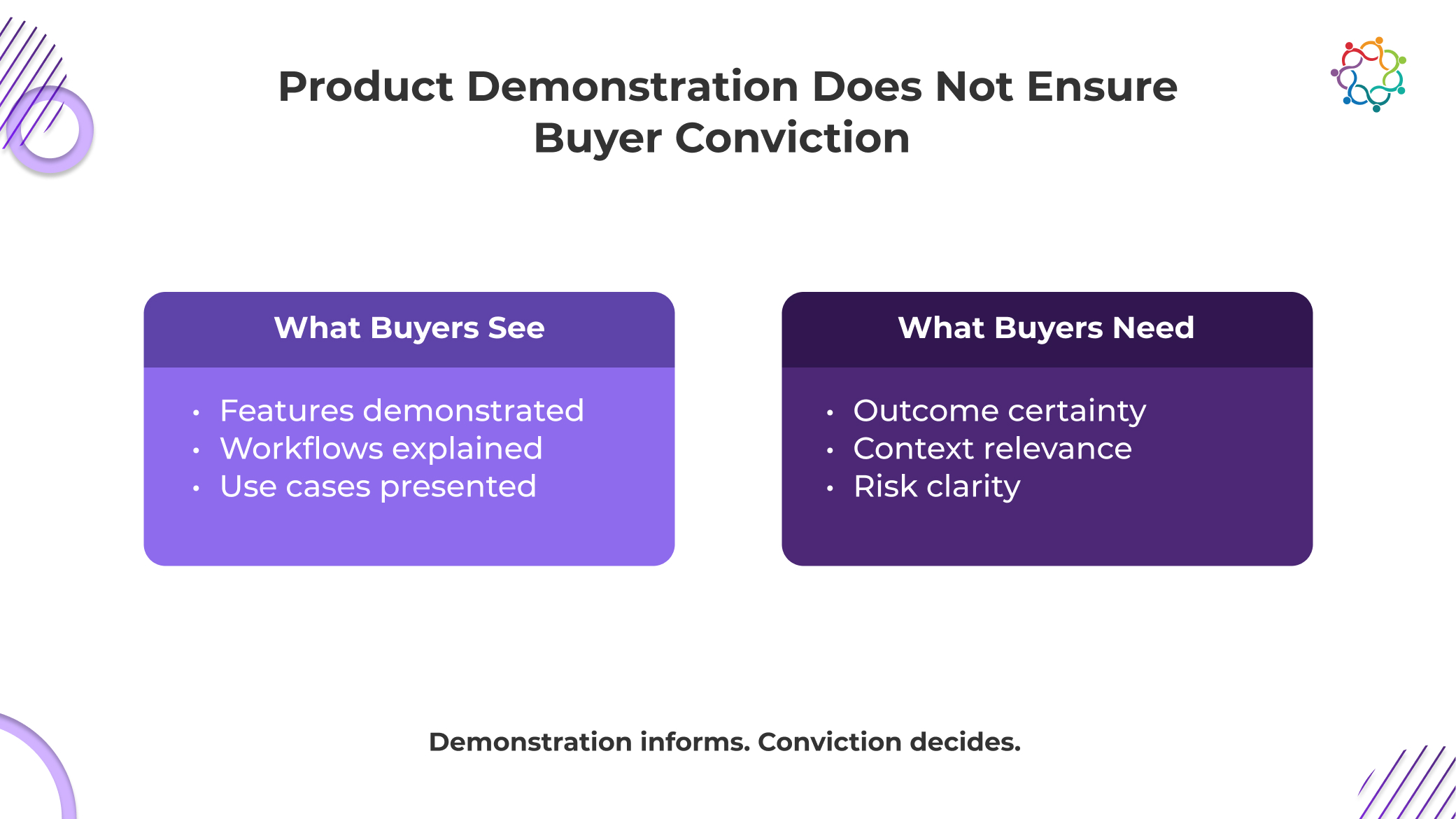

The assumption that better demos lead to better decisions is flawed.

Demos and event interactions increase exposure, not conviction. Buyers leave with a clear view of features, workflows, and use cases. They can explain what the product does. But they still hesitate when it comes to committing.

This is where deals lose momentum.

Demonstration answers what is possible.

Conviction answers what will actually work in their environment.

And that second question remains unresolved.

During martech events, engagement is high. Conversations are active. Interest feels strong. But beneath that, buyers are assessing risk, not capability.

They are asking:

These are not product questions. They are execution concerns.

When buyers understand features but do not trust outcomes, progress stalls. Visibility increases, but decisions do not move forward.

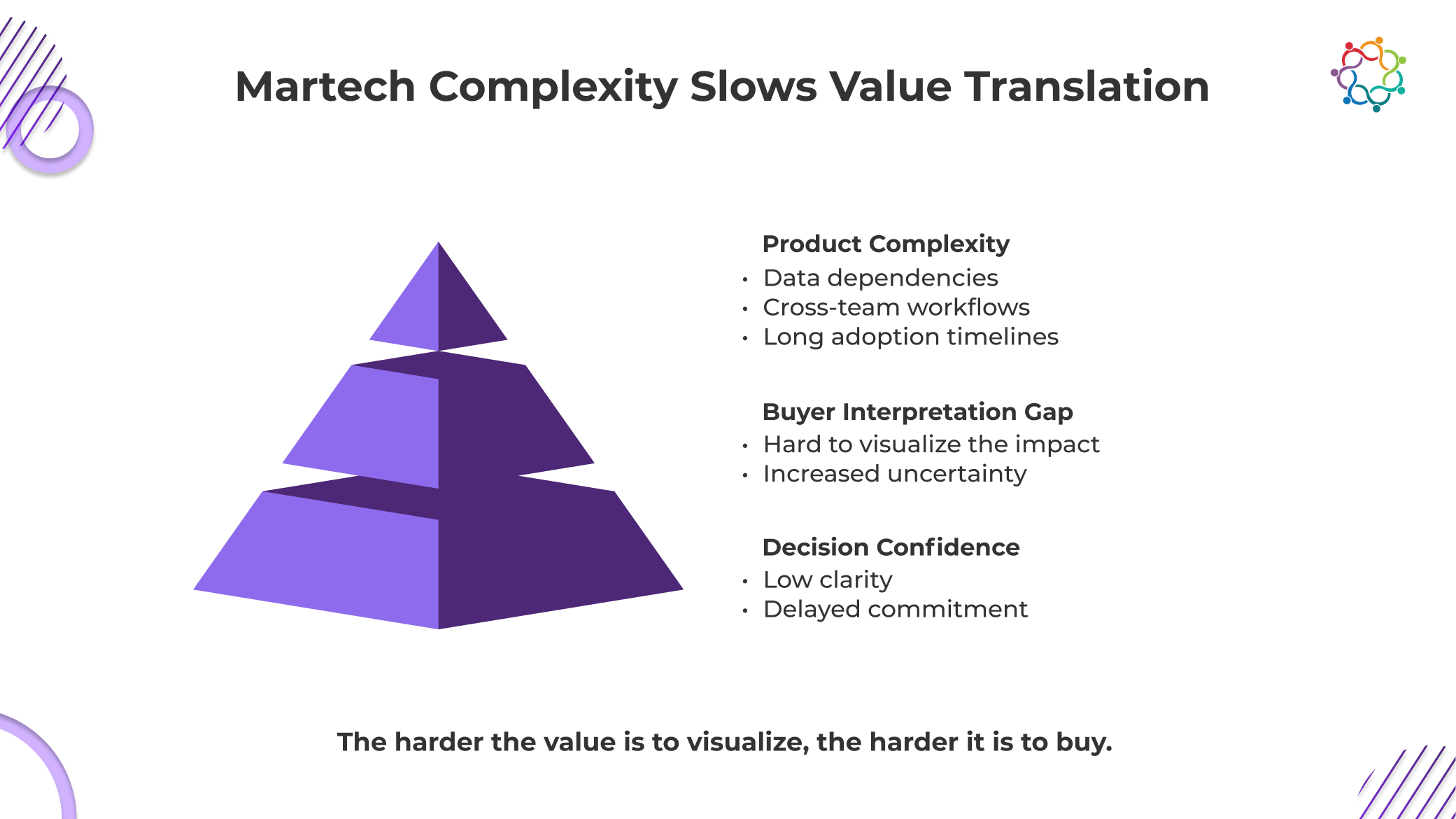

Martech products are not consumed instantly. They are implemented, integrated, configured, and adopted over time.

That reality introduces friction that no demo can fully resolve.

Complexity is often framed as a communication challenge. It is not. It is a risk amplifier.

Every integration dependency, every data flow, every cross-functional touchpoint increases uncertainty. Buyers are not just evaluating the product. They are evaluating their ability to make it work.

This is where the Product Value Translation Gap deepens.

The more complex the product, the harder it becomes for buyers to simulate success in their own environment. They cannot easily visualize end-to-end execution. They cannot confidently predict outcomes. And when prediction fails, hesitation begins.

This is why martech buying cycles expand even when interest is high.

Buyers are not delaying because they are unconvinced of the potential. They are delaying because they are unconvinced of execution.

And execution is where most martech investments fail.

Until buyers believe that implementation will succeed within their messy systems, no amount of product demonstration will convert into decision confidence.

The breakdown is not random. It happens at predictable points, but most teams fail to confront how severe the impact actually is.

Messaging explains capability but avoids operational reality. It highlights what the product enables without showing what it demands. Buyers are left with a clean narrative that does not reflect real execution.

This creates a dangerous mismatch between expectation and reality.

Demos are controlled environments. They remove friction, simplify workflows, and eliminate edge cases. But real usage is messy.

When buyers realize this gap, confidence drops. The demo becomes a best-case scenario, not a reliable indicator of success.

Every organization has unique systems, data structures, and team dynamics. Generic demonstrations fail to translate because they do not account for this variability.

Buyers are forced to mentally adapt the product to their context. Most cannot do this accurately.

More information does not create clarity. It reduces it.

This is the uncomfortable truth most teams avoid: clarity decreases as information increases when that information lacks structure.

Buyers are overwhelmed with features, integrations, and use cases. Instead of identifying what matters, they lose the ability to prioritize.

The result is paralysis.

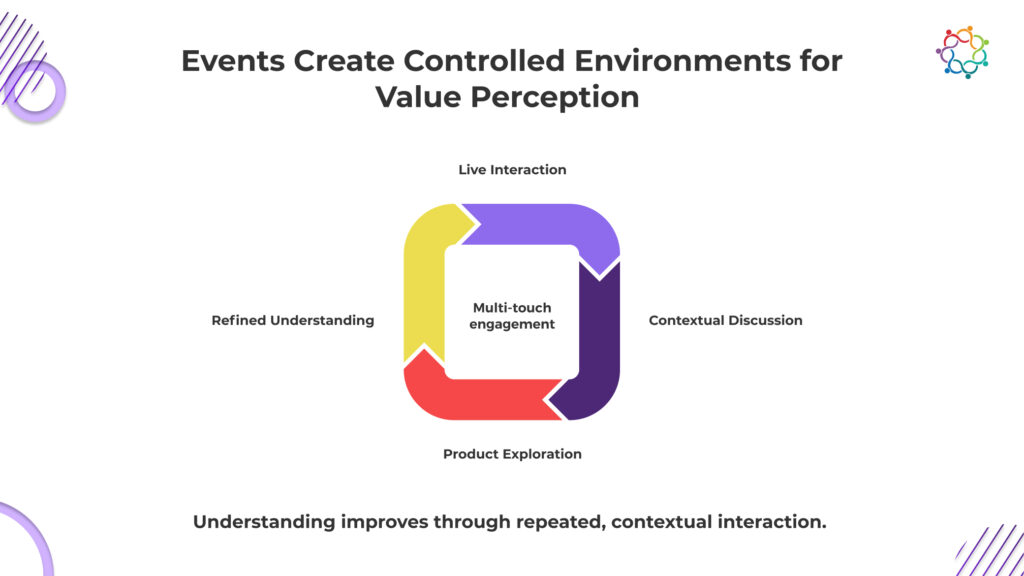

Martech events are not valuable because they are interactive. They are valuable because they compress understanding into a shorter window.

Unlike traditional channels, events allow multiple layers of value to be explored in rapid succession. Conversations evolve. Questions deepen. Context builds. Buyers engage with the product from different angles within a limited timeframe.

This compression is what makes such events effective.

This is not about better storytelling. It is about accelerated sense-making.

Events force buyers to confront the product more directly. They reduce the distance between exposure and evaluation.

But this does not eliminate the Demo-to-Decision Gap. It only narrows it temporarily.

Because even in compressed environments, the same underlying issue remains. Buyers still need to believe the product will work in their reality. And compression without validation can create false confidence.

This is why many martech events generate strong engagement but inconsistent outcomes. Understanding improves, but belief still lags.

Initial understanding is fragile. It decays quickly when it is not reinforced.

This is where most martech strategies collapse.

Events create a moment of clarity. Buyers connect features to use cases. They begin to see potential. But once they return to their environment, that clarity is tested against reality.

And reality is more complex than any event interaction.

This is not the same as demonstration failure. This is reinforcement failure.

Value does not disappear because it was poorly explained. It disappears because it was not validated repeatedly against real-world conditions.

Without this reinforcement, doubt resurfaces. And when doubt resurfaces, decision confidence collapses.

This is why post-event momentum often fades. Not because interest was weak, but because belief was never stabilized.

The Product Value Translation Gap is not closed in a single interaction. It requires consistent alignment between what was demonstrated and what is actually possible.

If that alignment breaks, buyers default to caution. And caution delays revenue.

When buyers are not fully confident in how a product will perform in their environment, revenue impact shows up immediately. Deals do not collapse. They slow down and become harder to close.

Evaluation stages stretch as more stakeholders get involved to reduce uncertainty. Procurement cycles extend. Internal alignment takes longer. What should have been a clear decision turns into prolonged validation.

This delay creates direct commercial pressure. Sales teams rely more on discounting to push deals forward. Margins shrink. Customer acquisition costs rise because more time and effort are required per opportunity.

At the same time, payback periods extend, weakening overall revenue efficiency. Pipeline may look strong, but conversion weakens underneath.

Martech companies continue investing in martech events, expecting acceleration. Instead, they often get volume without velocity.

Product value is not proven when it is presented. It is proven when buyers are willing to move forward without hesitation.

Most organizations continue to treat events as lead engines because measurement systems demand it. Performance is judged by leads generated, meetings booked, and pipeline created.

These metrics are easy to track and easy to report. They create a sense of progress, even when actual buying decisions remain unchanged.

As a result, marketing tech events are optimized for activity, not understanding. More attendees are targeted. More conversations are initiated. More opportunities are logged into the system.

But none of this guarantees that buyers have gained enough clarity to make a decision.

This creates a disconnect between reported success and actual revenue impact. Events look productive on dashboards while deals continue to stall in later stages.

The problem is not execution effort. It is what teams are incentivized to measure.

As long as volume defines success, events will prioritize acquisition over real product evaluation.

Martech companies rely on martech events because product value is difficult to communicate in fragmented environments. Standard channels distribute information but fail to create belief. Buyers see the product, but they cannot trust it within their own systems.

Events provide a temporary solution by compressing understanding. They create moments where value becomes clearer, faster.

But clarity is not enough.

If that clarity does not translate into decision confidence, the impact is limited. The role of marketing tech events is not to showcase the product. It is to reduce buyer uncertainty to the point where a decision feels safe.

Because that is the real barrier. Not awareness. Not features. But belief.

Martech events exist because buyers don’t struggle to see the product. They struggle to believe it will work in their reality.

If your events increase visibility but fail to reduce that doubt, you are not accelerating revenue. You are just making hesitation more informed.

Digital-first strategies have expanded B2B engagement at scale. Buyers now have unlimited access to content, constant touchpoints, and the ability to evaluate solutions on their own terms. On the surface, this looks like progress. More activity, more visibility, more informed buyers.

But this is where the Digital Velocity Trap takes hold.

More content does not just slow down decisions. It gives buyers structured reasons to delay them. Every new asset adds another comparison, another angle, another layer of doubt. Instead of moving forward, buyers expand their evaluation.

There is no pressure to commit. No moment that forces a decision. Only continuous access that keeps the process open.

The execution impact is immediate. Sales inherits buyers who are informed but not ready. Conversations restart instead of progressing.

The revenue consequence follows. Pipeline builds, but velocity drops. Deals stretch without clear timelines.

Digital channels increase access. They do not enforce movement toward decisions.

This blog explains why digital-first strategies fail to maintain decision speed and why events continue to exist as a mechanism to force buyer movement.

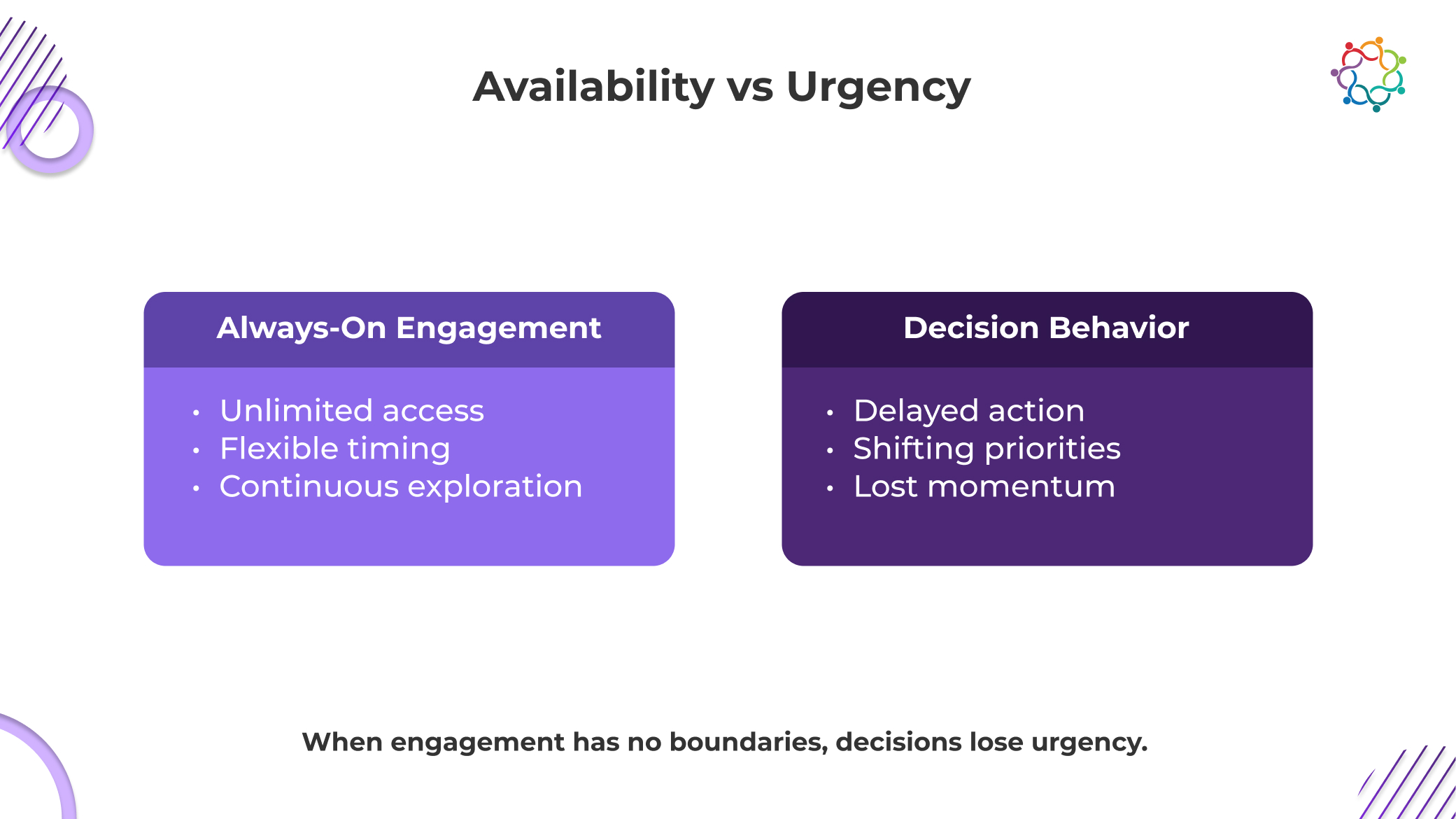

Digital-first marketing is built on availability. Buyers can engage anytime, from anywhere, at any stage of their journey. This flexibility is positioned as a strength. In reality, it removes the very thing that drives decisions.

Urgency.

The digital velocity trap deepens here because nothing forces a buyer to act. There is no deadline, no constraint, no moment of commitment. Evaluation becomes open-ended.

This is not inefficiency. It is rational behavior. When risk feels distributed over time, there is no incentive to compress decisions.

The execution impact is severe. Demand generation programs continue to produce engagement, but that engagement lacks direction. Sales teams chase activity, not intent. Conversations lose urgency because buyers do not feel compelled to resolve uncertainty.

The revenue consequence follows. Sales cycles extend. Opportunities linger in mid-funnel stages. The pipeline becomes inflated but inactive.

Digital marketing optimizes for constant access. Revenue depends on forced progression. Always-on engagement does not accelerate decisions. It eliminates the conditions required to make them.

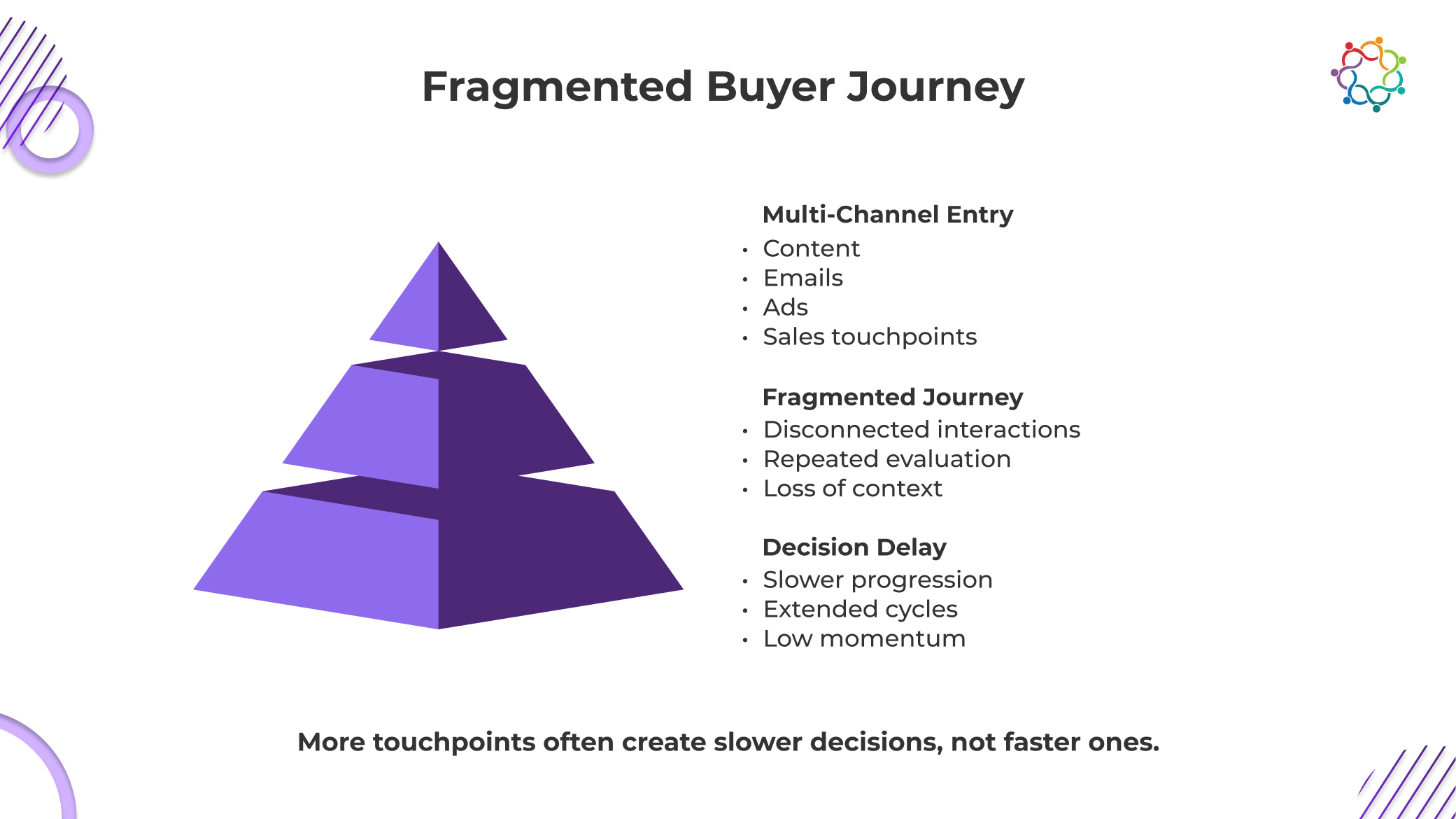

Digital-first strategies create more touchpoints than ever before. Content hubs, email nurtures, paid campaigns, sales outreach, and product demos. Each interaction is designed to move the buyer forward.

In reality, they rarely do.

Without alignment across channels, these touchpoints do not connect into a single progression. They exist as isolated moments. Buyers move between them without continuity, which fundamentally alters how decisions are made.

The critical issue is not fragmentation itself. It is what fragmentation causes. Buyers do not build momentum. They restart the evaluation.

Every new interaction becomes a fresh entry point rather than a continuation. A new stakeholder joins and asks questions that have already been answered. A new piece of content reframes the problem. A new comparison introduces doubt.

The execution impact becomes clear quickly. Sales teams repeat conversations. Context is lost between interactions. Alignment across buying groups takes longer because no shared progression exists.

From a buyer psychology perspective, this makes delay feel safe. When decisions are spread across multiple channels and timelines, the perceived risk of waiting decreases. There is always another input to consider. Another perspective to validate.

The revenue consequence compounds over time. Deals cycle through extended evaluation loops. Pipeline stages become less predictive. Movement slows, even as engagement increases.

More touchpoints do not accelerate decisions. They multiply the opportunities to hesitate.

The slowdown in decision speed is not random. It is structural. Digital-first environments are not designed to create commitment. They are designed to sustain engagement.

The Digital Velocity Trap becomes visible at specific points in execution where momentum consistently breaks.

No single interaction carries enough weight to drive commitment. Buyers split attention across content, platforms, and conversations. Focus is diluted, and no moment stands out as decisive.

Digital interactions tend to remain surface-level. Initial engagement happens through content or asynchronous communication. Deep conversations require scheduling, alignment, and follow-ups. By the time they happen, momentum has already weakened.

Stakeholders interact in different contexts, at different times, and through different channels. Alignment stops being part of the decision and turns into a coordination problem.

Without clear moments that compel progress, evaluation continues indefinitely. Because there is no reason to stop, buyers keep exploring.

The sharper insight here is simple. In digital environments, no single moment forces a decision.

The execution impact is prolonged indecision. Sales teams operate in a reactive mode, trying to pull buyers forward without leverage.

The revenue consequence is predictable. Pipeline slows, forecasting weakens, and deal velocity declines. Digital systems optimize for continuity. Decisions require interruption.

This is where the role of B2B events marketing becomes clear. Not as a legacy channel, but as a structural counterweight to digital slowdown.

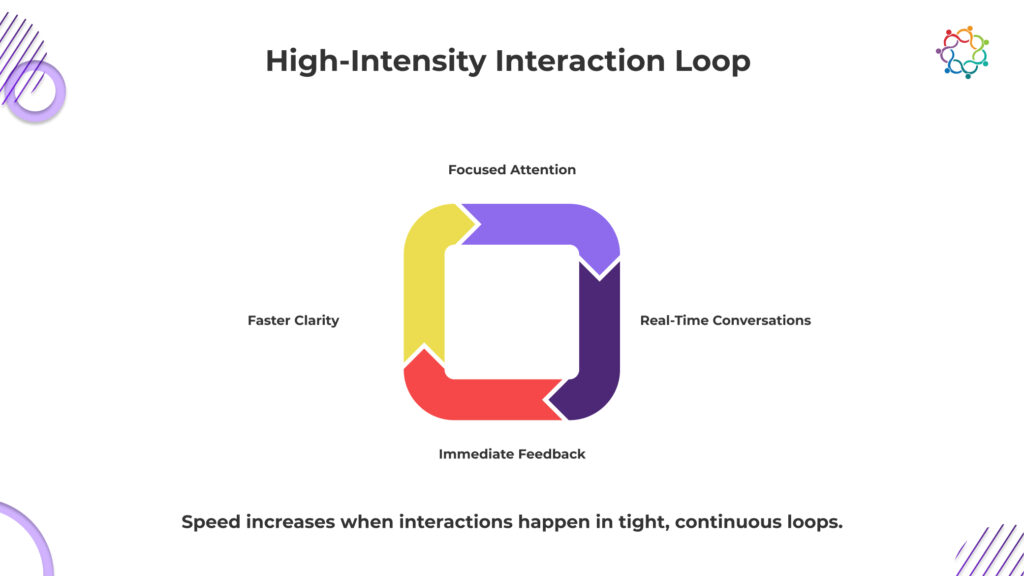

Events operate outside the Digital Velocity Trap because they introduce something that digital environments cannot replicate. Constraint.

Time is limited. Attention is focused. Access to stakeholders is immediate. These conditions change buyer behavior instantly.

Events do not just accelerate understanding. They force decisions into a bounded timeframe. Buyers cannot defer indefinitely because the opportunity to engage is finite.

This creates a high-intent interaction environment where decision-making accelerates naturally.

The revenue consequence is not theoretical. Decision timelines compress. Opportunities move faster through the pipeline. Alignment happens in hours or days instead of weeks.

B2B events marketing does not replace digital programs. It compensates for their inability to create urgency.

Without these moments of forced interaction, buyers remain in extended evaluation cycles. With them, decisions regain structure and speed.

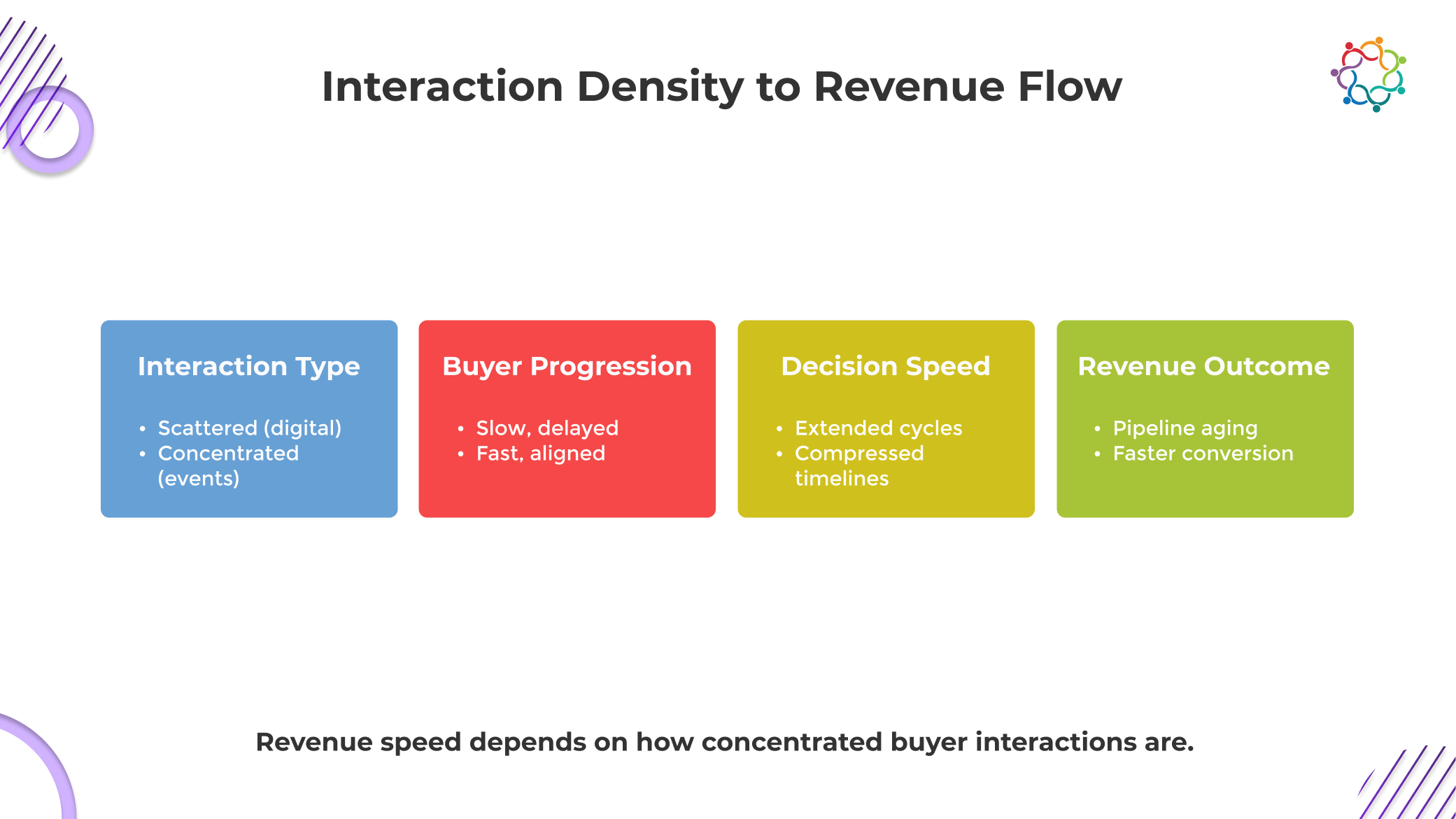

Speed in B2B does not come from how often buyers engage. It comes from how tightly those interactions are packed together. This is where the digital velocity trap quietly breaks your pipeline. Digital systems stretch conversations across days, sometimes weeks, creating gaps where momentum fades and priorities shift.

Every delay between interactions forces buyers to recontextualize the problem. They revisit earlier questions, reopen internal discussions, and dilute urgency. What should be progression becomes repetition.

Concentrated interaction changes this dynamic completely. When conversations happen back-to-back, decisions build on continuity. Questions are resolved before doubt compounds. Stakeholders align while context is still shared.

If your interactions are spread out, your deals will slow down. There is no workaround.

The execution impact is simple. Either you control the pace of interaction, or the buyer defaults to delay.

Speed is not about maintaining engagement. It is about removing the time gaps that allow decisions to drift.

When decision speed drops, the damage does not stay in the pipeline. It shows up directly in revenue performance. The digital velocity trap turns what looks like a healthy funnel into an inefficient one.

Opportunities accumulate but do not convert at the expected rate. Pipeline volume increases, but movement stalls. This creates a misleading sense of growth while actual revenue realization slips further out.

Longer sales cycles drive up acquisition costs. More touchpoints, more follow-ups, more time from sales and marketing teams. You are spending more to close the same deal, often with lower certainty.

Missed timing becomes the real cost. Deals that should close within a defined window drift, pushing revenue into future periods or losing it entirely. Forecasting becomes guesswork because timelines are no longer dependable.

If decisions are slow, revenue is slow. It is that direct.

You are not facing a demand problem. You are operating with a speed problem that compounds across every stage of the funnel.

Despite clear signs of slowing decision velocity, most organizations continue to prioritize digital-first strategies. Not because they perform better, but because they are easier to justify.

The misalignment persists because digital success is measured through activity. Reach, engagement, and lead volume create visible proof of performance. These metrics are immediate, scalable, and easy to report upward.

Speed is none of those things. It is harder to isolate, harder to attribute, and harder to defend in reporting structures. So it gets ignored.

This leads to a predictable outcome. Teams double down on what looks efficient on paper while overlooking whether deals are actually moving faster. More campaigns are launched, more leads are generated, and more content is produced.

But none of it forces decisions.

Digital is prioritized because it is cheaper to run and easier to measure, not because it accelerates revenue.

If your strategy rewards activity over movement, you are not optimizing for growth. You are systematically slowing it down.

Digital-first strategies have earned their place by scaling awareness, engagement, and demand. But scale alone does not move deals forward. When buyers have unlimited access to information, no defined timelines, and fragmented interactions, decisions slow down. Engagement increases, but progression weakens.

Events continue to matter because they reintroduce what digital lacks. Focus, immediacy, and shared decision moments. They compress conversations, align stakeholders, and force clarity within a fixed window.

If your strategy generates activity but delays decisions, it is not driving growth.

Revenue does not follow engagement. It follows decisions made on time.

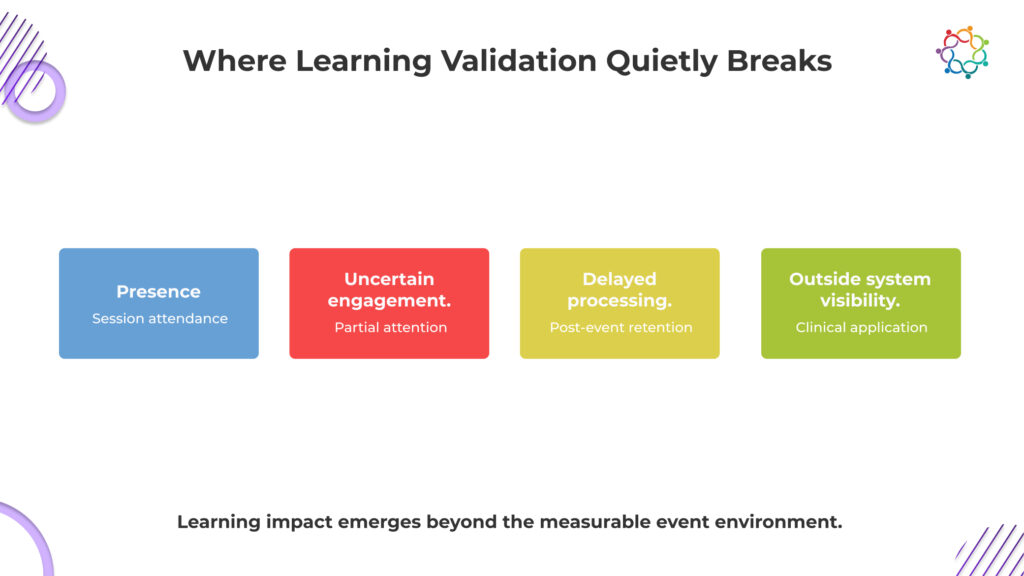

CME proves presence, not progress.

That is the metric most programs optimize for, even if they do not say it out loud.

Across CME events, participation looks strong on paper. Sessions fill up. Physicians log attendance. Credits are issued and documented. From a reporting standpoint, everything signals success. The system confirms that education was delivered and received.

But that confirmation is superficial.

Attendance only proves that a physician was present when information was shared. It does not confirm whether they engaged with the content, understood its clinical relevance, or changed how they approach clinical decisions. The assumption that presence equals learning is where the problem begins.

This is not a gap in effort. It is a gap in validation.

The system captures exposure because exposure is measurable. It cannot capture cognitive change because cognitive change is not.

This blog examines the Learning Validation Gap in CME and why proving attendance is far easier than proving meaningful learning.

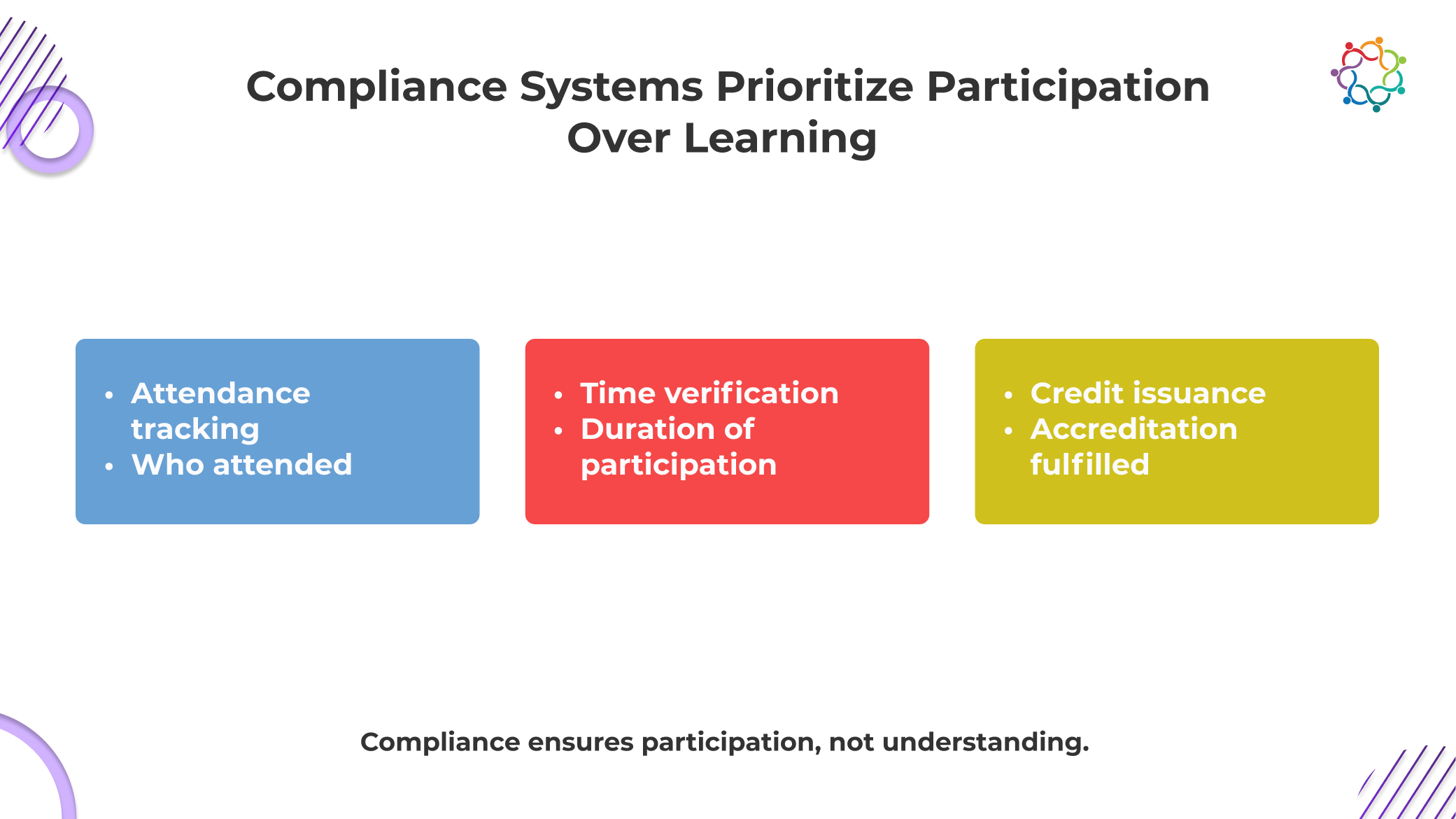

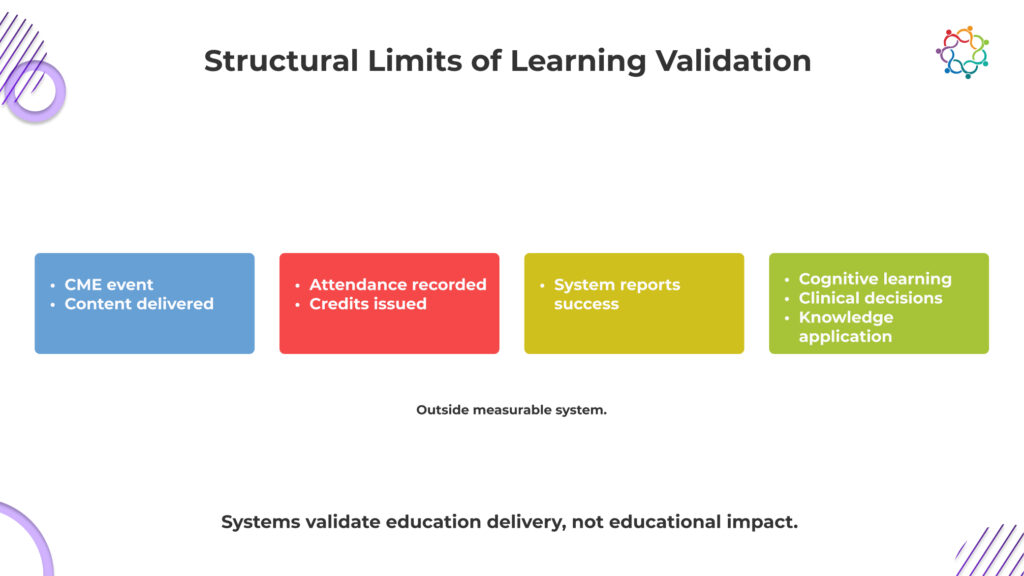

CME systems are not failing to measure learning. They were never built to do it.

At their core, these frameworks exist to prove compliance. They ensure that educational standards are met, content is accredited, and physician participation is properly documented. Everything revolves around what can be verified in an audit.

And learning cannot be audited.

So the system defaults to what it can prove. Attendance logs, session duration, and credit issuance. These are clean, defensible, and easy to report. They satisfy regulatory requirements and create the appearance of educational rigor.

But they do not confirm understanding.

This is the uncomfortable reality. The system is designed to validate that education was delivered, not that it was absorbed. In Continuing Medical Education events, participation becomes the endpoint because it is measurable.

Learning remains outside the system, unverified and largely assumed.

Most medical education events are built on a simple assumption: if physicians hear the information, they will understand it. That assumption is dangerously weak.