
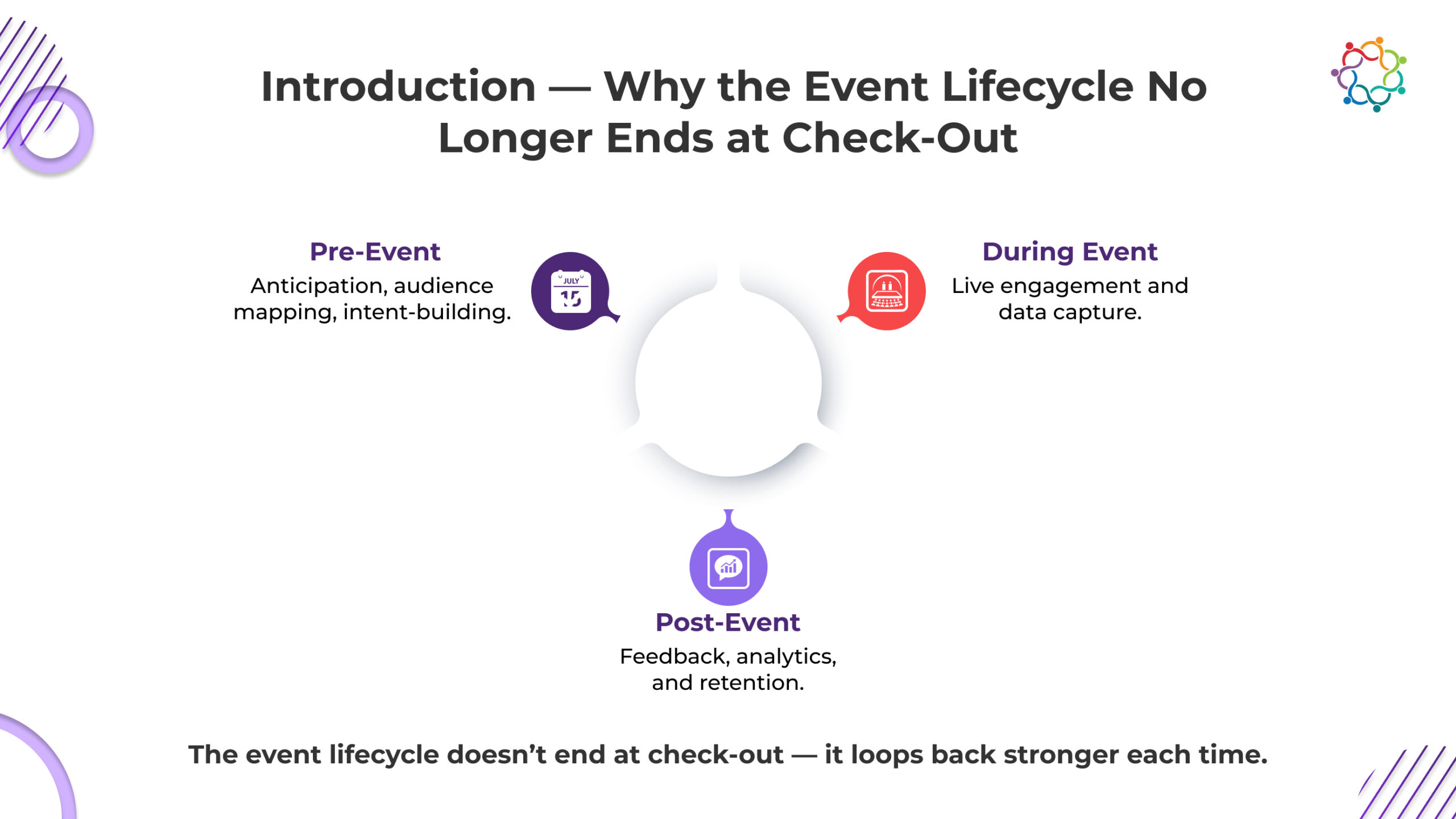

Event marketing has long been treated as a one-day experience. The goal was simple: get as many people to the event as possible, create a memorable experience, and measure success by footfall or applause. But in a data-driven, digital-first marketing ecosystem, that model no longer works.

The event landscape is shifting from one-off activations to ongoing engagement ecosystems. For enterprise marketers, events are no longer isolated milestones; they are living touchpoints that connect brand storytelling, customer data, and audience behavior in a continuous loop.

This shift is powered by automation, analytics, and insight. Every registration click, poll response, and post-event survey contributes to a cohesive marketing narrative that extends well beyond the event itself.

This resource walks through each stage of the lifecycle, from pre-event anticipation to post-event analysis, and shows how leading organizations turn customer engagement into a measurable, repeatable growth engine.

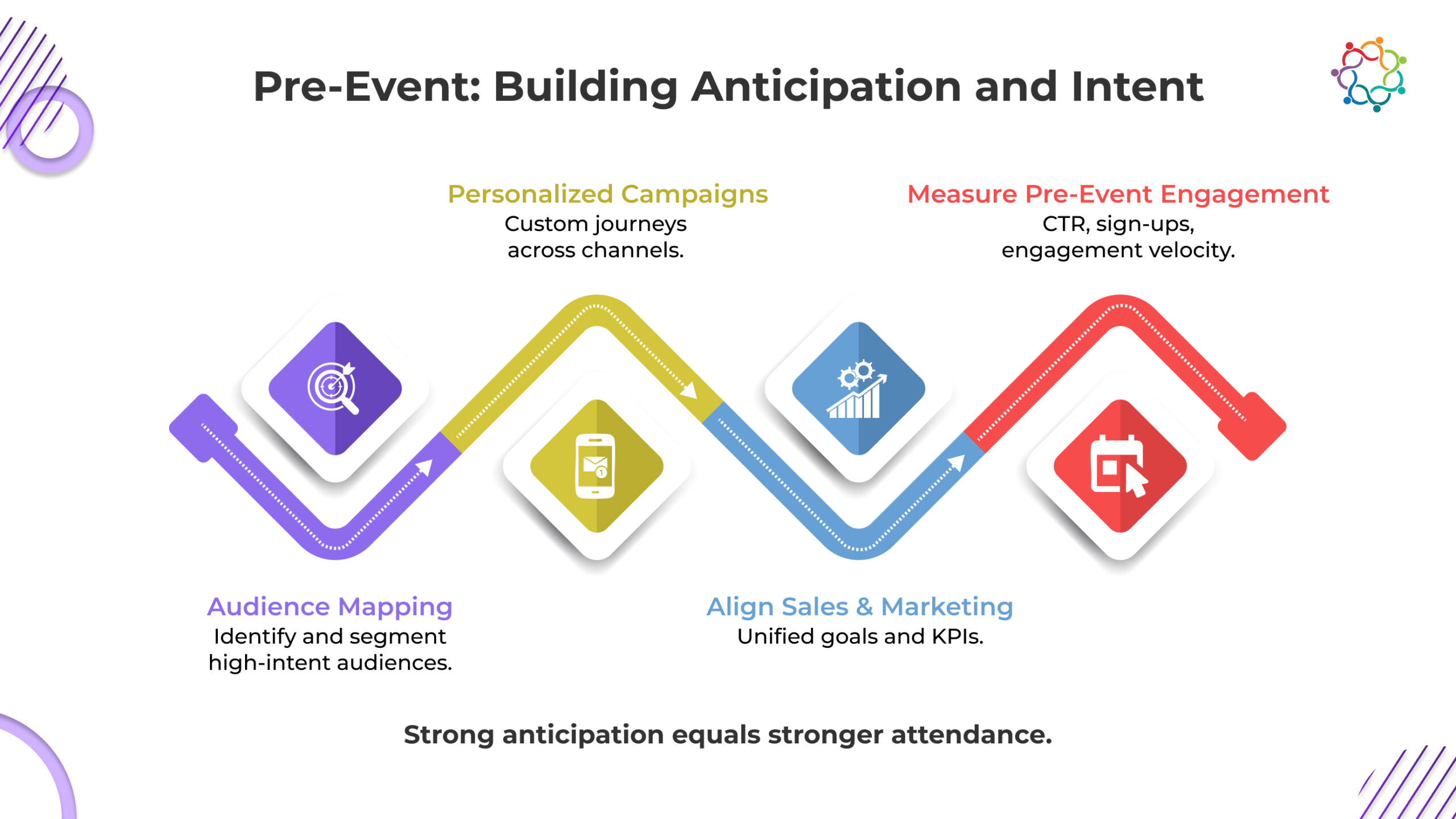

An event begins long before the venue lights come on. The pre-event phase shapes everything that follows: this is where awareness is built, curiosity is heightened, and intent is created.

Pre-event promotion used to rely on broad messaging and mass exposure. Today, success depends on personalization, segmentation, and knowing your audience. Contemporary marketers understand that excitement is not a by-product of exposure; it is a function of relevance.

Enterprise marketers now use audience intelligence tools to identify segments by interest, behavior, or industry. Instead of pushing out generic messages, brands can build tailored communication journeys that speak directly to each persona’s reasons for attending, whether that is to learn, network, or make a business connection.

By integrating data from CRM systems, website traffic, and social engagement, marketers can build targeted pre-event funnels that deepen both emotional and professional engagement.

Content-led nurturing is instrumental in turning awareness into action. Whitepapers, teaser videos, and speaker previews warm up prospects over time, well before they reach the registration page. The best event campaigns sustain an ongoing narrative, inviting the audience into a story that unfolds over weeks, not hours.

Research suggests that organizations sending personalized pre-event communications see 40% higher attendance rates and 25% more engagement during the event. The rationale is straightforward: when attendees believe an event was designed for them, they want to make it count.

Internal alignment is an often-overlooked factor in pre-event success. When sales and marketing share goals (for example, getting target accounts to attend or converting attendees into high-value leads), every outreach effort becomes part of a cohesive strategy rather than an isolated activity.

Metrics such as pre-registrations, engagement velocity, and conversion-to-check-in ratio help quantify how well a campaign is building anticipation. In truth, the pre-event stage is not simply about “promotion”; it is about generating intent.
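To make these pre-event metrics concrete, here is a minimal Python sketch. The article does not define the formulas, so they are assumptions: conversion-to-check-in is taken as check-ins divided by pre-registrations, and engagement velocity is approximated as average registrations per campaign day. The class, field names, and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PreEventSnapshot:
    """Hypothetical pre-event counts; field names are illustrative only."""
    pre_registrations: int  # total registrations before the event
    check_ins: int          # registrants who actually checked in
    campaign_days: int      # days the registration campaign has been running


def conversion_to_check_in(s: PreEventSnapshot) -> float:
    """Share of registrants who checked in (0.0 if nobody registered)."""
    return s.check_ins / s.pre_registrations if s.pre_registrations else 0.0


def registration_velocity(s: PreEventSnapshot) -> float:
    """Average registrations per campaign day: one possible reading of 'engagement velocity'."""
    return s.pre_registrations / s.campaign_days if s.campaign_days else 0.0


snap = PreEventSnapshot(pre_registrations=1200, check_ins=780, campaign_days=30)
print(f"{conversion_to_check_in(snap):.0%}")  # 65%
print(registration_velocity(snap))            # 40.0
```

Tracking these ratios week by week, rather than only the raw registration count, shows whether anticipation is actually converting into attendance.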

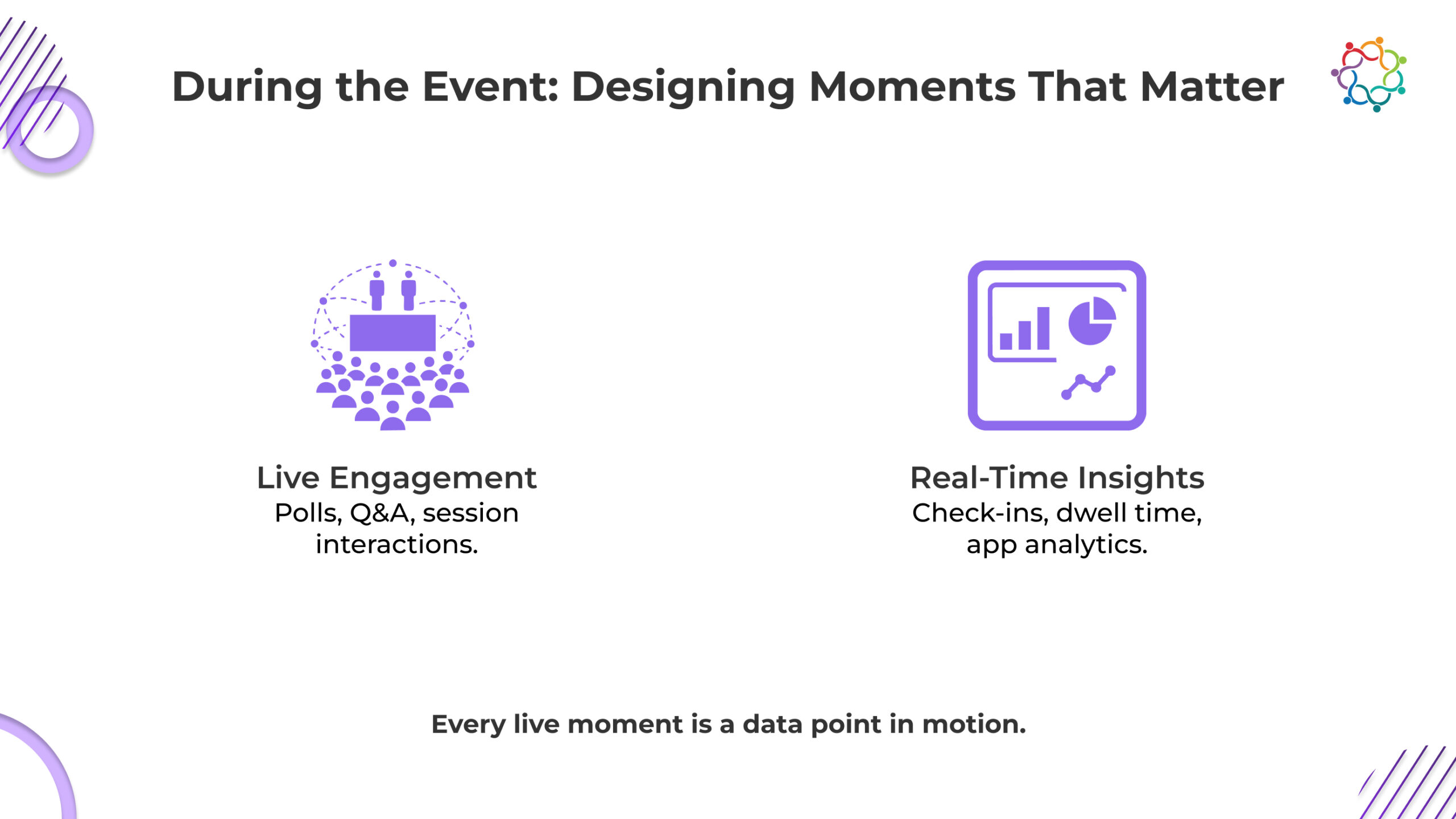

The day (or days) of the event is often the most public, high-stakes period of the lifecycle. Anticipation becomes participation, and brands have a unique opportunity to create memories, collect data, and build deeper connections.

In the modern era, however, the “live” experience is also a data experience. Every attendee action, whether scanning a badge, answering a poll, or posting in the app, feeds a larger feedback loop that will shape future engagement.

Effective event marketing today is about participation rather than observation. Audience polls, digital Q&A, and app-based activities turn attendees from passive observers into active participants. These inputs keep people engaged in the moment while giving organizers immediate feedback on sentiment and on the topics that resonate.

More and more businesses design sessions around audience personas instead of generic tracks. For example, decision-makers may attend dedicated roundtables while practitioners join hands-on workshops. Designing for the audience increases relevance and helps attendees spend their limited professional time on the content that matters most to them.

Each attendee also leaves a digital trail: check-in time, time spent at booths, the number of sessions attended, and the content downloaded.

Interpreting this behavioral data is what separates a merely well-executed event from a genuine source of marketing intelligence.

Modern event-tech ecosystems like Samaaro connect all of these signals so that marketing teams can gauge engagement as it happens. With that visibility, teams can adjust during the event where it makes sense, for example by shifting session times or promoting under-attended panels, while sales teams receive real-time alerts about visitors showing intent.

In the end, the event becomes both a brand experience and a data laboratory, where marketers can observe, test, and learn from how engagement develops at the individual level.

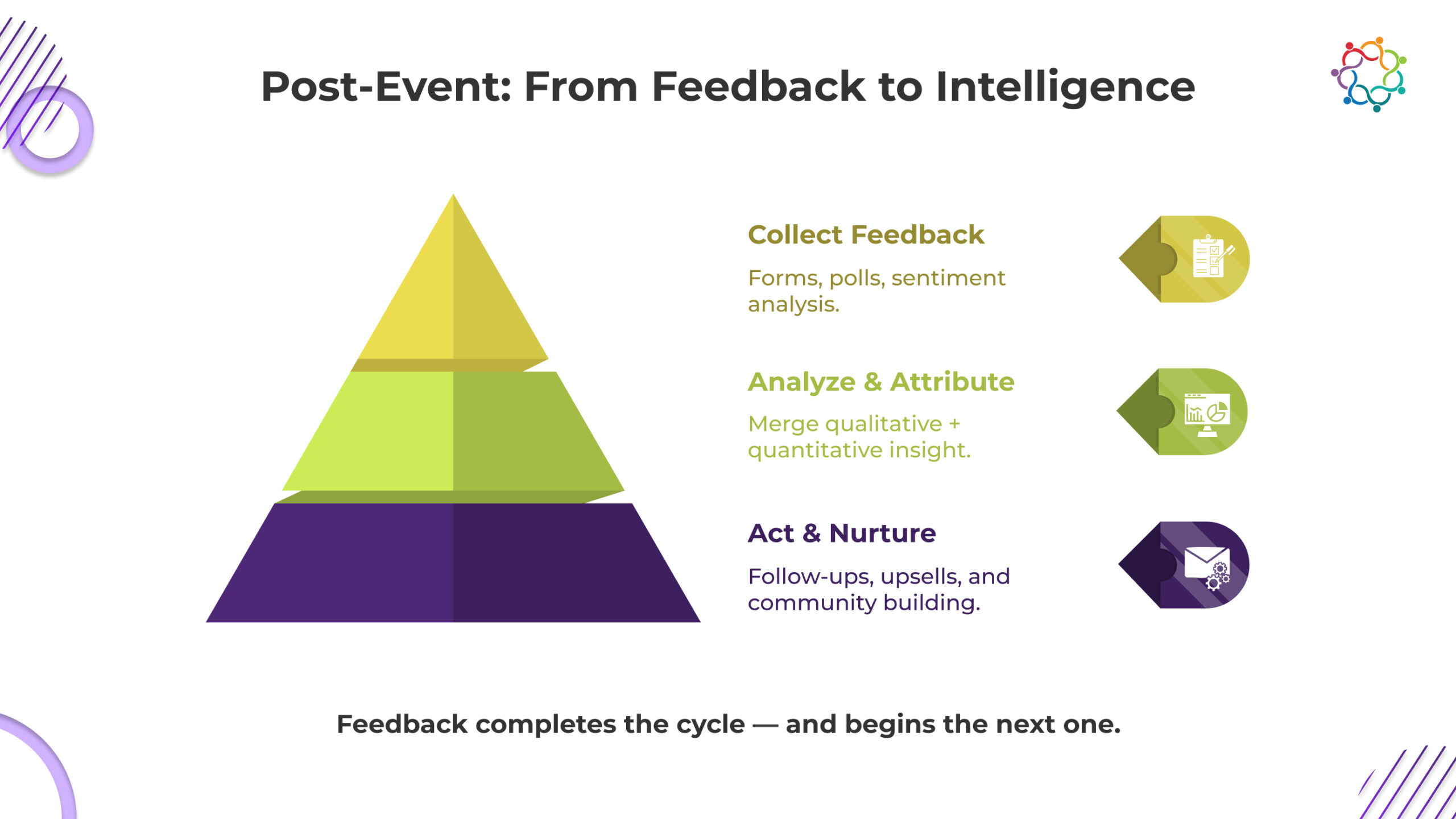

If the event lifecycle stopped when the last attendee left, much of its potential would remain untapped. The post-event phase is where raw experience turns into actionable intelligence — and where the groundwork for future engagement is laid.

Feedback collection has advanced far beyond distributing satisfaction surveys. Today, event teams use a variety of methods across channels: in-app surveys, email forms, live polling, and sentiment analysis of social media and chat transcripts. Combined, this information gives a 360-degree view of what went well, what did not, and how the audience felt.

When qualitative information is analyzed in conjunction with quantitative metrics, marketers can uncover important factors impacting satisfaction, reveal unmet expectations, and refine future tactics.

Feedback is not merely a way for attendees to share their experiences; it is a tool for change and improvement. Collected and assessed promptly, it can shape marketing automation, content creation, and product strategy.

For example, if feedback shows strong interest in sustainability or AI, those themes can inform future marketing campaigns or product demos. The best-organized enterprise teams close the feedback loop in weeks, not months, to keep the momentum going.

Many enterprise teams use integrated dashboards, such as Samaaro’s, to combine engagement metrics with survey data and get a clearer picture of impact. Handled this way, post-event data becomes a story of customer intent and brand affinity rather than just a set of numbers.

Attendee numbers are no longer the one true measure of success. Today’s marketers look deeper, at engagement quality, conversion rates, lead velocity, and customer influence. A true ROI measure links emotional connection to business impact and shows how each series of events affected pipeline, retention, or advocacy.

The post-event stage is not an endpoint; it is where insights lead to new opportunities.

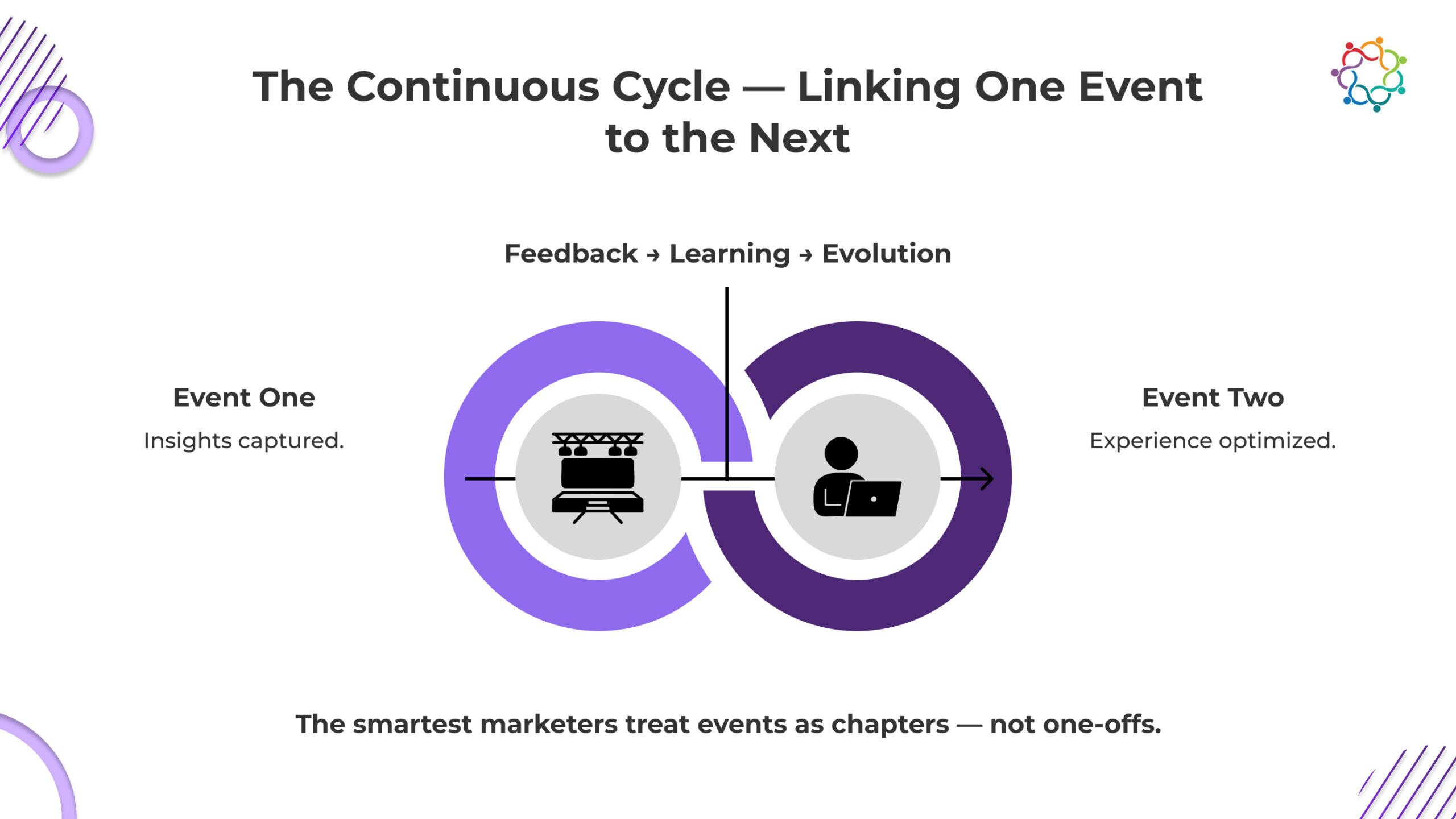

Events today aren’t isolated milestones; they’re interconnected chapters of a larger narrative. The most successful enterprises treat every event as part of a continuous cycle of learning, engagement, and improvement.

The real evolution lies in how brands shift from organizing individual activations to building communities. Instead of seeing attendees as one-time participants, marketers treat them as members of an ongoing, meaningful conversation. Over time, these communities can become self-sustaining ecosystems where content, conversation, and collaboration happen year-round.

Every event produces patterns: the sessions with the longest dwell time, the most engaged audiences, and the formats that sparked authentic discussion. Reviewed systematically, these patterns become the starting point for your next event design.

By connecting data from one event to the next, marketers can optimize everything from timing and content themes to sponsors. KPIs such as re-engagement rate, returning-audience percentage, and event-to-event conversion define success more accurately than ticket counts.
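Cross-event KPIs like these can be computed from attendee ID lists. The following is a minimal sketch, assuming returning-audience percentage means the share of the current event’s attendees who also attended the previous one, and re-engagement rate means the share of the previous event’s attendees who came back. Neither definition comes from the article, and the function names and sample IDs are hypothetical.

```python
def returning_audience_pct(previous: set[str], current: set[str]) -> float:
    """Percentage of the current event's attendees who also attended the previous event."""
    if not current:
        return 0.0
    return 100 * len(previous & current) / len(current)


def re_engagement_rate(previous: set[str], current: set[str]) -> float:
    """Percentage of the previous event's attendees who came back for the current event."""
    if not previous:
        return 0.0
    return 100 * len(previous & current) / len(previous)


# Hypothetical attendee IDs for two events in a series
spring = {"a1", "a2", "a3", "a4"}
autumn = {"a2", "a3", "a5", "a6", "a7"}
print(returning_audience_pct(spring, autumn))  # 40.0
print(re_engagement_rate(spring, autumn))      # 50.0
```

The two ratios share a numerator but use different denominators, so a growing event can show a falling returning-audience percentage even while re-engagement holds steady. Reporting both avoids misreading growth as churn.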

This ongoing practice builds value across a series of events and ultimately creates long-tail ROI. Each engagement sets up the next, gradually transforming the event portfolio, one event at a time, into a living marketing ecosystem.

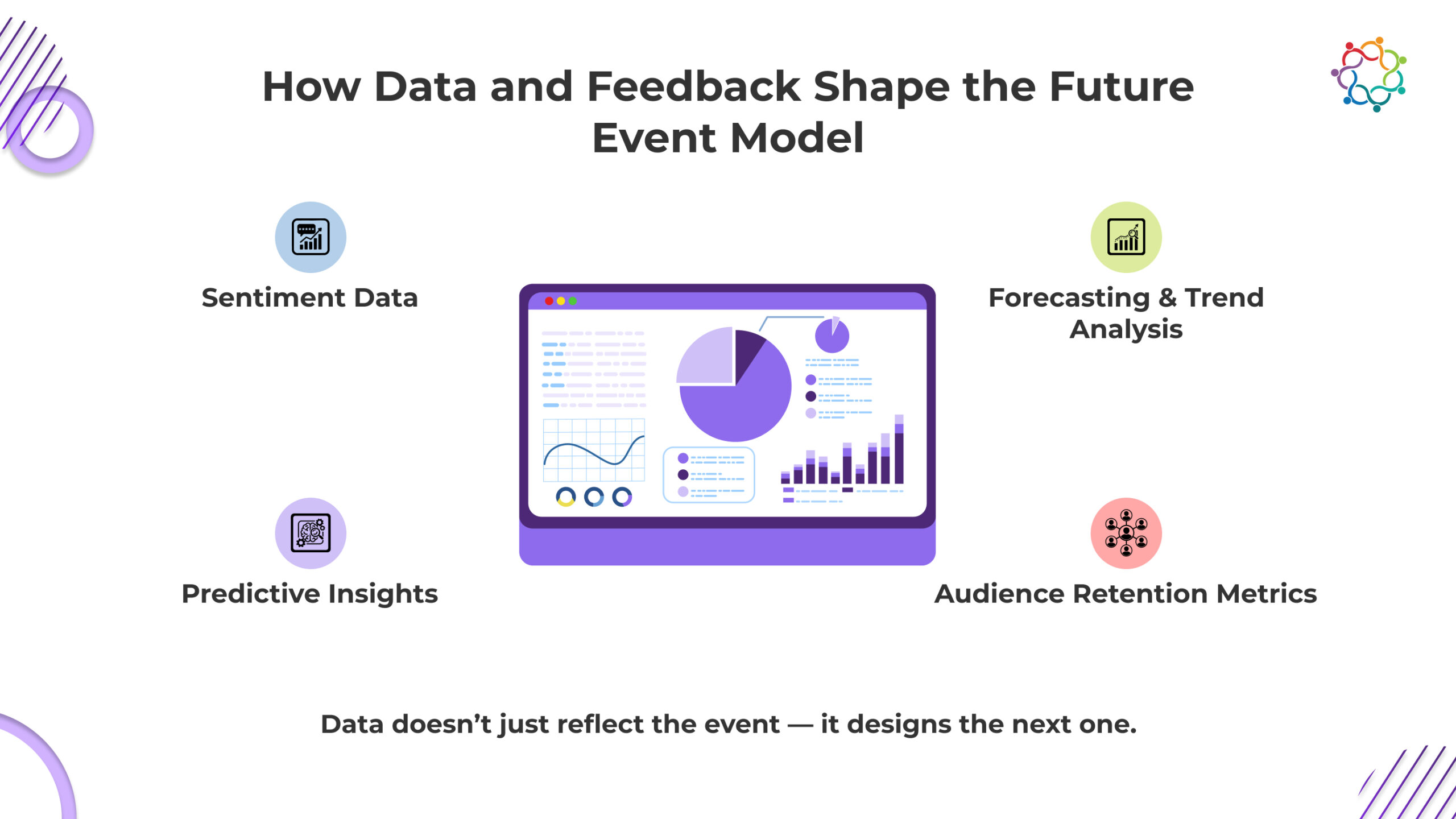

At its core, the event lifecycle runs on insight. Every interaction, comment, or click from a participant sharpens your understanding of what audiences value and want. It is where feedback meets analytics that the human and operational sides of event success come together.

Post-event feedback doesn’t just surface information for improving the next event; it often reveals how the product is perceived, which themes are emerging in the industry, and what customers need. Best-in-class marketers treat feedback as market insight that guides product roadmaps and campaign storytelling.

With AI-driven predictive models, teams can now forecast likely attendance, engagement peaks, and content interests. These predictions help planners allocate resources from the outset to extend reach and maximize the event’s potential, which improves efficiency and reduces budgeting and logistics risk.

Tools based on natural language processing (NLP) can detect emotional sentiment even in seemingly neutral, offhand responses. Knowing whether attendees felt inspired, indifferent, or simply overwhelmed tells us how to act at future events: it helps set the tone and informs storytelling and speaker selection.

Research conducted with Samaaro and its enterprise clients indicates that teams able to operationalize feedback within 30 days of an event achieve double the retention and re-engagement on their next campaign. Real-time event analytics dashboards and feedback loops help teams act on audience insight quickly, turning feedback into a living system.

The more frequently data is used, the stronger the event marketing flywheel becomes. Every campaign becomes an experiment that improves the next.

Managing the entire event lifecycle is complicated, especially at enterprise scale. Disparate tools, incomplete data, and siloed teams can keep organizations from reaching their full potential and make consistency a challenge. Organizations that work through these challenges see tangible gains in efficiency and impact.

Common dysfunctions

Best practices

Successful enterprises engage continuously and treat the event lifecycle as a system of learning and connection rather than a series of disconnected tasks.

The next five years will redefine what event marketing means. The shift from campaigns to communities, from static data to real-time intelligence, will turn event teams into core strategic functions within organizations.

Predictions

As event marketing matures into an always-on discipline, Samaaro continues to advocate for data-driven, feedback-led decision making, a principle at the heart of every modern event lifecycle.

The future of events isn’t in bigger audiences; it’s in smarter, longer-lasting relationships.

Few places have redesigned event marketing quite like the United Arab Emirates. Once known mainly for global expos and major summits, the UAE has become a hotbed of innovative, experience-focused marketing. Events in Dubai, Abu Dhabi, and Sharjah have moved beyond one-off brand activations to become part of broader engagement strategies, reflecting the UAE’s transformation into a global creative economy, which is one of the main reasons for its success in hosting global events, conferences, and forums.

Becoming a global creative economy didn’t happen overnight. It is the product of deliberate policy design, sustained investment in tourism and technology, and a cultural tradition of hospitality and connection. Through the UAE’s Vision 2031 Action Plan and the National Innovation Strategy, the leadership has consistently named experience industries as key contributors to GDP diversification. That, in turn, stimulated an ecosystem of creativity and commerce in which global businesses can try new ways to engage.

More importantly, the UAE used events to change narratives, not just to stage events in new ways. From corporate conferences at Expo City Dubai and experiential launches in Downtown Dubai to sustainability dialogues in Abu Dhabi, each experience was designed to resonate with younger, mobile, globally connected audiences. Investment in venues, digital infrastructure, design, and content accelerated this transformation.

This blog takes a closer look at what differentiates event marketing in the United Arab Emirates. It explores the forces driving change, the capabilities AI and data are bringing to experiences, and the experience-led brand strategies shaping the future of enterprise marketing in the region. As a platform connected with marketing teams across the MENA region, Samaaro will continue to document this evidence and translate insights from the fast-maturing UAE market into smarter, more connected event marketing solutions.

The UAE’s event marketing landscape has reached a point of global recognition, not just for its scale but for the sophistication of its approach. The country has built an ecosystem where policy, private enterprise, and culture align to support continuous innovation in live experiences.

The expansion is built on the bedrock of government backing. Organizations like Dubai Business Events and the Abu Dhabi Convention and Exhibition Bureau have systematically worked to position the UAE as the MICE (meetings, incentive travel, conferences, and exhibitions) capital of the Middle East. These organizations do much more than attract events; they are helping build a knowledge-based economy in which industry, education, and innovation intersect with culture and society.

The legacy of Expo 2020 Dubai, and the development of Expo City Dubai as a sustainable, smart event destination, demonstrates the UAE’s ambition to lead the global event economy.

Infrastructure matters, but national policy initiatives focused on sustainability, digital transformation, and trade diversification have also encouraged enterprise brands to invest in experience-led, regionally focused events. New regulations, streamlined business licensing, and reformed work and residency permits have improved conditions and increased interest among multinational brands in hosting large international conferences and exhibitions from Dubai and Abu Dhabi.

The UAE’s economic structure plays an important role in shaping event marketing trends. With thousands of multinational headquarters in Dubai and Abu Dhabi, the country has become a nerve center for B2B events across sectors including finance, technology, real estate, and logistics. Corporate events are no longer viewed as transactional; they are a means of expressing brand philosophy. From fintech expos to real estate showcases, businesses in the UAE invest heavily in immersive experiences that drive immediate engagement and long-term loyalty.

The UAE’s cultural foundation amplifies the reach of experiential marketing. Hospitality, community, and shared experiences are at the core of the region’s identity. Even in an increasingly digital world, in-person networking remains the cornerstone of business relationships. This cultural preference keeps physical events at the center, complemented by hybrid options, and helps the region retain its local edge.

The result is an event ecosystem that balances tradition and novelty, pairing the warmth of Arabic hospitality with the promise of modern digital interaction.

The UAE’s event marketing momentum is not accidental. It is driven by three interconnected forces: audience diversity, technological maturity, and supportive infrastructure.

Few markets are as demographically diverse as the UAE: more than 85 percent of the population are expatriates, creating an exceptionally global audience mix. That diversity drives high expectations for personalization, inclusivity, and convenience. Attendees are multilingual and tech-savvy, and they expect seamless digital experiences in every aspect of their lives.

Event marketers therefore need to tailor messaging, content, and design to multiple cultural and linguistic contexts. A one-size-fits-all approach no longer works. Successful UAE campaigns now center on segmentation, behavioral understanding, and cross-channel personalization that resonates with the audience’s global outlook.

In the UAE, technology is not a nice-to-have; it is the baseline. From AI-powered onsite registration to interactive mobile event apps, attendees in the region expect a seamless digital layer in every experience. Organizers now use real-time dashboards to track engagement and ROI live during the event. Marketers no longer focus simply on footfall; they track dwell time, participation, and session retention.

This reflects the UAE’s shift from valuing engagement for visibility to valuing engagement that can be measured. Brands no longer invest in events simply to be seen; they invest to achieve measurable impact.

The UAE’s long-term ambition to build a knowledge-driven, sustainable urban landscape also influences event marketing. Its smart city programs (e.g., Dubai 10X, Abu Dhabi’s Smart Hub) have driven innovative digital tools and data use across the event economy. Venues such as the Dubai World Trade Centre and the Abu Dhabi National Exhibition Centre incorporate advanced connectivity and data infrastructure so that even the largest events can be delivered to a high technical standard.

These structural and strategic advantages make the UAE a market where global best practices are not only matched in quality but often set.

Artificial intelligence now sits at the core of how UAE marketers approach audience engagement. From predictive modeling to multilingual communication, AI tools give brands important information and the ability to project future attendee behavior. Marketers can identify the most valuable audience segments, tailor outreach based on engagement data, and adjust campaigns before, during, or immediately after an event.

Companies are taking it a step further, using AI to make better scheduling decisions, personalize recommendations, and automate much of their post-event communication. These data-driven insights, along with other key performance indicators, ultimately improve attendee satisfaction and give brands measurable accountability.

In the UAE, the future of marketing is increasingly defined by what happens after the event. Marketers are looking beyond attendance-only metrics to measures of engagement depth: time spent at booths, the number of interactions with exhibitors, and content downloads. This reflects a wider industry move toward strategy grounded in information-rich analytics.

Data has become the foundation for post-event marketing decisions. Event managers use engagement reports not only to refine their messaging but also to retarget attendees with personalized offers and to identify potential partnership opportunities for future events.

The UAE’s fast-paced business environment demands operational agility. Timelines are shrinking, and marketing teams must manage multiple touchpoints in parallel. Automation has therefore become critical for both consistency and scalability.

Workflow automation for registration, communication, and post-event nurturing lets teams run campaigns that would otherwise require complex staffing, with substantially fewer resources. Automated workflows also deliver timely reminders, personalized confirmations, and relevant follow-ups while keeping the brand experience consistent.

Events held in the UAE have shifted from being campaign tools to experiential activations of brand identity. Brands today are less concerned with simply talking about their products and more focused on curating experiences that embody their values and purpose.

This creative and psychological shift has produced a new definition of success. Consumers and attendees don’t want merely to observe; they want to engage, contribute, and connect. Experience design is therefore central to contemporary brand storytelling.

Tech companies collaborate with innovators to give delegates the chance to experiment with cutting-edge technology. Luxury brands curate immersive environments where delegates can experience craftsmanship for themselves. Even business-to-business (B2B) summits use gamified learning and sensory storytelling to drive engagement and participation.

What distinguishes the UAE market is brands’ willingness to measure emotional resonance alongside performance metrics. An event’s success is no longer measured only by the connection attendees feel during the event, but also by the post-event retention it creates, which a one-and-done event rarely delivers.

Data platforms act as the bridge, giving marketers the visibility to connect experience to outcome and the evidence that creative spending delivers a return on investment (ROI).

While the UAE’s event landscape is rich with opportunity, it is not without complexity.

Challenges

Opportunities

The balance of these challenges and opportunities reflects a market that rewards sophistication. Enterprise marketers that integrate strategy, creativity, and data fluency are the ones most likely to thrive.

The UAE’s event ecosystem is poised for another phase of transformation. Several trends will define its trajectory over the next half-decade.

The UAE is not simply following global event marketing trends; it is defining them. Its unique combination of ambition, infrastructure, and inclusivity has made it a model for how modern event marketing should work. Here, art and analytics, experience and data, come together to create events that are emotionally impactful and operationally smart.

From government policy down to corporate creativity and purpose, each layer of the UAE’s ecosystem is purposely supporting one observable end goal: to create engagement that is measurable, sustainable, and deeply human.

As event marketing continues to evolve, lessons from the UAE will increasingly challenge global organizations to rethink how they balance creativity and technology to drive engagement.

Samaaro will continue to document, learn from, and contribute to this journey, particularly alongside organizations that want to build smarter, more connected, and more impactful event experiences inspired by the UAE.

AI is not just on the horizon for event marketing; it’s already built into it.

Over the last few years, what started as a patchwork of automations has become the invisible infrastructure behind how brands plan, market, and measure their events. From predicting attendee behaviour to building personalized campaigns, AI is now a behind-the-scenes partner to event marketers at every stage of the event marketing cycle.

Enterprise marketers have moved on from asking whether AI is right for them to asking how deeply to adopt it. AI is not just optimizing operations; it is changing how event marketers read intent, design experiences, and link engagement to business outcomes. Yet despite the hype, most marketing teams still struggle to picture what “AI in events” truly looks like beyond basic automation.

This blog explores that gap, evaluating how AI is changing the full event marketing lifecycle (before, during, and after the event) and what the next evolution of this technology means for enterprise growth.

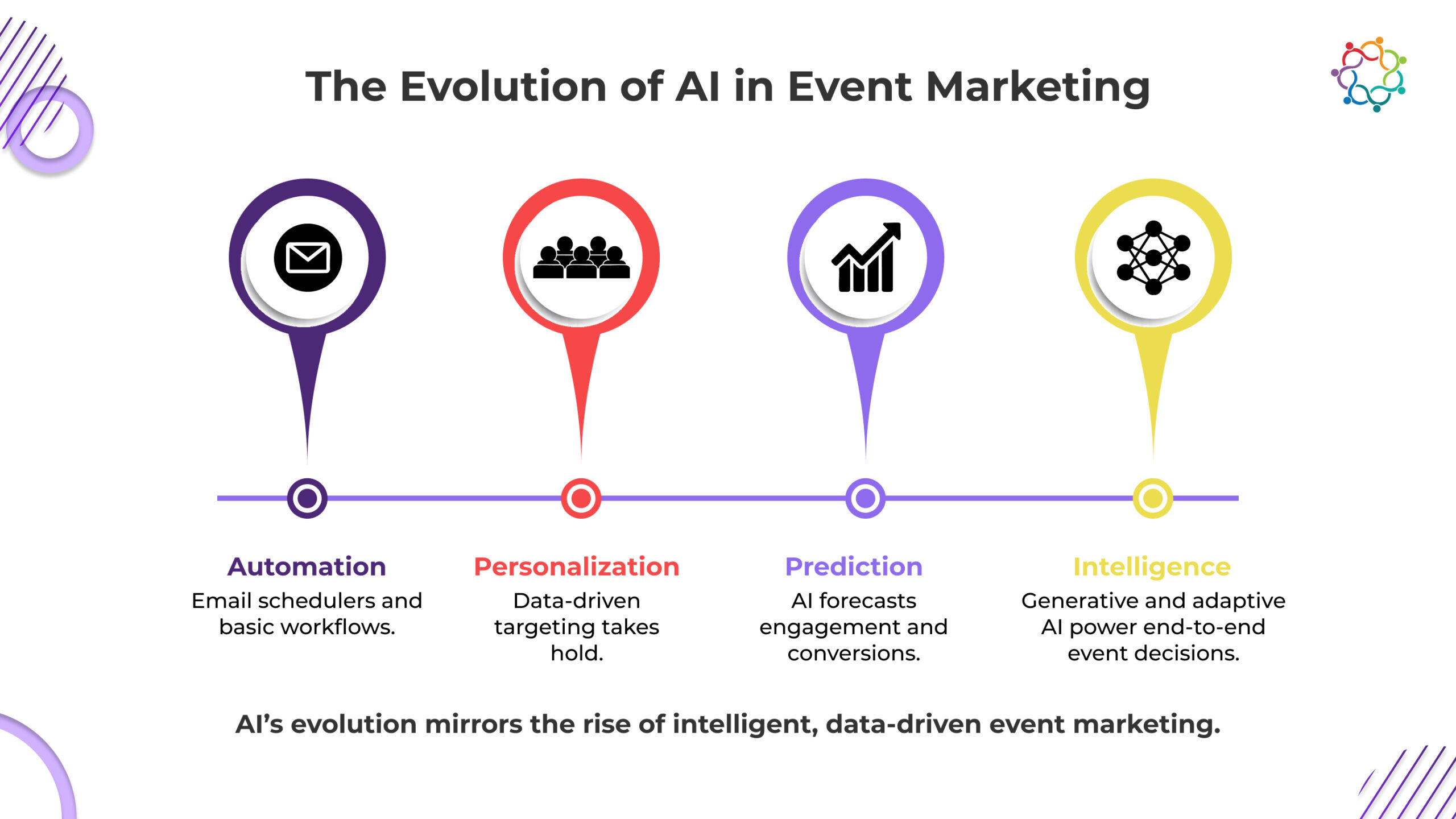

When artificial intelligence first entered event marketing, it was regarded mainly as a convenience tool. Early use cases automated repetitive, time-consuming tasks: scoring leads in a spreadsheet, segmenting contact lists, and scheduling follow-up emails. Efficiency was the motivator.

As data collection matured, marketers began to recognize a tangible opportunity. Every digital interaction (website visits, session registrations, feedback form submissions) offered insight into behaviour. The second wave of AI made it possible to read these signals algorithmically and at scale. Marketers could now predict which profiles were most likely to convert, engage, or disengage, rather than simply categorize attendees.

That was a major tipping point: AI moved from back-end tool to strategic compass. Platforms began using predictive analytics, natural language processing, and recommendation engines to help teams understand the why behind an audience’s behaviour.

Industry reports projected that, by 2025, 60% of the world’s enterprise organizations would use AI to support decisions at various levels of event marketing execution, from campaign planning to content creation.

AI has gone from automation to augmentation. AI isn’t just doing the work faster, it’s learning from every action – and translating those learnings into measurable outcomes for the business.

But beyond the buzzwords and technology claims, only one question really matters: what is truly working today?

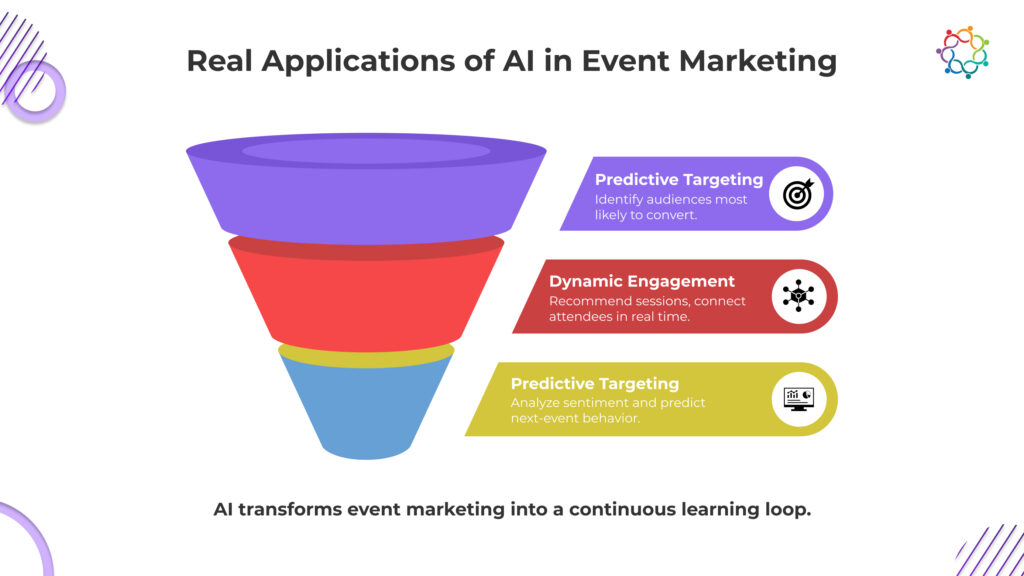

AI’s real power reveals itself when mapped across the three stages of the event marketing cycle: pre-event, during the event, and post-event. These aren’t isolated phases anymore, they form a continuous loop of learning, prediction, and optimization.

Every event begins with the same question: who do we target, and how?

Artificial intelligence is changing how marketers can respond to that question.

Instead of relying only on historical attendance or manually segmented lists, AI systems can generate attendee profiles that are both dynamic and predictive. These profiles combine demographics with intent signals to identify which audiences are most likely to (1) register, (2) engage, and (3) convert.

Predictive targeting has become one of the most valuable tools in pre-event marketing. Using behavioural data and historical outcomes, AI can flag the top twenty percent of prospects most likely to yield conversions. When marketing budgets run into millions, every campaign cycle must be well targeted; spreading spend thinly across segments with little or no chance of converting is increasingly risky.
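Once a model has scored each prospect, picking the top 20% is a ranking step. The sketch below assumes the conversion probabilities already exist (the propensity model itself is out of scope), and the function name, prospect IDs, and scores are invented for illustration.

```python
import math


def top_fraction(scores: dict[str, float], fraction: float = 0.2) -> list[str]:
    """Return prospect IDs in the top `fraction` by predicted conversion probability.

    `scores` is assumed to come from an upstream propensity model (not shown here).
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    k = math.ceil(len(scores) * fraction)  # round up so small lists keep at least one prospect
    ranked = sorted(scores, key=scores.get, reverse=True)  # highest probability first
    return ranked[:k]


# Hypothetical scores for ten prospects
scores = {
    "p01": 0.91, "p02": 0.15, "p03": 0.67, "p04": 0.05, "p05": 0.42,
    "p06": 0.88, "p07": 0.30, "p08": 0.12, "p09": 0.55, "p10": 0.21,
}
print(top_fraction(scores))  # ['p01', 'p06']
```

The cutoff is a business choice rather than a model output: a team with more budget might target the top 30% instead, trading lower average propensity for greater reach.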

This intelligence also underpins personalization. Generative AI models can produce and adapt campaign content, from email templates to ad copy. As a result, personalization is not only about better open and click-through rates but about relevance at scale.

Another key application is smarter budget allocation. AI can analyse past channel performance (for example, ad-to-sale conversions) to predict where each rupee of marketing spend will deliver the best ROI, directing resources toward the most promising prospects. Smart teams can use these predictions to decide whether to triple down on LinkedIn ads or pull spend from paid search based on probability and historical conversion rates, not gut instinct from five years ago.
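One simple way to turn historical channel performance into an allocation is to split the budget in proportion to each channel’s past conversions per unit of spend. This is a deliberately naive heuristic, not how any particular AI product allocates budget. The function name, channel names, and figures are hypothetical, and amounts are in whatever currency the history uses (rupees here).

```python
def allocate_budget(total: float, history: dict[str, tuple[float, int]]) -> dict[str, float]:
    """Split `total` across channels in proportion to past conversions per unit of spend.

    `history` maps channel name -> (past_spend, past_conversions).
    """
    efficiency = {}
    for channel, (spend, conversions) in history.items():
        if spend <= 0:
            raise ValueError(f"{channel}: past spend must be positive")
        efficiency[channel] = conversions / spend  # conversions per rupee spent
    denom = sum(efficiency.values())
    if denom == 0:
        # No channel has converted yet: fall back to an even split.
        return {channel: total / len(history) for channel in history}
    return {channel: total * eff / denom for channel, eff in efficiency.items()}


# Hypothetical history: (spend in rupees, conversions)
history = {
    "linkedin_ads": (200_000, 120),
    "paid_search": (300_000, 60),
    "email": (50_000, 40),
}
plan = allocate_budget(1_000_000, history)
print({channel: round(amount) for channel, amount in plan.items()})
# {'linkedin_ads': 375000, 'paid_search': 125000, 'email': 500000}
```

Proportional splitting uses average efficiency and ignores diminishing returns. A production model would estimate the marginal return of the next rupee in each channel before shifting spend.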

For example, a global technology brand used predictive analytics on past registrations and behaviour to identify its highest-propensity attendees. Instead of targeting 50,000 prospects on gut instinct, it focused on the top 10,000 and produced 1.6x as many qualified registrations with no increase in marketing budget.

We’ve seen similar examples across the Samaaro ecosystem. In one case, an enterprise organizer used predictive analytics, enabled through Samaaro’s campaign management application and component tracking tools, to improve its in-person registration-to-attendance ratio to 30%. The result clearly illustrates how effective precise audience targeting can be.

Once attendees check in, AI’s role shifts from predicting what might happen to orchestrating activity in real time.

Real-time engagement is one of the hallmark capabilities of AI-driven event experiences. Algorithms can track behaviour across touchpoints (app logins, session check-ins, networking interactions, and so on) and respond in real time.

Imagine an attendee browsing a session topic track in an event app. The AI observes that behaviour and, combining its base algorithm with the behavioural data collected, recommends relevant sessions or exhibitors in real time. The result is a living, personalized journey that shifts as the attendee’s interests shift.

Dynamic content delivery works on the same principle. Personalized agendas, push reminders, and on-the-go recommendations help each attendee navigate the event efficiently. AI can turn event apps from passive directories into active engagement platforms.

Smart networking is another high-value application. Machine learning algorithms analyse attendee profiles, such as job titles, alongside the sessions each attendee engages with, and recommend high-value connections with fellow attendees. Instead of chance meetings with random attendees, people leave with connections to peers, partners, or leads that match the purpose they came for.
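One simple way to sketch profile-based matchmaking is to score each pair of attendees by the Jaccard overlap of the sessions they engaged with, plus a small bonus when the other person holds a role the attendee wants to meet. This is a hypothetical stand-in for the machine learning algorithms described above; the `Attendee` fields, `ROLE_BONUS` weight, and function names are illustrative assumptions, not Samaaro’s implementation.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Attendee:
    attendee_id: str
    job_title: str
    sessions: set[str] = field(default_factory=set)  # sessions engaged with


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of items two sets have in common: |A & B| / |A | B|."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


ROLE_BONUS = 0.25  # hypothetical weight for roles the attendee wants to meet


def recommend_connections(
    me: Attendee,
    others: list[Attendee],
    k: int = 3,
    target_titles: Optional[set[str]] = None,
) -> list[tuple[str, float]]:
    """Return up to k (attendee_id, score) pairs, best match first."""
    target_titles = target_titles or set()
    scored = []
    for other in others:
        if other.attendee_id == me.attendee_id:
            continue  # never recommend attendees to themselves
        score = jaccard(me.sessions, other.sessions)
        if other.job_title in target_titles:
            score += ROLE_BONUS
        if score > 0:
            scored.append((other.attendee_id, round(score, 3)))
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```

Shared-session overlap captures common interests, while the role bonus reflects the attendee’s stated purpose (for instance, a marketer looking to meet sales leaders); attendees with no overlap and no matching role are left out rather than recommended at random.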

At one enterprise summit with multiple networking sessions, AI matchmaking alone increased meeting success rates by 45%, simply by aligning attendees on profile information and stated priorities.

Samaaro’s ecosystem supports this efficiently. The Attendee App’s recommendation algorithms and smart networking features let event marketing teams capture engagement data in real time, turning spontaneous attendee behaviour, both on-site and across the event journey, into structured marketing intelligence.

Real-time sentiment analysis adds another layer of understanding. Drawing on daily polls, feedback forms, and social media posts captured during the event, AI can sense how collective sentiment is shifting among attendees. That gives marketers the flexibility to adjust on-site: extending a popular session, changing the tone of an announcement, or introducing in-event calls to action based on engagement and participation signals.

Historically, the post-event phase was where data went to die. With the rise of AI, it can be turned into a feedback engine.

With natural language processing, we can now analyse feedback forms and survey responses at scale. Sentiment detection helps teams understand tone and context and learn what resonated and what did not, going beyond numerical ratings alone.
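As a deliberately simple illustration of sentiment detection at scale, the sketch below labels each free-text response by counting words from a tiny positive and negative word list, then tallies the labels per session. The word lists and helper names are hypothetical, and this lexicon approach is a crude stand-in: unlike the NLP models described above, it ignores context, negation, and sarcasm.

```python
import re
from collections import Counter

# Tiny illustrative lexicon; a real system would use a trained model
# or a published lexicon rather than a hand-picked word list.
POSITIVE = {"great", "useful", "insightful", "engaging", "excellent", "loved"}
NEGATIVE = {"boring", "crowded", "late", "confusing", "poor", "long"}


def sentiment(text: str) -> int:
    """Return +1, -1 or 0 from the balance of positive and negative words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    return (score > 0) - (score < 0)


def summarize(responses: dict[str, list[str]]) -> dict[str, Counter]:
    """Tally positive, negative and neutral responses for each session."""
    labels = {1: "positive", -1: "negative", 0: "neutral"}
    return {
        session: Counter(labels[sentiment(text)] for text in texts)
        for session, texts in responses.items()
    }
```

Even this rough tally turns hundreds of comments into a per-session count that can sit next to numerical ratings; swapping `sentiment` for a proper NLP model would leave `summarize` unchanged.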

Predictive models can now be used to forecast future attendance, lead quality, or churn risk by looking at historical engagement. These insights allow marketers to design follow-up campaigns that feel personalized, as opposed to generic or cursory.

AI has also closed a long-standing gap by connecting post-event insights back into the enterprise’s customer relationship management (CRM) system. Instead of a static report on an event’s success, marketers now receive dynamic intelligence on which sessions influenced pipeline growth, which attendee segments delivered the best ROI, and which content themes drove the most conversions.

AI dashboards make these insights easy to summarize. Reporting that once took teams days to assemble now arrives as visual narratives of performance, complete with recommended next steps.

AI doesn’t necessarily change how we measure success, but it does strengthen the feedback loop. What the system learns about attendees and engagement at one summit feeds recommendations into the planning phase of the next campaign.

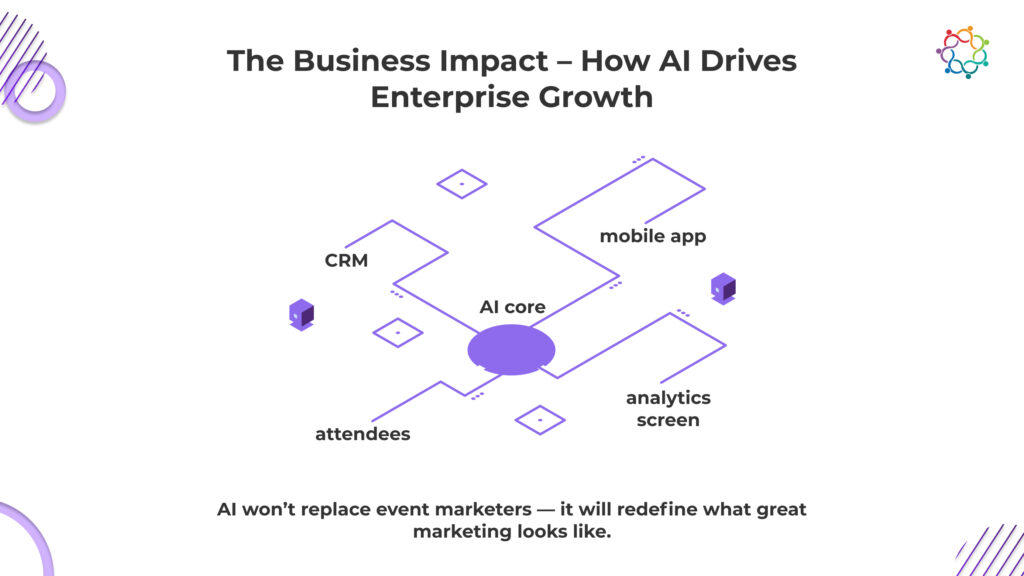

The integration of artificial intelligence into event marketing is not an isolated development; it is one of a growing range of technologies that make data more accessible and insight more immediate.

Predictive analytics platforms have become the nervous system of event intelligence. They forecast campaign performance before launch, so teams can deploy resources and focus effort on the basis of quantitative evidence when millions of rupees of investment are on the line. This predictive layer removes some of the uncertainty and gives enterprise marketing teams measurable impact.

Natural Language Processing (NLP) has emerged as the interface between AI and humans. Chatbots and voice assistants can handle thousands of attendee conversations at once, freeing event teams to focus on strategic, mission-driven work. AI moderators can summarize live Q&As, categorize audience questions, and provide contextual answers in real time.

Computer vision is making its way into large-scale events. Cameras can read crowd density, facial expressions, and movement patterns across locations. These insights can inform organizers’ decisions about layout, security, and engagement. Computer vision must be used responsibly, but the upside for safety and personalized engagement at large-scale events is compelling.

Generative AI is changing the creative workflow. Event teams can use it to produce personalized content, create marketing assets in every format, and write micro-copy in different tones for every audience segment. It speeds up iteration without replacing human creativity: instead of manually creating thousands of emails, social posts, or campaign assets, event marketers can focus on refining their ideas.

AI and IoT integration is the next frontier. Smart badges and heat-mapping sensors can track how attendees move through physical space, revealing which booths, sessions, and areas draw the most engagement. Feeding these insights into predictive models turns physical behaviour into digital outcomes.

Combined, these technologies give event marketers what they have long sought: a more accurate understanding of audience intent. Technology does not replace the human role in shaping that intent; it makes the work more accurate.

At its core, the use of AI in event marketing is not a technology story – it’s a business story.

Predictive targeting reduces marketing waste. Instead of spreading spend across broad campaigns, AI lets teams invest in leads with a high probability of conversion, limiting budget waste.

Personalization drives engagement, and engagement drives ROI. When attendees feel known and appreciated, they engage more with the event content. That engagement then converts, and those attendees stay within the brand ecosystem.

AI-driven insights speed up decision-making. Dashboards and real-time data bursts give marketing decision-makers the confidence to pivot strategy almost in real time, rather than waiting for a post-event report.

And perhaps most importantly, AI breaks down silos. Event data once siloed between marketing, sales, and operations can now be viewed through a shared framework in which intelligence flows between teams. Everyone works from the same information, which speeds up feedback loops and aligns teams around results rather than opinion.

One enterprise that embedded AI in its event marketing strategy saw a 37% improvement in lead quality and a 28% improvement in post-event conversions. These were not abstract gains: they translated into tangible growth in attributed revenue and faster pipeline movement.

AI does not simply optimize events. In this case, it turned them from an expense on the marketing budget into a profit center.

Any evolving technology brings an element of shared responsibility, and AI in event marketing is no exception.

Data privacy remains the primary concern. When systems analyse personal and behavioural data, marketers must be transparent about how attendees’ data will be used. Attendee consent is a signal of trust, not just compliance.

Another risk is over-reliance. When every decision rests on algorithmic output, teams can lose creative judgement. The best event marketing draws on human intuition, sensitivity, tone, and emotional nuance, qualities AI cannot yet replicate.

Regulatory frameworks such as GDPR and the EU AI Act are changing how data is collected, processed, and stored. Enterprises need AI strategies that are compliant by design, rather than retrofitting compliance after the fact.

The long-term trajectory of AI in event marketing depends on how responsibly the technology is managed today.

Looking ahead, AI in event marketing will not only improve how campaigns run but also help predict how they should be designed.

Predictive planning will enable marketers to model entire event concepts prior to launch, testing the viability of themes, formats, or audiences. AI will enable marketers to simulate potential outcomes and optimize planning strategy before the first campaign launches.

Conversational AI will mature from reactive chatbots into proactive co-pilots. These assistants will handle registration, surface relevant audiences, guide attendees through feedback, and even help marketers interpret real-time engagement data during the event.

But perhaps the most significant difference will be continuous learning. AI systems will refine event strategies on the fly, aggregating insights from many past events into recommendations for the next one. Campaigns will no longer live in isolation; marketers will work within an intelligence loop that spans geographies, audiences, and time, and keeps learning.

These ideas are already part of Samaaro’s roadmap.

In 2025, we focused on deeper CRM intelligence, cross-channel insights, and predictive recommendations, aggregating all of this information across the entire event portfolio to give marketers a bird’s-eye view of engagement.

The next frontier is not automation; it is augmentation. AI will not replace the event marketer; it will help define what great marketing looks like.

(If you’re thinking about how these ideas translate into real-world events, you can explore how teams use Samaaro to plan and run data-driven events.)

Samaaro is an AI-powered event marketing platform that enables marketing teams to turn events into a measurable growth channel by planning, promoting, executing, and measuring their business impact.


© 2026 — Samaaro. All Rights Reserved.