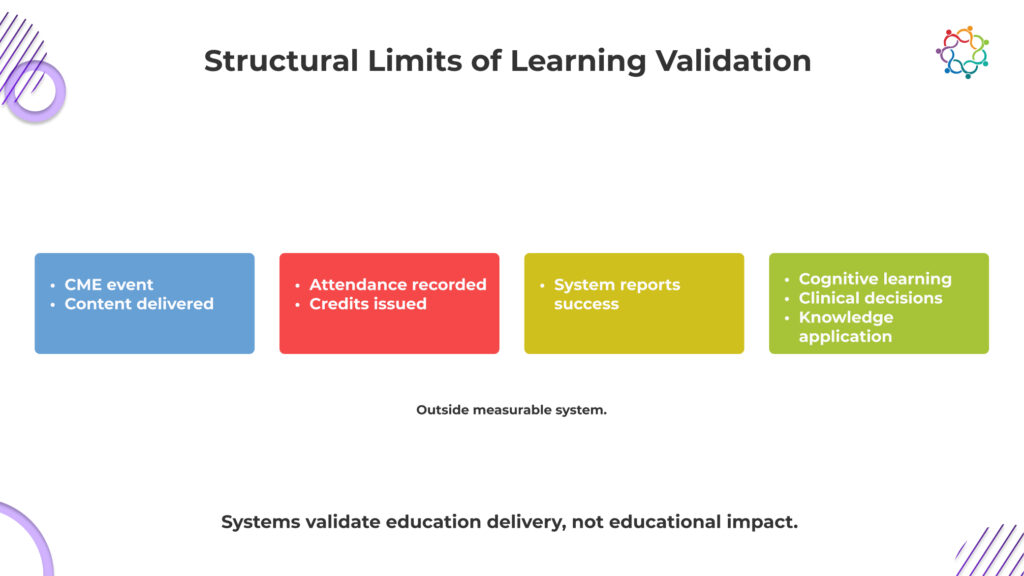

CME proves presence, not progress.

Presence is the metric most programs optimize for, even if they do not say so out loud.

Across CME events, participation looks strong on paper. Sessions fill up. Physicians log attendance. Credits are issued and documented. From a reporting standpoint, everything signals success. The system confirms that education was delivered and received.

But that confirmation is superficial.

Attendance only proves that a physician was present when information was shared. It does not confirm whether they engaged with the content, understood its clinical relevance, or changed how they interpret medical decisions. The assumption that presence equals learning is where the problem begins.

This is not a gap in effort. It is a gap in validation.

The system captures exposure because it is measurable. It cannot capture cognitive change because it is not.

This blog examines the Learning Validation Gap in CME and why proving attendance is far easier than proving meaningful learning.

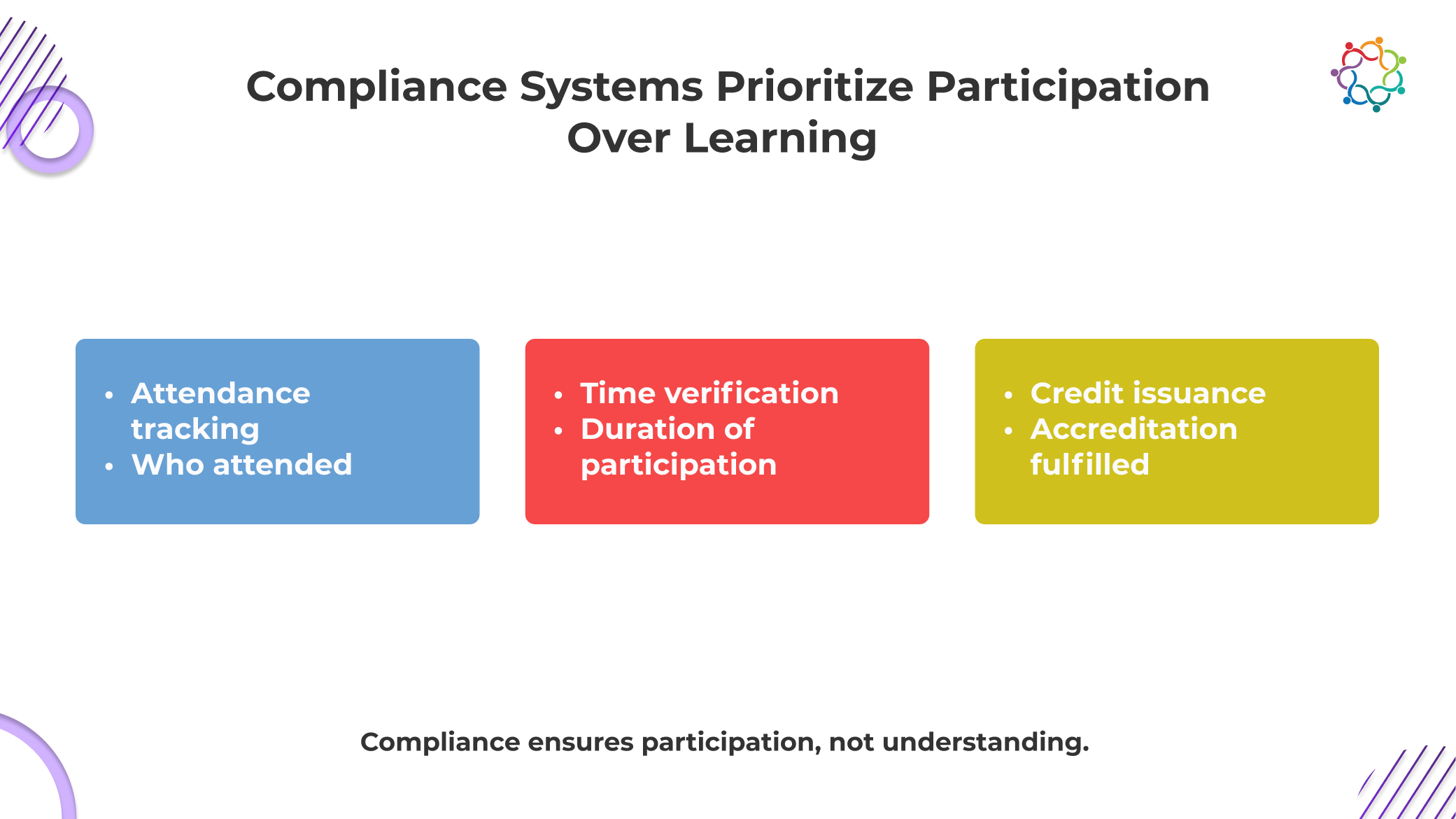

CME systems are not failing to measure learning. They were never built to do it.

At their core, these frameworks exist to prove compliance. They ensure that educational standards are met, content is accredited, and physician participation is properly documented. Everything revolves around what can be verified in an audit.

And learning cannot be audited.

So the system defaults to what it can prove: attendance logs, session duration, and credit issuance. These are clean, defensible, and easy to report. They satisfy regulatory requirements and create the appearance of educational rigor.

But they do not confirm understanding.

This is the uncomfortable reality. The system is designed to validate that education was delivered, not that it was absorbed. In Continuing Medical Education events, participation becomes the endpoint because it is measurable.

Learning remains outside the system, unverified and largely assumed.

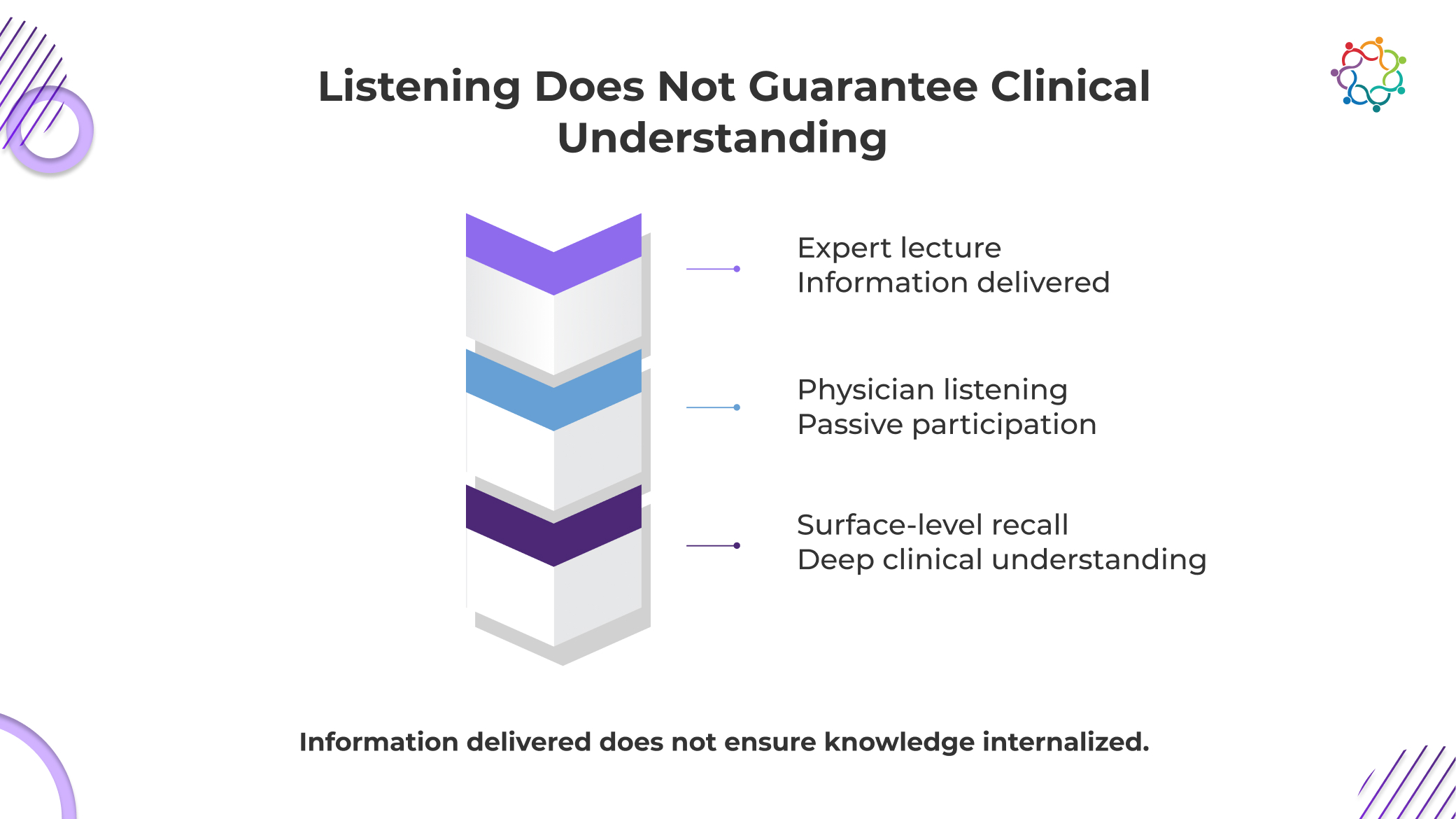

Most medical education events are built on a simple assumption: if physicians hear the information, they will understand it. That assumption is dangerously weak.

Exposure alone is not enough to acquire clinical knowledge. Acquiring it calls for context, interpretation, and the capacity to apply it under pressure. Even if a doctor attends the entire session and follows every presentation, they may still leave with a fragmented or inaccurate understanding of the subject. The system cannot detect that gap.

This is not just an academic concern. It is a clinical risk.

If a physician misinterprets a guideline or fails to internalize a treatment protocol, their decisions do not improve. They may rely on outdated approaches or apply new information incorrectly. The consequence is not poor learning metrics. The consequence is inconsistent patient care.

When learning is assumed instead of validated, error becomes invisible.

And yet, most CME conferences continue to treat listening as sufficient proof of understanding, without ever verifying what actually changed in clinical thinking.

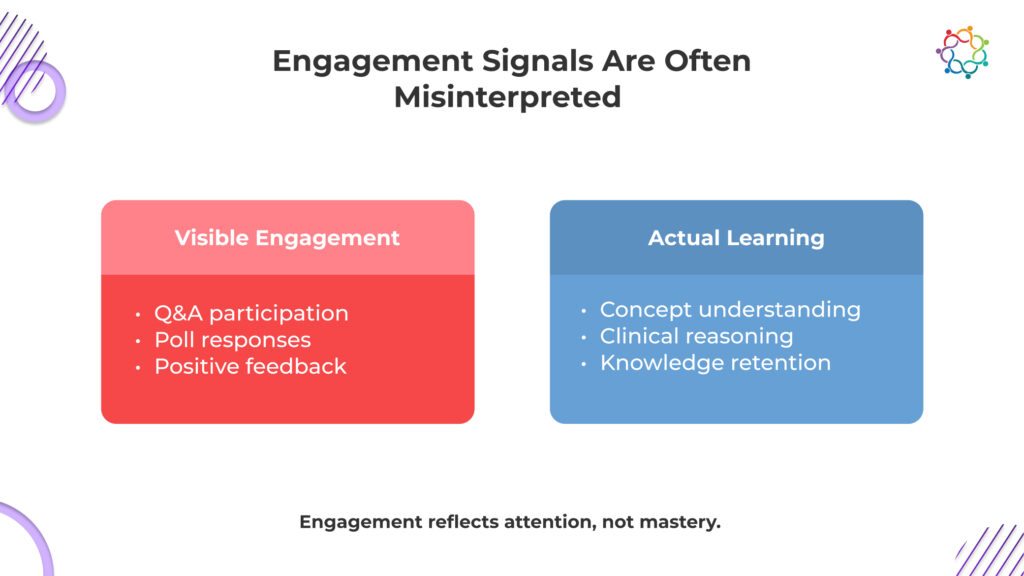

Engagement metrics do not validate learning. They create false confidence that learning has happened.

In many CME events, interaction is treated as a proxy for effectiveness. Questions are asked, polls are answered, and feedback scores are collected. These signals are then used to demonstrate that physicians were actively involved in the session.

But involvement is not the same as understanding.

A physician can participate without processing the content deeply. They can respond to a poll instinctively, ask a question out of curiosity, or rate a session highly because the speaker was engaging. None of these actions confirms that knowledge was absorbed or retained.

What these metrics actually measure is attention, not comprehension.

The danger is not that engagement is irrelevant. The danger is that it is overvalued. It creates a narrative of success that feels credible but lacks substance. Leadership sees interaction and assumes impact, without questioning whether clinical understanding has improved.

This is how false confidence is built into CME learning outcomes.

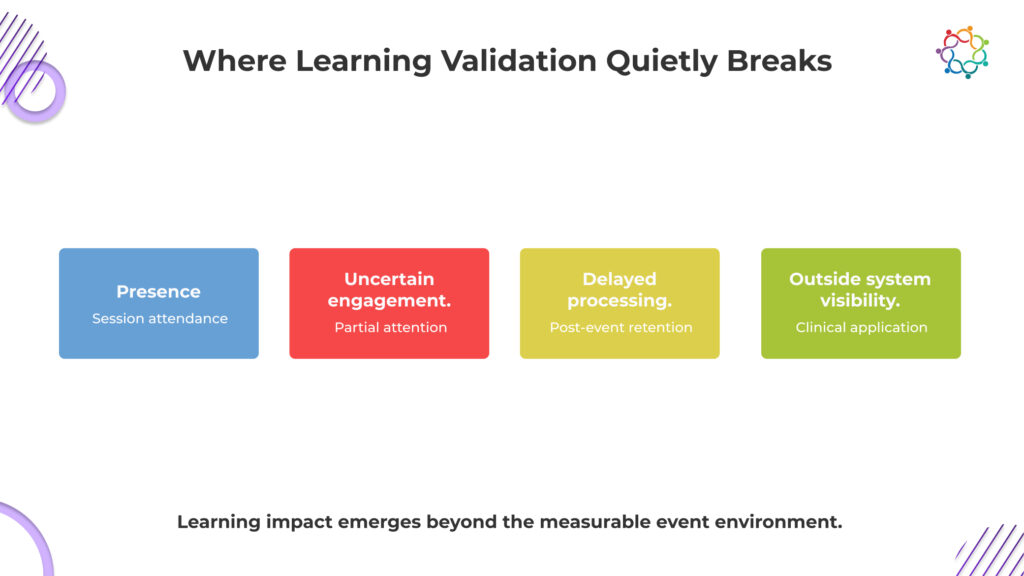

The breakdown does not happen in one place. It happens across multiple points, each weakening visibility into real learning outcomes.

Attendance records physical or virtual presence, not attention. Physicians may be distracted, multitasking, or attending only for credits. The system assumes focus where none may exist, turning passive presence into perceived participation without confirming any real cognitive involvement.

Understanding rarely occurs in real time. Physicians often process and connect information later, when faced with relevant cases. By then, the event has ended, and measurement is complete, leaving actual knowledge retention disconnected from the moment it was supposed to be captured.

Learning only proves its value when it influences real clinical decisions. These decisions happen in patient care settings, far removed from the event. This distance makes it difficult to trace whether a specific session actually shaped how a physician chooses treatments.

The system measures learning at the exact moment it is least visible. Cognitive change happens later, in fragments, across contexts. What is captured is activity during the session, while what truly matters unfolds outside measurable boundaries, beyond the reach of event data.

Systems capture what happens during the session. Learning often happens outside it. This disconnect ensures that true educational impact remains largely unobserved.

Learning does not happen on the timeline of an event. Measurement does.

Physicians rarely absorb and apply new knowledge instantly. They reflect on it, compare it with existing experience, and only integrate it when a relevant clinical situation arises. That moment may come days or weeks later.

By then, the event is no longer part of the equation.

This is where validation breaks down. Even if learning occurs, it cannot be confidently linked back to the session. Attribution is lost. The system cannot prove whether a clinical decision was influenced by the event, prior knowledge, or another source.

So impact becomes untraceable.

In CME events, outcomes are expected immediately, but real learning appears later, outside measurable boundaries. What gets captured is participation. What actually matters emerges too late to be connected.

That is why learning remains unproven, even when it exists.

This is the uncomfortable conclusion. CME systems reward exposure because it is measurable, not because it is meaningful.

Attendance, credit completion, and participation metrics dominate reporting frameworks. These indicators show that physicians had access to information. They confirm delivery, not impact.

Exposure is easy to quantify. Knowledge is not.

A physician can attend multiple sessions, earn credits, and still show minimal change in clinical understanding. The system records success, even when learning is uncertain.

This creates a structural bias.

Programs are evaluated based on what can be measured. As a result, they prioritize metrics that are easy to capture rather than those that reflect true educational value.

None of these metrics confirms learning.

Yet they are treated as indicators of success.

This is not a measurement gap. It is a measurement mismatch.

The system validates educational delivery. It does not validate educational impact.

And until that distinction is addressed, the Learning Validation Gap will continue to exist.

The issue persists because it is inherently difficult to solve.

Learning is a cognitive process. It cannot be directly observed. Systems can track behavior, but they cannot access how information is interpreted or understood.

This makes learning fundamentally hard to measure.

Organizations default to proxies because direct validation is not feasible. Over time, these proxies become accepted as reality.

The real value of education lies in its impact on patient care. But clinical decisions happen far from the event environment. They are influenced by multiple factors, making it difficult to isolate the effect of a single session.

This creates attribution challenges.

Organizations continue operating within this limitation because there is no easy alternative. They rely on participation metrics, knowing they are incomplete.

That is the blind spot.

Not because the problem is ignored, but because it is difficult to address.

And so, physician learning events continue to be evaluated based on what is visible, not what is meaningful.

CME programs are essential. They create structured opportunities for physicians to stay informed, exchange knowledge, and engage with evolving clinical practices.

But participation metrics cannot define their success.

Attendance proves presence. Engagement signals show interaction. Neither confirms that learning occurred.

And without learning, there is no guarantee of improved clinical decision-making.

That is the real risk.

Unvalidated learning leads to uncertain outcomes. In healthcare, uncertainty in knowledge can translate into inconsistency in treatment. That is not just a measurement issue. It is a clinical one.

The Learning Validation Gap is not about better reporting. It is about recognizing that current systems cannot prove what truly matters.

Here is the line that should stay with you:

CME programs can prove that doctors attended. They cannot prove anything changed in how those doctors think or treat patients.

Until that changes, effectiveness will remain assumed, not validated.

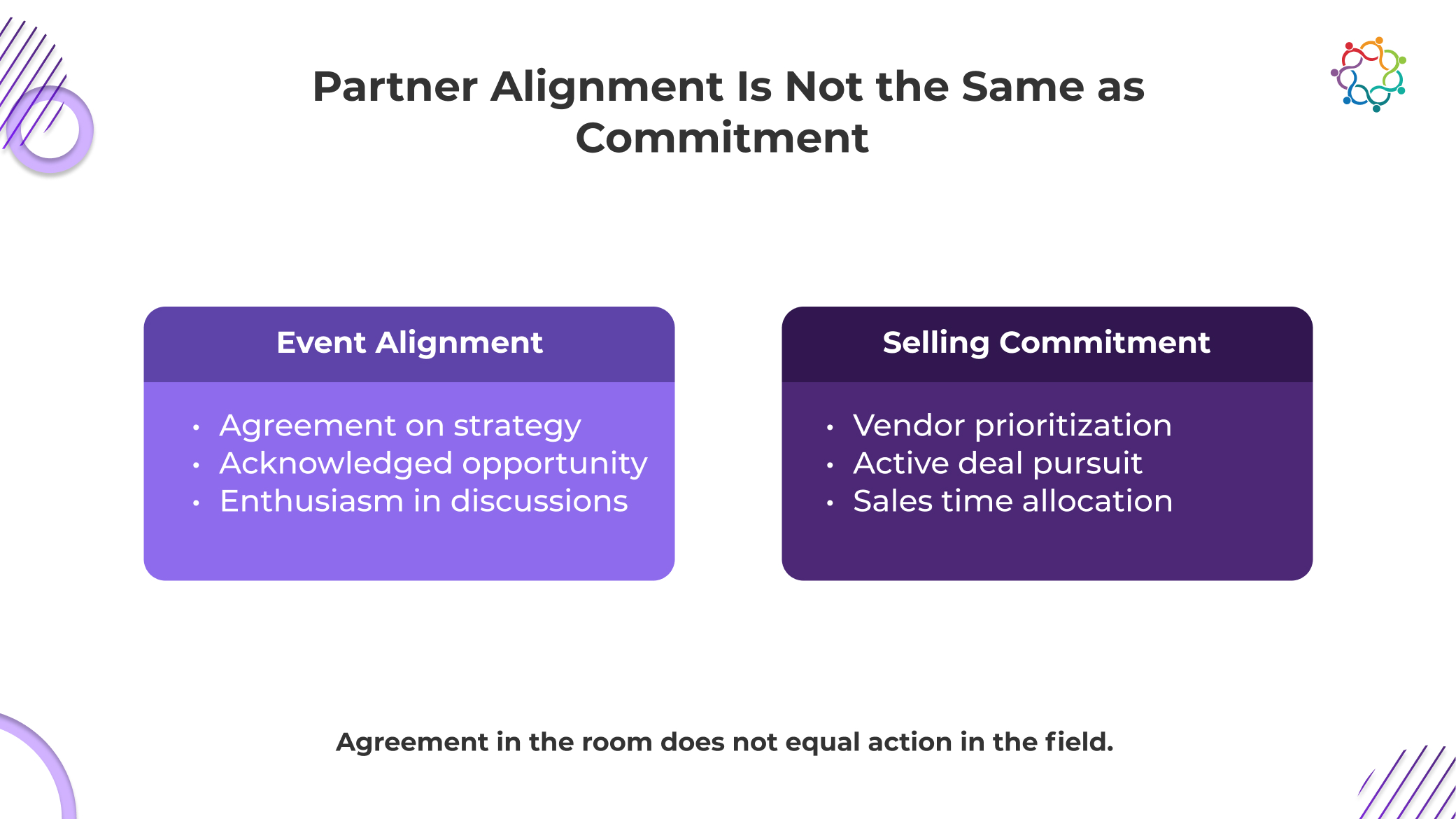

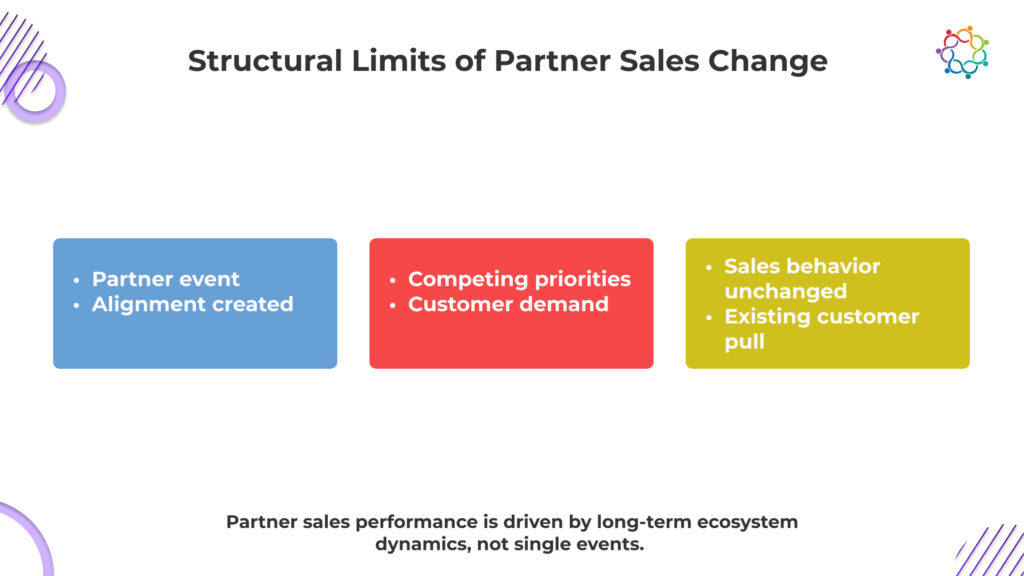

The most dangerous outcome of partner events is not failure; it is mistaking engagement for readiness. Rooms are full. Conversations are active. Partners engage, ask questions, and lean into discussions. The signals are all positive, and they arrive immediately. Leadership walks away with a clear impression that partners are aligned and ready to move.

But this is where the misread begins. What you are seeing is not readiness. It is a response to a controlled environment.

Inside the event, attention is focused, messaging is uninterrupted, and partners are temporarily operating within your narrative. In that moment, alignment feels real because there are no competing pressures.

However, the moment partners return to their actual sales environment, those pressures return. Competing vendors, active deals, and revenue targets take over. And that is where the earlier signals start to collapse.

Because alignment inside an event is not the same as priority inside a pipeline.

This is the Partner Priority Gap, the disconnect between partner alignment during the event and actual prioritization when revenue decisions are made.

This blog examines why that gap exists and why it consistently prevents partner sales performance from changing.

Inside any well-run partner marketing event, the narrative is compelling. Market opportunity is clearly framed. Differentiation is positioned. Growth potential is highlighted.

Partners agree because the story makes sense. But agreement is not the trigger for action.

Partners do not reorganize their selling behavior because they understand your strategy. They do it when your offering changes their revenue equation.

That is the edge most organizations avoid confronting.

A partner can fully agree with your positioning and still not sell your product. Because agreement does not influence how they allocate time, resources, or attention across deals.

Once the event ends, partners return to a far less controlled environment. Now they are no longer responding to messaging. They are making commercial decisions.

None of the factors behind those commercial decisions is resolved by alignment.

So, while the event creates a temporary moment of clarity, it does not alter the underlying economics of partner behavior.

And without changing economics, behavior does not move.

This is why partner events consistently overperform on engagement and underperform on revenue impact. They influence what partners think, not what partners do.

Partners do not operate on strategic alignment. They operate on deal movement. Inside any partner organization, multiple vendors compete for the same limited selling time, and that time is allocated based on what converts fastest with the least resistance.

This is the part most organizations avoid confronting directly.

Even if your positioning is strong and your narrative lands during the event, it still enters a pipeline where urgency, simplicity, and speed dominate decision-making. A partner will naturally move toward the solution that is easier to explain, quicker to position, and more likely to close without friction.

Because every extra step slows revenue down.

So, your offering is not being evaluated against how well it aligns. It is being judged against how efficiently it turns effort into income.

And in that environment, the easiest deal almost always wins.

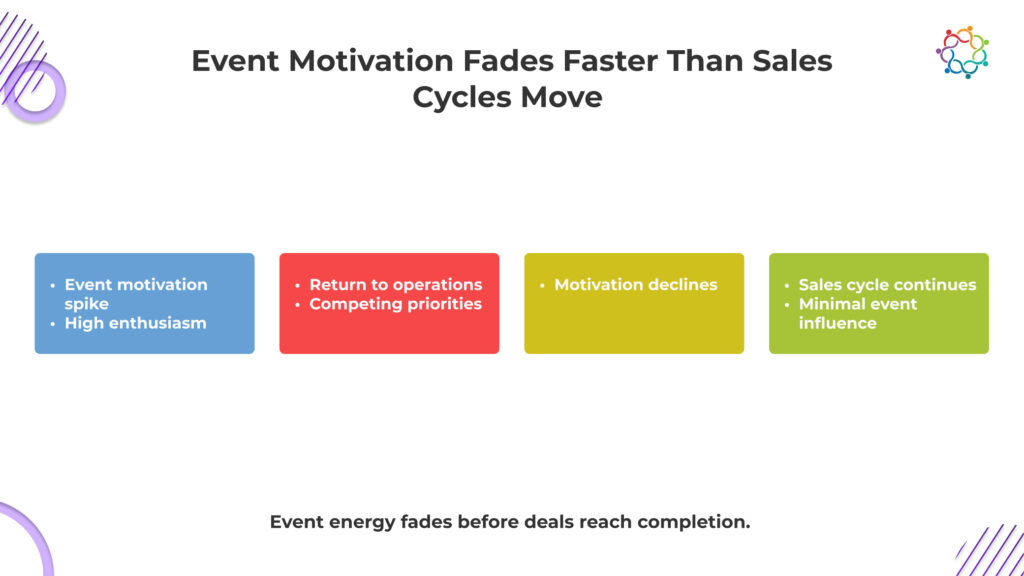

The problem is not that motivation fades. The problem is that revenue decisions happen after motivation is no longer relevant.

Right after partner events, partners are interested. But deals are not closed in that moment. They are evaluated later, when pressure, urgency, and economics take over.

And that is where things start slipping.

Here is the part most teams miss.

Motivation does not just fade. It gets outcompeted.

By the time a partner is deciding where to invest effort, your opportunity is no longer competing with the memory of the event. It is competing with deals that are easier, faster, and more profitable.

And that is where revenue is actually decided.

It is comfortable to believe that stronger relationships lead to stronger revenue. That belief is rarely challenged because it feels directionally correct.

But in partner ecosystems, it is incomplete.

Relationships create access. They build trust. They keep you in the conversation. But they do not determine what gets sold. Because when a partner evaluates an opportunity, they are not asking who they trust more. They are asking what makes more commercial sense right now.

High-performing ecosystems are clear about this, and more importantly, they reject the signals others rely on.

Instead, they focus on one uncomfortable truth.

Partners will always choose the path that maximizes return for the least effort in the shortest time.

So even in the strongest relationships, revenue remains selective. Trust may keep you relevant, but economics decides whether you get sold.

You are influencing the wrong layer of the partner organization and expecting revenue from it. The people you engage at events shape relationships, not deal decisions. Sales happen elsewhere.

Executives align on vision, partnerships, and long-term direction. But they do not decide which product gets pitched tomorrow. Their alignment rarely translates into immediate selling pressure on the ground.

Frontline sellers care about what closes. If your solution takes longer to explain, position, or negotiate, it gets deprioritized instantly, regardless of how strong the event messaging was.

Even if sales teams are exposed to event content, it does not stay with them during live deals. In high-pressure selling situations, only what is easy and familiar survives.

Events do not reduce friction in pricing, positioning, or closing. So when sales reps evaluate deals, nothing has actually improved. And unchanged conditions lead to unchanged behavior.

If the people closing deals do not change how they sell, your event never had a chance to change revenue.

When partner revenue does not change after an event, the instinct is to question the event itself.

Was the messaging strong enough? Was attendance high enough? Was engagement sufficient?

These questions feel logical. But they are misdirected.

Because the system used to evaluate partner event ROI is built around engagement, not performance. And that system does more than mismeasure. It actively discourages honest evaluation.

Why?

Because engagement is easier to report. It produces immediate metrics. It shows visible success. It justifies investment quickly. Revenue impact, on the other hand, is delayed, complex, and harder to attribute. So organizations default to what they can prove quickly.

And in doing so, they reinforce a cycle where events are optimized for participation, not for ecosystem sales performance.

This creates a dangerous loop.

Events look successful on paper. Leadership expects revenue impact. Revenue does not change. But the metrics still validate the investment. So nothing fundamentally shifts.

And the Partner Priority Gap continues to widen.

The reason this pattern repeats across organizations is simple. Partner events are designed to influence perception. Partner sales performance is driven by structure.

These structural forces, such as margin, speed, ease, and demand, do not disappear after an event. They remain constant. They continue to shape behavior long after alignment fades.

So, expecting an event to override these dynamics is not just optimistic. It is structurally unrealistic.

This is why even the most well-executed partner ecosystem events fail to produce consistent revenue change.

Because they are being asked to do something they are not designed to do. They can influence how partners see you. They cannot control how partners sell.

And until that distinction is fully accepted, organizations will continue to misattribute performance gaps to execution, instead of acknowledging the underlying structure.

You did not mis-execute the event. You misread what it could ever influence.

Partner events foster alignment, visibility, and stronger relationships. However, these outcomes do not necessarily influence how partners choose what to sell, as those decisions tend to be shaped by factors like margin, speed, ease, and demand.

If your offering does not lead on those factors, it may not be prioritized, regardless of how well the event was timed.

So, the question is no longer whether your event worked.

It is whether your product can compete in a partner’s revenue reality.

The Partner Priority Gap does not close with better events. It closes only when your offering earns a place in the partner's revenue priorities.

If it cannot, no level of alignment will ever convert into sales.

“You didn’t run out of events. You ran out of proof.”

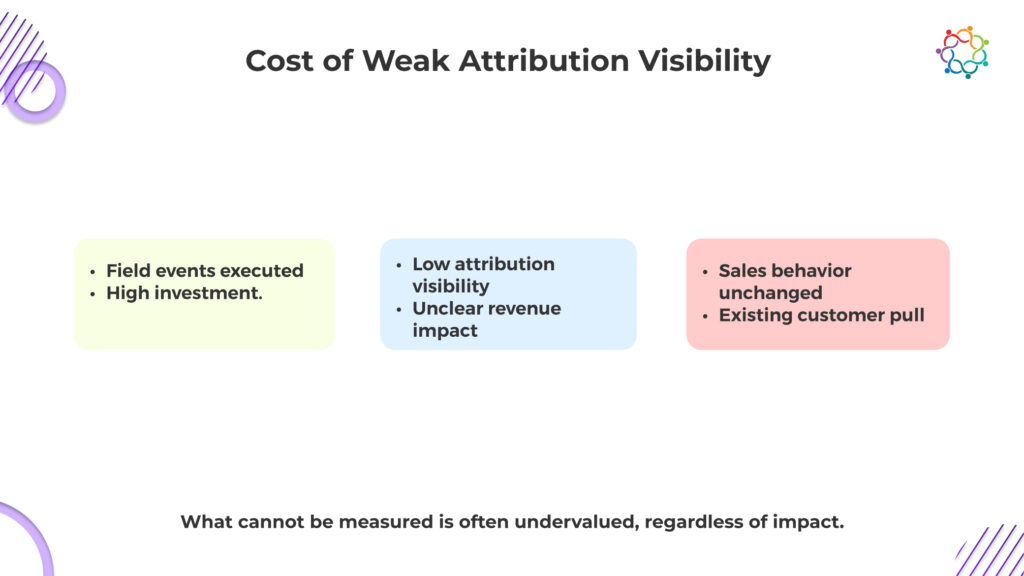

That is the uncomfortable reality most B2B teams face after a full year of executing field events. The calendar was full, the rooms were booked, and the right people showed up. Conversations happened, sales teams walked away with momentum, and marketing reported strong engagement.

Everything points to progress until the question changes. Not how many events were run, but which of them actually drove revenue.

This is where the Event Attribution Gap becomes impossible to ignore. Activity is visible, but revenue impact is not, and visibility is not what leadership funds. At this point, the narrative weakens because performance cannot be proven.

This blog diagnoses why that proof breaks, where attribution fails, and how event influence disappears before revenue is ever measured.

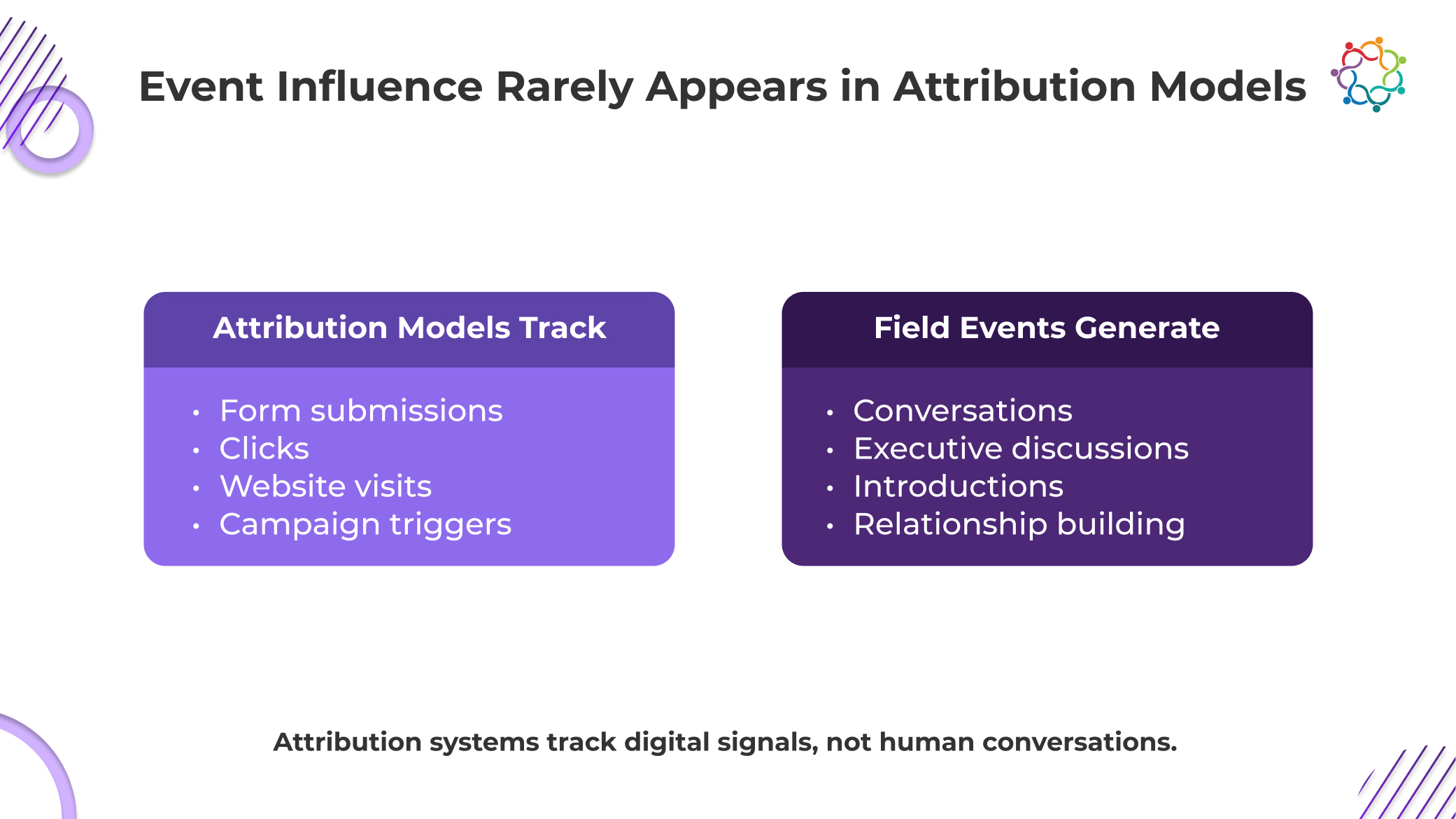

Your attribution model is not broken. It is doing exactly what it was designed to do. It captures clicks, form fills, and digital activity because those are easy to record, structure, and report. That is what your entire measurement system is optimized for.

But field events do not produce that kind of data. They produce conversations, alignment, and decision-shaping moments that never enter a system. The interactions that actually influence deals happen in rooms, not in dashboards.

This is where the Event Attribution Gap becomes unavoidable. You are relying on a system that cannot see the interactions you know are critical. Then you expect it to explain revenue.

So the model credits what it can track, not what actually mattered. And you accept those outputs as truth.

At that point, the issue is no longer attribution. It is your willingness to measure the wrong signals and still expect the right answers.

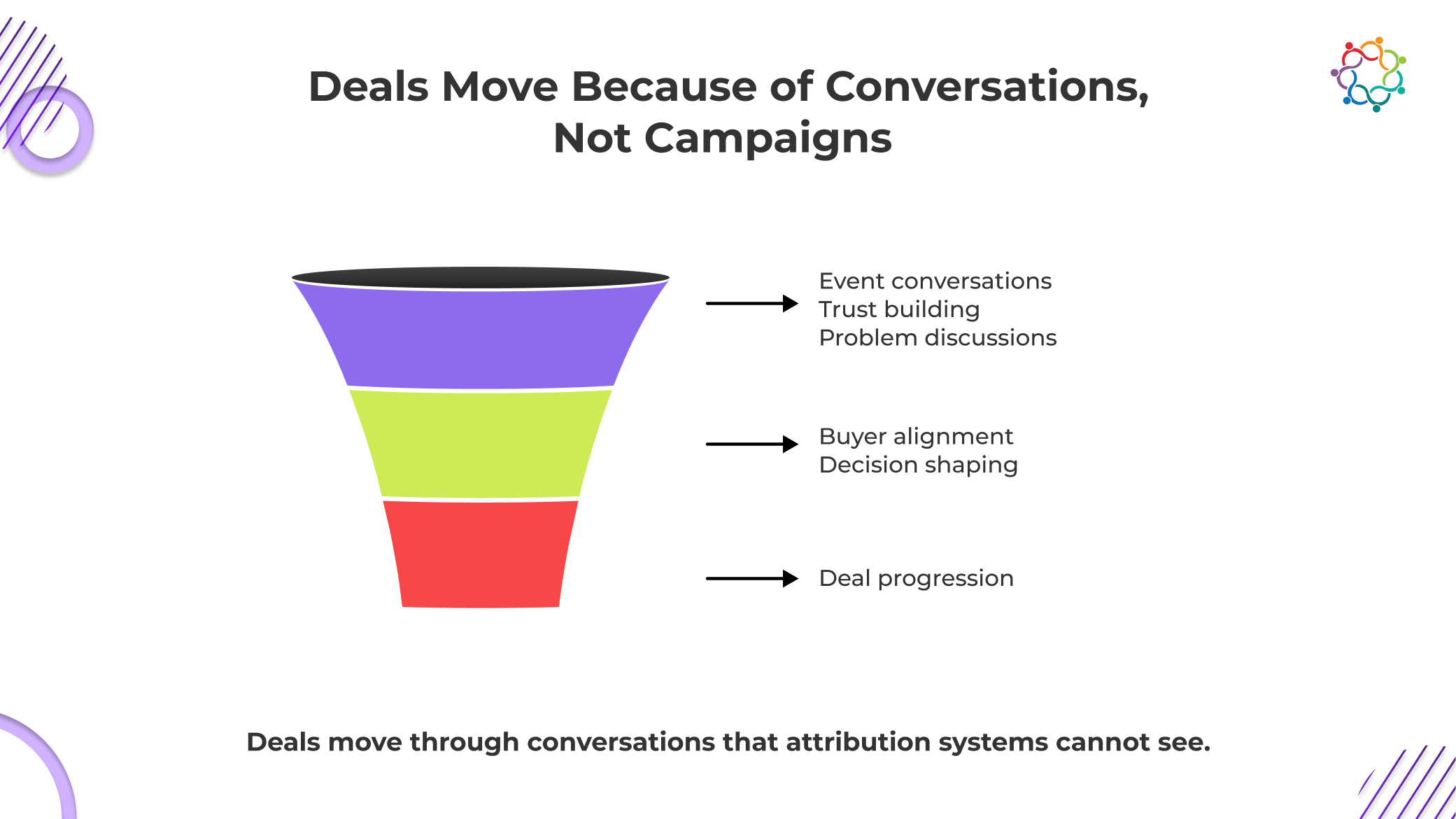

You already know deals do not move because someone clicked an email or downloaded a whitepaper. Those actions create activity, not commitment. What actually moves deals is trust, and trust is built in conversations that your systems never record.

In complex B2B environments, decisions progress when buyers gain clarity, align internally, and feel confident in the people they are engaging with. These shifts happen in discussions, not in dashboards. And field events are where these discussions accelerate.

This is the uncomfortable truth behind the Event Attribution Gap. The moments that change deal direction are the same moments your attribution model cannot see. So when revenue is analyzed, those interactions are missing, replaced by whatever digital signal appeared later.

You are not tracking what moves the deal. You are tracking what is easy to log.

And then you wonder why the story your data tells never matches the reality your sales team experiences.

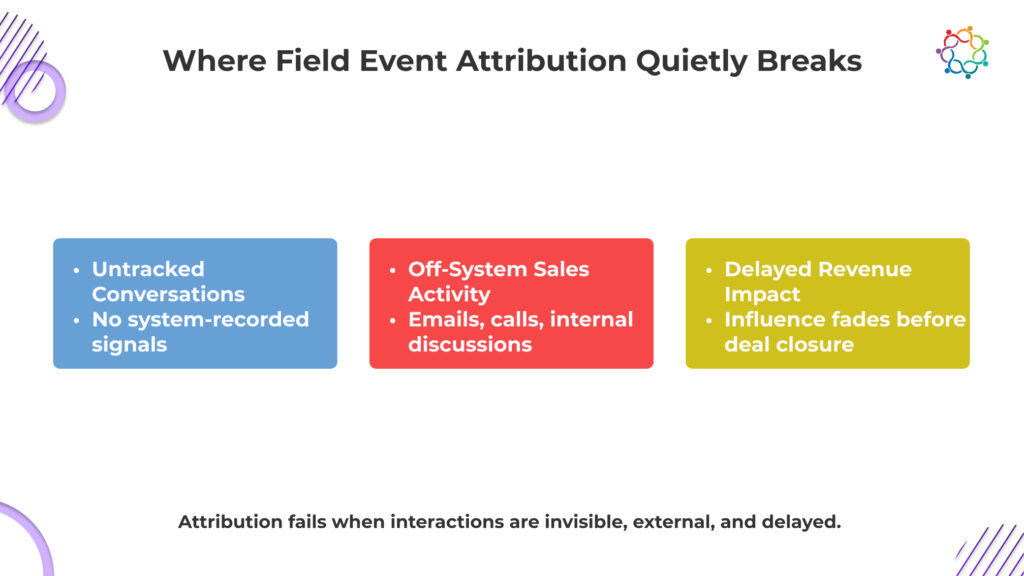

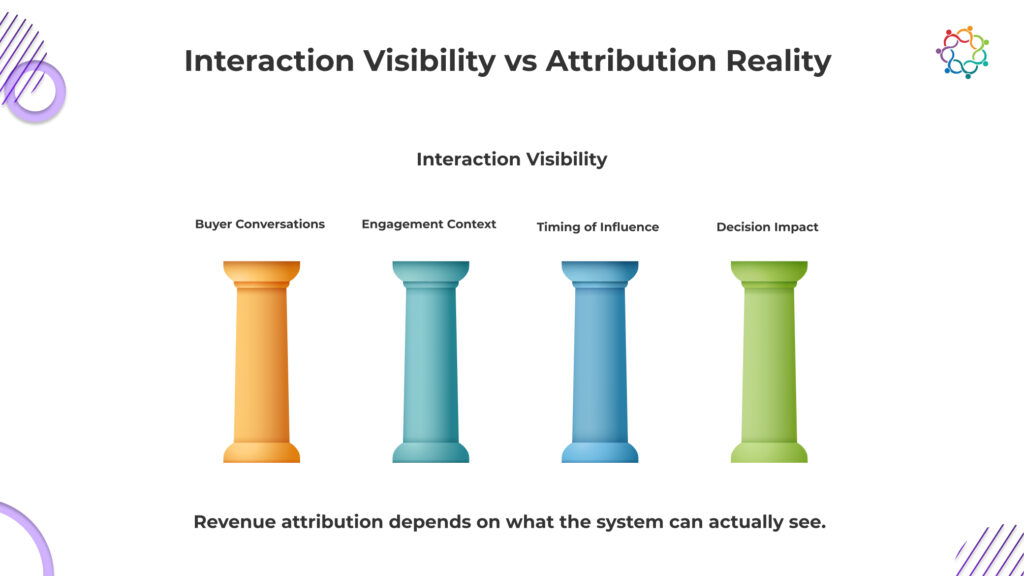

The Event Attribution Gap is not a single failure point. It is a chain reaction that unfolds across the entire revenue journey.

The process begins with in-person interaction. Buyers engage in discussions that shape their thinking. These conversations are often the most critical moments in the decision process.

But they are not captured.

No system records them. No structured data is generated. The interaction exists, influences, and disappears.

Because these interactions are not logged, they never enter attribution systems. There is no signal to assign weight to. No event to connect to opportunity progression.

At this stage, the influence is already at risk of being lost.

As the deal progresses, other interactions begin to appear. Emails are exchanged. Content is consumed. Forms may eventually be filled out.

These actions are captured.

They become the visible markers of engagement, even though they are not the original drivers.

By the time revenue is realized, attribution models assign credit to the last visible interactions. The earlier event influence is absent.

The result is a complete breakdown chain:

Conversation → No system capture → No attribution signal → Influence disappears → Revenue misattributed

This is how field event ROI becomes systematically undervalued.

Not because the impact is weak. But because the system never saw it.

Revenue attribution does not fail randomly. It fails precisely where visibility disappears. If your system cannot see an interaction, it cannot assign value to it. The conclusion it produces will always be incomplete, no matter how advanced the model appears.

You are relying on outputs that only reflect recorded activity, while ignoring the fact that some of the most critical buyer interactions were never captured in the first place. Field events create moments where intent sharpens, objections surface, and decisions begin to take shape. But if those moments are not documented, they effectively do not exist in your attribution logic.

At that point, your system is not evaluating performance. It is reinforcing a partial version of reality. And you continue to trust it, even when it consistently overlooks the interactions that actually influence revenue outcomes.

You are measuring revenue too late in the journey and expecting to understand what influenced it early. That is the disconnect. By the time a deal becomes visible in your system, key decisions have already been shaped.

Field events often influence buyers when they are still forming opinions, defining problems, and deciding which vendors deserve attention. These are not trackable stages. There are no forms, no CRM entries, and no measurable signals. But this is where direction is set.

Your attribution model only starts paying attention once visible activity appears. By then, the foundation has already been built. The system credits what it can see, not what actually created momentum.

So the story you present internally begins in the middle, not at the origin. And that distortion compounds over time.

You are not missing data at the end of the journey. You are missing the beginning, where the most decisive influence actually occurs.

When you cannot clearly connect field events to revenue, the consequences are not subtle. They show up in how decisions are made, how budgets are allocated, and how marketing performance is judged.

Leadership does not wait for perfect attribution. It acts on what is visible. And what is visible often favors channels that produce immediate, trackable signals.

This is how strategy gets distorted. You begin optimizing for visibility instead of impact. Over time, this leads to over-investment in low-impact channels and under-investment in high-influence environments.

The risk is not just misreporting. It is misdirection. You are actively steering resources away from what drives revenue, simply because it is harder to measure.

The issue persists because the foundation itself was never built to support this kind of reality. Marketing measurement systems were designed for digital environments where every interaction can be tracked, timestamped, and stored.

But field events do not operate within those constraints. They rely on human interaction, context, and relationship dynamics that cannot be easily converted into structured data.

You are trying to force a relationship-driven channel into a system built for transactional tracking. That mismatch is the root of the problem.

Instead of questioning the system, most teams try to adapt their reporting to fit it. They simplify, approximate, or overstate connections just to produce something that looks measurable.

But the limitation does not go away.

Until measurement approaches reflect how buyers actually engage and make decisions, attribution will continue to fall short. Not because events lack impact, but because the system was never designed to recognize it.

Field events play a critical role in shaping how buyers think, engage, and ultimately decide. They create the conversations that build trust, align stakeholders, and move deals forward.

But most attribution systems are not built to capture this reality.

They measure digital interactions, not human influence. They prioritize visibility over significance. And as a result, they consistently underestimate the impact of field events.

The Event Attribution Gap is not a flaw in execution. It is a flaw in how impact is measured.

If organizations want true revenue visibility, they need to rethink what attribution is supposed to capture. Not just actions, but interactions. Not just signals, but influence.

Because the current system is clear about one thing.

Field events don’t fail to drive revenue. They fail to show up in the systems used to measure it.

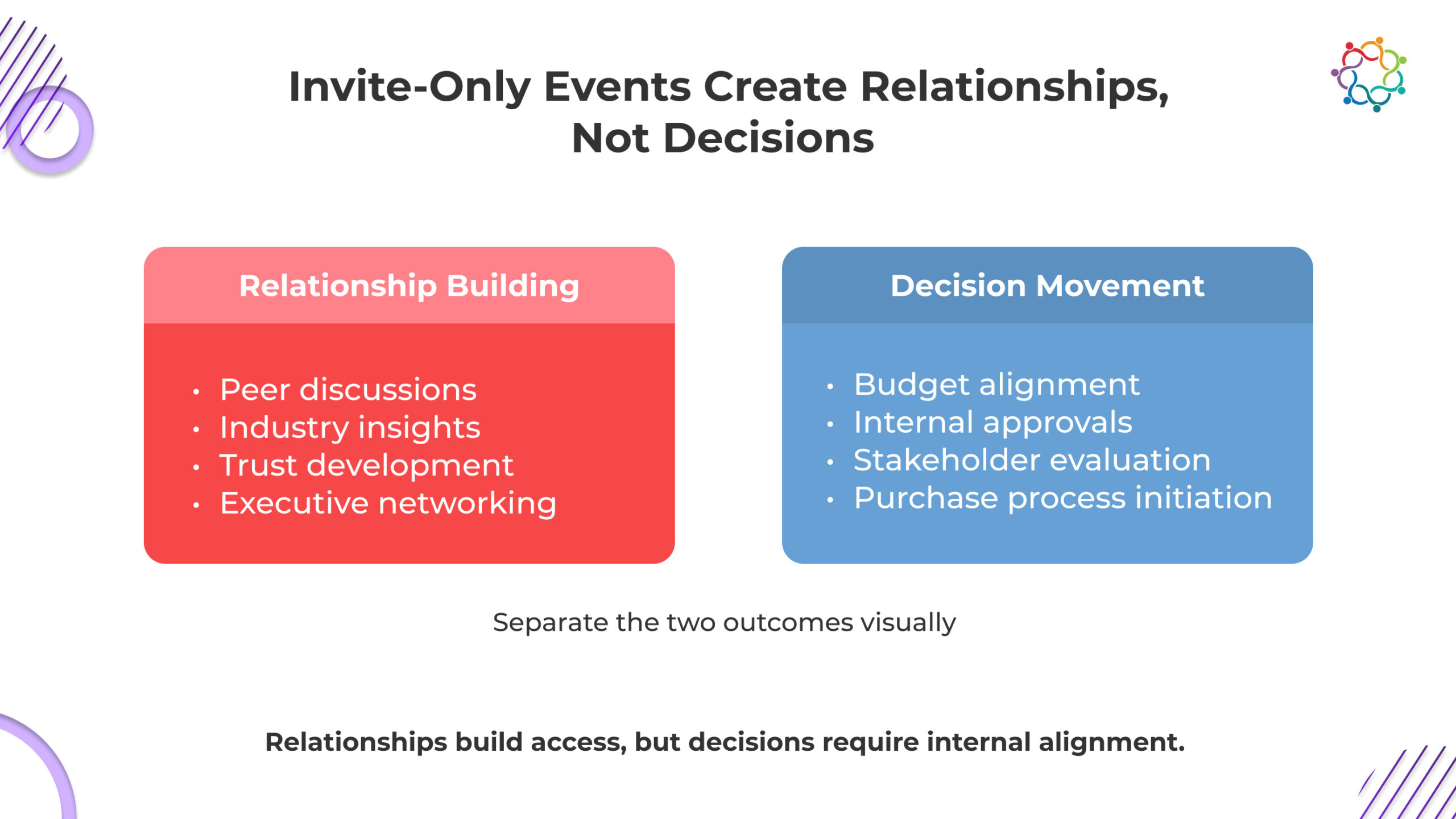

Invite-only executive environments are engineered to feel valuable. The right accounts are invited. The right people show up. Conversations are thoughtful, informed, and often energizing. By every visible signal, the event works exactly as intended.

Senior stakeholders engage deeply. Industry perspectives are exchanged. Relationships appear to strengthen in real time. Inside the room, it feels like momentum is building toward something meaningful.

This is precisely why invite-only events are so heavily relied upon for enterprise deal acceleration. They create access that is otherwise difficult to achieve. They compress interaction into a high-quality setting. They give sales and marketing teams the illusion of progress.

But the illusion becomes visible only after the event ends.

When pipeline reviews happen weeks later, those same accounts often show no measurable movement. Deals remain in the same stage. Timelines do not shift. No new urgency appears. The conversations that felt decisive in the moment leave no structural impact on the deal.

This is the tension most teams fail to confront.

A productive room creates the feeling of progress. A pipeline reflects actual progress. Those are not the same outcome.

This blog breaks down why that gap exists, where momentum actually disappears, and why strong executive engagement inside an event rarely translates into deal movement after it ends.

There is an assumption embedded in most executive events that needs to be confronted directly. The assumption is that proximity to senior buyers accelerates decisions.

The reality is far less convenient.

Executives do not attend these environments to make buying decisions. They attend to learn, to exchange ideas, and to build relationships that may or may not translate into commercial action later.

Invite-only events are intentionally designed to remove pressure. They create safe, non-transactional environments where senior leaders can speak openly without being forced into immediate commitments. That design is not a flaw. It is the reason these events work for engagement.

But it is also the reason they fail in deal movement.

As a result, even when your solution is discussed, it is rarely evaluated in a way that moves a deal forward.

Trust may increase. Familiarity may improve. But enterprise decisions do not move on trust alone. They move when organizations initiate structured internal processes such as evaluation, budgeting, and alignment.

If those processes do not begin after the event, the deal does not move.

The uncomfortable truth is simple.

Closed-door executive environments are optimized for relationships. Deals move only when those relationships trigger internal action. Most of the time, they do not.

Even when the right executive is in the room, the deal is not.

This is where most expectations collapse.

Enterprise buying decisions are not controlled by individuals. They are governed by buying committees that include technical stakeholders, financial decision-makers, operational leaders, and executive sponsors. Each of these roles carries influence, and none of them can be bypassed.

This creates a structural limitation that invite-only events cannot solve.

The person attending your event may be senior. They may be influential. They may even be supportive of your solution. But they are rarely empowered to move the deal forward alone.

This introduces friction the moment the event ends.

The attendee must take what they experienced and translate it internally: sharing it with other stakeholders, building alignment, and making the case for a formal evaluation.

Most of the time, this translation never fully happens.

Not because the attendee is uninterested. But because internal alignment is difficult, political, and time-consuming. Competing priorities interfere. Other initiatives take precedence. The momentum from the event loses clarity as it moves into a more complex environment.

This is the point where deals stall without appearing broken.

The event may have influenced perception. But perception alone does not move the deal.

Deals move when internal consensus forms. And that process happens entirely outside the event environment.

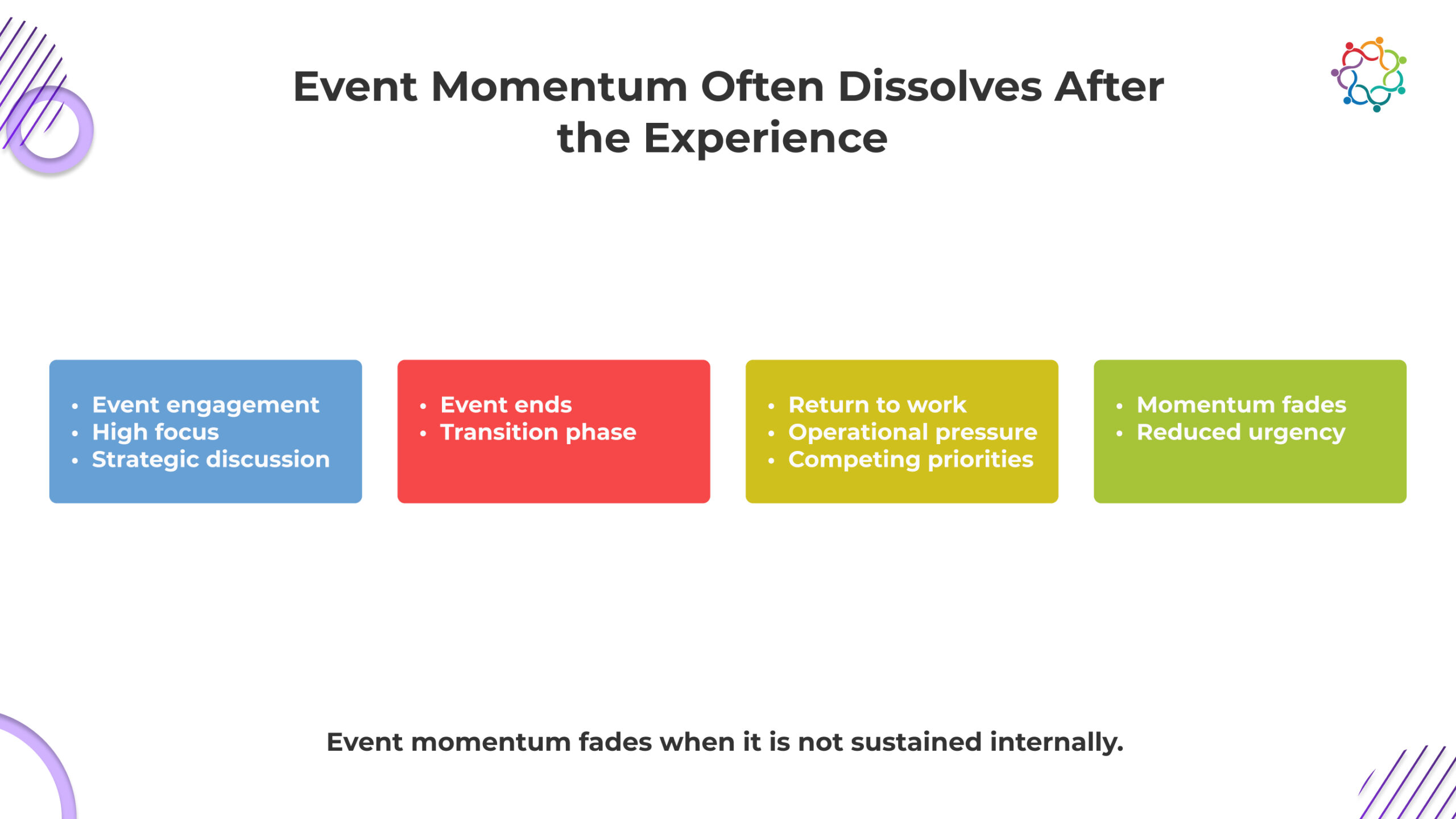

What feels like urgency inside an event is rarely real. It is borrowed.

Events create temporary conditions that amplify focus. Attendees are removed from daily distractions. Conversations are uninterrupted. Ideas are explored with unusual depth. In that moment, everything feels more important than it actually is in the broader context of the organization.

This creates a false signal.

During the event, buyers may express interest. They may ask detailed questions. They may even indicate a willingness to continue discussions.

But that energy does not belong to the deal. It belongs to the environment. The moment the event ends, that environment disappears.

Executives return to operational realities filled with competing priorities, internal deadlines, and ongoing initiatives that already demand attention. The urgency created during the event does not survive this transition.

This is where most perceived momentum collapses.

The conversation that felt critical becomes optional. The interest that felt immediate becomes deferred. The next steps that felt obvious become unclear.

The deal does not regress. It simply loses momentum without replacement. That is the defining characteristic of what can be called the Post-Event Deal Stall.

The event creates urgency that feels real but is not anchored in internal business pressure. Without that anchor, the urgency fades. And when urgency fades, deals stop moving.

The Post-Event Deal Stall follows a clear, repeatable sequence. Momentum does not disappear randomly. It weakens step by step after the event ends, as insight fails to convert into internal action and structured decision movement.

The attendee leaves with strong perspectives and potential interest. But without structured internal sharing, that insight remains individual. The organization never absorbs it, so the opportunity fails to enter collective consideration.

Without deliberate follow-through, the attendee does not initiate discussions with other stakeholders. The event experience is not translated into organizational dialogue, preventing alignment from even starting.

Curiosity and positive sentiment fail to trigger a formal evaluation. No meetings, no assessments, no movement. The idea remains conceptual instead of entering the company’s decision process.

The event creates temporary focus, but internal priorities remain unchanged. Without real urgency inside the organization, the deal has no reason to progress.

This is where momentum quietly collapses, and deals stop moving without resistance.

After executive engagements, sales teams almost always report strong signals.

Long conversations took place. Senior stakeholders engaged deeply. Follow-up discussions were suggested. From a surface perspective, everything points toward progress.

This is where interpretation becomes dangerous.

These signals reflect engagement. They do not confirm intent.

Invite-only events are designed to encourage participation. Executives are expected to engage. They are expected to contribute. They are expected to explore ideas openly. High engagement is not a buying signal. It is the baseline behavior of the environment.

The mistake happens when this behavior is translated into pipeline expectations: a long conversation is read as interest, and a suggested follow-up is read as an opportunity.

These interpretations are rarely accurate.

Executives can be highly engaged without any intention to initiate a buying process. They can express interest without aligning internally. They can agree that a solution is valuable without prioritizing it.

This creates a false sense of pipeline acceleration.

The conversation feels like progress. The CRM does not reflect it.

The gap between those two realities is not accidental. It is structural.

Engagement is easy to observe. Buying intent is not.

And when engagement is mistaken for intent, the Post-Event Deal Stall becomes inevitable.

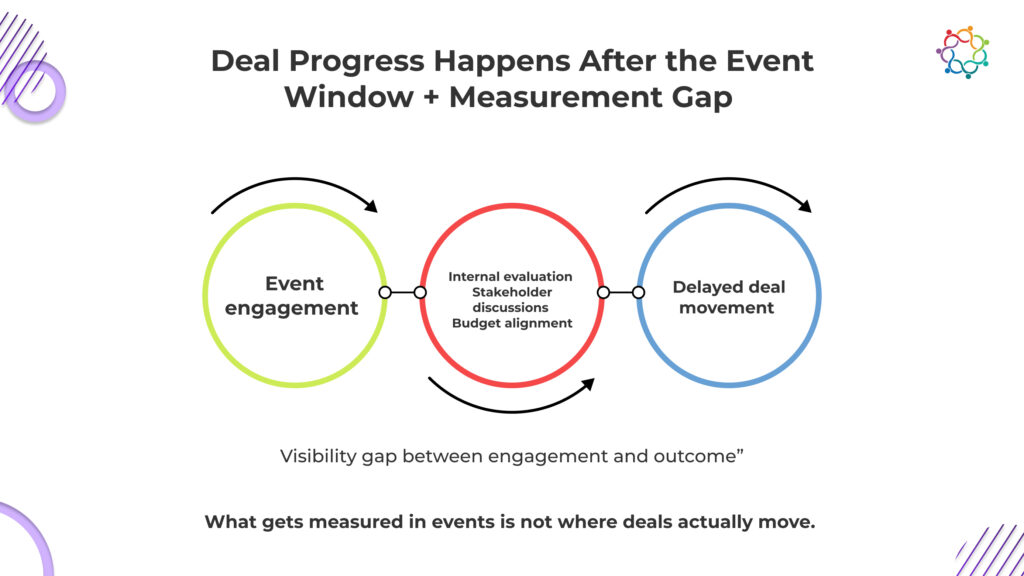

Deal movement does not follow event timelines.

This is where attribution and expectation both break down.

Enterprise decisions unfold over extended periods. After attending executive environments, buyers must evaluate internally, align stakeholders, and assess financial implications. None of these processes operates within the timeframe of an event or its immediate aftermath.

This creates a disconnect.

By the time a deal actually progresses, the event that influenced it may no longer appear relevant. The movement happens later, in a different context, driven by internal discussions that are not visible to external teams.

This makes invite-only events difficult to evaluate in terms of direct pipeline impact.

The influence may exist. The timing obscures it.

As a result, organizations either overestimate the immediate impact of events or fail to recognize their delayed influence. In both cases, the understanding of deal progression remains incomplete.

The key point is not that events lack value. It is that their influence does not align with measurable windows.

Deal movement happens when internal cycles reach alignment. That moment rarely coincides with the event itself.

Most organizations believe they understand event performance.

They track attendance quality. They measure participation. They evaluate conversation depth and satisfaction. These metrics create a clear picture of what happened inside the event.

What they do not capture is what happens after.

This is the fundamental limitation.

The system is designed to measure engagement because engagement is visible. It happens in controlled environments. It produces immediate data.

Deal movement, on the other hand, is complex and delayed. It happens inside buyer organizations. It depends on internal conversations that are not directly observable.

So it is ignored.

This leads to a distorted understanding of success.

An event can score highly across every engagement metric and still have zero impact on pipeline progression. The data will suggest success. The pipeline will contradict it.

This is not a measurement gap. It is a prioritization problem.

Organizations choose to measure what is easy rather than what is meaningful.

And until that changes, the Post-Event Deal Stall will continue to be misunderstood.

Because the system is not built to see it.

Closed-door executive environments are powerful. They create access, enable high-quality conversations, and strengthen relationships with the right accounts. None of that is in question.

What is often misunderstood is what those environments are capable of delivering.

They create awareness. They build trust. They shape perception. But they do not move deals on their own.

Deal progression depends on what happens after the event. It depends on internal alignment, organizational priorities, and decision urgency. These forces exist entirely outside the room.

When deals fail to move, the issue is rarely the event itself. It is what happens next, or more accurately, what does not happen next.

The Post-Event Deal Stall is not a failure of engagement. It is a failure of internal activation.

A strong room creates conversation. Deals move only when that conversation survives outside it.

At a broader level, this raises a deeper need for visibility. Organizations must understand how executive engagement evolves after events, how internal buyer behavior develops across accounts, and how those signals connect to pipeline movement.

Platforms like Samaaro are built around this exact challenge, helping teams move beyond event-level metrics toward true account engagement intelligence.

Conference floors create a dangerous kind of confidence.

Everything signals success. Booths stay crowded. Conversations flow without friction. Calendars are overbooked. Sales teams leave with pages of contacts and the clear impression that demand is strong and immediate.

This is where most teams get misled.

What looks like momentum is not pipeline movement. It is compressed attention. Conferences temporarily remove the friction that usually slows down buyer engagement. Decision-makers are available, curiosity is elevated, and interactions happen faster than they normally would.

This creates a spike in activity that feels like progress.

But that impression is built on a flawed assumption. Activity during the event does not equal continuity after it. The environment disappears the moment the conference ends, leaving fragmented interactions with no guaranteed progression.

This is the Conference Conversion Gap. It explains why high engagement during conferences rarely translates into post-event pipeline movement.

If engagement does not extend beyond the event, it was never momentum to begin with.

This blog shows how to close the Conference Conversion Gap by turning event engagement into sustained pipeline movement.

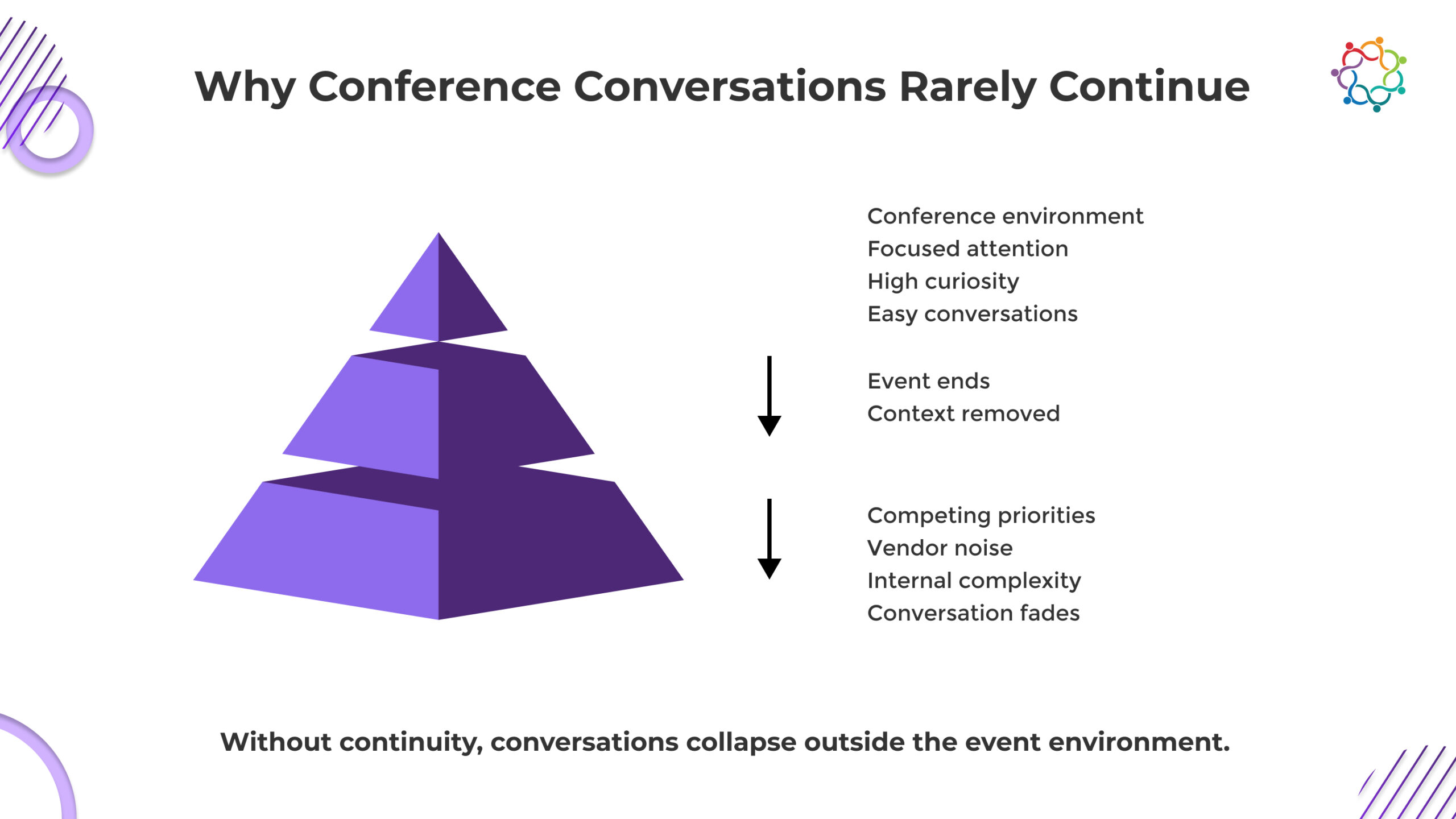

Conference conversations feel meaningful because the environment removes friction. Buyers are available, curious, and open. You are not earning attention. You are borrowing it.

That advantage disappears the moment the event ends.

Buyers return to real priorities, internal approvals, and competing demands. Your conversation is no longer contextual. It is just another follow-up in a crowded inbox. The urgency you relied on was temporary, and now it is gone.

This is where most teams miscalculate. They assume interest will carry forward. It does not.

If the conversation was not anchored in a real problem, a clear use case, and a defined next step, it has no reason to continue. Buyers do not reject you. They deprioritize you.

If your post-event strategy depends on re-engaging the buyer, you have already lost the advantage the event gave you.

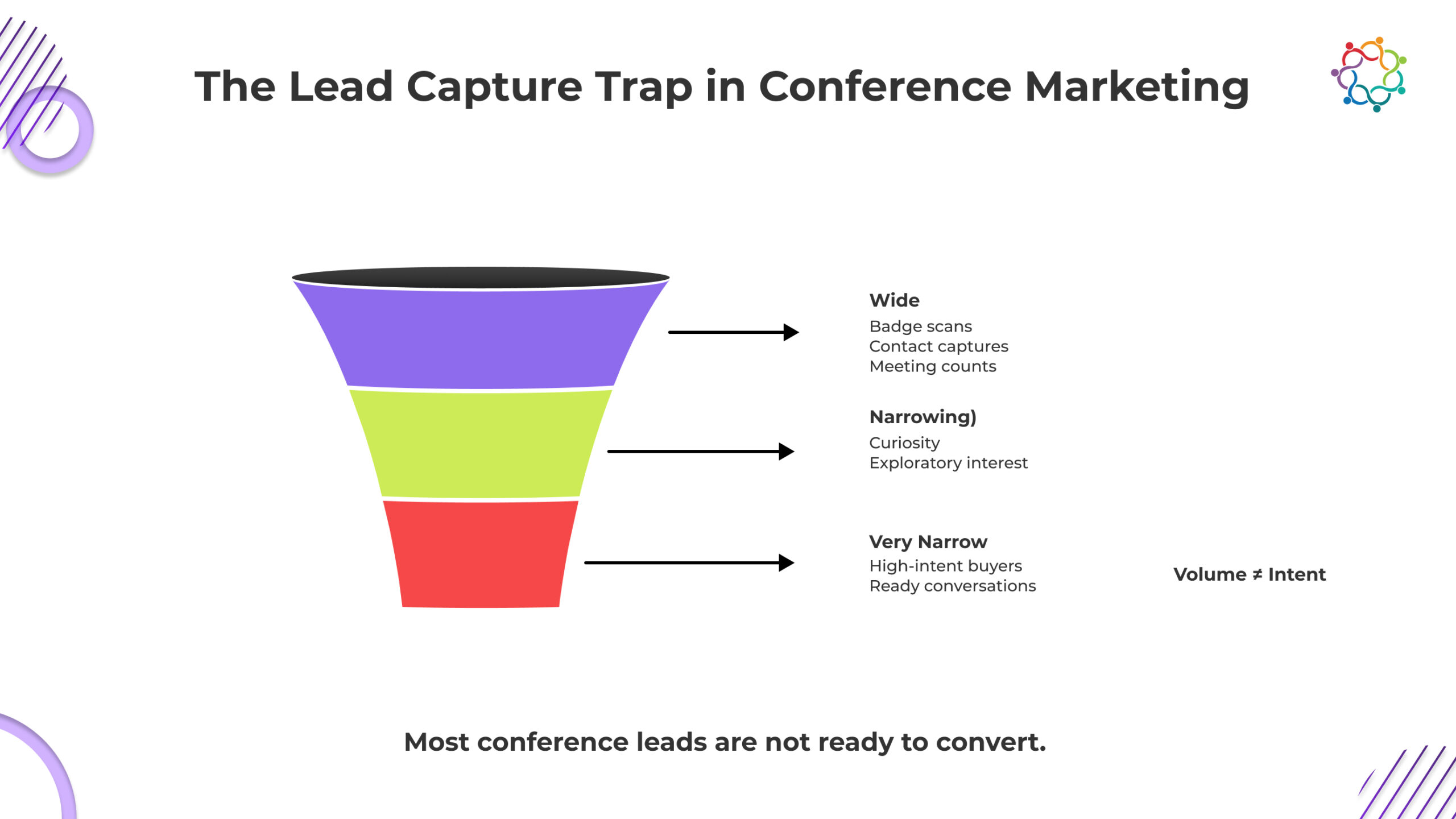

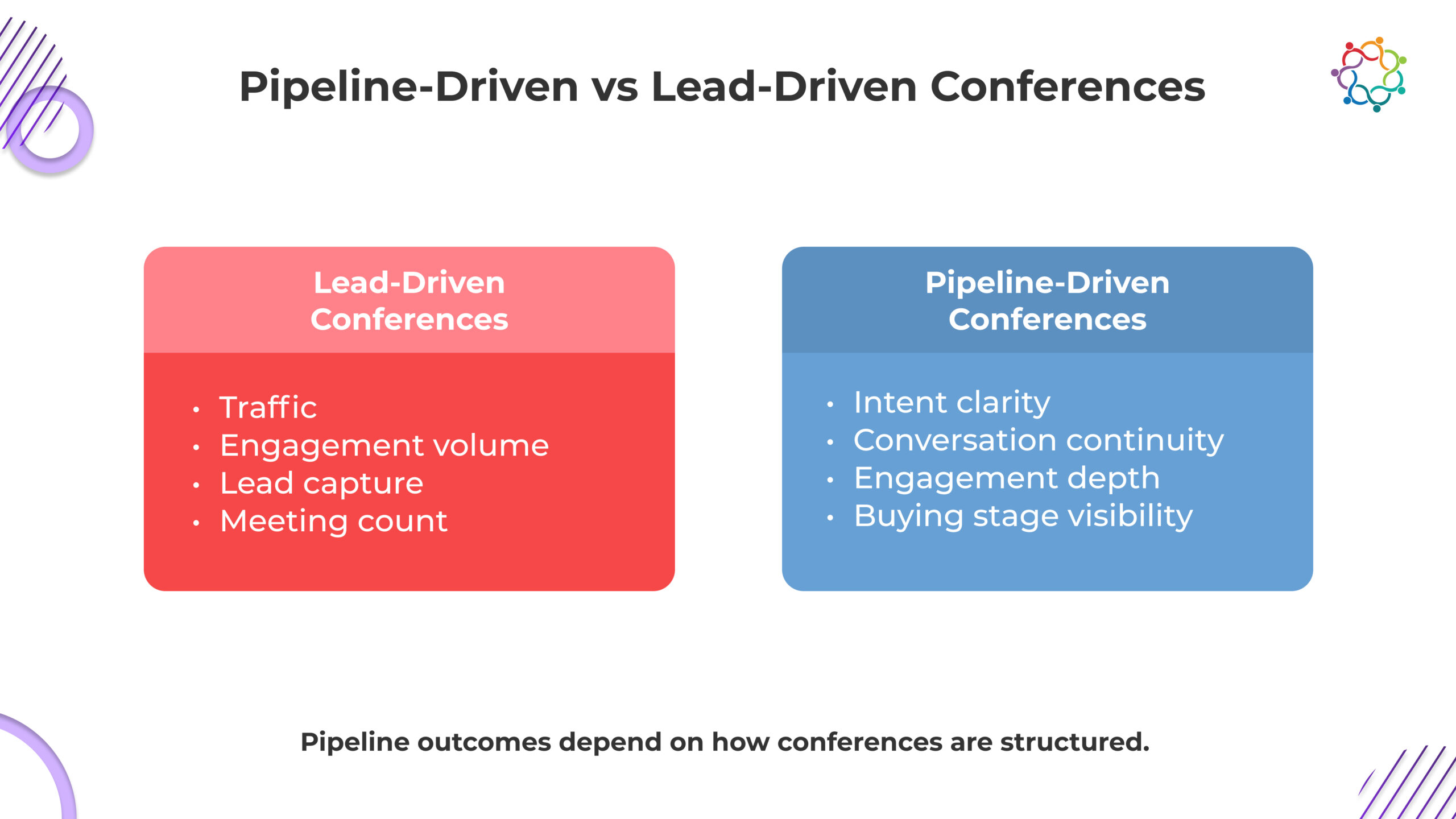

Most conference strategies are optimized for what is easiest to measure, which is lead capture.

Badge scans. Form fills. Meeting counts. Contact acquisition. These metrics are clean, immediate, and dashboard-friendly. They provide instant visibility into event activity.

That is exactly why teams optimize for them.

Not because they are effective indicators of pipeline, but because they are easy to track, easy to report, and easy to justify internally.

This creates a structural bias.

Teams focus on maximizing the number of event marketing leads, assuming that volume will eventually translate into pipeline. It rarely does.

The problem is not just that these metrics lack depth. It is that they actively distort how success is defined.

Of the contacts captured, very few represent validated demand.

Yet internally, all leads are treated as potential pipeline contributors.

And then conversion stalls.

The issue is not poor follow-up. It is weak input quality.

Capturing a contact is not the same as capturing intent. Without understanding the buyer’s context, urgency, and decision stage, post-event conversion becomes guesswork.

The Conference Conversion Gap is reinforced here. Lead capture creates the appearance of demand, but it does not create the conditions required for pipeline progression.

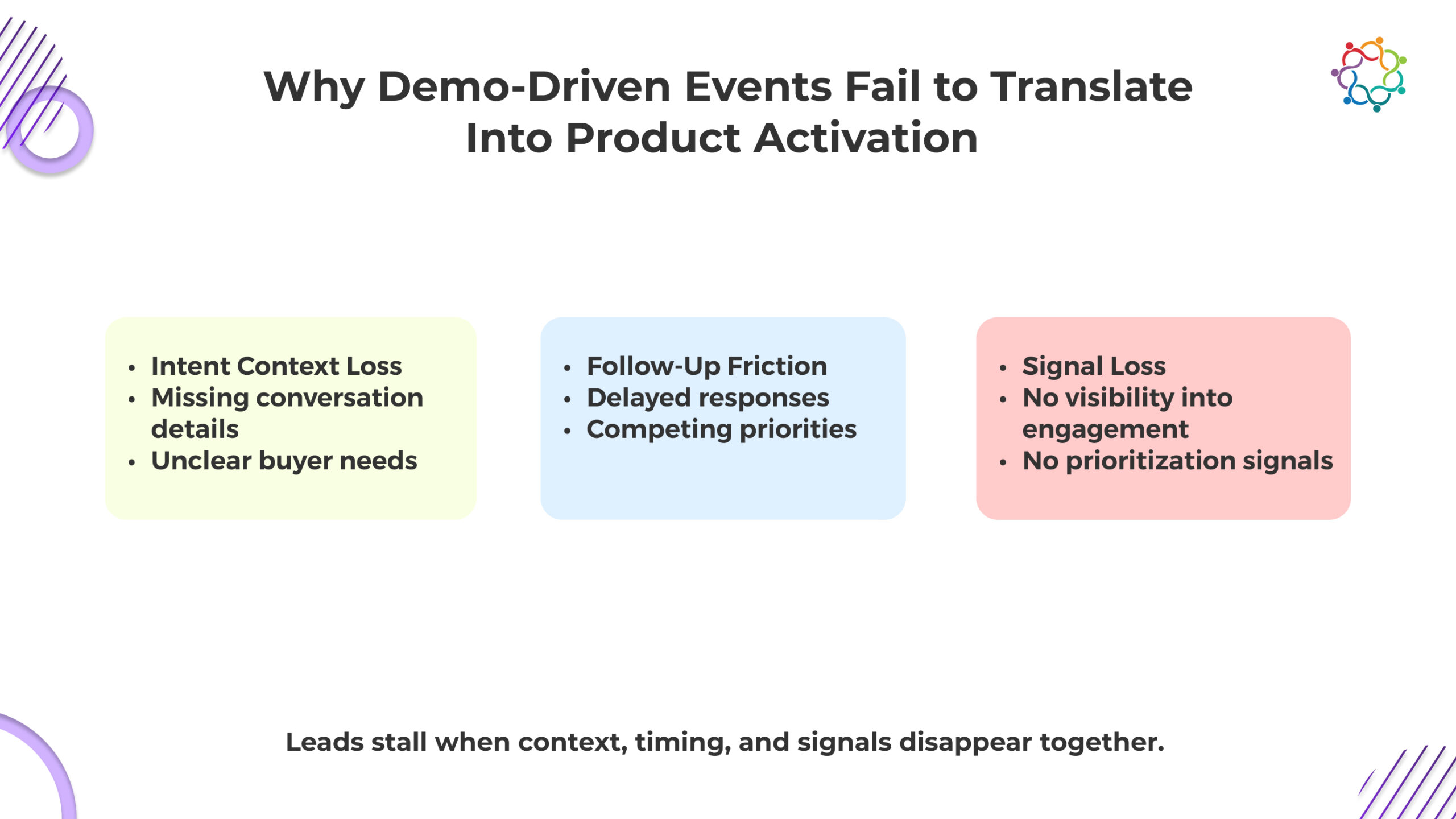

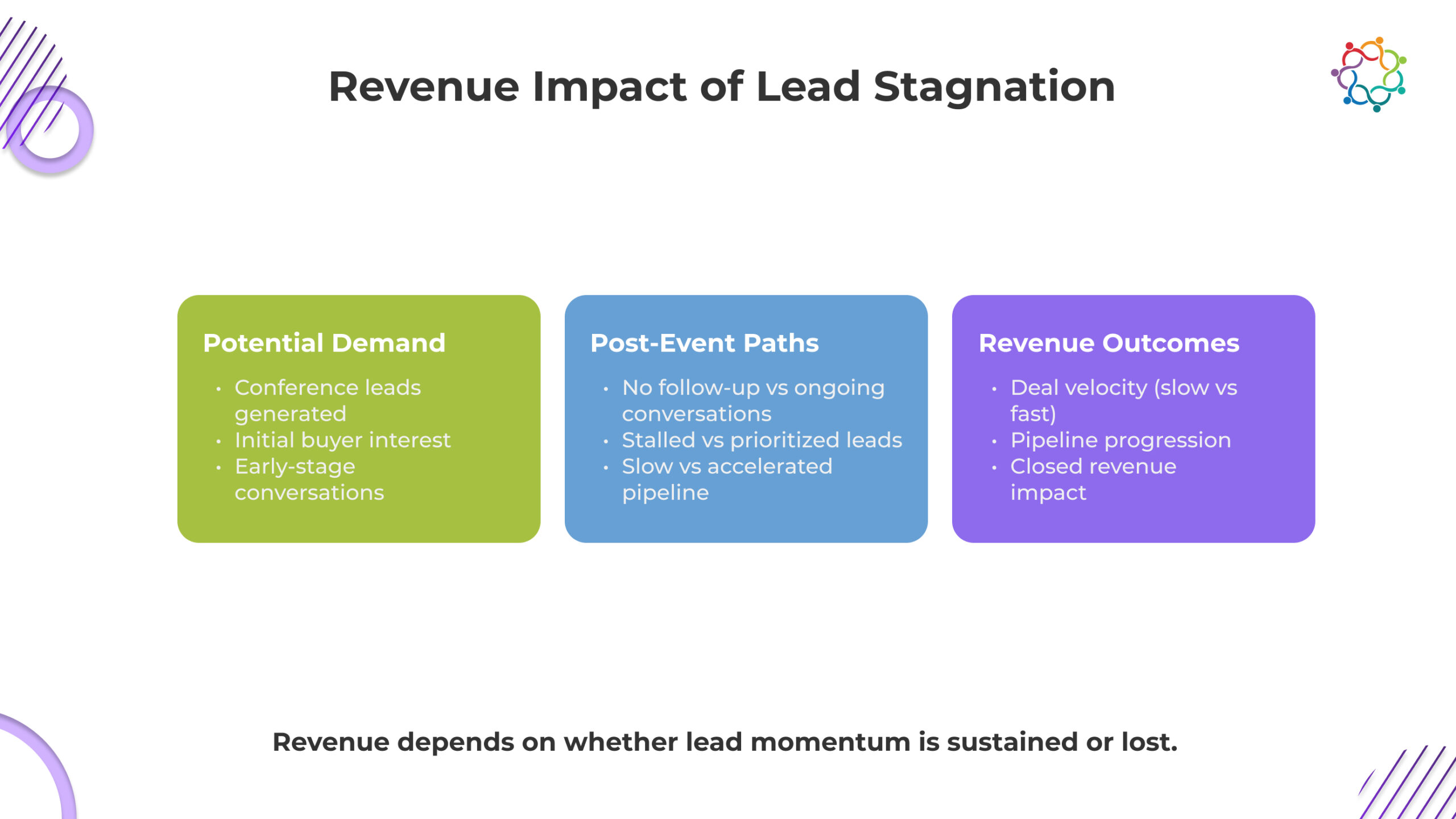

Lead stagnation does not happen at a single point. It unfolds as a sequence.

A predictable chain of breakdowns that begins the moment the event ends.

Event Ends → Context Disappears → Generic Follow-Up → Disengagement → Pipeline Stalls

This sequence explains why post-event leads fail to convert, even when initial engagement was strong.

Sales receives names without meaning. No problem, no urgency, no buying stage. Without context, follow-ups lose relevance instantly. You are not continuing a conversation. You are restarting blindly.

Without clarity, outreach defaults to templates. Nothing reflects the original discussion. Buyers recognize this immediately. What felt like a real conversation now looks like mass follow-up noise.

Buyer Attention Shifts

Post-event, your message competes with operational priorities and multiple vendors. The urgency is gone. If your conversation was not anchored deeply, it gets deprioritized without hesitation.

Rich interaction data stays trapped in the event. Sales only sees contacts. No visibility into interest or behavior. Without signals, prioritization fails, and high-intent buyers get treated like everyone else.

No context, weak follow-up, distracted buyers, missing signals. The outcome is inevitable. Conversations fade, opportunities never form, and what looked like momentum collapses before entering the pipeline.

This is not a breakdown in execution. It is a breakdown in continuity.

The conversion gap is not a single failure point. It is a chain reaction. And once it starts, recovery becomes difficult.

High-performing teams do not treat conferences as standalone marketing events. They treat them as active stages within an ongoing sales process. That shift forces a different standard. Every interaction is expected to move somewhere, not just happen.

They do not optimize for traffic, booth activity, or meeting volume. They prioritize clarity. Who is evaluating, what problem is being considered, and how close that conversation is to a buying decision. If that is not clear, the interaction is not valuable.

This is where most teams fall short. They leave the event with contacts, not direction.

Pipeline-driven teams leave with defined conversations that sales can act on immediately. There is no reset after the event because nothing was left incomplete.

If your conference strategy depends on restarting conversations after the event, you have already lost control of the outcome.

If your conference cannot influence what sales does the next day, it is not a pipeline system. It is a temporary engagement spike. Pipeline-driven conferences are designed to produce usable intelligence, not just interaction.

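That changes what the event must deliver: clarity on who is evaluating, what problem is being considered, and how close each conversation is to a buying decision.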

When this structure exists, sales does not guess. It acts with precision. Conversations move forward because they were never left incomplete.

If your event outputs cannot guide prioritization, they cannot drive progression. And if progression is not built into the design, the pipeline impact you expect will never materialize.

When post-event conversion fails, the damage is not limited to missed opportunities. It destabilizes your entire revenue model. The pipeline slows down at the top, which delays everything downstream. Forecasts become assumptions instead of projections.

Sales cycles stretch because early-stage conversations were never qualified properly. Teams waste time revalidating interest that should have been clear during the event. Meanwhile, genuinely interested buyers slip through because they were never identified correctly.

This is where most organizations lose control. They believe the event created demand, but they cannot translate that into pipeline movement or revenue timing.

The cost is hidden but significant. Poor conversion does not just reduce ROI. It introduces uncertainty into planning, weakens sales efficiency, and erodes confidence in event investments.

If your conference cannot produce predictable pipeline movement, it is not contributing to revenue. It is complicating it.

Most companies are not unaware of the problem. They are locked into it.

Lead volume persists as the primary success metric because it is immediate, visible, and easy to defend. Pipeline progression is slower, harder to attribute, and exposes gaps teams would rather not confront.

This creates a cycle of comfortable reporting and poor outcomes.

Marketing delivers numbers that look strong on dashboards. Sales receives inputs that do not convert. Leadership sees activity but cannot connect it to revenue. The system continues because no one is forced to challenge it.

The issue is not a lack of data. It is a refusal to prioritize the right data.

As long as conferences are measured by how much they produce instead of what progress they make, results will remain inconsistent.

If your metrics reward volume, your outcomes will reflect noise. And noise does not convert.

Conferences do not fail because they lack engagement. They fail because engagement is not carried forward into pipeline progression. What begins as a strong interaction collapses without continuity, leaving sales with contacts rather than conversations and marketing defending activity instead of outcomes.

This gap is not accidental. It is the direct result of how events are structured and measured.

Conference leads do not stall because buyers lose interest. They stall because the system loses the conversation.

Until conferences are designed to sustain momentum beyond the event, pipeline impact will remain inconsistent and unreliable.

If you are rethinking how conference engagement translates into pipeline progression, the conversation should not be about capturing more leads. It should be about understanding intent signals, sustaining conversations, and building post-event momentum into your revenue system.

That is the shift platforms like Samaaro are designed to enable.

ROI for events is often reduced to a cost-versus-revenue analysis. This approach is driven by leadership pressure to defend spending. As finance teams focus on quick returns, visible closed deals become the default measure of success. Although this framework is practical and simple to report, it ignores the broader ways that events add value.

Event ROI is frequently treated as a mathematical problem, even though much of an event's impact is difficult to measure right away. Long before revenue arrives, deals may move forward, relationships may strengthen, and decision confidence may rise. Viewing ROI strictly through a spreadsheet lens misses these dynamics and risks undervaluing events that shape the business.

This blog explains what Event ROI truly measures, why spreadsheets and immediate revenue understate its value, and how to interpret business impact, pipeline influence, deal acceleration, and strategic outcomes correctly.

Event ROI evaluates business value relative to cost. The focus is on outcomes, not activity, meaning that simply hosting or attending an event does not equate to return.

Value includes more than closed revenue. It encompasses pipeline influence, accelerated deals, increased account engagement, and strategic positioning. ROI measures whether the investment produced a meaningful business impact, not whether an event occurred efficiently.

ROI shows what happened, not why it happened. Explaining the why requires interpretation beyond raw numbers: placing the financial and strategic effect of events in context instead of relying on simplistic cost-versus-revenue calculations.

Narrow ROI thinking assumes immediate revenue signals event effectiveness. Events rarely work this way. Many influence deals already in motion, contribute to multi-touch account engagement, or affect outcomes indirectly.

The time lag between the event and measurable outcomes reduces the visibility of ROI in the short term. Revenue contribution may be distributed across several events, sales interactions, and other initiatives, making single-event attribution difficult.

If ROI counts only immediate revenue, it ignores the most common ways events actually create value: influencing probability, accelerating decisions, and expanding account engagement. Spreadsheet-only ROI does not reflect these effects and underestimates true business impact.

Event ROI extends beyond immediate revenue. To understand the full business impact, it is important to recognize the different ways events create value. These effects often influence deals, accounts, and relationships in ways that are not captured by spreadsheets. The following categories clarify how ROI manifests across business dimensions:

Events move opportunities forward rather than create them. They increase deal seriousness and shape decision confidence across accounts.

Events reduce friction and shorten cycles. They shift timelines without guaranteeing immediate closure, affecting velocity rather than creation.

Events broaden stakeholder participation and strengthen relationships. Influence spreads beyond the attendees directly involved.

Events can contribute directly or indirectly. Some outcomes are measurable in closed revenue, others only in influenced pipeline or delayed wins.

Strategic events often prioritize influence over immediate revenue. Executive alignment, relationship building, and long-term positioning create value that spreadsheets cannot capture.

Small events can produce an outsized impact. A single meeting, conversation, or engagement may shape multi-stakeholder decisions. Measuring only visible revenue ignores this reality.

Spreadsheet-only ROI assumes impact is linear and immediate. It treats influence as optional and timelines as uniform. Events that create directional change across accounts or accelerate deals appear weak on paper.

Ignoring strategic value leads to misallocation of resources. Teams may cancel high-impact events because short-term ROI looks low.

Low visible ROI does not equal low business impact. It reflects the limits of calculation. Strategic events require judgment, not simple math. Treating the spreadsheet as the final word undervalues what actually drives business outcomes.

ROI numbers alone are incomplete. They show what happened financially, not why it happened.

Events influence deals, accounts, and relationships in ways that raw revenue cannot reflect. Attribution provides the context necessary to interpret ROI correctly.

Without context, ROI can mislead. A strong number may overstate the contribution. A weak number may understate real strategic impact.

Interpreting ROI requires understanding the environment, the pipeline, and the multi-touch influences at play. Numbers without explanation create false certainty and encourage poor decision-making.

ROI answers what happened. Attribution explains why. Ignoring this distinction reduces events to short-term cost centers, rather than strategic instruments.

Event ROI is often misunderstood. Misreading the numbers leads to flawed decisions and misallocated resources.

Event ROI requires judgment, context, and interpretation. Relying on metrics alone blinds leadership to the real value events create.

ROI for events cannot be fully captured in a spreadsheet. It is a lens for assessing actual business impact. Low immediate revenue does not equate to low value. Pipeline movement, deal acceleration, and account engagement are significant even when they are not immediately apparent.

Ignoring these elements distorts decision-making and reduces ROI to a meaningless figure.

Treating ROI as a formula undervalues strategic events and produces false certainty. Read the number alone, and you are blind to what the event actually achieved. ROI is about impact, not arithmetic. Misinterpret it, and you misjudge the business itself.

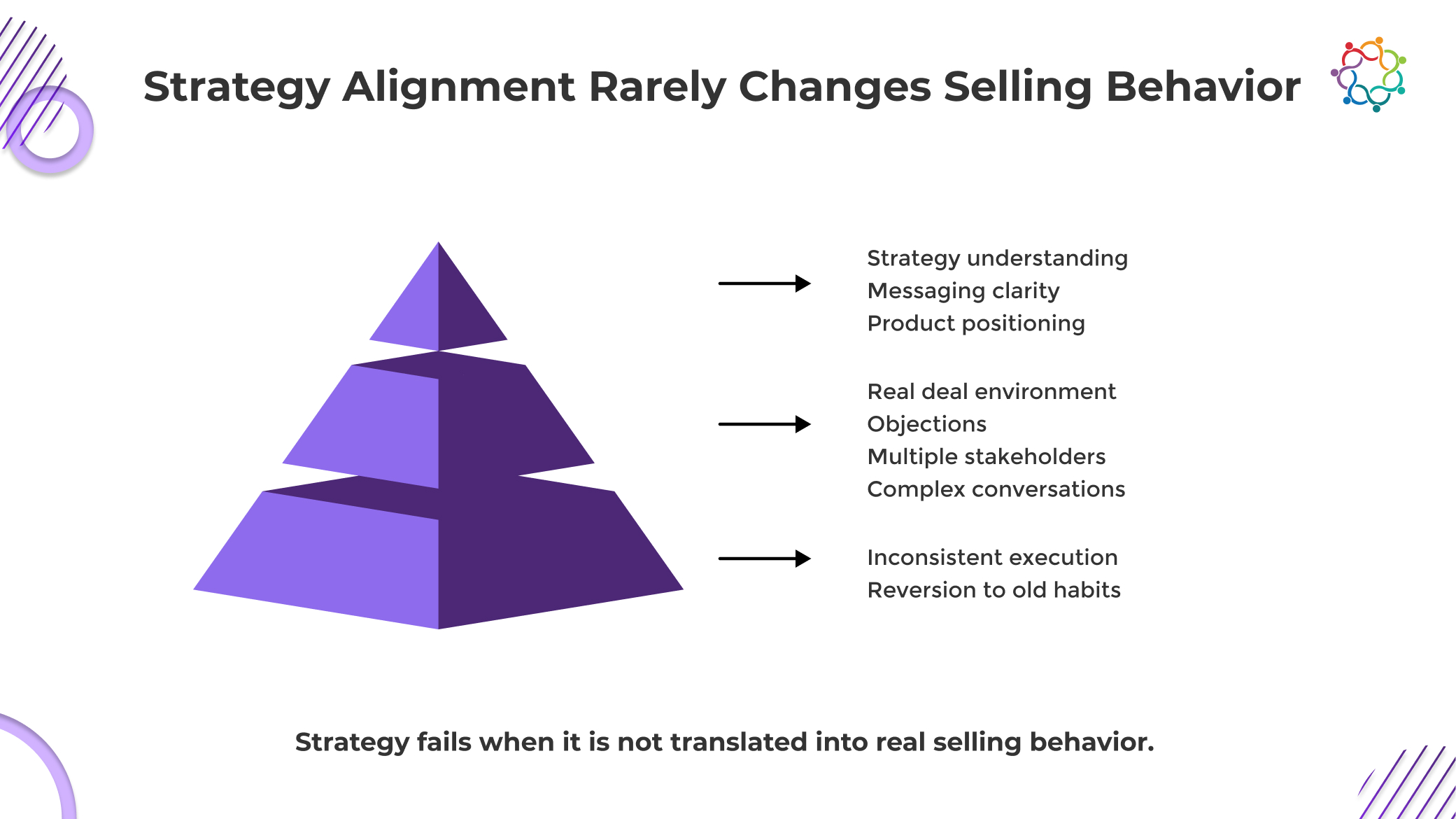

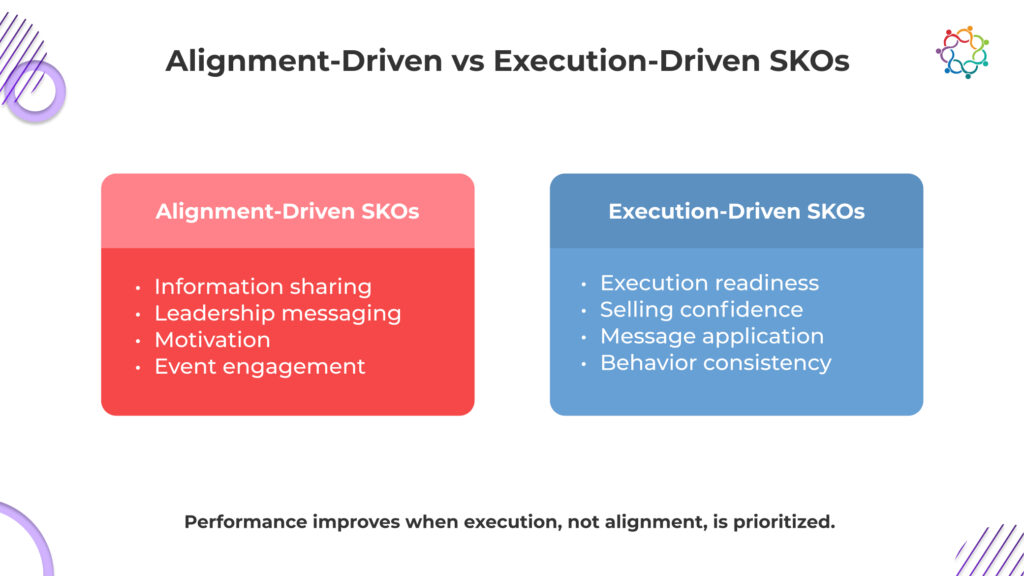

Most Sales Kickoffs fail at the one outcome they are trusted to deliver: better sales performance.

They create energy, alignment, and confidence in the moment. The room is focused. Messaging feels clear. Leadership sees a unified team ready to execute. It looks like readiness.

It is not.

This is perceived readiness, not actual selling readiness. The difference is where performance breaks. Once sellers return to live deals, complexity takes over. Pressure rises, habits resurface, and new messaging struggles to survive.

This is the SKO Performance Gap. Alignment created inside the event does not translate into consistent execution outside it.

Sales teams do not fail because they lack clarity. They fail because clarity was never converted into behavior.

This blog explains why that gap exists and why it continues to undermine sales performance.

You assume that once the team understands the strategy, execution will follow. It doesn’t.

Clarity in a room does not survive pressure in a deal.

During a sales alignment event, positioning sounds sharp, differentiation feels obvious, and messaging appears easy to apply. Sellers can repeat it. Leadership hears consistency. It feels like progress.

Then real conversations begin.

Buyers challenge assumptions. Deals stall. Objections force deviation. In those moments, sellers do not rely on what they just learned. They fall back on what they have used repeatedly.

Understanding is passive. Execution is conditioned.

If a new approach has not been tested across multiple deal scenarios, it does not become usable. It stays theoretical, disconnected from actual selling environments.

This is where most organizations miscalculate.

They believe communication drives change. It does not.

Behavior changes only when the strategy is applied, refined, and repeated until it becomes the default. Without that, nothing about execution shifts.

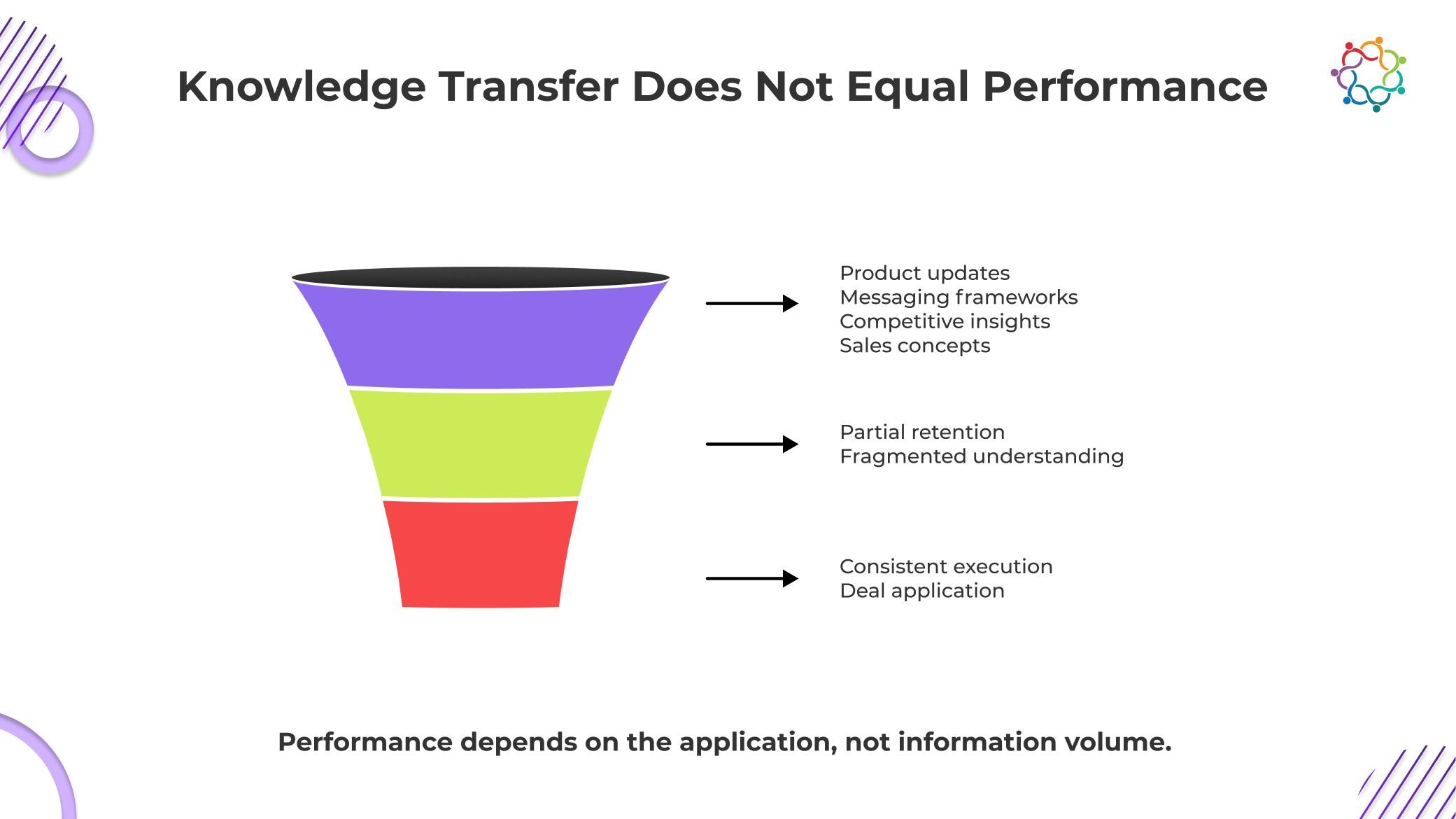

Sales launch events are dense information environments. In a short span of time, teams absorb product updates, new messaging, competitive insights, and revised frameworks. The assumption is simple: more knowledge leads to better performance.

It does not.

Knowledge without repetition decays faster than teams realize.

What feels clear during the event begins to fade within days. Not because the content was weak, but because it was not embedded in execution. The brain prioritizes what is used, not what is heard.

Sellers return to active pipelines where new knowledge has to fight for relevance. And most of the time, it loses.

This is the uncomfortable reality behind the SKO Performance Gap. Sales teams may know what to say, but they do not know how to apply it consistently across different deal situations.

Performance requires operational capability: the ability to apply new messaging consistently across different deal situations.

None of this happens through exposure alone.

Launch events deliver knowledge. Performance requires integration. Without a system that forces knowledge into repeated use, the majority of what was learned remains unused.

And unused knowledge has zero impact on revenue.

The breakdown is not sudden. It follows a predictable chain that most organizations ignore.

Alignment inside the event creates confidence. But what happens next determines whether that confidence translates into performance.

The moment sellers return to the field, the environment shifts. The structured clarity of the kickoff is replaced by fragmented deal realities. Competing priorities take over. Focus disappears.

Sales behavior is not driven by recent learning. It is driven by repeated habits. Years of selling patterns do not disappear after a single event. When pressure rises, sellers fall back to what feels natural.

As habits take over, new messaging begins to fragment. Some sellers try to apply it. Others partially adapt. Many revert entirely. The result is inconsistency across deals.

When messaging is inconsistent, execution weakens. Differentiation becomes unclear. Deals progress unevenly. Win rates do not improve. Sales cycles remain unchanged.

This is the full expression of the SKO Performance Gap:

alignment → return to field → habit dominance → message inconsistency → performance stagnation

The critical insight is this. Sales-aligned events do not fail because they lack quality. They fail because their impact ends when execution begins.

Once the event ends, the system that created the alignment disappears. Nothing replaces it.

And without a system to sustain execution, alignment has no chance of becoming performance.

Most organizations still evaluate these events based on what happens inside the room. Engagement looks strong. Messaging lands cleanly. Leadership alignment feels complete. It creates the impression that the objective was achieved.

It wasn’t.

An internal sales launch event is not successful because information was delivered effectively. It is successful only if selling behavior changes afterward. That is the only outcome that impacts revenue.

This is where most teams get it wrong. They confuse communication quality with performance impact. Clear messaging does not matter if it is not used in live deals. High participation does not matter if execution remains unchanged.

The real test begins after the event ends.

If sellers are not running conversations differently, handling objections with new positioning, and progressing deals with more consistency, nothing has improved.

Execution is the only metric that counts. Everything else is a distraction that makes failure look like success.

Performance improves through repetition and reinforcement, not one-time exposure.

This is not a suggestion. It is a requirement.

Sales behavior changes only when new approaches are used repeatedly in real situations. That repetition builds confidence. It reduces friction. It turns conscious effort into instinct.

A single Sales Kickoff cannot achieve this.

Sellers need to use new approaches repeatedly in real deals until those approaches become instinct.

Without reinforcement, none of this happens.

The SKO Performance Gap persists because organizations treat the event as the endpoint. In reality, it should be the starting point of execution.

What happens after the kickoff determines whether performance changes. If there is no structured reinforcement, the system collapses.

Sellers revert. Messaging fades. Habits return.

Leadership often underestimates how quickly this happens. Within weeks, most of the alignment created during the event is diluted. Within months, it is largely gone.

Performance does not improve because nothing sustained it.

A launch event can introduce change. Only reinforcement can sustain it.

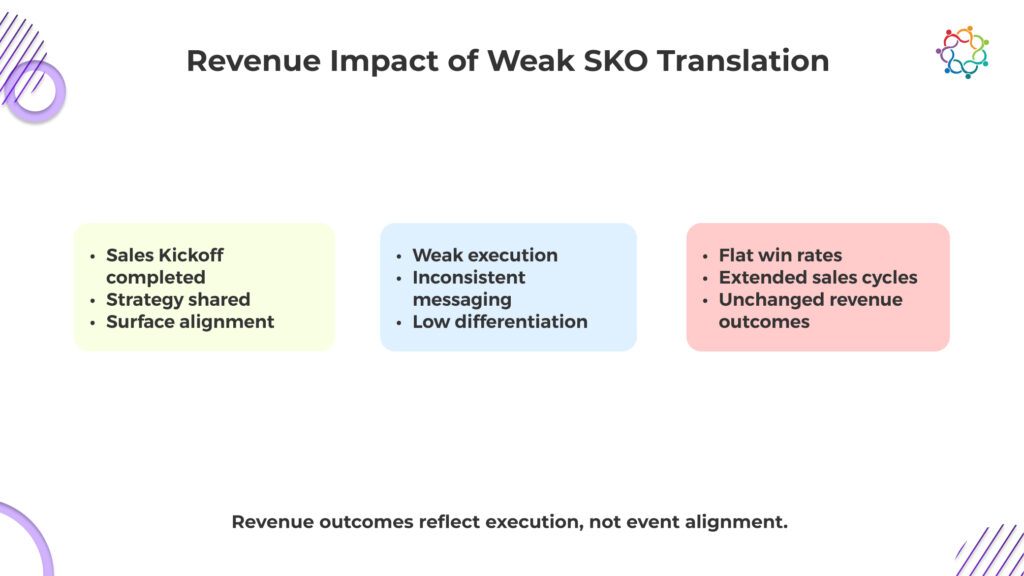

When the SKO Performance Gap is left unaddressed, the consequences are not theoretical. They show up directly in revenue outcomes.

The organization invests heavily in alignment. But execution remains unchanged. The result is a disconnect between strategy and performance.

The impact is measurable: win rates do not improve, and sales cycles remain unchanged.

This is not a minor inefficiency. It is a systemic failure.

Sales launch events are positioned as strategic investments. But when they fail to influence execution, they become isolated events with no revenue return.

The cost is not just the event itself. It is the missed opportunity to improve performance at scale.

Organizations believe they have aligned the team. In reality, they have created temporary clarity without lasting impact.

Revenue does not respond to clarity. It responds to execution.

And execution has not changed.

You already know the gap exists. Yet these events continue to be designed the same way.

Because alignment is easy to prove. Execution is not.

A sales strategy summit can show participation, engagement, and feedback within days. Leadership gets clean reports, visible activity, and a narrative of success. It feels controlled.

Execution tells a different story. It exposes whether sellers actually changed how they run discovery, handle objections, and move deals forward. That takes time. It is harder to measure. And more importantly, it is harder to defend when results do not improve.

So the system defaults to what is visible.

You are not optimizing for performance. You are optimizing for optics.

This is why the gap persists. Not because the organization lacks awareness, but because it continues to reward alignment signals over execution outcomes.

And as long as that continues, nothing about sales performance will change.

Sales Kickoffs play an important role. They align teams, communicate priorities, and create shared understanding. That value is real.

But it is incomplete.

Performance improves only when strategy becomes behavior. And behavior changes only when it is applied, reinforced, and repeated in real-life situations.

The SKO Performance Gap is not a failure of events. It is a failure of translation.

Organizations assume that alignment will naturally evolve into execution. It does not. It requires a system that extends beyond the event, forces application, and sustains change.

Without that system, nothing shifts.

Sales teams don’t underperform because they lack strategy. They underperform because strategy never becomes behavior.

Most training events look successful for the wrong reasons. Customers attend, engage, ask questions, and leave with confidence. The environment is controlled, explanations are guided, and answers are immediate. Everything feels clear in the moment.

This is where the Customer Clarity Gap begins.

The confidence built during these sessions is situational, not transferable. It depends on structured guidance, not independent understanding. Remove that structure, and the clarity collapses. Customers return to real workflows and realize they cannot interpret, apply, or navigate the product without support.

Organizations mistake in-session confidence for real comprehension. They measure engagement and assume understanding. But what customers experienced was assisted clarity, not owned clarity.

Training events create the appearance of understanding. They rarely ensure it.

This blog explores why this gap exists, how it forms, and why it continues to undermine real product understanding.

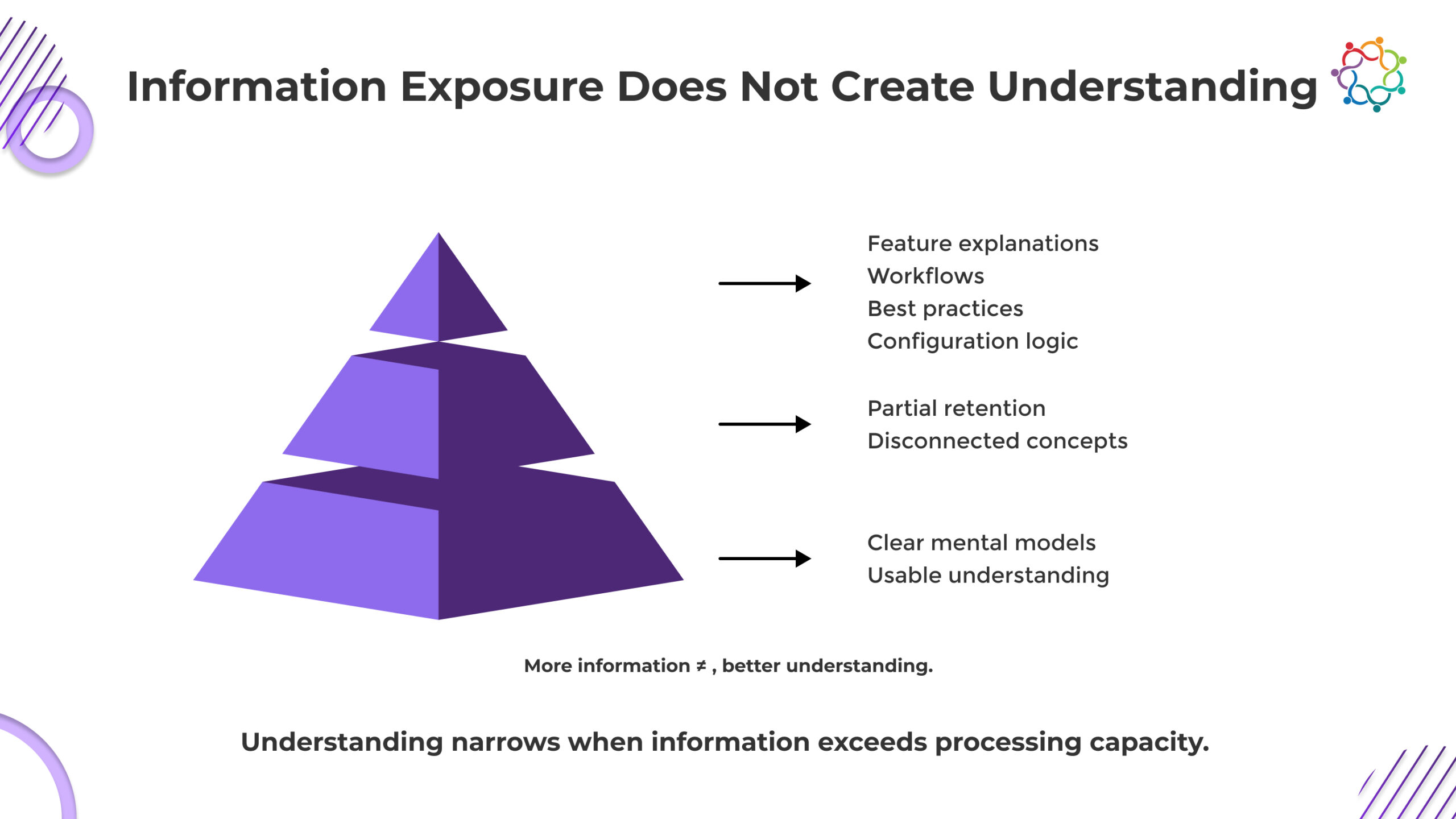

You are overestimating what your customers actually take away from training events.

Exposure feels like progress. Features are demonstrated, workflows are explained, and best practices are presented. Customers see everything. They follow along. It looks like learning is happening.

It is not.

Seeing a feature explained is not the same as knowing how to use it.

Understanding requires customers to connect concepts, interpret situations, and make decisions without guidance. That does not happen at the pace most training events operate. When information is delivered faster than it can be processed, the brain does not build understanding. It stores fragments.

Customers leave with scattered knowledge, not a working model of the product.

If your training is optimized for how much you show rather than how much they can apply, you are not educating. You are overwhelming.

And the moment customers try to use the product alone, that gap becomes impossible to ignore.

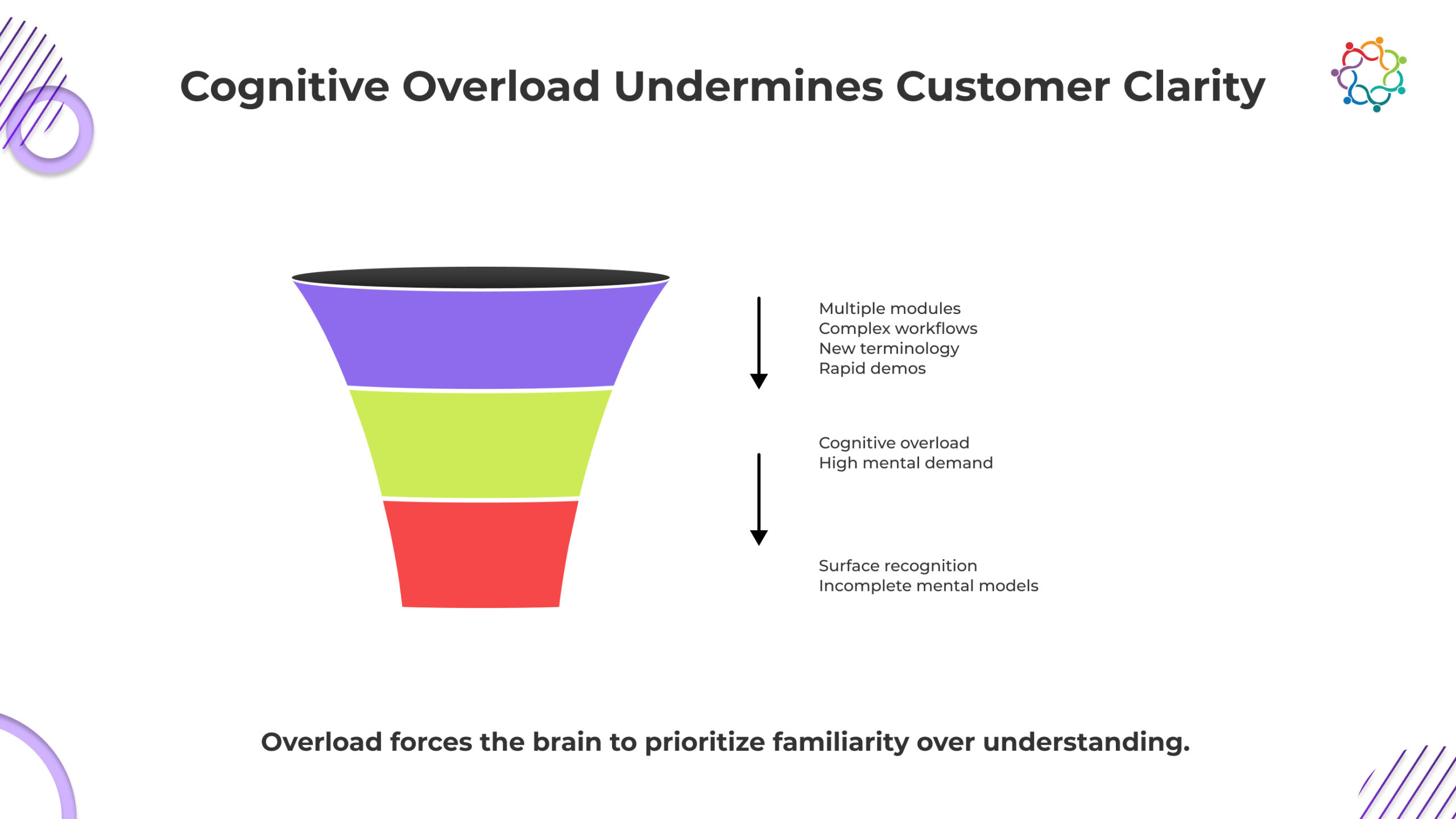

Cognitive overload is not an edge case in training events. It is the default condition you are creating.

You introduce multiple modules, layered workflows, new terminology, and rapid demonstrations in a compressed window, then expect customers to walk away with clarity. They will not. The brain does not process volume into understanding. It filters, shortcuts, and settles for surface recognition.

That is where the real damage happens.

Cognitive overload does not just reduce learning. It creates false confidence. Customers feel they understand because everything looked familiar during the session. But familiarity collapses the moment they try to act without guidance.

This is not a minor gap. It is a structural failure.

If customers leave your training recognizing features but unable to use them, the overload has already done its job. You did not just fail to create clarity. You actively replaced it with the illusion of understanding.

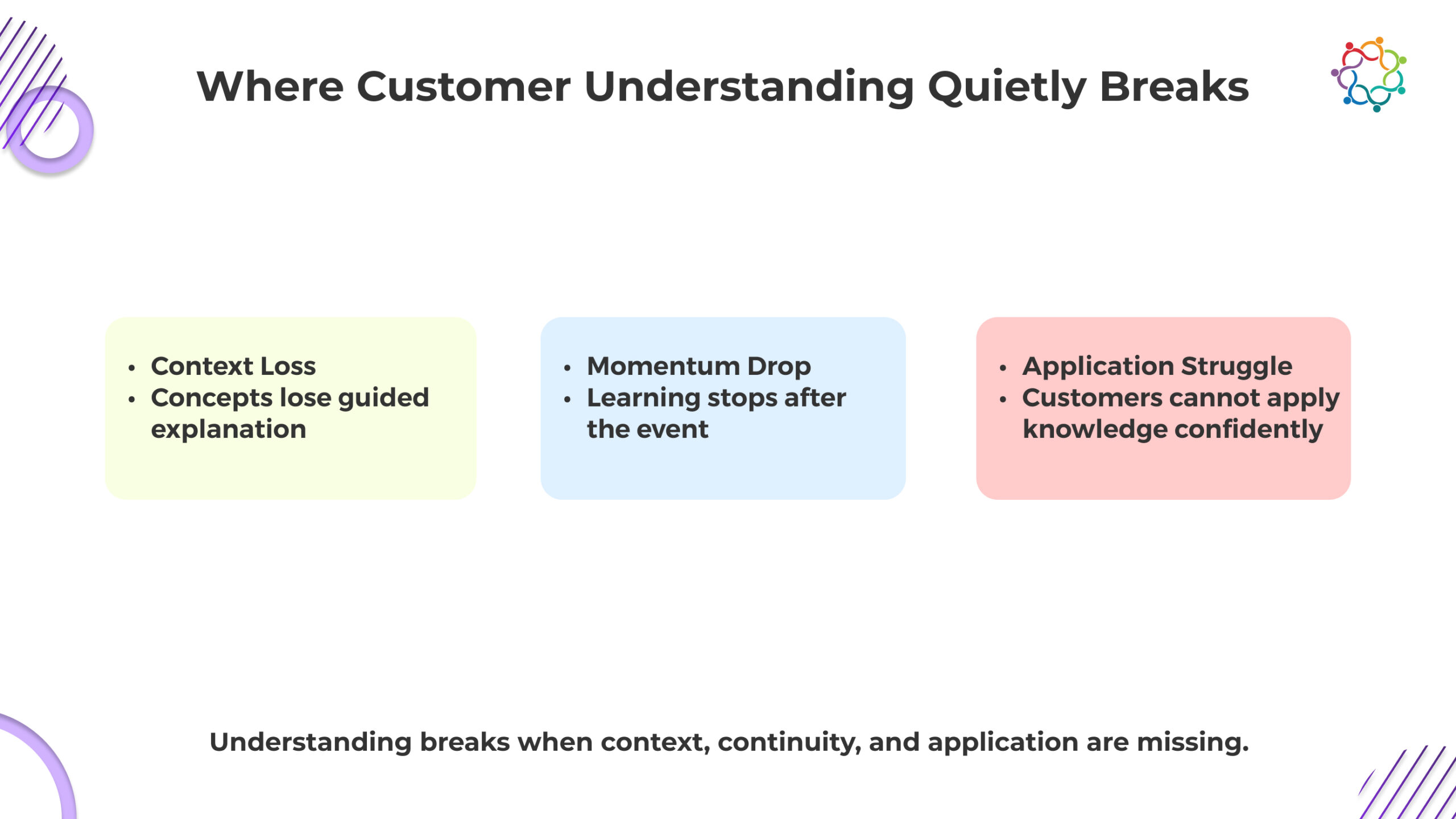

Customer confusion does not appear immediately after training. It unfolds in a predictable chain of breakdowns that most organizations fail to recognize until it is too late.

During training, concepts are presented with structured guidance. Examples are explained step by step. The logic behind workflows is made explicit. Customers rely on this context to make sense of what they see.

Once the event ends, that context disappears.

Customers are left to interpret product concepts on their own. Without reinforcement, the connections between ideas weaken. What once seemed clear becomes ambiguous. The absence of guided context exposes how fragile the initial understanding was.

Training events concentrate learning into a short burst of activity. This creates temporary momentum. Customers are immersed, attentive, and focused.

But comprehension does not develop in bursts. It requires repetition and reinforcement.

When the learning environment stops, the momentum collapses. Without continued exposure, retention declines rapidly. Customers lose access to the mental pathways they briefly formed during training.

Application is where understanding is tested. And this is where the breakdown becomes visible.

Applying product knowledge requires customers to interpret situations, connect concepts, and make decisions without guidance.

Customers who have only experienced guided learning are not prepared for this shift. They hesitate. They second-guess. They avoid using features they are unsure about.

This is the full breakdown chain:

Guided learning → Independent usage → Confusion → Support dependency

The Customer Clarity Gap is not theoretical. It becomes operational at this stage, where customers move from passive learning to active usage and realize they were never truly prepared.

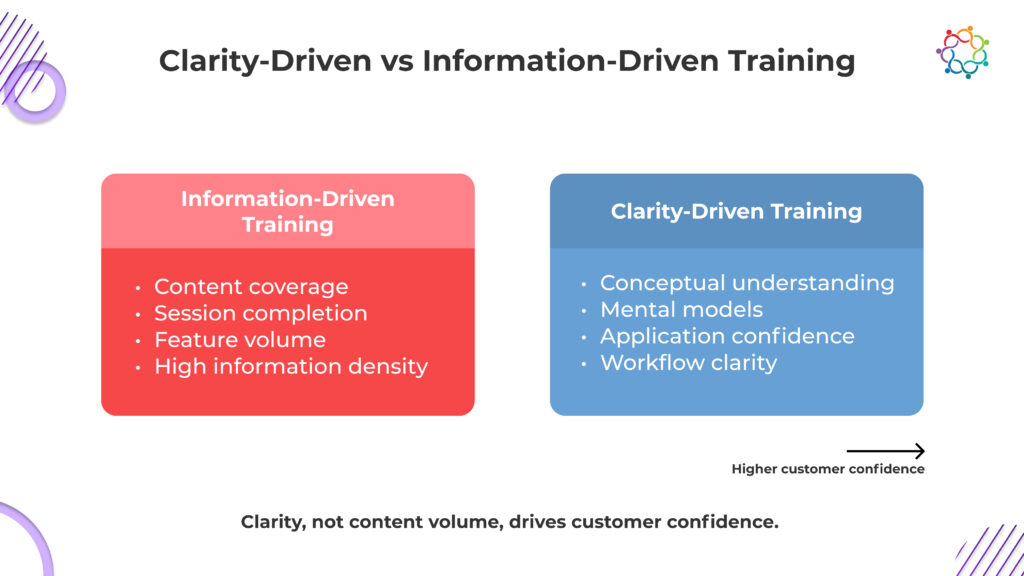

Most training events are not designed to create clarity. They are designed to prove coverage. More features explained, more sessions delivered, more content completed. It feels productive. It is not.

Every additional layer of information without resolution increases ambiguity. Customers leave with more to remember but less ability to decide. They recognize more but understand less. And that confusion does not stay contained. It shows up the moment they try to use the product without guidance.

If your training is increasing information faster than it is reducing uncertainty, you are actively widening the Customer Clarity Gap.

This is the uncomfortable truth. Your training is not neutral. It is either simplifying the product in the customer’s mind or making it harder to use.

If customers still hesitate after training, the problem is not them. It is the ambiguity you left unresolved.

Customer confidence is often treated as a byproduct of training exposure. This is incorrect.

Confidence is a direct outcome of clarity, not exposure.

Customers feel confident when they understand the logic of the product. When they know how features connect, when to use them, and what outcomes to expect. This clarity allows them to act without hesitation.

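Without it, behavior changes immediately. Customers hesitate, avoid features they are unsure about, and turn to support for tasks they should be able to handle on their own.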

This is not a capability issue. It is a clarity issue.

Training events directly influence this outcome. If the event builds clear mental models, customers leave ready to act. If it only delivers information, customers leave uncertain.

The Customer Clarity Gap translates directly into confidence gaps.

Organizations that ignore this connection misdiagnose the problem. They assume customers need more training, more sessions, more exposure. In reality, customers need a clearer understanding, not more information.

Confidence does not come from seeing more. It comes from understanding deeply enough to act independently.

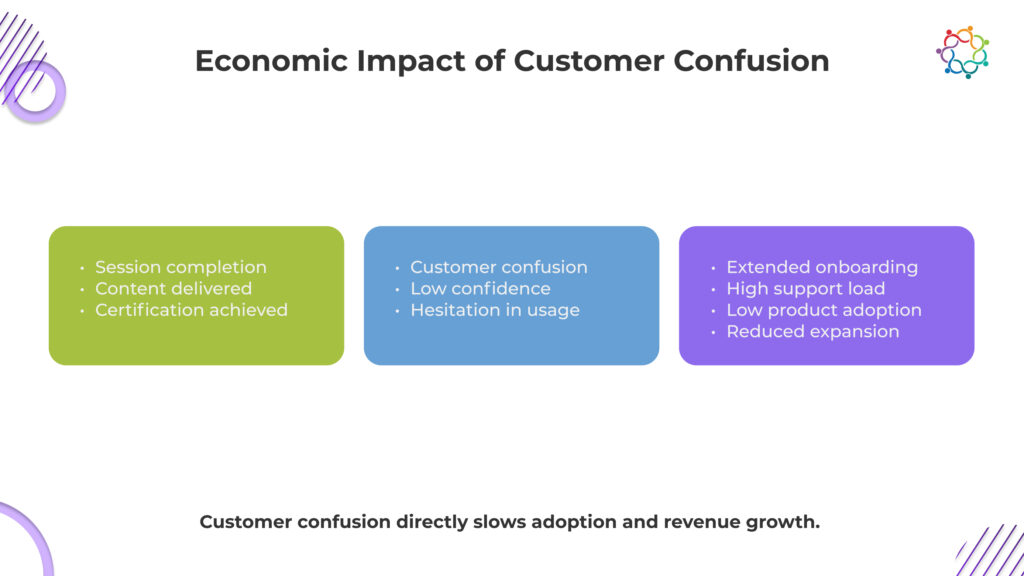

Customer confusion is not just a learning problem. It is a revenue problem.

When training events fail to close the Customer Clarity Gap, the consequences extend across the entire customer lifecycle.

Onboarding timelines extend because customers take longer to reach functional proficiency. Support costs increase as customers rely on assistance for tasks they should be able to perform independently. Product usage remains shallow because uncertainty limits exploration.

This is the hidden cost of ineffective customer training events.

Organizations often focus on the cost of delivering training. They rarely measure the cost of confusion that follows it.

Clarity accelerates adoption. Confusion delays it.

Every unresolved gap in understanding translates into delayed value realization and lost revenue potential. Training events are not just educational touchpoints. They are economic levers that directly influence customer lifetime value.

If the problem is so clear, why do organizations continue to design training events that overload customers?

The answer is uncomfortable.

Organizations reward what is measurable, not what is effective.

Training success is often defined by metrics such as the number of sessions delivered, the features covered, and the completion rates. These metrics are easy to track, easy to report, and easy to scale.

But they do not measure understanding.

As a result, training programs evolve in the wrong direction. More content is added to demonstrate value. More features are included to justify the program. More sessions are created to increase engagement.

Each addition increases information density but does nothing to improve clarity.

The system reinforces the behavior because it produces visible outputs. Content delivery becomes the goal, not customer comprehension.

This is why the Customer Clarity Gap persists. Not because organizations are unaware of the problem, but because their measurement systems reward the wrong outcomes.

Until success is redefined around customer understanding, training events will continue to expand in volume while failing in impact.

Training events are not failing because customers are disengaged. They are failing because organizations confuse exposure with understanding. High attendance and engagement only prove that information was delivered, not that it was absorbed, connected, or applied.

The Customer Clarity Gap persists because training environments create temporary confidence that collapses under real-world usage. When customers cannot act independently, training has not succeeded, regardless of metrics.

Customers don’t struggle because they weren’t trained. They struggle because they never truly understood.

Until clarity becomes the measure, training will continue to underdeliver where it matters most.

For teams trying to understand whether customer education is actually driving product clarity, the approach must shift from tracking participation to interpreting how customers engage, process, and retain learning beyond the event.

Because customer education is not complete when the session ends. It is complete when clarity translates into confident product usage.

Event ROI is often judged too quickly. Leadership asks for immediate answers, dashboards produce early signals, and conclusions form before outcomes fully unfold. In many cases, events are evaluated within narrow measurement windows that capture activity but miss delayed business effects. ROI then appears fixed, even though it is time-dependent.

Event ROI doesn’t change because the event changes; it changes because time reveals different outcomes.

This blog covers how short-term and long-term event ROI differ, what each time horizon actually reveals, what early measurement misses, and why judging events too soon leads to distorted conclusions about their true contribution.

Short-term event ROI captures visible, immediate outcomes. It reflects early engagement signals, rapid follow-ups, and initial pipeline activity that emerges soon after the event concludes.

In shorter measurement windows, teams can observe quick deal movement, new conversations initiated, and early-stage opportunities influenced. These indicators provide tactical validation. They show whether the event generated immediate commercial momentum.

Short-term event ROI is especially relevant in fast sales cycles or high-velocity environments where decisions move quickly. It can signal whether messaging resonated and whether the right audience was present.

Short-term ROI is useful but incomplete.

It reflects what materializes within the initial measurement window. It does not account for delayed decision-making, complex buying processes, or influence accumulation. Its strength lies in clarity and immediacy. Its limitation lies in temporal scope.

Short-term event ROI often overlooks outcomes already in motion before the event occurred. Deals that were progressing may accelerate quietly, without being recognized within narrow measurement windows.

It also misses relationship-building effects. Events frequently influence internal buyer discussions that unfold weeks or months later. Consensus-building, budget alignment, and stakeholder validation rarely happen instantly.

Sales follow-ups may take time to convert into visible revenue. Conversations initiated at events can reappear later in the pipeline without being directly tied back to the original interaction.

The absence of immediate revenue is not evidence of low impact.

Short-term measurement windows privilege speed over depth. They highlight rapid conversions while obscuring delayed outcomes. When ROI is judged only within these early windows, the interpretation skews toward immediacy rather than influence.

Long-term event ROI expands the measurement window and changes what becomes visible. It does not invent impact; it uncovers influence that requires time to surface. When evaluation moves beyond immediate outcomes, different patterns emerge.

Opportunities already in motion may close faster after meaningful event interactions. The event may not create the deal, but it can reduce friction and move decisions forward.

Extended timelines reveal whether stakeholders gained clarity, alignment, or conviction. Confidence is rarely instant, but it can materially affect final outcomes.

Events often shape how key accounts perceive a brand across multiple touchpoints. Over time, this influence becomes visible in decision quality and depth of engagement.

Repeated exposure builds familiarity and trust. Long-term ROI captures this accumulation, which short-term measurement windows typically overlook.

Long-term event ROI is harder to isolate because attribution clarity decreases over time. Multiple influences overlap. Marketing campaigns, sales outreach, peer conversations, and market shifts intersect.

As time passes, attribution decay sets in. Systems struggle to connect delayed outcomes back to earlier interactions. Measurement windows close before the influence fully materializes.

System limitations amplify this challenge. Most reporting structures prioritize recent activity and immediate conversion. Delayed outcomes often appear disconnected from their original triggers.

Difficulty of measurement does not reduce the importance of the impact.

Long-term ROI may be less visible, but it often reflects deeper strategic influence. Its opacity stems from structural complexity, not diminished value.

When event ROI is judged too early, strategic errors follow. High-impact programs may be cut because immediate revenue appears modest.