Sales and marketing see business events differently. The marketing team wants to build an audience and create a meaningful experience, while the sales team is more concerned with generating income through sales meetings and converting leads into customers. Although both departments benefit from participating in business events, information often fails to flow between them.

Because of this lack of communication between the two departments, there are many missed opportunities in the B2B event strategy. Without a coordinated effort between marketing and sales to properly utilise event engagement data, both departments miss out on valuable information. AI provides the opportunity for both teams to work together and create a common language, a single data set, and a better way to track leads through to eventual income generation.

This blog will provide a breakdown of how AI has bridged the gap in business event ROI that has existed for many years.

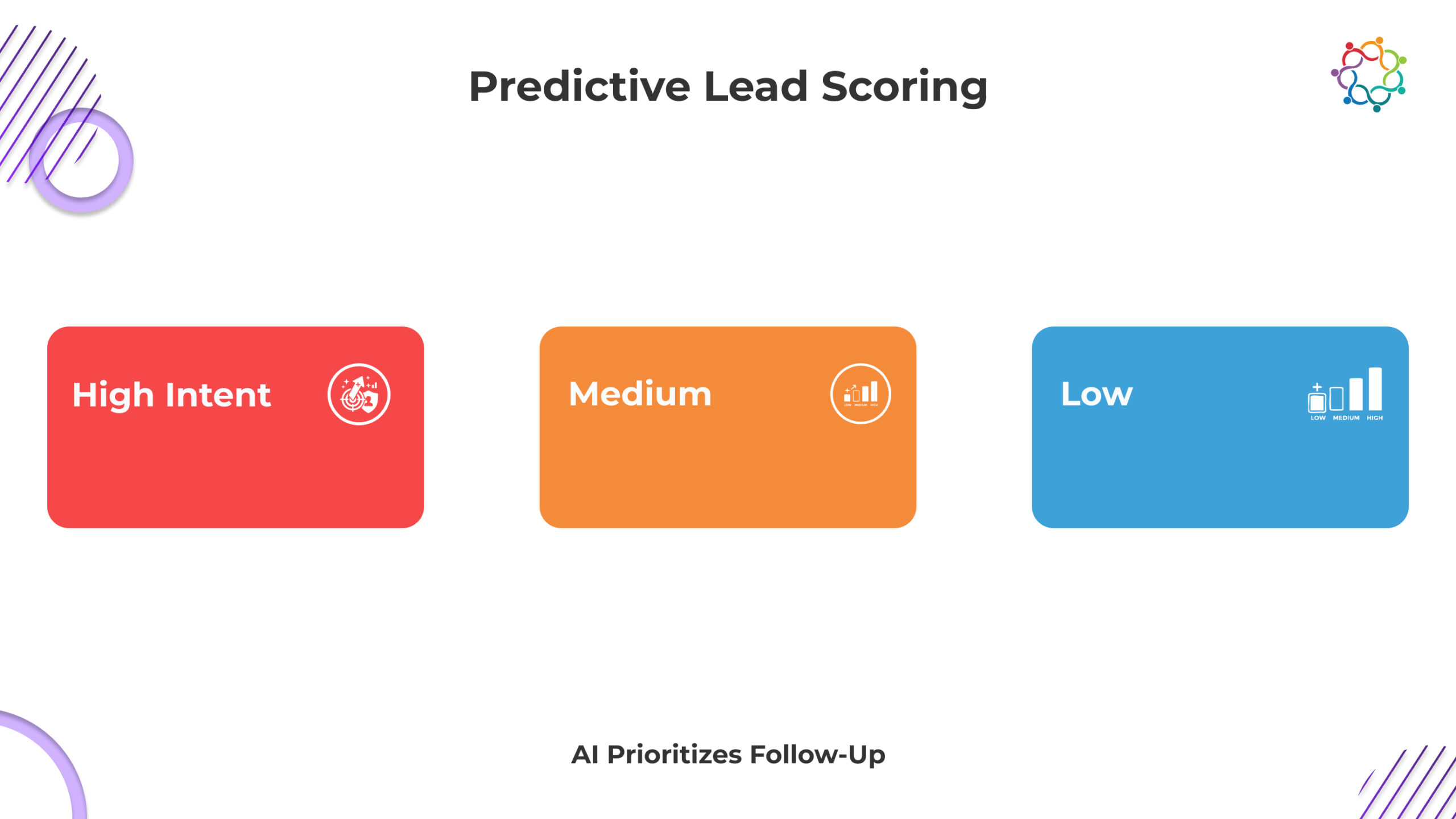

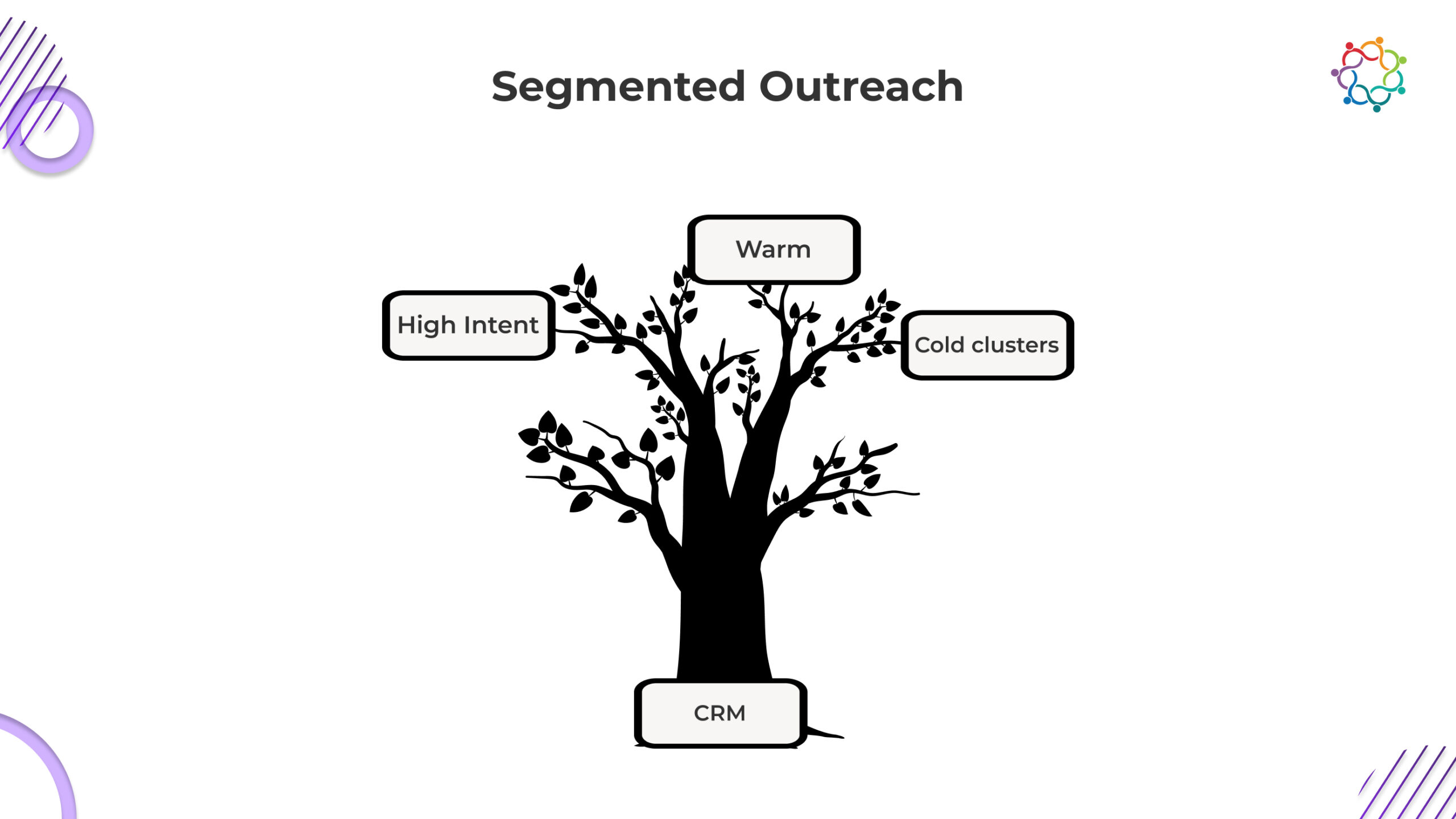

Traditionally, every lead on an event list is treated as equal. Layering AI on top of these lists adds intelligence drawn from customer behaviour data, which reveals true buying intent.

Rather than relying on surface-level engagement measures such as click counts or session attendance, AI properly evaluates:

From this, AI can provide a probability-based intent score for every user.

This leads to a much simpler, but far more impactful, process when it comes to assessing follow-up:

Predictive scoring eliminates guesswork and guides sales effort toward the customers with the highest probability of conversion.
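One way to picture a probability-based intent score is a logistic function over weighted engagement signals. The sketch below is illustrative only: the signal names (`booth_minutes`, `sessions_attended`, `downloads`, `requested_demo`), the weights and the bias are assumptions made up for this example, not values from Samaaro or from any real model. In practice, weights like these would be learned from historical conversion data.

```python
import math
from dataclasses import dataclass


@dataclass
class Engagement:
    """Hypothetical per-attendee engagement signals captured at an event."""
    name: str
    booth_minutes: float
    sessions_attended: int
    downloads: int
    requested_demo: bool


# Assumed, hand-picked weights for illustration; a real model would fit these.
WEIGHTS = {
    "booth_minutes": 0.08,
    "sessions_attended": 0.4,
    "downloads": 0.3,
    "requested_demo": 1.5,
}
BIAS = -3.0


def intent_score(e: Engagement) -> float:
    """Map weighted signals to a probability-like score between 0 and 1."""
    z = (
        BIAS
        + WEIGHTS["booth_minutes"] * e.booth_minutes
        + WEIGHTS["sessions_attended"] * e.sessions_attended
        + WEIGHTS["downloads"] * e.downloads
        + WEIGHTS["requested_demo"] * (1.0 if e.requested_demo else 0.0)
    )
    return 1.0 / (1.0 + math.exp(-z))


def rank_leads(leads: list[Engagement]) -> list[Engagement]:
    """Order leads so the highest-scoring attendee comes first."""
    return sorted(leads, key=intent_score, reverse=True)
```

Sales could then work the ranked list from the top, which is the "follow-up by probability of conversion" idea described above in its simplest form.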

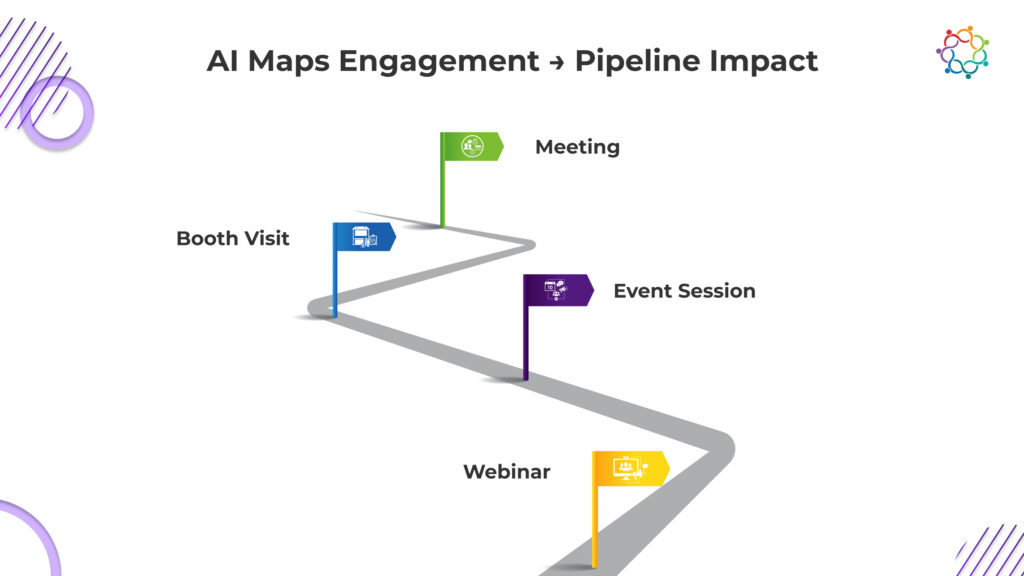

Many high-value moments take place throughout an event, such as meetings, product demonstrations and expert discussions. However, the vast majority of businesses are unable to match the right attendee to the right person at the right time.

AI can identify attendees and route them to the appropriate representatives automatically.

AI uses information from an attendee’s profile, industry, behaviour and previous experiences to determine who they should meet, including the appropriate exhibitor for each attendee. Based on the attendee’s live behaviour signals, AI then suggests the next available actions.

What This Means:

Attribution is the greatest source of friction between sales and marketing.

Marketing says the event drove pipeline; sales says the pipeline was already in motion before the event.

Leadership sees only the numbers, not the influence behind them.

With AI, that changes. AI combines behavioural engagement data with CRM activity to create a map that displays:

With AI, an organisation gains a clear and transparent view of the path that generated revenue, and can track:

For the first time, the two departments are looking at the same true story instead of looking down parallel paths.

Samaaro tackles sales–marketing misalignment by treating event data as a shared intelligence layer, not a departmental artifact.

Built on an AI-driven event operating model, Samaaro connects engagement signals from events directly to sales activity and pipeline outcomes, ensuring both teams operate from the same source of truth.

Instead of fragmented views, Samaaro unifies:

This creates a single funnel view where marketing understands downstream impact and sales understands upstream intent.

In practice, this enables teams to:

Samaaro doesn’t replace sales or marketing tools; it synchronises them around event intelligence, allowing alignment to emerge from data rather than coordination meetings.

For many years, enterprises have worked to close the gap between sales and marketing by having meetings, creating processes and communicating better. The main issue, however, has always been fragmented intelligence.

With unified engagement data, artificial intelligence can provide insights into potential customer types, automate routing of leads and connect activity to revenue, enabling businesses to achieve sales-marketing alignment without having to manually create it through communication or the use of other tools.

Artificial Intelligence can convert event data from a series of isolated, one-time occurrences to a systemic, continuous model that supports ongoing efforts to generate revenue. Event-based alignment is no longer a choice for today’s enterprises; it is a critical component of ongoing revenue growth based on event-driven models.

In recent years, the real estate market in Dubai has expanded beyond showrooms and presentations; it has become a dynamic, interactive marketing platform that lets developers showcase their products to a wide range of potential buyers, investors and global audiences.

Today, a Dubai property launch is no longer a single event; it is an all-encompassing marketing platform where developers create an experience that combines storytelling and technology with buyer insights to drive sales.

These changes have dramatically altered how real estate developers in Dubai operate. Because competition in the market is intense, developers must keep innovating and evolving their marketing strategies to remain competitive.

Through this blog, we will explore how some of the most successful developers in Dubai have turned their launch events into a streamlined marketing engine, from start to finish.

For international investors, Dubai is more than a city; it is an aspirational lifestyle destination. Real estate brands leverage this perception by designing launch events that feel like cinematic reveals rather than corporate gatherings.

These launches are curated to evoke emotion, build desire, and shape perception instantly. For affluent global buyers, the energy and prestige of the event become part of the brand narrative itself.

In a market where investors often make decisions from abroad, the launch experience must create trust, clarity, and excitement. Dubai’s developers understand that global buyers don’t just purchase property; they invest in a lifestyle. Events are the most powerful medium to communicate that lifestyle.

This shift mirrors a broader transformation across the UAE, where events have become central to experience-led brand strategies rather than one-off promotional moments.

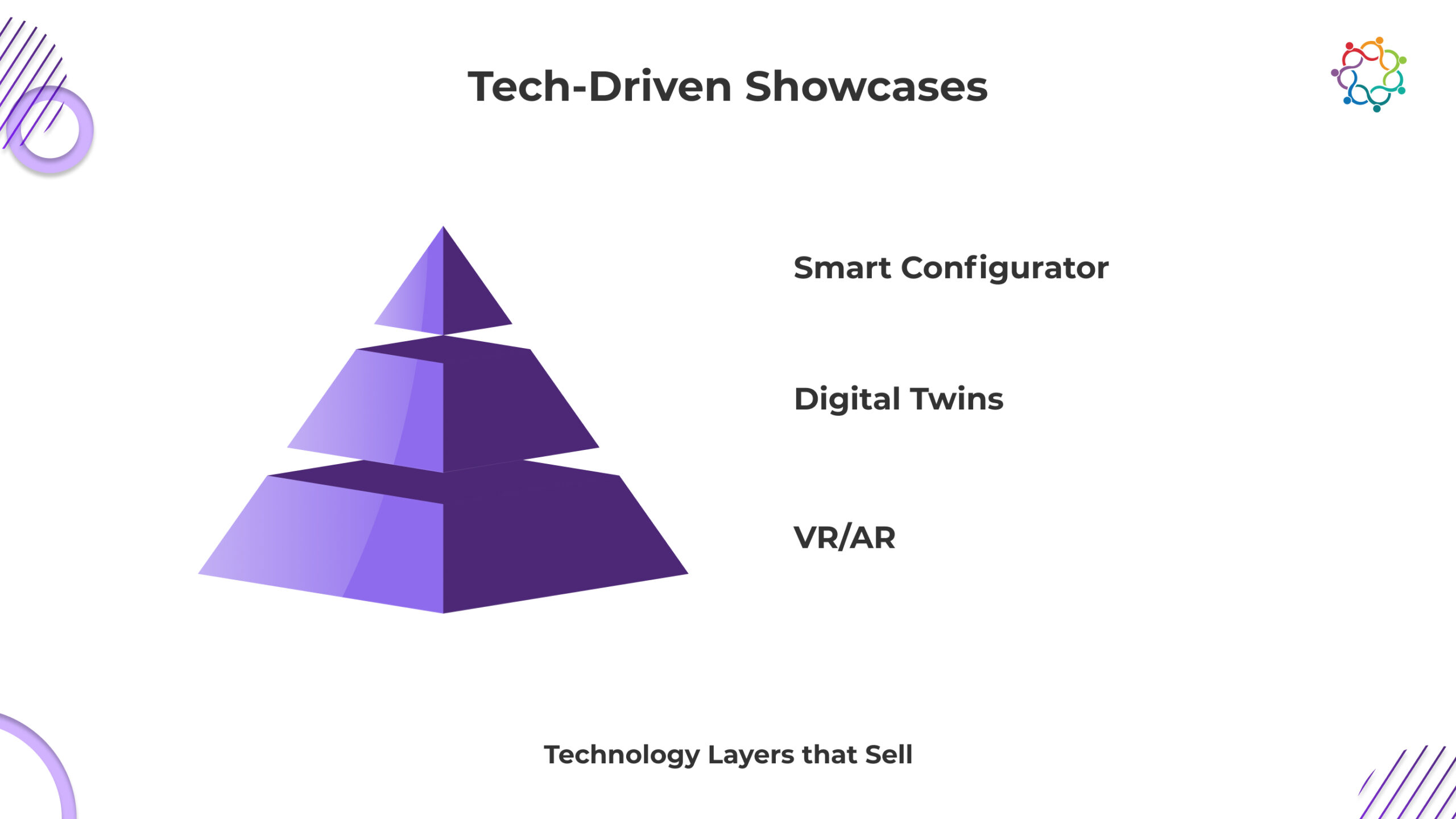

While the glamour of Dubai’s launches draws immediate attention, technology has become the backbone of the experience.

Perhaps the most transformative element is real-time personalization. Investors can select unit types, switch interior palettes, or view payment plans on demand, allowing them to visualize their purchase journey immediately.

This level of tech-enhanced clarity speeds up investor confidence. In a high-value, emotion-driven market, interactive and immersive experiences significantly reduce ambiguity, making decision-making faster and more informed.

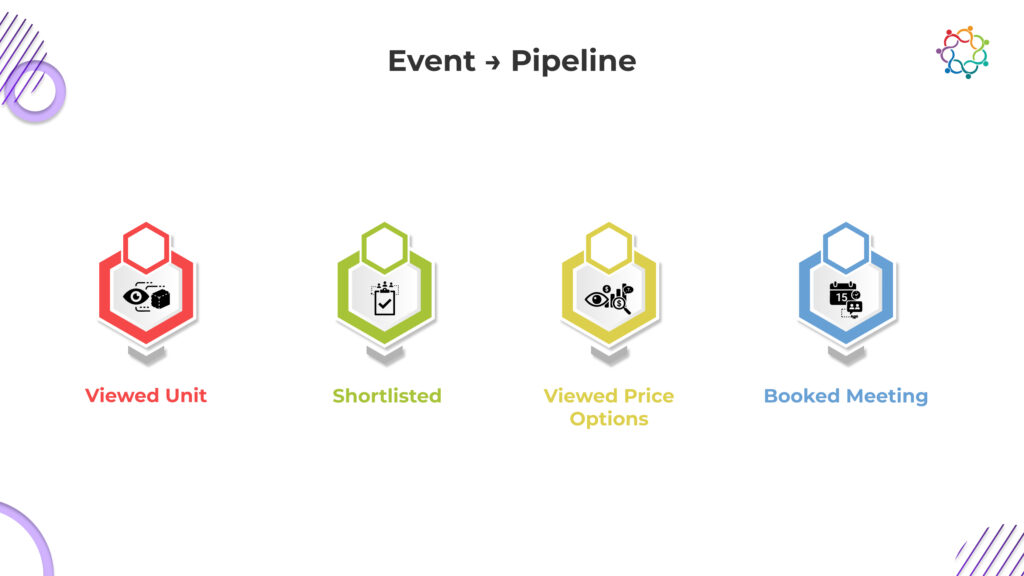

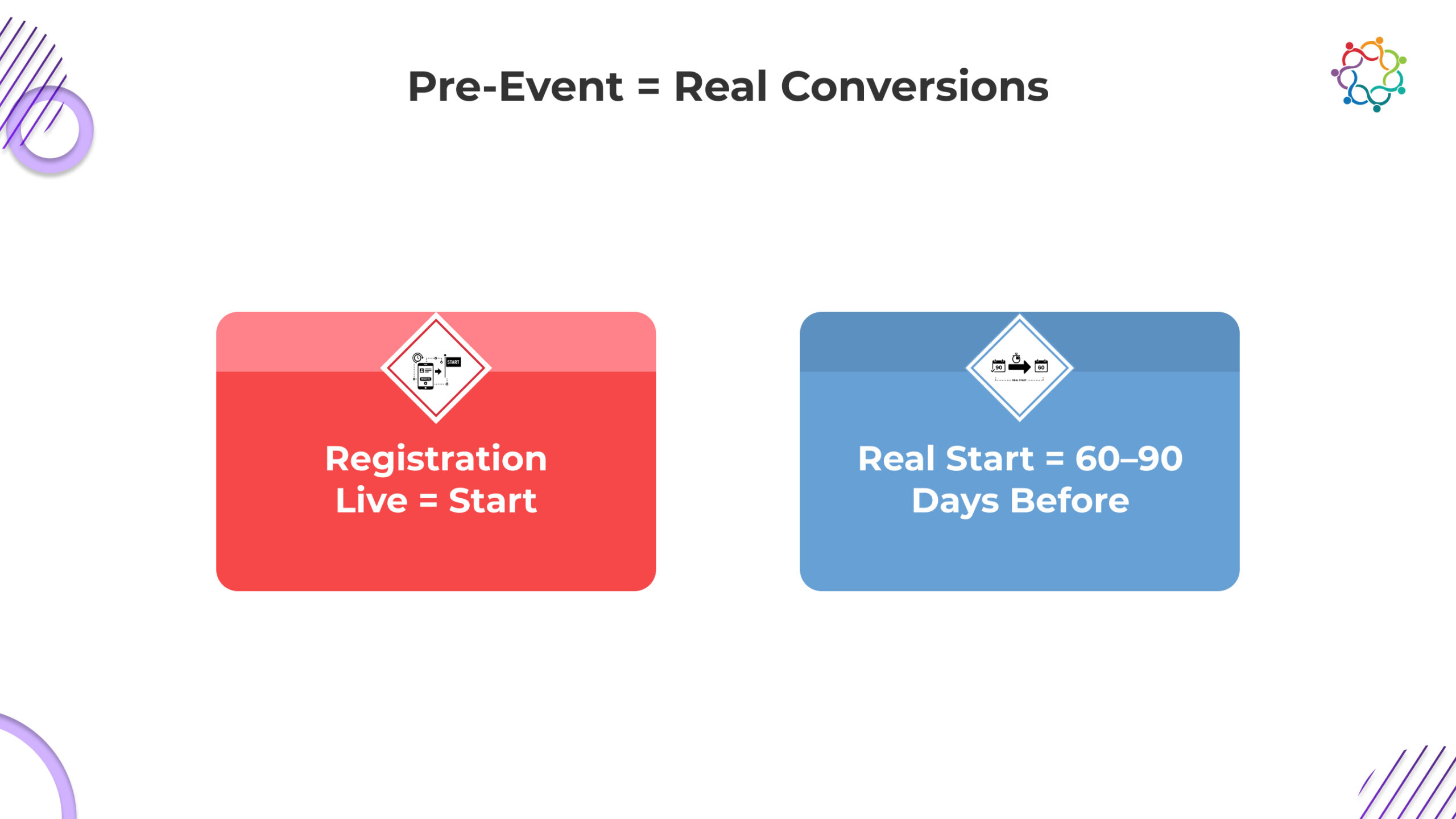

In Dubai’s real estate ecosystem, a property launch is not the end goal; it is the start of the revenue engine.

Developers now leverage behavioural data from events to guide next steps and nurture high-intent prospects. For example, an investor who interacted heavily with premium waterfront units or luxury penthouses can be routed into tailored follow-up sequences with specialized advisors.

Events have moved beyond their traditional role as marketing showcases. They directly influence deal velocity, pipeline quality, and conversion rates, making them one of the most important commercial assets in a developer’s strategy.

Dubai property launches generate enormous interest, but value is created only when that interest converts into structured follow-ups and committed buyers.

Samaaro helps developers carry momentum forward by turning launch interactions into measurable buyer journeys.

With Samaaro, teams can:

Instead of treating the launch as a single peak moment, Samaaro enables developers to manage the entire post-launch conversion, from first interaction to final commitment.

In Dubai, property launches have evolved into powerful marketing and revenue machines. They blend:

Developers who master this formula gain a clear edge in a crowded market. As global investor demand grows and expectations rise, experiential property launches will only become more elaborate, intelligent, and commercially impactful.

Dubai isn’t just building skyscrapers; it’s building experiences. And in this landscape, the event is the brand.

Marketers have traditionally judged an event by indicators such as the number of RSVPs received or the number of people who attended. But attendance alone does not mean the event created a positive business impact.

What matters is how well the event moves a person from first expressing interest to becoming a customer, and then a loyal one.

In today’s world, an event must be designed as a complete and continuous journey down the sales funnel, rather than simply a one-time activity. Once the RSVP-to-retention journey is developed based on behaviour data and customer segmentation, events are no longer viewed as just a cost centre; rather, they can be treated as a reliable engine of conversion.

This shift reflects a broader move toward treating events as continuous lifecycle programs rather than isolated campaigns, where every touchpoint compounds learning and performance over time.

This fundamental change is how innovative and forward-thinking brands will continue to run and evaluate their B2B events in the future.

An RSVP is more than a simple yes/no response; it is the first indicator of a person’s intent to attend. Early RSVPs typically signal higher motivation and commitment. For example, registrants who fill in the form completely and engage with pre-event content demonstrate more motivation than last-minute RSVPs, who will require additional nurturing before the event.

Both Indian and international event data demonstrate that RSVP behaviour indicates an attendee’s likelihood of attending an event, as well as their potential to convert from attendee to customer. Understanding the nuances of RSVP behaviour helps teams activate the right segments of their audience before the event occurs.

Segmentation during the RSVP stage is the foundation of the entire lifecycle of events. Marketers can segment registrants by:

a. Persona/job role,

b. Industry/business size,

c. Product interest,

d. Past event engagement, and

e. Buying cycle stage.

Once clusters are established, pre-event communication becomes relevant to each registrant. This includes personalised reminders and curated agendas, and it gives registrants a sense that the event has value before they arrive.
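As a rough illustration of RSVP-stage segmentation, the sketch below groups registrants into clusters keyed by a chosen combination of the attributes listed above. The `Registrant` fields, the sample key choice and the `segment` helper are hypothetical names introduced for this example; a real registration system would expose its own schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Registrant:
    """Hypothetical registration record; field names are assumptions."""
    email: str
    persona: str           # persona / job role
    industry: str          # industry / business size
    product_interest: str
    past_events: int       # past event engagement
    buying_stage: str      # buying cycle stage


def segment(
    registrants: list[Registrant],
    keys: tuple[str, ...] = ("persona", "buying_stage"),
) -> dict[tuple, list[Registrant]]:
    """Group registrants by the given attribute names."""
    clusters: dict[tuple, list[Registrant]] = defaultdict(list)
    for r in registrants:
        cluster_key = tuple(getattr(r, k) for k in keys)
        clusters[cluster_key].append(r)
    return dict(clusters)
```

Each resulting cluster can then receive its own reminder copy and curated agenda. Grouping on exact attribute values is the simplest possible choice here; behaviour-based clustering would be a natural next step once enough engagement data exists.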

Strong pre-event signals help teams predict demand and prioritise effort. Key metrics include:

These insights turn RSVP lists into lead-intent maps.

The total number of attendees does not give an accurate picture of event success. To assess success and conversion, you need to know how engaged each attendee was during the event. A check-in at your booth does not tell you how much interest the attendee had in your company and products.

Beyond knowing that an attendee was physically present at your booth, you need to collect additional data during the event that gives a more comprehensive picture of the attendee’s potential value to your company.

To gain that insight, track the following: how long the attendee remained at your booth; how much the attendee interacted with your company; how many workshops or presentations the attendee attended; and how many content pieces the attendee downloaded from your booth.

There is a strong correlation between levels of deep engagement at an event and the level of activity in your post-event pipeline. Attendees who spend a significant amount of time in high-value zones will often convert into customers faster than attendees who do not spend time in these areas.

During an event, attendee behaviour becomes a treasure trove of buying signals. Some of the strongest indicators include:

These actions often reflect mid-funnel readiness.

Guided agendas, AI-powered recommendations, and personalized nudges all help to shape the experience of today’s events. When participants can clearly see what sessions and booths will bring them the greatest value, they are more likely to make efficient use of their time, leading to higher conversion rates.

Utilizing personalized push notifications, session reminders, and contextual recommendations ensures that participants have access to all of the key moments that matter.

Key onsite metrics include:

Together, these form the backbone of conversion-focused event intelligence.

Many organisations invest heavily in event execution but fall short when it matters most, immediately after the event. The common issues include:

When follow-up is slow or irrelevant, high-intent leads grow cold. Research shows that conversion opportunities drop sharply after 72 hours.

A high-performing post-event system includes:

These journeys turn engagement signals into meaningful actions.

Critical indicators at this stage include:

These metrics confirm whether the event successfully moved people down the funnel.

Events shouldn’t be treated as isolated campaigns. Guests who convert or show strong interest should be nurtured through:

Long-term engagement builds trust and increases customer lifetime value.

Retention indicators include:

These metrics reflect whether the event helped shape a long-term relationship.

When brands continuously learn from attendee behaviour, each event becomes smarter than the last. This creates a self-reinforcing loop where every stage (RSVP, attendance, engagement, follow-up) strengthens the next.

Most event platforms capture activity. Samaaro is built to connect activity to outcomes across the entire RSVP-to-retention lifecycle.

Instead of treating registrations, attendance, engagement, and follow-up as separate phases, Samaaro unifies them into a single behavioural journey for every attendee.

With Samaaro, teams can:

By operationalising the full event lifecycle, Samaaro helps brands design events as predictable conversion engines, not one-off experiences that reset after every show.

The industry is now focusing less on attendance-based KPIs and more on outcome- and growth-oriented strategies. Experiences that guide attendees from RSVP through conversion and into retention are significantly more productive than the traditional approach of applying one generic engagement strategy to all attendees.

By adopting a lifecycle model, companies can turn events into predictable sources of revenue. Companies that do not will continue to experience low ROI and poor sales funnel activity because their marketing efforts lack predictability.

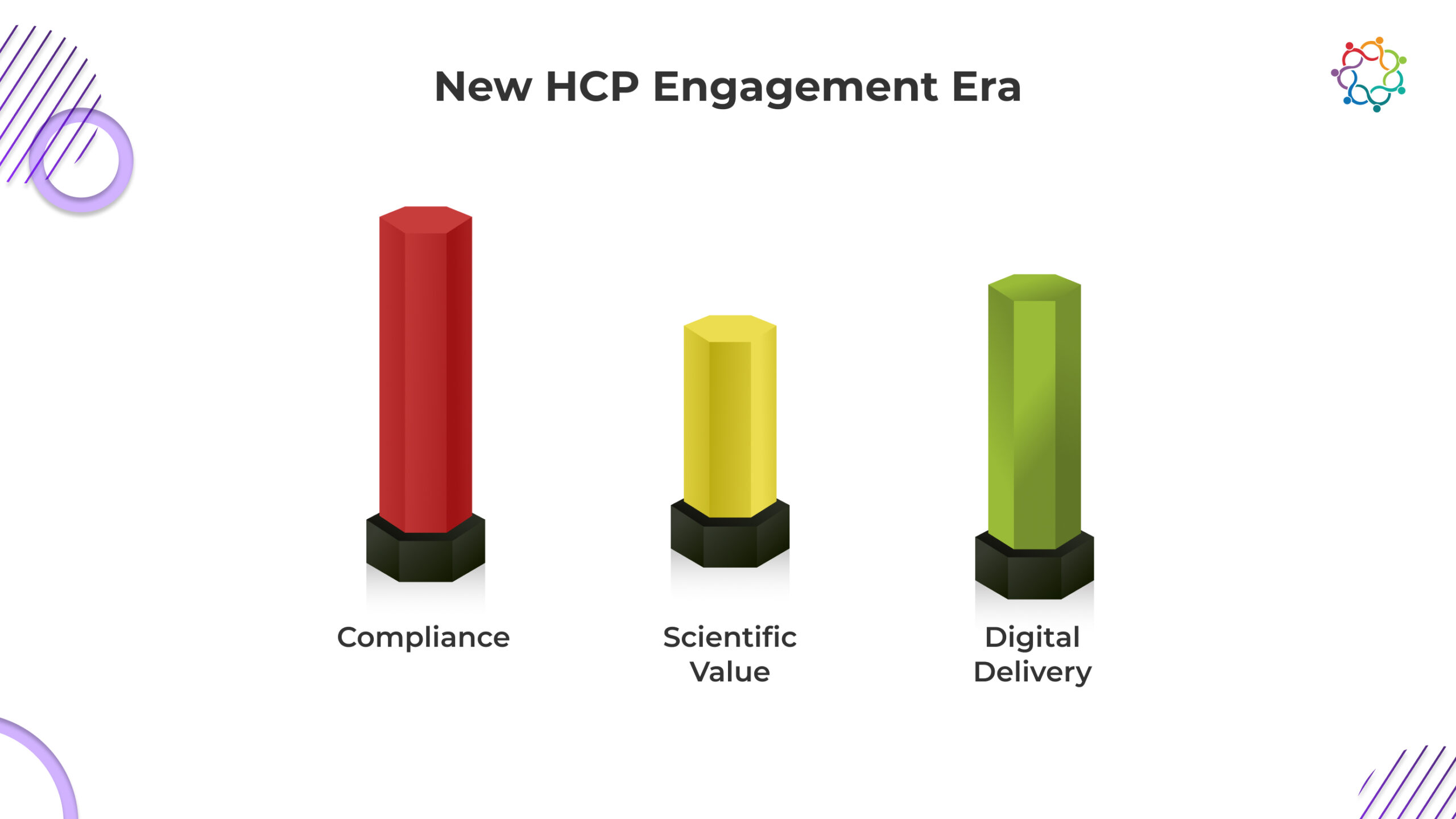

The way the pharma industry interacts with healthcare professionals (HCPs) in the United Arab Emirates (UAE) is evolving as medical companies increase their digital engagement. Historically, most engagement between HCPs and the pharma industry took place face to face at conferences and scientific meetings. However, a growing number of HCPs now use digital channels as their first point of contact with healthcare products, and they expect more value, credibility and convenience from that digital engagement.

As such, pharma companies are re-evaluating their approach to providing HCPs with an experience, conveying scientific content, and building rapport. The evolution of HCP engagement has been further accelerated by the UAE’s historically professional and progressive healthcare system combined with the recent acceleration of digital adoption, which is changing how HCPs learn about, interact with and assess the validity of information. Today’s leading pharma events include the use of pre-event content, personalization at the event, and post-event learning pathways, which provide HCPs with a continuous experience as opposed to a one-time conference.

This shift reflects a broader change in how events operate across the UAE, where experience-led, technology-enabled engagement has become the foundation for brand credibility and trust.

The delivery of scientific education increasingly relies on digital technology, and this trend will only grow as engagement moves into a more virtual environment. As HCPs increasingly rely on digital platforms to receive information before they arrive at an event, companies are changing how they create event content.

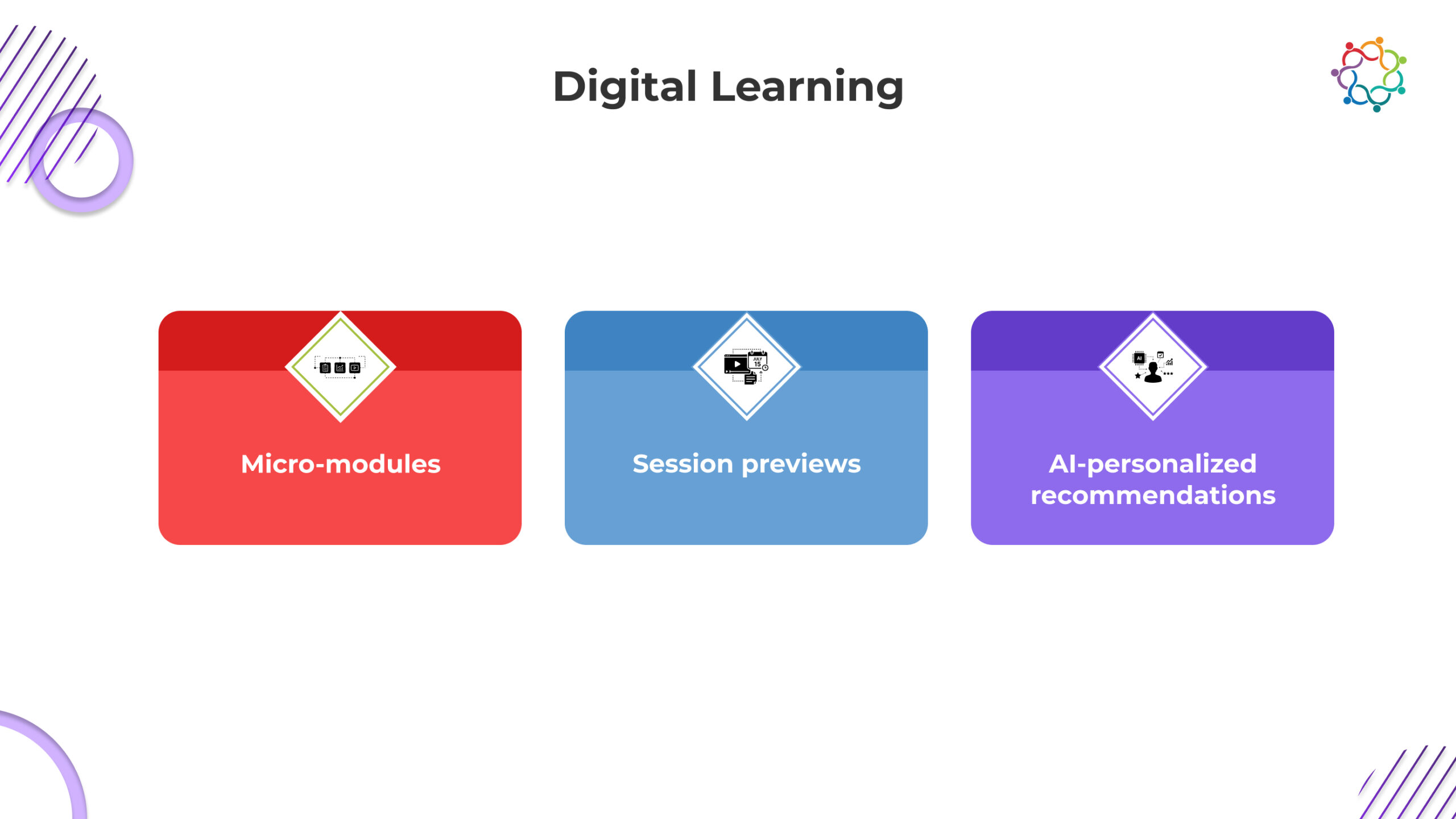

Micro-learning modules (MLMs) are designed so that physicians and specialists can build fundamental knowledge of specific topics before coming to an event. Each module summarises the research conducted and highlights relevant findings without overloading busy medical professionals with information. Session previews let HCPs understand what to expect in each session, so they can prioritise the sessions that apply to their specialty areas.

Artificial Intelligence (AI) is also a critical tool in this process. AI content engines consider each HCP’s behaviour and specialty to suggest what to read, watch and experience before an event. For example, a cardiologist will receive different pre-event content from a dermatologist, and physicians working with new drug classes will receive MLMs tailored to those drug classes. With access to relevant information beforehand, physicians arrive at the venue prepared for deeper scientific discussion.

This shift towards a digital first approach to the delivery of scientific education provides an opportunity to produce higher quality attendance at events, as well as ensuring that time spent at the event is not wasted on having to catch up on information.

The medical communities in Dubai, Abu Dhabi, and the greater UAE are amongst the most diverse in the world with specialty physicians coming from myriad healthcare systems with unique training experiences and different subspecialty focus. A single agenda across the board is no longer possible.

Digital platforms allow pharmaceutical brands to develop customised session tracks based on specialty, attendance at previous events, and personal learning history. HCPs now receive customised tracks for the events they attend instead of navigating countless session listings to find value for their clinical interests.

Likewise, intelligence-based recommendations now direct HCPs to relevant exhibits, posters, workshops and theatres related to their specialty. HCPs no longer depend on luck to find the right information; AI directs them to the content that will deliver the most benefit.

For event organisers, this provides greater insight into which types of content engage HCPs, where the most engaged HCPs are located and how demand varies between therapeutic categories. That data aids in planning future scientific programming, KOL engagements and market education strategies.

Personalisation is not merely a convenience but rather a mechanism that drives greater learning after the event and delivers a greater impact overall.

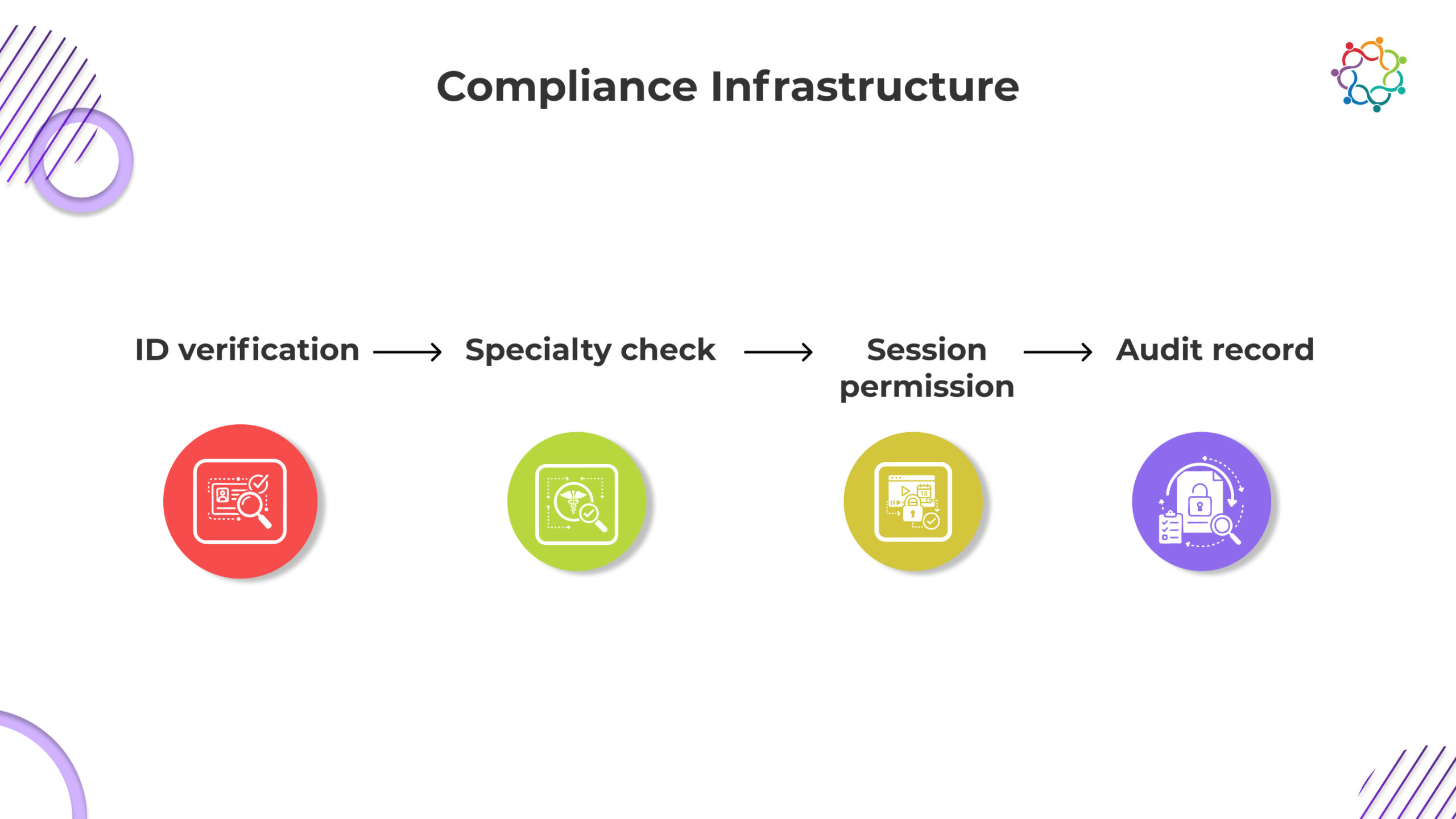

Digital-first systems have made pharma engagements easier and more discoverable; however, compliance has always been a priority and should remain that way. As a direct result of digital-first systems, there is now a high level of security associated with the way UAE pharma events are conducted.

UAE pharma events now have the following components:

These components not only support ethical engagement; they also help meet the requirements of the UAE regulatory framework for responsible and secure data use and communications.

Digital systems generate interaction logs that can be used as part of internal audits, cross-checked against MLR (medical, legal and regulatory) approvals and/or used as verification of participation in continuing medical education (CME).

As a result, organisations gain greater control, clearer documentation and a more streamlined governance process without slowing the attendee’s experience.

Pharmaceutical teams in the UAE are under increasing pressure to deliver scientifically meaningful engagement while maintaining strict regulatory discipline. Samaaro is designed to support this balance by enabling digital-first HCP engagement that is structured, secure, and fully auditable.

Rather than treating events as isolated touchpoints, Samaaro allows pharma organisations to manage HCP engagement as a continuous journey, before, during, and after scientific meetings.

With Samaaro, teams can:

By combining secure workflows with intelligent personalisation, Samaaro enables pharma enterprises to meet regulatory expectations while delivering the relevance, convenience, and credibility that HCPs in the UAE now expect from digital-first engagement.

The pharmaceutical event landscape in the UAE is evolving toward a more digital-driven era. HCPs are now looking for early access to scientific information, customised agendas and consistently compliant interactions. With digital-first approaches, pharma brands will be able to create a more efficient, relevant and accountable environment for scientific exchange.

Pharma companies that invest in creating these digital capabilities will develop greater trust with their audience through better education and remain competitive in a rapidly changing environment.

Digital engagement does not replace traditional events; it enhances them, creating a customised environment for a more meaningful experience.

Most event teams at large corporations still question the effectiveness of AI because its ability to deliver quality results has not been proven to them. Companies have discovered that many variables affect the success of an AI initiative and, since AI is still evolving, it cannot yet be classified as a fully mature technology.

Much of the disappointment with AI comes from unrealistic expectations, inconsistent processes within the organization and a lack of preparation for what AI requires. Many businesses treat AI as if it were just another software upgrade that boosts productivity on installation.

To maximize the value of AI, it must be aligned with the culture of the organization, data must be cleaned so that it is usable, the business must think clearly and strategically about AI, and it must invest in continual improvement to maintain that value over time.

This blog post covers the most common misconceptions about AI and how organizations can use it while building toward maturity.

A prevalent myth in enterprise environments is that AI will fix all the inefficiencies inherent in an organization; however, this could not be further from the truth.

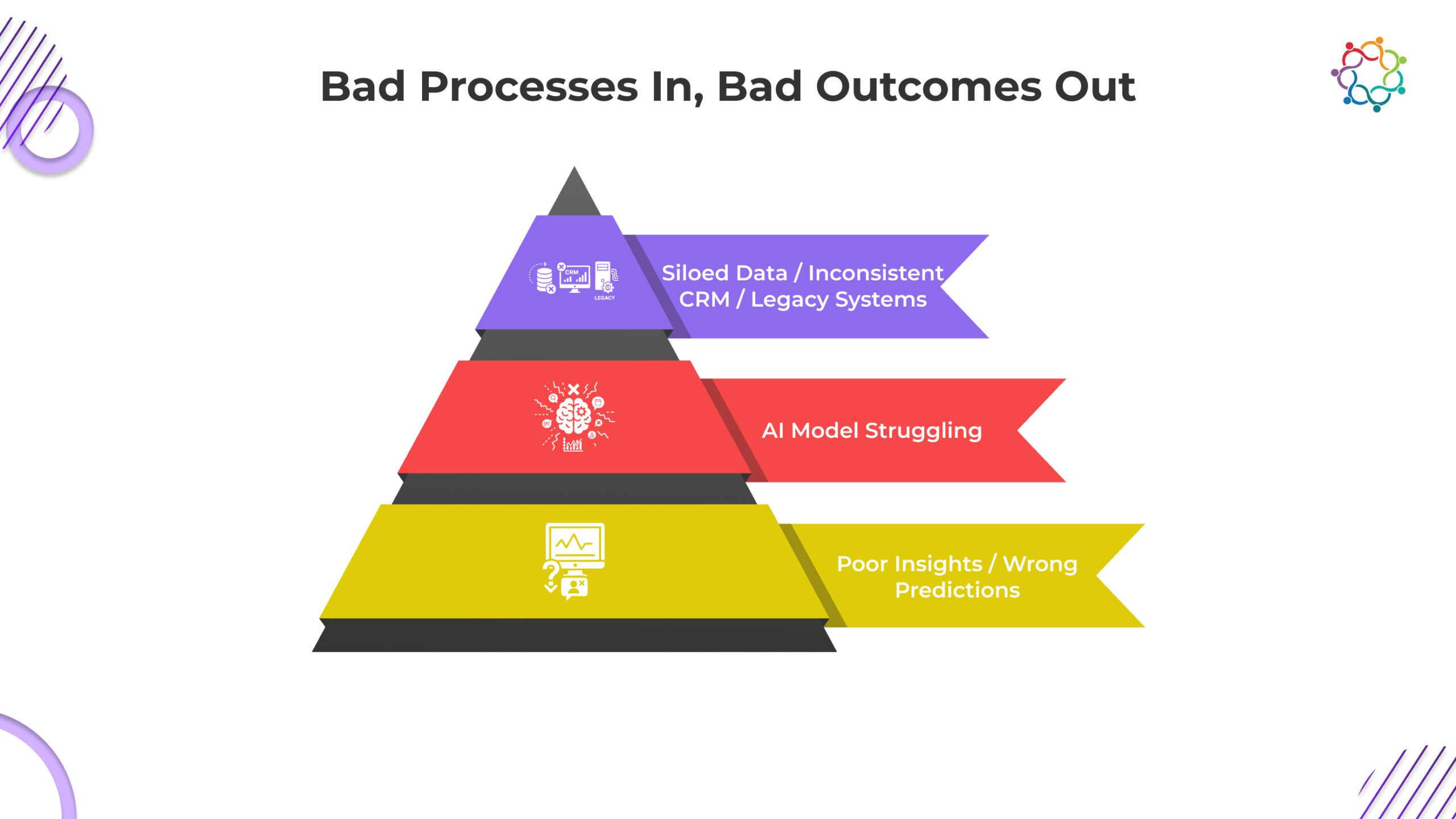

AI simply amplifies whatever process it touches; if that process is flawed, the output of the AI-enhanced process will be flawed too.

If an organization’s workflows are broken, its input data is inconsistent or its teams operate in silos, the AI models it builds will inherit all of these deficiencies rather than eliminate them. They will only produce outputs that replicate the mistakes made before AI was introduced.

Issues with legacy data hygiene. For example, an organization with bad or incomplete customer relationship management (CRM) records will not obtain reliable predictions from an AI model built on that data.

Siloed workflows. Marketing, sales and operations teams all use different tools to manage their work; as a result, the intelligence from these functions becomes fragmented within the organization.

Unclear key performance indicators (KPIs). Without defined outcomes, the AI model will not know how to align its predictions with business goals.

AI is an accelerator of processes; it does not fix processes. Thus, to achieve improved processes, it is essential that the underlying processes of an organization be clean, structured, and aligned.

People often think about AI replacing humans, either because they fear it or because they have unrealistic expectations of what it can do. However, while AI has an important role in enterprise event marketing, it is less valuable than the context created through human interaction.

AI aids in many aspects of strategic planning; however, it cannot replace judgement.

AI can assist with identifying, researching and analysing data through pattern recognition; however, it is unable to:

AI can estimate the likelihood of attendance and identify high-intent audience members; however, the marketer still needs to engage with those individuals, demonstrate value to them and create a human experience for them.

AI is therefore only an input; its output requires further analysis and, ultimately, human judgment and strategic decisions before the marketer can get full value from it.

The primary objective of using AI in enterprise event marketing is to assist the marketer and add value through augmentation.

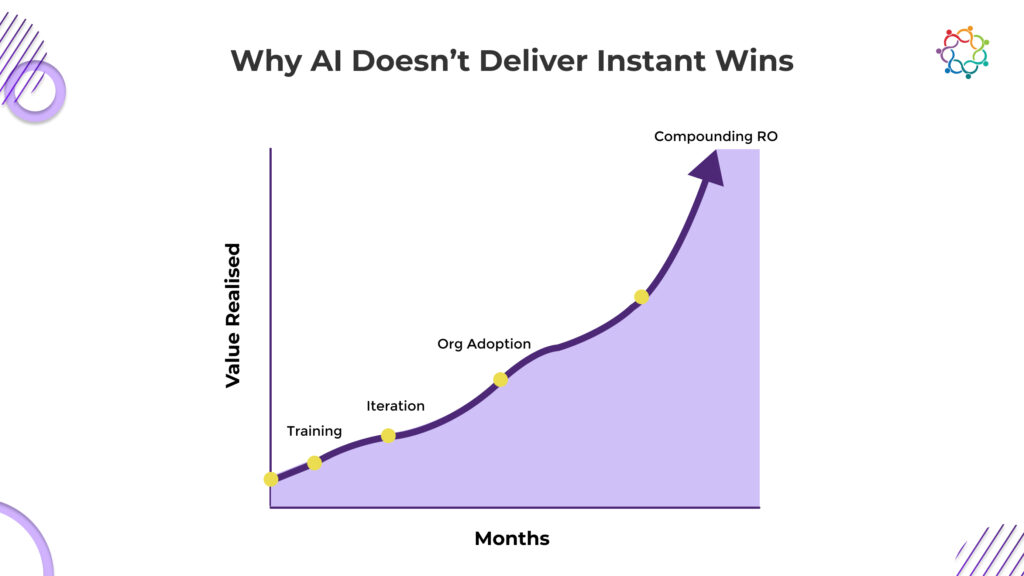

Most enterprise teams treat AI as a switch: once flipped, immediate results are expected. AI is not a product that produces instant success; it is a long-term investment in a capability that matures through ongoing development.

AI Must Have:

Most companies run into cultural rather than algorithmic issues in their AI implementations: distrust of AI output, executive expectations of immediate return on investment (ROI) and a poor understanding of onboarding requirements such as ongoing processes and integration steps. The resulting lack of trust and of immediate results leads to a much slower adoption rate than would otherwise occur.

As long as an organization keeps feeding consistent data into its AI systems, they become more accurate the longer they are used. Their value builds on itself over time rather than becoming apparent overnight.

The real ROI associated with AI will be cumulative, not immediate.

Samaaro approaches AI differently from most event platforms. Instead of positioning AI as a standalone capability, intelligence is embedded as an enablement layer across planning, engagement, and post-event workflows.

This approach reflects a broader AI-driven event operating model, where AI supports decision-making across the event lifecycle rather than attempting to automate isolated tasks.

In practice, this means:

Samaaro’s philosophy is simple: AI should strengthen human judgment and operational discipline, not attempt to replace them.

AI adoption’s greatest obstacle is not the tools; it’s the way people think about them. Artificial Intelligence has become synonymous with a lot of things that are not real. Misperceptions such as “AI can fix everything” or “I’ll never have to hire another marketer again” create false expectations and, therefore, slow down the pace of transformation within companies.

Companies that focus on AI’s real value through readiness, data quality, process alignment and incremental adoption will benefit from AI. On the other hand, companies that only chase after Artificial Intelligence’s trendy buzz will end up being disappointed.

Successful implementation of Artificial Intelligence depends on whether the company is ready to implement it. The most successful organisations in event marketing today will be those best prepared for AI.

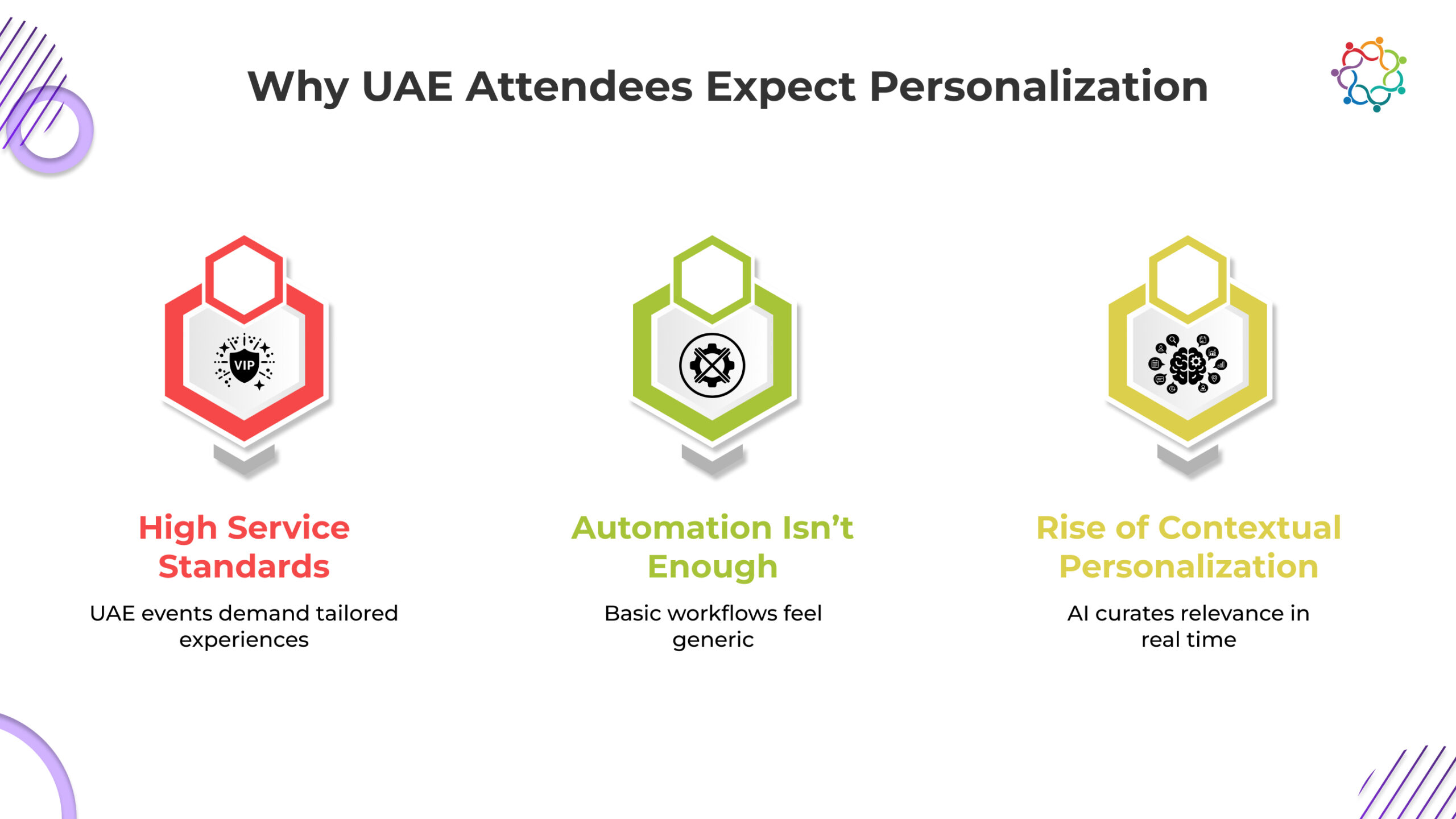

Events are defined by the participation of people who want things to be easy, interesting and enjoyable. Events in the UAE have raised the bar and set the standard for the experiences people expect. Alongside these high expectations, events will increasingly be delivered as AI-driven, contextual experiences.

In the future of UAE event management, AI will be embedded not only in how event managers operate but also in how each attendee is guided through the entire experience, from before the event to post-event engagement. Event experiences will no longer be one-size-fits-all; they will be tailored to the individual and personal to each attendee.

In a region where audience expectations are defined by top-of-the-line hospitality, relevance is not optional. Personalisation powered by Artificial Intelligence (AI) addresses this need by creating a content experience tailored to each attendee.

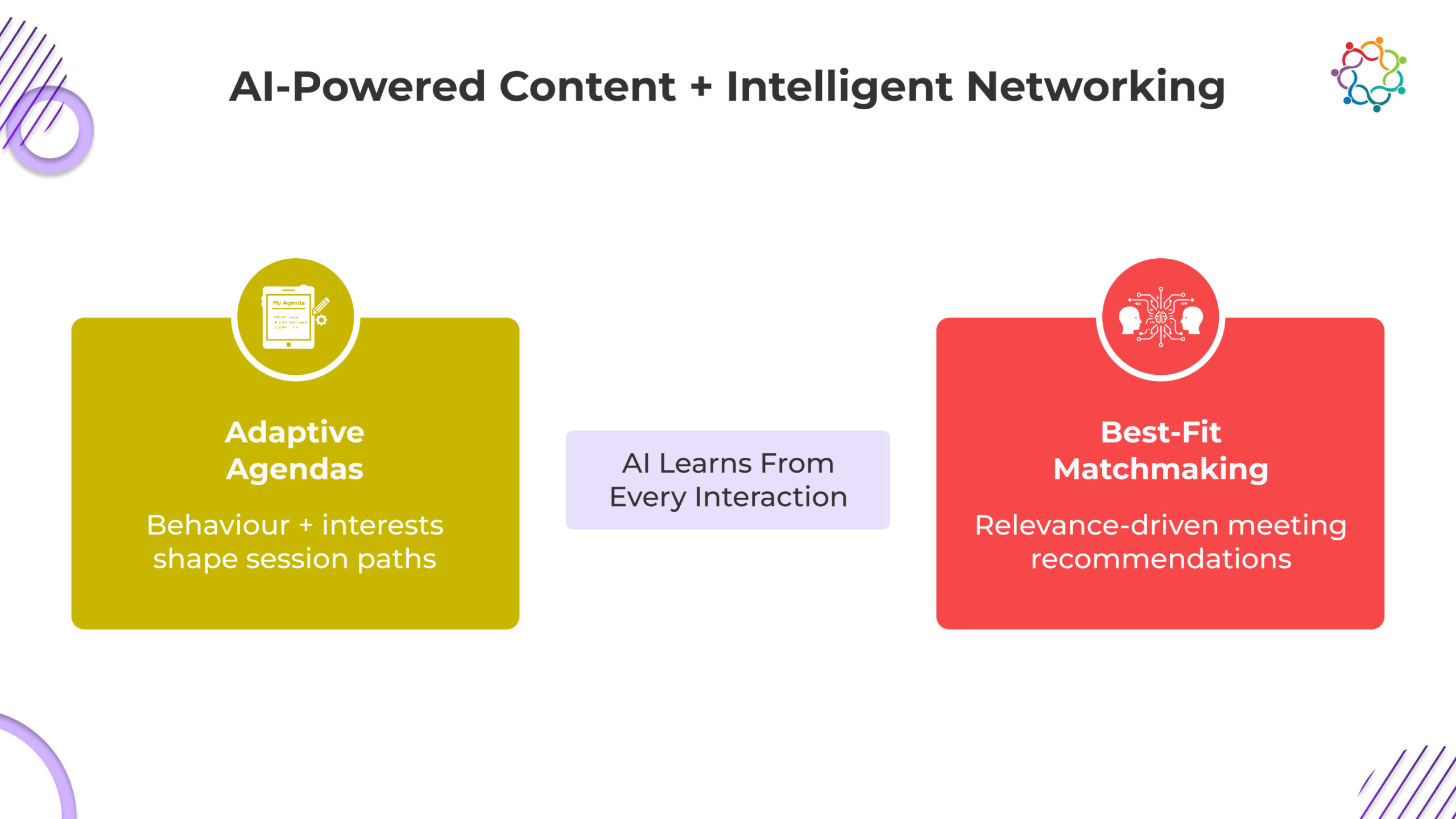

AI analyses signals such as the attendee’s behaviour, content accessed, industry role, past sessions attended and even regional preferences to generate session recommendations aligned with each attendee’s interests.

Because each attendee’s agenda is aligned with their individual goals, engagement across sessions is higher and drop-off rates are significantly lower: attendees feel empowered rather than overwhelmed.

Hospitality, personalised attention and proactive service are expected as part of the UAE’s culture and business practices; AI-recommended sessions meet that expectation with a ‘curated’ agenda rather than a ‘generic’ one.

As a result, attendees navigate the site with clarity and confidence, and with the belief that it has been thoughtfully organised around their needs.

Events in the UAE benefit significantly from attracting cross-border, multinational participants from diverse industries. Networking is one of the key value drivers for these events, and AI can give networking a structure that does not depend on chance encounters.

By understanding each individual’s professional history, industry verticals, products of interest and previous engagement, the AI can recommend other attendees, exhibitors and decision-makers who would be relevant to them.

Attendees no longer need to wander around the venue trying to find relevant connections. The AI connects them with individuals who genuinely share their interests.

Exhibitors and sponsors can now connect with higher-quality prospects who are the right fit for their company. This means more leads generated, faster on-site lead qualification and a higher return on investment for sponsors and exhibitors.

For events that bring together buyers, investors and international attendees, intelligent matchmaking improves the experience for everyone and gives each participant tangible value from taking part.

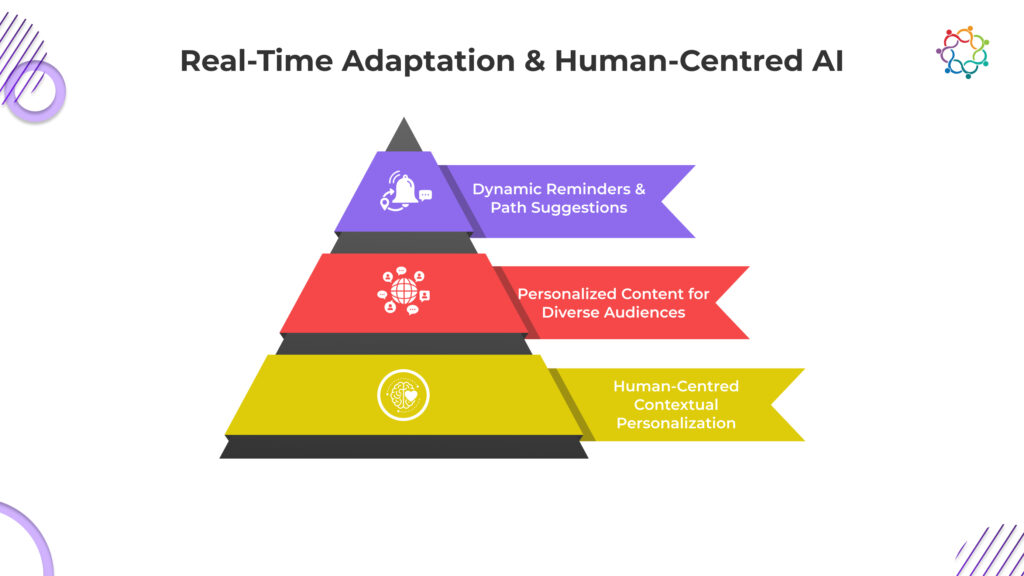

Personalization doesn’t finish when guests check in. Rather, UAE events are progressively utilizing real-time personalization to guide the participant experience from the time they arrive until they leave.

Smart nudges are sent to attendees at timely points while they are on the event floor, such as ‘This session is about to start’, ‘Other attendees with similar interests to yours are attending this workshop’ or ‘Your match is here now’. These automated nudges help attendees move through the venue in line with their goals and spot networking opportunities.

As attendees interact with the event, for example by viewing content, visiting display booths, and saving sessions for later viewing, AI can immediately adapt its recommendations based on that behaviour. The event experience is fluid and continuously evolving.

Real-time multilingual adaptation of the event and its content makes participation much easier for attendees who have travelled from various countries in the GCC, Asia, Europe and Africa.

This type of continuous, dynamic personalisation provides a natural flow of information. It makes attendees feel supported by event organisers and helps organisers build world-class event experiences that promote attendee value and satisfaction.

In the UAE, personalization isn’t a feature, it’s an expectation. Samaaro is built to deliver personalization as a living system, not a static configuration.

By continuously interpreting behavioral signals, stated preferences, and real-time activity, Samaaro shapes each attendee’s journey as it unfolds, without overwhelming them with options or notifications.

Here’s what that means in practice:

This personalization layer sits on top of Samaaro’s broader AI-driven event operating model, where intelligence informs planning, engagement, and post-event decision-making across the entire event lifecycle, not just attendee-facing moments.

For organizers, this translates into:

Samaaro enables events to deliver personalization at scale while preserving the high-touch, premium experience the region is known for.

The UAE’s event landscape has consistently operated ahead of global expectations and is now pushing those boundaries further with AI-driven personalization. People attending events in the UAE do not just want automation; they want experiences designed around their specific needs, goals, and behaviour.

AI is becoming a focal point of the attendee journey, from agenda curation to intelligent networking and real-time engagement. As brands in the UAE continue to implement this sea change, they will provide deeper and more immersive experiences for attendees while setting the standard for excellence in events in the UAE.

India’s corporate event market is entering a decisive shift, driven not by event scale but by rising customer acquisition costs, stronger demands for attribution, and the growing expectation that every marketing activity must prove business impact. As noted in the Meta (India) Performance Report 2024, customer acquisition costs across digital channels are climbing steadily, forcing leadership teams to scrutinize how events contribute to pipeline and revenue. As a result, corporate events are no longer viewed merely as experiential touchpoints, but as high-intent data engines that must deliver measurable outcomes.

For years, corporate events in India have been planned and executed through logistics-heavy models, large teams coordinating vendors, registrations, schedules, and on-ground operations manually. This approach worked when events were smaller, expectations were limited, and success was measured largely by execution rather than outcomes.

That model is now breaking down.

As Indian enterprises scale their event programs – across cities, audiences, and business objectives – the complexity of managing events has increased sharply.

This pressure is especially visible across industries. BFSI organizations require verifiable attendance records and auditable participant journeys due to regulatory scrutiny. Manufacturing enterprises need error-free execution for large-scale compliance training and internal programs. IT and SaaS companies run multiple, parallel events across regions and customer segments, where manual coordination simply does not scale.

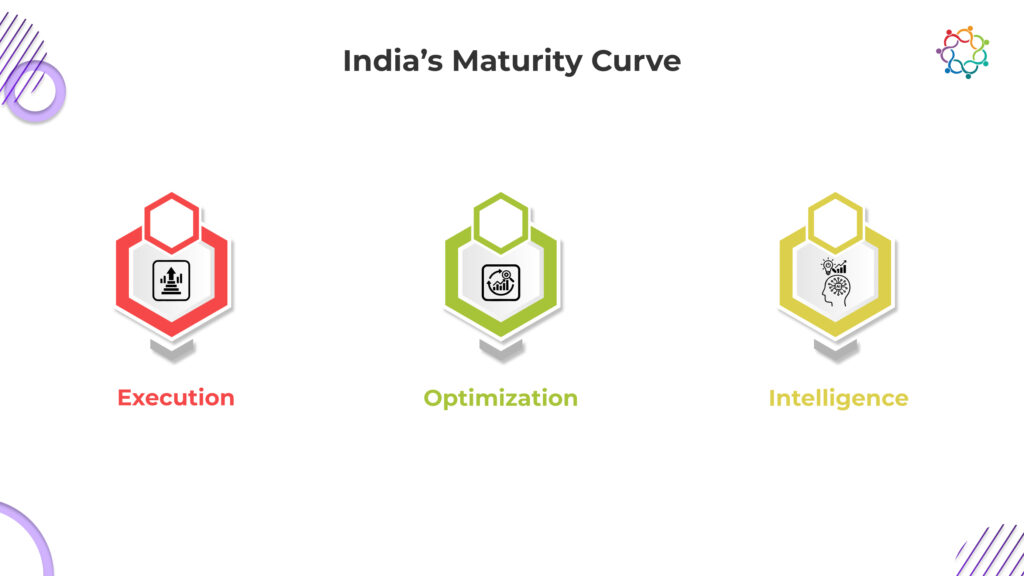

As a result, Indian enterprises are moving away from intuition-led planning toward intelligence-driven models. Instead of relying on gut instinct to estimate attendance, allocate budgets, or manage resources, organizations are adopting data-backed planning that uses historical attendance patterns, engagement signals, and predictive models to guide decisions before an event even begins.

This shift fundamentally changes how events are planned. Intelligence-driven planning reduces uncertainty, limits operational risk, and allows teams to focus less on coordination and more on delivering meaningful experiences. By 2026, this transition will define how mature Indian enterprises approach event strategy, moving from reactive logistics to proactive, data-led decision-making.

India’s corporate events operate in one of the most diverse audience environments globally. A single event may bring together participants across languages, regions, industries, and levels of digital maturity, making one-size-fits-all event journeys increasingly ineffective.

By 2026, personalization is no longer a competitive advantage but a baseline requirement. This shift is driven by India’s mobile-first digital behaviour and the dominance of regional-language consumption across the internet. According to the IAMAI–Kantar ICUBE 2024 report, 98% of Indian internet users consume content in regional languages, with over half of urban users preferring vernacular experiences.

For event teams, this changes how engagement must be designed. Vernacular-first registration flows, localized communication, and language-aware in-event content are now essential to reduce drop-offs and improve participation across fragmented audiences.

At the same time, enterprises face pressure to personalize experiences across roles and intent levels. BFSI events must serve senior decision-makers, agents, and compliance stakeholders differently within the same program. IT and SaaS companies run multi-city launches and customer events where relevance varies sharply by account, function, and maturity.

As Indian enterprises scale their event programs, personalization at scale becomes a structural necessity, not a design choice. The ability to adapt journeys by language, role, and intent will define how effectively events drive engagement and business outcomes in 2026.

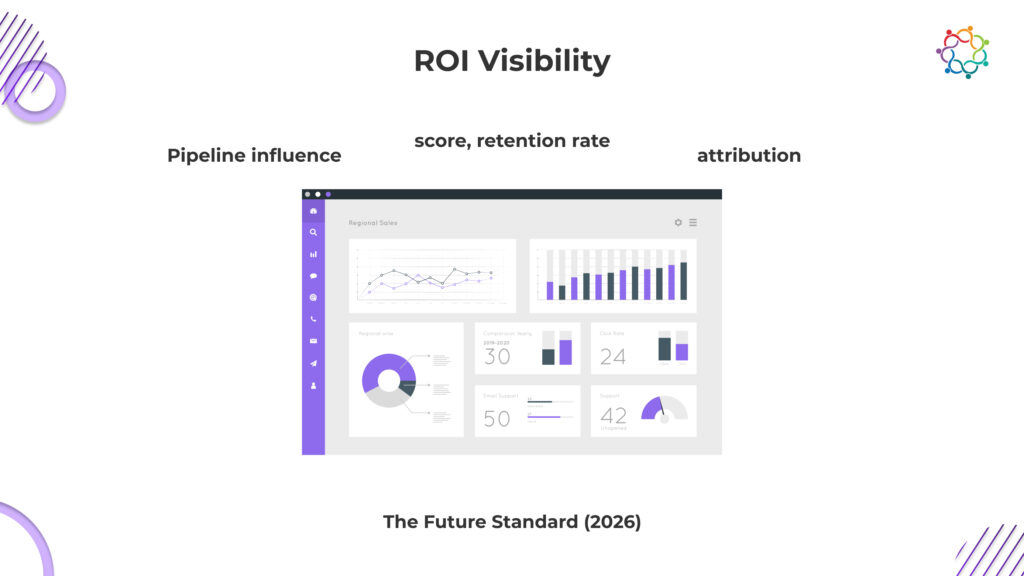

The corporate events sector in India has moved away from success measures based on traditional metrics toward an outcomes-driven model. Instead of evaluating events through superficial indicators such as registrations and footfall, organizations are increasingly focused on understanding the tangible business impact events create.

Organizations no longer measure event success by attendance numbers alone. While registrations indicate interest, they offer little insight into intent, influence, or commercial viability. As companies accelerate their sales cycles, corporate events are now expected to demonstrate how they contribute directly to revenue outcomes.

One of the most significant shifts in event evaluation is the mainstream adoption of pipeline attribution. Indian enterprises are increasingly measuring how events influence:

Rather than asking how many people attended, organizations are now evaluating how effectively events support pipeline growth and sales outcomes.

The quality of engagement has emerged as a stronger signal of intent than attendance alone. Metrics such as dwell time, session stickiness, booth interactions, demo participation, and repeat visits provide deeper insight into attendee interest. These signals allow event teams to identify high-value prospects and estimate the likelihood of post-event conversion with greater accuracy.

To support this shift, seamless integration with CRM platforms such as Salesforce, HubSpot, and Zoho has become non-negotiable. Without automated data flow from event systems into CRM pipelines, attribution breaks down and event influence becomes difficult to trace. Indian enterprises increasingly expect events to feed directly into the same intelligence layer that powers their sales and marketing engines.

Budget owners now demand defensible narratives backed by data rather than anecdotes. CFOs seek clarity on:

This level of scrutiny is pushing event teams to adopt deeper reporting frameworks that connect engagement data to business outcomes. As Indian enterprises continue their shift toward data-driven decision-making, events are evolving from brand-led initiatives into measurable growth levers.

ROI visibility is no longer a desirable outcome; it is a mandatory expectation. By 2026, organizations that ground their event strategies in attribution, behavioral intelligence, and financial accountability will be best positioned to succeed.

As corporate events evolve into measurable, data-driven growth channels, enterprises need more than tools to execute events; they need systems that connect planning, engagement, and outcomes into a single intelligence layer. Samaaro is built to support this shift by enabling enterprises to operate events with predictability, scale, and accountability.

Rather than being designed for a specific geography, Samaaro is built around modern enterprise event realities: multi-city programs, diverse audience segments, high volumes of attendee interactions, and the need for consistent execution across teams. Its mobile-first architecture and configurable workflows allow organizations to manage complex event operations without relying on manual coordination or fragmented systems.

What differentiates Samaaro is its intelligence layer. Predictive attendance models help teams plan capacity and resource allocation with greater accuracy. Engagement analytics surface high-intent signals, such as session participation, booth interactions, and repeat touchpoints, allowing teams to understand not just who attended, but who meaningfully engaged.

Crucially, Samaaro connects event engagement directly to business outcomes. With deep CRM integration, enterprises can track pipeline influence, lead progression, and revenue impact across individual events and entire event calendars. This enables organizations to evaluate events with the same rigor as other performance-driven marketing channels.

For enterprises running dozens of events across regions and functions each year, this unified intelligence becomes a strategic advantage. Instead of managing events as isolated executions, teams gain a consolidated view of performance, engagement quality, and ROI, turning events into a repeatable, insight-driven growth engine.

India’s event ecosystem is moving decisively from execution to intelligence. In this next phase of growth, enterprises that act fastest will gain a clear competitive advantage by treating events not as isolated activations, but as continuous sources of business data.

By 2026, success will depend on an organization’s ability to link engagement to revenue, personalize experiences at scale, and operationalize decisions through real-time intelligence rather than manual coordination. This shift demands three non-negotiables: a unified event data layer, deep CRM integration, and AI-driven personalization embedded across the event lifecycle.

These capabilities will clearly separate enterprises that merely run events from those that convert them into engines of insight, influence, and measurable business impact. In the year ahead, success won’t belong to the companies that host the most events, but to those that understand them best.

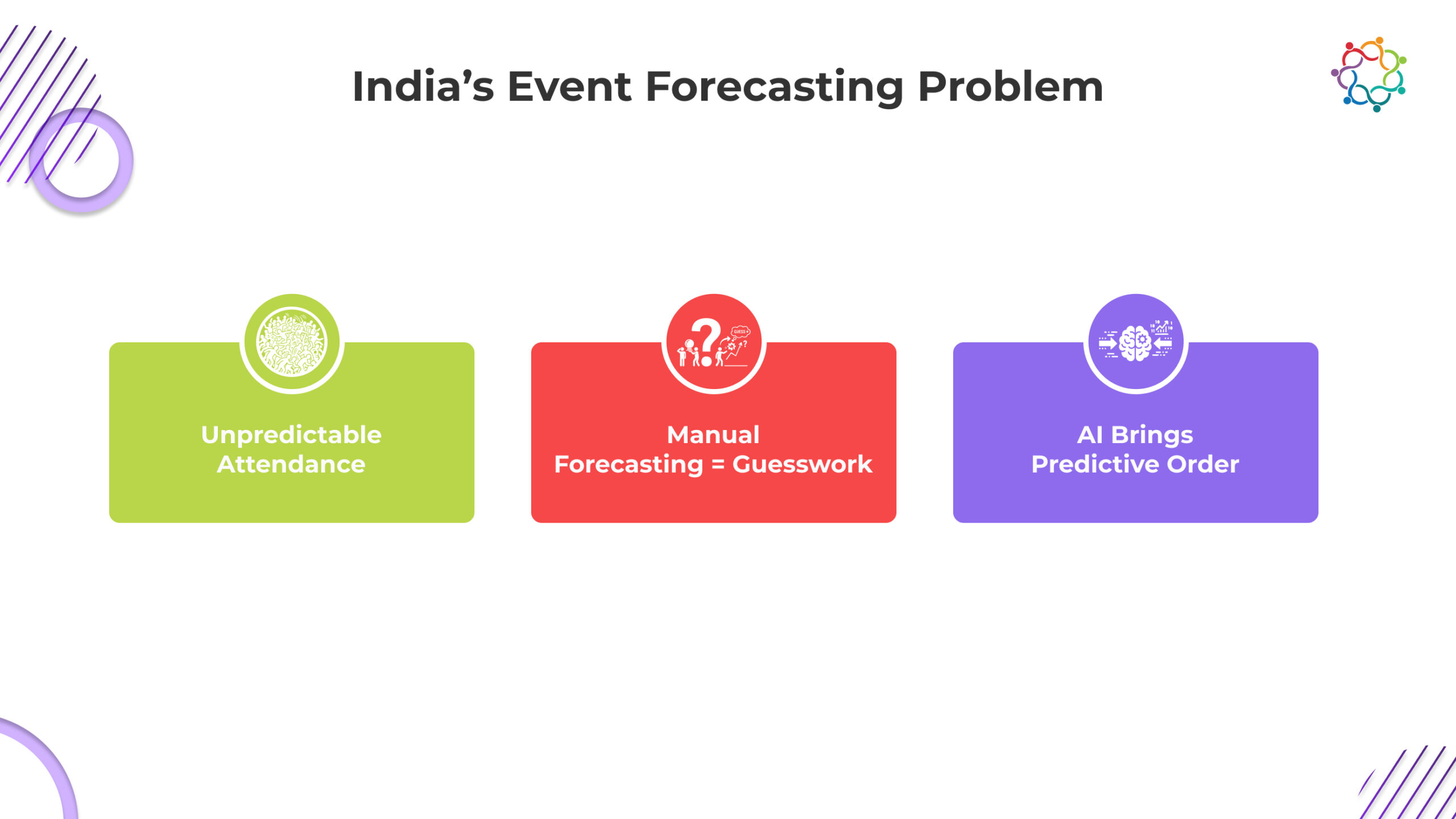

Predicting attendance has always been a challenge for marketers who create and run events in India. Registration numbers can change dramatically up until the last minute; behaviour differs by region; and people who primarily use mobile devices often register late, change their plans frequently, and respond inconsistently to event reminders. As B2B events become larger and more expensive to produce, many marketers can no longer predict attendance accurately without AI.

AI is changing how marketers work. By looking at behavioural indicators (what people do), engagement signals (how they interact with communications), and past attendance (how they have behaved before), Indian marketers can now estimate, with a high degree of confidence, how many people will actually attend and what they should spend. Attendance forecasting becomes part of the strategic planning process, allowing marketers to predict attendance more accurately and make the best use of their resources, minimising waste and maximising event ROI.

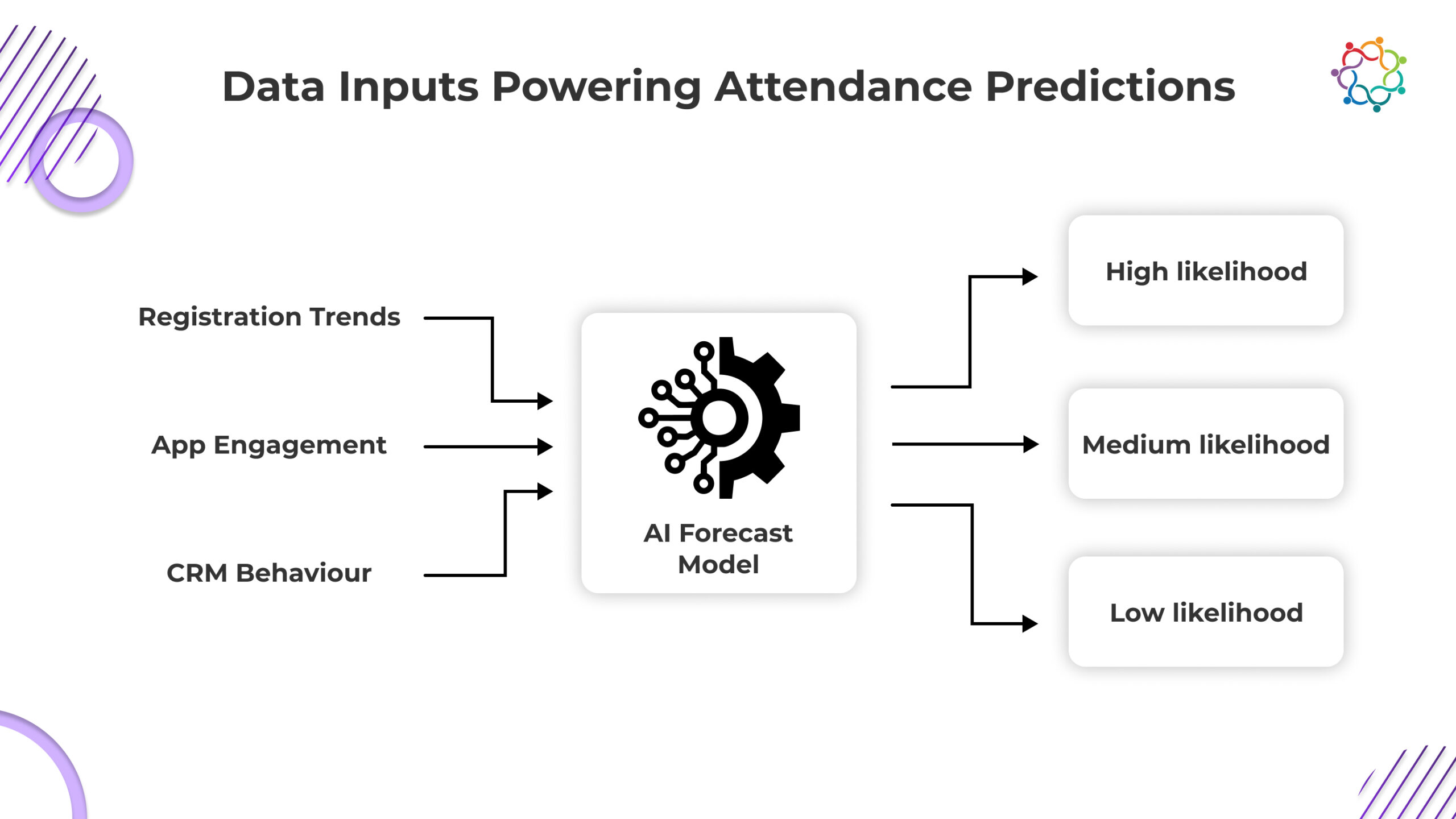

To make accurate predictions, a company needs behavioural data of good quality and depth. Indian organisations are increasingly aware that a person’s intent to attend is visible long before the event itself. AI models can process far more data points than humans can.

The following lists key data sources that inform Artificial Intelligence (AI) model forecasts.

In India, AI models also capture a variety of contextual behaviours:

Based on a combination of inputs, AI systems create a probability score for every registrant. Instead of giving all registrants the same weight, marketers get distinct cohorts: Highly Likely to Attend, Somewhat Likely to Attend, and Unlikely to Attend. This gives marketers the clarity to make more informed decisions throughout the customer experience.
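The scoring-and-cohort step can be sketched in a few lines. This is a minimal illustration, assuming a simple logistic score over three binary signals; the signal names, weights, bias, and the 0.7 and 0.4 thresholds are all hypothetical, and a real model would learn its parameters from past event data.

```python
import math

# Hypothetical weights for illustration; a real model would learn these from past events.
WEIGHTS = {"opened_reminder": 1.2, "added_to_calendar": 1.5, "attended_before": 1.0}
BIAS = -1.5


def attendance_probability(signals: dict[str, bool]) -> float:
    """Logistic score in (0, 1) from binary behavioural signals."""
    z = BIAS + sum(w for name, w in WEIGHTS.items() if signals.get(name, False))
    return 1.0 / (1.0 + math.exp(-z))


def cohort(probability: float) -> str:
    """Map a probability to one of three cohorts (thresholds are assumed)."""
    if probability >= 0.7:
        return "Highly Likely to Attend"
    if probability >= 0.4:
        return "Somewhat Likely to Attend"
    return "Unlikely to Attend"


# Hypothetical registrants for illustration only.
registrants = {
    "A": {"opened_reminder": True, "added_to_calendar": True, "attended_before": True},
    "B": {"opened_reminder": True, "added_to_calendar": False, "attended_before": False},
    "C": {},
}
cohorts = {name: cohort(attendance_probability(s)) for name, s in registrants.items()}
```

With these assumed weights, registrant A lands in the highest cohort, B in the middle one, and C in the lowest, which is the kind of separation that lets follow-up effort be prioritised.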

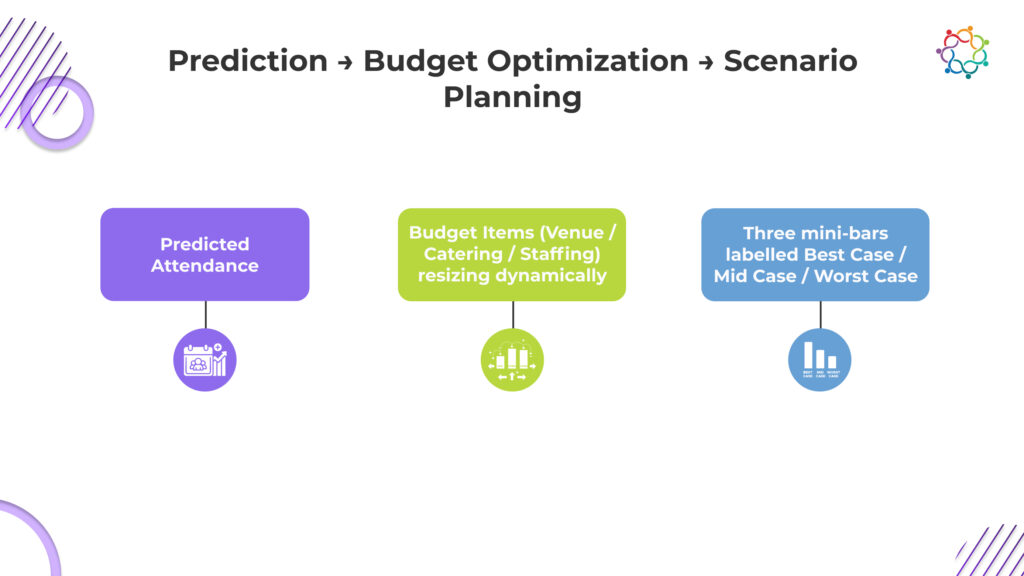

Attendance forecasting directly affects financial planning. Accurate forecasts turn reactive budgets into optimised ones.

Forecasting gives teams the opportunity to know if a venue needs additional resources or if it can cut back. They will be able to determine what type of catering will be reasonable and find the appropriate staff to avoid bottlenecks without over-spending.

When turnout is uncertain, many Indian marketers over-budget to cover that uncertainty. Predictive insights reduce this waste, and expense decisions can be supported by data rather than the fear of running short.

Marketers can now allocate budget toward the audience segments most likely to attend rather than running a generalised campaign. The more targeted the approach, the more efficient the spend and the greater the number of attendees converted into buyers.

While prediction was formerly an analytical exercise, it now directly affects resource management, cost discipline and strategic planning.

Scenario Planning for High-Variance Indian Events

Audience behaviour in India varies by city, by industry sector, and by event format. This makes scenario planning especially valuable.

Teams can use AI models to simulate three attendance scenarios (best-, mid-, and worst-case), all of which are grounded in actual audience behaviour. With these models, marketers can:

For national events that span Tier 1, Tier 2, and Tier 3 cities, scenario planning is even more important: with AI, teams can plan for unpredictability without overspending or jeopardising the attendee experience.
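One simple way to derive best-, mid-, and worst-case headcounts is from per-registrant attendance probabilities. The sketch below is an assumption-laden illustration, not a documented method: it treats registrants as independent, uses a normal approximation for the band, and the `attendance_scenarios` helper and sample scores are hypothetical. Correlated behaviour (for example, a city-wide disruption) would widen the real range.

```python
import math


def attendance_scenarios(probabilities: list[float], z: float = 1.645) -> dict[str, int]:
    """Best-, mid-, and worst-case headcounts from per-registrant probabilities.

    Treats each registrant as an independent yes/no outcome, so the total has
    mean sum(p) and variance sum(p * (1 - p)). The band is mean +/- z standard
    deviations (z = 1.645 is roughly a 90% interval under a normal approximation).
    """
    mean = sum(probabilities)
    std = math.sqrt(sum(p * (1 - p) for p in probabilities))
    n = len(probabilities)
    return {
        "worst": max(0, math.floor(mean - z * std)),
        "mid": round(mean),
        "best": min(n, math.ceil(mean + z * std)),
    }


# Hypothetical registrant scores: 100 likely, 100 uncertain, 100 unlikely.
scores = [0.9] * 100 + [0.5] * 100 + [0.1] * 100
scenarios = attendance_scenarios(scores)
```

Running the same function per city or per event format would give separate bands for each, which is where the variance between segments becomes visible.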

Most forecasting tools stop at prediction. Samaaro goes a step further: it ties attendance probability directly to operational and budget decisions.

By combining registration velocity, engagement behaviour, and CRM history, Samaaro doesn’t just estimate how many people might attend. It shows who is likely to attend, who needs nudging, and who is unlikely to show up at all, early enough to act on it.

Each registrant is dynamically scored and grouped into clear attendance cohorts. These cohorts then drive downstream decisions:

In Indian event environments, where last-minute registrations, mobile-first behavior, and regional variance are the norm, this linkage between prediction and action is what prevents over-spending and under-delivery.

Business events in India are changing fast. Predictive intelligence and AI reduce guesswork and produce clear attendance data, allowing companies to base budgets on actual behaviour instead of assumptions.

In a volatile and fluid Indian marketplace, planning must be accurate and efficient. Businesses that fully leverage AI and predictive intelligence to forecast their events are far better placed to see a financial return on their investment.

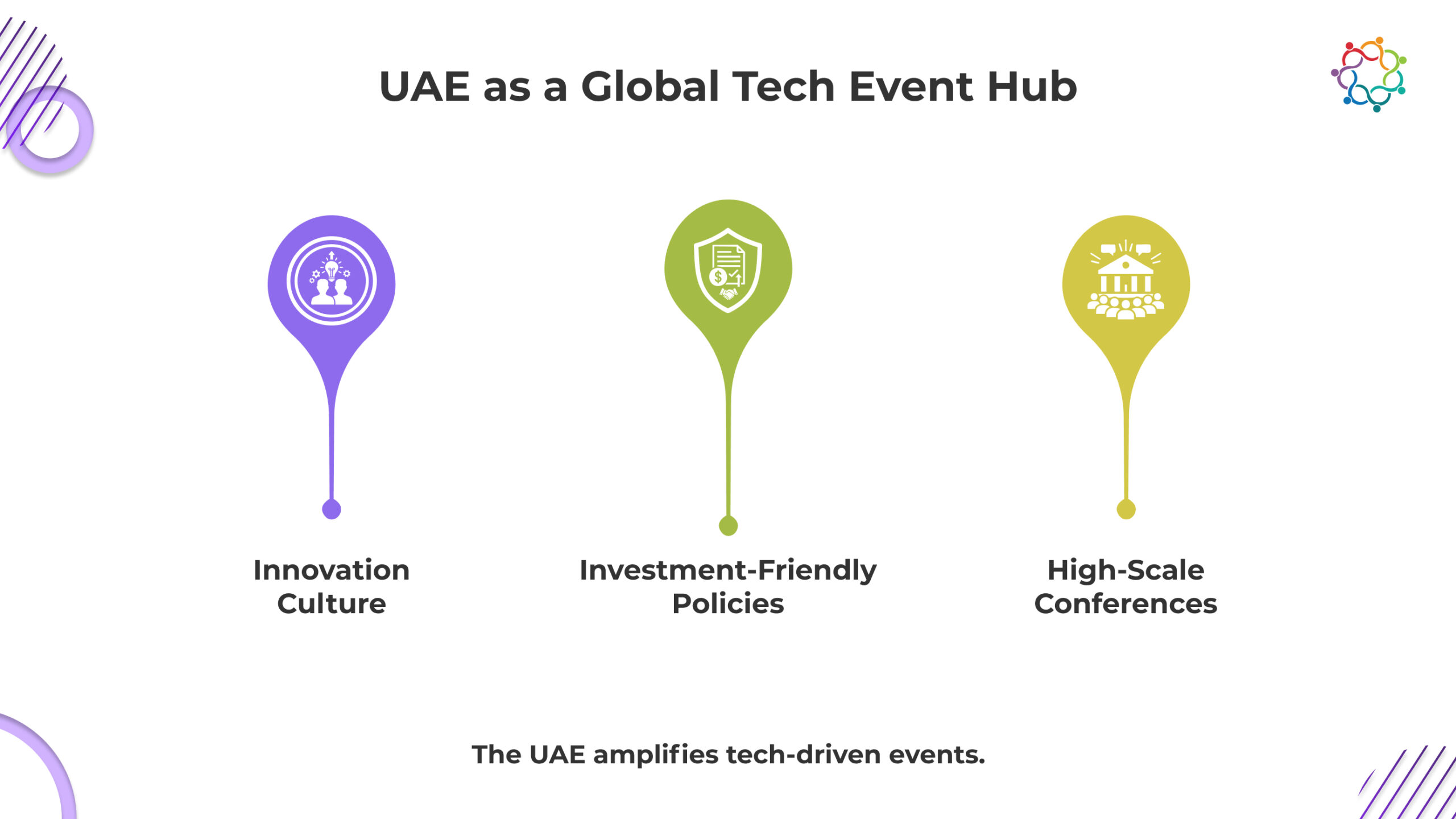

The United Arab Emirates has established itself as one of the foremost global ecosystems for technology, innovation, and corporate events. In the last decade, Dubai and Abu Dhabi have matured from regional business hubs into platforms for showcasing global technology, investment, and cross-border partnerships. With a plethora of government-backed initiatives, futuristic venues, and a rich expatriate talent pool, the country has created an environment for growth, visibility, and the rapid adoption of new technologies.

This ecosystem has changed the way technology companies build their marketing engines. Events are no longer annual touchpoints or one-off branding initiatives; rather, they are reshaping how firms in the UAE attract prospects, build partnerships, and create accelerated revenue opportunities.

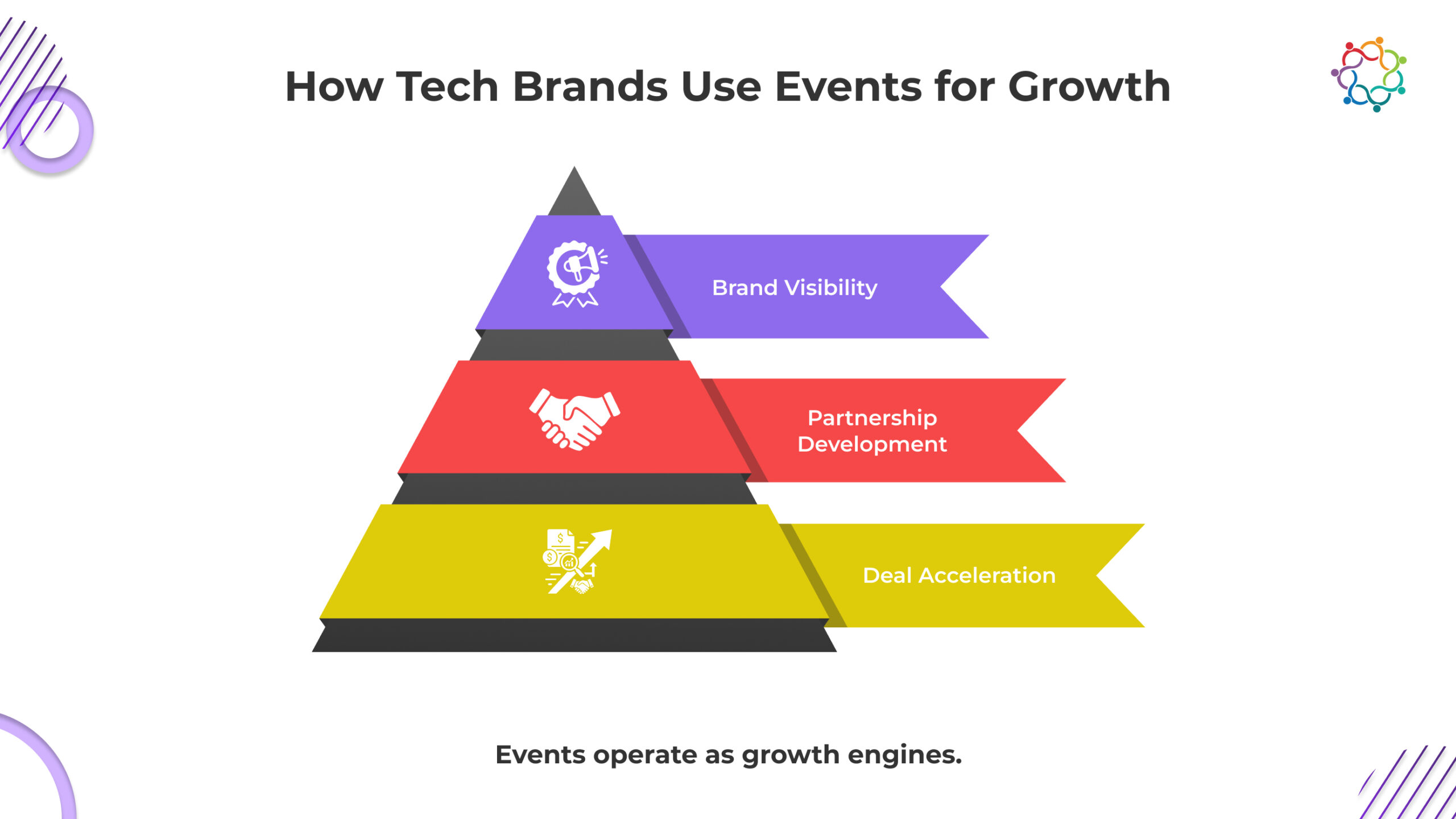

In the UAE’s rapidly changing technology environment, events are critical to growth strategy. Whether it is a fintech start-up that is looking to attract investment, a cybersecurity company showcasing their latest tech, or a cloud services company pitching a solution to local GCC governments, events are an effective mechanism for brands to build their visibility and/or have their voice heard.

UAE technology companies use events to demonstrate their innovation live. Live product demo stations, use-case zones, and testing areas allow potential buyers and investors to physically interact with the technology. Hands-on experience builds trust, especially in enterprise categories with complex decision-making pathways and multiple layers of procurement, purchasing, and approval.

Events also open the door to investment and cross-border partnerships. Sponsors and technology companies from around the globe fly to the UAE and the wider Gulf for high-value events like GITEX, LEAP, Step Conference, and ADIPEC, which concentrate innovators from around the world into a single deal-making opportunity. For local UAE technology businesses, these events provide international visibility with buyers, partners, and investors who are otherwise scattered across multinational markets.

Another significant benefit to technology companies is the UAE’s evolving ecosystem of early adopters. Technology providers, IT buyers, innovation offices in government, and digital-first teams within enterprises represent a growing community that engages with emerging technology and attends UAE events in droves. An event context generates a wide net of interested buyers/prospects with high intent to discover products through engagement. The concentration of all these individual stakeholders presents an opportunity for UAE technology companies to present their expertise and technology innovation while assessing for opportunities for geo-expansion throughout the region.

Traditionally, events in the region served mainly as large stages for brands to showcase their products and offered little way to measure event performance. This has changed rapidly for technology companies in the UAE, which are adopting data-led event marketing to get more value from event spend and a clearer idea of commercial impact.

Event platforms with CRM integrations allow marketing teams to follow each visitor’s journey from registration, to booth interaction, to meeting bookings, and finally to post-event follow-up. This is becoming table stakes as companies move toward pipeline-oriented event models. Rather than simply counting leads from the event, they want to know which accounts engaged deeply, which content informed decision-making, and ultimately how that content helped speed up the deal cycle.

Analytics are becoming central to how technology companies in the UAE define success, and they allow event teams to demonstrate the value of their work. But measurement is about more than counting attendees in general sessions. Marketers want to know the depth of engagement: how many attendees took part in product demonstrations, how many QR codes were scanned, what behavioral heat maps show, and how connections were made. These data points feed analytics dashboards shared by marketing and sales, giving leaders a common view of how event touchpoints influence outcomes.

Data-led event strategies also mean better budget allocation. For example, if a team finds that a conference did more to drive higher-quality meetings than brand awareness, it can adjust booth space to reflect that, and revise its sponsorship or next year’s attendance strategy. If breakout sessions are driving greater participation, the team can invest in more content-led programming. The shift from gut-feel planning to insight-led execution and measurement is potentially the largest shift in the UAE’s event ecosystem.

The UAE has a culturally diverse yet regionally specific audience. For tech companies, local relevance matters, especially in enterprise and government contexts. Events have even more impact when brands tailor their communication to the UAE’s culturally diverse ecosystem and its GCC neighbours.

Arabic-first communication is gaining traction in event design, specifically in tech shows for government, finance, and the public sector. Arabic versions of event apps, dual-language content tracks, and culturally sensitive messaging are all forms of localization that build trust and credibility.

Beyond language, it’s also important to recognize the regional context. Tech companies should be aware of the business culture in the UAE: its focus on long-term partnership and its preference for face-to-face conversations. Events create an ideal opportunity to build these relationships. Hospitality (lounges for networking), curated introductions, and VIP programs are becoming standard features of enterprise events in the region.

Localization can also be applied to demonstrate regional relevance in the product narrative. For example, a cloud provider with Saudi compliance, or a new fintech company that can effectively frame its product in relation to the GCC banking structure, increases its credibility instantly. Events enable brands to deliver this level of context directly to decision makers.

Looking ahead, the technology event industry in the UAE is focused on innovation, stewardship, and intelligent engagement.

Several venues and organizers in the UAE are putting sustainability frameworks in place to reduce waste and carbon emissions. The adoption of digital ticketing, sustainable builds, green certifications, and carbon-tracking dashboards has made sustainability a competitive edge for tech brands attending larger events.

AI is shifting how attendees network and find content. Intelligent matchmaking tools can now assess attendee profiles, interests, and behaviours to recommend sessions, products, and people. For UAE enterprise audiences, where decision-making cycles depend on building relationships, this is a very valuable advantage.

While events in the UAE remain physical for the most part, a number of hybrid networking touchpoints improve accessibility and convenience. The capability of providing on-demand session libraries, digital lead capturing, and demo zones gives tech brands another opportunity to extend the life of the event and build engagement long after the show floor has closed.

Together, these trends point to an ongoing commitment to innovation, supported by the modernized infrastructure available in the UAE. The tech industry has benefited: innovation is treated as a new source of energy, one that encourages experimentation and rewards forward-thinking engagement models.

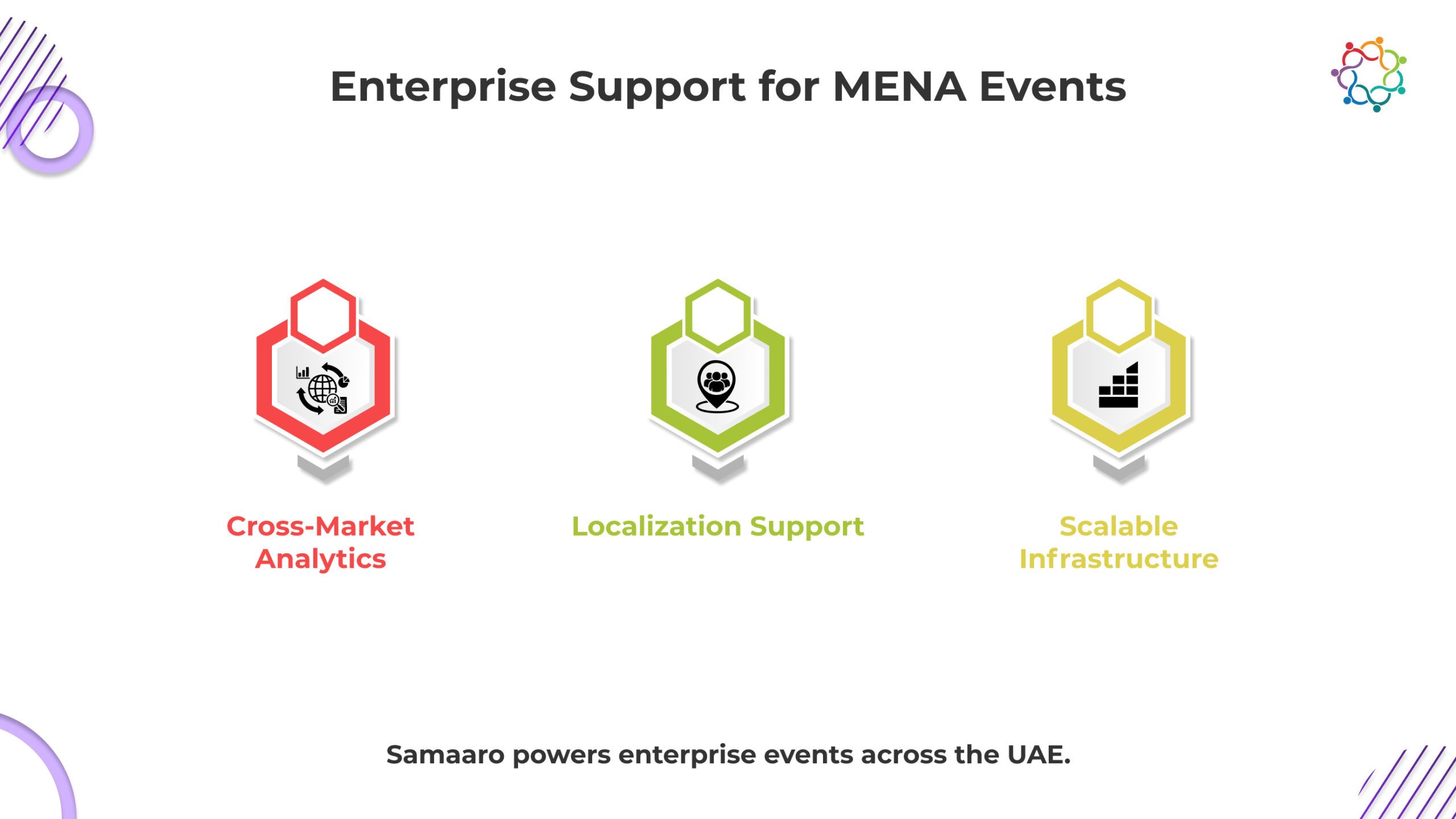

UAE tech companies don’t just run events; they operate multi-market, pipeline-driven programs that require precision, localisation, and real-time intelligence. Samaaro is built to support that complexity.

Samaaro gives UAE and MENA technology teams a unified system that ties registration, booth activity, behavioural signals, and CRM movement into clear, revenue-linked analytics. Instead of counting “leads,” teams see which accounts showed high intent, which sessions accelerated deals, and how event touchpoints influenced pipeline progression across the UAE, KSA, and wider GCC markets.

For a region where in-person connection drives enterprise purchasing, Samaaro supports:

For tech companies participating in events across the region, Samaaro becomes the operational engine that captures intent, measures influence, and scales event output across borders.

Samaaro turns events in the UAE from branding exercises into repeatable, revenue-aligned growth programs, exactly what the region’s tech ecosystem now demands.

In the UAE’s tech landscape, events are no longer episodic marketing activities. They act as enterprise media platforms where brands communicate value, strengthen partnerships, and accelerate revenue. With government support, a thriving innovation ecosystem, and an audience that embraces technology, events have become strategic engines powering business growth. Tech companies that invest in data-driven event frameworks, localised experiences, and future-facing engagement models will continue to shape the region’s digital transformation story.

Power your next UAE tech event with Samaaro’s enterprise-ready event solutions.

Event teams now face more data than ever. Registration figures, heatmaps, dwell times, content interactions, meeting bookings, and pipeline attribution all live inside increasingly sophisticated event systems and technology platforms. Yet despite this abundance of information, most event reports don’t really matter to executives. CMOs don’t care for raw data; they want clarity, storytelling, and alignment with business goals.

The issue is not the lack of data; the issue is the lack of translation between operational metrics and strategic impact. Creating an event analytics framework that CMOs can use is the answer. The framework organizes information in a way that executive audiences can easily understand and act upon.

At the core of every event analytics framework is KPI alignment. Before considering charts, dashboards or metrics, analysts must ask the most important question: what does the business care about? CMOs tend to assess event success through three lenses: brand, pipeline, and audience growth.

These metrics assess whether the event improved attendees’ awareness of the brand and their perception of its authority. The data here may include attendance at thought-leadership sessions, mentions on social media, views of thought-leadership content, speaker ratings, and sentiment trends. Try not to drown the report in granular engagement metrics; simply highlight the signals that suggest the brand’s story resonated with the right audience.

CMOs need to understand event conversion in revenue terms. This includes booked meetings, MQL-to-SQL conversion, deal acceleration, and influenced pipeline. The goal, again, is not to document every sales activity or report but to boil them down to a concise story. Did the event attract the right accounts, and did it move them closer to a decision?
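The funnel arithmetic behind that story is simple. The sketch below shows one way to compute stage-to-stage conversion and compress it into a single line for an executive summary; the funnel counts and the `conversion_rate` helper are hypothetical and for illustration only.

```python
def conversion_rate(converted: int, total: int) -> float:
    """Share of `total` that converted, or 0.0 when there is nothing to convert."""
    return converted / total if total else 0.0


# Hypothetical event funnel counts, for illustration only.
funnel = {"leads": 400, "mqls": 120, "sqls": 30, "meetings_booked": 18}

lead_to_mql = conversion_rate(funnel["mqls"], funnel["leads"])
mql_to_sql = conversion_rate(funnel["sqls"], funnel["mqls"])

# One sentence an executive can read without opening the underlying data.
summary = (
    f"{funnel['mqls']} MQLs from {funnel['leads']} leads ({lead_to_mql:.0%}); "
    f"{funnel['sqls']} progressed to SQL ({mql_to_sql:.0%})."
)
```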

Enterprises care about building long-term communities. Metrics like first-time attendees, returning attendees, new content subscribers, and progression to other brand touchpoints allow CMOs to judge whether a specific event expanded their audience. When you report on audience growth KPIs, frame them through retention trends and long-term engagement.

Aligning KPIs to these three buckets helps ensure your analytics framework speaks the language executive leadership already uses. When CMOs see data framed in context, they are far more likely to engage deeply.

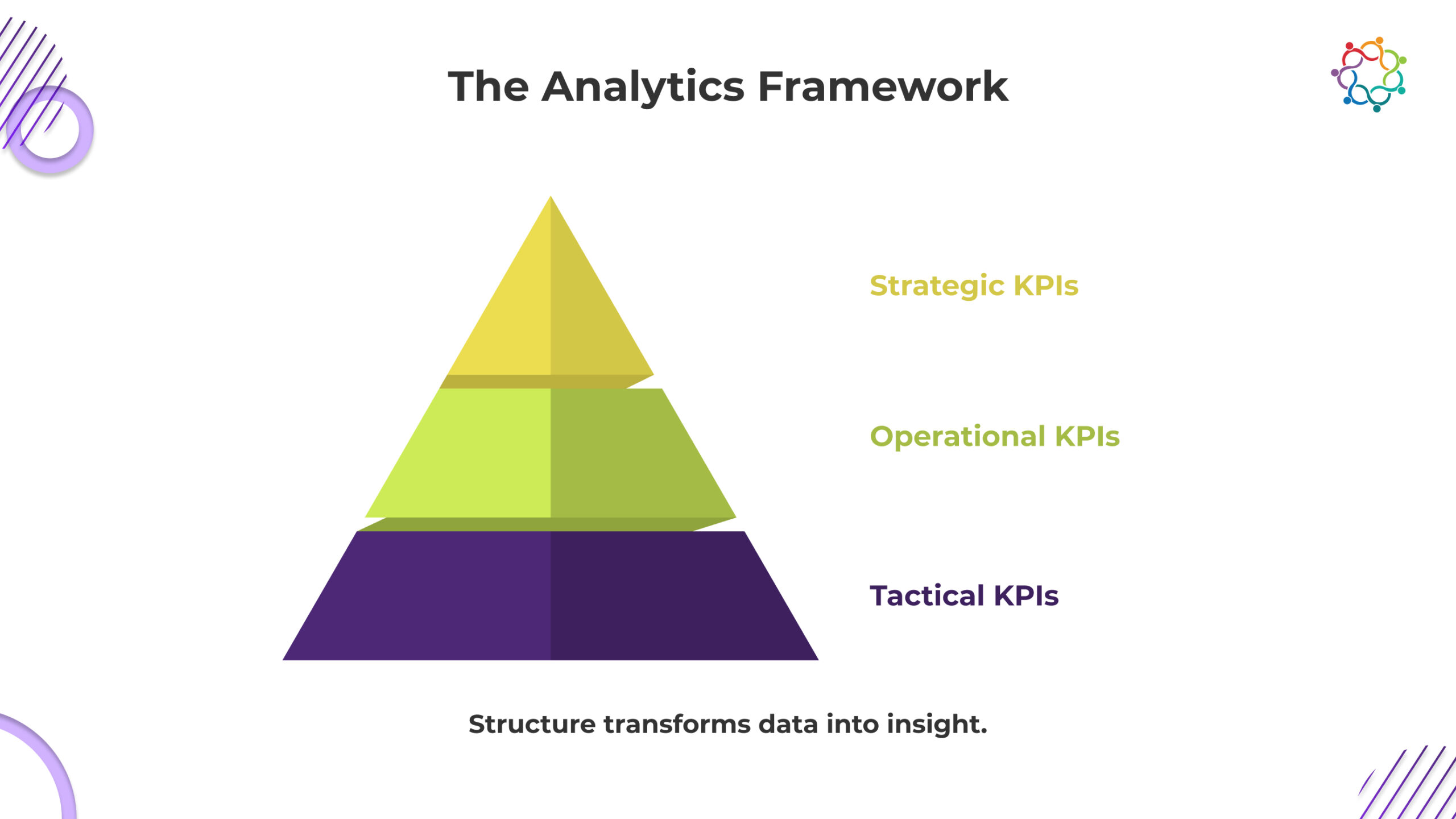

After determining KPIs, the next focus is creating an architecture that aligns tactical performance with strategic results. A three-tiered structure works best for event analytics: tactical, operational and strategic.

This level contains the most fundamental indicators, such as registrations, attendance rate, session engagement, content engagement and app utilization. Tactical metrics allow analysts to review what happened during the event but seldom tell the full story at an executive level. Nevertheless, they remain important because they provide the foundation for higher-level conclusions.

This level connects audience behaviors to business outcomes. Examples of operational metrics are booth conversions, meetings booked, CRM enrichment, influenced opportunities and content-based lead progressions. Operational metrics sit in the middle, linking engagement metrics to revenue, demonstrating how those interactions influenced where buyers go next.

This is where CMOs focus the majority of their energy and time. Strategic metrics are pipeline contribution, ROI, retention habits, customer expansion opportunities, and incremental brand lift over a period of time. The strategic metric is the “so what” metric; what does this mean for the business?

Using these three levels to organize your analytics framework creates a logical line of sight from activity-level data to enterprise-level outcomes. Label tactical and operational metrics as intermediate business outcomes rather than simple activities, so that a CMO or executive can scan the strategic metrics first and dive down to lower levels only as necessary. This hierarchy makes the report more intuitive and digestible, and aligns it with executives’ priorities.
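The three-tier hierarchy can be sketched as a small data structure that sorts metrics strategic-first for an executive report. This is a minimal sketch only: the `Tier` and `Metric` types, the `render` function, and all sample values are assumptions made for illustration, not part of any specific analytics tool.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    # Lower value = shown earlier in an executive report.
    STRATEGIC = 1
    OPERATIONAL = 2
    TACTICAL = 3


@dataclass(frozen=True)
class Metric:
    name: str
    value: float
    unit: str
    tier: Tier


def executive_order(metrics):
    """Sort metrics strategic-first; sorted() is stable, so input order is kept within a tier."""
    return sorted(metrics, key=lambda m: m.tier)


def render(metrics):
    """Return report lines with one heading per tier, strategic tier first."""
    lines = []
    current = None
    for m in executive_order(metrics):
        if m.tier != current:
            current = m.tier
            lines.append(f"[{current.name.title()}]")
        lines.append(f"  {m.name}: {m.value:g} {m.unit}")
    return lines


# Illustrative values only, not real event data.
sample = [
    Metric("Registrations", 1200, "people", Tier.TACTICAL),
    Metric("Meetings booked", 85, "meetings", Tier.OPERATIONAL),
    Metric("Influenced pipeline", 12, "crore INR", Tier.STRATEGIC),
    Metric("Attendance rate", 64, "%", Tier.TACTICAL),
]

report = render(sample)
print("\n".join(report))
```

Tagging each metric with its tier at the point of definition means the report order follows from the data itself, so an executive reading top to bottom meets the strategic numbers before the supporting detail.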

Even the most useful metric will be devalued if it’s poorly visualized. Effective visualization translates our complex datasets and reports into crucial intelligence that’s far easier to read through and interpret. When designing dashboards for an event or for use in an executive presentation, the most important design principle is clarity.

When dealing with executives, resist the temptation to show too many charts. Instead, focus on a small set of relevant visuals that tell the story with great impact.

Also, use consistent color coding across all data visualizations so that leaders can relate any chart to a shared standard at a glance. For example, you might use blue for engagement, green for pipeline, and grey for operational measures. A chart that relates to engagement should then be color coded blue in its title and/or legend. That way, when the CMO looks at any visualization, they can immediately tell which category it belongs to.
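One way to keep that color standard consistent is to define it once and have every chart look it up. The sketch below assumes the blue/green/grey example above; the hex values, the `CATEGORY_COLORS` mapping, and the `chart_style` helper are hypothetical choices, and the returned dictionary would still need to be passed to whichever charting library you use.

```python
# Single source of truth for category colors (hex values are illustrative choices).
CATEGORY_COLORS = {
    "engagement": "#1f77b4",   # blue
    "pipeline": "#2ca02c",     # green
    "operational": "#7f7f7f",  # grey
}


def chart_style(title: str, category: str) -> dict:
    """Return title and color settings for a chart in the given category."""
    key = category.lower()
    if key not in CATEGORY_COLORS:
        # Fail loudly so an unmapped category never gets an ad hoc color.
        raise ValueError(f"Unknown category: {category!r}")
    return {"title": title, "category": key, "color": CATEGORY_COLORS[key]}


style = chart_style("Session dwell time by track", "Engagement")
print(style["color"])
```

Rejecting unknown categories, rather than falling back to a default color, keeps one-off colors from creeping into the dashboard.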

Hierarchy matters. Each reporting section should start with a headline metric and a related chart. For example, if your strategic KPI is “₹12 crore in influenced pipeline,” follow the number with the cohort, session, or interaction charts that explain it. This accomplishes two things: it communicates the fact and the story behind the number.

Finally, eliminate operational terminology from your analytics. Use “time spent at priority content areas,” not “dwell-based heatmap segmentation.” Use “how engagement influenced opportunity progression,” not “multi-touch attribution variance.” Frame insights in terms of business outcomes, not operational analytics terminology.

A solid event analytics framework provides insight into more than performance; it provides insight that drives better decisions. CMOs do not want pages of metrics. They want actionable recommendations. Analysts should interpret the data and thoughtfully explain what needs to change for the next event.

For example, if there is strong behavioral interest from attendees in certain industry-specific listening sessions and weaker attendance at generic product demonstrations, there would be a recommendation to restructure program content around attendee vertical priorities. If the behavior-based, CRM-mapped engagement showed that mid-market accounts demonstrate a stronger influence over pipeline than enterprise accounts, the next order of business is to modify targeting or bring sales into planning sooner in the pre-event stage.

Each recommendation should be framed in forward-looking language.

Examples of forward-looking recommendations:

When insights provide a clear path to next steps, executives quickly see and articulate the value of analytics within their company. The goal is to shift the conversation from reporting what happened to advising what should happen next.

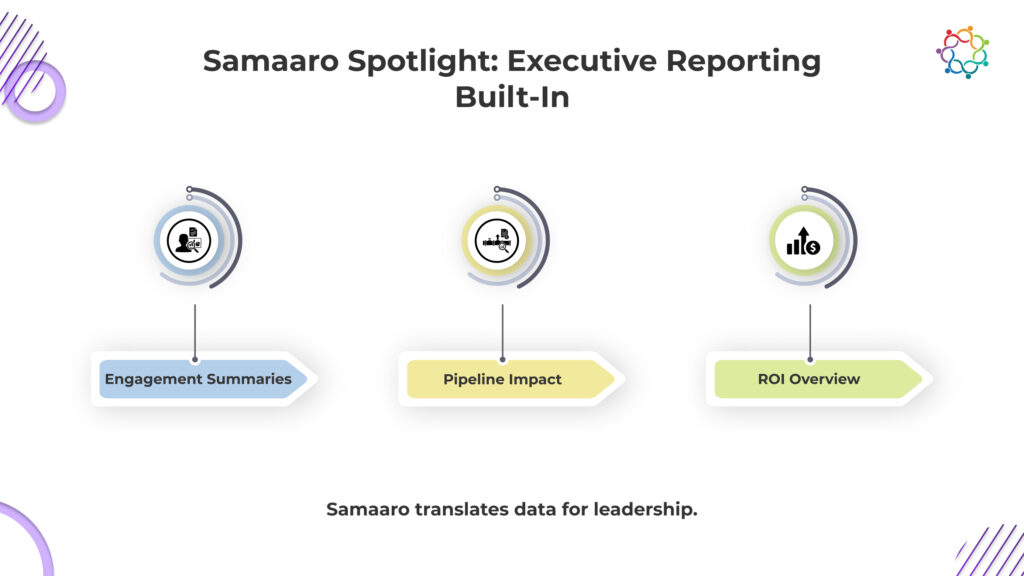

Building an event analytics framework only works if the underlying system translates raw signals into strategic clarity. Samaaro is engineered around the same hierarchy CMOs use to evaluate events: brand lift, pipeline movement, and audience growth.

Instead of handing executives dozens of tactical charts, Samaaro condenses five data sources (registration, engagement, behavioural intent, CRM movement, and ROI) into story-first dashboards that answer the questions CMOs actually ask:

Did we attract the right audience?

Samaaro links registration and behavioural patterns to ICP profiles, industry clusters, and account lists, surfacing whether the event reached the intended market.

Did the event change buyer behaviour?

Session-level dwell time, repeat interactions, and content pathways are summarised as signals of intent, not as isolated heatmaps.

Did we create sales momentum?

Opportunity movement, deal acceleration, and account scoring are tied directly to engagement patterns, giving a precise view of influence instead of “we generated 400 leads.”

What should we do next?

Samaaro auto-identifies high-intent accounts, underperforming sessions, content that created lift, and gaps in audience fit, turning dashboards into immediate recommendations.

Every insight is organised by the same three-tier structure used in your framework: tactical, operational, and strategic.

This makes Samaaro’s reporting instantly readable for CMOs, who scan the strategic layer first and drill into the underlying evidence only when necessary.

Samaaro doesn’t just present data, it tells the business story of the event, in the format executives already think in.

An effective event analytics framework reduces complexity by aligning all KPIs to business goals, organizing data into intuitive tiers, visualizing insights effectively and converting metrics into action. CMOs do not ask for raw numbers; they ask for a story they can trust, one that articulates how the event contributes to brand equity, pipeline movement and long-term audience growth. When event analysts create a framework that speaks this ‘language’ of trust, data goes from being informative to transformational.

Samaaro is an AI-powered event marketing platform that enables marketing teams to turn events into a measurable growth channel by planning, promoting, executing, and measuring their business impact.

© 2026 — Samaaro. All Rights Reserved.