Bottom Line:

Events drive visibility, but without sustained validation and alignment, interest rarely converts into actual product usage.

B2B tech events are not underperforming. They are doing exactly what they are designed to do: generating attention, engagement, and product curiosity at scale. The problem is what happens after.

At product launches, developer events, and SaaS user conferences, companies build high-impact spaces where products can be demonstrated clearly and precisely. Attendees see the benefits, discuss them, and leave convinced that the product is worthwhile. From the event's point of view, this looks like success.

But product metrics tell a different story. Trials do not increase proportionally. Onboarding activity remains flat. Active usage does not reflect the level of engagement observed.

The gap is not accidental. It exists because interest is being mistaken for adoption.

This blog examines why B2B tech events consistently generate product interest but fail to translate that interest into actual product usage.

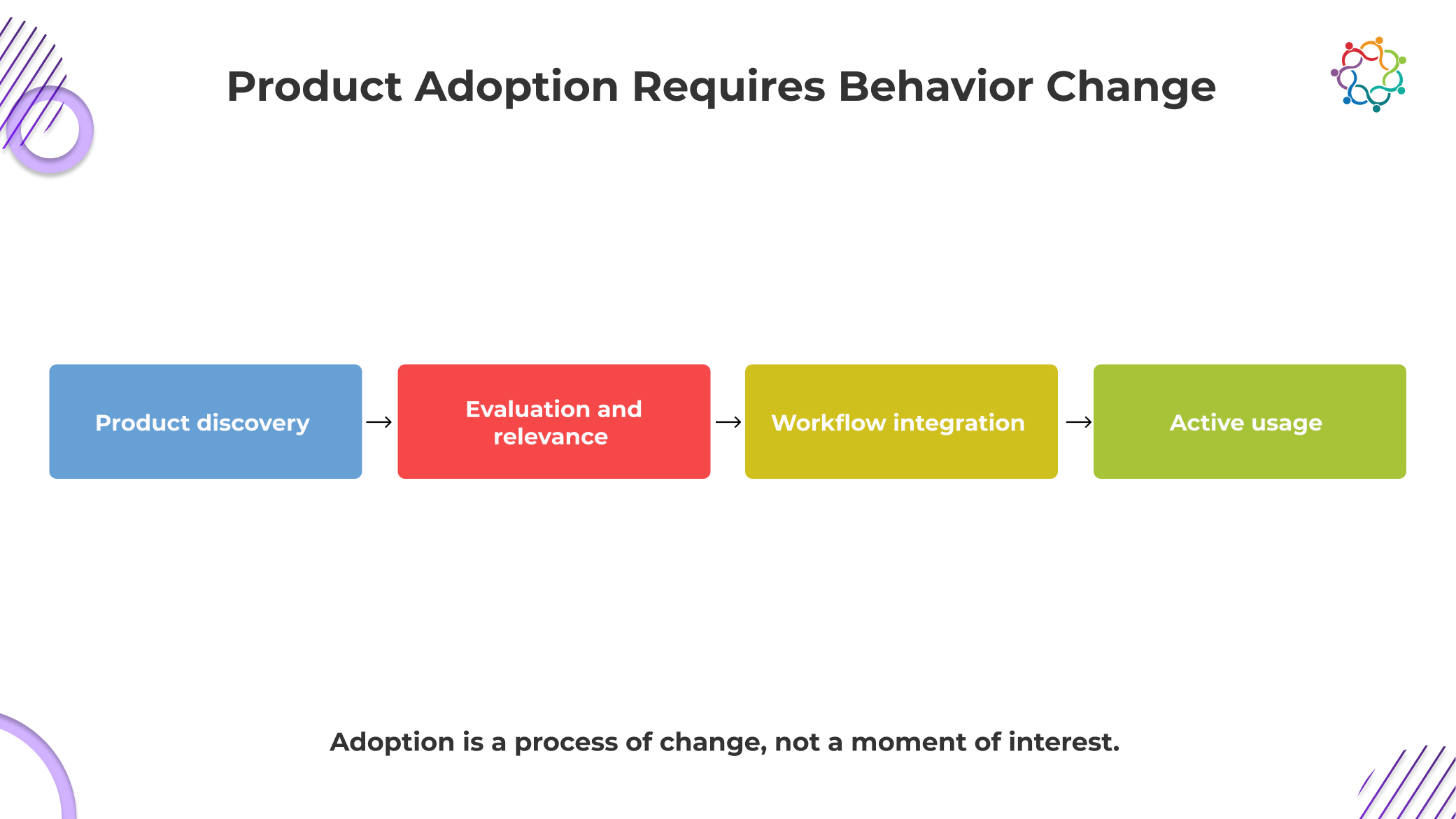

Product adoption is not a continuation of interest. It is a shift in behavior that introduces friction, risk, and internal dependency. This is where most assumptions around event impact begin to fail.

Engaging with a product at an event requires attention. Adopting it requires disrupting existing workflows, reallocating time, and justifying change. These are not lightweight decisions. They demand evaluation under real constraints, not event conditions.

A critical gap sits in who attends versus who decides. The person exploring the product at a developer event or product marketing event is often not the one responsible for implementation or budget approval. Even if interest is strong, it must be translated into internal alignment across teams that were not part of the experience.

Engineering, IT, and leadership each introduce their own criteria. Feasibility, risk, and long-term value all come under scrutiny. What felt straightforward during the event becomes layered and uncertain.

This is why adoption slows down. Interest is immediate. Adoption is negotiated, validated, and often delayed until it loses momentum.

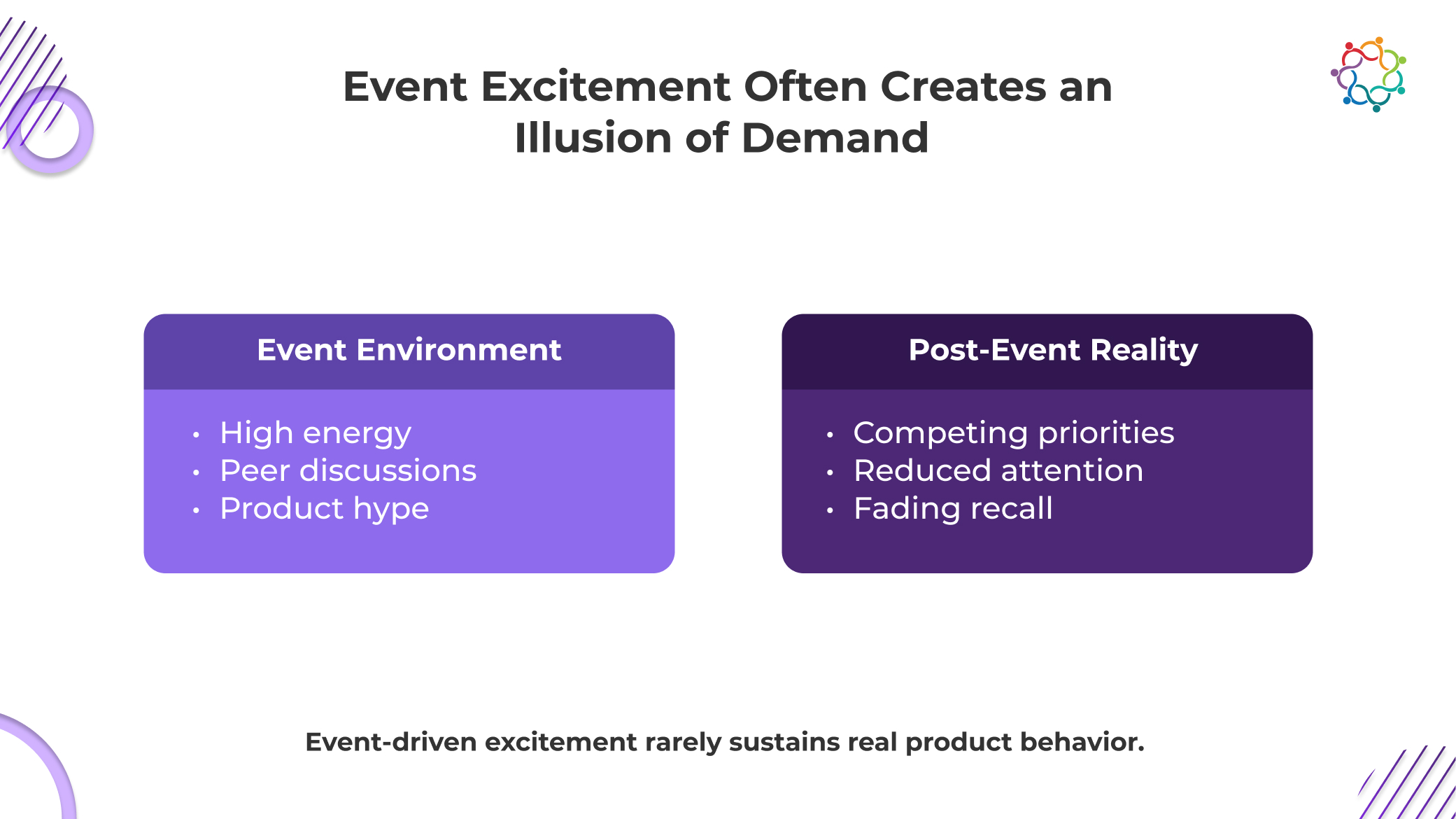

Event environments do not just amplify interest. They manufacture confidence around demand that has not been tested. Inside B2B tech events, attendees operate in a low-risk, high-stimulation setting where curiosity is encouraged but commitment is not required. Expressing interest feels natural because nothing forces follow-through.

What gets missed is that this behavior is conditional. It exists only within the event environment. The moment that context disappears, so does the signal strength.

The deeper issue is not just that interest fades. It is that teams treat this temporary engagement as evidence of real market pull. Pipeline assumptions get built. Forecasts get influenced. Product narratives get reinforced.

But this demand has never faced friction. It has not encountered internal resistance, budget constraints, or workflow disruption. It has not competed with existing tools.

This is not early demand. It is pre-demand noise.

Until interest survives outside the event, it should not be treated as proof of anything.

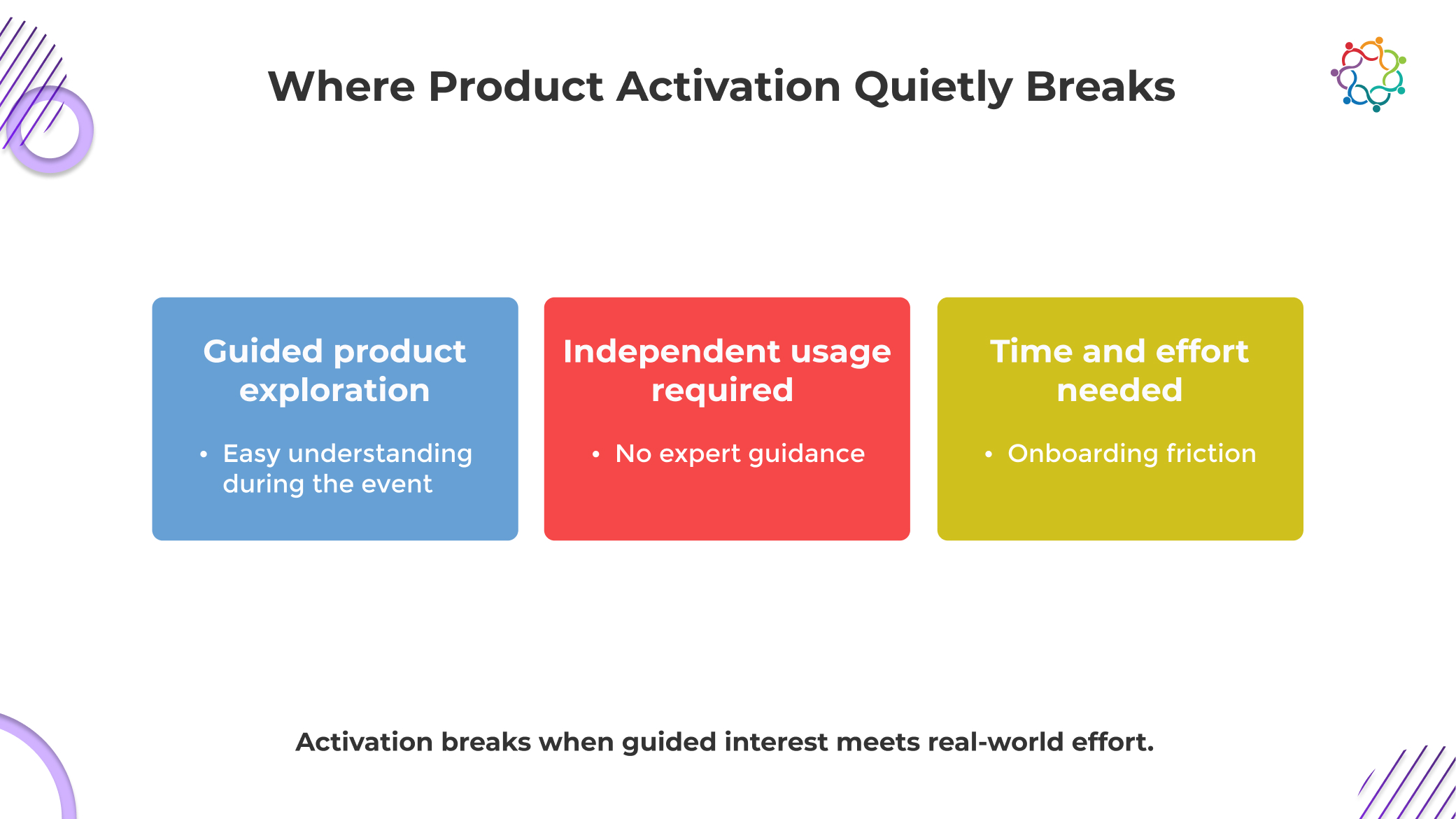

The breakdown does not happen at the point of interest. It happens in the transition from a controlled experience to an uncontrolled reality. This is where momentum loses structure and begins to collapse.

During events, the product is experienced in its most optimized form. Every interaction is guided, every feature is contextualized, and complexity is deliberately reduced. This creates clarity that does not exist outside the event.

Once the environment disappears, users face the product without support. What felt intuitive during a demo now requires independent navigation. Without guidance, uncertainty increases, and exploration slows down before it becomes meaningful usage.

Interest at an individual level is rarely enough to drive adoption in B2B environments. Most products require validation across teams that were never part of the event experience.

Engineering evaluates feasibility, IT examines risk, and leadership questions value. Each layer introduces friction and delay. The initial momentum weakens as the product moves through internal scrutiny that the event environment never exposed.

Even when alignment is possible, activation still depends on available time and priority. Starting a trial or onboarding a product requires focused effort that competes with existing responsibilities.

Without immediate necessity, the product is deprioritized. Interest alone cannot justify the shift in attention required to begin usage. This is where most activation attempts stall and eventually disappear.

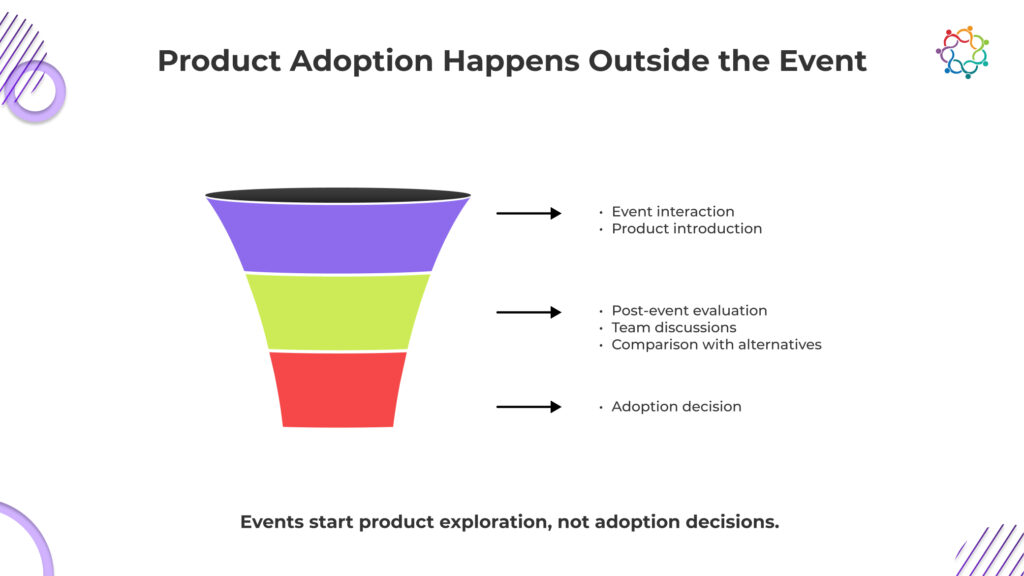

The assumption that adoption decisions happen during events is fundamentally flawed. Events create exposure, not commitment. The actual decision-making process begins after attendees return to their work environments, where the product is evaluated under real conditions.

In this phase, users revisit the product without guidance. They explore it independently, compare it with existing solutions, and assess whether it fits into their workflows. This evaluation is slower, more critical, and far more grounded than anything that happens during the event.

This is also where the majority of event-driven interest disappears. The product must now compete with established tools, internal processes, and organizational inertia. What felt compelling in a controlled environment must now prove its value in a complex and often resistant system.

This phase is also the hardest for teams to observe. Engagement is tracked during the event, but the evaluation that follows is largely invisible. This creates a gap between what is perceived as success and what is actually happening.

As a result, engagement data shows that B2B tech events work well, while product adoption stays flat. Teams persistently lack insight into how events affect product growth because the link between the two is assumed rather than measured.
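One way to measure that link instead of assuming it is to follow the same attendees from event engagement through to later product usage. The sketch below is a minimal illustration only: the `Attendee` fields, the 30-day activity window, and the `adoption_funnel` helper are hypothetical names chosen for this example, not part of any particular platform or CRM.

```python
from dataclasses import dataclass


@dataclass
class Attendee:
    # All fields are illustrative assumptions about what a team might track.
    email: str
    engaged_at_event: bool      # e.g. joined a demo or product session
    started_trial: bool         # post-event product signal
    active_after_30_days: bool  # still using the product 30 days later


def adoption_funnel(attendees):
    """Count how many engaged attendees go on to trial and active usage."""
    engaged = [a for a in attendees if a.engaged_at_event]
    trials = [a for a in engaged if a.started_trial]
    active = [a for a in trials if a.active_after_30_days]

    def rate(part, whole):
        # Avoid division by zero when a stage is empty.
        return len(part) / len(whole) if whole else 0.0

    return {
        "engaged": len(engaged),
        "trials": len(trials),
        "active": len(active),
        "engaged_to_trial": rate(trials, engaged),
        "trial_to_active": rate(active, trials),
    }
```

Reported alongside engagement numbers, the two rates show how much event interest survives outside the event, rather than leaving that to assumption.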

The problem is not that engagement signals are weak. It is that they are easy to collect and easier to believe. Demo interactions, session attendance, and product discussions create a visible layer of activity that feels like progress.

Teams keep misreading these signals because they are immediately available, while real adoption data is delayed, fragmented, and harder to attribute. Engagement offers instant validation. Adoption requires patience and often reveals uncomfortable truths.

There is also a structural incentive to prioritize these metrics. Marketing success is often measured by participation and interaction levels, not by downstream product usage. This creates a bias toward signals that can be reported quickly and positively.

Over time, this becomes normalized. Engagement is treated as a leading indicator of adoption, even when there is no consistent evidence supporting that relationship.

The result is a system where teams optimize for what is visible, not what is real.

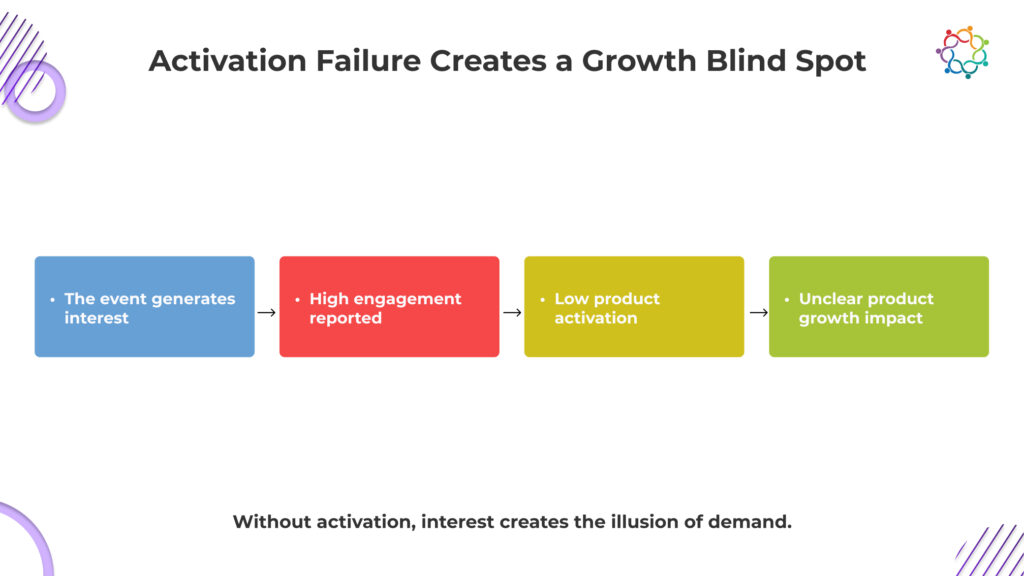

When interest does not convert into usage, the problem is not visibility. It is misinterpretation. Teams believe growth signals are strong, while actual product behavior tells a very different story.

Event engagement creates the appearance of demand, but without activation, it remains unverified. Teams mistake visibility for traction, leading to decisions based on perception rather than actual product usage patterns.

Significant spend goes into B2B tech events, but when adoption does not follow, customer acquisition cost rises silently. Investment appears justified, while actual user growth fails to support it.
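The arithmetic behind this silent rise can be made explicit by dividing the same spend by two different outcomes. This is a rough sketch with made-up figures; `cost_per_outcome` and its parameters are hypothetical names used only for illustration.

```python
def cost_per_outcome(event_spend, engaged_leads, activated_users):
    """Return (cost per engaged lead, cost per activated user)."""
    # A stage with zero outcomes has no finite cost per outcome.
    per_lead = event_spend / engaged_leads if engaged_leads else float("inf")
    per_user = event_spend / activated_users if activated_users else float("inf")
    return per_lead, per_user


# Illustrative numbers only: 50,000 spent, 500 engaged leads, 10 activated users.
per_lead, per_user = cost_per_outcome(50_000, 500, 10)
print(per_lead, per_user)  # 100.0 5000.0
```

The same event looks efficient when cost is divided by engagement and expensive when it is divided by activated users, which is why the investment can appear justified while acquisition cost climbs.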

Strong engagement metrics push teams to double down on events. Without linking these signals to adoption, go-to-market strategies become biased toward visibility channels that do not deliver sustained product growth.

Marketing reports success through engagement data, while product teams see stagnant usage. This disconnect creates confusion at the leadership level, making it harder to identify what is truly driving product performance.

This blind spot is not accidental. It is reinforced every time interest is measured without accountability to adoption.

Event momentum feels powerful, but it is structurally short-lived. It is built on concentrated attention, not sustained intent. Once the event ends, that intensity disperses, and the product must compete in an environment where attention is fragmented and priorities are already defined.

What most teams underestimate is that adoption is not triggered by exposure. It is validated through continued relevance. A product must repeatedly prove that it deserves space within an existing system that is already functioning. That validation does not happen during an event. It happens in the weeks that follow, under pressure, scrutiny, and competing alternatives.

Momentum does not survive without reinforcement. And events, by design, do not provide that continuity.

This is why exposure spikes during events rarely translate into usage. Without sustained interaction, the product is remembered but not adopted.

B2B tech events do not fail because they lack attention. They fail because attention is mistaken for adoption. The room feels like demand, but the product never enters real workflows. The people who engage are often not the ones who decide or use. The product that looked simple in a demo becomes complex in reality.

This is not a visibility problem. It is a translation failure between interest and action.

If this gap is ignored, companies will keep funding experiences that look successful but do not move product growth.

Interest fills rooms. Adoption changes behavior. Only one of these scales a product.

Samaaro is an AI-powered event marketing platform that enables marketing teams to turn events into a measurable growth channel by planning, promoting, executing, and measuring their business impact.
