Bottom Line:

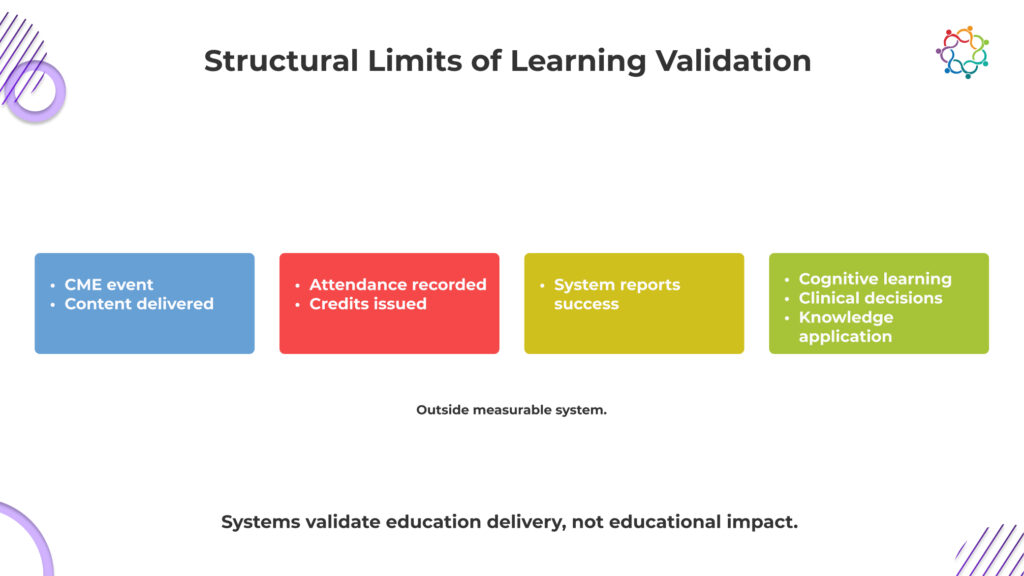

CME events prove exposure, not impact; real educational change occurs in practice, not in attendance logs.

CME proves presence, not progress.

That is the metric most programs optimize for, even if they do not say it out loud.

Across CME events, participation looks strong on paper. Sessions fill up. Physicians log attendance. Credits are issued and documented. From a reporting standpoint, everything signals success. The system confirms that education was delivered and received.

But that confirmation is superficial.

Attendance only proves that a physician was present when information was shared. It does not confirm whether they engaged with the content, understood its clinical relevance, or changed how they interpret medical decisions. The assumption that presence equals learning is where the problem begins.

This is not a gap in effort. It is a gap in validation.

The system captures exposure because it is measurable. It cannot capture cognitive change because it is not.

This blog examines the Learning Validation Gap in CME and why proving attendance is far easier than proving meaningful learning.

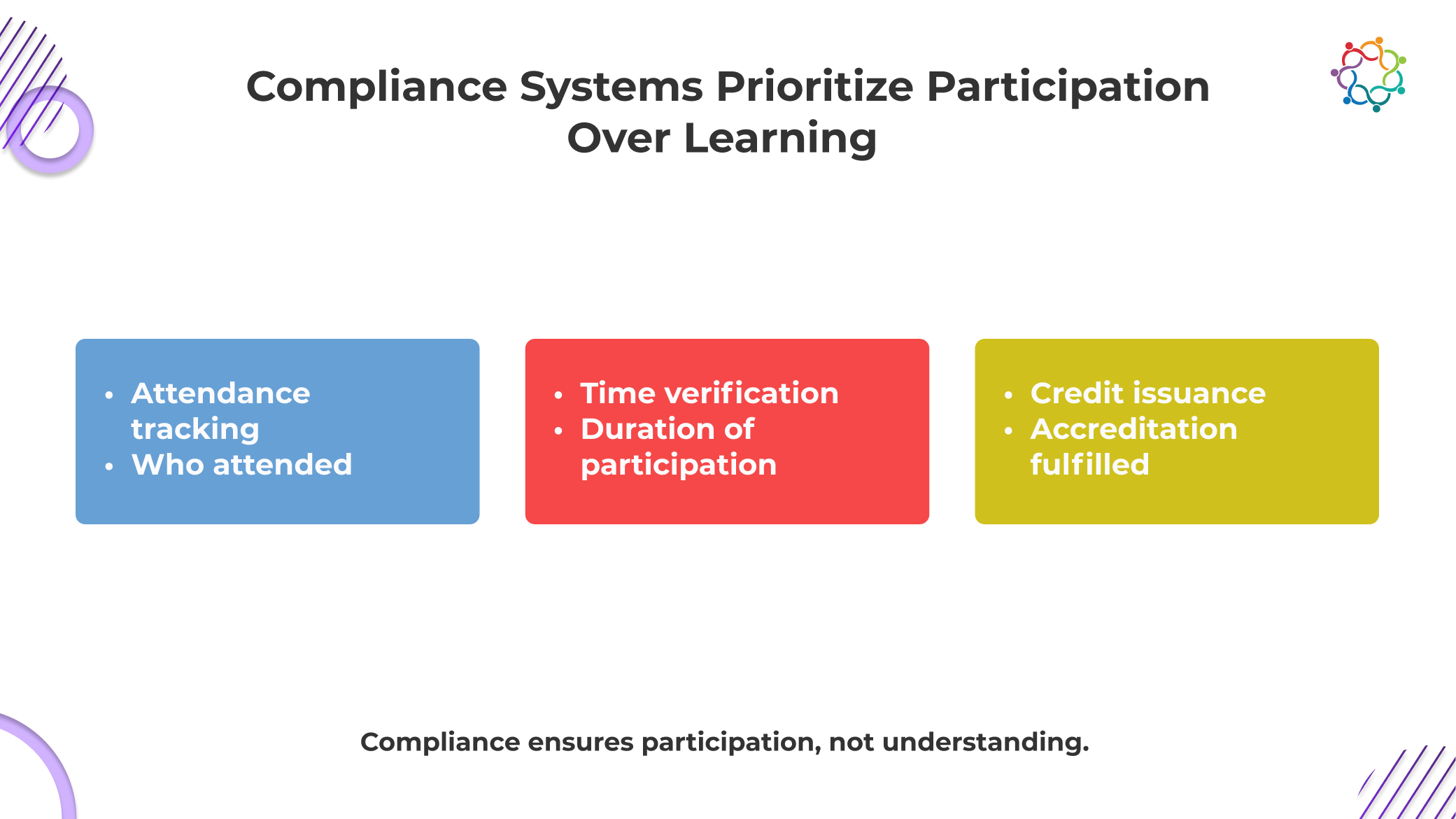

CME systems are not failing to measure learning. They were never built to do it.

At their core, these frameworks exist to prove compliance. They ensure that educational standards are met, content is accredited, and physician participation is properly documented. Everything revolves around what can be verified in an audit.

And learning cannot be audited.

So the system defaults to what it can prove: attendance logs, session duration, and credit issuance. These are clean, defensible, and easy to report. They satisfy regulatory requirements and create the appearance of educational rigor.

But they do not confirm understanding.

This is the uncomfortable reality. The system is designed to validate that education was delivered, not that it was absorbed. In Continuing Medical Education events, participation becomes the endpoint because it is measurable.

Learning remains outside the system, unverified and largely assumed.

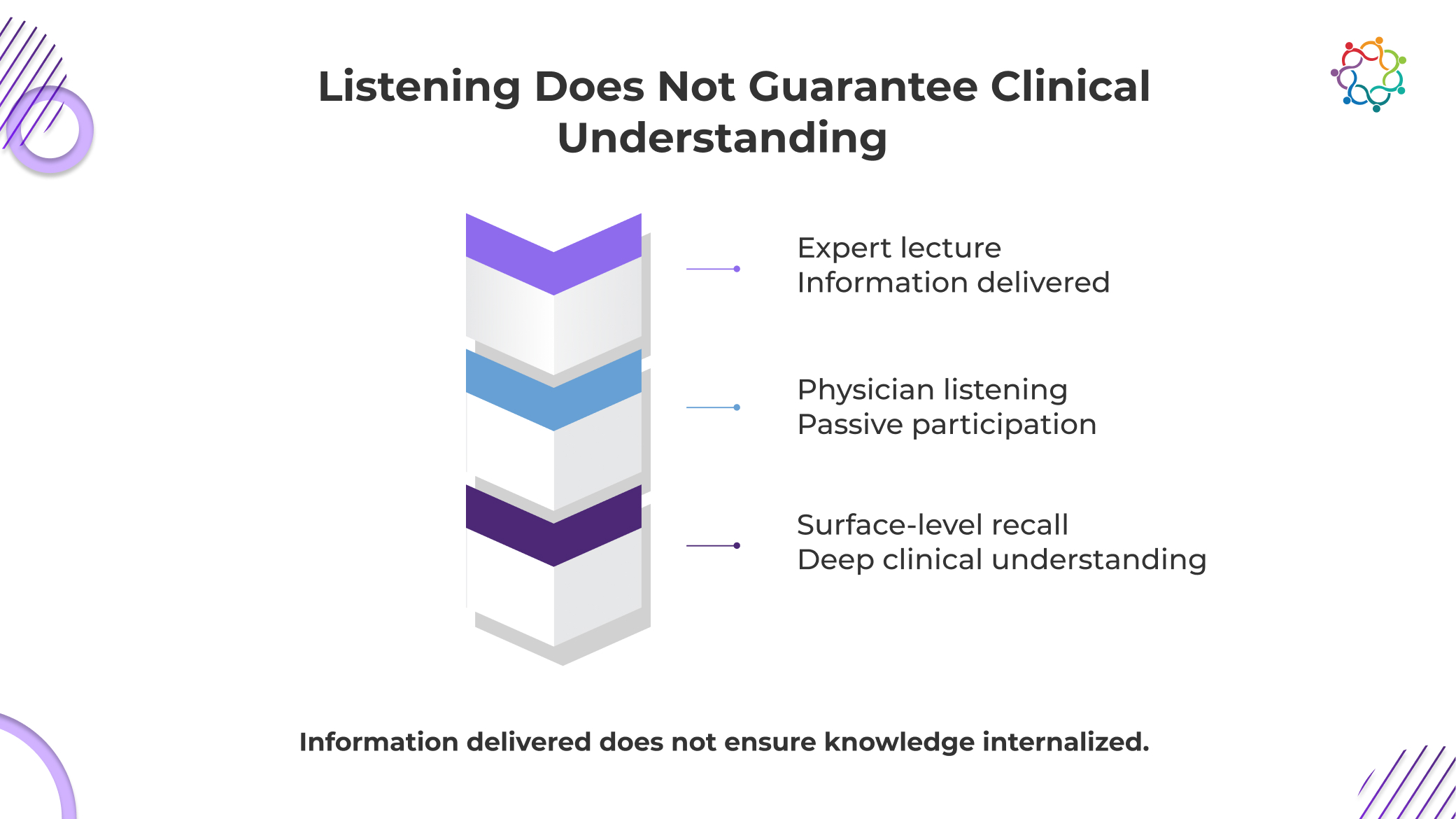

Most medical education events are built on a simple assumption: if physicians hear the information, they will understand it. That assumption is dangerously weak.

Clinical knowledge is not acquired through exposure alone. It requires context, interpretation, and the ability to apply it under pressure. Even if a physician attends the entire session and follows every presentation, they may still leave with a fragmented or inaccurate understanding of the subject. The system cannot detect that gap.

This is not just an academic concern. It is a clinical risk.

If a physician misinterprets a guideline or fails to internalize a treatment protocol, their decisions do not improve. They may rely on outdated approaches or apply new information incorrectly. The consequence is not poor learning metrics. The consequence is inconsistent patient care.

When learning is assumed instead of validated, error becomes invisible.

And yet, most CME conferences continue to treat listening as sufficient proof of understanding, without ever verifying what actually changed in clinical thinking.

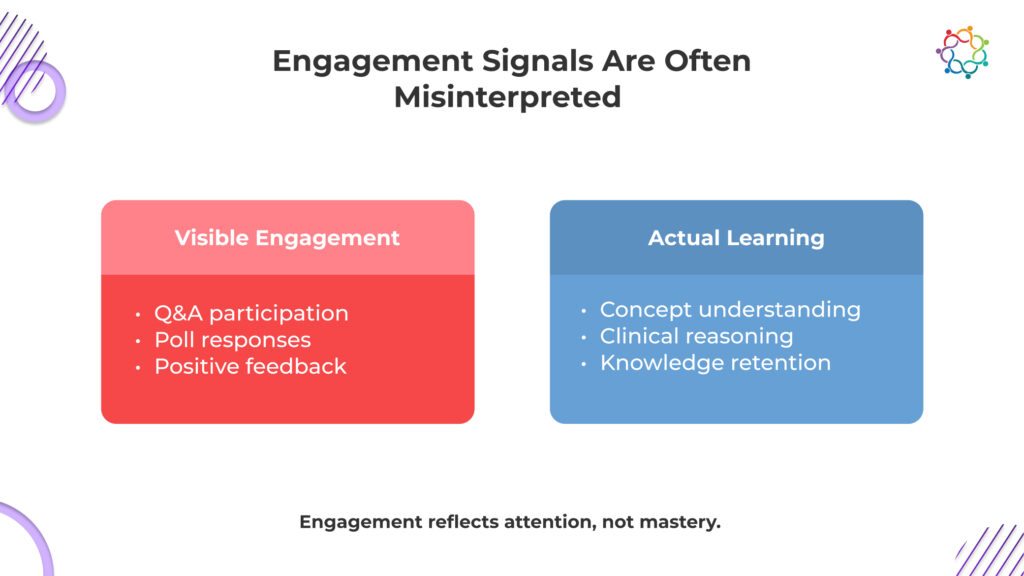

Engagement metrics do not validate learning. They create false confidence that learning has happened.

In many CME events, interaction is treated as a proxy for effectiveness. Questions are asked, polls are answered, and feedback scores are collected. These signals are then used to demonstrate that physicians were actively involved in the session.

But involvement is not the same as understanding.

A physician can participate without processing the content deeply. They can respond to a poll instinctively, ask a question out of curiosity, or rate a session highly because the speaker was engaging. None of these actions confirms that knowledge was absorbed or retained.

What these metrics actually measure is attention, not comprehension.

The danger is not that engagement is irrelevant. The danger is that it is overvalued. It creates a narrative of success that feels credible but lacks substance. Leadership sees interaction and assumes impact, without questioning whether clinical understanding has improved.

This is how false confidence is built into CME learning outcomes.

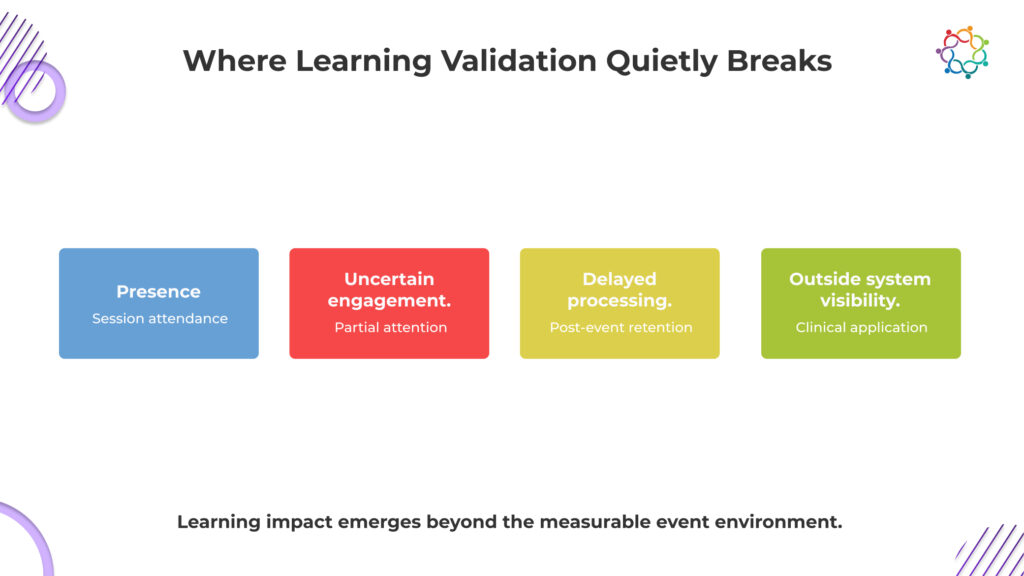

The breakdown does not happen in one place. It happens across multiple points, each weakening visibility into real learning outcomes.

Attendance records physical or virtual presence, not attention. Physicians may be distracted, multitasking, or attending only for credits. The system assumes focus where none may exist, turning passive presence into perceived participation without confirming any real cognitive involvement.

Understanding rarely occurs in real time. Physicians often process and connect information later, when faced with relevant cases. By then, the event has ended, and measurement is complete, leaving actual knowledge retention disconnected from the moment it was supposed to be captured.

Learning only proves its value when it influences real clinical decisions. These decisions happen in patient care settings, far removed from the event. This distance makes it difficult to trace whether a specific session actually shaped how a physician chooses treatments.

The system measures learning at the exact moment it is least visible. Cognitive change happens later, in fragments, across contexts. What is captured is activity during the session, while what truly matters unfolds outside measurable boundaries, beyond the reach of event data.

Systems capture what happens during the session. Learning often happens outside it. This disconnect ensures that true educational impact remains largely unobserved.

Learning does not happen on the timeline of an event. Measurement does.

Physicians rarely absorb and apply new knowledge instantly. They reflect on it, compare it with existing experience, and only integrate it when a relevant clinical situation arises. That moment may come days or weeks later.

By then, the event is no longer part of the equation.

This is where validation breaks down. Even if learning occurs, it cannot be confidently linked back to the session. Attribution is lost. The system cannot prove whether a clinical decision was influenced by the event, prior knowledge, or another source.

So impact becomes untraceable.

In CME events, outcomes are expected immediately, but real learning appears later, outside measurable boundaries. What gets captured is participation. What actually matters emerges too late to be connected.

That is why learning remains unproven, even when it exists.

This is the uncomfortable conclusion. CME systems reward exposure because it is measurable, not because it is meaningful.

Attendance, credit completion, and participation metrics dominate reporting frameworks. These indicators show that physicians had access to information. They confirm delivery, not impact.

Exposure is easy to quantify. Knowledge is not.

A physician can attend multiple sessions, earn credits, and still show minimal change in clinical understanding. The system records success, even when learning is uncertain.

This creates a structural bias.

Programs are evaluated based on what can be measured. As a result, they prioritize metrics that are easy to capture rather than those that reflect true educational value.

None of these metrics confirms learning.

Yet they are treated as indicators of success.

This is not a measurement gap. It is a measurement mismatch.

The system validates educational delivery. It does not validate educational impact.

And until that distinction is addressed, the Learning Validation Gap will continue to exist.

The issue persists because it is inherently difficult to solve.

Learning is a cognitive process. It cannot be directly observed. Systems can track behavior, but they cannot access how information is interpreted or understood.

This makes learning fundamentally hard to measure.

Organizations default to proxies because direct validation is not feasible. Over time, these proxies become accepted as reality.

The real value of education lies in its impact on patient care. But clinical decisions happen far from the event environment. They are influenced by multiple factors, making it difficult to isolate the effect of a single session.

This creates attribution challenges.

Organizations continue operating within this limitation because there is no easy alternative. They rely on participation metrics, knowing they are incomplete.

That is the blind spot.

Not because the problem is ignored, but because it is difficult to address.

And so, physician learning events continue to be evaluated based on what is visible, not what is meaningful.

CME programs are essential. They create structured opportunities for physicians to stay informed, exchange knowledge, and engage with evolving clinical practices.

But participation metrics cannot define their success.

Attendance proves presence. Engagement signals show interaction. Neither confirms that learning occurred.

And without learning, there is no guarantee of improved clinical decision-making.

That is the real risk.

Unvalidated learning leads to uncertain outcomes. In healthcare, uncertainty in knowledge can translate into inconsistency in treatment. That is not just a measurement issue. It is a clinical one.

The Learning Validation Gap is not about better reporting. It is about recognizing that current systems cannot prove what truly matters.

Here is the line that should stay with you:

CME programs can prove that doctors attended. They cannot prove anything changed in how those doctors think or treat patients.

Until that changes, effectiveness will remain assumed, not validated.

Samaaro is an AI-powered event marketing platform that enables marketing teams to turn events into a measurable growth channel by planning, promoting, executing, and measuring their business impact.

© 2026 — Samaaro. All Rights Reserved.