
Bottom Line:

The event is behind you; what you do with what it taught you is the whole point.

How did it go? Fine. Great turnout. The team is tired. Same answer you got last quarter. If that is your post-event debrief, you are not learning from your events. You are just surviving them.

The pattern is remarkably consistent. The debrief happens two or three days after the event closes. It runs about forty-five minutes. It covers what went wrong with catering, what went right with attendance, and ends with a vague plan to “do a few things differently next time.” No documented output. No decisions that actually change the next event plan. The team leaves feeling like something was reviewed. The programme carries forward the same gaps it started with.

When post-event reviews do not produce structured, specific, documented insights, every event in the programme starts from roughly the same baseline. Mistakes repeat because nobody named the root cause. Wins disappear because nobody wrote down what produced them. Budget decisions for the following quarter are made on instinct rather than evidence.

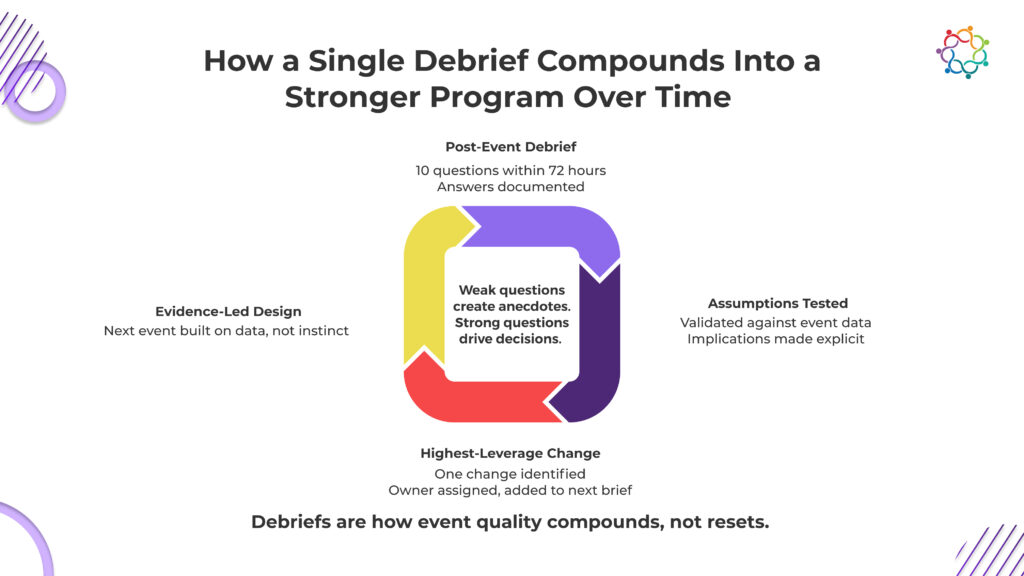

This post is written for marketing leaders running event programmes across multiple quarters, not individual events in isolation. For that audience, the post-event debrief is not a retrospective. It is the primary mechanism for compounding programme quality over time. The post-event debrief questions your team asks determine what your programme learns. Weak questions produce anecdotes. Strong ones produce decisions.

The ten questions below cover five strategic dimensions, two questions per dimension. Each one exposes something a surface-level debrief conversation will miss.

Most post-event debriefs open with logistics and close with a vague nod toward pipeline numbers that are not yet available. The teams whose programmes improve quarter over quarter invert this. They start with revenue and pipeline, and use those answers to frame every operational discussion that follows.

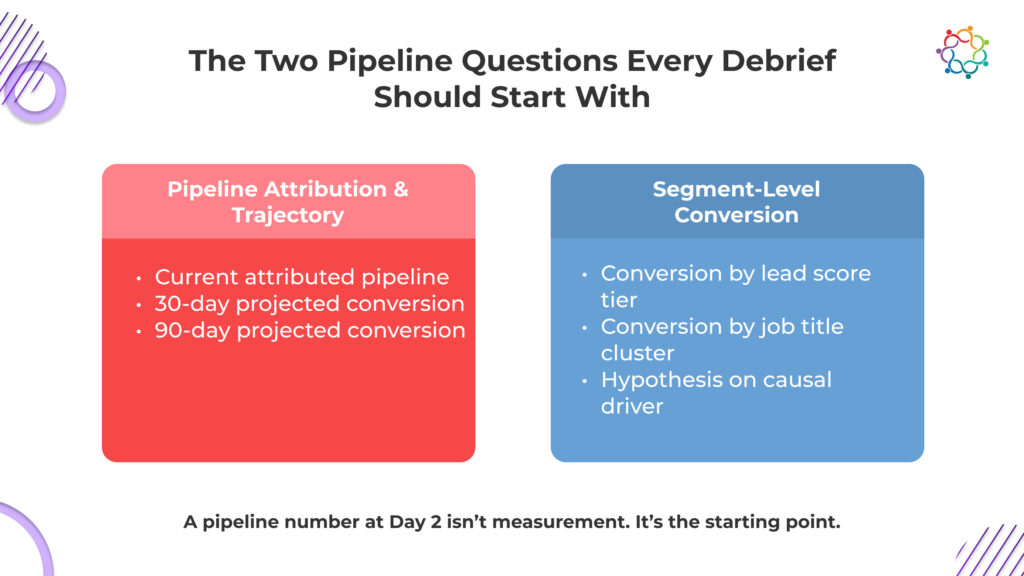

Question 1: What is our current pipeline attribution number from this event, and what is our projection at 30 and 90 days?

A single pipeline figure captured two days post-event is an incomplete picture. What marketing leaders actually need is a trajectory: what is attributed now, what is projected to convert based on lead score and deal stage, and what is the delta between those figures and the event investment. This question also forces the team to have a pipeline methodology before the next event rather than scrambling to construct one after it.

A strong answer includes a specific attributed number, a named person responsible for tracking the 30- and 90-day figures, and a clear explanation of how attribution is being assigned.
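The trajectory in Question 1 can be sketched as a small calculation. This is a minimal illustration, not a prescribed attribution model: the stage names, the win percentages, and the `Opportunity` fields are hypothetical assumptions that a team would replace with its own CRM definitions.

```python
from dataclasses import dataclass

# Hypothetical win probabilities (in percent) per deal stage; not from any real CRM.
STAGE_WIN_PCT = {"qualified": 10, "proposal": 40, "negotiation": 70}


@dataclass
class Opportunity:
    name: str
    amount: int        # deal value in your reporting currency
    stage: str         # must be a key of STAGE_WIN_PCT
    attributed: bool   # already counted as event-attributed pipeline?


def pipeline_trajectory(opportunities, event_cost):
    """Summarise attributed pipeline, a stage-weighted projection, and the gap to cost."""
    attributed_now = sum(o.amount for o in opportunities if o.attributed)
    projected = sum(o.amount * STAGE_WIN_PCT[o.stage] / 100 for o in opportunities)
    return {
        "attributed_now": attributed_now,
        "projected_weighted": projected,
        "projected_minus_cost": projected - event_cost,
    }


# Illustrative numbers only.
opportunities = [
    Opportunity("Deal A", 50_000, "proposal", True),
    Opportunity("Deal B", 20_000, "qualified", False),
    Opportunity("Deal C", 30_000, "negotiation", True),
]
summary = pipeline_trajectory(opportunities, event_cost=25_000)
```

Running the same function on the 30- and 90-day snapshots, with whatever weighting the team has agreed on, turns three isolated figures into a trajectory that can be compared against the event investment.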

Question 2: Which lead segments converted to opportunity at the highest rate, and why?

Aggregate conversion rates hide the insight. Segment-level data tells you which audience profile, session type, or engagement pattern produced the most valuable attendees. That answer directly shapes audience targeting and content programming for the next event.

A strong answer breaks conversion rates out by lead score tier, job title cluster, or session attendance pattern, and includes a hypothesis about what caused the difference.
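A segment-level breakdown of this kind is straightforward once leads are exported with a segment label. The sketch below assumes each lead is a dictionary with a hypothetical `score_tier` field and a `became_opportunity` flag; both names are illustrative, and the same function works for job title clusters or session patterns by changing the key.

```python
from collections import defaultdict


def conversion_by_segment(leads, segment_key):
    """Return (segment, converted, total, rate) rows, highest conversion rate first."""
    counts = defaultdict(lambda: [0, 0])  # segment -> [converted, total]
    for lead in leads:
        bucket = counts[lead[segment_key]]
        bucket[1] += 1
        if lead["became_opportunity"]:
            bucket[0] += 1
    rows = [(seg, conv, total, conv / total) for seg, (conv, total) in counts.items()]
    return sorted(rows, key=lambda row: row[3], reverse=True)


# Illustrative data only.
leads = [
    {"score_tier": "A", "became_opportunity": True},
    {"score_tier": "A", "became_opportunity": True},
    {"score_tier": "A", "became_opportunity": False},
    {"score_tier": "B", "became_opportunity": True},
    {"score_tier": "B", "became_opportunity": False},
    {"score_tier": "B", "became_opportunity": False},
    {"score_tier": "B", "became_opportunity": False},
]
rows = conversion_by_segment(leads, "score_tier")
```

The table only shows where the difference is. The hypothesis about why it exists still has to come from the people in the room.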

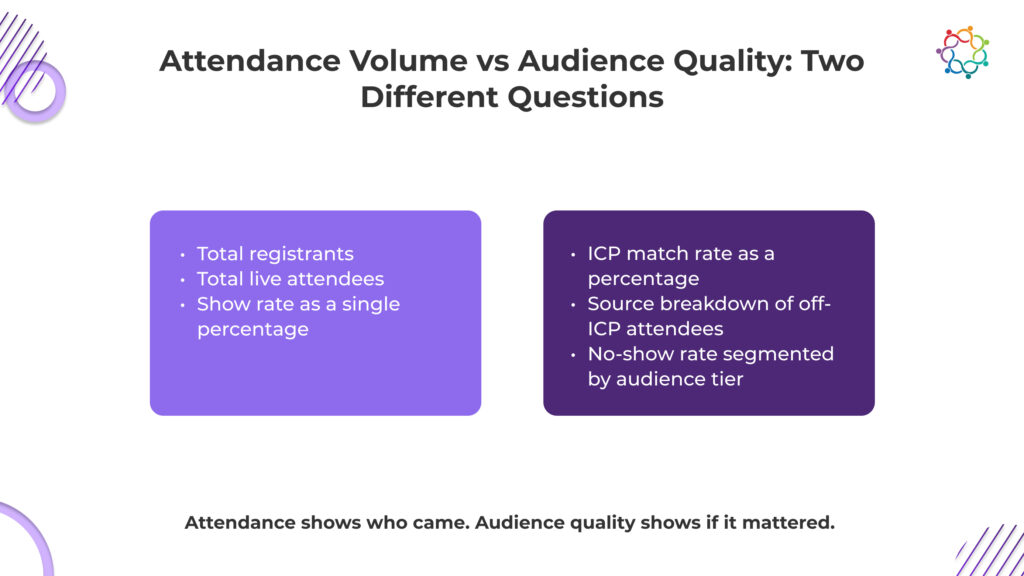

Registration counts and attendance numbers are what most event teams report because they are easy to pull. They are also among the least useful metrics for evaluating whether the event served the programme’s actual purpose. These two questions shift the conversation from volume to quality.

Question 3: What percentage of attendees matched our defined ICP, and where did the gap come from?

If 40 per cent of attendees were outside the ICP, that is not just a targeting problem. It is a signal about which promotional channels are pulling the wrong audience, whether the event positioning is attracting the right people, and whether the registration process has any qualifying friction. Each gap source has a different fix, which is why identifying the source matters more than just noting the percentage.

A strong answer gives the ICP match rate as a number, breaks down where off-ICP attendees came from, and includes a specific hypothesis about the channel or positioning adjustment needed.
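As a rough sketch, the match rate and the source of the gap can come out of the same pass over the attendee list. The `is_icp` and `channel` fields here are assumed names, and how a team decides whether an attendee matches the ICP is outside the scope of the example.

```python
from collections import Counter


def icp_report(attendees):
    """Return the ICP match rate and off-ICP attendee counts per acquisition channel."""
    total = len(attendees)
    matched = sum(1 for a in attendees if a["is_icp"])
    off_icp = Counter(a["channel"] for a in attendees if not a["is_icp"])
    return {
        "match_rate": matched / total if total else 0.0,
        "off_icp_by_channel": off_icp.most_common(),  # largest gap source first
    }


# Illustrative data only; channel names are hypothetical.
attendees = [
    {"is_icp": True, "channel": "email"},
    {"is_icp": True, "channel": "email"},
    {"is_icp": True, "channel": "sales_invite"},
    {"is_icp": True, "channel": "sales_invite"},
    {"is_icp": True, "channel": "paid_social"},
    {"is_icp": False, "channel": "paid_social"},
    {"is_icp": False, "channel": "paid_social"},
    {"is_icp": False, "channel": "partner_list"},
]
report = icp_report(attendees)
```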

Question 4: Who did not attend that we expected, and what does that pattern tell us?

No-show analysis almost never happens in post-event debriefs. It almost always should. If a specific title, seniority level, or account segment registered at high rates but attended at low rates, that gap is telling you something about timing, format, topic relevance, or what else was happening in the market that week. The next invitation strategy needs to address it.

A strong answer segments no-show rates by audience tier, names a hypothesis about the primary driver, and proposes a specific change to the pre-event communication or format.
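One way to make the no-show pattern visible is to compare each segment against the overall no-show rate and flag the ones that sit above it. The sketch below assumes a registrant export with hypothetical `segment` and `attended` fields; the segment labels are illustrative.

```python
def no_show_flags(registrants):
    """Return the overall no-show rate and the segments whose rate exceeds it."""
    overall = sum(1 for r in registrants if not r["attended"]) / len(registrants)
    by_segment = {}
    for r in registrants:
        seg = by_segment.setdefault(r["segment"], {"registered": 0, "no_shows": 0})
        seg["registered"] += 1
        if not r["attended"]:
            seg["no_shows"] += 1
    flagged = {
        name: s["no_shows"] / s["registered"]
        for name, s in by_segment.items()
        if s["no_shows"] / s["registered"] > overall
    }
    return overall, flagged


# Illustrative data: 4 VP registrants (3 no-shows), 6 managers (1 no-show).
registrants = (
    [{"segment": "VP", "attended": False}] * 3
    + [{"segment": "VP", "attended": True}]
    + [{"segment": "Manager", "attended": False}]
    + [{"segment": "Manager", "attended": True}] * 5
)
overall_rate, flagged_segments = no_show_flags(registrants)
```

A flagged segment is a prompt for the hypothesis, not the hypothesis itself: the numbers show who stayed away, and the debrief has to supply the likely reason.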

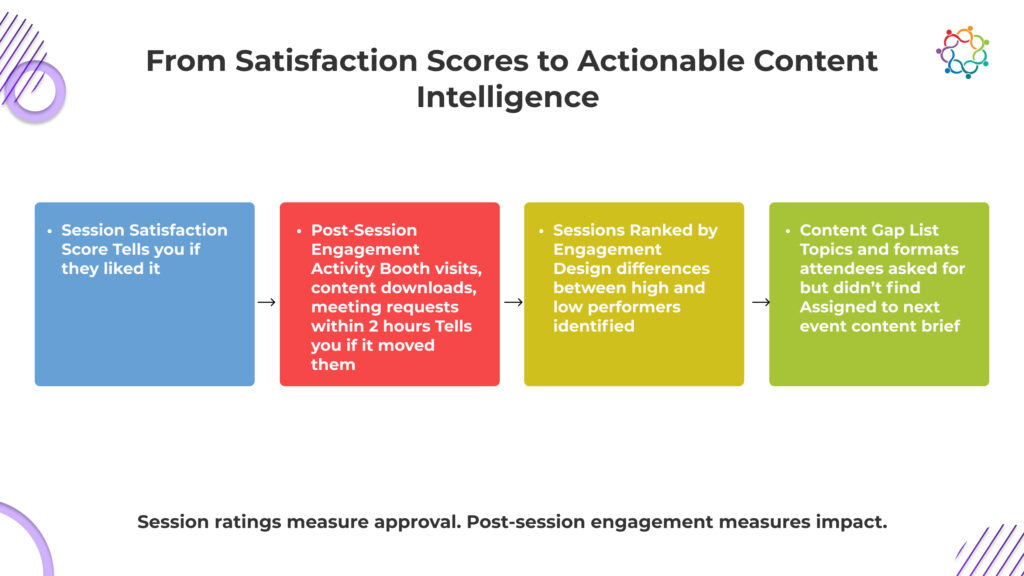

Post-event satisfaction surveys that ask attendees to rate sessions on a scale of one to five tell you whether people liked a session. They do not tell you whether it changed their thinking, moved them closer to a decision, or produced any downstream action. These two questions go further.

Question 5: Which sessions produced the highest post-session engagement, and what did those sessions have in common?

Post-session engagement, defined as booth visits, conversation requests, content downloads, or follow-up meeting bookings within two hours of a session closing, is a meaningfully stronger signal of session quality than a satisfaction score. If three sessions consistently produced action and two did not, the design difference between them is the programming insight for the next event.

A strong answer ranks sessions by post-session engagement activity, documents a hypothesis about what drove the difference, and translates that into a specific programming recommendation.
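The two-hour definition above translates into a simple time-window count. The sketch below is one possible reading, under stated assumptions: an action is credited to every session the attendee was in if it falls within two hours of that session closing. The data shapes (`session_end`, `attended`, `actions`) are hypothetical and would depend on what the event platform exports.

```python
from collections import Counter
from datetime import datetime, timedelta

ENGAGEMENT_WINDOW = timedelta(hours=2)  # window taken from the definition above


def rank_sessions(session_end, attended, actions):
    """
    session_end: {session_id: datetime the session closed}
    attended:    {attendee_id: [session_id, ...]}
    actions:     [(attendee_id, datetime of booth visit, download, booking, ...)]
    Returns [(session_id, action_count), ...], most post-session engagement first.
    """
    counts = Counter({sid: 0 for sid in session_end})  # keep sessions with no actions
    for attendee, when in actions:
        for sid in attended.get(attendee, []):
            end = session_end[sid]
            if end <= when <= end + ENGAGEMENT_WINDOW:
                counts[sid] += 1
    return counts.most_common()


# Illustrative data only.
day = datetime(2025, 5, 1)
session_end = {"keynote": day.replace(hour=10), "workshop": day.replace(hour=14)}
attended = {"a1": ["keynote"], "a2": ["keynote", "workshop"], "a3": ["workshop"]}
actions = [
    ("a1", day.replace(hour=10, minute=30)),
    ("a2", day.replace(hour=11, minute=15)),
    ("a2", day.replace(hour=15)),
    ("a3", day.replace(hour=17, minute=30)),  # outside the two-hour window
]
ranking = rank_sessions(session_end, attended, actions)
```

Raw counts favour large sessions, so a team comparing a keynote with a small workshop may prefer to divide each count by session attendance before ranking.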

Question 6: What content did attendees ask for that we did not have?

This is a gap question rather than a performance question, and it is answerable from memory in the debrief room in a way that post-event sequence data never is. Sales reps remember what prospects asked for during booth conversations. That information is available right now and gone within a week. The answer directly shapes content production priorities before the next event.

A strong answer lists the content types or topics that came up repeatedly but were unavailable, and assigns a content brief to close the highest-priority gap.

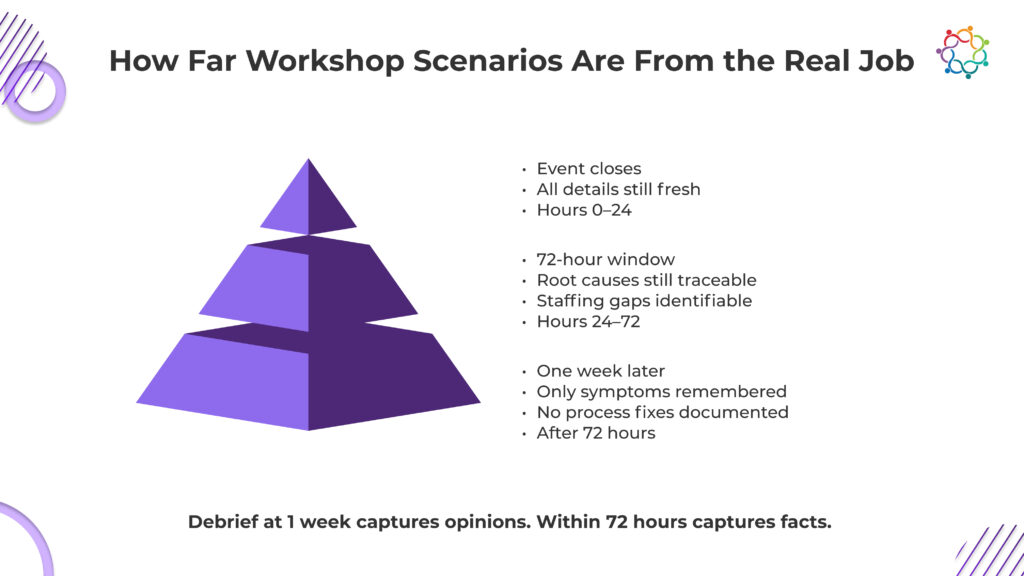

Operational debrief insights have a 72-hour shelf life. The specific memory of what broke in the check-in flow, which vendor did not deliver on scope, and where the run sheet failed fades fast once the team returns to normal workload. These questions need to be asked now, not at the retrospective scheduled for three weeks later.

Question 7: Where did the operational plan break down, and what was the root cause rather than the symptom?

Most post-event reviews catalogue symptoms. The badge printer jammed. The AV ran late. The catering ran short. Root cause analysis asks what planning or vendor management decision created the conditions for each failure. Fixing symptoms produces marginal improvement. Fixing root causes removes the failure mode from future events entirely.

A strong answer documents the top three operational failures with a named root cause for each, and adds a specific process change or vendor requirement to the planning checklist.

Question 8: Where did team members exceed their role, and where were the coverage gaps?

Event execution surfaces capability and capacity gaps that a normal workflow never reveals. The team member who covered three roles because a vendor contact was unreachable is showing you a single point of failure in the staffing model. The person who managed an unexpected speaker cancellation without escalating is showing you a capability worth developing deliberately.

A strong answer names both the exceptional performances and the coverage gaps, recommends a staffing adjustment for the next event of similar scale, and identifies any training or process needs.

A marketing leader running a single event asks what worked and what did not. A marketing leader running a quarterly programme asks what this event taught about programme strategy and what decision it should change. These are different questions.

Question 9: What did this event confirm or challenge about our core programme assumptions?

Every event programme runs on assumptions about audience, format, content, channel, and investment level. Those assumptions are rarely made explicit, which means they are rarely tested. This question forces the team to name the assumption this event either validated or contradicted and document it so the programme strategy evolves on evidence rather than habit.

A strong answer names one to three assumptions, delivers a clear confirmed or challenged verdict for each based on specific event data, and records the programme-level implication for next quarter’s planning.

Question 10: If we ran this exact event again with the same investment, what is the single highest-leverage change we would make?

Open-ended improvement lists produce ten items of unequal value that nobody prioritises. This question forces one answer, which means the ranking work happens in the debrief room rather than getting deferred indefinitely. The answer goes to the top of the next event brief.

A strong answer names one specific change with a rationale grounded in event data and assigns a named owner before the meeting closes.

The most common failure mode for a structured post-event debrief is not the quality of the questions. It is the absence of a documentation protocol that converts answers into decisions.

Four things need to be agreed before the meeting opens. Schedule the debrief within 72 hours: on the same day the team is too exhausted, and a week later the operational detail is gone. Assign one person as a dedicated note-taker whose only job is documentation while everyone else answers. Use a pre-built template that maps directly to the ten questions, with fields for the answer, the insight behind it, the decision it produces, and the named owner. Close every debrief with a five-minute read-back of documented decisions and owners. If a question produced an insight but no decision, name the decision still outstanding and who owns it before the meeting ends.
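The template and the read-back can be as simple as one record per question. The sketch below is an illustration of that structure, not a required format: the `DebriefEntry` class and the `read_back` helper are hypothetical names, and the four fields mirror the ones listed above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DebriefEntry:
    question: int                   # 1 to 10, matching the questions in this post
    answer: str
    insight: str
    decision: Optional[str] = None  # left empty until the team commits to one
    owner: Optional[str] = None     # named person, not a team


def read_back(entries):
    """Split entries into documented decisions and items still outstanding."""
    decided = [e for e in entries if e.decision and e.owner]
    outstanding = [e for e in entries if not (e.decision and e.owner)]
    return decided, outstanding


# Illustrative entries only.
entries = [
    DebriefEntry(3, "ICP match rate was 62%", "Paid social pulls off-ICP sign-ups",
                 decision="Add a qualifying field to the registration form", owner="Priya"),
    DebriefEntry(6, "Prospects asked for pricing comparisons", "No comparison asset exists"),
]
decided, outstanding = read_back(entries)
```

Anything left in `outstanding` at the end of the read-back is the list of decisions that still need a name next to them before the meeting closes.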

A debrief that ends without a written list of named decisions and owners has not finished yet.

Marketing leaders running multi-quarter event programmes are not just executing individual events. They are building institutional knowledge about what works for their specific audience, format, and market. The post-event debrief is the only structured mechanism for that knowledge to accumulate rather than reset after every event closes.

Look at the last three event debriefs your team ran. Count the decisions they produced that directly changed something in the next event plan. If that number is lower than five, the debrief is not doing its job.

The event is behind you. What you do with what it taught you is the whole point.

The ten questions work. Answering them across six events a year is where the manual approach breaks down: the data for Questions 1 through 5 lives in different systems, and pulling it together for each debrief takes longer than the meeting itself. Samaaro puts pipeline attribution, audience quality, session engagement, and lead segmentation into one reporting layer, so the debrief starts with answers instead of spreadsheets. See how it works.
