

Bottom Line:

A full room is easy, a changed team is the point, and most workshops are designed for the former while hoping for the latter.

The slides were polished. The room was full. And by Thursday, nobody could tell you what changed. That is not a workshop. That is a presentation with coffee breaks.

Most B2B organizations know this at some level. The internal training that everyone attended and nobody applied. The client workshop that produced three pages of flip-chart notes and zero changed behaviour. The onboarding session that covered everything and transferred nothing. The uncomfortable truth is that the majority of workshops, both internal and client-facing, are structurally identical to presentations. Someone stands at the front, content moves in one direction, attendees take notes that they will not open again, and the organization marks the box.

The cost is not just wasted time. When workshops fail to produce behavioural change, the downstream effects show up in sales cycles that do not improve, onboarding cohorts that repeat the same mistakes quarter after quarter, and client engagements that plateau because the knowledge transfer never actually happened.

This is a direct comparison between B2B workshop best practices that produce measurable change and the patterns most organizations default to when time, design expertise, or honest self-assessment is limited.

Before a single person walks into the room, one decision determines whether the workshop produces results or just produces attendance. That decision is how the objective is written.

What most B2B companies run: Objectives that describe content delivery. “Participants will understand the new positioning framework.” “Attendees will learn the five-stage sales methodology.” These are coverage goals. They tell the facilitator what to present, not what participants should be able to do differently when they leave. When the objective is coverage, every design decision that follows optimizes for coverage too. Room layout, activity design, timing, facilitation style, all of it serves the goal of getting through the material rather than changing what people do with it.

What great looks like: Objectives written as specific behavioural outcomes with a post-workshop application anchor. “By the end of this session, each rep will have built a discovery call framework for their two highest-priority accounts using the new methodology.” “Each participant leaves with a documented 30-day implementation plan for their team.” The outcome is observable, specific, and tied to an action that happens after the room clears, not during it.

This is the distinction that separates a workshop from a logistics exercise. If the objective reads like a course description, you have designed a lecture. Rewrite it as a behaviour, and every other design decision changes with it.

There is a person most facilitators design for: an engaged, mid-level professional with moderate background knowledge and a reasonable amount of time. That person almost never shows up. The actual room has a clinical specialist who has run this process for twelve years, a new hire two weeks into the role, and a regional manager who joined because attendance was mandatory and has four unread messages from her VP waiting.

What most B2B companies run: A single version of the workshop deployed across every cohort, geography, and experience level. Pre-work is either nonexistent or a PDF nobody opens. The facilitator reads the room on the day and adapts based on energy rather than diagnosed need.

What great looks like: Real data collected before the session opens. Before a SaaS enablement workshop for customer success managers, the facilitator sends two questions: “What is the one onboarding challenge you have failed to solve in the last 90 days?” and “What is your current experience level with the analytics module: beginner, intermediate, or advanced?” The answers reshape breakout group composition entirely and determine whether the analytics section runs as a walkthrough or a troubleshooting exercise. Design choices follow data, not assumptions.
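As a rough illustration of letting pre-work answers drive design, the sketch below groups hypothetical responses by experience level and picks a format for the analytics section. The participant names, the sample answers, and the "at least half are beginners" rule are all assumptions made for the example, not a prescribed method.

```python
from collections import defaultdict

# Hypothetical pre-work responses: (name, experience level, unsolved challenge).
responses = [
    ("Ana", "beginner", "getting admins to finish setup"),
    ("Ben", "advanced", "low dashboard adoption after week two"),
    ("Chi", "intermediate", "handing off from sales to onboarding"),
    ("Dev", "beginner", "explaining report filters"),
]


def group_by_level(responses):
    """Collect participant names under the experience level they reported."""
    groups = defaultdict(list)
    for name, level, _challenge in responses:
        groups[level].append(name)
    return dict(groups)


def analytics_format(responses):
    """Assumed rule: run a walkthrough if at least half the room are beginners,
    otherwise run the section as a troubleshooting exercise."""
    beginners = sum(1 for _name, level, _challenge in responses if level == "beginner")
    return "walkthrough" if beginners * 2 >= len(responses) else "troubleshooting"


groups = group_by_level(responses)
section_format = analytics_format(responses)
print(groups)
print(section_format)
```

Whether breakout groups should be built from similar levels or deliberately mixed is a facilitation call; the point is only that the decision is made from collected answers rather than a guess.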

In medtech and technically complex SaaS environments in particular, the gap between a novice and an experienced participant is not a matter of degree. It is a different knowledge domain. A workshop that pitches to the middle loses both ends and changes neither.

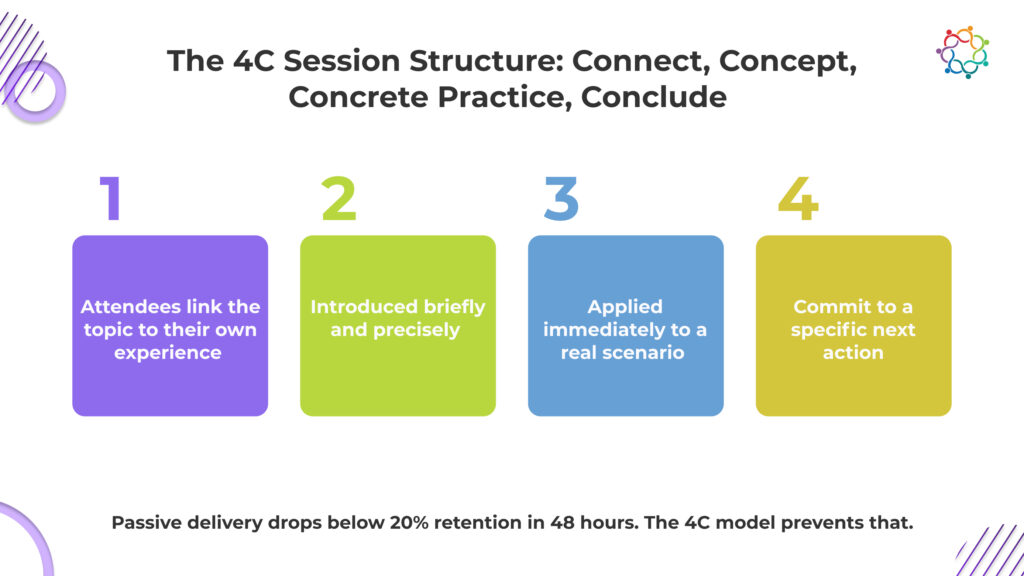

The default B2B workshop structure is inherited from the conference breakout session. Forty-five minutes of presentation, ten minutes of Q&A, five minutes of wrap-up. It is easy to fill, predictable to run, and almost never produces lasting change. Retention from passive information delivery drops below 20 percent within 48 hours. The structure is optimized for the presenter’s comfort, not the participant’s learning.

What most B2B companies run: A linear information sequence where participation is limited to questions at the end or a single polling moment inserted to prove the session was interactive. Attendees are passive for 80 percent of the time and are expected to do something different with the information afterwards, despite never having practised using it.

What great looks like: A session built on the 4C framework (Connect, Concept, Concrete Practice, and Conclude), drawn from Sharon Bowman’s Training from the BACK of the Room. Participants connect the topic to their own experience first. The concept is introduced briefly. Concrete practice puts it to work in a realistic scenario immediately.

The conclusion is a commitment to a specific next action, not a summary slide. In SaaS onboarding, concrete practice is where feature adoption actually happens. In consulting workshops, the connect step is where client-specific context surfaces problems the facilitator never knew to design for. Skipping the step that matters in either context is where workshop ROI disappears.

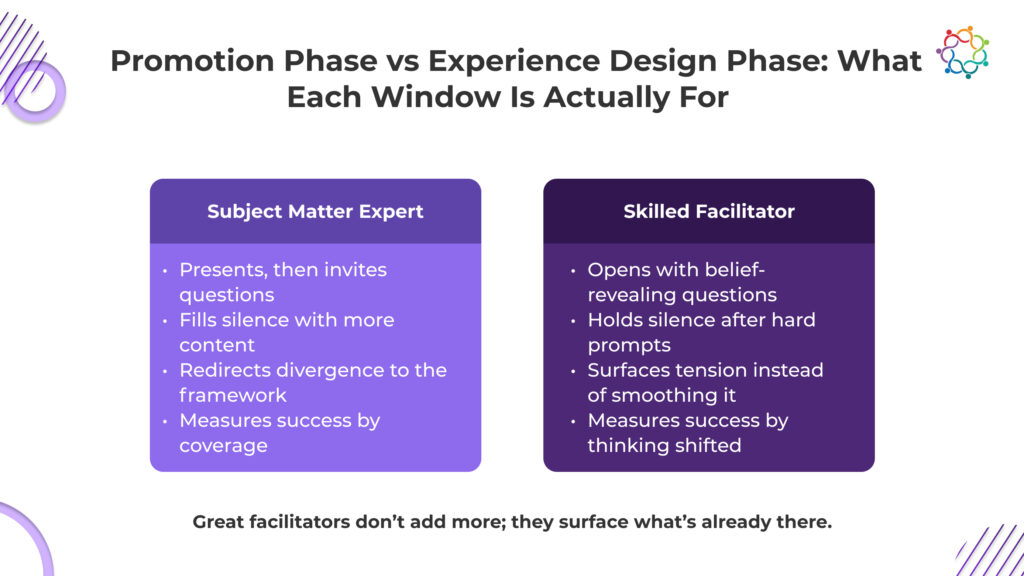

Subject matter expertise and facilitation skills are not the same thing. The person who built the content and knows the most about the product is frequently the worst person to facilitate the session, because expertise pulls toward telling rather than drawing out. The best facilitators in medtech, SaaS, and consulting contexts are not the most knowledgeable people in the room. They are the most skilled at surfacing and organizing the knowledge already there.

What most B2B companies run: The subject matter expert facilitates because they built the content. Their pattern is presentation with interruptions for questions. When the room goes quiet, they fill it with more content rather than a better question. Divergent perspectives get acknowledged briefly and redirected back to the framework.

What great looks like: A facilitator who operates with a question architecture prepared in advance. Opening questions that surface existing beliefs and experience. Bridging questions that connect new concepts to real participant scenarios. Challenge questions that expose assumptions without triggering defensiveness. Synthesis questions that help the group build shared conclusions rather than receive them from the front of the room.

The behavioural markers that distinguish great facilitation from adequate facilitation are specific: holding silence after a hard question rather than rescuing the room, naming group tension rather than smoothing it over, redirecting questions back to the group before answering them, and connecting participant contributions to the workshop objective in real time. Your facilitation quality determines whether participants leave with your framework memorized or with their own thinking sharpened by it. Only one of those produces lasting change.

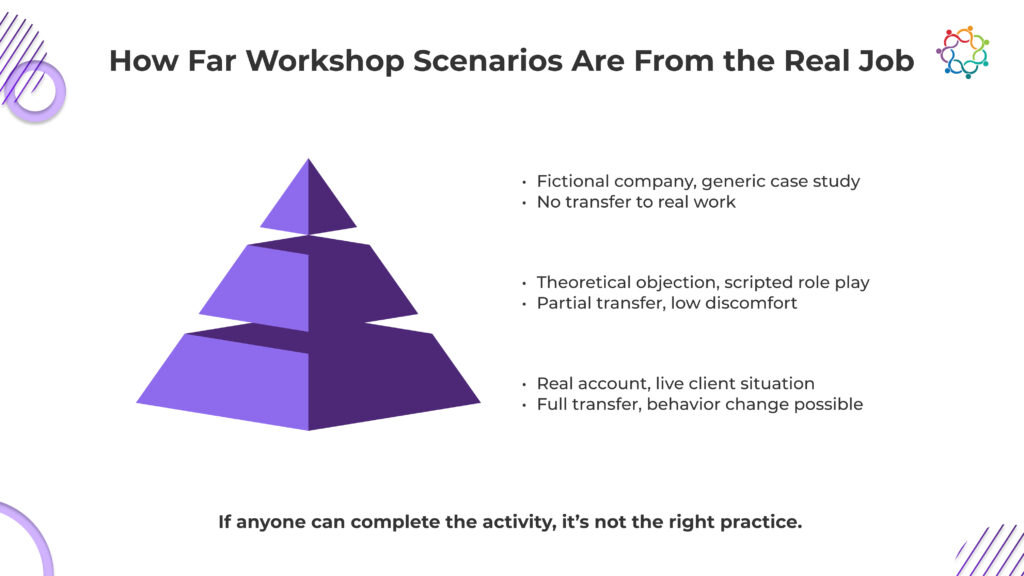

The most direct determinant of whether behaviour actually changes after a workshop is whether the practice scenario resembles the actual job. Most do not come close.

What most B2B companies run: Generic case studies featuring fictional companies. Role plays using theoretical objections nobody in the room has actually heard. Group discussion prompts asking what participants would do in situations designed to have clean, comfortable answers. The activity feels productive. The debrief is collegial. And back at the desk on Monday, nothing transfers because the workshop scenario never closed the gap to the real work.

What great looks like: Practice built from a real organizational context with the rough edges intact. In a medtech workshop, the scenario comes from an actual clinical conversation challenge the sales team has been struggling with. In a SaaS onboarding workshop, participants configure their own live accounts rather than a demo environment. In a consulting firm workshop, the case study is a lightly anonymized version of a current client situation the team is navigating right now. The discomfort of realism is not a design flaw. It is the mechanism through which learning transfers.

If your workshop activity could be completed competently by someone who has never done this job, it is not practising the right thing.

The most common post-workshop outcome in B2B organizations is a burst of good intentions that dissolves within two weeks. Not because participants did not value the session. Because no structure exists to sustain the behaviour the workshop started.

What most B2B companies run: A summary slide. An action items slide nobody photographs. Participants return to their roles carrying new knowledge and no reinforcement mechanism. Managers are not told what commitments were made. No follow-up is scheduled. Within ten business days, the environment that produced the original behaviour reasserts itself, and everything reverts.

What great looks like: The final fifteen minutes are dedicated to a structured commitment protocol. Each participant documents one specific behaviour change they will implement in the next seven days, names an accountability partner in the room, and books a fifteen-minute check-in before leaving. A post-workshop summary goes to the participant and their direct manager within 24 hours, covering the stated commitment. A 30-day follow-up touchpoint is scheduled before the session ends, whether a pulse survey, a group debrief call, or a manager reinforcement guide.
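The timings in that protocol can be written down as a small schedule. The sketch below is one possible way to do it, assuming a hypothetical `Commitment` record and a made-up workshop end time; the field names and the sample participant are illustrative only.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Commitment:
    """One participant's documented commitment (hypothetical field names)."""
    participant: str
    manager: str
    accountability_partner: str
    behaviour: str


def follow_up_schedule(workshop_end: datetime) -> dict:
    """Derive the three follow-up dates named in the protocol from the end time."""
    return {
        # Summary to participant and direct manager within 24 hours.
        "summary_due": workshop_end + timedelta(hours=24),
        # Behaviour change to be implemented within seven days.
        "behaviour_deadline": workshop_end + timedelta(days=7),
        # Pulse survey, debrief call, or manager guide at 30 days.
        "follow_up_touchpoint": workshop_end + timedelta(days=30),
    }


# Made-up example data.
commitment = Commitment(
    participant="Ana",
    manager="Ben",
    accountability_partner="Chi",
    behaviour="Open every discovery call with the new framework",
)
schedule = follow_up_schedule(datetime(2025, 3, 3, 16, 0))
for label, when in schedule.items():
    print(f"{commitment.participant}: {label} -> {when:%Y-%m-%d %H:%M}")
```

Putting the dates in one place before the room clears means the follow-up can be dropped into calendars during the session rather than left to memory.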

Behaviour change measured 30 days after the workshop is the only metric that tells you whether it worked. Most organizations never measure it.

The measure of a workshop is not attendance, satisfaction scores, or completion rates. It is whether the people who attended do something differently the following week because of what happened in that room.

Pull the last workshop your team ran. Apply the six comparisons above. Count how many landed in the “what great looks like” column and how many landed in the “what most B2B companies run” column. That number is your starting point.

A full room is easy. A changed team is the point.

The B2B Workshop Design Audit Checklist covers all six dimensions as a side-by-side scoring rubric with a total score guide and prioritized redesign recommendations.

If your team runs workshops where post-session behaviour change matters, Samaaro captures session-level engagement, feedback, and participant commitments in one system, so the 30-day follow-through that determines whether the workshop actually worked does not depend on spreadsheets and memory. See how it works.

Samaaro is an AI-powered event marketing platform that enables marketing teams to turn events into a measurable growth channel by planning, promoting, executing, and measuring their business impact.


© 2026 — Samaaro. All Rights Reserved.