Events require significant investment. Budgets cover venues, production, travel, staffing, and follow-up. Because of this cost, leadership expects clear financial justification. The pressure to validate spend often drives a search for a single definitive metric.

Attribution and ROI frequently appear together in executive reports. Revenue contribution, influenced pipeline, and return calculations are presented in the same discussions. Over time, the distinction between explaining impact and evaluating return becomes blurred.

The desire for a single-number answer reinforces this confusion. If an event influenced revenue, it is assumed to have delivered strong ROI. If ROI appears weak, the influence itself is questioned.

Attribution and ROI answer different questions, yet they are often treated as interchangeable. Collapsing them leads to misreading both.

Event attribution explains how an event influenced business outcomes. It evaluates the event’s contribution to pipeline progression, account engagement, and revenue movement across time.

The focus is influence. Attribution examines how participation affected decision confidence, stakeholder alignment, and opportunity acceleration. It does not assume the event created the opportunity. It assesses the role the event played in shaping what happened next.

This approach produces directional insight rather than a financial verdict. It identifies patterns of contribution and contextualizes event revenue contribution within broader sales cycle dynamics.

Attribution explains what role the event played in influencing outcomes. It provides context about contribution, not judgment about efficiency.

Event ROI evaluates whether the financial return generated by an event justifies its cost. It compares investment against measurable outcomes such as revenue or influenced pipeline value.

The focus is efficiency. ROI examines whether the resources allocated to the event produced proportional financial results. It is a tool for investment evaluation and budget prioritization.

Event ROI does not attempt to explain how influence occurred. It measures the outcome relative to expense. This makes it central to executive decision-making, especially when allocating limited resources across competing initiatives.

ROI evaluates whether the investment made sense in financial terms. It delivers a judgment about return, not an explanation of influence.
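To make the efficiency question concrete, here is a minimal Python sketch of the standard ROI ratio, (return - cost) / cost. The cost categories mirror the budget lines listed at the start of this piece. Every dollar figure is hypothetical, and `EventCosts` and `roi` are illustrative names, not part of any product. The sketch also shows how the answer shifts depending on whether "return" means closed revenue or influenced pipeline.

```python
from dataclasses import dataclass


@dataclass
class EventCosts:
    """Hypothetical event budget, using the cost categories named in the article."""
    venue: float
    production: float
    travel: float
    staffing: float
    follow_up: float

    def total(self) -> float:
        return self.venue + self.production + self.travel + self.staffing + self.follow_up


def roi(return_value: float, cost: float) -> float:
    """Standard ROI ratio: (return - cost) / cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (return_value - cost) / cost


costs = EventCosts(venue=40_000, production=25_000, travel=15_000, staffing=12_000, follow_up=8_000)
total_cost = costs.total()  # 100,000

# Same event, two definitions of "return" (both hypothetical figures).
closed_revenue = 120_000
influenced_pipeline = 450_000  # pipeline value is not revenue; some of it may never close

print(f"ROI on closed revenue: {roi(closed_revenue, total_cost):.0%}")          # ROI on closed revenue: 20%
print(f"ROI on influenced pipeline: {roi(influenced_pipeline, total_cost):.0%}")  # ROI on influenced pipeline: 350%
```

Neither number explains how the event influenced those deals. That remains the attribution question.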

Attribution and ROI measure fundamentally different realities. Influence happens before outcomes. Outcomes are the results that emerge after multiple factors interact. Confusing the two collapses explanation into judgment and misleads decision-making.

Events shape opportunity progression, stakeholder alignment, and decision confidence. These effects occur before any measurable revenue. Influence sets the conditions for outcomes but does not guarantee them.

Revenue outcomes rarely result from a single event. Sales calls, prior marketing exposure, competitor activity, and internal discussions all combine. Ignoring this multiplicity exaggerates the apparent power of any one event.

ROI measures financial return, but without understanding influence, the number is meaningless. A positive ROI may reflect external factors, not event contribution. A weak ROI may hide a strong influence still unfolding in long sales cycles.

Attribution explains what changed. ROI judges whether the cost was justified. Treating influence as efficiency or outcomes as explanation distorts the truth. If you evaluate events solely by ROI without attribution, you are blind to what truly drove results.

Attribution often precedes ROI because investment evaluation depends on understanding contribution. If leadership does not know how an event influenced revenue, ROI calculations risk misinterpretation.

Attribution clarifies whether revenue movement was meaningfully connected to event engagement. It identifies the scale and direction of event revenue contribution. This context informs realistic ROI expectations, especially in long sales cycles.

An event can demonstrate strong influence while showing weak short-term ROI. Revenue may still be developing. Conversely, a favorable ROI may reflect external factors unrelated to the event’s strategic impact.
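The timing effect can be illustrated with a small sketch, assuming a hypothetical event date, cost, and set of deals. The helper `roi_within_window` is invented for this example: it counts only revenue that closed within a chosen number of days after the event, and it credits the full value of each deal to the event, which is itself an attribution assumption.

```python
from datetime import date

EVENT_DATE = date(2024, 3, 1)
EVENT_COST = 100_000.0

# Hypothetical deals closed by accounts that engaged at the event: (close_date, amount).
# Crediting the full deal amount to the event is an attribution assumption, not a fact.
DEALS = [
    (date(2024, 4, 15), 30_000.0),
    (date(2024, 9, 10), 90_000.0),
    (date(2025, 1, 20), 150_000.0),
]


def roi_within_window(deals, event_date, cost, window_days):
    """ROI counting only revenue that closed within `window_days` after the event."""
    revenue = sum(
        amount
        for closed, amount in deals
        if 0 <= (closed - event_date).days <= window_days
    )
    return (revenue - cost) / cost


for window in (90, 365):
    print(f"{window}-day ROI: {roi_within_window(DEALS, EVENT_DATE, EVENT_COST, window):.0%}")
# 90-day ROI: -70%
# 365-day ROI: 170%
```

Nothing about the event changed between the two runs. Only the measurement window did.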

ROI is context-dependent. Attribution provides that context.

One common mistake is declaring an event successful or unsuccessful based on a single metric. High attributed revenue may be equated with strong ROI without examining cost efficiency. Low ROI may be interpreted as a lack of influence without analyzing the contribution.

Another error is over-crediting or under-crediting events. When attribution and ROI are blended, revenue outcomes may be assigned disproportionate weight relative to the event’s actual role.

A further misunderstanding occurs when events are compared to digital campaigns without contextual alignment. Different sales cycles and influence patterns require different evaluation logic.

These are structural misunderstandings, not reporting errors. Collapsing influence and efficiency into one measure distorts both.

Attribution and ROI answer different questions, yet many teams treat them as one. Attribution explains how an event influenced accounts, opportunities, and decision-making. ROI judges whether the financial return justifies the investment. Collapsing them erases context.

Attribution shows what an event changed. ROI shows what the event returned for its cost. Looking at ROI alone ignores how the change happened. Looking at attribution alone ignores whether the change was worth the investment.

Mature teams keep the distinction sharp. Attribution informs interpretation. ROI informs investment decisions. Confuse the two, and you misallocate budgets, misread impact, and risk making strategic decisions blind to reality.

In high-volume, short sales cycle environments, ROI often carries greater weight. Outcomes appear quickly, and efficiency comparisons across channels are feasible.

In high-value, long sales cycle environments, attribution may matter more initially. Influence unfolds over time, and early ROI calculations may not reflect the full revenue impact.

For tactical objectives focused on immediate acquisition, ROI may dominate. For strategic objectives centered on account development and progression, attribution context becomes critical.

Different business situations shift the emphasis. The distinction remains constant.

Event attribution and ROI are not interchangeable. Attribution measures influence, contribution, and how events shape decisions. ROI measures efficiency, return, and whether the investment was justified.

Treating one as a proxy for the other erases critical context. Decisions based on collapsed metrics risk overvaluing events that shaped little or undervaluing events that shaped heavily but returned slowly.

Misinterpretation here is not minor; it drives misallocated budgets, flawed prioritization, and strategic blind spots. Influence cannot be reduced to dollars. Return cannot be inferred from directional contribution.

Keep the distinction clear: attribution explains. ROI judges. Collapsing them destroys clarity.

Events create influence, not transactions. They shape conversations, strengthen relationships, and affect decision-making confidence across extended sales cycles. Unlike a form submission or purchase click, this influence cannot be directly observed as a single measurable action.

Because influence is diffuse and time-based, it leaves incomplete signals. Revenue outcomes appear later. Stakeholders engage in different ways. Sales interactions overlap with marketing interactions. The data that remains is partial and fragmented.

Event attribution models exist to interpret those incomplete signals. They provide structure where direct visibility is impossible.

An attribution model is a structured assumption about how influence should be interpreted. It defines how credit is distributed across interactions and time.

Models are necessary because influence cannot be measured directly. They are imperfect because influence does not follow clean rules.

Before examining event attribution models, a boundary must be set. Attribution does not prove causation.

Events operate in environments shaped by prior engagement, active pipeline, competitive dynamics, and sales activity. When revenue follows an event, that sequence reflects correlation. It does not confirm that the event alone caused the outcome.

Event attribution frameworks organize observable interactions to estimate influence. They do not isolate events in controlled conditions.

Despite this limitation, models remain useful. They provide directional insight into how engagement aligns with revenue movement. Their value lies in interpretation, not proof.

Understanding this distinction prevents overconfidence in any single model’s output.

First-touch event attribution assigns credit to the earliest recorded interaction involving an event. If an event represents the first measurable engagement, it receives full credit for subsequent revenue.

Teams are drawn to this model because it is clear and simple. It highlights discovery and initial exposure. In environments focused on awareness or early-stage pipeline creation, it can offer straightforward directional insight.

This model can make sense when events are designed to introduce new audiences to a brand or offering.
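A minimal sketch of first-touch logic, assuming each opportunity carries its revenue and a list of dated touches. The `Touch` class, the `first_touch` function, and the channel labels are hypothetical; real CRM exports will be shaped differently.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Touch:
    when: date
    source: str  # hypothetical labels such as "event:summit", "webinar", "sales_call"


def first_touch(journeys: dict[str, tuple[float, list[Touch]]]) -> dict[str, float]:
    """Assign each opportunity's full revenue to its earliest recorded touch."""
    credit: dict[str, float] = defaultdict(float)
    for revenue, touches in journeys.values():
        if not touches:
            continue  # no recorded interaction, so nothing receives credit
        earliest = min(touches, key=lambda t: t.when)
        credit[earliest.source] += revenue
    return dict(credit)


journeys = {
    # New audience: the event is the first recorded touch.
    "opp-1": (50_000.0, [Touch(date(2024, 2, 1), "event:summit"),
                         Touch(date(2024, 5, 1), "sales_call")]),
    # Existing pipeline: a webinar came first, so the summit receives nothing.
    "opp-2": (80_000.0, [Touch(date(2023, 11, 5), "webinar"),
                         Touch(date(2024, 2, 1), "event:summit"),
                         Touch(date(2024, 6, 1), "sales_call")]),
}

print(first_touch(journeys))  # {'event:summit': 50000.0, 'webinar': 80000.0}
```

The second opportunity previews the weakness below: the summit still happened, but because an earlier webinar was recorded, the event gets no credit.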

When It Breaks Down

First-touch breaks down in long sales cycles where influence unfolds over time. It also breaks down when attendees engage repeatedly across multiple events. If accounts already exist in the pipeline, assigning full credit to the earliest interaction overvalues discovery and undervalues progression.

First-touch assumes influence begins at entry. In reality, influence often accumulates across stages.

Last-touch event attribution assigns credit to the most recent interaction before revenue occurs. If an event precedes a deal close, it receives full credit.

This model feels revenue-aligned because it ties attribution directly to outcomes. It is popular in reporting because it appears to connect events to closed revenue in a clean and direct way.

The appeal lies in its proximity to results.
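The same idea for last-touch, sketched under similar assumptions (hypothetical `Touch` records, labels, and close dates). Only touches on or before the close date are eligible, and the latest one takes all the credit.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Touch:
    when: date
    source: str  # hypothetical channel labels


def last_touch(journeys: dict[str, tuple[date, float, list[Touch]]]) -> dict[str, float]:
    """Assign each opportunity's full revenue to the latest touch on or before its close date."""
    credit: dict[str, float] = defaultdict(float)
    for close_date, revenue, touches in journeys.values():
        prior = [t for t in touches if t.when <= close_date]
        if not prior:
            continue
        latest = max(prior, key=lambda t: t.when)
        credit[latest.source] += revenue
    return dict(credit)


journeys = {
    "opp-1": (date(2024, 6, 15), 50_000.0,
              [Touch(date(2024, 2, 1), "event:summit"), Touch(date(2024, 5, 1), "sales_call")]),
    "opp-2": (date(2024, 7, 1), 80_000.0,
              [Touch(date(2023, 11, 5), "webinar"), Touch(date(2024, 2, 1), "event:summit"),
               Touch(date(2024, 6, 1), "sales_call")]),
    "opp-3": (date(2024, 3, 1), 40_000.0,
              [Touch(date(2024, 1, 10), "webinar"), Touch(date(2024, 2, 1), "event:summit")]),
}

print(last_touch(journeys))  # {'sales_call': 130000.0, 'event:summit': 40000.0}
```

The summit appears in all three journeys but is credited only where it happened to be the final recorded touch, which is the timing bias discussed below.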

When It Breaks Down

Last-touch breaks down when sales-driven closings dominate the final stages of deals. It also breaks down when offline conversations or internal approvals influence timing more than event participation. In environments where multiple events shape the buying journey, last-touch reduces cumulative influence to a single moment.

Last-touch rewards timing, not impact. It assumes the final interaction carried decisive weight, even when influence was distributed across earlier stages.

Instead of giving credit to a single point, multi-touch event attribution divides credit among multiple interactions. The event becomes one part of a series of interactions that together affect revenue.

In this model, credit may be distributed equally or weighted according to time-based impact. Early interactions, mid-cycle engagement, and late-stage reinforcement may each receive partial recognition. Conceptually, this reflects the reality that influence is rarely isolated.

Multi-touch event attribution feels more realistic because it acknowledges accumulation. It attempts to account for influence weighting across stages.
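Two common weighting schemes, linear (equal shares) and time-decay, can be sketched as follows, assuming the same kind of hypothetical touch data. The `half_life_days` parameter is an assumption chosen by whoever configures the model. Changing it changes every event's share, which is the arbitrariness described below.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Touch:
    when: date
    source: str  # hypothetical channel labels


def multi_touch(
    journeys: dict[str, tuple[date, float, list[Touch]]],
    half_life_days: float | None = None,
) -> dict[str, float]:
    """Split each opportunity's revenue across all touches on or before close.

    half_life_days=None -> linear: every touch gets an equal share.
    half_life_days=N    -> time-decay: a touch N days before close gets half
                           the weight of a touch on the close date.
    """
    credit: dict[str, float] = defaultdict(float)
    for close_date, revenue, touches in journeys.values():
        prior = [t for t in touches if t.when <= close_date]
        if not prior:
            continue
        if half_life_days is None:
            weights = [1.0] * len(prior)
        else:
            weights = [0.5 ** ((close_date - t.when).days / half_life_days) for t in prior]
        total = sum(weights)
        for touch, weight in zip(prior, weights):
            credit[touch.source] += revenue * weight / total  # shares always sum to revenue
    return dict(credit)


journeys = {
    "opp-1": (date(2024, 7, 1), 90_000.0,
              [Touch(date(2024, 1, 1), "webinar"),
               Touch(date(2024, 4, 1), "event:summit"),
               Touch(date(2024, 6, 1), "sales_call")]),
}

linear = multi_touch(journeys)                     # 30,000 to each touch
decay = multi_touch(journeys, half_life_days=30)   # most credit shifts to the sales call
print({source: round(value) for source, value in decay.items()})
```

Neither split is observed in the data. Both are rules, and the "right" half-life is a judgment call.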

When It Breaks Down

Multi-touch breaks down when the weighting rules become arbitrary. Assigning percentages to interactions reflects model bias rather than observable truth. It also breaks down when offline signals are missing, leaving partial visibility into the actual buying journey.

More data points do not automatically mean better understanding. Multi-touch models can create false precision by presenting calculated distributions as objective facts.

Account-based event attribution shifts the unit of analysis from individual leads to accounts. Instead of asking which person converted, it evaluates how event participation across stakeholders influenced account-level outcomes.

This model reflects the account-level complexity of B2B environments. Multiple stakeholders may attend the same event. Influence can spread across roles, departments, and decision layers.

By focusing on accounts rather than individuals, this approach aligns more closely with how enterprise revenue is generated.
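An account-level rollup might look like the sketch below. The email-to-account mapping, the pipeline values, and the `min_stakeholders` threshold are all hypothetical inputs. The point is that influence attaches to the account, and that any attendee missing from the mapping silently drops out.

```python
from collections import defaultdict


def account_level_influence(attendees, contact_to_account, open_pipeline, min_stakeholders=2):
    """Roll event attendees up to accounts.

    attendees:          attendee emails from registration or check-in
    contact_to_account: hypothetical CRM mapping of lowercase email -> account name
    open_pipeline:      hypothetical open opportunity value per account
    min_stakeholders:   assumed threshold for treating an account as engaged
    """
    people_by_account = defaultdict(set)
    unmapped = []
    for email in attendees:
        key = email.strip().lower()
        account = contact_to_account.get(key)
        if account is None:
            unmapped.append(key)  # incomplete mapping: this attendee's influence is invisible
        else:
            people_by_account[account].add(key)

    stakeholder_counts = {acct: len(people) for acct, people in people_by_account.items()}
    engaged_pipeline = {
        acct: open_pipeline.get(acct, 0.0)
        for acct, count in stakeholder_counts.items()
        if count >= min_stakeholders
    }
    return stakeholder_counts, engaged_pipeline, unmapped


attendees = ["ana@acme.com", "Raj@Acme.com", "li@acme.com", "sam@globex.com", "kim@initech.io"]
contact_to_account = {"ana@acme.com": "Acme", "raj@acme.com": "Acme",
                      "li@acme.com": "Acme", "sam@globex.com": "Globex"}
open_pipeline = {"Acme": 250_000.0, "Globex": 60_000.0}

counts, engaged, unmapped = account_level_influence(attendees, contact_to_account, open_pipeline)
print(counts)    # {'Acme': 3, 'Globex': 1}
print(engaged)   # {'Acme': 250000.0}
print(unmapped)  # ['kim@initech.io']
```

The unmapped attendee illustrates the first failure mode below: when account mapping is incomplete, that person's influence never reaches the account view.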

When It Breaks Down

Account-based models break down when account mapping is incomplete or inaccurate. Ambiguous buying groups can distort influence patterns. In small or transactional deals where a single individual drives the purchase, the account-level lens may add unnecessary abstraction.

Account-based event attribution reflects structural reality better, but it demands stronger assumptions about stakeholder influence and internal alignment.

All event attribution models break down because influence is messy. Offline conversations occur without records. Sales behavior shapes timing in ways that systems cannot fully capture. Organizational bias affects which interactions are logged and which are ignored.

Partial visibility is inherent in event environments. Some influence is documented. Some remains unrecorded. Models attempt to structure this complexity, but they cannot eliminate it.

Models fail not because they are poorly built, but because influence does not follow rigid rules. Attribution assumptions simplify reality. Eventually, those assumptions collide with account-level complexity and human decision-making.

Understanding the limitations of event attribution is essential to interpreting its outputs responsibly.

Event attribution models should be treated as sources of directional insight rather than decision proof. They reveal patterns and shifts in engagement relative to revenue outcomes. They do not provide definitive credit allocation.

Comparing trends across periods can be more informative than analyzing isolated results. Changes in influence patterns often matter more than absolute percentages.
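Trend comparison can be as simple as tracking, per period, what share of closed deals involved event engagement. This sketch assumes each deal already carries a boolean event-engagement flag (a hypothetical field) and that period labels sort chronologically. The output is directional and says nothing about causation.

```python
from collections import defaultdict


def influence_share_by_period(deals):
    """deals: iterable of (period_label, event_engaged). Returns {period: share engaged}."""
    totals = defaultdict(int)
    engaged = defaultdict(int)
    for period, was_engaged in deals:
        totals[period] += 1
        engaged[period] += int(was_engaged)
    return {p: engaged[p] / totals[p] for p in sorted(totals)}


def period_over_period(shares):
    """Change in share versus the previous period (labels must sort chronologically)."""
    periods = list(shares)
    return {
        periods[i]: shares[periods[i]] - shares[periods[i - 1]]
        for i in range(1, len(periods))
    }


# Hypothetical closed-won deals, flagged by whether the account engaged with an event.
deals = (
    [("2024-Q1", True)] * 3 + [("2024-Q1", False)] * 7
    + [("2024-Q2", True)] * 4 + [("2024-Q2", False)] * 4
)

shares = influence_share_by_period(deals)
print(shares)  # {'2024-Q1': 0.3, '2024-Q2': 0.5}
print({p: f"{d:+.0%}" for p, d in period_over_period(shares).items()})  # {'2024-Q2': '+20%'}
```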

Attribution outputs gain meaning when paired with qualitative context from sales teams and account observations. Models interpret signals. Human judgment interprets nuance.

Responsible use begins with acknowledging model bias and measurement trade-offs.

There is no single correct event attribution model. Each reveals a different aspect of influence across time, stakeholders, and opportunities.

Misuse occurs when outputs are treated as objective truth rather than structured interpretation. Assumptions become invisible, and conclusions become overstated.

Event attribution models clarify directional impact, not causation. Understanding their limits is more valuable than searching for perfection.

Digital attribution has become the benchmark for marketing measurement. It offers clear conversion paths, visible user behavior, and immediate reporting. As a result, it often sets expectations for how all marketing channels should perform in measurement environments.

Events are often measured by the same standard. Leadership expects equal attribution certainty and revenue traceability. When event attribution falls short of digital precision, it is seen as weaker.

This expectation is flawed because it assumes both channels operate under identical conditions. They do not.

Event attribution is harder, not because events are less valuable, but because they operate in fundamentally different conditions. Those conditions shape what can be observed, inferred, and measured with confidence.

Digital attribution refers to the process of connecting online interactions to defined conversion events. These interactions are typically click-based and system-tracked. User behavior is recorded automatically through cookies, session tracking, and logged actions.

Digital environments are system-native. Every click, view, and submission can be captured as structured data. Individual user tracking allows marketers to follow a defined path from exposure to conversion.

Conversion points are explicit. A form submission, purchase, or demo request marks a clear moment of action. Feedback loops are immediate. Campaign performance can be analyzed in near real time.

These conditions create high observability. Attribution confidence increases because user actions are recorded directly rather than inferred.

Event attribution operates in environments defined by human interaction rather than automated tracking. Conversations occur face-to-face. Questions are asked informally. Influence develops through dialogue and shared context.

Trust-building interactions often take place in settings that software cannot fully observe. A discussion after a session, a private meeting, or a multi-person conversation at a table may shape decision-making confidence without generating a system-recorded signal.

Unlike digital attribution, event attribution depends on partial data visibility. Registration and attendance are recorded. The depth and quality of influence are not directly captured.

Events generate influence where software has limited visibility. Measurement, therefore, relies more on inference and post-event outcomes than on direct behavioral logs.

Many events operate within long sales cycles. Decisions may take months to finalize. During that time, multiple interactions reinforce or reshape buyer perception.

Linear attribution logic assumes a clear progression from touchpoint to conversion. In long sales cycles, influence accumulates gradually. An event may strengthen an opportunity early, while the measurable outcome occurs much later.

As time passes, attribution confidence declines. Additional meetings, follow-ups, and internal discussions intervene. The original event interaction becomes one part of a larger sequence.

The longer the sales cycle, the weaker the last-touch logic becomes. Time-lagged outcomes require models that interpret influence across extended periods rather than relying on immediate conversion signals.
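The effect of time lag shows up clearly in the lookback window that attribution setups commonly apply. This sketch uses a hypothetical `touches_in_lookback` helper and invented dates; the window lengths are illustrative, not recommendations.

```python
from datetime import date


def touches_in_lookback(touches, close_date, lookback_days):
    """Return the sources of touches that fall within `lookback_days` before close."""
    return [
        source
        for when, source in touches
        if 0 <= (close_date - when).days <= lookback_days
    ]


close = date(2024, 6, 10)
touches = [(date(2024, 1, 15), "event:summit"), (date(2024, 5, 20), "sales_call")]

for window in (30, 90, 180):
    print(window, touches_in_lookback(touches, close, window))
# 30 ['sales_call']
# 90 ['sales_call']
# 180 ['event:summit', 'sales_call']
```

The event sits 147 days before close, so it becomes visible only once the window is long enough to span the sales cycle.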

Enterprise decisions rarely involve a single individual. Different roles evaluate different aspects of a solution. Events often attract multiple stakeholders from the same account, each interacting in distinct ways.

Digital attribution typically tracks individuals. Event attribution must consider account-level complexity. One stakeholder may attend a keynote, another may participate in a private meeting, and a third may engage later in a sales discussion.

Influence can spread internally after the event. A participant may share insights with colleagues who did not attend. Decision paths become non-linear as internal alignment develops.

Events rarely influence a single buyer in isolation. Multi-stakeholder decisions introduce ambiguity that reduces attribution certainty at the individual level.

Event attribution loses visibility the moment interaction moves offline. Unlike digital environments, where actions are system-tracked, events generate influence in spaces that leave no automatic record. These blind spots are structural. They cannot be eliminated by reporting discipline alone.

Unrecorded Conversations: High-impact conversations frequently happen during informal networking, private meetings, and the breaks between sessions. Although they are rarely recorded as structured data, these interactions influence perception and decision-making confidence.

Sales References Without Traceability: Sales teams frequently reference event conversations in later calls or negotiations. The system records the sales activity, not the original event influence. Attribution shifts toward what is logged, not what mattered.

Internal Buyer Discussions: Attendees bring insights back to their organizations. Internal conversations, alignment meetings, and budget discussions occur without visibility. Influence spreads without attribution signals.

Memory-Based Reporting: When influence is not recorded, it is reconstructed from memory. This introduces bias and inconsistency. If it is not logged, it does not appear in attribution models, even when it shaped the outcome.

If attribution feels incomplete for events, it is because critical influence operates in spaces that measurement systems cannot directly access.

Lower precision in event attribution reflects reduced observability, not reduced importance. Strategic decisions, particularly in high-value deals, are influenced by trust, alignment, and decision-making confidence. These factors are rarely transactional.

Digital attribution excels in measuring discrete actions. Events often affect broader strategic movement within accounts. In complex buying environments, influence over direction can matter more than a single conversion event.

Directional insight into pipeline acceleration, stakeholder engagement, and revenue influence may be less precise, but it remains strategically relevant. Precision and impact are not synonymous.

Event attribution should be interpreted as a source of directional signals rather than exact proof of causation. It reveals patterns in account engagement, sales cycle progression, and time-lagged outcomes following events.

Trend comparison over time can offer meaningful insight. Changes in acceleration patterns or account-level participation may indicate shifts in influence.

Because event attribution depends on inference, outputs should be evaluated within context. Partial data visibility requires measured interpretation rather than binary conclusions.

The goal is not to match digital precision, but to understand event influence within its structural limits.

Digital and event environments operate under different structural conditions. Digital attribution benefits from direct observability and immediate conversion signals. Event attribution relies on inference across human interaction and long sales cycles.

Measurement confidence will vary because observability varies. This does not imply unequal value. It reflects distinct operating realities.

Events require different attribution logic because they generate influence differently. Understanding that difference is essential for accurate interpretation.

Lead-based reporting has shaped marketing measurement for over a decade. Dashboards prioritize conversions, campaign responses, and cost per lead. As a result, conversion tracking became the default lens for evaluating performance across channels.

When events are reviewed in the same reporting environments, they are often evaluated using identical metrics. Attendance lists become leads. Badge scans become acquisition signals. Post-event engagement is filtered through conversion frameworks designed for digital campaigns.

The confusion persists because both lead attribution and event attribution appear in revenue discussions and executive summaries. They are presented side by side, which implies equivalence.

Event attribution and lead attribution answer fundamentally different questions, even though they are often reported together. One explains who converted. The other explains how influence shaped pipeline progression across time and stakeholders.

The practice of determining which marketing engagement led to a conversion is known as lead attribution. It links a particular touchpoint to a predetermined action, such as requesting a demo or completing a form.

A lead is an identifiable person who has expressed interest through an explicit action. Lead attribution tracks acquisition by assigning credit to the interaction that generated that action.

It is effective at measuring short-term signals. Form fills, demo requests, gated content downloads, and campaign conversions are clearly defined and time-bound. The data is explicit and directly observable.

Lead attribution performs well in environments where conversion is the primary objective and where the path from interaction to action is relatively short. It measures entry into the funnel at the individual level.

Event attribution measures how an event influenced revenue outcomes across accounts, opportunities, and time. It focuses on influence rather than isolated conversion.

Event attribution does not require the event to generate a new lead or act as the point of conversion. An opportunity may already exist. The event’s role may be to strengthen, accelerate, or reshape that opportunity.

Events often engage multiple stakeholders from the same organization. Event attribution evaluates how that collective engagement affected the account, not just individual attendees.

In long sales cycles, influence appears as movement. Opportunities may advance stages, reduce friction, or gain internal alignment after an event. Event attribution measures this progression.

Events can increase clarity and confidence among decision-makers. This reduces hesitation and shortens timelines. Event attribution captures these acceleration effects as part of revenue influence.
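One way to look for acceleration is to compare cycle length for opportunities with and without event engagement. The sketch below uses hypothetical opportunities and an assumed measure (days from qualification to close). A gap like this is a correlation, not proof: healthier deals may also be the ones that send stakeholders to events.

```python
from statistics import median

# Hypothetical opportunities: (id, event_engaged, days from qualification to close).
OPPS = [
    ("o1", True, 62), ("o2", True, 75), ("o3", True, 58),
    ("o4", False, 96), ("o5", False, 110), ("o6", False, 88),
]


def median_cycle_days(opps, engaged):
    """Median cycle length for engaged (True) or non-engaged (False) opportunities."""
    values = [days for _, flag, days in opps if flag == engaged]
    return median(values) if values else None


with_event = median_cycle_days(OPPS, True)      # 62
without_event = median_cycle_days(OPPS, False)  # 96
print(f"Median cycle: {with_event} days with event engagement vs {without_event} without")
```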

The first difference lies in the unit of measurement. Lead attribution measures individuals. It connects a person to a conversion event. Event attribution often operates at the account level, where multiple stakeholders influence a single revenue outcome.

The second difference is the time horizon. Lead attribution captures immediate or near-term actions. Event attribution evaluates extended influence across longer sales cycles. Influence may emerge weeks or months after participation.

The third difference is the nature of the impact. Lead attribution measures conversion. It records who acted. Event attribution measures influence. It evaluates what changed in the opportunity after engagement.

The fourth difference concerns data visibility. Lead conversions are explicit and system-recorded. Event influence may require analysis of progression, velocity, and post-event outcomes that are not automatically labeled as event-driven.

Lead attribution measures who acted. Event attribution measures what changed.

Events involve multiple stakeholders from the same account. Several attendees may influence a single opportunity. Lead attribution, however, evaluates individuals separately. This creates fragmentation in measurement and ignores account-level impact.

Events also generate offline conversations and relationship-building interactions. These moments influence decision-making confidence but do not always produce immediate conversion signals. Systems designed around explicit digital actions struggle to capture indirect contributions.

Sales-led follow-ups further complicate attribution scope. A conversation at an event may shift the direction of an opportunity, but the measurable action occurs later in a sales meeting or contract negotiation. The system records the final action, not the earlier influence.

Long gaps between the event and revenue outcomes create additional measurement bias. Influence may occur early, while conversion appears much later. Events influence decisions that systems are not designed to label as event-driven.

One common mistake is judging events solely on lead count. This prioritizes acquisition over acceleration and overlooks account-level influence.

Another error is comparing events directly to paid digital campaigns. Campaigns are optimized for short-term conversions, while events often operate across extended sales cycles. The measurement models differ in scope and signal type.

A third mistake is declaring events unsuccessful because they did not generate enough leads, even when downstream pipeline progression improved. Such conclusions reflect reporting limitations, not event failures.

When events are evaluated only through conversion metrics, influence remains invisible.

Lead attribution remains important for understanding entry into the funnel. It clarifies which channels and campaigns generate new individual interest.

Event attribution adds context by explaining how opportunities progress after initial acquisition. It measures acceleration, alignment, and influence across stakeholders and time.

Mature teams use both models because they answer different questions. Lead attribution explains entry. Event attribution explains momentum.

Together, they provide a fuller view of revenue development across acquisition and progression.

Lead attribution and event attribution are not interchangeable frameworks. Campaigns are designed to generate individual conversions. Events are designed to influence accounts, accelerate opportunities, and strengthen decisions across longer sales cycles.

When events are measured like campaigns, only short-term acquisition is visible. Influence, progression, and revenue acceleration disappear from the analysis. This creates misleading conclusions about event performance.

If the only question asked after an event is how many leads it generated, the wrong measurement model is being applied. Event attribution exists because events operate through influence, not isolated conversion.

Attendance is the most common starting point for evaluating events. It is visible, easy to report, and simple to compare. As a result, it often becomes the primary indicator of success.

But attendance is an activity metric, not a business outcome. It measures who showed up, not what changed commercially. A large audience does not indicate account-level impact, revenue acceleration, or movement in long sales cycles. It confirms participation, not influence.

When event performance is defined by turnout, evaluation stays at the surface. Business leadership, however, evaluates investment through revenue impact. They do not ask how many people attended. They ask whether the event influenced pipeline progression and post-event outcomes.

Event attribution is not about how many people attended an event, but how the event influenced business outcomes. It replaces attendance-based evaluation with a revenue influence lens grounded in commercial effect.

Event attribution refers to the process of identifying and measuring how an event contributes to revenue outcomes. It evaluates the event’s influence on pipeline progression, deal velocity, and account-level impact rather than focusing on isolated conversions.

Put simply, event attribution evaluates how an event influenced purchasing decisions. It asks whether the event increased engagement, strengthened decision-making confidence, accelerated opportunities, or affected revenue that was already in motion.

This approach recognizes that events rarely function as direct conversion points. Instead, they contribute indirectly to revenue through influence. Measuring event influence requires distinguishing between correlation and causation, and focusing on post-event outcomes tied to business progression.

At its core, event attribution centers on revenue influence, not event activity.

Because they are easy to track, lead counts have become the standard metric. In digital campaigns, lead generation often links directly to form fills, downloads, or gated content. In those environments, leads function as measurable conversion events.

Events operate differently. They typically occur within long sales cycles, where buying intent may already exist. Attendees may be active opportunities, existing customers, or multiple stakeholders from the same account. The event does not necessarily create intent. It interacts with an intent that is already developing.

When success is defined by lead volume, the event’s impact on the pipeline becomes distorted. High lead counts may reflect broad attendance but low commercial relevance. Conversely, a small group of strategically important accounts may drive significant revenue influence without producing large lead numbers.
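The contrast can be sketched by summarizing event data two ways: a lead count and an account-progression view. The scan records, account IDs, and `stage_advances` mapping are hypothetical, and "progressed" simply means at least one recorded stage change after the event.

```python
def summarize_event(scans, stage_advances):
    """Contrast a lead-count view with an account-progression view.

    scans:          (email, account_id) pairs from badge scans
    stage_advances: hypothetical {account_id: opportunity stage changes after the event}
    """
    leads = {email.strip().lower() for email, _ in scans}
    accounts = {account for _, account in scans}
    progressed = sorted(a for a in accounts if stage_advances.get(a, 0) > 0)
    return {"leads": len(leads), "accounts": len(accounts), "accounts_progressed": progressed}


broad_event = [(f"visitor{i}@example.com", f"acct-{i}") for i in range(40)]
focused_event = [("cfo@acme.com", "acme"), ("cto@acme.com", "acme"), ("vp@globex.com", "globex")]
stage_advances = {"acme": 1, "globex": 2}

print(summarize_event(broad_event, stage_advances))
# {'leads': 40, 'accounts': 40, 'accounts_progressed': []}
print(summarize_event(focused_event, stage_advances))
# {'leads': 3, 'accounts': 2, 'accounts_progressed': ['acme', 'globex']}
```

Judged by lead count, the broad event wins. Judged by account progression, the focused one does.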

Most event-influenced revenue never appears as “event-sourced.” Deals may close months later. Opportunities may accelerate without being created at the event. Revenue may be influenced at the account level without generating new contacts.

Lead attribution captures conversion points. Event attribution measures indirect contribution and influence across the buying journey.

Events influence revenue by changing the conditions under which decisions are made. They do not typically generate new intent. Instead, they affect the probability that a deal progresses and the velocity at which it moves through the pipeline. In long sales cycles, this influence is often indirect, but it is commercially significant.

Four mechanisms explain how events influence revenue:

Events increase direct interaction between buyers and sellers. Stronger relationships reduce friction, clarify expectations, and increase responsiveness. This does not create new demand, but it raises the likelihood that existing opportunities advance. Influence is expressed as improved deal progression, not immediate conversion.

Complex purchases require confidence. Events enable focused engagement, which shortens the time needed to build trust. Clarity and stakeholder alignment strengthen decision-making confidence. That confidence speeds up evaluation cycles and reduces hesitation, which accelerates revenue.

Most enterprise deals involve multiple decision-makers. Events often engage several stakeholders from the same account simultaneously. This expands account-level impact and increases internal alignment. When more stakeholders are informed and aligned, the probability of progression increases.

Events influence when decisions happen. A single interaction can resolve objections, reposition value, or clarify next steps. These changes affect deal velocity. The opportunity may have existed before the event, but its trajectory shifts afterward.

If event attribution focuses only on conversion creation, these mechanisms remain invisible. Events change probability and velocity. That is how they influence revenue.

Event influence often appears as faster deal progression. An opportunity that had stalled begins moving after key stakeholders attend and engage in substantive discussions. The event did not create the deal, but it accelerated it.

Influence may also appear as broader account engagement. An account that previously involved one contact expands to include additional decision-makers after attending. This increases account-level impact and strengthens internal alignment.

Another pattern is a shift in sales conversations. After the event, discussions move from exploratory questions to evaluation and negotiation. Decision-making confidence increases, and uncertainty decreases.

These examples show how event influence can be measured through changes in pipeline velocity, engagement depth, and post-event outcomes. None of them relies on immediate conversions or direct causation.
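As an illustration of the velocity signal, the sketch below compares how quickly opportunities advance one pipeline stage depending on whether a stakeholder from the account attended. It is a minimal sketch under stated assumptions: the records, field names (`attended`, `days_to_next_stage`), and the helper `median_velocity` are all hypothetical, and a real analysis would pull stage timestamps from your CRM. The comparison is correlational by design, in line with the point that attribution measures influence rather than proving causation.

```python
from statistics import median

# Hypothetical opportunity records: days taken to advance one pipeline stage,
# and whether any stakeholder from the account attended the event.
opportunities = [
    {"id": "A", "attended": True,  "days_to_next_stage": 18},
    {"id": "B", "attended": True,  "days_to_next_stage": 25},
    {"id": "C", "attended": False, "days_to_next_stage": 40},
    {"id": "D", "attended": False, "days_to_next_stage": 33},
    {"id": "E", "attended": True,  "days_to_next_stage": 22},
]

def median_velocity(records, attended):
    """Median days to advance a stage for one group (lower means faster)."""
    days = [r["days_to_next_stage"] for r in records if r["attended"] == attended]
    return median(days) if days else None

attended_median = median_velocity(opportunities, True)
absent_median = median_velocity(opportunities, False)

# A gap is a directional signal of influence, not proof of causation:
# accounts that chose to attend may already have been further along.
print(attended_median, absent_median)  # 22 36.5
```

A lower median for attending accounts suggests acceleration worth investigating; it does not, on its own, show that the event caused it.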

Event attribution is not lead attribution. Counting badge scans or new contacts does not measure revenue influence.

It is not last-touch attribution. Events rarely function as the final interaction before conversion, especially in long sales cycles. Assigning full credit to the most recent touchpoint ignores indirect contribution.

It is not proof of causation. Event attribution distinguishes between correlation and causation and focuses on influence rather than claiming exclusive credit.

It is not a replacement for sales judgment. Revenue decisions involve context, relationships, and timing. Attribution provides structured insight into event impact on the pipeline, but it does not override commercial expertise.

Event performance and revenue impact are not the same. An event can be well executed, well attended, and positively received while still having minimal commercial influence. Execution quality does not guarantee pipeline movement.

Conversely, a small event with limited attendance can influence significant revenue if it engages the right accounts and stakeholders. Account-level impact matters more than scale.

Leadership evaluates events through revenue questions because business outcomes determine investment decisions. Measuring event influence aligns event evaluation with those expectations.

Event attribution shifts the focus from operational performance to commercial objectives. Instead of counting attendance, it evaluates pipeline progression, decision-making confidence, and revenue acceleration.

When attribution is properly understood, the emphasis shifts from demonstrating that an event occurred to understanding how it influenced revenue.

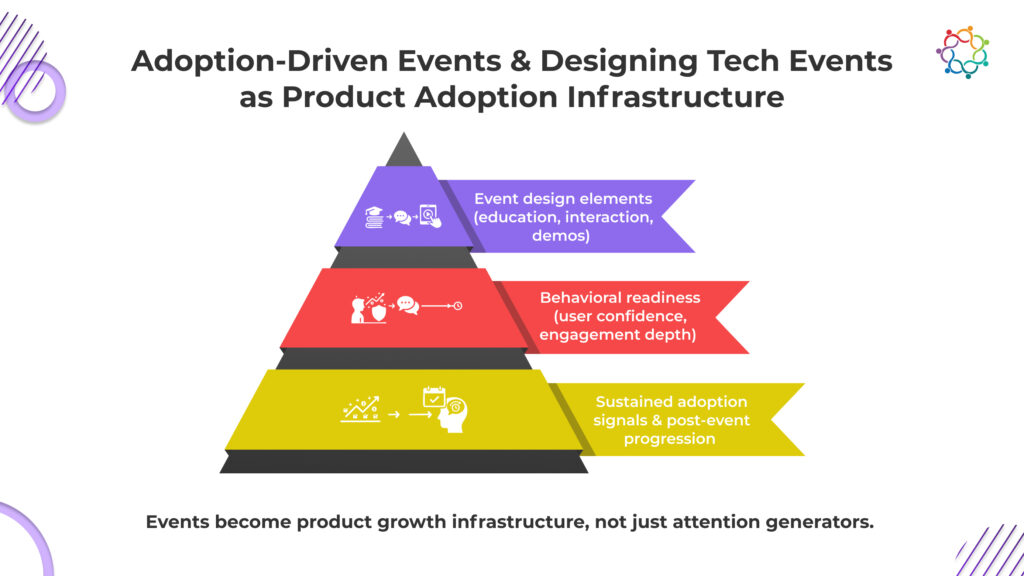

Most B2B tech companies can track demo attendance within minutes. Very few can trace how many of those attendees become active users 30 days later. That measurement gap is where product growth quietly breaks.

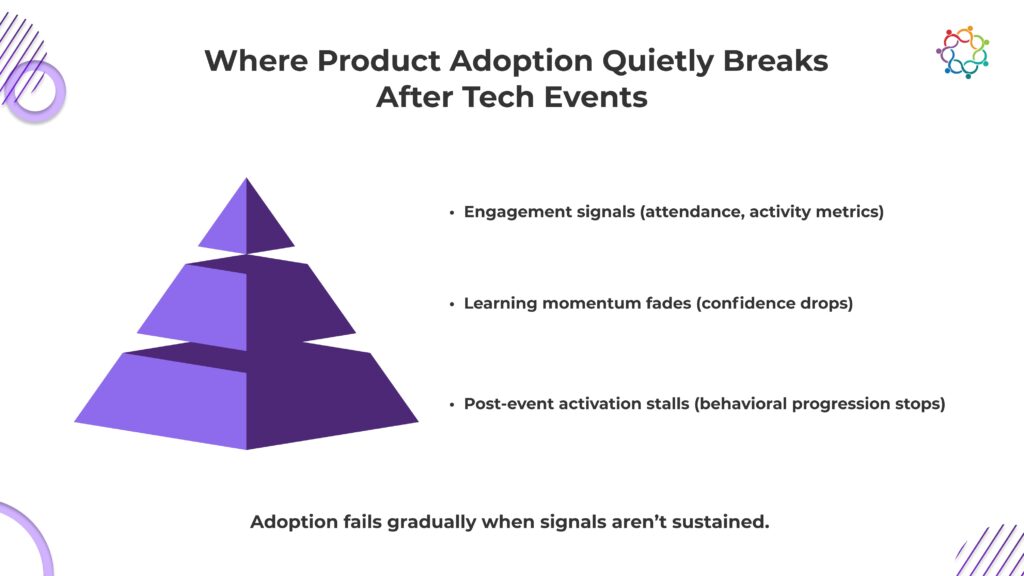

Event dashboards show participation, ratings, and engagement spikes. Leadership reads those signals as momentum. But activation metrics often tell a different story. Usage does not scale. Onboarding does not accelerate. The product remains underutilized.

The disconnect is structural. Demos create guided confidence inside controlled environments. Real adoption requires independent capability under real-world friction. When users leave the event, the guidance disappears. What felt intuitive during the session becomes uncertain in practice.

Awareness expands. Adoption hesitates.

When enthusiasm is mistaken for readiness, growth forecasts inflate while activation stalls. Events generate attention. Product growth depends on behavioral commitment.

This blog examines why demo engagement does not translate into sustained usage, and why tech events must function as adoption infrastructure, not just interest generators.

Tech events are designed to impress. They generate excitement, create a sense of product novelty, and showcase the capabilities of the technology in controlled environments. High demo participation and positive feedback signal internal success. On the surface, everything appears to be working. Leadership interprets these metrics as validation, while product teams quietly observe stagnant activation metrics post-event.

The problem is structural. Events optimize for attention, not adoption. Awareness alone cannot create behavioral change. Adoption requires independent product usage, confidence, and a commitment to integrate a tool into existing workflows. In controlled demo environments, users can follow scripted flows, guided steps, and supportive facilitators. Outside the event, these crutches disappear. Users struggle to replicate what seemed intuitive during the demo.

Key points:

Understanding this illusion is the first step in redesigning tech events to truly drive product growth. Events are not failing because attendees are unmotivated; they are failing because the system treats curiosity as adoption.

Seeing a product does not mean using it. Tech events are built to showcase, not to teach independent application. Demos reduce friction, simplify complexity, and walk participants through ideal scenarios. They instill confidence in a controlled setting, but that confidence disintegrates once the event is over.

Users then work with the product on their own, run into friction, and struggle to reproduce what looked simple during the demonstration. Exposure is passive. Adoption is active. When events are designed for visibility, users leave unprepared for real-world use. This is not a short-term deficit; it is a fundamental one.

Excitement cannot replace capability, and dashboards full of demo clicks obscure the behavioral commitment that actually drives adoption.

Uncomfortable truths:

Events that do not confront this gap are silently setting up adoption failure.

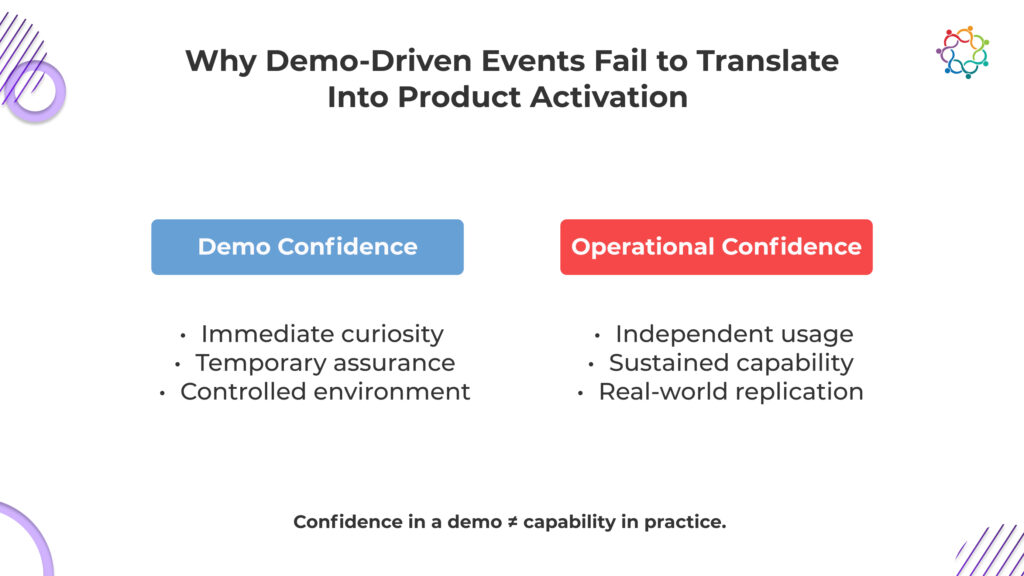

Demo-driven events create an illusion of success. Attendees leave feeling competent because the environment is controlled, scripted, and frictionless. Everything works perfectly when guided by event staff. Users can click, navigate, and follow instructions without encountering real challenges.

This is not confidence; it is convenience.

Operational reality is different. Once the event ends, the support, guidance, and simplified flows vanish. Users attempt to replicate what they observed, but real-world conditions expose gaps in knowledge, skill, and judgment.

The moment they face friction, hesitation sets in. The confidence they felt during the demo collapses. Product adoption does not grow from applause; it grows from repeated independent interaction under real conditions.

Demo engagement measures curiosity, not capability.

Leadership dashboards celebrate clicks and participation while adoption stalls quietly in the background. The uncomfortable truth is that most demo-driven events are designed to impress, not to equip. They optimize for visibility and attention, leaving product teams to deal with the predictable fallout: users inspired, but incapable of meaningful adoption.

Adoption does not fail dramatically. It erodes quietly. Post-event, the signals that could sustain momentum are often absent, creating multiple friction points:

Attendance data, session participation, and demo clicks dominate post-event reporting. Yet these signals rarely translate into actionable adoption insights.

The challenge of distinguishing real adoption impact from surface engagement has been explored deeply in frameworks that help B2B marketers evaluate event success beyond attendance and clicks.

Product teams cannot gauge:

Without these insights, follow-up actions are misaligned. Adoption signals vanish into engagement dashboards, leaving product growth efforts blind.

Learning at tech events is fleeting. Attendees absorb concepts and complete demos under controlled conditions, but the moment they leave, structured guidance disappears. Users are left to navigate complexity alone, and confidence quickly erodes.

The knowledge gained at the event decays without reinforcement, creating hesitation and doubt. Most follow-ups focus on communication rather than building operational capability, leaving users stuck at the awareness stage.

Adoption slows not because the product is difficult, but because the event failed to embed lasting behavioral readiness. Without deliberate reinforcement, excitement collapses into disengagement, silently stalling product growth.

Follow-ups prioritize communication over behavioral progression:

As a result, adoption slows, users disengage, and the revenue potential tied to accelerated usage is lost. Adoption fails not because of user disinterest but because events were never designed to extend confidence beyond the experience.

Most tech events aim for participation, visibility, and applause: metrics that give leaders confidence. Adoption-driven events follow a different course. They prioritize capability, readiness, and measurable behavioral commitment. The difference is stark, and ignoring it is costly.

Events that focus on adoption make sure that users leave ready to perform independently. Curiosity is replaced by competence. Without this, demos produce only fleeting enthusiasm.

Adoption-driven events do not chase large audiences. They cultivate engagement density, ensuring each attendee gains actionable knowledge and real-world application skills. Attention without depth is wasted.

Follow-ups are designed to extend learning, reinforce skills, and maintain momentum. Without reinforcement, the adoption gap widens immediately after the event.

Success is tracked through signals of operational readiness, not attendance numbers or click rates. Without these metrics, events remain vanity exercises that look successful but fail to move product usage.

Adoption-driven events expose the uncomfortable truth: high participation does not equal growth. Only deliberate design, reinforced learning, and capability measurement convert engagement into product adoption.

B2B tech events are rarely built for adoption. They are built for applause, attendance, and superficial engagement. This is why excitement spikes while real-world usage stagnates. If events cannot accelerate independent product confidence, they are meaningless to product growth.

Adoption requires more than exposure. It requires intentional processes that build behavioral readiness before, during, and after the event.

The friction users will face after the event must be replicated, taught, and reinforced in every interaction. Events must challenge assumptions, replicate real workflows, and build operational capability in realistic settings. Follow-ups must sustain momentum and guide behavioral progression; they cannot be generic.

Without these structures, adoption stalls because the lessons from the event fade. Dashboards continue to show engagement success, but product teams are left with users who are unprepared for real-world use.

Designing tech events as adoption infrastructure is uncomfortable because it forces organizations to measure capability, not clicks. Only by treating events as behavioral scaffolding can curiosity be converted into adoption and product growth.

B2B tech events are often categorized as marketing spend. That framing is strategically wrong. Events either compress or extend your product payback period.

Adoption speed determines revenue velocity. When users leave an event with operational confidence, activation accelerates. Faster activation shortens time-to-value. Shorter time-to-value improves retention probability. Retention stability increases lifetime value.

This is not branding. It is growth math.

When events fail to drive adoption readiness, the economic consequences compound:

Customer acquisition cost inflates because marketing-generated users fail to activate efficiently.

Payback periods stretch because revenue realization is delayed.

Expansion revenue shifts further into the future because usage depth never matures.

Churn risk increases because under-activated users disengage before experiencing core value.

Attendance metrics cannot offset these dynamics. A full room does not reduce CAC. Demo clicks do not improve retention. Only usage does.
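To make the payback point concrete, here is a minimal Python sketch. It assumes, purely for illustration, that a customer produces no margin until activation and a flat monthly margin afterward; the function `payback_months` and every figure in it are hypothetical, not drawn from real data.

```python
from math import ceil

def payback_months(cac: float, monthly_margin: float, activation_delay_months: int) -> int:
    """Months until cumulative margin covers CAC.

    Simplifying assumption: no margin is earned before activation,
    then a constant margin is earned every month afterward.
    """
    if monthly_margin <= 0:
        raise ValueError("monthly_margin must be positive")
    return activation_delay_months + ceil(cac / monthly_margin)

# Hypothetical figures: identical CAC and margin, different activation timelines.
fast = payback_months(cac=12_000, monthly_margin=1_000, activation_delay_months=1)
slow = payback_months(cac=12_000, monthly_margin=1_000, activation_delay_months=4)
print(fast, slow)  # 13 16
```

Under these assumptions, a three-month delay in activation stretches payback from 13 to 16 months even though acquisition cost and margin never change. Delayed activation alone is enough to extend the timeline.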

Adoption-driven events operate differently. They influence:

Event ROI, therefore, cannot be measured by participation volume. It must be measured by incremental usage acceleration and its downstream revenue impact.

When events are treated as adoption infrastructure, they become levers inside the product growth engine. When they are treated as visibility campaigns, they consume budget while quietly extending payback timelines.

Growth does not follow excitement. It follows activation.

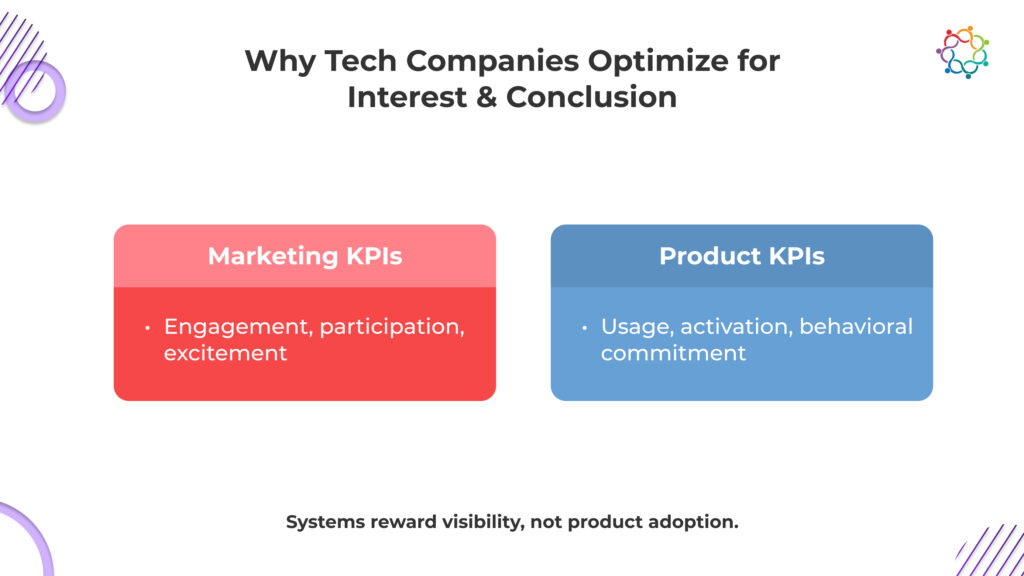

The adoption gap is not accidental. It is a measurement failure.

Marketing teams are rewarded for visible engagement: registrations, demo participation, session attendance. These metrics move immediately and report cleanly. They create the appearance of momentum.

Product teams are measured on activation, usage depth, and retention. These outcomes develop slowly and often outside the event environment. They require sustained behavioral change, not momentary enthusiasm.

The organization optimizes for what it can show quickly, not what compounds economically.

This creates a structural misalignment. Events are engineered to generate attention because attention is rewarded. Adoption readiness is underdesigned because it is harder to measure and slower to surface.

The result is predictable:

Dashboards improve.

Activation does not.

Growth stalls not because the product is weak, but because the system prioritizes interest over usage.

Until incentives shift from participation metrics to activation velocity, tech events will remain attention engines. And attention, without adoption, does not build product growth.

B2B tech events often appear successful because they generate visible engagement and demo participation. Executives see energy and dashboards that suggest traction. The uncomfortable truth is that excitement alone does not create product adoption.

Real growth requires sustained behavioral commitment, independent usage, and operational confidence that extends beyond the event. Interest without activation is meaningless. Events that cannot move users closer to adoption are not strategic investments; they are marketing exercises disguised as growth levers.

Forward-thinking leaders examine how structured support and expert guidance can bridge the gap between event engagement and real-world adoption, transforming participation into tangible growth.

If a tech event does not accelerate product usage readiness, it fails its fundamental purpose and contributes nothing to real product growth.

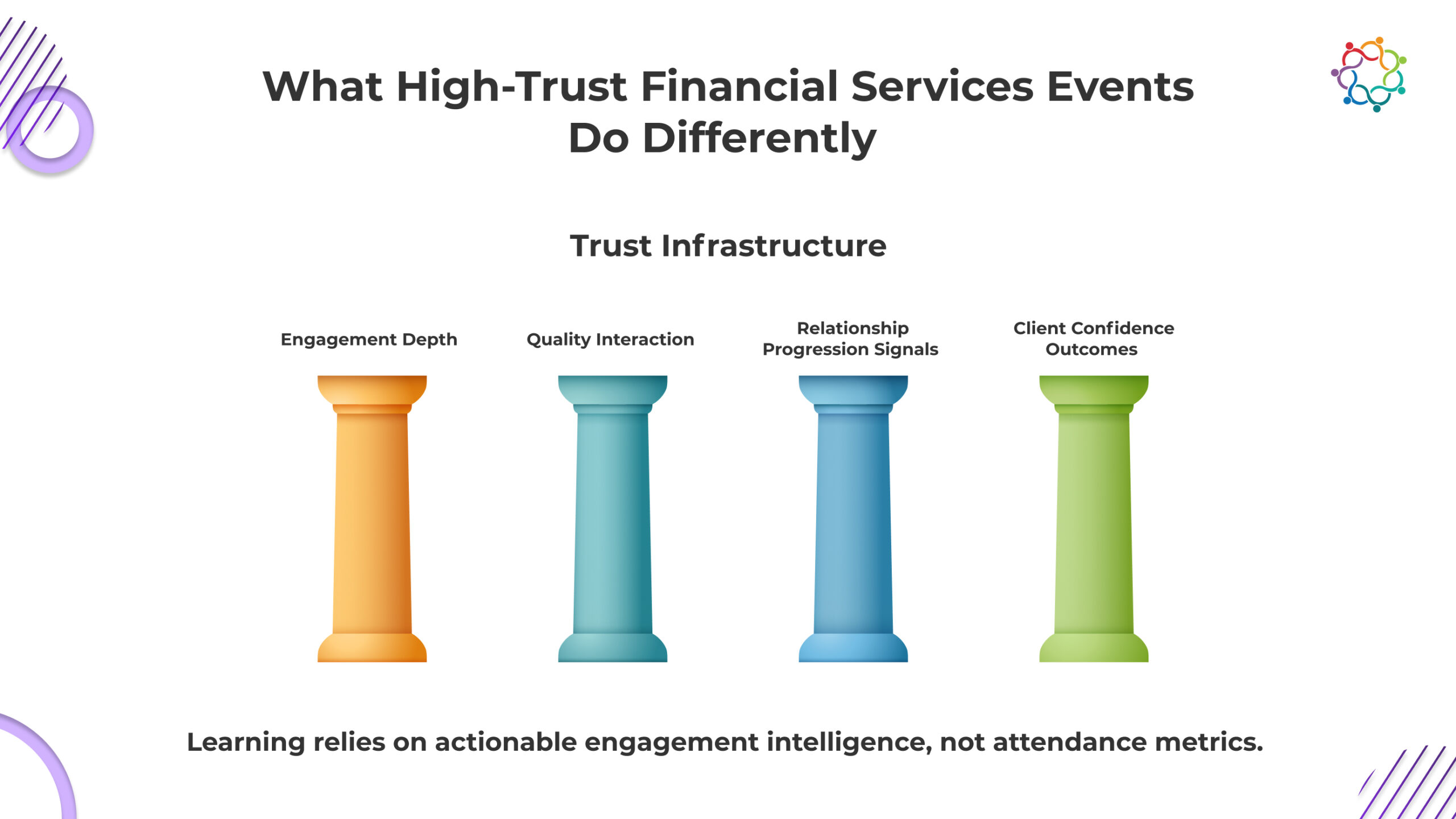

Revenue delays in financial institutions rarely begin in the pipeline. They begin by misinterpreting what event success actually means. When financial services events produce hundreds of leads but fail to accelerate client conviction, revenue timelines silently extend. Relationship managers inherit volume without readiness. Forecasts appear healthy. Closures do not follow. This creates a dangerous financial distortion. Leadership believes future revenue has strengthened, when in reality, revenue probability has not moved at all.

Registrations, attendance, and CRM additions offer visible proof of activity. But financial decisions do not follow activity. They follow trust. Clients do not commit capital because they attended. They commit capital because they believe.

This creates a structural blind spot. Institutions measure who appeared. They fail to measure who advanced toward confidence. And when confidence does not advance, revenue does not materialize.

This blog exposes why trust, not lead volume, determines whether these events influence real revenue.

Every financial event creates interaction. Very few create conviction. This is the gap leadership refuses to confront.

Clients do not attend to be impressed. They attend to assess exposure. Every interaction answers one silent question. “Can this institution be trusted with consequences that cannot be reversed?” If that question remains unresolved, nothing else you presented matters. The relationship does not progress.

You can fill rooms. You cannot force belief. Attendance proves your brand can attract curiosity. It does not prove your institution reduced client hesitation. If hesitation remains, capital remains exactly where it was before your event. Untouched.

Understanding your offering is not the barrier. Clients often understand very quickly. What delays revenue is unresolved doubt about your consistency, intent, and long-term reliability. Until doubt weakens, decision timelines stretch indefinitely while you keep reporting engagement success.

There is no neutral outcome. If client confidence strengthens, future financial conversations accelerate. If confidence weakens or stalls, resistance increases. This means your event did not just fail to help revenue. It actively made future conversion harder, whether you admit it or not.

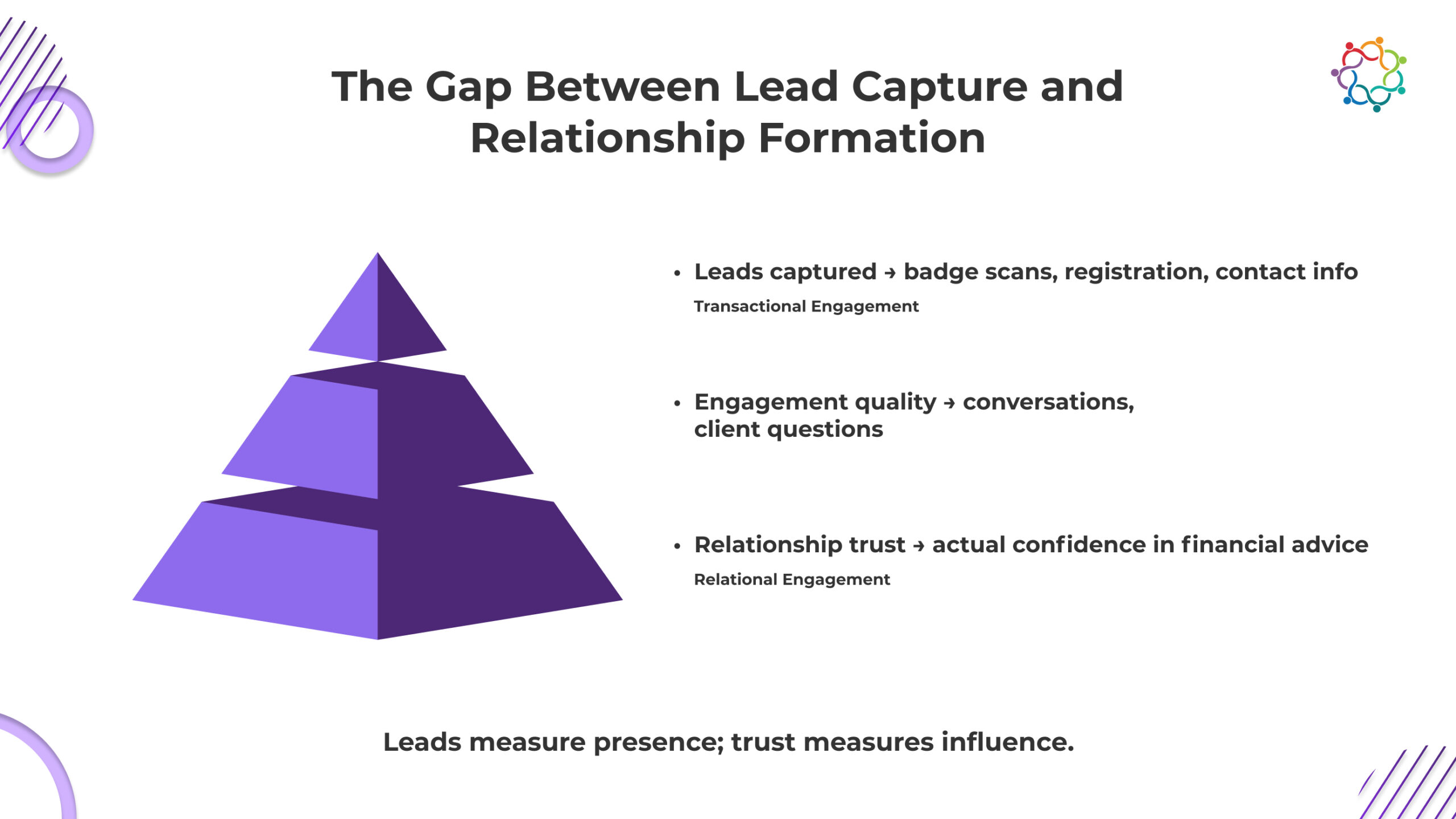

Your pipeline looks full. Your revenue is not. That gap exists because lead capture created visibility without creating trust.

This dynamic, and its impact on trust and forecast accuracy, has been explored in depth in case studies showing how events can transform engagement outcomes in financial services.

When a client’s badge is scanned, your system records progress. The client has entered your world. But you have not entered theirs. You do not know whether they left with confidence, doubt, or indifference. Yet your forecasts quietly assume forward movement.

This is the structural mistake.

Transactional engagement gives you contact. Relational engagement gives you permission. Only one of these produces revenue. Most leads never convert because nothing actually changed in the client’s mind. They showed interest. They did not develop a belief.

Lead quantity measures how many people you reached. Relationship depth determines how many will trust you with consequences.

If trust did not advance, your pipeline did not advance.

You did not generate future revenue. You generated future uncertainty disguised as opportunity.

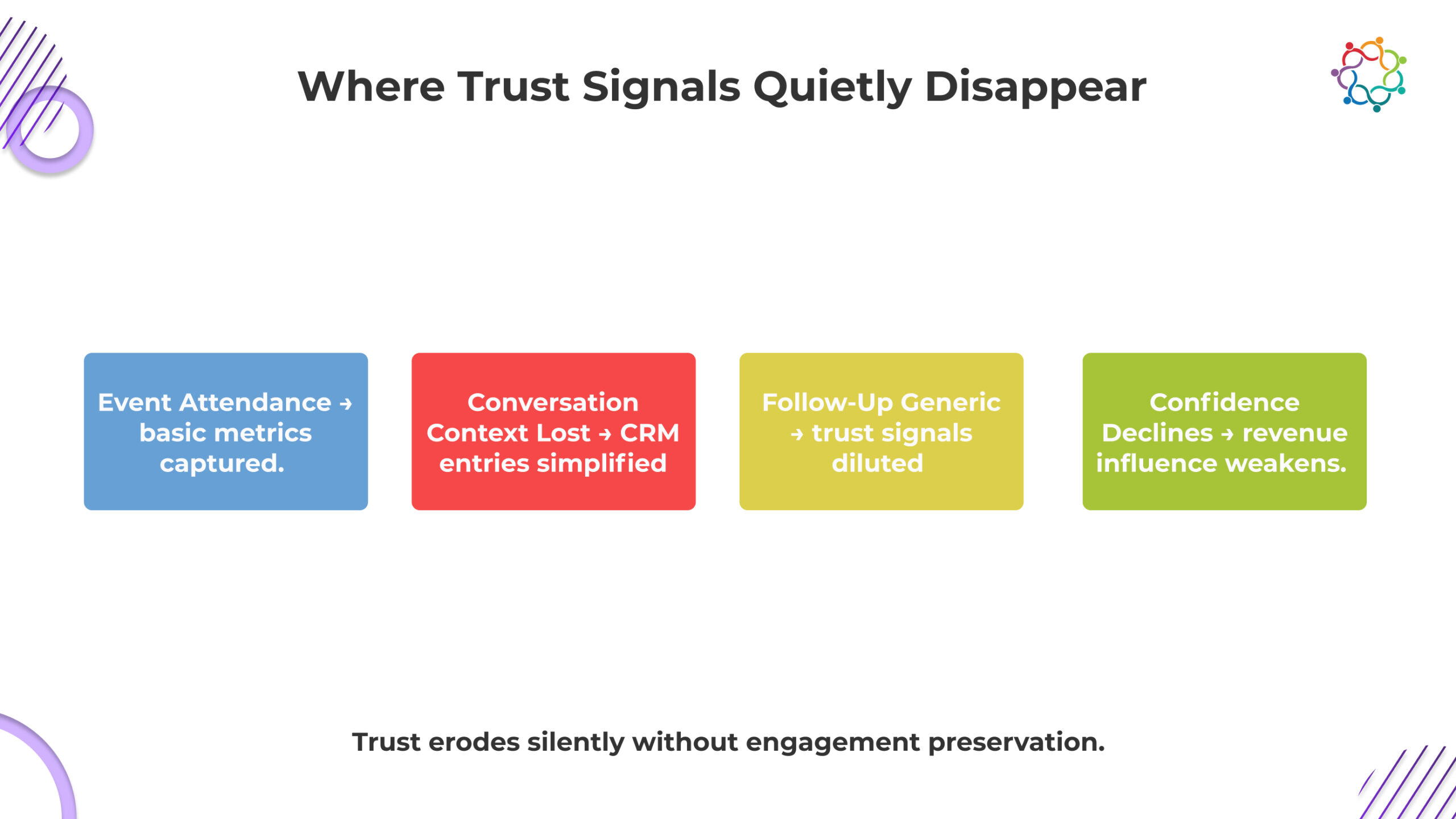

Trust erosion rarely happens during the event itself. It happens afterward, when engagement signals lose context, continuity breaks, and relationship momentum quietly weakens without institutions even realizing it has begun.

Client engagement during events contains layers of meaning. Questions asked, conversations initiated, time invested in discussion, and emotional tone all signal trust formation. But most systems compress these signals into static attendance records.

This simplification destroys relationship intelligence.

When engagement depth gets reduced to attendance logs, institutions lose visibility into:

Without this context, leadership assumes engagement was uniform. It was not.

Trust formation is uneven. Some clients move forward. Others move away. When these distinctions disappear inside reporting systems, institutions lose the ability to understand their own credibility trajectory.

Trust signals do not disappear because clients stopped evaluating. They disappear because institutions stopped observing.

After events conclude, relationship managers inherit lead lists. But they do not inherit engagement intelligence. They receive names without psychological context.

This forces relationship managers to restart conversations without understanding where trust previously stood.

This interruption creates friction:

Clients perceive a disconnect between event interaction and follow-up.

Relationship continuity weakens.

Confidence progression slows down.

Clients expect institutional memory. When institutions fail to demonstrate it, credibility weakens. Trust requires continuity. Without continuity, confidence resets.

This extends decision timelines and reduces revenue probability.

Generic follow-ups represent one of the fastest ways to destroy fragile trust formation.

When clients receive outreach that fails to reflect prior interaction depth, they recognize the transactional intent immediately. The institution appears more interested in conversion than understanding.

This perception creates emotional distance.

Trust fades gradually through signals like:

Lack of personalized continuity

Absence of contextual awareness

Communication that prioritizes institutional needs over client confidence

Trust does not collapse instantly. It erodes quietly. By the time institutions recognize this erosion, the client has already disengaged psychologically.

And once confidence weakens, revenue opportunity weakens with it.

Most of your events are optimized to be seen as successful. Very few are optimized to be believed.

You celebrate attendance scale because it is visible. Clients evaluate credibility because it is consequential. This is the disconnect you are operating inside.

Low-trust environments expand your contact database. High-trust environments expand your revenue probability. The difference is not cosmetic. It is commercial.

Clients do not move closer to allocating capital because they attended. They move closer when their skepticism weakens and their confidence strengthens.

This creates an uncomfortable reality. If your event increased leads but did not increase client confidence, nothing actually improved. Your reports look stronger. Your revenue position does not.

Trust density, not lead volume, determines whether future financial conversations accelerate or stall.

If confidence did not deepen, your event did not create momentum. It created noise that only your internal dashboards interpreted as progress.

You are treating events as marketing campaigns. Clients are treating them as evidence of whether you deserve their trust. This disconnect is costing you revenue.

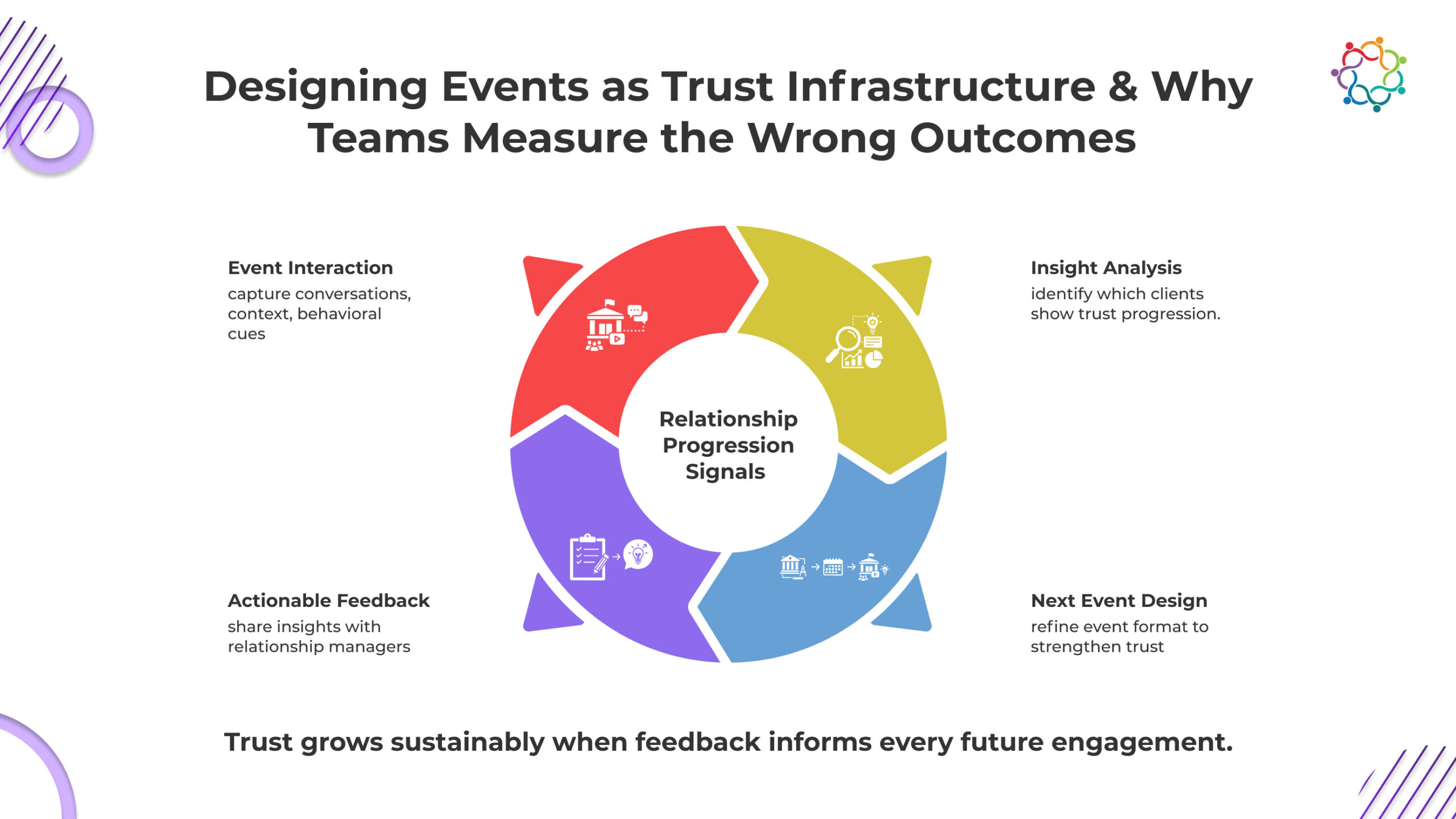

Trust does not form inside a single event. It forms across a sequence of reinforcing signals. Every event either strengthens that sequence or breaks it.

Trust influence operates across three layers:

If continuity breaks after the event, confidence weakens. When confidence weakens, decision timelines expand, and revenue probability drops.

Events do not create revenue directly. They create the conditions that make revenue possible.

If your event cannot sustain trust beyond its duration, then it did not build infrastructure.

It created temporary attention that your revenue cannot use.

Revenue does not happen when clients meet you. It happens when clients stop fearing the consequences of choosing you. This is the progression you cannot see but are financially dependent on. Exposure creates awareness. Credibility shapes perception. Confidence reduces hesitation. Only then does financial commitment become possible.

Most of your leads are stuck between awareness and confidence. They know you exist. They are not ready to trust you. Until trust strengthens, capital does not move. Your pipeline remains visually full but commercially inactive.

Trust accelerates revenue by reducing decision friction. Clients stop delaying. Internal approvals face less resistance. Competitive alternatives lose influence. Without this psychological shift, nothing converts.

This is the reality you must confront. Events do not produce revenue because clients attend.

They produce revenue only when they make clients feel safer choosing you than waiting.

Organizations measure lead volume because it protects reporting comfort.

Trust progression is slow, ambiguous, and difficult to defend in performance reviews. Lead volume is immediate, clean, and defensible. So the organization defaults to what is easy to show, not what actually moves capital.

This creates a system where visible activity replaces commercial reality.

These metrics create the illusion of momentum. They help justify budgets. They help demonstrate marketing productivity.

But they do not tell you the only thing that matters. Did the client move closer to trusting you with financial consequences?

If that answer is unclear, then your reporting is not protecting revenue. It is protecting perception.

And perception does not close financial relationships. Trust does.

Your dashboards are full. Your revenue may not be. Lead volume creates comfort for leadership. It does not create confidence in clients. Every registration, every badge scan, every post-event interaction that fails to advance trust is a missed revenue opportunity.

Financial decisions are not activity-driven. They are trust-driven. Events influence perception, accelerate confidence, and reduce hesitation, but only if the trust signals survive beyond the event. Otherwise, your contact list is just noise, and your pipeline is just an idea.

If your events do not strengthen trust, they do not influence financial decisions. They are marketing exercises masquerading as revenue engines. The moment you prioritize lead volume over confidence, you guarantee that future financial commitment will remain stalled, invisible, and delayed.

In financial services, revenue does not follow exposure. It follows trust.

If your events are not measurably strengthening client confidence, they are not influencing capital movement. They are simply creating activity your revenue cannot use.

Forward-looking teams are integrating structured frameworks that ensure every client interaction contributes to measurable confidence and long-term financial commitment.

Healthcare professionals no longer attend events because access is rare. They attend because access is abundant. Congresses, medical education events, digital symposia, and pharmaceutical briefings now compete continuously for their attention. Attendance has become routine. Presence no longer guarantees impact.

This is where pharma organizations misread success.

Within pharma event marketing, participation metrics create early reassurance. Large HCP audiences attend. Scientific sessions are delivered. Internal dashboards confirm scale. From the outside, engagement appears strong.

But participation does not reveal what actually happened inside the HCP’s mind.

Organizations cannot clearly see what was understood, what was questioned, or what was dismissed. They cannot confidently determine whether perception strengthened or remained unchanged.

This blog examines why that visibility gap exists. It explains how pharmaceutical events structurally fail to generate engagement intelligence, how that failure weakens long-term healthcare professional engagement, and why events must evolve into feedback-driven learning systems rather than remaining exposure-driven communication channels.

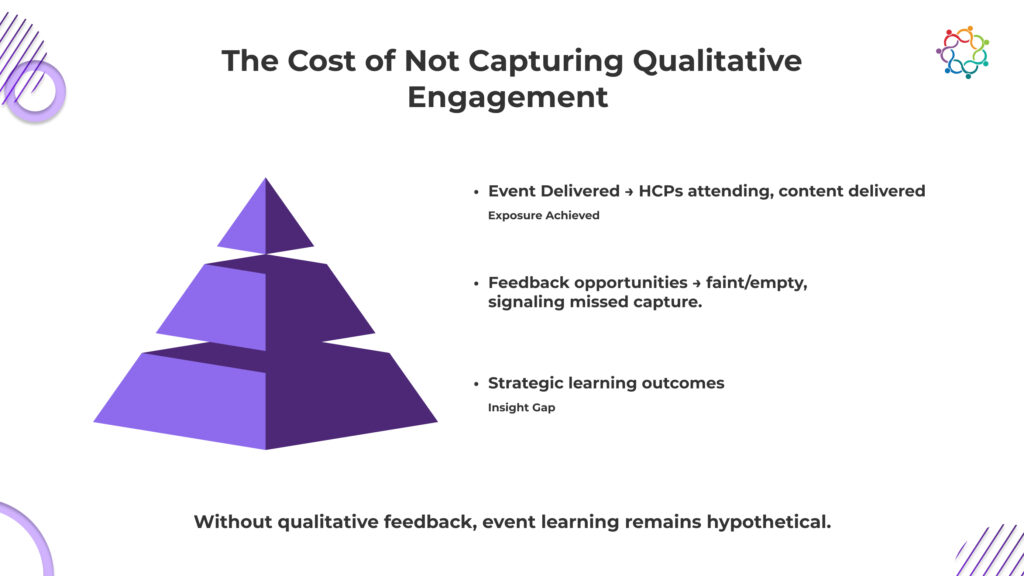

Most pharmaceutical organizations do not lack events. They lack engagement intelligence architecture.

Engagement intelligence architecture is the structured system that captures, interprets, and connects HCP feedback, perception signals, and engagement progression across interactions. It makes engagement visible beyond attendance. It shows not just who participated, but what changed because they participated.

Within pharma event marketing, this architecture is often missing or underdeveloped.

Instead, organizations rely on operational indicators that feel sufficient but are strategically incomplete:

This creates a dangerous illusion of understanding.

You assume engagement happened because the event happened.

But without engagement intelligence architecture, you have no structural mechanism to prove whether HCP perception evolved, whether scientific communication resolved uncertainty, or whether engagement moved forward at all.

The consequence is unavoidable. Strategy continues without verified learning.

You are not refining engagement. You are repeating it.

You are making decisions about HCP engagement without knowing what HCPs actually took away from your events.

That is the uncomfortable truth.

You know how many attended. You know which slides were presented. You know the agenda was completed. But you do not know whether the science clarified thinking or created silent doubt. You do not know whether your message strengthened confidence or exposed gaps in credibility.

This is the Engagement Blind Spot. It exists between what you delivered and what they internalized.

You repeat the same formats. You reinforce the same narratives. You continue investing in engagement you cannot validate.

You are not managing engagement strategy. You are hoping it worked.

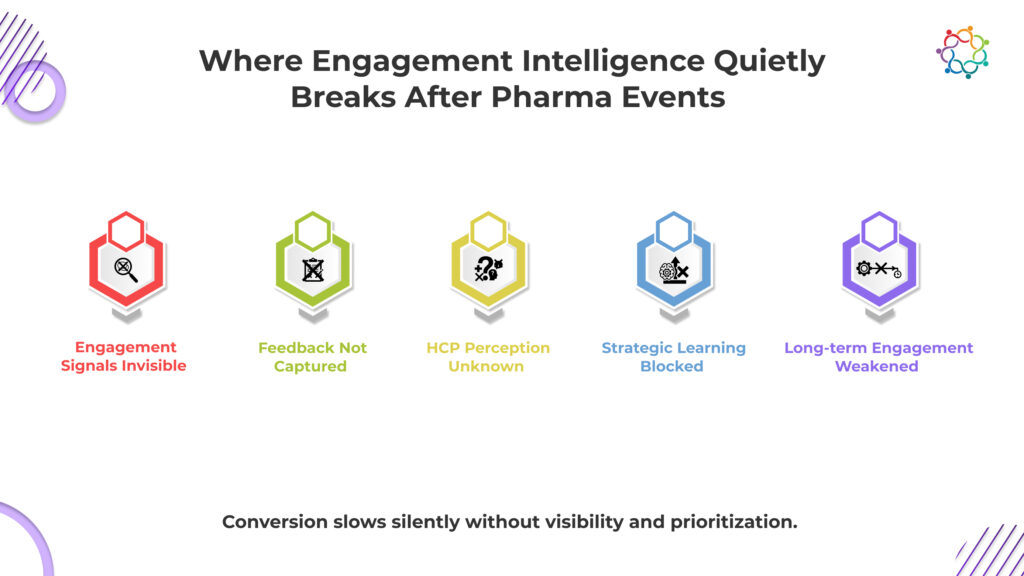

Feedback failure rarely occurs in a single moment. It unfolds in a sequence of small structural gaps that compound over time.

You assume participation equals engagement because it is the only thing you can see. HCPs attend, listen, and move through the agenda. But you have no structural visibility into who was intellectually engaged and who was mentally absent. Passive attendance and active scientific evaluation look identical in your reports.

This means you cannot identify which HCP relationships actually progressed. You leave the event with a list of attendees, not a map of engagement depth. The most valuable engagement signals disappear while you convince yourself the event worked.

The moment the event ends, your visibility collapses. HCP perception begins to fade, and you have no reliable system to capture it while it still exists. You do not know what created clarity, what created resistance, or what created indifference.

This forces you into retrospective guesswork. Scientific exchange feels complete internally, but you have no evidence of its effectiveness externally. You lose the only window where honest engagement signals exist. Once that window closes, the learning opportunity is permanently gone.

You cannot recover insight you never captured.

Your future engagement strategy depends on learning from past interactions. But without feedback, there is nothing real to learn from. So you default to repetition. The same formats. The same messaging. The same assumptions. You call it consistency, but it is actually stagnation.

You are not refining engagement based on evidence. You are preserving familiarity because it feels safe.

This guarantees that weaknesses remain uncorrected. Over time, your engagement strategy stops evolving. It becomes a cycle of activity without progress, sustained by belief instead of proof.

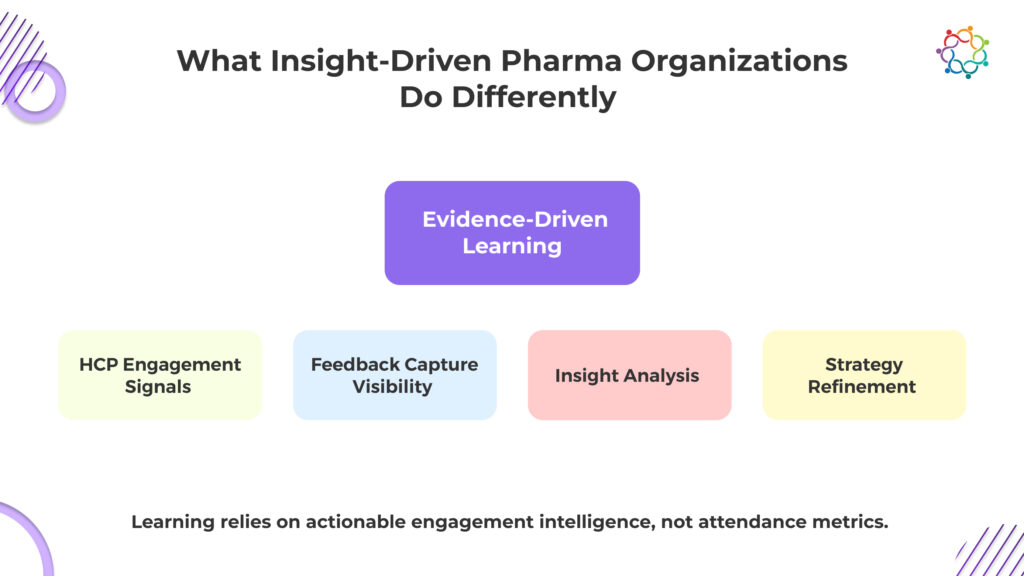

Not all pharmaceutical organizations operate within this blind spot. Some recognize that participation metrics alone cannot sustain a long-term engagement strategy. They evaluate events through a different strategic lens.

Instead of asking how many HCPs attended, they focus on understanding how engagement progressed.

This creates a fundamental structural contrast.

Participation-driven organizations optimize for attendance and participation visibility. Insight-driven organizations optimize for something far more strategically valuable.

They focus on engagement visibility. They prioritize understanding how scientific exchange influenced perception. They measure engagement progression across interactions.

This shift changes the role of events entirely.

Events are no longer endpoints. They become input sources for strategic learning. The engagement consequence is transformative. Scientific exchange effectiveness improves over time because engagement insight informs future engagement design.

This dynamic of continuous interaction has been explored deeply in the context of how trusted pharma brands maintain engagement before, during, and after events.

Medical education events evolve based on real HCP response rather than internal assumptions. Strategically, this strengthens long-term healthcare professional engagement.

Organizations build a feedback-informed engagement ecosystem rather than a participation-driven activity cycle. This distinction determines whether events remain operational programs or become strategic intelligence assets.

The value of pharmaceutical events is not defined by attendance volume alone. It is defined by the learning they generate.

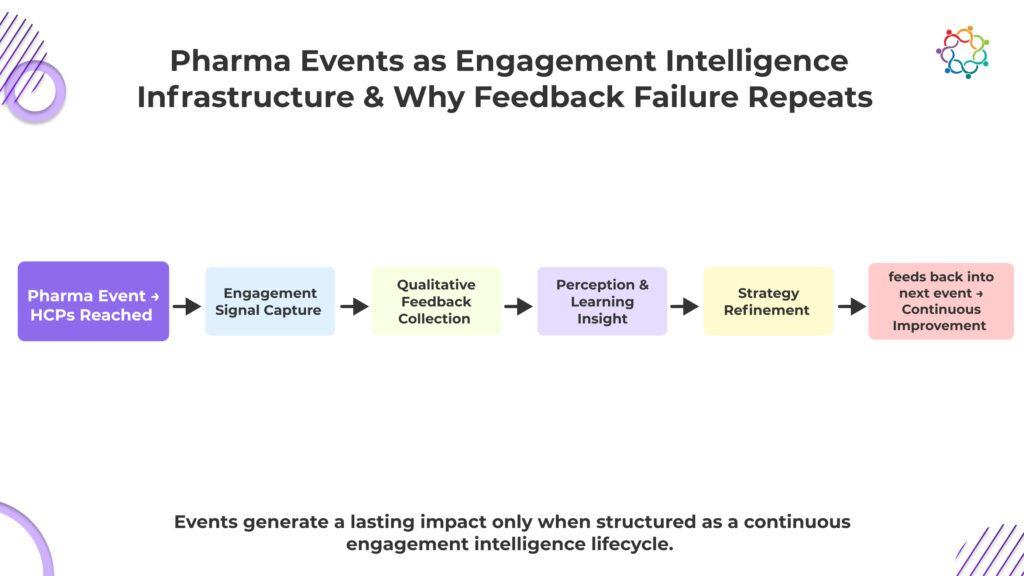

Pharmaceutical events are one of the few controlled environments where HCP attention is fully available. Yet most organizations treat them as isolated communication moments instead of intelligence collection points.

This is a structural failure.

Pharma event marketing must operate as an engagement intelligence infrastructure that connects each event to a larger engagement system. Every interaction should contribute to a longitudinal view of HCP engagement, not remain trapped within a single event cycle.

Without this structural layer, events remain disconnected. Leadership sees participation snapshots, not engagement trajectory. There is no continuity between what happened before, during, and after the event.

This breaks strategic momentum.

Engagement becomes episodic instead of cumulative.

When events function as an intelligence infrastructure, they create continuity. They allow organizations to track engagement movement, identify momentum shifts, and make engagement strategy responsive to real HCP behavior rather than static planning assumptions.

Feedback failure persists because it is structurally reinforced.

Within pharma event marketing, organizational evaluation systems reward visible operational success. Attendance metrics are reported. Participation is celebrated. Geographic expansion is recognized.

Engagement intelligence is rarely evaluated with the same rigor. This creates a powerful institutional incentive. Organizations prioritize what is rewarded. If scale is rewarded, scale becomes the focus. If engagement intelligence is not rewarded, feedback systems remain underdeveloped.

The engagement consequence is systemic repetition. Events continue to optimize for participation visibility rather than perception visibility. Strategically, this creates an engagement system that produces activity but not insight.

This cycle continues because it does not produce immediate failure.

Events appear successful. Participation remains strong. Scientific programming continues. The absence of feedback intelligence remains hidden beneath operational success. This creates institutional comfort with an incomplete understanding.

Organizations continue investing in engagement channels that they cannot fully evaluate.

This is not a tactical oversight. It is a structural outcome of institutional measurement priorities. Until feedback intelligence becomes a core success indicator, feedback failure will continue to repeat.

Exposure will continue to be measured. Understanding will continue to be assumed.

Strategic erosion rarely announces itself immediately. It accumulates slowly as engagement intelligence remains invisible.

Pharmaceutical events often demonstrate operational success. Attendance is strong. Scientific sessions are delivered. Participation targets are achieved.

These indicators create the appearance of justified investment.

But investment justification at leadership levels requires more than activity visibility. Leadership must understand strategic impact.

Without engagement intelligence, organizations cannot demonstrate:

The engagement consequence is budget vulnerability. Event investment appears active but strategically ambiguous.

Leadership begins to question whether investment produces measurable strategic returns.

Over time, events risk being categorized as operational expenses rather than strategic infrastructure.

Budget strength depends on engagement intelligence visibility. Without it, investment justification weakens.

Healthcare professionals evaluate engagement quality based on responsiveness.

Scientific exchange is not defined solely by information delivery. It is defined by mutual learning. When organizations do not visibly incorporate feedback, engagement begins to feel one-directional.

HCPs attend events. They receive information. They participate in discussions. But they do not see how their perspective influences future engagement.

This creates relational stagnation.

Engagement continues operationally, but relational depth does not progress. The engagement consequence is subtle but critical. Trust does not actively decline. It simply does not strengthen.

Scientific exchange effectiveness depends on progressive trust development. Without visible responsiveness, trust progression slows.

Scientific leadership depends on perception, not presence alone. Pharmaceutical organizations invest heavily in communicating evidence and advancing scientific understanding.

But without engagement intelligence, perception remains partially invisible.

Organizations cannot confidently determine:

This creates positioning fragility. Scientific leadership becomes assumed, not validated. Without perception visibility, differentiation weakens, and repetition replaces proven influence.

Scientific positioning depends on perceived value. Without engagement intelligence, your strategy is built on assumptions rather than confirmed impact or defensible competitive strength.

Pharma events succeed at assembling HCP audiences. They succeed at delivering scientific content. They succeed at creating visible activity. But none of that guarantees that engagement actually advanced.

What determines strategic value is not what was delivered. It is what was learned.

If you cannot see how HCP perception shifted, you cannot refine engagement. If you cannot refine engagement, you cannot strengthen scientific influence. And if influence does not strengthen, your events are not building a strategic advantage.

They are maintaining motion.

A pharma event that does not produce engagement intelligence does not strengthen positioning, trust, or future effectiveness. It sustains presence while leaving progress uncertain.

That is not strategic engagement. That is strategic stagnation.

Transforming participation into actionable learning requires platforms that track and analyze engagement and make the resulting intelligence immediately usable.

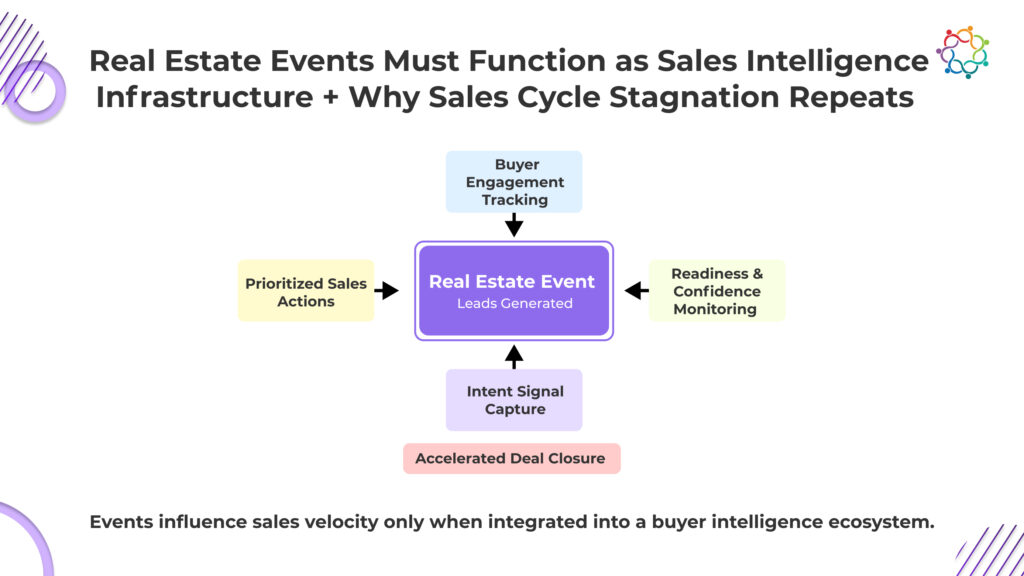

Every real estate sale follows a fixed lifecycle: inquiry, evaluation, financial preparation, decision, and closure. Real estate events intervene at only one point in this chain. They increase inquiries. They do not compress evaluation, financial readiness, or decision-making. This is where the illusion begins. Lead volumes spike. Pipelines expand. Internal dashboards signal momentum. Leadership assumes acceleration is underway.

But closure timelines remain unchanged.

The event has filled the top of the funnel while leaving the rest of the lifecycle untouched. Buyers still take weeks or months to secure financing, align family decisions, and build conviction. The sales cycle continues at its original pace. The apparent acceleration exists only at the entry point, not at the revenue point.

This creates a dangerous misinterpretation. Pipeline growth is mistaken for revenue acceleration. Activity is mistaken for progress.

This blog explores why this structural disconnect exists, where the sales cycle quietly slows after events, and why these events fail to accelerate conversion unless they reveal who is actually ready to buy.

You cannot control buyer readiness. But your sales cycle suffers when you cannot identify it.

Property buying is not a single decision. It is a sequence of financial validation, internal justification, and risk acceptance. Buyers must confirm loan eligibility, evaluate cash flow impact, align family expectations, and convince themselves the timing is right. None of this finishes at an event. The event only introduces the possibility. The actual decision develops afterward, slowly and privately.

This is where your pipeline becomes deceptive.

When buyers enter your pipeline, they bring uncertainty with them. Some will take months. Some will never convert. Yet they occupy the same space as buyers who could close sooner. Without structured nurturing and progression tracking, you cannot influence their movement.

So your sales cycle stretches.

Not because buyers are slow. But because you cannot see where they truly are. Until that changes, your pipeline is not moving toward revenue. It is waiting for it.

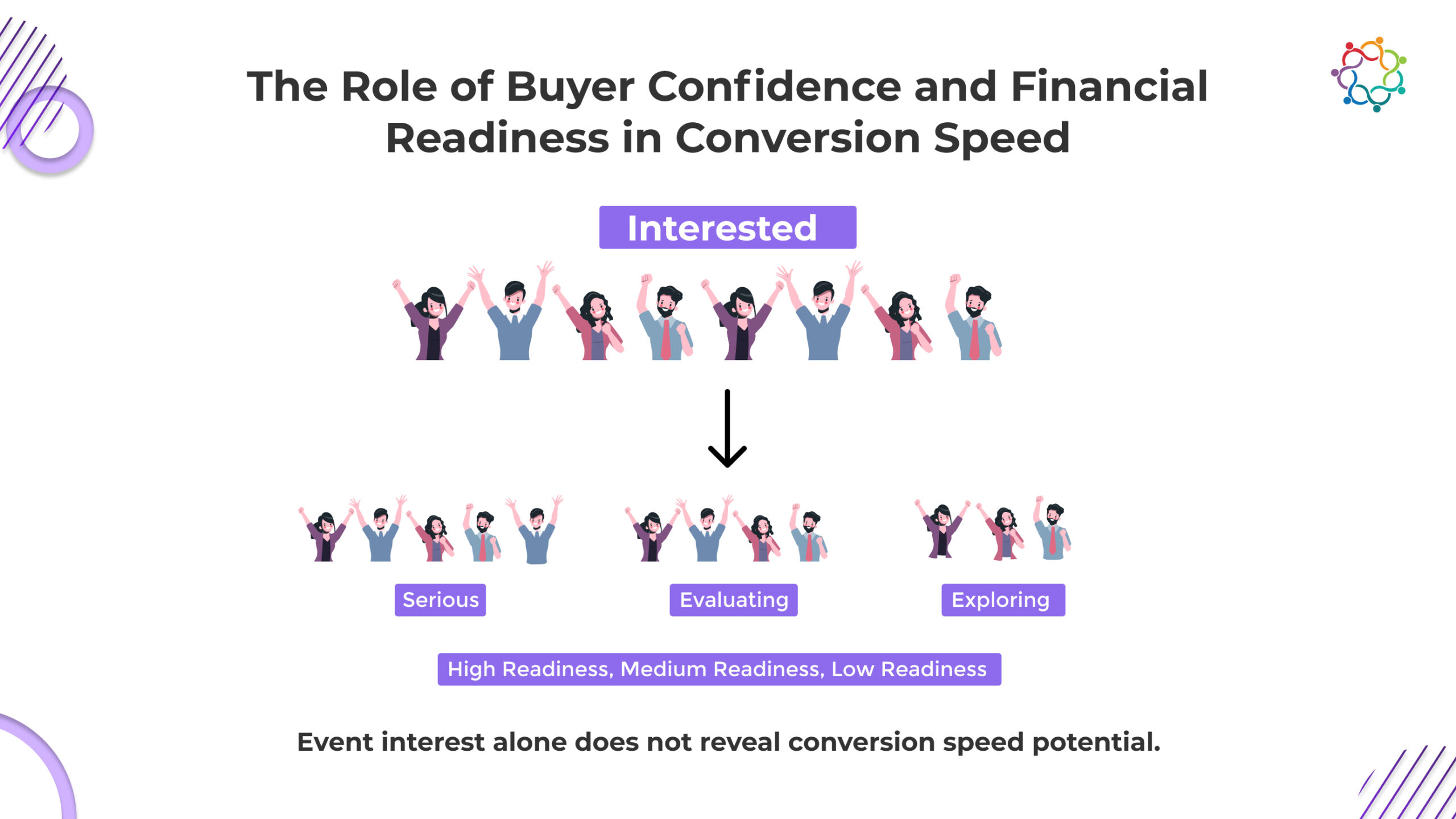

Conversion velocity is directly linked to buyer psychology. At any event, attendees may present similar levels of curiosity, but the depth of their readiness varies widely. Some are actively evaluating immediate purchase options, while others explore possibilities for future consideration. Both categories appear identical during initial engagement, yet their timelines diverge significantly.

This divergence creates the Buyer Readiness Gap: the distance between observable interest and actionable ability to commit. Sales acceleration fails when this gap is unrecognized. Without actionable signals on who is prepared to transact, sales teams cannot focus effort efficiently.

Structured understanding of financial readiness and confidence progression is critical. Buyers with financial constraints, incomplete approvals, or unresolved internal decision debates require nurturing to progress. Events that merely aggregate inquiries obscure these realities. They create an illusion of activity while hiding the signals of serious intent. High-performing organizations invest in systems that translate engagement into discernible readiness signals, allowing sales teams to prioritize the buyers most likely to shorten the sales cycle.

The growing role of specialized event technology in enabling this shift has been examined in depth across real estate event environments.

Key insights include:

Ignoring these underlying dynamics results in persistently extended sales cycles. To change the perception of events from pipeline generators to acceleration drivers, it is crucial to understand how confidence, financial preparedness, and structured involvement interact.

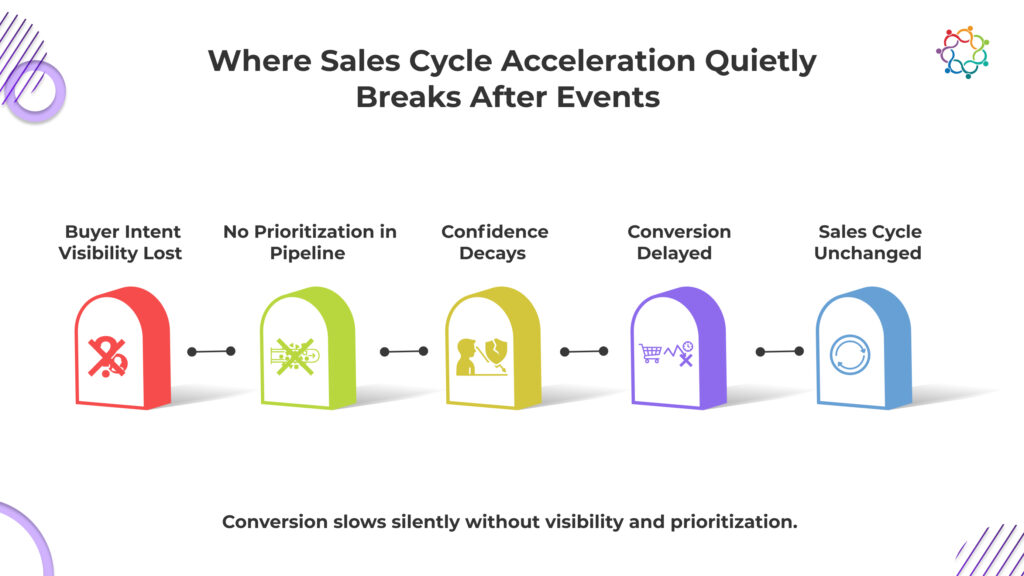

The sales cycle does not slow because buyers disappear. It slows because your visibility disappears. The moment the event ends, your prioritization signal weakens. Every buyer appears equally important. Every follow-up feels urgent. But urgency without prioritization creates delay, not acceleration.

What follows is where your sales cycle quietly breaks, and why your pipeline growth fails to translate into faster revenue.

Events often succeed in generating high volumes of visible interest. However, once the event concludes, clarity diminishes. Developers lose track of which buyers are genuinely ready to act versus those simply exploring options. This collapse in intent visibility results in:

The initial optimism created by event attendance is deceptive. Sales teams misallocate time, following up on exploratory leads while high-intent buyers are not engaged quickly enough. Without mechanisms to capture and analyze engagement signals, post-event efforts default to volume-based responses rather than velocity-based actions.

Real estate events traditionally funnel all attendees into a single pipeline. This undifferentiated approach obscures urgency levels and creates systemic inefficiencies.

Segmentation based on readiness and engagement is essential for accelerated outcomes. Low-velocity organizations rely on raw numbers, while high-velocity organizations segment leads to identify early movers and prioritize interventions.

The contrast highlights why pipeline growth without strategic segmentation fails to accelerate conversion.

Event-generated momentum fades rapidly without ongoing engagement. Buyers revisit their decisions independently, often re-evaluating financial, familial, and lifestyle considerations. This erosion of confidence extends timelines.

Sales cycles do not stall abruptly; they elongate quietly as event-generated interest decays. Organizations that fail to maintain structured engagement underestimate the hidden cost of unmonitored buyer journeys. Without visibility into confidence decay, developers cannot intervene strategically, cementing the status quo of delayed revenue realization.